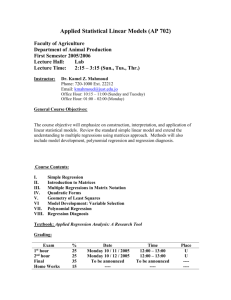


STATISTICS

Inductive learning method

Methods:

Bayesian inference and other probabilistic methods (use probabilities; input and output variables can be numeric or non-numeric)

Regression (using training data, construct a model of the data; estimate the output for a “new” sample)

o Predictive regression (input and output are all numeric). Use ANOVA to estimate the regression error.

o Logistic regression (inputs are numeric or non-numeric; the output is a “binary” categorical value)

o Log-linear model (input and output are non-numeric)

Linear discriminant analysis (inputs are numeric, output is non-numeric)

Bayesian Inference

Used to find a conditional probability, i.e. the probabilistic “influence” of one variable on another: for example, the probability that variable Y has a certain value given that we know the value of variable X.

This is written as P(Y if X), or P(Y/X) for short.

General formula:

P(Y/X) = P(Y and X) / P(X) = P(X/Y)*P(Y)/P(X)

Used to predict (new) sample classification, i.e. to find:

P(the new sample belongs to class C / the sample’s values are known).

We assume that the new sample comes from the same population as the input samples, for which we know the Bayesian probabilities.

Assume: we have a sample set S = {S1, S2, …, Sm} and each Si is a sample containing an n-dimensional vector Xi and a classification Ck (i.e. each Si is a row in the input file, each Xi is the vector of xij values in that row, and each Si is assigned an output classification Ck), where i = 1, …, m, j = 1, …, n, k = 1, …, c, and c is the number of output classes.

Assume that P(X) = const for all classes (i.e. the data will not change classification in time; also, we know P(X) for the training set).

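Given a “new” sample Sz (i.e. a sample for which we know Xz, i.e. the xzj values, but not the output classification Ck), we can predict the output classification. For each Ck, k = 1, …, c, we estimate:

P(Sz belongs to class Ck / we know the xzj values of Xz) = P(Ck/Xz).

By the general formula, P(Ck/Xz) = P(Xz/Ck)*P(Ck)/P(X). Since P(X) is constant, it is enough to compare P(Xz/Ck)*P(Ck) across the classes. Assuming that the attributes are independent of each other within a class, we compute:

P(Xz/C1) = PRODUCT[j=1,n](P(xzj/C1))

P(Xz/C2) = PRODUCT[j=1,n](P(xzj/C2))

…..

P(Xz/Cc) = PRODUCT[j=1,n](P(xzj/Cc))

and assign Sz to the class Ck with the largest P(Xz/Ck)*P(Ck).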

What good is this information? It is not certain – it is all probabilistic.

The caveat: since it is all probabilistic, events with the highest probabilities will happen the most –

so our model will be very “accurate” but will miss low probability events. How can we analyze

events with low probabilities (e.g. fraud)?

Example p.97:

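| Sample | Attribute A1 | Attribute A2 | Attribute A3 | Output class C |
|---|---|---|---|---|
| 1 | 1 | 2 | 1 | 1 |
| 2 | 0 | 0 | 1 | 1 |
| 3 | 2 | 1 | 2 | 2 |
| 4 | 1 | 2 | 1 | 2 |
| 5 | 0 | 1 | 2 | 1 |
| 6 | 2 | 2 | 2 | 2 |
| 7 | 1 | 0 | 1 | 1 |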
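The classification rule described above can be tried on this table with a short Python sketch. It estimates each P(xzj/Ck) and P(Ck) by counting rows, and uses exact fractions so the arithmetic is easy to check by hand. The function name `naive_bayes_scores` and the new sample (1, 2, 2) are assumptions made for illustration; the handout itself does not state which new sample to classify.

```python
from fractions import Fraction

# Training table from the example: (A1, A2, A3, C)
samples = [
    (1, 2, 1, 1),
    (0, 0, 1, 1),
    (2, 1, 2, 2),
    (1, 2, 1, 2),
    (0, 1, 2, 1),
    (2, 2, 2, 2),
    (1, 0, 1, 1),
]

def naive_bayes_scores(samples, new_x):
    """Return {class: P(Xz/Ck) * P(Ck)}, estimated by counting rows."""
    classes = sorted({row[-1] for row in samples})
    scores = {}
    for c in classes:
        rows = [row for row in samples if row[-1] == c]
        score = Fraction(len(rows), len(samples))       # prior P(Ck)
        for j, value in enumerate(new_x):
            matches = sum(1 for row in rows if row[j] == value)
            score *= Fraction(matches, len(rows))       # P(xzj/Ck)
        scores[c] = score
    return scores

# Hypothetical new sample Xz = (A1, A2, A3); not taken from the handout.
new_sample = (1, 2, 2)
scores = naive_bayes_scores(samples, new_sample)
predicted = max(scores, key=scores.get)
print(scores, predicted)
```

The scores are not divided by P(X), so they do not sum to 1; only their relative size matters for picking the class.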

Regression

Assume that the input table has m rows and n input columns, followed by one more column, the “output classification” column.

------------The long story, useful for coding using matrix packages--------------

Assume that the input table is in the form:

x11, x12, …, x1n, y1

x21, x22, …., x2n, y2

…

xm1, xm2, …, xmn, ym

Assume that X1, .., Xm are input row vectors, where each vector is a “row” of the input table.

X1 = <x11, x12, …, x1n>

X2 = <x21, x22, …., x2n>

…

Xm = <xm1, xm2, …, xmn>

The input matrix X is X = Transpose<X1 X2 … Xm>.

FYI: Assume that X1’, .., Xn’ are input column vectors, where each vector is a “column” of the

input table.

X1’ = Transpose<x11, x21, …, xm1>

…

Xn’ = Transpose<x1n, x2n, …, xmn>.

Vector Y is the output vector, i.e. a column vector, and contains just the output column.

Y = Transpose< y1, y2, …, ym>

1. Predictive Regression

Input and output are all numeric.

Today, we mostly use linear regression, i.e. the model is a straight line (a plane, for more than one input) fitted to the input points:

Y = X’ * B where Y, X’ and B are matrices

Matrix B is a column vector:

B = Transpose<a b1 b2 b3 … bn>.

Matrix X’ is obtained by inserting a column of all 1’s before the first column of matrix X.

When we write out all rows and columns, we get a set of m linear equations, for j = 1, …, m:

y1 = a + b1*x11 + b2*x12 + … + bn*x1n + error1

…

yj = a + b1*xj1 + b2*xj2 + … + bn*xjn + errorj

…

ym = a + b1*xm1 + b2*xm2 + … + bn*xmn + errorm

--------- The shortcut notation, useful for understanding – watch the change of ij’s!--------

Now X1, .., Xn are the columns, and xij is the value of row j in column i:

Y = a + b1*X1 + b2*X2 + … + bn*Xn + Error

yj = a + b1*x1j + b2*x2j + … + bn*xnj + errorj

How to use linear regression:

Linear regression is a way to build a *model* of the input data. In the case of linear regression, the model has the form Y = X’*B. The problem can therefore be stated as: given Y and X of a training data set of size m x n, find B. Once B is known, you have the model of the input data and can predict the value of y for a new sample xz, z > m.

Solution:

B = Inverse(Transpose(X’)*X’) * (Transpose(X’)*Y)

In the case of one-variable regression, i.e. Y = a + bX, things are simpler.

The simplest regression looks at only one input column and one output column, i.e. we assume

Y = a + b*X,

i.e.

yj = a + b*xj, j = 1, …, m.

In other words: we have a set of data points (xj, yj), and we are approximating them with a regression line.

Calculate the parameters a and b by minimizing the squared error, i.e. the sum of the squared vertical distances between the data points and the regression line. After some math, we get:

a = mean(Y) – b*mean(X)

b = Correlation(X,Y)/Correlation(X,X)

where the Correlation formulas are given in the PCA handout:

Correlation(X,Y) = (1/m) * SUM[i=1,m]((xi-mean(X))*(yi-mean(Y)))

(As written, this quantity is what is usually called the covariance of X and Y, and Correlation(X,X) is the variance of X.)
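The “some math” can be sketched as follows. This is a standard least-squares derivation written in LaTeX; the symbols E, \bar{x} and \bar{y} (the squared error and the means of X and Y) are introduced here for illustration and are not used elsewhere in the handout.

```latex
% Squared error of the line y = a + b x over the m data points
E(a,b) = \sum_{j=1}^{m} \left( y_j - a - b\,x_j \right)^2

% Setting the partial derivative with respect to a to zero
\frac{\partial E}{\partial a} = -2 \sum_{j=1}^{m} \left( y_j - a - b\,x_j \right) = 0
\quad\Longrightarrow\quad a = \bar{y} - b\,\bar{x}

% Setting the partial derivative with respect to b to zero and substituting a
\frac{\partial E}{\partial b} = -2 \sum_{j=1}^{m} x_j \left( y_j - a - b\,x_j \right) = 0
\quad\Longrightarrow\quad
b = \frac{\sum_{j=1}^{m} (x_j - \bar{x})(y_j - \bar{y})}{\sum_{j=1}^{m} (x_j - \bar{x})^2}
```

The factor 1/m appears in both the numerator and the denominator of b, so it cancels; this is why the ratio of the two (1/m)-scaled sums gives the same slope.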

Example: multivariate (multiple) regression

1. We will first use multiple regression formulas but apply them to only one variable, in order to

have it look more manageable. The same principles would apply if we had more than one feature.

Suppose we have 5x1 input data X and one column for output classification Y.

| Sample | X | Y |
|---|---|---|
| 1 | 6 | 1 |
| 2 | 7 | 2 |
| 3 | 8 | 3 |
| 4 | 9 | 3 |
| 5 | 7 | 4 |

Row vectors are:

X1 = <6>

X2 = <7>

Etc.

Input matrix X is:

X =
6
7
8
9
7

Now insert a column of 1’s before the input vector X:

X’ =
1 6
1 7
1 8
1 9
1 7

Transpose(X’) =
1 1 1 1 1
6 7 8 9 7

Matrix Y is:

Y = Transpose<1, 2, 3, 3, 4>

Transpose(X’)*X’ =
5 37
37 279

Transpose(X’)*Y =
13
99

Inverse(Transpose(X’)*X’) =
10.7 -1.4
-1.4 0.2

B = Inverse(Transpose(X’)*X’) * (Transpose(X’)*Y) =
-1.4
.54

Therefore, y = -1.4 + .54x
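The same steps can be reproduced with a matrix package. The sketch below uses NumPy (an assumption; the handout does not name a package) and follows the formula B = Inverse(Transpose(X’)*X’) * (Transpose(X’)*Y) literally. The variable names are chosen for illustration.

```python
import numpy as np

# Data from the example: one input column X and the output column Y.
X = np.array([[6.0], [7.0], [8.0], [9.0], [7.0]])
Y = np.array([1.0, 2.0, 3.0, 3.0, 4.0])

# X': insert a column of 1's before the first column of X.
X_prime = np.hstack([np.ones((X.shape[0], 1)), X])

XtX = X_prime.T @ X_prime        # Transpose(X')*X'
XtY = X_prime.T @ Y              # Transpose(X')*Y
B = np.linalg.inv(XtX) @ XtY     # Inverse(Transpose(X')*X') * (Transpose(X')*Y)

a, b = B
print(XtX)
print(XtY)
print(B)
```

Unrounded, the coefficients are a = -36/26 and b = 14/26, which round to the -1.4 and .54 shown above. For larger problems, `np.linalg.solve(XtX, XtY)` or `np.linalg.lstsq` is usually preferred over forming the inverse explicitly.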

2. Let’s apply linear regression to two features:

| Sample | F1 | F2 | Y |
|---|---|---|---|
| 1 | 6 | 60 | 1 |
| 2 | 7 | 70 | 2 |
| 3 | 8 | 80 | 3 |
| 4 | 9 | 90 | 3 |
| 5 | 7 | 70 | 4 |

Row vectors are:

X1 = <6, 60>

X2 = <7, 70>

Etc.

Input matrix X is:

X =
6 60
7 70
8 80
9 90
7 70

Now insert a column of 1’s before the first column of X:

X’ =
1 6 60
1 7 70
1 8 80
1 9 90
1 7 70

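Matrix Y is the same as in the first example, and matrix B has the same “shape” (a column vector, now with one more entry for b2) but the values will be different.

Continue calculating B using matrix operations. (Note that in this particular table F2 = 10*F1, so Transpose(X’)*X’ is singular and has no ordinary inverse; a pseudo-inverse or a least-squares solver is needed, or one of the two features has to be dropped.) At the end, the result should look like: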

y1 = a + b1*x11 + b2*x12 + … + bn*x1n (for the first row)

Our book (and other literature) writes this in shortcut as:

Y = a + b1*F1 + b2*F2 + … + bn*Fn

Or:

Yk = a + b1*F1k + b2*F2k + … + bn*Fnk

In our case, n=2, so our Y will be:

y1 = a + b1*x11 + b2*x12 (for the first row)

Or, in shortcut:

Y = a + b1*F1 + b2*F2

Or:

Yk = a + b1*F1k + b2*F2k
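A minimal NumPy sketch of the two-feature calculation follows. One caveat about this data: F2 is exactly 10*F1, so the columns of X’ are linearly dependent and Transpose(X’)*X’ cannot be inverted. The sketch therefore uses `np.linalg.lstsq`, which returns a least-squares solution even in that case; the cutoff `rcond=1e-10` and the variable names are illustrative assumptions.

```python
import numpy as np

# Data from the two-feature example.
F1 = np.array([6.0, 7.0, 8.0, 9.0, 7.0])
F2 = np.array([60.0, 70.0, 80.0, 90.0, 70.0])
Y = np.array([1.0, 2.0, 3.0, 3.0, 4.0])

# X': a column of 1's followed by the feature columns F1 and F2.
X_prime = np.column_stack([np.ones_like(F1), F1, F2])

# Singular values below rcond * (largest singular value) are treated as zero.
B, _, rank, _ = np.linalg.lstsq(X_prime, Y, rcond=1e-10)
a, b1, b2 = B

# Predicted outputs: Yk = a + b1*F1k + b2*F2k
predictions = X_prime @ B
print(rank, B)
print(predictions)
```

The reported rank is 2 rather than 3, which confirms that F2 adds no information beyond F1. The individual values of b1 and b2 are then not unique (lstsq picks the smallest-norm choice), but the predictions are the same as those of the one-feature model from the first example.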

Example p.100: one variable regression: Table 5.2

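| X | Y | X-mx | Y-my | (X-mx)*(Y-my) | (X-mx)^2 |
|---|---|---|---|---|---|
| 1 | 3 | -4.4 | -3 | 13.2 | 19.36 |
| 8 | 9 | 2.6 | 3 | 7.8 | 6.76 |
| 11 | 11 | 5.6 | 5 | 28 | 31.36 |
| 4 | 5 | -1.4 | -1 | 1.4 | 1.96 |
| 3 | 2 | -2.4 | -4 | 9.6 | 5.76 |

mean(X) = 5.4, mean(Y) = 6

b = 0.920245

a = 1.030675

[Chart: the data points and the regression line y = 0.9202x + 1.0307; both axes run from 0 to 12.]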
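The values of b and a in Table 5.2 can be checked with a few lines of plain Python. This is a sketch of the one-variable formulas, with a hypothetical helper name `fit_line`; the 1/m factors are omitted because they cancel in the ratio.

```python
# Data from Table 5.2.
X = [1, 8, 11, 4, 3]
Y = [3, 9, 11, 5, 2]

def fit_line(xs, ys):
    """Return (a, b) of the least-squares line y = a + b*x."""
    m = len(xs)
    mean_x = sum(xs) / m
    mean_y = sum(ys) / m
    # Sum of (X-mx)*(Y-my) and sum of (X-mx)^2, as in the table columns.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b

a, b = fit_line(X, Y)
print(round(b, 6), round(a, 6))
```

The two column sums are 60 and 65.2, so b = 60/65.2 and a = 6 - b*5.4.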

Issues

What is the most important issue in regression? Finding the columns that should be included in the regression model. This is different from the feature reduction we used in Ch.3 (e.g. entropy, PCA, mean-variance, and other methods). Feature reduction from Ch.3 was for the general case: to reduce the size of the data set and get the data ready for mining. Picking the features to be used for regression is an additional step: once the data set is as small as it can be, pick the features used for this particular kind of mining.

How can we go about picking features for regression? In the good old ways that work for so many other approaches:

1. sequential search: start with each feature as a separate set, or with a group of selected features, and keep adding features until some criterion is satisfied (and then check, e.g. with ANOVA)

2. combinatorial approach: search across all possible combinations of features and see which combination gives the best regression model (and then check, e.g. with ANOVA)

Analysis of Variance (ANOVA)

Assume that we constructed the regression model, so now we have a set of real Y values and a set of estimated Y values, called Y*. Our goal is to find out whether we included all the features that we should have included. In the formula below, m is the number of samples and n is the number of parameters in the regression equation.

The pseudocode:

Calculate an estimate of the variance by using the squared error:

Var* = SUM[i=1,m]((yi-yi*)^2) / (m-n)

Then eliminate one feature.

Calculate Var* again.

If the new variance is much greater than the old one, this feature must be included in the regression model. If the new variance is about equal to the old variance, this feature is not important for the regression model and can be omitted.
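The elimination loop can be sketched in Python with NumPy. Everything in this block is an assumption made for illustration: the data are made up (y depends on x1 but not on x2), the helper name `residual_variance` is hypothetical, and n is taken to be the number of fitted parameters including the intercept a.

```python
import numpy as np

def residual_variance(features, y):
    """Var* = SUM((yi - yi*)^2) / (m - n), with n = number of fitted parameters."""
    m = len(y)
    X_prime = np.column_stack([np.ones(m)] + list(features))
    B, *_ = np.linalg.lstsq(X_prime, y, rcond=None)
    residuals = y - X_prime @ B          # yi - yi*
    n_params = X_prime.shape[1]          # intercept plus one per feature
    return float(residuals @ residuals) / (m - n_params)

# Made-up data for illustration only: y depends on x1, not on x2.
x1 = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
x2 = np.array([2.0, 1.0, 4.0, 3.0, 6.0, 5.0])
noise = np.array([0.1, -0.1, 0.05, -0.05, 0.1, -0.1])
y = 2.0 + 3.0 * x1 + noise

var_full = residual_variance([x1, x2], y)
var_without_x1 = residual_variance([x2], y)   # eliminate x1
var_without_x2 = residual_variance([x1], y)   # eliminate x2
print(var_full, var_without_x1, var_without_x2)
```

With this data, eliminating x1 makes the variance estimate jump by orders of magnitude, so x1 must stay in the model, while eliminating x2 leaves it at about the same small value, so x2 can be omitted.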

2. Logistic Regression

The right side of regression equation is the same; the left side is related to a binary categorical

variable Y.

Y = a + b1*X1 + b2*X2 + … + bn*Xn

yj = log(pj/qj) = a + b1*x1j + b2*x2j + … + bn*xnj

where pj is the probability that yj=0, and qj = 1- pj.

log(pj/qj) is written as logit(p).

Example p.107:

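Given logit(p) = 1.5 – 0.6*x1 + 0.4*x2 – 0.3*x3 and new sample {1,0,1}, estimate Prob(output of this sample = 1).

logit(p) = log(p/(1-p)) = 1.5 – 0.6*1 + 0.4*0 – 0.3*1 = 0.6

p = e^0.6 / (1 + e^0.6) = 0.65

Since p is the probability that the output is 0, Prob(output = 1) = q = 1 – p = 1 / (1 + e^0.6) = 0.35.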
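The arithmetic of this example can be checked with a short Python sketch. It follows the handout's definition that p is the probability that the output is 0 and q = 1 - p, and it takes log to be the natural logarithm; the helper name `logit_value` is hypothetical.

```python
import math

def logit_value(intercept, coefficients, sample):
    """Right side of the regression equation: a + b1*x1 + ... + bn*xn."""
    return intercept + sum(b * x for b, x in zip(coefficients, sample))

# logit(p) = 1.5 - 0.6*x1 + 0.4*x2 - 0.3*x3, new sample {1, 0, 1}
z = logit_value(1.5, [-0.6, 0.4, -0.3], [1, 0, 1])

p = math.exp(z) / (1.0 + math.exp(z))   # probability that the output is 0
q = 1.0 - p                             # probability that the output is 1
print(round(z, 2), round(p, 2), round(q, 2))
```

Inverting logit(p) = z always gives p = e^z / (1 + e^z), so a positive z means that p is above 0.5.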

3. Log Linear Models

The right side of the expression is the same; the left side is related to a Poisson variable with

expected value μ. All X and Y are non-numeric.

Y = a + b1*X1 + b2*X2 + … + bn*Xn

yj = log(μj) = a + b1*x1j + b2*x2j + … + bn*xnj

Example on p.108: how to use log linear modeling when there is no output variable and we want to

analyze dependency between two columns.

We want to investigate if a person’s support of abortion is related to their gender, and we interview

1100 people of both genders and collect the following data:

| Sample ID | Gender | Support for abortion |
|---|---|---|
| 1 | M | Y |
| 2 | F | N |
| 3 | F | Y |
| … | … | … |
| 1100 | M | N |

How do we analyze non-numeric values? Similar to Hamming distance: we count occurrences.

Then we can tabulate that in a contingency table (i.e. the “Sij” table). (Then we can analyze it using

Chi-square.)

1. Calculate contingency table:

| Support | Gender: Female | Gender: Male | Total |
|---|---|---|---|
| Yes | 309 | 319 | 628 |
| No | 191 | 281 | 472 |
| Total | 500 | 600 | 1100 |

2. Convert the table into expected values table:

Eij = (row i total * column j total)/total

| Expected support | Gender: Female | Gender: Male | Total |
|---|---|---|---|
| Yes | 500*628/1100 | 600*628/1100 | 628 |
| No | 500*472/1100 | 600*472/1100 | 472 |
| Total | 500 | 600 | 1100 |

3. Calculate Chi-square for a contingency table of m rows and n columns:

Chi_square = SUM[i=1,m]SUM[j=1,n] ((Xij-Eij)^2)/Eij

where Xij is the observed count and Eij is the expected count in row i, column j.

Pick the significance level for the Chi-square test (usually a=0.05 or 0.1).

The number of degrees of freedom for Chi-square is equal to (m-1)*(n-1).

Find the threshold T(a) using the chi-square tables.

If Chi_square ≥ T(a), then the columns are dependent.

http://www.itl.nist.gov/div898/handbook/eda/section3/eda3674.htm

In this case, Chi_square = 8.3 and T(a) = 3.84 (a = 0.05, one degree of freedom), so there is a dependency between gender and abortion opinion.
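The three steps can be put together in a short Python sketch. It recomputes the expected values Eij from the row and column totals and then sums the Chi-square terms. The function name `chi_square` is hypothetical, and the threshold 3.84 is simply the T(a) value quoted above rather than something the code looks up.

```python
# Observed counts from the contingency table.
observed = [
    [309, 319],  # Yes: Female, Male
    [191, 281],  # No:  Female, Male
]

def chi_square(table):
    """Return (Chi_square, degrees of freedom) for a table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, x in enumerate(row):
            e = row_totals[i] * col_totals[j] / total   # Eij
            stat += (x - e) ** 2 / e
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof

stat, dof = chi_square(observed)
THRESHOLD = 3.84  # T(a) quoted in the handout
print(round(stat, 1), dof, stat >= THRESHOLD)
```

The statistic is the same whichever way the table is oriented, since swapping rows and columns only reorders the terms of the double sum.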