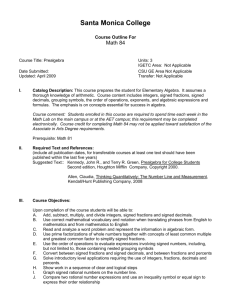

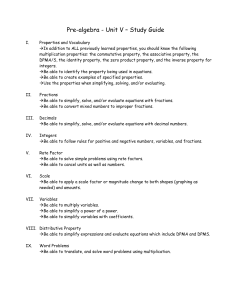

Pre-Algebra Course Description

advertisement

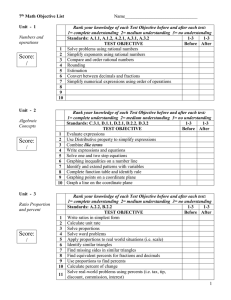

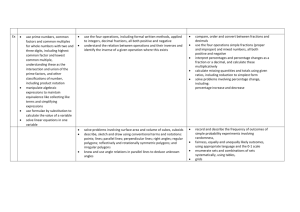

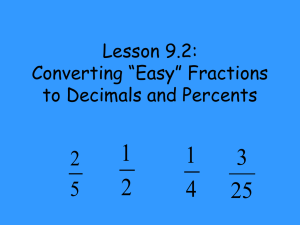

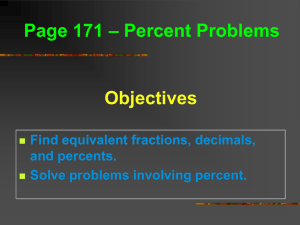

The Pre-Algebra course is an introduction to basic algebra concepts and a review of arithmetic algorithms. It is designed to help students overcome weaknesses in their mathematics preparation, emphasizing the concepts necessary for success in Algebra I and II. Developing good mathematical study skills and learning strategies is an integral part of the course. The course begins with a brief review of the number system and of operations with whole numbers, fractions, decimals, and positive and negative numbers. It then covers rational numbers and exponents; ratios, proportions, and percentages; and solving simple and complex equations in one variable.

Learning Objectives

- To develop mathematical skills that can be used in other high school and college courses.
- To develop the mathematical skills necessary to enter subsequent mathematics courses.
- To develop problem-solving skills that apply to everyday life.
- Perform the basic operations on numbers: addition, subtraction, multiplication, and division.
- Apply problem-solving skills to everyday problems.
- Solve basic algebraic equations.
- Organize information into workable material.
- Determine the place name and place value of each digit in a whole number; round whole numbers to a given place value; read and write whole numbers in words.
- Perform the four basic operations of addition, subtraction, multiplication, and division on whole numbers, integers, fractions, mixed numbers, and decimal numbers.
- Evaluate and simplify algebraic expressions, and collect like terms.
- Solve linear equations and application problems.
- Write products in exponential notation, and evaluate exponential expressions.
- Simplify expressions containing exponents; use the order of operations to simplify expressions.
- Find multiples and factors of composite numbers and determine divisibility; find the least common multiple and greatest common factor of two or more numbers.
- Reduce fractions to lowest terms; convert fractions to decimals and decimals to fractions; convert improper fractions to mixed numbers and mixed numbers to improper fractions.
- Find the ratio of two quantities in fraction notation, write rates as a ratio of two different measures, solve proportions, and solve application problems.
- Rewrite percents in fraction or decimal form; rewrite fractions and decimals as percents; solve percent equations.
- Convert units of length, weight, capacity, and energy within the metric system and the English system of measurement; perform arithmetic operations with these measurements; solve application problems.
- Find the perimeter or circumference of a geometric figure.
- Find the area of rectangles, squares, parallelograms, triangles, circles, and composite figures.
- Find the volume of geometric solids and composite solids.
- Use the Pythagorean Theorem to find the unknown side of a right triangle; solve related problems.
- Solve problems involving similar triangles, including application problems.
- Translate verbal expressions and sentences into mathematical expressions; solve problems.
- Incorporate knowledge of issues relevant to global education.
- Assess student growth in the course subject matter.

Success in this course requires a willingness to work and to complete all homework assignments. Lots of fun!