Powerpoint slides

COMP 482: Design and Analysis of Algorithms

Spring 2012

Lecture 15

Prof. Swarat Chaudhuri

Q0: Force calculations

You are working with some physicists who are studying the electrical forces that a set of particles exert on each other.

The particles are arranged on the real line at positions 1,…,n; the j-th particle has charge $q_j$. The total net force on particle j, by Coulomb’s law of electricity, is equal to:

$$F_j = \sum_{i<j} \frac{C\, q_i q_j}{(j-i)^2} - \sum_{i>j} \frac{C\, q_i q_j}{(j-i)^2}$$

Give:

1) The brute-force algorithm to solve this problem; and

2) An O(n log n) time algorithm for the problem

Partial answer: use convolution!

Consider two vectors:

a = $(q_1, q_2, \dots, q_n)$

b = $(n^{-2}, (n-1)^{-2}, \dots, 1/4, 1, 0, -1, -1/4, \dots, -n^{-2})$

Construct the convolution (a’ * b’), where a’ and b’ are obtained from a and b respectively. The convolution has an entry as follows for each j:

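A rough sketch of the convolution idea, compared against the brute-force double loop. It assumes the net force has the standard Coulomb form $F_j = C q_j \left( \sum_{i<j} q_i/(j-i)^2 - \sum_{i>j} q_i/(j-i)^2 \right)$. The sign convention, the constant `C`, the choice to read b right to left, and all function names are assumptions made for illustration, not taken from the slides. The FFT is a plain recursive radix-2 version; a library FFT would do the same job.

```python
import cmath

def fft(xs, invert=False):
    """Recursive radix-2 FFT; len(xs) must be a power of two."""
    n = len(xs)
    if n == 1:
        return list(xs)
    even = fft(xs[0::2], invert)
    odd = fft(xs[1::2], invert)
    sign = 1 if invert else -1
    out = [0j] * n
    for k in range(n // 2):
        w = cmath.exp(sign * 2j * cmath.pi * k / n)
        out[k] = even[k] + w * odd[k]
        out[k + n // 2] = even[k] - w * odd[k]
    return out

def convolve(a, b):
    """Linear convolution of two real sequences via FFT."""
    m = len(a) + len(b) - 1
    size = 1
    while size < m:
        size *= 2
    fa = fft([complex(x) for x in a] + [0j] * (size - len(a)))
    fb = fft([complex(x) for x in b] + [0j] * (size - len(b)))
    prod = fft([x * y for x, y in zip(fa, fb)], invert=True)
    return [val.real / size for val in prod[:m]]  # unnormalized inverse, so divide

def forces_brute(q, C=1.0):
    """O(n^2): evaluate the assumed force formula directly."""
    n = len(q)
    out = []
    for j in range(n):
        s = 0.0
        for i in range(n):
            if i < j:
                s += q[i] / (j - i) ** 2
            elif i > j:
                s -= q[i] / (j - i) ** 2
        out.append(C * q[j] * s)
    return out

def forces_fft(q, C=1.0):
    """O(n log n): one convolution of q with the reversed vector b."""
    n = len(q)
    # b_rev[l] holds the coefficient for offset d = l - n:
    # +1/d^2 for d > 0, -1/d^2 for d < 0, and 0 for d = 0.
    b_rev = [0.0 if d == 0 else (1.0 if d > 0 else -1.0) / d ** 2
             for d in range(-n, n + 1)]
    conv = convolve(q, b_rev)
    # Entry n + j of the convolution sums q[i] * coefficient(j - i) over all i.
    return [C * q[j] * conv[n + j] for j in range(n)]
```

Both functions should agree up to floating-point error; only the second avoids the quadratic double loop.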

6.1 Weighted Interval Scheduling

Weighted Interval Scheduling

Weighted interval scheduling problem.

Job j starts at $s_j$, finishes at $f_j$, and has weight or value $v_j$.

Two jobs are compatible if they don't overlap.

Goal: find maximum weight subset of mutually compatible jobs.

[Figure: jobs a through h drawn as intervals on a time axis from 0 to 11.]

Unweighted Interval Scheduling Review

Recall. Greedy algorithm works if all weights are 1.

Consider jobs in ascending order of finish time.

Add job to subset if it is compatible with previously chosen jobs.

Observation. Greedy algorithm can fail spectacularly if arbitrary weights are allowed.

[Figure: two overlapping jobs, a and b, one with weight 999 and one with weight 1, on a time axis from 0 to 11.]
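A minimal sketch of the earliest-finish-time greedy rule, to make the failure concrete. The `(start, finish, weight)` tuple layout and the two intervals in the usage note are hypothetical; the slide only gives the weights 999 and 1.

```python
def greedy_by_finish_time(jobs):
    """jobs: list of (start, finish, weight) tuples.

    Considers jobs in ascending order of finish time and keeps each job
    that does not overlap the previously chosen ones. Weights are ignored.
    """
    chosen = []
    last_finish = float("-inf")
    for start, finish, weight in sorted(jobs, key=lambda job: job[1]):
        if start >= last_finish:  # compatible with everything chosen so far
            chosen.append((start, finish, weight))
            last_finish = finish
    return chosen
```

On the hypothetical input `[(0, 11, 999), (1, 3, 1)]` the rule picks the short job of weight 1 first, which blocks the job of weight 999.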

Weighted Interval Scheduling

Notation. Label jobs by finishing time: $f_1 \le f_2 \le \dots \le f_n$.

Def. p(j) = largest index i < j such that job i is compatible with j.

Ex: p(8) = 5, p(7) = 3, p(2) = 0.

[Figure: jobs 1 through 8 drawn as intervals on a time axis from 0 to 11.]
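One possible way to compute p(j) is a binary search over the sorted finish times. The sketch assumes jobs are already sorted by finish time and that job i is compatible with job j when $f_i \le s_j$. The function name and the 1-indexed output layout are assumptions for illustration.

```python
from bisect import bisect_right

def compute_p(starts, finishes):
    """Return p as a list with p[j] for j = 1..n (p[0] is an unused 0).

    starts[j-1], finishes[j-1] describe job j; finishes must be ascending.
    """
    n = len(starts)
    p = [0] * (n + 1)
    for j in range(1, n + 1):
        # Count jobs among 1..j-1 whose finish time is <= start of job j.
        # Because finishes are sorted, that count is the largest such index.
        p[j] = bisect_right(finishes, starts[j - 1], 0, j - 1)
    return p
```

With the hypothetical intervals (1,4), (3,5), (0,6), (4,7), (3,8), (5,9), (6,10), (8,11) this gives p(8) = 5, p(7) = 3 and p(2) = 0, the same values as the example above. Each lookup costs O(log n), so all of p takes O(n log n).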

Dynamic Programming: Binary Choice

Notation. OPT(j) = value of optimal solution to the problem consisting of job requests 1, 2, ..., j.

Case 1: OPT selects job j.

– can't use incompatible jobs { p(j) + 1, p(j) + 2, ..., j - 1 }

– must include optimal solution to problem consisting of remaining compatible jobs 1, 2, ..., p(j) (optimal substructure)

Case 2: OPT does not select job j.

– must include optimal solution to problem consisting of remaining compatible jobs 1, 2, ..., j-1

$$\mathrm{OPT}(j) = \begin{cases} 0 & \text{if } j = 0 \\ \max\{\, v_j + \mathrm{OPT}(p(j)),\ \mathrm{OPT}(j-1) \,\} & \text{otherwise} \end{cases}$$

Weighted Interval Scheduling: Brute Force

Brute force algorithm.

Input: n, $s_1, \dots, s_n$, $f_1, \dots, f_n$, $v_1, \dots, v_n$

Sort jobs by finish times so that $f_1 \le f_2 \le \dots \le f_n$.

Compute p(1), p(2), …, p(n)

Compute-Opt(j) {
    if (j = 0)
        return 0
    else
        return max(v_j + Compute-Opt(p(j)), Compute-Opt(j-1))
}

Weighted Interval Scheduling: Brute Force

Observation. The recursive algorithm fails spectacularly because of redundant sub-problems, which lead to exponential running time.

Ex. Number of recursive calls for family of "layered" instances grows like Fibonacci sequence.

[Figure: recursion tree of Compute-Opt(5) for the layered instance with p(1) = 0, p(j) = j-2.]
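To see the blow-up, the sketch below runs the plain recursion on the layered instance p(1) = 0, p(j) = j-2 and counts invocations. Unit weights and the function name are assumptions for illustration; the count does not depend on the weights.

```python
def count_calls(n):
    """Number of Compute-Opt invocations on the layered instance of size n."""
    # p[0] is unused; p[1] = 0 and p[j] = j - 2 for j >= 2.
    p = [0, 0] + [j - 2 for j in range(2, n + 1)]
    calls = 0

    def compute_opt(j):
        nonlocal calls
        calls += 1
        if j == 0:
            return 0
        # Assumed unit weight for every job.
        return max(1 + compute_opt(p[j]), compute_opt(j - 1))

    compute_opt(n)
    return calls
```

The counts satisfy T(j) = 1 + T(j-1) + T(j-2), so T(j) + 1 follows the Fibonacci recurrence and grows exponentially in n.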

Weighted Interval Scheduling: Memoization

Memoization. Store results of each sub-problem in a cache; lookup as needed.

Input: n, $s_1, \dots, s_n$, $f_1, \dots, f_n$, $v_1, \dots, v_n$

Sort jobs by finish times so that $f_1 \le f_2 \le \dots \le f_n$.

Compute p(1), p(2), …, p(n)

for j = 1 to n
    M[j] = empty
M[0] = 0        (M is a global array)

M-Compute-Opt(j) {
    if (M[j] is empty)
        M[j] = max(v_j + M-Compute-Opt(p(j)), M-Compute-Opt(j-1))
    return M[j]
}
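A small Python rendering of the memoized recursion, as a sketch. It assumes `v` and `p` are 1-indexed lists (index 0 is an unused placeholder) and that p has already been computed; the function names are illustrative.

```python
def m_compute_opt(v, p):
    """Optimal value for jobs 1..n, where n = len(v) - 1."""
    n = len(v) - 1
    M = [None] * (n + 1)  # None plays the role of "empty"
    M[0] = 0

    def go(j):
        if M[j] is None:
            M[j] = max(v[j] + go(p[j]), go(j - 1))
        return M[j]

    return go(n)
```

The recursion depth can reach n, so for large inputs Python's recursion limit becomes a concern; the bottom-up formulation avoids that.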

Weighted Interval Scheduling: Running Time

Claim. Memoized version of algorithm takes O(n log n) time.

Sort by finish time: O(n log n).

Computing p( ) : O(n) after sorting by start time.

M-Compute-Opt(j): each invocation takes O(1) time and either

– (i) returns an existing value M[j]

– (ii) fills in one new entry M[j] and makes two recursive calls

Progress measure Φ = # nonempty entries of M[].

– initially Φ = 0, throughout Φ ≤ n.

– (ii) increases Φ by 1 ⇒ at most 2n recursive calls.

Overall running time of M-Compute-Opt(n) is O(n). ▪

Remark. O(n) if jobs are pre-sorted by start and finish times.

Weighted Interval Scheduling: Finding a Solution

Q. The dynamic programming algorithm computes the optimal value. What if we want the solution itself?

A. Do some post-processing.

Run M-Compute-Opt(n)

Run Find-Solution(n)

Find-Solution(j) {
    if (j = 0)
        output nothing
    else if (v_j + M[p(j)] > M[j-1])
        print j
        Find-Solution(p(j))
    else
        Find-Solution(j-1)
}

# of recursive calls ≤ n ⇒ O(n).
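The post-processing step can be sketched as a loop over the filled table. The function takes the table `M` as an argument, with `v`, `p` and `M` all 1-indexed (index 0 unused for `v` and `p`); these conventions and the name are assumptions for illustration.

```python
def find_solution(v, p, M):
    """Return the indices of the selected jobs in ascending order."""
    chosen = []
    j = len(M) - 1
    while j > 0:
        if v[j] + M[p[j]] > M[j - 1]:
            chosen.append(j)  # job j belongs to an optimal solution
            j = p[j]
        else:
            j -= 1            # job j can be skipped
    return chosen[::-1]
```

Each step decreases j by at least one, so the walk takes at most n steps.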

Weighted Interval Scheduling: Bottom-Up

Bottom-up dynamic programming. Unwind recursion.

Input: n, $s_1, \dots, s_n$, $f_1, \dots, f_n$, $v_1, \dots, v_n$

Sort jobs by finish times so that $f_1 \le f_2 \le \dots \le f_n$.

Compute p(1), p(2), …, p(n)

Iterative-Compute-Opt {
    M[0] = 0
    for j = 1 to n
        M[j] = max(v_j + M[p(j)], M[j-1])
}
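An end-to-end sketch of the bottom-up version: sort, find p(j), fill the table. It assumes jobs are given as hypothetical `(start, finish, value)` tuples and that job i is compatible with job j when $f_i \le s_j$; p(j) is found inline by binary search.

```python
from bisect import bisect_right

def weighted_interval_scheduling(jobs):
    """Return the table M, where M[j] is the optimal value for jobs 1..j."""
    jobs = sorted(jobs, key=lambda job: job[1])  # ascending finish time
    finishes = [finish for _, finish, _ in jobs]
    n = len(jobs)
    M = [0] * (n + 1)
    for j in range(1, n + 1):
        start, _, value = jobs[j - 1]
        # p(j): largest index i < j with finish time <= start of job j.
        pj = bisect_right(finishes, start, 0, j - 1)
        M[j] = max(value + M[pj], M[j - 1])
    return M
```

The optimal value is the last entry, `M[-1]`. Sorting and the binary searches cost O(n log n); the table fill itself is O(n).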

Q1: Billboard placement

You are trying to decide where to place billboards on a highway that goes East-West for M miles. The possible sites for billboards are given by numbers $x_1, \dots, x_n$, each in the interval [0, M]. If you place a billboard at location $x_i$, you get a revenue $r_i$.

You have to follow a regulation: no two of the billboards can be within 5 miles or less of each other.

You want to place billboards at a subset of the sites so that you maximize your revenue subject to this restriction.

How?

Answer

Let us show how to compute the optimal subset when we are restricted to the sites $x_1$ through $x_j$.

For site $x_j$, let e(j) denote the easternmost site $x_i$ (for i < j) that is more than 5 miles from $x_j$; set e(j) = 0 if no such site exists.

OPT(j) = max($r_j$ + OPT(e(j)), OPT(j – 1)), with OPT(0) = 0

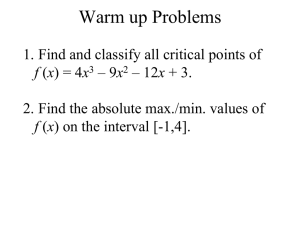
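A sketch of the recurrence OPT(j) = max(r_j + OPT(e(j)), OPT(j – 1)) in Python. It assumes the sites are sorted in increasing order and treats e(j) as an index found by binary search; the function name and the `gap` parameter are illustrative.

```python
from bisect import bisect_left

def max_billboard_revenue(x, r, gap=5):
    """x: site positions in ascending order; r: revenue of each site.

    Two chosen sites must be strictly more than `gap` miles apart.
    """
    n = len(x)
    opt = [0] * (n + 1)
    for j in range(1, n + 1):
        # e = number of sites with position < x_j - gap, which is the
        # 1-based index of the last site more than `gap` miles before x_j.
        e = bisect_left(x, x[j - 1] - gap)
        opt[j] = max(r[j - 1] + opt[e], opt[j - 1])
    return opt[n]
```

For the hypothetical instance x = [6, 7, 12, 14], r = [5, 6, 5, 1], the sites at 6 and 12 give the best revenue, 10.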

Q2: Longest common subsequence

You are given two strings $X = x_1 x_2 \dots x_n$ and $Y = y_1 y_2 \dots y_n$. Find the longest common subsequence of these two strings.

Note: Subsequence and substring are not the same. The symbols in a subsequence need not be contiguous symbols in the original string; however, they have to appear in the same order.

Partial answer

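$$\mathrm{OPT}(i, j) = \begin{cases} 0 & \text{if } i = 0 \text{ or } j = 0 \\ 1 + \mathrm{OPT}(i-1, j-1) & \text{if } x_i = y_j \\ \max(\mathrm{OPT}(i, j-1),\ \mathrm{OPT}(i-1, j)) & \text{if } x_i \ne y_j \end{cases}$$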
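One way to fill in the rest of the answer is sketched below: build the table for the standard LCS recurrence (match adds 1 to OPT(i-1, j-1); mismatch takes the better of dropping the last symbol of X or of Y), then walk back through it to recover one longest common subsequence. The function name and the tie-breaking rule in the walk are assumptions; the strings may have different lengths.

```python
def lcs(x, y):
    """Return one longest common subsequence of strings x and y."""
    n, m = len(x), len(y)
    opt = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            if x[i - 1] == y[j - 1]:
                opt[i][j] = 1 + opt[i - 1][j - 1]
            else:
                opt[i][j] = max(opt[i][j - 1], opt[i - 1][j])

    # Walk back from opt[n][m], collecting matched symbols in reverse.
    out = []
    i, j = n, m
    while i > 0 and j > 0:
        if x[i - 1] == y[j - 1]:
            out.append(x[i - 1])
            i -= 1
            j -= 1
        elif opt[i - 1][j] >= opt[i][j - 1]:
            i -= 1
        else:
            j -= 1
    return "".join(reversed(out))
```

The table has (n+1)(m+1) entries and each takes O(1) time, so the running time is O(nm).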

6.3 Segmented Least Squares

Segmented Least Squares

Least squares.

Foundational problem in statistics and numerical analysis.

Given n points in the plane: $(x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)$.

Find a line y = ax + b that minimizes the sum of the squared error:

$$SSE = \sum_{i=1}^{n} (y_i - a x_i - b)^2$$

Solution. Calculus ⇒ min error is achieved when

$$a = \frac{n \sum_i x_i y_i - \left(\sum_i x_i\right)\left(\sum_i y_i\right)}{n \sum_i x_i^2 - \left(\sum_i x_i\right)^2}, \qquad b = \frac{\sum_i y_i - a \sum_i x_i}{n}$$

[Figure: sample points and a fitted line in the x-y plane.]
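The closed-form solution translates directly into code. This is a minimal sketch; the function name and the handling of the degenerate case (all x equal, where the denominator vanishes) are assumptions.

```python
def fit_line(points):
    """Least-squares line through points [(x, y), ...].

    Returns (a, b, sse) for the line y = a*x + b.
    """
    n = len(points)
    sx = sum(x for x, _ in points)
    sy = sum(y for _, y in points)
    sxy = sum(x * y for x, y in points)
    sxx = sum(x * x for x, _ in points)
    denom = n * sxx - sx * sx
    # Assumed fallback: if all x coincide, use a horizontal line at the mean.
    a = (n * sxy - sx * sy) / denom if denom != 0 else 0.0
    b = (sy - a * sx) / n
    sse = sum((y - a * x - b) ** 2 for x, y in points)
    return a, b, sse
```

For points that lie exactly on a line, such as (0, 1), (1, 3), (2, 5), the fit recovers a = 2, b = 1 with zero error.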

Segmented Least Squares

Segmented least squares.

Points lie roughly on a sequence of several line segments.

Given n points in the plane $(x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)$ with $x_1 < x_2 < \dots < x_n$, find a sequence of lines that minimizes f(x).

Q. What's a reasonable choice for f(x) to balance accuracy (goodness of fit) and parsimony (number of lines)?

[Figure: points in the x-y plane lying roughly on several line segments.]

Segmented Least Squares

Segmented least squares.

Points lie roughly on a sequence of several line segments.

Given n points in the plane $(x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)$ with $x_1 < x_2 < \dots < x_n$, find a sequence of lines that minimizes:

– the sum of the sums of the squared errors E in each segment

– the number of lines L

Tradeoff function: E + c L, for some constant c > 0.

[Figure: points in the x-y plane lying roughly on several line segments.]

Dynamic Programming: Multiway Choice

Notation.

OPT(j) = minimum cost for points $p_1, p_2, \dots, p_j$.

e(i, j) = minimum sum of squares for points $p_i, p_{i+1}, \dots, p_j$.

To compute OPT(j):

Last segment uses points $p_i, p_{i+1}, \dots, p_j$ for some i.

Cost = e(i, j) + c + OPT(i-1).

$$\mathrm{OPT}(j) = \begin{cases} 0 & \text{if } j = 0 \\ \min_{1 \le i \le j} \{\, e(i, j) + c + \mathrm{OPT}(i-1) \,\} & \text{otherwise} \end{cases}$$

Segmented Least Squares: Algorithm

INPUT: n, $p_1, \dots, p_n$, c

Segmented-Least-Squares() {
    M[0] = 0
    for j = 1 to n
        for i = 1 to j
            compute the least square error e_ij for the segment p_i,…, p_j

    for j = 1 to n
        M[j] = min_{1 ≤ i ≤ j} (e_ij + c + M[i-1])

    return M[n]
}

Running time. O($n^3$). This can be improved to O($n^2$) by pre-computing various statistics.

Bottleneck = computing e(i, j) for O($n^2$) pairs, O(n) per pair using previous formula.
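A direct O($n^3$) sketch of the algorithm in Python, extended to also report the segments. The function names, the `first` array used to recover the segmentation, and the fallback for a segment whose x values coincide are assumptions for illustration. Points are assumed to be sorted by x.

```python
def segment_error(points):
    """Least-squares error of the best single line through the points."""
    n = len(points)
    sx = sum(x for x, _ in points)
    sy = sum(y for _, y in points)
    sxy = sum(x * y for x, y in points)
    sxx = sum(x * x for x, _ in points)
    denom = n * sxx - sx * sx
    a = (n * sxy - sx * sy) / denom if denom != 0 else 0.0
    b = (sy - a * sx) / n
    return sum((y - a * x - b) ** 2 for x, y in points)

def segmented_least_squares(points, c):
    """Return (minimum cost, list of (i, j) segments, 1-indexed inclusive)."""
    n = len(points)
    # e[i][j] = error of one line through points i..j (1-indexed).
    e = [[0.0] * (n + 1) for _ in range(n + 1)]
    for j in range(1, n + 1):
        for i in range(1, j + 1):
            e[i][j] = segment_error(points[i - 1:j])

    M = [0.0] * (n + 1)
    first = [0] * (n + 1)  # first[j] = start of the last segment in OPT(j)
    for j in range(1, n + 1):
        best_i = min(range(1, j + 1), key=lambda i: e[i][j] + c + M[i - 1])
        M[j] = e[best_i][j] + c + M[best_i - 1]
        first[j] = best_i

    # Recover the segments by following first[] back from n.
    segments = []
    j = n
    while j > 0:
        segments.append((first[j], j))
        j = first[j] - 1
    return M[n], segments[::-1]
```

A small c favors many well-fitting segments; a large c pushes the answer toward a single line.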