Clustering - Courses - University of California, Berkeley


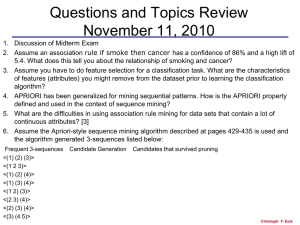

Clustering

Ram Akella, Lecture 6, February 23, 2011

1290 & 280I, University of California, Berkeley, Silicon Valley Center/SC

Class Outline

- Clustering: Introduction, Mechanics
- Recommender Systems

What is Cluster Analysis?

Finding groups of objects such that the objects in a group are similar (or related) to one another and different from (or unrelated to) the objects in other groups: intra-cluster distances are minimized and inter-cluster distances are maximized.

Potential Applications: Numerical Taxonomy

Differentiating between species of animals or plants according to their physical similarities. For example, fifty plants from 3 different species were collected and measurements such as sepal length, sepal width, petal length and petal width were taken.

Potential Applications: Market Segmentation

The market is divided into smaller groups that are more homogeneous in the needs and wants of the marketplace.

Objectives of Cluster Analysis

- Address the heterogeneity in the data: divide the data into more homogeneous groups.
- Find the natural modality of the data: determine whether the data contains naturally occurring, homogeneous subsets.

Measures of Distance, Dissimilarity and Density

To measure the proximity of data we can use:

- A direct assessment of proximity
- A derived measure of proximity or similarity

Distance Measurements: Euclidean Distance

$$d_{ij} = \left( \sum_{k} (x_{ik} - x_{jk})^2 \right)^{1/2}$$

This is usually applied to standardized data so that all variables receive the same weight (except when the inputs are principal components).

Distance Measurements: Minkowski Metric

$$d_{ij} = \left( \sum_{k} |x_{ik} - x_{jk}|^p \right)^{1/p}$$

The Euclidean distance is the special case p = 2. When p = 1 it is called the city-block metric, because it is like walking from point A to point B in a city laid out on a grid of streets.

Mahalanobis Distance

This distance takes into account the covariance patterns of the data:

$$D_{ij}^2 = (x_i - x_j)' \, \Sigma^{-1} (x_i - x_j)$$

where Σ is the population covariance matrix of the data matrix X. The Mahalanobis distance captures the fact that two points A and B can be equally likely to have been drawn from a multivariate normal distribution even when their Euclidean distances from its center differ.

Agglomerative Clustering

- Start with the points as individual clusters.
- At each step, merge the closest pair of clusters until only one cluster (or k clusters) is left.
- It is the more popular hierarchical clustering technique.
- The key operation is computing the proximity of two clusters; the different ways of defining the distance between clusters distinguish the different algorithms.

Agglomerative Clustering Algorithm

The basic algorithm is:

1. Compute the proximity matrix.
2. Let each data point be a cluster.
3. Repeat
4. Merge the two closest clusters.
5. Update the proximity matrix.
6. Until only a single cluster remains.

Starting Situation

Start with clusters of individual points (p1, p2, p3, p4, p5, ...) and a proximity matrix whose rows and columns are indexed by those points.

Intermediate Situation

After some merging steps, we have some clusters C1, C2, C3, C4 and C5, with a proximity matrix indexed by those clusters. We want to merge the two closest clusters (C2 and C5) and update the proximity matrix.

After Merging

The question is: “How do we update the proximity matrix?” The row and column for the merged cluster C2 ∪ C5 hold unknown entries (?) against C1, C3 and C4, and must be recomputed.

How to Define Inter-Cluster Similarity

- MIN (single link): distance between the two closest points in different clusters
- MAX (complete link): distance between the two farthest points in different clusters
- Group Average: average of all pairwise distances between points of the two clusters
- Distance Between Centroids
- Ward’s Method
- ...
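The Euclidean, Minkowski and Mahalanobis distances defined above can be written directly in NumPy. This is an illustrative sketch only: the function names are hypothetical, and it assumes observations are the rows of X and that Σ is estimated as the population covariance (dividing by n) of those rows.

```python
import numpy as np


def standardize(X):
    """Scale each column (variable) to mean 0 and standard deviation 1."""
    X = np.asarray(X, dtype=float)
    return (X - X.mean(axis=0)) / X.std(axis=0)


def minkowski(x_i, x_j, p):
    """d_ij = (sum_k |x_ik - x_jk|^p)^(1/p); p=1 is city-block, p=2 is Euclidean."""
    diff = np.abs(np.asarray(x_i, dtype=float) - np.asarray(x_j, dtype=float))
    return float(np.sum(diff ** p) ** (1.0 / p))


def euclidean(x_i, x_j):
    """Euclidean distance as the p = 2 special case of the Minkowski metric."""
    return minkowski(x_i, x_j, 2)


def mahalanobis_sq(x_i, x_j, X):
    """D_ij^2 = (x_i - x_j)' Sigma^{-1} (x_i - x_j).

    Assumption: Sigma is the population covariance (divide by n) of the rows of X.
    """
    sigma = np.cov(np.asarray(X, dtype=float), rowvar=False, bias=True)
    diff = np.asarray(x_i, dtype=float) - np.asarray(x_j, dtype=float)
    # Solve Sigma z = diff instead of forming the explicit inverse.
    return float(diff @ np.linalg.solve(sigma, diff))
```

For instance, `minkowski([0, 0], [3, 4], 1)` gives 7.0 (city-block) while `euclidean([0, 0], [3, 4])` gives 5.0.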
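The six-step agglomerative algorithm above, with the MIN, MAX and group-average definitions of inter-cluster proximity, can be sketched as follows. This is a naive, roughly cubic-time illustration rather than an efficient implementation; the function name, the `linkage` values and the use of Euclidean distance are assumptions. Instead of updating the proximity matrix in place, it recomputes each cluster-to-cluster proximity from the point-level matrix, which gives the same merges for these three definitions.

```python
import numpy as np


def agglomerative(X, k=1, linkage="min"):
    """Naive agglomerative clustering (k >= 1); returns (clusters, merges).

    linkage: "min" (single link), "max" (complete link) or "average" (group average).
    """
    X = np.asarray(X, dtype=float)
    # Step 1: point-level proximity matrix (Euclidean distances).
    dist = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    # Step 2: every data point starts as its own cluster (lists of point indices).
    clusters = [[i] for i in range(len(X))]
    merges = []

    def cluster_proximity(a, b):
        block = dist[np.ix_(a, b)]  # all distances between points of a and of b
        if linkage == "min":
            return block.min()
        if linkage == "max":
            return block.max()
        if linkage == "average":
            return block.mean()
        raise ValueError(f"unknown linkage: {linkage!r}")

    # Steps 3-6: repeat until only k clusters (k=1: a single cluster) remain.
    while len(clusters) > k:
        best = None
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                d = cluster_proximity(clusters[i], clusters[j])
                if best is None or d < best[0]:
                    best = (d, i, j)
        d, i, j = best
        # Step 4: merge the two closest clusters and record the merge distance.
        merges.append((clusters[i], clusters[j], float(d)))
        merged = clusters[i] + clusters[j]
        del clusters[j]  # j > i, so index i is unaffected
        # Step 5: the merged cluster replaces cluster i; its proximities are
        # recomputed from `dist` on the next pass of the loop.
        clusters[i] = merged
    return clusters, merges
```

The `merges` list records the order and distance of each merge, which is the information a dendrogram displays.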
Ward’s Method

The similarity of two clusters is based on the increase in squared error when the two clusters are merged.

- Less susceptible to noise and outliers
- Biased towards globular clusters
- Hierarchical analogue of K-means; it can be used to initialize K-means

K-means Clustering

- Partitional clustering approach
- Each cluster is associated with a centroid (center point)
- Each point is assigned to the cluster with the closest centroid
- The number of clusters, K, must be specified
- The basic algorithm is very simple:
  1. Select K points as the initial centroids.
  2. Repeat: form K clusters by assigning every point to its closest centroid, then recompute the centroid of each cluster.
  3. Until the centroids don’t change.

K-means Clustering: Details

- Initial centroids are often chosen randomly, so the clusters produced vary from one run to another.
- The centroid is (typically) the mean of the points in the cluster.
- ‘Closeness’ is measured by Euclidean distance, cosine similarity, correlation, etc.
- K-means will converge for the common similarity measures mentioned above.
- Most of the convergence happens in the first few iterations.
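The basic K-means loop can be sketched in NumPy as follows: random initial centroids, assignment of each point to its closest centroid, recomputation of each centroid as the cluster mean, and a stop once no point changes cluster. The sketch assumes Euclidean closeness; `kmeans`, `max_iter` and `seed` are hypothetical names, and letting an empty cluster keep its previous centroid is just one possible policy.

```python
import numpy as np


def kmeans(X, k, max_iter=100, seed=0):
    """Basic K-means with Euclidean closeness; returns (labels, centroids)."""
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    # 1. Select K distinct data points at random as the initial centroids.
    centroids = X[rng.choice(len(X), size=k, replace=False)].copy()
    labels = None
    for _ in range(max_iter):
        # 2a. Assign every point to its closest centroid (squared Euclidean distance).
        sq_dist = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=-1)
        new_labels = sq_dist.argmin(axis=1)
        # 3. Stop when no point changes cluster (so the centroids would not change).
        if labels is not None and np.array_equal(new_labels, labels):
            break
        labels = new_labels
        # 2b. Recompute each centroid as the mean of the points assigned to it.
        for c in range(k):
            members = X[labels == c]
            if len(members) > 0:  # an empty cluster keeps its previous centroid
                centroids[c] = members.mean(axis=0)
    return labels, centroids
```

Running it with different `seed` values and comparing the resulting clusterings illustrates how the outcome depends on the initial centroids.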
Often the stopping condition is changed to ‘Until relatively few points change clusters’.

Two Different K-means Clusterings

[Figure: the same original points (axes x and y) clustered two ways by K-means; panels “Original Points”, “Optimal Clustering” and “Sub-optimal Clustering”.]

Importance of Choosing Initial Centroids

[Figure: K-means iterations 1 to 6 from one choice of initial centroids, plotted on axes x and y.]

How Many Clusters?

To decide the appropriate number of clusters, run the analysis for different values of K and compare the solutions with the pseudo-F statistic:

$$\text{pseudo-}F = \frac{\operatorname{tr}[B/(K-1)]}{\operatorname{tr}[W/(n-K)]}$$

where B is the inter-cluster (between-cluster) sum-of-squares matrix, W is the intra-cluster (within-cluster) sum-of-squares matrix, K is the number of clusters and n is the number of data points. The larger the pseudo-F statistic, the larger the separation between clusters relative to their internal spread, i.e., the more efficient the clustering.

Evaluating K-means Clusters

The most common measure is the Sum of Squared Error (SSE). For each point, the error is its distance to the nearest cluster centroid; to get the SSE, we square these errors and sum them:

$$\mathrm{SSE} = \sum_{i=1}^{K} \sum_{x \in C_i} \operatorname{dist}^2(m_i, x)$$

where $x$ is a data point in cluster $C_i$ and $m_i$ is the representative point for cluster $C_i$; $m_i$ corresponds to the center (mean) of the cluster.

- Given two clusterings, we can choose the one with the smaller error.
- One easy way to reduce SSE is to increase K, the number of clusters. However, a good clustering with smaller K can have a lower SSE than a poor clustering with higher K.

Problems with Selecting Initial Points

- If there are K ‘real’ clusters, then the chance of selecting one centroid from each cluster is small.
- The chance is relatively small when K is large.
- If the clusters are all the same size, n, then $P = \frac{K!\,n^K}{(Kn)^K} = \frac{K!}{K^K}$: there are $K!\,n^K$ ways to select one centroid from each cluster out of $(Kn)^K$ ways to select K centroids.
- For example, if K = 10, then $P = 10!/10^{10} \approx 0.00036$.
- Sometimes the initial centroids will readjust themselves in the ‘right’ way, and sometimes they don’t.
- Consider an example of five pairs of clusters.

Solutions to Initial Centroids Problem

- Multiple runs: helps, but probability is not on your side
- Sample and use hierarchical clustering to determine initial centroids
- Select more than k initial centroids and then select among these initial centroids, e.g., the most widely separated
- Post-processing
- Bisecting K-means: not as susceptible to initialization issues

Handling Empty Clusters

The basic K-means algorithm can yield empty clusters. There are several strategies for choosing a replacement centroid:

- Choose the point that contributes most to SSE.
- Choose a point from the cluster with the highest SSE.
- If there are several empty clusters, the above can be repeated several times.

Updating Centers Incrementally

In the basic K-means algorithm, centroids are updated after all points are assigned to a centroid. An alternative is to update the centroids after each assignment (incremental approach):

- Each assignment updates zero or two centroids
- More expensive
- Introduces an order dependency
- Never produces an empty cluster
- Can use “weights” to change the impact

Pre-processing and Post-processing

- Pre-processing: normalize the data; eliminate outliers.
- Post-processing:
  - Eliminate small clusters that may represent outliers.
  - Split ‘loose’ clusters, i.e., clusters with relatively high SSE.
  - Merge clusters that are ‘close’ and that have relatively low SSE.
  - These steps can also be used during the clustering process (ISODATA).

Bisecting K-means

The bisecting K-means algorithm is a variant of K-means that can produce a partitional or a hierarchical clustering.
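For the SSE and pseudo-F formulas given earlier (Evaluating K-means Clusters, How Many Clusters?), a NumPy sketch might look like this. `sse` and `pseudo_f` are hypothetical names; `labels` is assumed to hold integer cluster indices 0..K−1 and `centroids` one row per cluster. It uses tr(B) = Σᵢ nᵢ‖mᵢ − m‖² and tr(W) = Σᵢ Σ_{x∈Cᵢ} ‖x − mᵢ‖², where m is the overall mean and nᵢ the size of cluster Cᵢ; tr(W) equals the SSE when each mᵢ is the cluster mean. The statistic is the same quantity often called the Calinski–Harabasz index.

```python
import numpy as np


def sse(X, labels, centroids):
    """SSE = sum_i sum_{x in C_i} dist^2(m_i, x), with Euclidean dist."""
    X = np.asarray(X, dtype=float)
    centroids = np.asarray(centroids, dtype=float)
    labels = np.asarray(labels)
    # centroids[labels] lines up each point with the representative m_i of its cluster.
    return float(((X - centroids[labels]) ** 2).sum())


def pseudo_f(X, labels):
    """pseudo-F = (tr(B) / (K - 1)) / (tr(W) / (n - K)); needs 1 < K < n."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    n = len(X)
    cluster_ids = np.unique(labels)
    K = len(cluster_ids)
    grand_mean = X.mean(axis=0)
    tr_B = 0.0  # between-cluster: sum_i n_i * ||m_i - m||^2
    tr_W = 0.0  # within-cluster: sum_i sum_{x in C_i} ||x - m_i||^2
    for c in cluster_ids:
        members = X[labels == c]
        m_i = members.mean(axis=0)
        tr_B += len(members) * float(((m_i - grand_mean) ** 2).sum())
        tr_W += float(((members - m_i) ** 2).sum())
    return (tr_B / (K - 1)) / (tr_W / (n - K))
```

To choose K, one would compute `pseudo_f` on the labels obtained for each candidate K and favour the largest value; SSE alone is not suitable for this, since it tends to fall as K grows.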
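The first strategy under Handling Empty Clusters (reseed an empty cluster with the point that contributes most to SSE, repeating for each empty cluster) could look like this. `fill_empty_clusters` is a hypothetical name; the sketch assumes `labels` and `centroids` come from a K-means assignment step, never takes the only point of a cluster (so no new empty cluster is created), and leaves the donor clusters’ centroids for the next K-means update to recompute.

```python
import numpy as np


def fill_empty_clusters(X, labels, centroids):
    """Give each empty cluster the point that contributes most to SSE."""
    X = np.asarray(X, dtype=float)
    labels = np.array(labels, dtype=int)  # copy, so the caller's array is untouched
    centroids = np.array(centroids, dtype=float)
    k = len(centroids)
    for c in range(k):  # the step is repeated once per empty cluster
        if np.any(labels == c):
            continue
        counts = np.bincount(labels, minlength=k)
        # Each point's contribution to SSE: squared distance to its own centroid.
        errors = ((X - centroids[labels]) ** 2).sum(axis=1)
        # Never take the only point of a cluster, or that cluster would become empty.
        errors[counts[labels] <= 1] = -1.0
        worst = int(errors.argmax())
        centroids[c] = X[worst]
        labels[worst] = c
    # Donor clusters keep their old centroids; the next K-means step recomputes them.
    return labels, centroids
```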
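The incremental update described under Updating Centers Incrementally can be sketched as a running-mean correction. When a point x leaves cluster a (size nₐ) and joins cluster b (size n_b), mₐ becomes mₐ − (x − mₐ)/(nₐ − 1) and m_b becomes m_b + (x − m_b)/(n_b + 1), so each reassignment touches zero or two centroids. The function name and its one-pass structure are assumptions for illustration, and it assumes every cluster is non-empty in the starting labels.

```python
import numpy as np


def incremental_pass(X, labels, k):
    """One pass of incremental K-means; returns (labels, centroids, moves)."""
    X = np.asarray(X, dtype=float)
    labels = np.array(labels, dtype=int)  # copy, so the caller's array is untouched
    # Assumes every cluster 0..k-1 is non-empty in the starting labels.
    centroids = np.array([X[labels == c].mean(axis=0) for c in range(k)])
    counts = np.bincount(labels, minlength=k).astype(float)
    moves = 0
    # Points are visited in index order: a different order can give a different result.
    for idx, x in enumerate(X):
        a = labels[idx]
        b = int(((centroids - x) ** 2).sum(axis=1).argmin())
        if b == a or counts[a] == 1:
            # Zero centroids change. A point alone in its cluster lies on that
            # centroid, so it would only move on an exact tie; skipping it
            # guarantees no cluster becomes empty.
            continue
        # Two centroids change: remove x from cluster a, then add it to cluster b.
        centroids[a] -= (x - centroids[a]) / (counts[a] - 1)
        counts[a] -= 1
        counts[b] += 1
        centroids[b] += (x - centroids[b]) / counts[b]
        labels[idx] = b
        moves += 1
    return labels, centroids, moves
```

Because centroids shift after every move, later points in the loop see updated centroids; this is the order dependency and the extra cost the slide mentions.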