
Policy Implementation

Does the program work as intended?

What happens after a policy is adopted?

Federal or state departments with administrative authority for the program design the regulations.

Draft regulations are submitted for public review.

Regulations may be modified based on public comments.

Final regulations are issued (in the Federal Register for federal policies).

Money is allocated to the programs that will implement the policy.

Responsibility for implementation could lie with federal, state, or local government, or with nonprofit and for-profit agencies.

For nongovernmental agencies there will be some type of grant or contracting process.

For all types of agencies, some type of monitoring process will be put in place to ensure compliance with the policy.

Implementation defined:

Implementation analysis focuses on whether policies and procedures have actually been implemented in the organization in the manner intended by decision-makers.

Program implementation is primarily concerned with how policies mandated by a source external to the organization are actually carried out, or how the implementation of policies varies across different organizations or locations.

Another term used for examining implementation is program monitoring:

Program Monitoring involves gathering information about how the program is working and who it serves.

Program monitoring starts when the program has begun to be implemented. Consequently, it differs from outcome evaluations, which take place after a service outcome is produced. Monitoring examines what takes place during program start-up and in the process of producing outcomes.

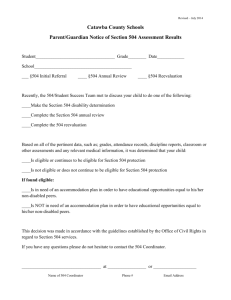

One easy method for determining whether a program is meeting the needs of clients is to review the information gathered from clients to determine eligibility for services. Organizations generally gather basic demographic information about applicants, such as gender, age, income, and family size. Information is also retained about whether the applicant was found to be eligible for service and the disposition of the application.

If not eligible for service, was the applicant referred elsewhere, placed on a waiting list or turned away?
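As a rough illustration of how intake records can answer this question, the sketch below tallies the dispositions of ineligible applicants. The record fields (`eligible`, `disposition`) and the sample values are hypothetical and do not come from any particular agency's case record system.

```python
from collections import Counter

# Hypothetical intake records; field names and values are illustrative only.
applications = [
    {"age": 34, "family_size": 3, "eligible": True, "disposition": "served"},
    {"age": 51, "family_size": 1, "eligible": False, "disposition": "referred"},
    {"age": 27, "family_size": 4, "eligible": False, "disposition": "turned_away"},
    {"age": 45, "family_size": 2, "eligible": False, "disposition": "waiting_list"},
    {"age": 62, "family_size": 1, "eligible": False, "disposition": "referred"},
]


def disposition_counts(records, eligible):
    """Count dispositions among applicants with the given eligibility status."""
    return Counter(r["disposition"] for r in records if r["eligible"] == eligible)


# How were applicants who did not qualify handled?
ineligible = disposition_counts(applications, eligible=False)
for disposition, count in sorted(ineligible.items()):
    print(disposition, count)
```

A tally like this only describes what the agency recorded; it says nothing about applicants who never reached intake.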

Case record data allow organizations to look at factors such as demand for service and the incidence and prevalence of social problems. Demand is an indicator of all those people who actually try to obtain a service (Burch, 1996).

Information is also kept on file about the outcomes associated with the actual service delivery process. Was the service provided successful? The failure of clients to complete the program, or unsuccessful case outcomes, can be indicators that the program has not been implemented in the manner intended (Chambers et al., 1992).

Program planners also look at data on current and former clients to determine whether the organization provides services to all intended beneficiaries. The program can conceivably serve only a portion of the eligible population, exclude some eligible clients, or provide services to people who do not fit the eligibility criteria.

Program coverage is the term used to describe whether members of the program’s target population actually receive the service (Rossi & Freeman, 1982). Program bias refers to whether the people served by the program are demographically representative of the target population.
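One possible way to put numbers on coverage and bias is sketched below. It assumes coverage is measured as the share of the target population served, and bias as the gap between each group's share of clients and its share of the target population. These operational definitions, the function names, and the counts are illustrative assumptions, not formulas taken from Rossi and Freeman.

```python
def coverage_rate(served_in_target, target_population):
    """Share of the target population that actually received the service."""
    if target_population <= 0:
        raise ValueError("target_population must be positive")
    return served_in_target / target_population


def representation_gaps(served_by_group, target_by_group):
    """Per group: share among clients served minus share in the target population.

    Positive values mean the group is over-represented among clients;
    negative values mean it is under-represented.
    """
    served_total = sum(served_by_group.values())
    target_total = sum(target_by_group.values())
    if served_total <= 0 or target_total <= 0:
        raise ValueError("both populations must be non-empty")
    gaps = {}
    for group, target_count in target_by_group.items():
        served_share = served_by_group.get(group, 0) / served_total
        target_share = target_count / target_total
        gaps[group] = served_share - target_share
    return gaps


# Hypothetical counts by age group (all served clients are assumed eligible).
target = {"under_30": 400, "30_to_59": 400, "60_plus": 200}
served = {"under_30": 150, "30_to_59": 120, "60_plus": 30}

print(coverage_rate(sum(served.values()), sum(target.values())))
print(representation_gaps(served, target))
```

In this made-up example, a negative gap for the oldest group would suggest that older members of the target population are under-served relative to their numbers.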

Responsibility for Monitoring/evaluation may originate:

In the original legislation.

In the department responsible for administration and oversight (Federal departments have an Inspector General for that department).

Regular tracking, monitoring, or evaluation processes within each agency or department.

Other sources of monitoring

Nonprofit advocacy or professional groups.

General public or program recipients may make complaints.

Whistleblowers in the agency.

Congressional or state legislative committees may conduct research or hold hearings about program implementation or lack of implementation.

Why might policies not be implemented as intended?

Lack of funding and other resources (such as trained staff).

Policy has conflicting goals or policy conflicts with goals of other laws or policies [for example, enforcement of war-related contracting requirements].

Regulations are confusing or limit access to services.

Executive branch does not support, give priority to, or enforce the policy (for example, motor voter laws). Administrative staff are resistant or lack the ability to comply with the policy.

Staff are resistant to innovation or lack resources/training.

Policy may not be realistic or does not meet needs.

Policy may have unintended side-effects.

Policy innovation may be the wrong intervention with which to address the problem or to serve specific groups of clientele.

Enforcement mechanism is non-existent; may be difficult to make changes in administrative support or staff.

Regional or local variations in how programs are operated or maintained.

The literature on program implementation specifies 4 primary reasons for implementation problems:

Component Evaluation. This method of evaluation focuses on one particular aspect or parts of a program (for example intake services, referrals, intervention planning, staff-client interaction, or a specific type of intervention that can be differentiated from other services).

Effort Evaluation. The primary focus of this type of evaluation is the amount of activity or work that is put into the program and the quality of that work. Effort evaluation can simply look at the number of qualified staff hired for the program, client-staff ratios, and the number of clients actually served. It can also examine the resources (money, facilities, worker time, training modules, etc.) that are devoted to the program.

Treatment Specification. This type of evaluation is used to precisely identify the components of an intervention and the theory of action used to deliver the service and produce outcomes (see Chapter 6).
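The effort-evaluation measures mentioned above (client-staff ratios, clients served, resources devoted to the program) reduce to simple arithmetic. The sketch below is a minimal example using hypothetical figures; the function name and the choice of cost per client as a resource measure are assumptions made for illustration.

```python
def effort_summary(clients_served, qualified_staff, budget_spent):
    """Summarize program effort from three basic counts."""
    if clients_served <= 0 or qualified_staff <= 0:
        raise ValueError("clients_served and qualified_staff must be positive")
    return {
        "clients_per_staff": clients_served / qualified_staff,
        "cost_per_client": budget_spent / clients_served,
    }


# Hypothetical program year: 240 clients, 8 qualified staff, $120,000 spent.
summary = effort_summary(clients_served=240, qualified_staff=8, budget_spent=120_000)
print(summary)
```

These figures describe the amount of effort only; judging the quality of that work requires other evidence, such as case record reviews or observation.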

Program implementation analysis also looks at the degree to which a program has “drifted” from its original intent and program specifications, or the degree of compliance with the expectations of program sponsors or funders. Common implementation problems include failing to deliver the intended intervention, using the wrong intervention to produce the intended outcome, or providing the intervention inconsistently over time (Chambers et al., 1992).

Reform options for policy advocates (from Jansson):

Changing the policy innovation itself (content, objectives, funding).

Changing the activities of oversight organizations, regulations, or monitoring processes.

Naming different agencies or adding new agencies to implement or requiring collaboration among agencies.

Changing the internal or external implementing processes of implementing organizations.

Modifying the context

Influencing the assessments (evaluations) of policy outcomes.

Obtaining additional resources.

Placing pressure on implementing agencies through whistle-blowing, advocacy, political pressure, or protests [for example, public and media pressure around regulation of pesticide use].

Sources of power for challenging policy implementation:

Political pressure – electoral politics/campaign donations; using existing networks or relationships to influence decision-makers.

Disseminating information and informing the public through the media.

Protests and rallies.

Commenting on proposed regulations.

Policy formulation and lobbying for new legislation.

Pressuring agencies for compliance with existing standards.

Legal remedies such as public records requests and lawsuits [there are limits on the ability to sue federal and state agencies].

Example of Compliance-related Advocacy:

http://video.google.com/videosearch?q=policy+advocacy&hl=en&sitesearch=&start=50