Session 2 - Implementation and process evaluation

Implementation

Neil Humphrey (Manchester)

Ann Lendrum (Manchester)

Process evaluation

Louise Tracey (IEE York)

Implementation: what is it, why is it important and how can we assess it?

Neil Humphrey, Ann Lendrum and Michael Wigelsworth

Manchester Institute of Education

University of Manchester, UK

neil.humphrey@manchester.ac.uk

Overview

• What is implementation?

• Why is studying implementation important?

• How can we assess implementation?

• Activity

• Feedback

• Sources of further information and support

What is implementation?

• Implementation is the process by which an intervention is put into practice

• If assessment of outcomes answers the question of ‘what works’, assessment of implementation helps us to understand how and why

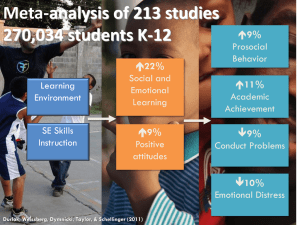

• Implementation science has grown dramatically in recent years. For example, in the field of social and emotional learning, an early review by Durlak (1997) found that only 5 per cent of intervention studies provided data on implementation. This figure had risen to 57 per cent 14 years later (Durlak et al, 2011)

What is implementation?

• Aspects of implementation

– Fidelity/adherence

– Dosage

– Quality

– Participant responsiveness

– Programme differentiation

– Programme reach

– Adaptation

– Monitoring of comparison conditions

• Factors affecting implementation

– Preplanning and foundations

– Implementation support system

– Implementation environment

– Implementer factors

– Programme characteristics

• See Durlak and DuPre (2008), Greenberg et al (2005), Forman et al (2009)

Why is studying implementation important?

• Domitrovich and Greenberg (2000) identify five reasons:

1. So that we know what happened in an intervention

2. So that we can establish the internal validity of the intervention and strengthen conclusions about its role in changing outcomes

3. To understand the intervention better: how different elements fit together, how users interact with it, etc.

4. To provide ongoing feedback that can enhance subsequent delivery

5. To advance knowledge on how best to replicate programme effects in real-world settings

• However, there are two very compelling additional reasons!

– Interventions are rarely, if ever, implemented as designed

– Variability in implementation has been consistently shown to predict variability in outcomes

• So, implementation matters!

Why is studying implementation important?

[Figure: Teacher-rated change in SDQ peer problems by level of participant responsiveness (PR: low, moderate, high), PATHS vs control]

Why is studying implementation important?

[Figure: InCAS Reading outcomes by implementation dosage (low, moderate, high), PATHS vs control]
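The two charts compare outcome change in PATHS and control classes at different levels of implementation. A minimal Python sketch of that kind of descriptive grouping follows. It assumes each intervention class has a single implementation rating on a hypothetical 1-5 scale, split into bands at hypothetical cut-points (`LOW_MAX`, `MODERATE_MAX`); the function names and all data are illustrative and this is not the trial's analysis.

```python
from statistics import mean

# Hypothetical cut-points on an assumed 1-5 class-level implementation rating.
LOW_MAX = 2.5       # ratings below this count as "low"
MODERATE_MAX = 3.5  # ratings from LOW_MAX up to (not including) this are "moderate"


def band(score: float) -> str:
    """Classify an implementation rating as low, moderate or high."""
    if score < LOW_MAX:
        return "low"
    if score < MODERATE_MAX:
        return "moderate"
    return "high"


def mean_change_by_band(classes: list[tuple[float, float]]) -> dict[str, float]:
    """Mean outcome change per implementation band.

    `classes` holds (implementation_rating, outcome_change) pairs for
    intervention classes; bands containing no classes are omitted.
    """
    groups: dict[str, list[float]] = {"low": [], "moderate": [], "high": []}
    for score, change in classes:
        groups[band(score)].append(change)
    return {name: mean(changes) for name, changes in groups.items() if changes}


# Illustrative made-up data, not results from any trial.
intervention = [(1.8, 0.05), (2.2, 0.01), (3.0, -0.08),
                (3.2, -0.12), (4.1, -0.25), (4.6, -0.31)]
control_changes = [0.02, -0.01, 0.03, 0.00]

print("Intervention by band:", mean_change_by_band(intervention))
print("Control mean:", mean(control_changes))
```

With these made-up numbers the high-implementation band shows the largest fall (SDQ peer problems is a difficulties scale, so a negative change is an improvement). In practice, cut-points might instead be derived from the data, for example tertiles via `statistics.quantiles(scores, n=3)`.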

How can we assess implementation?

• Some choices

– Quantitative, qualitative or both?

– Using bespoke or generic tools?

– Implementer self-report or independent observations?

– Frequency of data collection?

– Which aspects to assess?

• Some tensions

– Implementation provides natural variation: we cannot randomise people to be good or poor implementers! (although some researchers are randomising key factors affecting implementation, such as coaching support)

– Assessment of implementation can be extremely time consuming and costly

– Fidelity and dosage have been the predominant aspects studied because they are generally easier to quantify and measure. We therefore know much less about the influence of programme differentiation, quality, etc.

– The nature of a given intervention can influence the relative ease with which we can accurately assess implementation (for example, the assessment of fidelity is relatively straightforward in highly prescriptive, manualised interventions)
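For a highly prescriptive, manualised intervention, adherence and dosage can each be expressed as a simple proportion. The Python sketch below assumes an observer ticks off which of a lesson's prescribed components were delivered; the class, function and component names are hypothetical placeholders, not the PATHS observation schedule or any published tool.

```python
from dataclasses import dataclass, field


@dataclass
class LessonObservation:
    """One observed lesson scored against a manual's checklist (hypothetical)."""
    prescribed: list[str]                             # components the manual specifies
    delivered: set[str] = field(default_factory=set)  # components the observer saw

    def adherence(self) -> float:
        """Proportion of prescribed components that were delivered (0.0-1.0)."""
        if not self.prescribed:
            raise ValueError("lesson has no prescribed components")
        hits = sum(1 for component in self.prescribed if component in self.delivered)
        return hits / len(self.prescribed)


def dosage(lessons_delivered: int, lessons_planned: int) -> float:
    """Proportion of planned lessons actually delivered."""
    if lessons_planned <= 0:
        raise ValueError("lessons_planned must be positive")
    return lessons_delivered / lessons_planned


# Hypothetical lesson: component names are placeholders, not a real manual.
obs = LessonObservation(
    prescribed=["review", "new_concept", "role_play", "wrap_up"],
    delivered={"review", "new_concept", "wrap_up"},
)
print(obs.adherence())   # 0.75 (3 of 4 components delivered)
print(dosage(45, 60))    # 0.75 (45 of 60 planned lessons delivered)
```

Components delivered but not in the manual (possible adaptations) are ignored by `adherence`, so they would need recording separately.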

Activity

• Think about a school-based intervention that you are evaluating – whether for the EEF or another funder

• How are you assessing implementation?

• What choices did you make (see previous slide) and why?

• What difficulties have you experienced? How are these being overcome?

• How do you plan to analyse your data?

• What improvements could be made to your implementation assessment protocol?

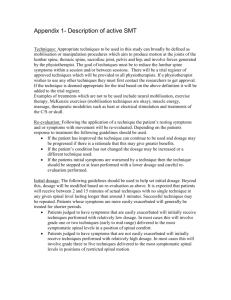

Assessment of implementation – case study (PATHS trial)

• PATHS trial overview

– Universal social-emotional learning curriculum delivered in twice-weekly lessons, augmented by generalisation techniques and home-link work

– 45 schools randomly allocated to deliver PATHS or continue practice as usual for 2 years

– c. 5,000 children aged 7-9 at the start of the trial

– Outcomes assessed: social-emotional skills, emotional and behavioural difficulties, health-related quality of life, various school outcomes (attendance, attainment, exclusions)

• Assessment of implementation

– Independent observations

• Structured observation schedule developed, drawing on existing tools

• Piloted and refined using video footage of PATHS lessons; inter-rater reliability established

• One lesson observation per class; 10% of observations moderated by AL (Ann Lendrum) to maintain inter-rater reliability

• Provides quantitative ratings of fidelity/adherence, dosage, quality, participant responsiveness, reach and qualitative fieldnotes on each of these factors

– Teacher self-report

• Teacher implementation survey developed following structure/sequence of observation schedule to promote comparability

• Teachers asked to report on their implementation of each of the above factors over the course of the school year, and to describe the nature of any adaptations made (surface vs deep)

– School liaison report

• Annual survey on usual practice in relation to social-emotional learning (both universal and targeted) to provide data on programme differentiation

• Analysis using three-level multilevel models (school, class, pupil)

• Plus! Lots of qualitative data derived from interviews with teachers and further quantitative data on factors affecting implementation
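The case study reports that inter-rater reliability was established during piloting and maintained through moderation, without naming the statistic used. One common choice for categorical ratings is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. A minimal Python sketch, with made-up ratings; the function name and data are illustrative only.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa: chance-corrected agreement between two raters.

    Both lists hold categorical ratings of the same items, in the same order.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must rate the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same category by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected chance agreement, from each rater's marginal category counts.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return (observed - expected) / (1 - expected)


# Made-up ratings of ten observed lessons by a field observer and a moderator.
observer = ["high", "high", "moderate", "low", "moderate",
            "high", "low", "moderate", "high", "moderate"]
moderator = ["high", "moderate", "moderate", "low", "moderate",
             "high", "low", "low", "high", "moderate"]
print(round(cohens_kappa(observer, moderator), 3))  # 0.697
```

Here the raters agree on 8 of 10 lessons (0.80), but chance alone would give 0.34, so kappa is lower than raw agreement. For ordinal scales such as low/moderate/high, a weighted kappa, or an intraclass correlation for continuous ratings, may be more appropriate.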

Sources of further information and support

• Some reading

– Lendrum, A. & Humphrey, N. (2012). The importance of studying the implementation of school-based interventions. Oxford Review of Education, 38, 635-652.

– Durlak, J.A. & DuPre, E.P. (2008). Implementation matters: A review of research on the influence of implementation on program outcomes and the factors affecting implementation. American Journal of Community Psychology, 41, 327-350.

– Kelly, B. & Perkins, D. (Eds.) (2012). Handbook of implementation science for psychology in education. Cambridge: CUP.

• Organisations

– Global Implementation Initiative: http://globalimplementation.org/

– UK Implementation Network: http://www.cevi.org.uk/ukin.html

• Journals

– Implementation Science: http://www.implementationscience.com/

– Prevention Science: http://link.springer.com/journal/11121

Developing our approach to process evaluation

Louise Tracey

Process Evaluation:

‘documents and analyses the development and implementation of a programme, assessing whether strategies were implemented as planned and whether expected output was actually produced’

(Bureau of Justice Assistance, 1997; cf. EEF, 2013)

Reasons for Process Evaluation

1. Formative

2. Implementation/Fidelity

3. Understanding Impact

Methods of Process Evaluation

Quantitative / Qualitative

Observations

Interviews

Focus groups

Surveys

Instruments

Programme data

Plymouth Parent Partnership: SPOKES

Literacy programme for parents of struggling readers in Year 1

6 Cohorts

Impact Evaluation:

• Pre-test, post-test, 6-month & 12-month follow-up

Plymouth Parent Partnership: SPOKES

Process Evaluation:

• Parent Telephone Interview

• PARYC

• Teacher SDQs

• Parent Questionnaire

• Attendance Records

• Parent Programme Evaluation Survey

SFA Primary Evaluation

Impact Evaluation:

• RCT of SFA in 40 schools

• Pre-test / post-test (Reception) & 12-month follow-up (Year 1)

• National Data (KS1/2)

Process Evaluation:

• Observation

• Routine Data

Discussion Questions

1. What are the key features of your process evaluation? Why did you choose them?

2. What were the main challenges? How have you overcome them?

Key Features?

Why chosen?

1. Key stakeholders

2. Inclusivity

3. Reliability

4. Costs

5. Inform impact evaluation

Main challenges?

How overcome?

1. Shared understanding with key stakeholders/implementers

2. Reliability

3. Burden on schools

4. Control groups

5. Costs

Any Questions?

Thank you!

louise.tracey@york.ac.uk