NLP


CS 4100 Artificial Intelligence

Prof. C. Hafner

Class Notes April 3 and 5, 2012

Why Natural Language Processing?

• Huge amounts of data

– Internet = at least 20 billion pages

– Intranet

• Applications for processing large amounts of text require NLP expertise

• Classify text into categories

• Index and search large texts

• Automatic translation

• Speech understanding

– Understand phone conversations

• Information extraction

– Extract useful information from resumes

• Automatic summarization

– Condense 1 book into 1 page

• Question answering

• Knowledge acquisition

• Text generation / dialogues

Natural?

• Natural Language?

– Refers to the language spoken by people, e.g. English, Japanese, Swahili, as opposed to artificial languages, like C++, Java, etc.

• Natural Language Processing

– Applications that deal with natural language in a useful way (beyond token/string matching)

• Computational Linguistics

– Doing linguistics on computers

– More on the linguistic side than NLP, but closely related

Why Natural Language Processing?

• kJfmmfj mmmvvv nnnffn333

• Uj iheale eleee mnster vensi credur

• Baboi oi cestnitze

• Coovoel2^ ekk; ldsllk lkdf vnnjfj?

• Fgmflmllk mlfm kfre xnnn!

Computers Lack Knowledge!

• Computers “see” text in English the same way you saw the previous text!

• People naturally have

– “Common sense”

– Reasoning capacity

– Years of life experience

• Computers naturally have

– No common sense

– No reasoning capacity

– No life experience

Where does it fit in the CS taxonomy?

[Diagram: nested areas of computer science. Labels: Computers; Databases; Artificial Intelligence; Robotics; Information Retrieval; Algorithms; Networking; Search; Natural Language Processing; Machine Translation; Language Analysis; Semantics; Parsing]

Linguistic Levels of Analysis

• Speech and text (and sign language)

• Levels

– Phonology: sounds / letters / pronunciation

– Morphology: the structure of words

– Syntax: how sequences of words are structured

– Semantics: meaning of the strings

– Pragmatics: what we use language to accomplish

• Interaction between levels

Issues in Syntax

• Shallow parsing:

“the dog chased the bear”

“the dog” “chased the bear”

subject - predicate

Identify basic structures

NP-[the dog] VP-[chased the bear]

• Deeper analysis: “the dog ate my homework”

– Who did what? (literal meaning) - semantics

– The meaning in context - pragmatics
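
The shallow-parsing step above (splitting a sentence into a subject NP and a predicate VP) can be sketched in a few lines. This is only an illustration: the part-of-speech lexicon `POS` and the function `shallow_parse` are made-up names, and the "everything before the first verb is the subject" rule is an assumption that holds only for very simple sentences.

```python
# Hypothetical mini-lexicon of part-of-speech tags (not from the notes).
POS = {"the": "Det", "my": "Det", "dog": "N", "bear": "N",
       "homework": "N", "chased": "V", "ate": "V"}

def shallow_parse(sentence):
    """Split a simple sentence into a subject NP and a predicate VP."""
    words = sentence.lower().split()
    tags = [POS[w] for w in words]  # raises KeyError on unknown words
    # Assumption: the subject NP is everything before the first verb,
    # and the VP is the verb plus everything after it.
    verb_at = tags.index("V")
    return {"NP": " ".join(words[:verb_at]), "VP": " ".join(words[verb_at:])}

print(shallow_parse("the dog chased the bear"))
```

Note that this says nothing about who did what in a deeper sense, or about meaning in context; it only identifies the basic structures.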

Issues in Syntax

• Full parsing: John loves Mary

Helps answer (automatically) questions like: Who did what, and when?

More Issues

• Anaphora Resolution: discourse

“The dog entered my room. It scared me”

• Preposition Attachment (syntax & semantics)

“I saw the man in the park with a telescope”

Issues in Semantics

• Understand language! How?

• “plant” = industrial plant

• “plant” = living organism

• Words are ambiguous

• Importance of semantics?

– Machine Translation: wrong translations

– Information Retrieval: wrong information

– Anaphora Resolution: wrong referents

Why Semantics?

• The sea is home to millions of plants and animals

• English → French [commercial MT system]

• Le mer est a la maison de billion des usines et des animaux

• French → English

• The sea is at the home for billions of factories and animals

Issues in Semantics

• How to learn the meaning of words?

• From dictionaries: word senses

plant, works, industrial plant -- (buildings for carrying on industrial labor; "they built a large plant to manufacture automobiles")

plant, flora, plant life -- (a living organism lacking the power of locomotion)

They are producing about 1,000 automobiles in the new plant

The sea flora consists of 1,000 different plant species

The plant was close to the farm.
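
One way to use dictionary senses like the two above is to count the words a sentence shares with each sense entry and pick the sense with the most overlap (a simplified form of the Lesk algorithm). The sketch below is an illustration only: the stopword list, the sense labels, and the function names are assumptions, and the entries are just the synonyms and glosses quoted above.

```python
import re

# Senses of "plant": synonyms plus gloss text, copied from the entries above.
SENSES = {
    "industrial plant": "plant works industrial plant buildings for carrying on "
                        "industrial labor they built a large plant to manufacture automobiles",
    "living organism": "plant flora plant life a living organism lacking the "
                       "power of locomotion",
}
# Small hand-picked stopword list (an assumption for this sketch).
STOPWORDS = {"a", "the", "of", "to", "in", "on", "for", "they", "are", "was", "is"}

def content_words(text, target):
    """Lowercased alphabetic tokens, minus stopwords and the target word itself."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return {t for t in tokens if t not in STOPWORDS and t != target}

def disambiguate(sentence, target="plant"):
    context = content_words(sentence, target)
    scores = {sense: len(context & content_words(gloss, target))
              for sense, gloss in SENSES.items()}
    best = max(scores.values())
    winners = [s for s, v in scores.items() if v == best]
    # No overlap, or a tie, means the dictionary alone cannot decide.
    return winners[0] if best > 0 and len(winners) == 1 else None

for s in ["They are producing about 1,000 automobiles in the new plant",
          "The sea flora consists of 1,000 different plant species",
          "The plant was close to the farm."]:
    print(s, "->", disambiguate(s))
```

The first sentence shares “automobiles” with the industrial sense and the second shares “flora” with the organism sense. The third shares nothing with either entry, so the function returns `None`: dictionary overlap alone cannot resolve it.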

Issues in Semantics

• Learn from annotated examples:

– Assume 100 examples containing “plant” previously tagged by a human

– Train a learning algorithm

– How to choose the learning algorithm?

– How to obtain the 100 tagged examples?

Issues in Pragmatics

Why?

To modify the beliefs of other agents

Why?

To change the actions of other agents

Issues in Learning Semantics

• Learning?

– Assume a (large) amount of annotated data = training

– Assume a new text not annotated = test

• Learn from previous experience (training) to classify new data (test)

• Bayes nets, decision trees, memory-based learning (e.g. nearest neighbor), neural networks
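
As an illustration of memory-based learning, here is a minimal nearest-neighbor sketch for tagging a new occurrence of “plant”. The four training sentences and their labels are invented for this example (a real setup would use many more hand-tagged examples), and Jaccard word overlap is just one possible similarity measure.

```python
import re

# Hypothetical hand-tagged training sentences (invented for illustration).
TRAINING = [
    ("the plant manufactures automobiles and employs many workers", "industrial plant"),
    ("workers at the chemical plant went on strike", "industrial plant"),
    ("the plant needs water and sunlight to grow", "living organism"),
    ("this plant has green leaves and small flowers", "living organism"),
]

def bag(text):
    """Bag of words as a set of lowercased alphabetic tokens."""
    return set(re.findall(r"[a-z]+", text.lower()))

def jaccard(a, b):
    """Shared words divided by all words in either set."""
    return len(a & b) / len(a | b)

def classify(sentence):
    """Return the label of the most similar training sentence (1-nearest neighbor)."""
    query = bag(sentence)
    _, label = max(TRAINING, key=lambda ex: jaccard(query, bag(ex[0])))
    return label

print(classify("the new plant will hire two hundred workers"))
print(classify("a plant with yellow flowers and broad leaves"))
```

The training set is the "memory": nothing is learned ahead of time, and all the work happens when a test sentence is compared against the stored examples.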

Issues in Information Extraction

• “There was a group of about 8-9 people close to the entrance on Highway 75”

• Who? “8-9 people”

• Where? “Highway 75”

• Extract information

• Detect new patterns:

– Detect hacking / hidden information / etc.

• Government and military agencies put a lot of money into IE research
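
For the Highway 75 example, a very rough pattern-based extractor might look like the sketch below. The two regular expressions are assumptions written for this one sentence. Real IE systems need far more general patterns or learned models.

```python
import re

def extract(sentence):
    """Pull out a 'who' (a count of people) and a 'where' (a numbered highway)."""
    who = re.search(r"\b\d+(?:-\d+)?\s+people\b", sentence)
    where = re.search(r"\bHighway\s+\d+\b", sentence, flags=re.IGNORECASE)
    return {"who": who.group(0) if who else None,
            "where": where.group(0) if where else None}

text = "There was a group of about 8-9 people close to the entrance on Highway 75"
print(extract(text))
```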

Issues in Information Retrieval

• General model:

– A huge collection of texts

– A query

• Task: find documents that are relevant to the given query

• How? Create an index, like the index in a book

• More …

– Vector-space models

– Boolean models

• Examples: Google, Yahoo, etc.
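
The "index in a book" idea corresponds to an inverted index: for each word, the set of documents that contain it. A minimal sketch with a Boolean AND query follows. The three-document collection and the function names are made up for illustration.

```python
from collections import defaultdict

# Hypothetical three-document collection.
DOCS = {
    1: "the dog chased the bear",
    2: "the dog ate my homework",
    3: "the bear entered my room",
}

def build_index(docs):
    """Map each term to the set of ids of the documents containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return index

def search(index, query):
    """Boolean AND: ids of documents containing every query term."""
    postings = [index.get(term, set()) for term in query.lower().split()]
    return sorted(set.intersection(*postings)) if postings else []

index = build_index(DOCS)
print(search(index, "dog"))
print(search(index, "my bear"))
```

A vector-space model would replace the strict AND with a similarity score between the query and each document, so that documents can be ranked instead of simply matched.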

Issues in Information Retrieval

• Retrieve specific information

• Question Answering

• “What is the height of Mount Everest?”

• About 29,000 feet (8,848 m)

Issues in Information Retrieval

• Find information across languages!

• Cross Language Information Retrieval

• “What is the minimum age requirement for car rental in Italy?”

• Search also Italian texts for “età minima per noleggio macchine”

• Integrate a large number of languages

• Integrate into high-performance IR engines

Issues in Machine Translation

• Text-to-Text Machine Translation

• Speech-to-Speech Machine Translation

• Most of the work has addressed pairs of widely spoken languages like English-French, English-Chinese

Issues in Machine Translation

• How to translate text?

– Learn from previously translated data

• Need parallel corpora

• French-English, Chinese-English have the Hansards

• Reasonable translations

• Chinese-Hindi – no such tools available today!

Speech Act Theory

“I pronounce you husband & wife” “I sentence you to 5 years”

Natural languages are NOT context free – but almost!

About 40% of words in NY Times are not in a (large) dictionary: natural language is “productive”

Example: “I SAW A MAN IN THE PARK WITH A TELESCOPE”

Parsing

• Parsing with CFGs refers to the task of assigning correct trees to input strings

• Correct here means a tree that covers all and only the elements of the input and has an S at the top

• It doesn’t actually mean that the system can select the correct tree from among the possible trees

• As with everything of interest, parsing involves a search in which choices must be made

• Example: “I SAW A MAN IN THE PARK WITH A TELESCOPE”

The problem of “scaling up”: the same as in knowledge representation, planning, etc., but even more difficult
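
To see why parsing is a search with choices, one can count how many trees a small CFG assigns to the telescope sentence. The sketch below uses a CKY-style chart. The toy grammar and lexicon are assumptions written for this one sentence (in Chomsky normal form, with "I" treated directly as an NP), not a grammar given in the notes.

```python
from collections import defaultdict

# Toy grammar in Chomsky normal form (hypothetical, for illustration only).
BINARY_RULES = [
    ("S", "NP", "VP"),
    ("VP", "V", "NP"),
    ("VP", "VP", "PP"),
    ("NP", "Det", "N"),
    ("NP", "NP", "PP"),
    ("PP", "P", "NP"),
]
LEXICON = {
    "i": ["NP"], "saw": ["V"],
    "a": ["Det"], "the": ["Det"],
    "man": ["N"], "park": ["N"], "telescope": ["N"],
    "in": ["P"], "with": ["P"],
}

def count_parses(sentence):
    """Count the distinct S trees that cover the whole sentence."""
    words = sentence.lower().split()
    n = len(words)
    # chart[(i, j)][X] = number of trees rooted in X that cover words[i:j]
    chart = defaultdict(lambda: defaultdict(int))
    for i, word in enumerate(words):
        for cat in LEXICON.get(word, []):
            chart[(i, i + 1)][cat] += 1
    for width in range(2, n + 1):
        for i in range(n - width + 1):
            j = i + width
            for k in range(i + 1, j):  # split point between the two children
                for parent, left, right in BINARY_RULES:
                    l = chart[(i, k)].get(left, 0)
                    r = chart[(k, j)].get(right, 0)
                    if l and r:
                        chart[(i, j)][parent] += l * r
    return chart[(0, n)].get("S", 0)

print(count_parses("I saw a man in the park with a telescope"))
```

With this grammar the chart finds 5 trees for the ten-word sentence, one for each way of attaching the two prepositional phrases. All five are "correct" in the sense above (they cover the input and have an S at the top), and the grammar alone gives no way to choose among them.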

Sentence-Types

• Declaratives: A plane left

– S -> NP VP

• Imperatives: Leave!

– S -> VP

• Yes-No Questions: Did the plane leave?

– S -> Aux NP VP

• WH Questions: When did the plane leave?

– S -> WH Aux NP VP

Potential Problems in CFG

• Agreement

• Subcategorization

• Movement

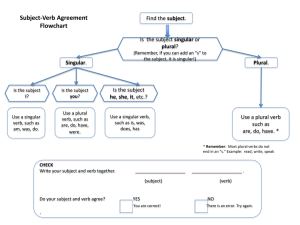

Agreement

• This dog

• Those dogs

• *This dogs

• *Those dog

• This dog eats

• Those dogs eat

• *This dog eat

• *Those dogs eats
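
A plain CFG rule such as NP -> Det N accepts all of the strings above, starred or not. One common remedy is to attach a number feature to each word and require the features to match. The sketch below illustrates that idea only for the eight examples above. The three small lexicons and the function `agrees` are assumptions.

```python
# Number features for the words in the examples ("sg" = singular, "pl" = plural).
DETERMINERS = {"this": "sg", "those": "pl"}
NOUNS = {"dog": "sg", "dogs": "pl"}
VERBS = {"eats": "sg", "eat": "pl"}

def agrees(phrase):
    """Check determiner-noun agreement and, if a verb follows, subject-verb agreement."""
    words = phrase.lower().split()
    det, noun = words[0], words[1]
    number = DETERMINERS[det]
    if NOUNS[noun] != number:
        return False
    # An optional third word is the verb, which must match the subject's number.
    return len(words) < 3 or VERBS[words[2]] == number

for p in ["This dog", "Those dogs", "This dogs", "Those dog",
          "This dog eats", "Those dogs eat", "This dog eat", "Those dogs eats"]:
    print(("  " if agrees(p) else "* ") + p)
```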

Subcategorization

• Sneeze: John sneezed

• Find: Please find [a flight to NY]NP

• Give: Give [me]NP [a cheaper fare]NP

• Help: Can you help [me]NP [with a flight]PP

• Prefer: I prefer [to leave earlier]TO-VP

• Told: I was told [United has a flight]S

• *John sneezed the book

• *I prefer United has a flight

• *Give with a flight

• Subcat expresses the constraints that a predicate (verb for now) places on the number and type of the arguments it wants to take

So?

• So the various rules for VPs overgenerate.

– They permit the presence of strings containing verbs and arguments that don’t go together

– For example: VP -> V NP, therefore “sneezed the book” is a VP, since “sneeze” is a verb and “the book” is a valid NP

– Subcategorization frames can help with this problem (“slow down” overgeneration)
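
A subcategorization frame can be represented as the tuple of argument types a verb accepts after it. The sketch below stores one frame per verb, taken from the examples in these notes, and uses it to reject combinations that VP -> V NP alone would allow. The table name `SUBCAT` and the function `licensed` are hypothetical, and real verbs usually have several frames.

```python
# One frame per verb, taken from the examples in these notes.
# Each verb maps to a list of frames so that more frames could be added.
SUBCAT = {
    "sneeze": [()],            # John sneezed
    "find": [("NP",)],         # find [a flight to NY]
    "give": [("NP", "NP")],    # give [me] [a cheaper fare]
    "help": [("NP", "PP")],    # help [me] [with a flight]
    "prefer": [("TO-VP",)],    # prefer [to leave earlier]
    "told": [("S",)],          # was told [United has a flight]
}

def licensed(verb, args):
    """True if the sequence of argument types matches one of the verb's frames."""
    return tuple(args) in SUBCAT.get(verb, [])

print(licensed("sneeze", []))        # John sneezed
print(licensed("sneeze", ["NP"]))    # *John sneezed the book
print(licensed("prefer", ["S"]))     # *I prefer United has a flight
print(licensed("give", ["PP"]))      # *Give with a flight
```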

Movement

• Core example

– [[My travel agent]NP [booked [the flight]NP]VP]S

• I.e. “book” is a straightforward transitive verb. It expects a single NP within the VP as an argument, and a single NP as the subject.

Movement

• What about?

• Which flight did the travel agent book?

(“Which flight” is the object of the verb “book”. It was “moved” to the front of the sentence!)

– Which flight do you want me to have the travel agent book?

• The direct object argument to “book” can be a long way from where it’s supposed to appear.

• Here it is separated from its verb by 2 other verbs.

• Therefore NL cannot be a finite-state language

Semantics: Fillmore’s Case Grammar

• “Cases” are semantically based, not grammatical ones like in Latin or German

• Charles Fillmore, “The Case for Case”, 1968

• Produced more than one version

Case Grammar Semantics

Case grammar semantics:

• Treats the verb as a predicate and the subject, objects, and other subordinate clauses as “arguments”.

• Labels the arguments with their relationship to the verb-predicate (called “cases”) [uses subcategorization info]

• Ex: John sold his car – agent and object cases

• Ex: John sold his car to Mary – agent, object and recipient cases

Fillmore’s list of cases

• Agentive (A): the case of the typically animate perceived instigator of the action identified by the verb.

• Instrumental (I): the case of the inanimate force or object causally involved in the action or state identified by the verb.

Fillmore’s list of cases

• Dative (D) - later Experiencer (E): the case of the animate being affected by the state or action identified by the verb.

• Factitive (F) - later Goal (G): the case of the object or being resulting from the action or state identified by the verb, or understood as a part of the meaning of the verb.

Fillmore’s list of cases

• Locative (L): the case which identifies the location or spatial orientation of the state or action identified by the verb.

• Objective (O): the semantically most neutral case, the case of anything representable by a noun whose role in the action or state identified by the verb is identified by the semantic interpretation of the verb itself.

Case grammar semantics

Semantic (case) roles don’t depend simply on syntactic roles

• Ex: John sold his car to Mary – agent, object and recipient cases

• Ex: John sold Mary his car

• Ex: John broke the window

• Ex: John broke the window with a hammer – agent, object and instrument cases

• Ex: A hammer broke the window

Informal quiz: Consider these sentences:

1. The burglar opened the door.

2. The door was opened by the burglar.

3. The burglar opened the door with a crowbar.

4. The door was opened by a crowbar.

5. The crowbar opened the door.

6. The door opened.

Case analysis:

1. The burglar opened the door. (S, O)

2. The door was opened by the burglar. (S, A)

3. The burglar opened the door with a crowbar. (S, O, A)

4. The door was opened by a crowbar. (S, A)

5. The crowbar opened the door. (S, O)

6. The door opened. (S)

Strengths

• Only one Noun Phrase occupies each case role in relation to a particular verb

• Therefore one could classify verbs in terms of which case roles they took, e.g.:

o “open” - O, {A} {I}

o “shout” - A, O, {E}

{} denotes optional elements

• This model has been used in Artificial Intelligence, along with the sub-categorization of verbs (described earlier)
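
The verb classification above translates directly into a small data structure: each verb lists its required and optional cases, and an analysis of a sentence maps each case to the noun phrase that fills it. The sketch below is one possible encoding. The names `CASE_FRAMES` and `fits_frame` are hypothetical, and the case assignments in the usage lines are this sketch's reading of the quiz sentences.

```python
# Case frames from the list above: required cases, plus the optional ones in {}.
CASE_FRAMES = {
    "open": {"required": {"O"}, "optional": {"A", "I"}},
    "shout": {"required": {"A", "O"}, "optional": {"E"}},
}

def fits_frame(verb, roles):
    """roles maps a case label to its noun phrase, so each case is filled at most once."""
    frame = CASE_FRAMES[verb]
    filled = set(roles)
    # All required cases are present, and nothing outside the frame appears.
    return frame["required"] <= filled <= frame["required"] | frame["optional"]

# The burglar opened the door with a crowbar.
print(fits_frame("open", {"A": "the burglar", "O": "the door", "I": "a crowbar"}))
# The door opened.
print(fits_frame("open", {"O": "the door"}))
# An analysis with no Objective case does not fit "open".
print(fits_frame("open", {"A": "the burglar"}))
```

Using a dictionary keyed by case label builds in the first strength listed above: a case can be filled by only one noun phrase per verb.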

Weaknesses

• Researchers could not agree on a standard set of cases.

• Not always easy in practice to allocate particular Noun Phrases to cases.

• When it gets difficult there is a temptation to use the Objective (O) as a kind of “dustbin case” for all the NPs that don’t seem to fit anywhere else.