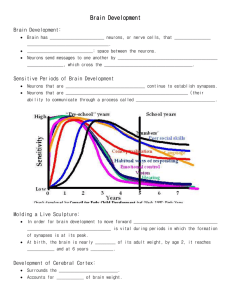

BBB2

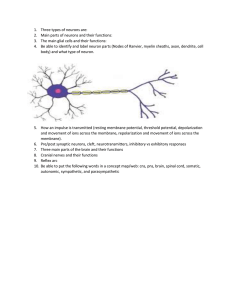
