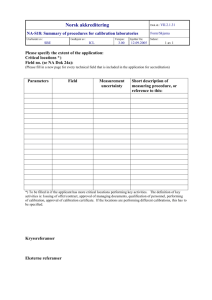

Role of measurement and calibration
