Run Time Optimization 15-745: Optimizing Compilers Pedro Artigas

advertisement

Run Time Optimization

15-745: Optimizing Compilers

Pedro Artigas

Motivation

A good reason

Compiling a language that contains runtime constructs

Java dynamic class loading

Perl or Matlab eval(“statement”)

Faster than interpreting

A better reason

May use program information only

available at run time

2

Example of run-time

information

The processor that will be used to run the

program

inc ax is faster on a Pentium III

add ax,1 is faster on a Pentium 4

No need to recompile if generating code at

run time

The actual program input/run-time behavior

Is my profile information accurate for the current

program input? YES!

3

The life cycle of a program

Compile

Link

Load/Run

One Object File

One Binary

One Process

Global Analysis

Whole

Program

Analysis

Analysis? No

observation!

Larger scope, better information about program behavior

4

New strategies are possible

Pessimistic x Optimistic approaches

Ex: Does int *a points to the same location

as int *b ?

Compile time/Pessimistic: Prove that in ANY

execution those pointers point to different

addresses

Run Time/Optimistic: Up to now in the current

execution a and b point to different locations

Assume this holds

If the assumption breaks, invalidate generated code and

generate new code

5

A sanity check

Using run time information does not require

run time code generation

Example: Versioning

ISA may allow cheaper tests

IA-64

Transmeta

if (a!=b) {

<generate code assuming a!=b>

} else {

<generate code assuming a==b>

}

6

Drawbacks

Code generation has to be FAST

Rule of thumb: almost linear on program

size

Code quality: Compromise on quality to

achieve fast code generation

shoot for good, not great

Also this usually means:

No time for classical Iterative Data Flow

Analysis at run time

7

No classical IDFA: Solutions

Quasi-Static and/or Staged Compilation

Perform IDFA at compile time

Specialize the dynamic code generator for the

obtained information

That is, encode the obtained data flow information

in the “binary”

Do not rely on classical IDFA

Use algorithms that do not require it

Ex: Dominator based value numbering (coming up!)

Generate code in a style that does not require it

Ex: One entry multiple exits traces

as in deco and dynamo

8

Code generation Strategies

Compiling a language that requires run-time

code generation:

Compile adaptively:

Use a very simple and fast code generation

scheme

Re-compile frequently used regions using more

advanced techniques

9

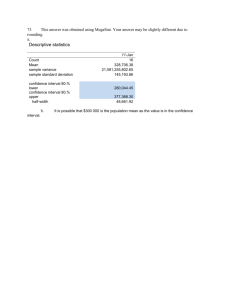

Adaptive Compilation:

Motivation

re-compilation

2 level recompilation

Optimizing Only

Fast Only

Optimal(Oracle)

total cost

Very simple code

generation

Elaborate code

generation

Fast compiler

Optimizing compiler

Cost

threshold

Higher compilation cost

Problem:

execution count

Higher execution cost

We may not know in

advance how frequently

a region will execute

Measure frequencies

and re-compile

dynamically

10

Code generation Strategies

Compiling selected regions that benefit from

run-time code generation:

Pick only the regions that should benefit the

most

Which regions?

Select them statically

Use profile information

Re-compile (that is select then dynamically)

Usually all of the above

11

Code Optimization Unit

1

2

What is the run-time unit of optimization?

3

4

Option: Procedures/static code regions

Option: Traces

1

2

3

4

4

Similar to static compilers

Start at the target of a backward branch

Include all the instructions in a path

May include procedure calls and returns

Branches

Fall through = remain in the trace

Target = exit the trace

12

Current strategies

Static region

Trace

JIT compilers

Java JITs

Matlab JITs

?

Run-time

performance

engines

Dyc

Fabius

Dynamo

Deco

13

Run-Time code generation:

Case studies

Two examples of algorithms that are

suitable for run-time code generation

Run time CSE/PRE replacement:

Dominator based value numbering

Run time Register Allocation:

Linear scan register allocation

14

Sidebar

With traces CSE/PRE become almost

trivial

No need for register allocation if

optimizing a binary (ex: dynamo)

A+B

PRE

A+B

CSE

A+B

A+B

15

Review: Local value

numbering

Store expressions already computed (in a hash table)

Store variable nameVN mapping in the VN array

Store VNvariable name mapping in the Name array

Same value numbersame value

Expression was

computed in the

past, check if

result is available

New expression,

add to the table

for each basic block

Table.empty()

for each computed expression (“x=y op z”)

if V=Table.lookup(“y op z”)

VN[“x”]=V

if VN[Name[V]]==V //expression is still there

replace “x = y op z” with “x = Name[V]”

else

Name[V]=“x”

else

VN[“x”]=new_value_number()

Table.insert(“y op z”,VN[“x”])

Name[VN[“x”]]=“x”

16

Local value numbering

Works in linear time on program size

Assuming accesses to the array and the

hash table occur in constant time

Can we make it work in a scope larger

than a basic block? (Hint: Yes)

What are the potential problems?

17

Problems

How to propagate the hash table

contents across basic blocks?

How to make sure that is safe to access

the location containing the expression

in other basic blocks?

How do we make sure if the location

containing the expression is fresh?

Remember: no IDFA

18

Control flow issues

On split points things are simple

Just keep the content of the hash table from the

predecessor

What about merge points?

We do not know if the same expression was

computed in all incoming paths

We do not want to check the fact anyway (why?)

Reset the state of the hash table to a safe state it

had in the past

Which program point in the past?

The immediate dominator of the merge block

19

Data flow issues

Making sure the def of an expression is fresh

and reaches the blocks of interest

How?

By construction! SSA

All names are fresh (Single Assignment)

All defs dominate its’ uses (regular uses not

functions)

As, by construction, we introduce new

defs using functions at every point this

would not hold

20

Dominator/SSA based value

numbering

DVN(Block B)

Table.PushScope()

for each exp “n=(…)”

if (exp is redundant or meaningless) //meaningless: (x0,x0)

VN[“n”]= Table.lookup(“(…)” or “x0”)

First process the

remove(“n=(…)”)

expressions

else

VN[“n”]=“n”

Table.insert(“(…)”,VN[n])

for each exp “x=y op z”

if (“v”=Table.lookup(“y op z”))

VN[“x”]=“v”

Them the

remove(“x=y op z”)

regular ones

else

VN[“x”]=“x”

Table.insert(“x=y op z”,VN[“x”])

for each successor s of B

Propagate info

Adjust the inputs

about inputs

for each dominator tree child c in CFG reverse post-order

and call DVN

DVN(c)

recursively

21

Table.PopScope()

VN

Name VN

Example

u0

1

v0

u0=a0+b0

w0

v0=c0+d0

x0

w0=e0+f0

2

x0=c0+d0

y0=c0+d0

u2= (u0,u1)

x2=(x0,x1)

y2=(y0,y1)

u3=a0+b0

3

y0

u1=a0+b0

u1

x1=e0+f0

x1

y1=e0+f0

y1

4

u2

x2

y2

u3

22

Problems

Does not catch

x0=a0+b0

x0=a0+b0

x1=a0+b0

x1=(x0,x2)

x2=a0+b0

x2=(x0,x1)

But it performs almost as well as CSE

And runs much faster

linear time ? (YES? NO?)

23

Homework #4

The DVN algorithm scans the CFG in a similar

way as the second phase of SSA translation

SSA translation phase #1

SSA translation phase #2

Placing functions

assigning unique numbers to variables

Combine both and save one pass

Gives us a smaller constant

But, at run time, it pays of!

24

Run time register allocation

Graph Coloring? Not an option

Even the simple stack based heuristic shown in

class is O(n2)

Not even counting:

Building the graph

Move coalescing optimization

But register allocation is VERY important in terms

of performance

Remember, memory is REALLY slow

We need a simple but effective (almost) linear

time algorithm

25

Let’s start simple

Start with a local (basic block) linear time

algorithm

Assuming only one def and one use per variable

(More constrained than SSA)

Assuming that if a variable is spilled it must

remain spilled (Why?)

Can we find an optimum linear time algorithm?

(Hint: Yes)

Ideas?

Think about liveness first …

26

Simple Algorithm:

Computing Liveness

One def and one use per variable, only one

block

A live range is merely the interval between

the def and the use

Live Interval: Interval between the first def and

the last use

OBS: Live Range = Live Interval if there is no

control flow, only one def and use

We could compute live intervals using a linear

scan if we store the def instructions

(beginning of the interval) in a hash table

27

Example

S1:

S2:

S3:

S4:

S5:

S6:

S7:

S8:

A=1

B=2

C=3

D=A

E=B

use(E)

use(D)

use(C)

28

Now Register Allocation

Another linear scan

Keep the active intervals in an list (active)

Assumption: an interval, when spilled, will remain

spilled

Two scenarios

#1:

No problem

#2:

Must spill

Which interval?

| active | R

| active | R

29

Spilling heuristic

Since there is no second chance:

That is a spilled variable will always remain spilled

Spill the interval that ends last

Intuition: As one spill must occur …

Pick the one that makes the remaining allocation

least constrained

That is, the interval that ends last

This is the provably optimum solution (given all

the constraints)

30

Linear Scan Register Allocation

active = {}

freeregs = {all_registers}

for each interval I (in order of increasing start point)

for each interval J in active

if J.end>I.start

Expire old

continue

active.remove(J)

intervals

freeregs.insert(J.register)

end for each interval J

if active.length()==R

spill_candidade=active.last();

if (spill_candidate.end>I.end)

Must spill, pick

I.register = spill_candidate.register

either the last

spill(spill_candidate)

interval in active

active.remove(spill_candidate)

active.insert_sorted(I) //sorted by end point

or the new

else

interval

spill(I)

else

No

I.register = freeregs.pop() //get any register from the free list

active.insert_sorted(I) //sorted by end point

constraints

end for each interval I

31

Example (R=2)

S1:

S2:

S3:

S4:

S5:

S6:

S7:

S8:

A

A

B

C

D

E

A=1

S1

B

B=2

S2

C

C=3

S3

D

D=A

S4

E

E=B

S5

use(E)

S6

use(D)

S7

use(C)

S8

32

Is the second pass really

linear?

Invariant: active.length()<=R

Complexity O(R*n)

R is usually a small constant (128 at

most)

Therefore: O(n)

33

And we are done! Right?

YES and NO

Use the same algorithm as before for register

assignment

Program representation: Linear list of

instructions

Live intervals are not precise anymore given

control flow and multiple def/uses

Not optimum, but still FAST

Code quality: within 10% of graph coloring for

spec95 benchmarks (One problem with this claim)

34

The Worst problem: Obtaining

precise live intervals

How to obtain precise live interval information

FAST?

Claim of 10% relies on live interval

information obtained using liveness analysis

(IDFA)

Most recent solutions:

IDFA is SLOW, O(n3)

Use the local interval algorithm for variables that

only live inside one basic block

Use liveness analysis for more global variables

Alleviates the problem, does not fully solve it

35

More problems: Live intervals

may not be precise

OBS: The idea of lifetime holes leads to allocators that also try to use this

holes to assign the same register to other live ranges (bin-packing)

Such an allocator is used in the Alpha family of compilers (GEM compilers)

36

Other problems: Linearization

order

Register allocation quality depends on

chosen block linearization order

Choose a good order in practice

layout order

depth first traversal of the CFG

Both only 10% slower than graph coloring

37

Graph coloring versus

Linear scan

Compilation cost scaling

38

Conclusion

Run time code generation provides new

optimization opportunities

Challenges

Identify new optimization opportunities

Design new compilation strategies

Design algorithms and implementations that:

example: optimistic versus conservative

minimize run time overhead

Do not compromise much on code quality

Recent examples indicate:

extending fast local methods is a promising way to

obtain fast run-time code generation

39