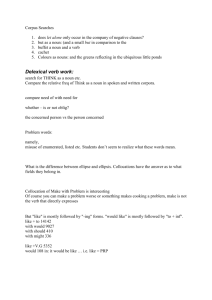

WordsEye: From Text To Pictures

The very humongous silver sphere is fifty feet above the ground. The silver castle is in the sphere. The castle is 80 feet wide. The ground is black. The sky is partly cloudy.

Why is it hard to create 3D graphics?

The tools are complex

Too much detail

Involves training, artistic skill, and expense

Pictures from Language

No GUI bottlenecks - Just describe it!

• Low entry barrier - no special skill or training required

• Give up detailed direct manipulation for speed and economy of expression

– Language expresses constraints

– Bypass rigid, pre-defined paths of expression (dialogs, menus, etc.) as defined by the GUI

– Objects vs Polygons – draw upon objects in pre-made 3D and 2D libraries

Enable novel applications in education, gaming, online communication, . . .

• Using language is fun and stimulates imagination

Semantics

• 3D scenes provide an intuitive representation of meaning by making explicit the contextual elements implicit in our mental models.

WordsEye Initial Version

(with Richard Sproat)

Developed at AT&T Labs

• Graphics: Mirai 3D animation system on Windows NT

• Church Tagger, Collins Parser on Linux

• WordNet (http://wordnet.princeton.edu/)

• Viewpoint 3D model library

• NLP (Linux) and depiction/graphics (Windows NT) communicate via sockets

• WordsEye code in Common Lisp

SIGGRAPH paper (August 2001)

New Version

(with Richard Sproat)

Rewrote software from scratch

• Linux and CMUCL

• Custom Parser/Tagger

• OpenGL for 3D preview display

• Radiance Renderer

• ImageMagick, GIMP for 2D post-effects

Different subset of functionality

• No verbs/poses yet

Web interface

(www.wordseye.com)

• Webserver and multiple backend text-to-scene servers

• Gallery/Forum/E-Cards/Picture Books/2D effects

Example

A tiny grey manatee is in the aquarium. It is facing right. The manatee is six inches below the top of the aquarium. The ground is tile. There is a large brick wall behind the aquarium.

Example

A silver head of time is on the grassy ground. The blossom is next to the head. The blossom is in the ground. The green light is three feet above the blossom. The yellow light is 3 feet above the head. The large wasp is behind the blossom. The wasp is facing the head.

Example

The humongous white shiny bear is on the American mountain range. The mountain range is 100 feet tall. The ground is water. The sky is partly cloudy. The airplane is 90 feet in front of the nose of the bear. The airplane is facing right.

Example

A microphone is in front of a clown. The microphone is three feet above the ground. The microphone is facing the clown. A brick wall is behind the clown. The light is on the ground and in front of the clown.

Example

Using user-uploaded image

Example

Original version of software

Mary uses the crossbow. She rides the horse by the store. The store is under the large willow. The small allosaurus is in front of the horse. The dinosaur faces Mary. The gigantic teacup is in front of the store. The gigantic mushroom is in the teacup. The castle is to the right of the store.

Web Interface – preview mode

Web Interface – rendered (raytraced)

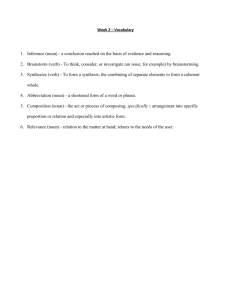

WordsEye Overview

Linguistic Analysis

• Parsing

• Create dependency-tree representation

• Anaphora resolution

Interpretation

• Add implicit objects, relations

• Resolve semantics and references

Depiction

• Database of 3D objects, poses, textures

• Depiction rules generate graphical constraints

• Apply constraints to create scene

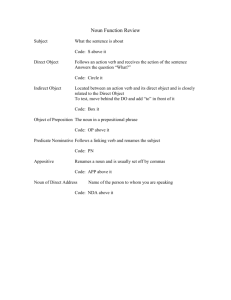

Linguistic Analysis

Tag part-of-speech

Parse

Generate semantic representation

• WordNet-like dictionary for nouns

Anaphora resolution

Example: John said that the cat was on the table.

Parse tree for: John said that the cat was on the table.

Said (Verb)
  John (Noun)
  That (Comp)
    Was (Verb)
      Cat (Noun)
      On (Prep)
        Table (Noun)

Nouns: Hierarchical Dictionary

Physical Object

Inanimate Object

Living thing

Animal

Plant

Cat

Dog

cat-vp2842

dog-vp23283

cat-vp2843

dog_standing-vp5041

...
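A minimal sketch of how such a hierarchical noun dictionary could be queried. The parent links and the assignment of model identifiers to "cat" and "dog" are read off the slide and are assumptions about its layout; `PARENT`, `MODELS`, `is_a`, and `models_under` are hypothetical names, not WordsEye's actual API.

```python
from typing import Dict, List, Optional

# Child -> parent links (assumed from the slide's tree layout).
PARENT: Dict[str, Optional[str]] = {
    "physical-object": None,
    "inanimate-object": "physical-object",
    "living-thing": "physical-object",
    "animal": "living-thing",
    "plant": "living-thing",
    "cat": "animal",
    "dog": "animal",
}

# 3D model identifiers attached to leaf concepts (grouping assumed from the names).
MODELS: Dict[str, List[str]] = {
    "cat": ["cat-vp2842", "cat-vp2843"],
    "dog": ["dog-vp23283", "dog_standing-vp5041"],
}


def is_a(concept: str, ancestor: str) -> bool:
    """Walk up the parent links until the ancestor or the root is reached."""
    node: Optional[str] = concept
    while node is not None:
        if node == ancestor:
            return True
        node = PARENT.get(node)
    return False


def models_under(concept: str) -> List[str]:
    """Collect the 3D models of the concept and of all its descendants."""
    found: List[str] = []
    for candidate in PARENT:
        if is_a(candidate, concept):
            found.extend(MODELS.get(candidate, []))
    return found
```

With this layout, a request for an "animal" can be satisfied by any cat or dog model, while a request for a "plant" finds nothing and would need a fallback.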

WordNet problems

Inheritance conflates functional and lexical relations

• “Terrace” is a “plateau”

• “Spoon” is a “container”

• “Crossing Guard” is a “traffic cop”

• “Bellybutton” is a “point”

Lack of multiple inheritance between synsets

• “Princess” is an aristocrat, but not a female

• "ceramic-ware" is grouped under "utensil" and has "earthenware", etc. under it. But there are no dishes or plates under it, because those are categorized elsewhere under "tableware"

Lacks relations other than ISA. Thesaurus vs dictionary.

• Snowball “made-of” snow

• Italian “resident-of” Italy

Cluttered with obscure words and word senses

• “Spoon” as a type of golf club

Create our own dictionary to address these problems

Semantic Representation for: John said that the blue cat was on the table.

1. Object: “mr-happy” (John)

2. Object: “cat-vp39798” (cat)

3. Object: “table-vp6204” (table)

4. Action: “say”

:subject <element 1>

:direct-object <elements 2,3,5,6>

:tense “PAST”

5. Attribute: “blue”

:object <element 2>

6. Spatial-Relation “on”

:figure <element 2>

:ground <element 3>
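The numbered elements above can be held in a small typed structure. The following is only an illustrative sketch: the class names, field names, and the `referencing` helper are assumptions made for this example, and the original system is written in Common Lisp.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass
class Obj:
    model: str  # 3D model identifier, e.g. "cat-vp39798"
    word: str   # the word it depicts


@dataclass
class Action:
    verb: str
    subject: int              # element number
    direct_object: List[int]  # element numbers
    tense: str


@dataclass
class Attribute:
    name: str
    object: int  # element number the attribute applies to


@dataclass
class SpatialRelation:
    name: str
    figure: int  # element being located
    ground: int  # element it is located relative to


Element = Union[Obj, Action, Attribute, SpatialRelation]

# The six elements listed on the slide, keyed by element number.
elements: Dict[int, Element] = {
    1: Obj("mr-happy", "John"),
    2: Obj("cat-vp39798", "cat"),
    3: Obj("table-vp6204", "table"),
    4: Action("say", subject=1, direct_object=[2, 3, 5, 6], tense="PAST"),
    5: Attribute("blue", object=2),
    6: SpatialRelation("on", figure=2, ground=3),
}


def referencing(target: int) -> List[int]:
    """Return the numbers of all elements that mention the target element."""
    hits: List[int] = []
    for number, el in elements.items():
        if isinstance(el, Attribute) and el.object == target:
            hits.append(number)
        elif isinstance(el, SpatialRelation) and target in (el.figure, el.ground):
            hits.append(number)
        elif isinstance(el, Action) and (el.subject == target or target in el.direct_object):
            hits.append(number)
    return hits
```

For the cat (element 2), `referencing(2)` gathers the action, the colour attribute, and the spatial relation that all need to be honoured when depicting it.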

Anaphora resolution: The duck is in the sea. It is upside down. The sea is shiny and transparent. The ground is invisible. The apple is 3 inches below the duck. It is in front of the duck. The yellow illuminator is 3 feet above the apple. The cyan illuminator is 6 inches to the left of it. The magenta illuminator is 6 inches to the right of it. It is partly cloudy.

Indexical Reference: Three dogs are on the table. The first dog is blue. The first dog is 5 feet tall. The second dog is red. The third dog is purple.

Interpretation

Interpret semantic representation

• Object selection

• Resolve semantic relations/properties based on object types

• Answer Who? What? When? Where? How?

• Disambiguate/normalize relations and actions

• Identify and resolve references to implicit objects

Object Selection: When object is missing or doesn't exist . . .

Text object: “Foo on table”

Related object: “Robin on table”

Substitute image: “Fox on table”

Object attribute interpretation (modify versus selection)

Conventional: “American horse”

Substance: “Stone horse”

Unconventional: “Richard Sproat horse”

Selection: “Chinese house”

Semantic Interpretation of “Of”

Containment: “bowl of cats”

Grouping: “stack of cats”

Part: “head of the cow”

Substance: “horse of stone”

Property: “height of horse is..”

Abstraction: “head of time”
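One way to picture the type-driven choice among these readings is a small rule cascade. This is a toy sketch only: the slide gives the six categories and one example each, while the word sets, the rule order, and the function name `interpret_of` are assumptions made for illustration.

```python
# Hypothetical mini-lexicon covering only the slide's six examples.
PROPERTIES = {"height"}
SUBSTANCES = {"stone"}
ABSTRACT = {"time"}
CONTAINERS = {"bowl"}
GROUPS = {"stack"}
PARTS = {"head"}


def interpret_of(head: str, complement: str) -> str:
    """Classify "<head> of <complement>" by the types of the two nouns."""
    if head in PROPERTIES:
        return "property"
    if complement in SUBSTANCES:
        return "substance"
    # An abstract complement is checked before the part reading,
    # so "head of time" is not treated like "head of the cow".
    if complement in ABSTRACT:
        return "abstraction"
    if head in CONTAINERS:
        return "containment"
    if head in GROUPS:
        return "grouping"
    if head in PARTS:
        return "part"
    return "unknown"
```

The ordering matters: "head" alone would suggest a part reading, so the type of the complement has to be consulted first.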

Implicit objects & references

Mary rode by the store. Her motorcycle was red.

• Verb resolution: Identify implicit vehicle

• Functional properties of objects

• Reference

• Motorcycle matches the vehicle

• Her matches with Mary

Implicit Reference: Mary rode by the store. Her motorcycle was red.

Depiction

3D object and image database

Graphical constraints

• Spatial relations

• Attributes

• Posing

• Shape/Topology changes

Depiction process

3D Object Database

2,000+ 3D polygonal objects

Augmented with:

• Spatial tags (top surface, base, cup, push handle, wall, stem, enclosure)

• Skeletons

• Default size, orientation

• Functional properties (vehicle, weapon . . .)

• Placement/attribute conventions

2000+ 3D Objects

10,000 images and textures

B&W drawings

Texture Maps

Artwork

Photographs

3D Objects and Images tagged with semantic info

Spatial tags for 3D object regions

Object type (e.g. WordNet synset)

• Is-a

• represents

Object size

Object orientation (front, preferred supporting surface -- wall/top)

Compound object constituents

Other object properties (style, parts, etc.)

Spatial Tags

Canopy (under, beneath)

Top Surface (on, in)

Base (under, below, on)

Cup (in, on)

Push Handle (actions)

Wall (on, against)

Stem (in)

Enclosure (in)

Stem in Cup: The daisy is in the test tube.

Enclosure and top surface: The bird is in the bird cage. The bird cage is on the chair.
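The tag-to-preposition pairings listed above can be sketched as a lookup that picks which region of the ground object a preposition refers to. The preposition sets come from the slides; the per-object tag lists in `OBJECT_TAGS` and the function `candidate_regions` are hypothetical, and only the ground object's regions are modelled here.

```python
from typing import Dict, List, Set

# Prepositions each spatial tag supports, as listed on the slides.
TAG_PREPOSITIONS: Dict[str, Set[str]] = {
    "canopy": {"under", "beneath"},
    "top surface": {"on", "in"},
    "base": {"under", "below", "on"},
    "cup": {"in", "on"},
    "push handle": set(),  # used for actions rather than prepositions
    "wall": {"on", "against"},
    "stem": {"in"},
    "enclosure": {"in"},
}

# Hypothetical tagging of a few objects from the example sentences.
OBJECT_TAGS: Dict[str, List[str]] = {
    "test tube": ["cup"],
    "bird cage": ["enclosure"],
    "chair": ["top surface"],
}


def candidate_regions(preposition: str, ground: str) -> List[str]:
    """Return the tagged regions of the ground object that fit the preposition."""
    return [
        tag
        for tag in OBJECT_TAGS.get(ground, [])
        if preposition in TAG_PREPOSITIONS[tag]
    ]
```

For "The bird is in the bird cage. The bird cage is on the chair.", the first sentence resolves to the cage's enclosure and the second to the chair's top surface.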

Spatial Relations

Relative positions

• On, under, in, below, off, onto, over, above . . .

• Distance

Sub-region positioning

• Left, middle, corner, right, center, top, front, back

Orientation

• facing (object, left, right, front, back, east, west . . .)

Time-of-day relations

Vertical vs Horizontal “on”, distances, directions: The couch is against the wood wall. The window is on the wall. The window is next to the couch. The door is 2 feet to the right of the window. The man is next to the couch. The animal wall is to the right of the wood wall. The animal wall is in front of the wood wall. The animal wall is facing left. The walls are on the huge floor. The zebra skin coffee table is two feet in front of the couch. The lamp is on the table. The floor is shiny.

Attributes

Size

• height, width, depth

• Aspect ratio (flat, wide, thin . . .)

Surface attributes

• Texture database

• Color, Texture, Opacity, reflectivity

• Applied to objects or textures themselves

• Brightness (for lights)

Attributes: The orange battleship is on the brick cow. The battleship is 3 feet long.

Time of day & cloudiness

Time of day & lighting

Poses (original version only -- not yet implemented in web version)

Represent actions

Database of 500+ human poses

• Grips

• Usage (specialized/generic)

• Standalone

Merge poses (upper/lower body, hands)

• Gives wide variety by mix’n’match

Dynamic posing/IK

Poses

Grip wine_bottle-bc0014

Use bicycle_10-speed-vp8300

Throw “round object”

Run

Combined poses: Mary rides the bicycle. She plays the trumpet.

Combined poses

The Broadway Boogie Woogie vase is on the Richard Sproat coffee table. The table is in front of the brick wall. The van Gogh picture is on the wall. The Matisse sofa is next to the table. Mary is sitting on the sofa. She is playing the violin. She is wearing a straw hat.

Dynamically defined poses using Inverse Kinematics (IK)

Mary pushes the lawn mower. The lawnmower is 5 feet tall. The cat is 5 feet behind Mary. The cat is 10 feet tall.

Shape Changes (not implemented in web version)

Deformations

• Facial expressions

• Happy, angry, sad, confused . . . mixtures

• Combined with poses

Topological changes

• Slicing

Facial Expressions

Edward runs. He is happy.

Edward is shocked.

The rose is in the vase. The vase is on the half dog.

Depiction Process

Given a semantic representation

• Generate graphical constraints

• Handle implicit and conflicting constraints

• Generate 3D scene from constraints

• Add environment, lights, camera

• Render scene

Example: Generate constraints for kick

• Case 1: No path or recipient; Direct object is large

– Pose: Actor in kick pose

– Position: Actor directly behind direct object

– Orientation: Actor facing direct object

• Case 2: No path or recipient; Direct object is small

– Pose: Actor in kick pose

– Position: Direct object above foot

• Case 3: Path and Recipient

– Pose+relations . . . (some tentative)
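The case analysis for "kick" could be sketched as a depiction rule that returns graphical constraints. This is an illustrative sketch under stated assumptions: the `Constraint` type, the height-based size test, and the 3-foot cutoff `LARGE_OBJECT_FEET` are all hypothetical, and Case 3 is deliberately left unimplemented because the slide does not spell it out.

```python
from dataclasses import dataclass
from typing import List, Optional

LARGE_OBJECT_FEET = 3.0  # hypothetical cutoff between "small" and "large"


@dataclass(frozen=True)
class Constraint:
    kind: str    # "pose", "position", or "orientation"
    detail: str


def kick_constraints(
    object_height_feet: float,
    path: Optional[str] = None,
    recipient: Optional[str] = None,
) -> List[Constraint]:
    """Return graphical constraints for "actor kicks direct object"."""
    if path is not None and recipient is not None:
        # Case 3: the slide only says "Pose+relations . . . (some tentative)".
        raise NotImplementedError("Case 3 is not specified on the slide")
    if path is not None or recipient is not None:
        raise ValueError("only 'no path or recipient' and 'path and recipient' are covered")

    pose = Constraint("pose", "actor in kick pose")
    if object_height_feet >= LARGE_OBJECT_FEET:
        # Case 1: large direct object (e.g. a pickup truck).
        return [
            pose,
            Constraint("position", "actor directly behind direct object"),
            Constraint("orientation", "actor facing direct object"),
        ]
    # Case 2: small direct object (e.g. a football).
    return [pose, Constraint("position", "direct object above foot")]
```

The same verb thus yields different constraint sets depending on the properties of its arguments, which is why the rule takes the object size as input.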

Some varieties of kick

Case 1: John kicked the pickup truck

Case 2: John kicked the football

Case 3: John kicked the ball to the cat on the skateboard

Implicit Constraint: The vase is on the nightstand. The lamp is next to the vase.
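In this example nothing says what the lamp rests on, so a plausible implicit constraint is that it shares the vase's supporting surface. The sketch below encodes that single rule; the triple format and the function `add_implicit_support` are assumptions for illustration, not the system's actual mechanism.

```python
from typing import List, Tuple

Relation = Tuple[str, str, str]  # (figure, relation, ground)


def add_implicit_support(relations: List[Relation]) -> List[Relation]:
    """If X is next to Y, Y is on Z, and X has no support, add (X, on, Z)."""
    support = {figure: ground for figure, rel, ground in relations if rel == "on"}
    inferred: List[Relation] = []
    for figure, rel, ground in relations:
        if rel == "next to" and figure not in support and ground in support:
            inferred.append((figure, "on", support[ground]))
    return relations + inferred


scene = [
    ("vase", "on", "nightstand"),
    ("lamp", "next to", "vase"),
]
full_scene = add_implicit_support(scene)
```

An object that already has an explicit support is left alone, so stated constraints always take priority over inferred ones.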

Figurative & Metaphorical Depiction

• Textualization

• Conventional Icons and emblems

• Literalization

• Characterization

• Personification

• Functionalization

Textualization: The cat is facing the wall.

Conventional Icons: The blue daisy is not in the army boot.

Literalization: Life is a bowl of cherries.

Characterization: The policeman ran by the parking meter.

Functionalization: The hippo flies over the church.

Future/Ongoing Work

Build/use scenario-based lexical resource

• Word knowledge (dictionary)

• Frame knowledge

– For verbs and event nouns

– Finer-grained representation of prepositions and spatial relations

• Contextual knowledge

– Default verb arguments

– Default constituents and spatial relations in settings/environments

• Decompose actions into poses and spatial relations

• Learn contextual knowledge from corpora

Graphics/output support

• Add dynamic posing of characters to depict actions

• Handle more complex, natural text

• Handle object parts

• Add more 2D/3D content (including user uploadable 3D objects)

• Physics, animation, sound, and speech

FrameNet – Digital lexical resource

http://framenet.icsi.berkeley.edu/

• 947 hierarchically defined frames

• 10,000 lexical entries (verbs, nouns, adjectives)

• Relations between frames (perspective-on, subframe, using, …)

• Annotated sentences for each lexical unit

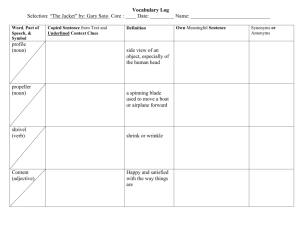

Lexical Units in “Revenge” Frame

Frame elements for avenge.v

Frame Element

Core Type

Degree

Core

Depictive

Peripheral

Injured_party

Extra_thematic

Injury

Core

Instrument

Core

Manner

Peripheral

Offender

Peripheral

Place

Core

Punishment

Peripheral

Purpose

Core

Result

Extra_thematic

Time

Peripheral

Annotations for “avenge.v”

Relations between frames

Frame element mappings between frames

• Core vs Peripheral

• Inheritance

• Renaming (e.g. agent -> helper)

Valence patterns for verb “sell” (commerce_sell frame) and two related frames

<LU-2986 "sell.v" Commerce_sell> patterns:

(33 ((Seller Ext) (Goods Obj)))

(11 ((Goods Ext)))

(7 ((Seller Ext) (Goods Obj) (Buyer Dep(to))))

(4 ((Seller Ext)))

(2 ((Goods Ext) (Buyer Dep(to))))

<frame: Commerce_buy> patterns:

(91 ((Buyer Ext) (Goods Obj)))

(27 ((Buyer Ext) (Goods Obj) (Seller Dep(from))))

(11 ((Buyer Ext)))

(2 ((Buyer Ext) (Goods Obj) (Seller Dep(at))))

(2 ((Buyer Ext) (Seller Dep(from))))

(2 ((Goods Obj)))

<frame: Expensiveness> patterns:

(17 ((Goods Ext) (Money Dep(NP))))

(8 ((Goods Ext)))

(4 ((Goods Ext) (Money Dep(between))))

(4 ((Goods Ext) (Money Dep(from))))

(2 ((Goods Ext) (Money Dep(under))))

(1 ((Goods Ext) (Money Dep(just))))

(1 ((Goods Ext) (Money Dep(NP)) (Seller Dep(from))))
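As a rough illustration of how such counts might be used, the sketch below picks the most frequent pattern whose grammatical functions match what a parse supplies. The counts for "sell.v" are copied from the listing above; the data layout and the function `likely_roles` are assumptions for this example.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Pattern = Tuple[int, Tuple[Tuple[str, str], ...]]  # (count, ((frame element, function), ...))

# Valence patterns for "sell.v" in Commerce_sell, as listed above.
SELL_PATTERNS: List[Pattern] = [
    (33, (("Seller", "Ext"), ("Goods", "Obj"))),
    (11, (("Goods", "Ext"),)),
    (7, (("Seller", "Ext"), ("Goods", "Obj"), ("Buyer", "Dep(to)"))),
    (4, (("Seller", "Ext"),)),
    (2, (("Goods", "Ext"), ("Buyer", "Dep(to)"))),
]


def likely_roles(patterns: List[Pattern], slots: Sequence[str]) -> Optional[Dict[str, str]]:
    """Map each grammatical function to a frame element using the most
    frequent pattern with exactly these functions, or None if none match."""
    matches = [
        (count, pattern)
        for count, pattern in patterns
        if tuple(function for _, function in pattern) == tuple(slots)
    ]
    if not matches:
        return None
    _, best = max(matches, key=lambda match: match[0])
    return {function: element for element, function in best}
```

Note that with only an external argument, the counts favour reading the subject as Goods (11 annotations) over Seller (4).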

Parsing and generating semantic relations using FrameNet

NLP> (interpret-sentence "the boys on the beach said that the fish swam to island")

Parse:

(S

(NP (NP (DT "the") (NN2 (NNS "boys")))

(PREPP* (PREPP (IN "on") (NP (DT "the") (NN2 (NN "beach"))))))

(VP (VP1 (VERB (VBD "said"))) (COMP "that")

(S (NP (DT "the") (NN2 (NN "fish")))

(VP (VP1 (VERB (VBD "swam")))

(PREPP* (PREPP (TO "to") (NP (NN2 (NN "island")))))))))

Word Dependency:

((#<noun: "boy" (Plural) ID=18> (:DEP #<prep: "on" ID=19>))

(#<prep: "on" ID=19> (:DEP #<noun: "beach" ID=21>))

(#<verb: "said" ID=22> (:SUBJECT #<noun: "boy" (Plural) ID=18>)

(:DIRECT-OBJECT #<verb: "swam" ID=26>))

(#<verb: "swam" ID=26> (:SUBJECT #<noun: "fish" ID=25>)

(:DEP #<prep: "to" ID=27>))

(#<prep: "to" ID=27> (:DEP #<noun: "island" ID=28>)))

Frame Dependency:

((#<relation: CN-SPATIAL-RELATION-ON ID=19>

(:FIGURE #<noun: "boy" (Plural) ID=18>)

(:GROUND #<noun: "beach" ID=21>))

(#<action: "say.v" ID=22>

(#<frame-element: "Text" ID=29> #<action: "swim.v" ID=26>)

(#<frame-element: "Author" ID=30> #<noun: "boy" (Plural) ID=18>))

(#<action: "swim.v" ID=26>

(#<frame-element: "Self_mover" ID=31> #<noun: "fish" ID=25>)

(#<frame-element: ("Goal") ID=32> #<prep: "to" ID=27>))

(#<prep: "to" ID=27> (:DEP #<noun: "island" ID=28>)))
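The step from word dependencies to frame dependencies in this trace can be pictured as relabelling each verb's syntactic roles with frame elements. The sketch below hard-codes the four mappings visible in the output; the table `ROLE_TO_FE`, the triple format, and `to_frame_deps` are hypothetical and stand in for whatever FrameNet-driven lookup the system performs.

```python
from typing import Dict, List, Tuple

# (lexical unit, syntactic role) -> frame element, read off the trace above.
ROLE_TO_FE: Dict[Tuple[str, str], str] = {
    ("say.v", "SUBJECT"): "Author",
    ("say.v", "DIRECT-OBJECT"): "Text",
    ("swim.v", "SUBJECT"): "Self_mover",
    ("swim.v", "DEP:to"): "Goal",
}

# Verb dependencies from the trace as (head, role, dependent) triples.
word_deps: List[Tuple[str, str, str]] = [
    ("say.v", "SUBJECT", "boy"),
    ("say.v", "DIRECT-OBJECT", "swim.v"),
    ("swim.v", "SUBJECT", "fish"),
    ("swim.v", "DEP:to", "to"),
]


def to_frame_deps(deps: List[Tuple[str, str, str]]) -> Dict[str, Dict[str, str]]:
    """Group dependents under each action, keyed by frame element."""
    frames: Dict[str, Dict[str, str]] = {}
    for head, role, dependent in deps:
        element = ROLE_TO_FE.get((head, role))
        if element is not None:  # roles without a mapping are skipped
            frames.setdefault(head, {})[element] = dependent
    return frames


frame_deps = to_frame_deps(word_deps)
```

As in the trace, the Goal of "swim.v" points at the preposition "to", whose own dependent ("island") is resolved separately.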

Acquiring contextual knowledge

Where does “eating breakfast” take place?

• Inferring the environment in a text-to-scene conversion system. Richard Sproat, K-CAP 2001

Default locations and spatial relations (by Gino Miceli)

• Project Gutenberg corpus of online English prose (http://www.gutenberg.org/)

• Leverage verb/preposition semantics as well as simple syntactic structure to identify spatial templates based on verb/{preposition,particle} plus intervening modifiers.

• Use seed-object pairs to extract other pairs with equivalent spatial relations (e.g. cups are (typically) on tables, while books are on desks).
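The seed-pair idea can be sketched as a two-step bootstrap: learn the text connecting a known pair, then reuse it to find new pairs. This is a heavily simplified stand-in that uses plain regular expressions on a three-sentence toy corpus; the real approach described above relies on verb/preposition semantics and syntactic structure, and all names and sentences here are made up for illustration.

```python
import re
from typing import Iterable, List, Set, Tuple


def templates_from_seeds(sentences: Iterable[str], seeds: Iterable[Tuple[str, str]]) -> Set[str]:
    """Collect the text found between the two members of each seed pair."""
    seeds = list(seeds)
    templates: Set[str] = set()
    for sentence in sentences:
        for figure, ground in seeds:
            match = re.search(
                rf"\b{re.escape(figure)}\b(.+?)\b{re.escape(ground)}\b", sentence
            )
            if match:
                templates.add(match.group(1).strip())
    return templates


def pairs_from_templates(sentences: Iterable[str], templates: Iterable[str]) -> Set[Tuple[str, str]]:
    """Find word pairs connected by any of the learned templates."""
    templates = list(templates)
    pairs: Set[Tuple[str, str]] = set()
    for sentence in sentences:
        for template in templates:
            match = re.search(rf"\b(\w+) {re.escape(template)} (\w+)\b", sentence)
            if match:
                pairs.add((match.group(1), match.group(2)))
    return pairs


# Toy corpus (invented sentences, lower-cased and unpunctuated).
corpus: List[str] = [
    "she set the cup on the table",
    "he left the book on the desk",
    "the dog ran across the field",
]
learned = templates_from_seeds(corpus, [("cup", "table")])
extracted = pairs_from_templates(corpus, learned)
```

On a real corpus the learned templates would need filtering, since a connector as short as "on the" also matches many non-spatial uses.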

Pragmatic Ambiguity: The lamp is next to the vase on the nightstand . . .

Syntactic Ambiguity: Prepositional phrase attachment

John looks at the cat on the skateboard.

John draws the man in the moon.

Potential Applications

• Online communications: Electronic postcards, visual chat/IM, social networks

• Gaming, virtual environments

• Storytelling/comic books/art

• Education (ESL, reading, disabled learning, graphics arts)

• Graphics authoring/prototyping tool

• Visual summarization and/or “translation” of text

• Embedded in toys

Storytelling: The stagecoach is in front of the old west hotel. Mary is next to the stagecoach. She plays the guitar. Edward exercises in front of the stagecoach. The large sunflower is to the left of the stagecoach.

Scenes within scenes . . .

Greeting Cards

1st grade homework: The duck sat on a hen; the hen sat on a pig;...

Conclusion

New approach to scene generation

• Low overhead (skill, training . . .)

• Immediacy

• Usable with minimal hardware: text or speech input device and display screen.

Work is ongoing

• Available as experimental web service

Related Work

• Adorni, Di Manzo, Giunchiglia, 1984

• Put: Clay and Wilhelms, 1996

• PAR: Badler et al., 2000

• CarSim: Dupuy et al., 2000

• SHRDLU: Winograd, 1972

Bloopers: John said the cat is on the table.

Bloopers: Mary says the cat is blue.

Bloopers: John wears the axe. He plays the violin.

Bloopers: Happy John holds the red shark.

Bloopers: Jack carried the television.

Web Interface - Entry Page (www.wordseye.com)

• Registration

• Login

• Learn more

• Example pictures

Web Interface - Public Gallery

Web Interface - Add Comments to Picture

Web Interface - Link Pictures into Stories & Games

The tall granite mountain range is 300 feet wide.

The enormous umbrella is on the mountain range.

The gray elephant is under the umbrella.

The chicken cube is 6 feet to the right of the gray elephant.

The cube is 5 feet tall. The cube is on the mountain range.

A clown is on the elephant.

The large sewing machine is on the cube.

A die is on the clown. It is 3 feet tall.