

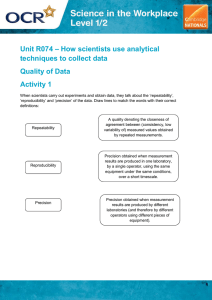

The Princeton Shape Benchmark
Philip Shilane, Patrick Min, Michael Kazhdan, and Thomas Funkhouser

Shape Retrieval Problem
[Figure: diagram with labels 3D Model, Shape Descriptor, Model Database, Best Matches]

Example Shape Descriptors
• D2 Shape Distributions
• Extended Gaussian Image
• Shape Histograms
• Spherical Extent Function
• Spherical Harmonic Descriptor
• Light Field Descriptor
• etc.
How do we know which is best?

Typical Retrieval Experiment
• Create a database of 3D models
• Group the models into classes
• For each model:
  • Rank other models by similarity
  • Measure how many models in the same class appear near the top of the ranked list
• Present average results
[Figure: precision/recall plot for an example query; axes: recall (0 to 1), precision (0 to 1)]
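The retrieval experiment above can be sketched in a few lines of Python. This is a minimal illustration, not the benchmark's own tooling: the function names, the toy descriptors, and the use of Euclidean distance as the similarity measure are all assumptions made for the example.

```python
import math
from typing import List, Sequence, Tuple


def rank_others(descriptors: Sequence[Sequence[float]], query: int) -> List[int]:
    """Indices of all other models, nearest first.

    Euclidean distance between descriptor vectors is an assumption;
    each real descriptor defines its own dissimilarity measure.
    """
    others = [i for i in range(len(descriptors)) if i != query]
    return sorted(others, key=lambda i: math.dist(descriptors[query], descriptors[i]))


def precision_recall(
    ranking: Sequence[int], labels: Sequence[str], query: int
) -> List[Tuple[float, float]]:
    """(recall, precision) after each same-class model in the ranked list."""
    relevant = [labels[i] == labels[query] for i in ranking]
    total = sum(relevant)
    points: List[Tuple[float, float]] = []
    hits = 0
    for rank, is_hit in enumerate(relevant, start=1):
        if is_hit:
            hits += 1
            points.append((hits / total, hits / rank))
    return points


# Hypothetical toy database: 2D "descriptors" and class labels.
descriptors = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [0.2, 0.1], [5.1, 5.0]]
labels = ["chair", "chair", "table", "chair", "table"]

ranking = rank_others(descriptors, query=0)
curve = precision_recall(ranking, labels, query=0)
```

Averaging such curves over every query model gives the kind of single precision/recall plot the slides show per descriptor.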
Shape Retrieval Results

| Shape Descriptor | Compare Time (µs) | Storage Size (bytes) | Norm. DCGain |
|---|---|---|---|
| LFD | 1,300 | 4,700 | +21.3% |
| REXT | 229 | 17,416 | +13.3% |
| SHD | 27 | 2,148 | +10.2% |
| GEDT | 450 | 32,776 | +10.1% |
| EXT | 8 | 552 | +6.0% |
| SECSHELLS | 451 | 32,776 | +2.8% |
| VOXEL | 450 | 32,776 | +2.4% |
| SECTORS | 14 | 552 | -0.3% |
| CEGI | 27 | 2,056 | -9.6% |
| EGI | 14 | 1,032 | -10.9% |
| D2 | 2 | 136 | -18.2% |
| SHELLS | 2 | 136 | -27.3% |

Outline
• Introduction
• Related work
• Princeton Shape Benchmark
• Comparison of 12 descriptors
• Evaluation techniques
• Results
• Conclusion

Typical Shape Databases

| Database | Num Models | Num Classes | Num Classified | Largest Class |
|---|---|---|---|---|
| Osada | 133 | 25 | 133 | 20% |
| MPEG-7 | 1,300 | 15 | 227 | 15% |
| Hilaga | 230 | 32 | 230 | 15% |
| Technion | 1,068 | 17 | 258 | 10% |
| Zaharia | 1,300 | 23 | 362 | 14% |
| CCCC | 1,841 | 54 | 416 | 13% |
| Utrecht | 684 | 6 | 512 | 45% |
| Taiwan | 1,833 | 47 | 549 | 12% |
| Viewpoint | 1,890 | 85 | 1,280 | 12% |
Typical Shape Databases
[Figure: database table with example class labels called out: Aerodynamic, Letter ‘C’]

Typical Shape Databases

| Database | Vehicles | Furniture | Animals | Plants | Household | Buildings |
|---|---|---|---|---|---|---|
| Osada | 47% | 12% | 12% | 0% | 24% | 0% |
| MPEG-7 | 12% | 0% | 14% | 13% | 0% | 7% |
| Hilaga | 12% | 0% | 23% | 2% | 12% | 0% |
| Zaharia | 35% | 0% | 7% | 7% | 11% | 0% |
| CCCC | 33% | 13% | 21% | 5% | 25% | 0% |
| Utrecht | 100% | 0% | 0% | 0% | 0% | 0% |
| Taiwan | 44% | 13% | 0% | 0% | 36% | 0% |
| Viewpoint | 0% | 42% | 1% | 0% | 50% | 0% |

[Figure: example classes overlaid on the table: 153 dining chairs, 25 living room chairs, 16 beds, 8 chests, 28 bottles, 39 vases, 36 end tables, 12 dining tables]

Goal: Benchmark for 3D Shape Retrieval
• Large number of classified models
• Wide variety of class types
• Not too many or too few models in each class
• Standardized evaluation tools
• Ability to investigate properties of descriptors
• Freely available to researchers

Princeton Shape Benchmark
• Large shape database
  • 6,670 models
  • 1,814 classified models, 161 classes
  • Separate training and test sets
• Standardized suite of tests
  • Multiple classifications
  • Targeted sets of queries
• Standardized evaluation tools
  • Visualization software
  • Quantitative metrics

Princeton Shape Benchmark
[Figure: example classes: 51 potted plants, 33 faces, 15 desk chairs, 22 dining chairs, 100 humans, 28 biplanes, 14 flying birds, 11 ships]

Princeton Shape Benchmark (PSB)

| Database | Num Models | Num Classes | Num Classified | Largest Class |
|---|---|---|---|---|
| Osada | 133 | 25 | 133 | 20% |
| MPEG-7 | 1,300 | 15 | 227 | 15% |
| Hilaga | 230 | 32 | 230 | 15% |
| Technion | 1,068 | 17 | 258 | 10% |
| Zaharia | 1,300 | 23 | 362 | 14% |
| CCCC | 1,841 | 54 | 416 | 13% |
| Utrecht | 684 | 6 | 512 | 45% |
| Taiwan | 1,833 | 47 | 549 | 12% |
| Viewpoint | 1,890 | 85 | 1,280 | 12% |
| PSB | 6,670 | 161 | 1,814 | 6% |

Princeton Shape Benchmark (PSB)

| Database | Vehicles | Furniture | Animals | Plants | Household | Buildings |
|---|---|---|---|---|---|---|
| Osada | 47% | 12% | 12% | 0% | 24% | 0% |
| MPEG-7 | 12% | 0% | 14% | 13% | 0% | 7% |
| Hilaga | 12% | 0% | 23% | 2% | 12% | 0% |
| Zaharia | 35% | 0% | 7% | 7% | 11% | 0% |
| CCCC | 33% | 13% | 21% | 5% | 25% | 0% |
| Utrecht | 100% | 0% | 0% | 0% | 0% | 0% |
| Taiwan | 44% | 13% | 0% | 0% | 36% | 0% |
| Viewpoint | 0% | 42% | 1% | 0% | 50% | 0% |
| PSB | 26% | 11% | 16% | 8% | 22% | 6% |

Comparison of Shape Descriptors
• Shape Histograms (Shells)
• Shape Histograms (Sectors)
• Shape Histograms (SecShells)
• D2 Shape Distributions
• Extended Gaussian Image (EGI)
• Complex Extended Gaussian Image (CEGI)
• Spherical Extent Function (EXT)
• Radialized Spherical Extent Function (REXT)
• Voxel
• Gaussian Euclidean Distance Transform (GEDT)
• Spherical Harmonic Descriptor (SHD)
• Light Field Descriptor (LFD)

Comparison of Shape Descriptors
[Figure: precision/recall plot, Base classification (92 classes); curves for LFD, REXT, SHD, GEDT, EXT, SecShells, Voxel, Sectors, CEGI, EGI, D2, Shells]
Evaluation Tools
• Visualization tools
  • Precision/recall plot
  • Best matches
  • Distance image
  • Tier image
• Quantitative metrics
  • Nearest neighbor
  • First and Second tier
  • E-Measure
  • Discounted Cumulative Gain (DCG)
[Figure: best matches for a query, each marked as correct class or wrong class]
[Figure labels: Dining Chair, Desk Chair]

Function vs. Shape
Functional at the top levels of the hierarchy, shape based at the lower levels

root
  Man-made
    Vehicle
    Furniture
      Table
        Rectangular table
        Round table
      Chair
  Natural

Base Classification (92 classes)
[Figure: precision/recall plot comparing SHD and EGI; hierarchy path Man-made, Furniture, Table, Round table]

Coarse Classification (44 classes)
[Figure: precision/recall plot comparing SHD and EGI; hierarchy path Man-made, Furniture, Table, Round table]

Coarser Classification (6 classes)
[Figure: precision/recall plot comparing SHD and EGI; hierarchy path Man-made, Furniture, Table, Round table]

Coarsest Classification (2 classes)
[Figure: precision/recall plot comparing SHD and EGI; hierarchy path Man-made, Furniture, Table, Round table]

Granularity Comparison
[Figure: precision/recall plots for Base (92) and Man-made vs. Natural (2); curves for LFD, REXT, SHD, GEDT, EXT, SecShells, Voxel, Sectors, CEGI, EGI, D2, Shells]
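Three of the quantitative metrics named on the Evaluation Tools slide can be sketched as follows. The slides list the metrics without defining them, so the formulas here are assumptions based on their commonly used definitions: nearest neighbor checks the top match, first and second tier measure recall within the top K and 2K results (K being the number of same-class models in the list), and DCG discounts each correct match by the base-2 logarithm of its rank, then divides by the best achievable value. The function names are hypothetical, the query itself is assumed to be excluded from the ranked list, and the E-Measure is not covered.

```python
import math
from typing import Sequence


def nearest_neighbor(relevant: Sequence[bool]) -> float:
    """1.0 if the closest match is in the query's class, else 0.0."""
    return 1.0 if relevant[0] else 0.0


def tier(relevant: Sequence[bool], multiple: int) -> float:
    """Recall within the top multiple*K results (multiple=1: first tier, 2: second tier).

    K is the number of same-class models in the list; assumes K > 0.
    """
    k = sum(relevant)
    return sum(relevant[: multiple * k]) / k


def dcg(relevant: Sequence[bool]) -> float:
    """Discounted cumulative gain, normalized by the ideal ordering (assumes K > 0)."""

    def gain(flags: Sequence[bool]) -> float:
        total = 0.0
        for rank, hit in enumerate(flags, start=1):
            if hit:
                # Rank 1 is not discounted; later ranks are divided by log2(rank).
                total += 1.0 if rank == 1 else 1.0 / math.log2(rank)
        return total

    ideal = sorted(relevant, reverse=True)  # all correct matches ranked first
    return gain(relevant) / gain(ideal)


# Hypothetical ranked list for one query: True marks a same-class model.
flags = [True, False, True, False, False, True]
scores = {
    "nearest_neighbor": nearest_neighbor(flags),
    "first_tier": tier(flags, 1),
    "second_tier": tier(flags, 2),
    "dcg": dcg(flags),
}
```

Each function scores a single query; a benchmark-style number would be the average of these scores over all query models.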
Rotationally Aligned Models (650)
[Figure: precision/recall plot comparing SHD and GEDT]

All Models (907)
[Figure: precision/recall plot comparing SHD and GEDT]

Complex Models (200)
[Figure: precision/recall plot comparing SHD and GEDT]

Performance by Property

| Shape Descriptor | Rotation Aligned | Base | Depth Complexity |
|---|---|---|---|
| LFD | 18.8 | 21.3 | 28.2 |
| REXT | 12.3 | 13.3 | 15.0 |
| SHD | 7.6 | 10.2 | 8.9 |
| GEDT | 13.0 | 10.1 | 13.5 |
| EXT | 5.0 | 6.0 | 6.1 |
| SecShells | 5.2 | 2.8 | 2.2 |
| Voxel | 4.7 | 2.4 | 0.2 |
| Sectors | 2.0 | -0.3 | -1.6 |
| CEGI | -8.7 | -9.6 | -12.7 |
| EGI | -11.2 | -10.9 | -9.1 |
| D2 | -19.7 | -18.2 | -19.9 |
| Shells | -29.1 | -27.3 | -30.9 |

Conclusion
• Methodology to compare shape descriptors
  • Vary classifications
  • Query lists targeted at specific properties
• Unexpected results
  • EGI: good at discriminating man-made vs. natural objects, though poor at fine-grained distinctions
  • LFD: good overall performance across tests
• Freely available Princeton Shape Benchmark
  • 1,814 classified polygonal models
  • Source code for evaluation tools

Future Work
• Multi-classifiers
• Evaluate statistical significance of results
• Application of techniques to other domains
  • Text retrieval
  • Image retrieval
  • Protein classification

Acknowledgements
David Bengali partitioned thousands of models.
Ming Ouhyoung and his students provided the light field descriptor.
Dejan Vranic provided the CCCC and MPEG-7 databases.
Viewpoint Data Labs donated the Viewpoint database.
Remco Veltkamp and Hans Tangelder provided the Utrecht database.
Funding: The National Science Foundation grants CCR-0093343 and IIS-0121446.

The End
http://shape.cs.princeton.edu/benchmark