Pertemuan 10 Sebaran Binomial dan Poisson – Metoda Statistika Matakuliah

advertisement

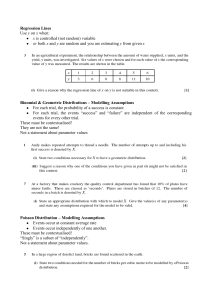

Matakuliah Tahun Versi : I0134 – Metoda Statistika : 2005 : Revisi Pertemuan 10 Sebaran Binomial dan Poisson 1 Learning Outcomes Pada akhir pertemuan ini, diharapkan mahasiswa akan mampu : • Mahasiswa dapat menghitungpeluang, rataan dan varians peubah acak Binomial dan Poisson. 2 Outline Materi • Sebaran Peluang Binomial • Nilai harapan dan varians sebaran Binomial • Sebaran peluang Poisson • Nilai harapan dan varians sebaran Poisson 3 Binomial and Poisson Probability Distributions Binomial Probability Distribution l Consider a situation where there are only two possible outcomes (a “Bernoulli trial”) Example: u flipping a coin head or tail u rolling a dice 6 or not 6 (i.e. 1, 2, 3, 4, 5) Label the possible outcomes by the variable k We want to find the probability P(k) for event k to occur Since k can take on only 2 values we define those values as: k = 0 or k = 1 u let P(k = 0) = q (remember 0 ≤ q ≤ 1) u something must happen so P(k = 0) + P(k = 1) = 1 (mutually exclusive events) P(k = 1) = p = 1 - q u We can write the probability distribution P(k) as: P(k) = pkq1-k (Bernoulli distribution) u coin toss: define probability for a head as P(1) P(1) = 0.5 and P(0=tail) = 0.5 too! u dice rolling: define probability for a six to be rolled from a six sided dice as P(1) P(1) = 1/6 and P(0=not a six) = 5/6. 4 l What is the mean () of P(k)? 1 kP(k) k0 1 P(k) 0 q 1 p p q p discrete distribution k0 l What is the Variance (2) of P(k)? 1 2 k P(k ) 2 k 01 2 02 P(0) 12 P(1) 2 p p 2 p(1 p) pq P(k ) k 0 l l l Suppose we have N trials (e.g. we flip a coin N times) what is the probability to get m successes (= heads)? Consider tossing a coin twice. The possible outcomes are: no heads: P(m = 0) = q2 one head: P(m = 1) = qp + pq (toss 1 is a tail, toss 2 is a head or toss 1 is head, toss 2 is a tail) = 2pq we don't care which of the tosses is a head so 2 two heads: P(m = 2) = p there are two outcomes that give one head Note: P(m=0)+P(m=1)+P(m=2)=q2+ qp + pq +p2= (p+q)2 = 1 (as it should!) We want the probability distribution function P(m, N, p) where: m = number of success (e.g. number of heads in a coin toss) N = number of trials (e.g. number of coin tosses) p = probability for a success (e.g. 0.5 for a head) 5 l l If we look at the three choices for the coin flip example, each term is of the form: CmpmqN-m m = 0, 1, 2, N = 2 for our example, q = 1 - p always! coefficient Cm takes into account the number of ways an outcome can occur without regard to order. for m = 0 or 2 there is only one way for the outcome (both tosses give heads or tails): C0 = C2 = 1 for m = 1 (one head, two tosses) there are two ways that this can occur: C1 = 2. Binomial coefficients: number of ways of taking N things m at time CN , m m!( NN! m)! N m 0! = 1! = 1, 2! = 1·2 = 2, 3! = 1·2·3 = 6, m! = 1·2·3···m Order of occurrence is not important u e.g. 2 tosses, one head case (m = 1) n we don't care if toss 1 produced the head or if toss 2 produced the head Unordered groups such as our example are called combinations Ordered arrangements are called permutations For N distinguishable objects, if we want to group them m at a time, the number of permutations: N! PN,m (N m)! u example: If we tossed a coin twice (N = 2), there are two ways for getting one head (m = 1) u example: Suppose we have 3 balls, one white, one red, and one blue. n Number of possible pairs we could have, keeping track of order is 6 (rw, wr, rb, br, wb, bw): 3! P3,2 6 (3 2)! n If order is not important (rw = wr), then the binomial formula gives 3! C3,2 3 number of “two color” combinations 2!(3 2)! 6 Binomial distribution: the probability of m success out of N trials: l P(m, N , p) CN , m p m q N m p N m m N m q N! p mq N m m!( N m)! p is probability of a success and q = 1 - p is probability of a failure 0.14 0.40 0.10 P (k , 50, 1/3) P (k , 7, 1/3) 0.12 Expectation Value = np = 7 * 1/3 = 2.333... 0.30 Expectation Value = np = 50 * 1/3 = 16.667... 0.20 0.08 0.06 0.04 0.10 0.02 0.00 0.00 0 l 2 4 k 6 8 10 0 5 10 15 k 20 25 30 To show that the binomial distribution is properly normalized, use Binomial Theorem: k ( a b) k a k l l 0 N N P(m, N , p ) m0 k l l p m0 N m b m N m q ( p q) N 1 binomial distribution is properly normalized 7 Mean of binomial distribution: N mP (m, N , p) m0 N P(m, N , p) N N m0 m0 mP (m, N , p) m p N m m N m q m0 A cute way of evaluating the above sum is to take the derivative: N N m N m 0 m p q p m 0 N m m0 p 1 N m m0 p N m m N m q p N m m 1 N m q N (1 p ) N p m0 N 1 N m p m0 N m m m ( N m)(1 p ) N m 1 0 (1 p ) N m (1 p ) 1 N m m0 p N m m (1 p ) N m p 1 N (1 p ) 1 1 (1 p ) 1 Np Variance of binomial distribution (obtained using similar trick): N (m )2 P(m, N, p) 2 m0 N Npq P(m, N, p) m0 8 Example: Suppose you observed m special events (success) in a sample of N events u The measured probability (“efficiency”) for a special event to occur is: m N What is the error on the probability ("error on the efficiency"): Npq N (1 ) (1 ) we will derive this later in the course m N N N The sample sizeN (N) should be as large as possible to reduce certainty in the probability measurement Let’s relate the above result to Lab 2 where we throw darts to measure the value of p. If we inscribe a circle inside a square with side=s then the ratio of the area of the circle to the rectangle is: area of circle pr 2 p ( s / 2) 2 p 2 2 area of square s 4 s So, if we throw darts at random at our rectangle then the probability () of a dart landing inside the circle is just the ratio of the two areas, p/4. The we can determine p using: The error in p is related to the error in by: p4. p 4 (1 ) N We can estimate how well we can measure p by this method by assuming that p/4= (3.14159…)/4: p 4 (1 ) N 1.6 using p / 4 N This formula “says” that to improve our estimate of p by a factor of 10 we have to throw 100 (N) times as many darts! Clearly, this is an inefficient way to determine p. 9 Example: Suppose a baseball player's batting average is 0.300 (3 for 10 on average). u Consider the case where the player either gets a hit or makes an out (forget about walks here!). prob. for a hit: p = 0.30 prob. for "no hit”: q = 1 - p = 0.7 u On average how many hits does the player get in 100 at bats? = Np = 100·0.30 = 30 hits u What's the standard deviation for the number of hits in 100 at bats? = (Npq)1/2 = (100·0.30·0.7)1/2 ≈ 4.6 hits we expect ≈ 30 ± 5 hits per 100 at bats u Consider a game where the player bats 4 times: probability of 0/4 = (0.7)4 = 24% probability of 1/4 = [4!/(3!1!)](0.3)1(0.7)3 = 41% Pete Rose’s lifetime batting average: 0.303 probability of 2/4 = [4!/(2!2!)](0.3)2(0.7)2 = 26% probability of 3/4 = [4!/(1!3!)](0.3)3(0.7)1 = 8% probability of 4/4 = [4!/(0!4!)](0.3)4(0.7)0 = 1% probability of getting at least one hit = 1 - P(0) = 1-0.24=76% 10 Poisson Probability Distribution l l l l The Poisson distribution is a widely used discrete probability distribution. Consider the following conditions: p is very small and approaches 0 u example: a 100 sided dice instead of a 6 sided dice, p = 1/100 instead of 1/6 u example: a 1000 sided dice, p = 1/1000 u radioactive decay N is very large and approaches ∞ u number of Prussian soldiers kicked u example: throwing 100 or 1000 dice instead of 2 dice to death by horses per year per army corps! The product Np is finite u quality control, failure rate predictions Example: radioactive decay Suppose we have 25 mg of an element very large number of atoms: N ≈ 1020 Suppose the lifetime of this element t = 1012 years ≈ 5x1019 seconds probability of a given nucleus to decay in one second is very small: p = 1/t = 2x10-20/sec Np = 2/sec finite! The number of decays in a time interval is a Poisson process. Poisson distribution can be derived by taking the appropriate limits of the binomial distribution N! P(m, N, p) p m q Nm m!(N m)! N! N(N 1) (N m 1)(N m)! Nm (N m)! (N m)! q Nm (1 p) Nm p 2 (N m)(N m 1) ( pN)2 1 p(N m) 1 pN e pN 2! 2! 11 N m m pN P(m, N, p) p e m! Let Np e m P(m, ) m! m m e m m0 m0 note : P(m, ) m! e m m m0 m! e e 1 m is always an integer ≥ 0 The mean and variance of u does not have to be an integer a Poisson distribution are the It is easy to show that: = Np = mean of a Poisson distribution same number! 2 = Np = = variance of a Poisson distribution l Radioactivity example with an average of 2 decays/sec: i) What’s the probability of zero decays in one second? e2 20 e2 1 2 p(0,2) e 0.135 13.5% 0! 1 ii) What’s the probability of more than one decay in one second? e2 20 e2 21 p( 1,2) 1 p(0,2) p(1,2) 1 1 e2 2e2 0.594 59.4% 0! 1! iii) Estimate the most probable number of decays/sec? P(m, ) 0 m m* u To solve this problem its convenient to maximize lnP(m, ) instead of P(m, ). e m ln P(m, ) ln m ln ln m! m! u 12 u In order to handle the factorial when take the derivative we use Stirling's Approximation: ln m! m ln m m ln P(m, ) ( m ln ln m!) m m ( m ln m ln m m) m ln ln m m ln10!=15.10 ln50!=148.48 10ln10-10=13.03 14% 50ln50-50=145.601.9% 1 1 m 0 m* The most probable value for m is just the average of the distribution u This is only approximate since Stirlings Approximation is only valid for large m. u Strictly speaking m can only take on integer values while is not restricted to be an integer. If you observed m events in a “counting” experiment, the error on m is m 13 Comparison of Binomial and Poisson distributions with mean = 1 0.5 0.4 0.35 poisson binomial N=3, p=1/3 0.3 Probability Probability 0.4 0.3 0.2 binomial N=10,p =0.1 poiss on 0.25 0.2 Not much difference between them! 0.15 0.1 0.1 0.05 0 0 0 1 2 m N 3 4 5 0.0 1.0 2.0 3.0 m N 4.0 5.0 6.0 7.0 For N large and fixed: Binomial Poisson 14 Uniform distribution and Random Numbers What is a uniform probability distribution: p(x)? p(x)=constant (c) for a x b p(x)=zero everywhere else Therefore p(x1)dx1= p(x2)dx2 if dx1=dx2 equal intervals give equal probabilities For a uniform distribution with a=0, b=1 we have p(x)=1 1 1 1 0 0 0 p( x)dx 1 cdx c dx c 1 What is a random number generator ? A number picked at random from a uniform distribution with limits [0,1] All major computer languages (FORTRAN, C) come with a random number generator. FORTRAN: RAN(iseed) The following FORTRAN program generates 5 random numbers: iseed=12345 do I=1,5 y=ran(iseed) type *, y enddo end 0.1985246 0.8978736 0.2382888 0.3679854 0.3817045 If we generate “a lot” of random numbers all equal intervals should contain the same amount of numbers. For example: generate: 106 random numbers expect: 105 numbers [0.0, 0.1] 105 numbers [0.45, 0.55] 15 • Selamat Belajar Semoga Sukses. 16