Powerpoint[25.9MB]

advertisement

![Powerpoint[25.9MB]](http://s2.studylib.net/store/data/014976707_1-f3b359d5a1c49624c3d381a540426b29-768x994.png)

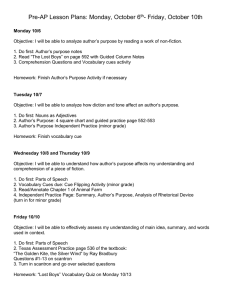

Ecological Statistics and Visual Grouping
Jitendra Malik, U.C. Berkeley

Collaborators
• David Martin
• Charless Fowlkes
• Xiaofeng Ren

From Images to Objects
"I stand at the window and see a house, trees, sky. Theoretically I might say there were 327 brightnesses and nuances of colour. Do I have "327"? No. I have sky, house, and trees." --Max Wertheimer

Grouping factors

Critique
• Predictive power
  – Factors for complex, natural stimuli?
  – How do they interact?
• Functional significance
  – Why should these be useful or confer some evolutionary advantage to a visual organism?
• Brain mechanisms
  – How are these factors implemented given what we know about V1 and higher visual areas?

Our approach
• Creating a dataset of human segmented images
• Measuring ecological statistics of various Gestalt grouping factors
• Using these measurements to calibrate and validate approaches to grouping

Natural Images aren’t generic signals
• Edges/Filters/Coding: Ruderman 1994/1997, Olshausen/Field 1996, Bell/Sejnowski 1997, Hateren/Schaaf 1998, Buccigrossi/Simoncelli 1999, Alvarez/Gousseau/Morel 1999, Huang/Mumford 1999
• Range Data: Huang/Lee/Mumford 2000

Brunswik & Kamiya 1953
• Unification of two important theories of perception:
  – Statistical/Bayesian formulation, due to Helmholtz: Likelihood Principle
  – Gestalt Psychology
• Attempted an empirical proof of the Gestalt grouping rule of proximity
  – “892 separations”
• Ahead of his time.
• Now we have the tools to do this.
Egon Brunswik (1903-1955)

Outline
1. Collect Data
2. Learn Local Boundary Model (Low-Level Cues)
3. Learn Pixel Affinity Model (Mid-Level Cues)
4. Discussion and Conclusion

Protocol
You will be presented a photographic image. Divide the image into some number of segments, where the segments represent “things” or “parts of things” in the scene. The number of segments is up to you, as it depends on the image. Something between 2 and 30 is likely to be appropriate.
It is important that all of the segments have approximately equal importance.
• Custom segmentation tool
• Subjects obtained from work-study program (UC Berkeley undergraduates)

Segmentations are Consistent
Perceptual organization forms a tree.
[Figure: segmentations A, B, C and a segmentation tree; node labels: Image, BG, grass, bush, far, L-bird, R-bird, body, head, eye, beak]
• A,C are refinements of B
• A,C are mutual refinements
• A,B,C represent the same percept
• Attention accounts for differences
Two segmentations are consistent when they can be explained by the same segmentation tree (i.e. they could be derived from a single perceptual organization).

Dataset Summary
• 30 subjects, age 19-23
  – 17 men, 13 women
  – 9 with artistic training
• 8 months
• 1,458 person hours
• 1,020 Corel images
• 11,595 Segmentations
  – 5,555 color, 5,554 gray, 486 inverted/negated

Gray, Color, InvNeg Datasets
• Explore how various high/low-level cues affect the task of image segmentation by subjects
  – Color = full color image
  – Gray = luminance image
  – InvNeg = inverted negative luminance image
[Figure panels: Color, Gray, InvNeg]

Outline
1. Collect Data
2. Learn Local Boundary Model (Low-Level Cues)
  • The first step in human vision: finding edges
  • Required for all segmentation algorithms
3. Learn Pixel Affinity Model (Mid-Level Cues)
4. Discussion and Conclusion

Dataflow
Image → Boundary Cues (Brightness, Color, Texture) → Cue Combination Model → Pb
Challenges: texture cue, cue combination
Goal: learn the posterior probability of a boundary Pb(x,y,θ) from local information only

[Figure: Non-Boundaries vs. Boundaries; panels I, B, C, T]

Brightness and Color Features
• 1976 CIE L*a*b* colorspace
• Brightness Gradient BG(x,y,r,θ)
  – χ² difference in L* distribution
• Color Gradient CG(x,y,r,θ)
  – χ² difference in a* and b* distributions
[Figure labels: r, (x,y), θ]
$$\chi^2(g,h) = \frac{1}{2} \sum_i \frac{(g_i - h_i)^2}{g_i + h_i}$$

Texture Feature
[Figure: Texton Map]
• Texture Gradient TG(x,y,r,θ)
  – χ² difference of texton histograms
  – Textons are vector-quantized filter outputs

Cue Combination Models
• Classification Trees
  – Top-down splits to maximize entropy, error bounded
• Density Estimation
  – Adaptive bins using k-means
• Logistic Regression, 3 variants
  – Linear and quadratic terms
  – Confidence-rated generalization of AdaBoost (Schapire & Singer)
• Hierarchical Mixtures of Experts (Jordan & Jacobs)
  – Up to 8 experts, initialized top-down, fit with EM
• Support Vector Machines (libsvm, Chang & Lin)
  – Gaussian kernel, ν-parameterization
The models range over bias, complexity, and parametric/non-parametric form.

Computing Precision/Recall
Recall = Pr(signal|truth) = fraction of ground truth found by the signal
Precision = Pr(truth|signal) = fraction of signal that is correct
• Always a trade-off between the two
• Standard measures in information retrieval (van Rijsbergen XX)
• ROC from standard signal detection is the wrong approach
Strategy
• Detector output (Pb) is a soft boundary map
• Compute precision/recall curve:
  – Threshold Pb at many points t in [0,1]
  – Recall = Pr(Pb>t|seg=1)
  – Precision = Pr(seg=1|Pb>t)

Classifier Comparison
[Figure annotations: Goal, More Noise, More Signal]

ROC vs. Precision/Recall
$$F = \max_t \frac{2pr}{p + r}$$
            Truth P   Truth N
Signal P    TP        FP
Signal N    FN        TN
ROC Curve
  Hit Rate = TP / (TP+FN)
  False Alarm Rate = FP / (FP+TN)
PR Curve
  Precision = TP / (TP+FP)
  Recall = TP / (TP+FN)

Cue Calibration
All free parameters optimized on training data
All algorithmic alternatives evaluated by experiment
• Brightness Gradient
  – Scale, bin/kernel sizes for KDE
• Color Gradient
  – Scale, bin/kernel sizes for KDE, joint vs. marginals
• Texture Gradient
  – Filter bank: scale, multiscale?
  – Histogram comparison: L1, L2, L∞, χ², EMD
  – Number of textons, image-specific vs. universal textons
• Localization parameters for each cue

Calibration Example: Number of Textons for the Texture Gradient

Calibration Example #2: Image-Specific vs. Universal Textons

Boundary Localization
[Figure panels: TG at Boundaries, TG at Non-Boundaries]
(1) Fit cylindrical parabolas to raw oriented signal to get local shape (Savitzky-Golay)
(2) Localize peaks
[Equation garbled in extraction; surviving symbols: f, f̂, f̃]

Dataflow
Image → Optimized Cues (Brightness, Color, Texture) → Cue Combination Model → Pb → Benchmark ← Human Segmentations

Classifier Comparison

Cue Combinations

Alternate Approaches
• Canny Detector
  – Canny 1986
  – MATLAB implementation
  – With and without hysteresis
• Second Moment Matrix
  – Nitzberg/Mumford/Shiota 1993
  – cf. Förstner and Harris corner detectors
  – Used by Konishi et al. 1999 in learning framework
  – Logistic model trained on full eigenspectrum

Pb Images
[Figure columns: Image, Canny, 2MM, Us, Human]

Pb Images II
[Figure columns: Image, Canny, 2MM, Us, Human]

Pb Images III
[Figure columns: Image, Canny, 2MM, Us, Human]

Two Decades of Boundary Detection

Findings
1. A simple linear model is sufficient for cue combination
  – All cues weighted approximately equally in logistic
2. Proper texture edge model is not optional for complex natural images
  – Texture suppression is not sufficient!
3. Significant improvement over state-of-the-art in boundary detection
  – Pb(x,y,θ) useful for higher-level processing
4. Empirical approach critical for both cue calibration and cue combination

Spatial priors on image regions and contours

Good Continuation
• Wertheimer ’23
• Kanizsa ’55
• von der Heydt, Peterhans & Baumgartner ’84
• Kellman & Shipley ’91
• Field, Hayes & Hess ’93
• Kapadia, Westheimer & Gilbert ’00
……
• Parent & Zucker ’89
• Heitger & von der Heydt ’93
• Mumford ’94
• Williams & Jacobs ’95
……

Outline of Experiments
Prior model of contours in natural images
– First-order Markov model
  • Test of Markov property
– Multi-scale Markov models
  • Information-theoretic evaluation
  • Contour synthesis
  • Good continuation algorithm and results

Contour Geometry
• First-Order Markov Model (Mumford ’94, Williams & Jacobs ’95)
  – Curvature: white noise (independent from position to position)
  – Tangent t(s): random walk
  – Markov property: the tangent at the next position, t(s+1), only depends on the previous tangent t(s)
[Figure labels: s, s+1, t(s), t(s+1)]

Test of Markov Property
Segment the contours at high-curvature positions

Prediction: Exponential Distribution
If the first-order Markov property holds…
• At every step, there is a constant probability p that a high curvature event will occur
• High curvature events are independent from step to step
Then the probability of finding a segment of length k with no high curvature is $(1-p)^k$

Empirical Distribution
Exponential? NO

Empirical Distribution: Power Law
$$\mathrm{Prob} \propto \frac{1}{(\mathrm{length})^{1.62}}$$
[Plot axes: Probability density vs. Contour segment length]

Power Laws in Nature
• Power laws are widely found in nature
  – Brightness of stars
  – Magnitude of earthquakes
  – Population of cities
  – Word frequency in natural languages
  – Revenue of commercial corporations
  – Connectivity in Internet topology
  ……
• Usually characterized by self-similarity and multi-scale phenomena

Multi-scale Markov Models
• Assume knowledge of contour orientation at coarser scales
2nd Order Markov: $P(\, t(s+1) \mid t(s),\ t^{(1)}(s+1) \,)$
Higher Order Models: $P(\, t(s+1) \mid t(s),\ t^{(1)}(s+1),\ t^{(2)}(s+1),\ \ldots \,)$
[Figure labels: s, s+1, t(s), t(s+1), t^(1)(s+1)]

Information Gain in Multi-scale
[Plot axes: Conditional Entropy $H(\, t(s+1) \mid t(s),\ t^{(1)}(s+1),\ t^{(2)}(s+1),\ \ldots \,)$ vs. Order of Markov model; annotation: 14.6% of total entropy (at order 5)]

Contour Synthesis
[Figure panels: First-Order Markov, Multi-scale Markov]

Multi-scale in Natural Images
• Arbitrary viewing distance
• Multi-scale in object shape

Conditioned on “Object Size”
[Plot axes: Probability density vs. Contour segment length; panels: Region size 250 - 1000, Region size 1000 - 10000, Region size 10000 - 300000]

Distribution of Region Convexity

Multi-scale Contour Completion
• Coarse-to-Fine
  – Coarse-scale completes large gaps
  – Fine-scale detects details
• Completed contours at coarser scales are used in the higher-order Markov models of contour prior for finer scales
$P(\, t(s+1) \mid t(s),\ t^{(1)}(s+1),\ \ldots \,)$

Multi-scale: Example
[Figure panels: input, coarse scale, fine scale w/ multi-scale, fine scale w/o multi-scale]

Comparison: same number of edge pixels
[Figure panels: Canny, Our result]

Comparison: same number of edge pixels
[Figure panels: Canny, Our result]

Outline
1. Collect and Validate Data
2. Learn Local Boundary Model (Low-Level Cues)
3. Learn Pixel Affinity Model (Mid-Level Cues)
  – Good representation for segmentation algorithms
  – Keeps segmentation in a probabilistic framework
4. Discussion and Conclusion

Dataflow
Image → Region Cues, Edge Cues → Estimated Affinity (E) → Segment
Eij = affinity between pixels i and j
Representation for graph-theoretic segmentation algorithms:
  – Minimum Spanning Trees - Zahn 1971, Urquhart 1982
  – Spectral Clustering - Scott/Longuet-Higgins 1990, Sarkar/Boyer 1996
  – Graph Cuts - Wu/Leahy 1993, Shi/Malik 1997, Felzenszwalb/Huttenlocher 1998, Gdalyahu/Weinshall/Werman 1999
  – Matrix Factorization - Perona/Freeman 1998
  – Graph Cycles - Jermyn/Ishikawa 2001

Pixel Affinity Cues
a) Patch similarity (3)
b) Edges: strength of intervening contour (3)
c) Image plane distance
All cues calibrated with respect to training data
• Goal: Learn affinity function from the dataset using these 7 cues
[Figure labels: pixels i, j]

Dataflow
Image → Region Cues, Edge Cues → Estimated Affinity (E) → Segment
Human Segmentations → Groundtruth Affinity (G)
E, G → Benchmark

Two Evaluation Methods
[Figure labels: Eij, Gij]
1. Precision-Recall of same-segment pairs
  – Precision is Pr(Gij=1|Eij>t)
  – Recall is Pr(Eij>t|Gij=1)
2. Mutual Information between E and G

Mutual information
$$I(x;y) = \sum_{x,y} P(x,y) \log_2 \frac{P(x,y)}{P(x)\,P(y)}$$
where x is a cue and y is an indicator of being in the same segment

Individual Features
[Figure panels: Patches, Gradients]

The Distance Cue
cf. Brunswik & Kamiya 1953

Feature Pruning
[Figure panels: Bottom-Up, Top-Down]
4 Good cues: Texture edge/patch, Color patch, Brightness edge
2 Poor cues: Color edge, Brightness patch

Affinity Model vs. Humans

Results
1. Common Wisdom: Use patches only / Use edges only
   Finding: Use both.
2. Common Wisdom: Must use patches for texture
   Finding: Not true.
3. Common Wisdom: Color is a powerful grouping cue
   Finding: True, but texture is better
4. Common Wisdom: Brightness patches are a poor cue
   Finding: True (shadows)
5. Common Wisdom: Proximity is a (Gestalt) grouping cue
   Finding: Proximity is a result, not a cause of grouping

Outline
1. Collect and Validate Data
2. Learn Local Boundary Model (Low-Level Cues)
3. Learn Pixel Affinity Model (Mid-Level Cues)
4. Discussion and Conclusion

Contribution
• Provide a mathematical foundation for the grouping problem in terms of the ecological statistics of natural images.
  – "When you can measure what you are speaking about and express it in numbers, you know something about it; but when you cannot measure it, when you cannot express it in numbers, your knowledge is of the meager and unsatisfactory kind." --Lord Kelvin
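
Appendix sketch: the measurements used in the talk
The three quantities the slides rely on are the χ² histogram difference behind the brightness, color, and texture gradients, the precision/recall F-measure used for benchmarking, and the mutual information between a cue and the same-segment indicator. They can be sketched in plain Python as follows. This is a minimal illustration, not the authors' implementation: the function names, the skipping of empty histogram bins, and the convention that precision is 1.0 when nothing is detected are all assumptions made here.

```python
import math
from collections import Counter


def chi2_distance(g, h):
    """Chi-squared difference: 0.5 * sum_i (g_i - h_i)^2 / (g_i + h_i)."""
    total = 0.0
    for gi, hi in zip(g, h):
        if gi + hi > 0:  # assumption: bins empty in both histograms contribute 0
            total += (gi - hi) ** 2 / (gi + hi)
    return 0.5 * total


def precision_recall(scores, truth, t):
    """Precision Pr(truth=1 | score>t) and recall Pr(score>t | truth=1)."""
    tp = sum(1 for s, y in zip(scores, truth) if s > t and y == 1)
    fp = sum(1 for s, y in zip(scores, truth) if s > t and y == 0)
    fn = sum(1 for s, y in zip(scores, truth) if s <= t and y == 1)
    # assumption: with no detections, precision is taken to be 1.0
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def max_f_measure(scores, truth, thresholds):
    """F = max over thresholds t of 2pr / (p + r)."""
    best = 0.0
    for t in thresholds:
        p, r = precision_recall(scores, truth, t)
        if p + r > 0:
            best = max(best, 2 * p * r / (p + r))
    return best


def mutual_information(xs, ys):
    """I(x;y) in bits, estimated from paired discrete samples."""
    n = len(xs)
    pxy = Counter(zip(xs, ys))
    px = Counter(xs)
    py = Counter(ys)
    return sum(
        (c / n) * math.log2((c / n) / ((px[x] / n) * (py[y] / n)))
        for (x, y), c in pxy.items()
    )
```

In practice the scores would be per-pixel Pb values (or pairwise affinities Eij) and the truth labels would come from the human segmentations; continuous cues would need to be binned before calling `mutual_information`.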