

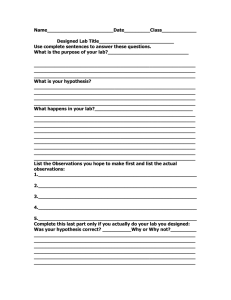

Justifying Small-N for ISS Research: Can NASA Researchers Stray from Traditional Standards?

James Fiedler (1,2), Ph.D.; Alan H. Feiveson (1), Ph.D.; Robert J. Ploutz-Snyder (1,2), Ph.D., PStat

(1) Universities Space Research Association, Houston, TX; (2) NASA Johnson Space Center, Houston, TX

Abstract

When submitting research proposals for external funding, principal investigators (PIs) are asked to justify their proposed sample size (n). Grant-writing dogma typically requires PIs to justify a sample size that achieves at least 80% power to reject the null hypothesis with statistical tests using 2-tailed α = 0.05, an exercise that depends on knowing variability and the anticipated effect size. This traditional approach is a difficult task for any researcher to truly accomplish, due to the number of assumptions that are required (but often not fully appreciated) and the requirement for a known effect size in novel research. NASA investigators have even more difficulty with this tradition because the availability of our experimental subjects (e.g., long-duration astronauts, high-fidelity analog subjects) is incredibly limited, and also because of the extreme costs and time necessary for conducting large-n studies. We present some recent ideas that extend Value of Information Theory to sample size calculations. We argue that these methods are reasonable alternatives to traditional sample size calculations, and should be considered for research that is highly innovative, costly, difficult to conduct, or prohibitively difficult to complete within a reasonable time frame.

[Other poster panel titles: The Threshold Myth of Minimum-n; Study Value as a Function of Step Costs]

Value of Information Theory

Few would dispute that well-conceived research is highly valuable when it produces statistically significant findings that are consistent with our understanding of the discipline(s).
Is it the case that all “non-significant” research is worthless, i.e., that there is no value in a study that fails to reject the null hypothesis? Is it possible that the null hypothesis is actually supported by the data, or that the actual effect size is smaller than we assumed it would be? Alternatively, if the data do not support $H_0$, say at P = 0.06, is there no value in that information? In other words, is there something of value other than P < 0.05 in well-conceived research, or are such studies “fundamentally flawed,” as grant reviewers have commonly claimed of studies that are “underpowered” to detect statistical significance?

Value of Information (VOI) Theory [5] suggests evaluating a study by its cost efficiency, the ratio of projected value to total study cost at a given n (equation 1), which is a function that could be maximized to arrive at the most cost-efficient sample size. However, projected value ($V_n$) is an elusive construct that is difficult, if not impossible, to define.

• What is the value of a cure? A missed cure?
• What is the value of a negative side effect?
• Do your values match mine?

We chose Bedrest as a motivating example because there are substantial “step” costs associated with the maximum number of bedrest participants the facility can process per year. For example, if the facility were completely dedicated to a single experiment, the costs associated with n = 18 would be substantially more than those for n = 17, because the one extra subject would incur an additional year of annual costs, which are not pro-rated per subject. In reality, the facility runs subjects in multiple studies simultaneously. We are using the best available estimates of Bedrest cost, but we simplify the model by assuming the facility is used for a single study at a time. We argue that this simplification does not invalidate the application of cost-based sample size determination to similar situations.
Convention sets the Type I error rate (α), the chance of rejecting the null hypothesis and claiming a difference exists when it does not, at α = 0.05. Scientific and statistical thinking has long held that Type I errors are the most serious type of mistake, and so our statistical tests are set very conservatively to minimize this possibility. The Type II error rate (β) is the chance of failing to reject the null hypothesis, and thus claiming no effect, when in fact there really is an effect. These errors are represented in sample size calculations as “power” (1 − β), the probability of rejecting a false null hypothesis. Traditional sample size justification sets power to 80% (thus β = 0.20) so that the likelihood of detecting a true effect is high.

One obvious problem with this approach is that it requires PIs to know the effect size that they hope to observe before collecting any data! This requires a large leap of faith from imperfect pilot data or marginally related manuscripts that are “close enough” to the proposed research to be useful, yet “not too similar,” lest the proposed research appear unnecessary and duplicative. A second problem is that the calculations rely heavily on a set of assumptions for which even small deviations can dramatically affect the “answer” (i.e., the minimum required n), thereby opening the door to miscalculations and/or the erosion of scientific integrity.

One inherent problem with this paradigm is that, while it certainly is possible to perform these calculations, the result is an answer that suggests that as long as n subjects participate in the study, the research will be successful. The implicit interpretation is that there is a threshold for n below which the study will provide no value (i.e., is “fundamentally flawed”) and above which the study has high value [1].
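The sensitivity of the traditional calculation to the assumed effect size can be illustrated with a short sketch. It assumes a two-group comparison of means with equal variances and uses the usual normal-approximation formula, n per group = 2((z_{1−α/2} + z_{1−β})/d)², where d is the standardized effect size. The two-group design and the function name `n_per_group` are illustrative assumptions and are not taken from the poster.

```python
import math
from statistics import NormalDist


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate subjects per group for a two-sided, two-sample z-test.

    d is the assumed standardized effect size (mean difference / SD).
    This is a normal approximation; a t-based calculation gives a
    slightly larger n.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for two-tailed alpha
    z_beta = z.inv_cdf(power)           # quantile matching the target power
    return math.ceil(2 * ((z_alpha + z_beta) / d) ** 2)


# Modest changes in the assumed effect size move the "required" n a lot.
for d in (0.4, 0.5, 0.6):
    print(f"d = {d}: about {n_per_group(d)} per group")
```

Under these assumptions the "required" n per group is 99 for d = 0.4, 63 for d = 0.5, and 44 for d = 0.6, so an effect-size guess that is off by 0.1 changes the answer by roughly a third or more.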
Bacchetti, McCulloch and Segal (BMS) [3] develop methods that avoid the need to quantify projected value by using a surrogate function for $V_n$ that increases with n at least as fast as any reasonable definition of $V_n$. Their model posits that there is a positive function $f(n)$ and a value $n^*$ such that

$$\frac{C_{n^*}}{f(n^*)} \le \frac{C_n}{f(n)} \quad \text{for all } n > n^*, \text{ and} \tag{2}$$

$$\frac{V_{n^*}}{f(n^*)} \ge \frac{V_n}{f(n)} \quad \text{for all } n \ge n^*, \text{ and} \tag{3}$$

$$\frac{V_{n^*}}{C_{n^*}} \ge \frac{V_n}{C_n} \quad \text{for all } n \ge n^*. \tag{4}$$

Inequality (4) follows from dividing (3) by (2), assuming positive costs and nonnegative projected values.

Figure 1: Qualitative depiction of the threshold myth. Reproduced from [1] with permission.

Even if one considers only traditional value metrics, like power, there simply is no sample size threshold that separates valuable from valueless research, yet the belief is pervasive. In fact, through statistical simulations, ten common metrics of study value have been shown to have roughly the same curve relating value to sample size [3].

Applying BMS’s Model to Bedrest Research

Given the challenges and limitations of traditional sample size calculations, and particularly given the challenges of conducting NASA research in the space or analog environments, we argue that sample size justification for NASA-funded research should incorporate a broader appreciation of what is valuable. We review one approach that links study cost to n in order to design the most cost-effective study.

We applied these methods to cost data derived with cooperation from the NASA Flight Analog Project’s Bedrest Research Program. NASA’s Bedrest Research Program is designed to operate year-round with continuous subject participation unless disrupted by severe weather events or other unforeseen circumstances. Given the number of beds in the facility, and assuming 70-day bedrest studies are being conducted (plus the requisite pre- and post-bedrest support), the facility is able to complete approximately 17 bedrest subject trials per year.
Some of the primary costs involved in running this facility include recruitment, screening, dietary staff and supplies, medical personnel, administrative staff, costs associated with collecting standard measures (e.g., bloodwork, MRI), and hospital/facility rental. Investigators might also propose collecting data beyond the standard measures, which would incur additional costs. Some of these costs are essentially fixed per year and others depend on n.

[Figure 3 plot residue: horizontal axis n, with ticks at 1, 17, 34, 51 and 55; curve labels: No Step Costs, Large Step Costs, Moderate Step Costs, Substantial Step Costs]

Figure 2: Shapes of the relationship between projected value and sample size for 10 measures of study value and situations (scale removed for clarity). Curves include (a) Shannon information with $n_0$ = 100, where $n_0$ is the n equivalent of the prior information; (b) reciprocal of confidence interval width; (c) reduction in Bayesian credible interval width when $n_0$ = 100; (d) reduction in squared error versus using prior mean when $n_0$ = 100; (e) power for a standardized effect size of 0.2; (f) additional cures from a Bayesian clinical trial with prior means (SDs) for cure rates of 0.4 (0.05) versus 0.4 (0.1); (g) gain in Shannon information with $n_0$ = 2; (h) reduction in squared error versus using a single observation; (i) reduction in squared error versus using prior mean when $n_0$ = 2; (j) reduction in Bayesian credible interval width when $n_0$ = 2. Reproduced from [3] with permission.

The BMS model further holds that if $f(n)$ can be chosen so that condition (2) holds for any n under consideration, then choosing $n^*$ to minimize $C_n/f(n)$ selects the smallest sample size that meets (2) and (3) and guarantees that the most cost-efficient n is met or exceeded. BMS suggest choosing either $n_{\min}$, the smallest n that minimizes cost per subject (5), or $n_{\text{root}}$, the smallest n that minimizes the cost per square root of n (6).
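The $n_{\text{root}}$ rule, and the way annual step costs move it, can be sketched in a few lines. The cost model below (a one-time fixed cost, a per-subject cost, and an annual cost charged for every started block of 17 subjects) and all of its numbers are hypothetical, in arbitrary units. They are not the Bedrest program's actual costs, and were chosen only so that the pattern resembles the one described for Figure 3. The function names `total_cost` and `n_root` are likewise illustrative.

```python
import math

BLOCK = 17  # subjects the facility can complete per year (from the text)


def total_cost(n, step, fixed=550.0, per_subject=10.0, block=BLOCK):
    """Hypothetical total study cost in arbitrary units.

    fixed: one-time cost; per_subject: cost that scales with n;
    step: annual cost charged once for every started block of `block` subjects.
    """
    blocks_started = -(-n // block)  # integer ceiling of n / block
    return fixed + per_subject * n + step * blocks_started


def n_root(step, n_max=120):
    """Smallest n in 1..n_max that minimizes total cost per square root of n."""
    # min() returns the first minimizer it meets, i.e. the smallest n on ties.
    return min(range(1, n_max + 1),
               key=lambda n: total_cost(n, step) / math.sqrt(n))


for label, step in [("no", 0), ("moderate", 20), ("large", 100), ("substantial", 400)]:
    print(f"{label} step costs: n_root = {n_root(step)}")
```

With these made-up costs the minimizing n is 55 with no step costs and 51, 34, and 17 as the step cost grows, which illustrates that a larger share of step costs pulls the cost-efficient sample size down to an earlier block boundary.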
Background and Significance

Conventional sample size justification is based on hypothesis testing, where one rejects the null hypothesis of no difference if P < 0.05, assuming that we know a priori the effect size and the standard deviation (σ) of our dependent variable(s), and that we accept conventional choices for the Type I and Type II errors that we may make in our hypothesis testing. Under traditional frequentist hypothesis testing theory, there are four possible outcomes following a decision to reject or “accept” a null hypothesis:

| Our decision | Truth about $H_0$: $H_0$ is false | Truth about $H_0$: $H_0$ is true |
|---|---|---|
| We reject $H_0$ and assume $H_a$ to be true | Power | α |
| We accept $H_0$ and assume it to be true | β | 1 − α |

Cost efficiency is the ratio of projected value to total study cost:

$$\text{Cost Efficiency} = \frac{\text{Projected Value (given } n)}{\text{Total Study Cost (given } n)} = \frac{V_n}{C_n} \tag{1}$$

• What is the value of a scientific publication? Are all journals equal?
• How would you combine all of the positive and negative values to arrive at “study value”?

BMS’s Extension of VOI Model

BMS propose two choices of $f(n)$ for implementing their strategy:

$$f(n) = n \tag{5}$$

$$f(n) = \sqrt{n} \tag{6}$$

Figure 3: Study cost per square root of n vs. sample size (n) with different magnitudes of annual costs (i.e., step costs). Without step costs, the cost per square root of n decreases in a smooth curve until an optimal n is reached, and then rises. This is in sharp contrast to the curves with step costs, where the effect of annual costs incurred in steps of n is apparent. A smaller optimal n results when a larger proportion of the total costs are step costs. [Vertical axis: study costs per $n^{1/2}$.]

Figure 3 above shows four curves representing the relationship between cost per square root of n, using BMS’s root-n function (6), and proposed sample size. The most cost-effective sample size is the location where the curve begins to increase. The dashed curve serves as a reference, and it represents this relationship if there were no step costs associated with each block of n = 17 subjects. In the reference curve, n = 55 is the bottom of the curve, where both increasing and decreasing n result in a higher cost per square root of n.
The other three curves show the relative impact of moderate, large, and substantial step costs, with cost-effective sample sizes of n = 51, 34 and 17, respectively, consistent with larger step costs producing a smaller optimal n.

Conclusion

We support Bacchetti and colleagues in arguing that, particularly for highly innovative, novel and expensive research, the dogmatic application of traditional sample size justification is unreasonable, and new ideas are necessary. Their extension of Value of Information Theory seems as reasonable a method as any that we are aware of.

References

[1] Bacchetti, P. (2010). Current Sample Size Conventions: Flaws, Harms, and Alternatives. BMC Medicine, 8:17.
[2] Bacchetti, P., Wolf, L.E., Segal, M.R., McCulloch, C.E. (2005). Ethics and Sample Size. American Journal of Epidemiology, 161(2):105-110.
[3] Bacchetti, P., McCulloch, C.E., Segal, M.R. (2008). Simple, Defensible Sample Sizes Based on Cost Efficiency. Biometrics, 64:577-594.
[4] Bacchetti, P., Deeks, S.G., McCune, J.M. (2011). Breaking Free of Sample Size Dogma to Perform Innovative Translational Research. Science Translational Medicine, 3(87):24.
[5] Yokota, F., Thompson, K.M. (2004). Value of Information Analysis in Environmental Health Risk Management Decisions: Past, Present and Future. Risk Analysis, 24:635-650.