

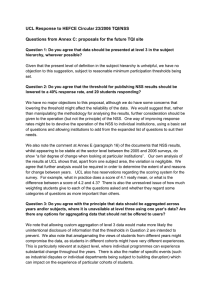

Response by UCL (University College London) to a consultation on changes to public information about higher education published by institutions (Key Information Set – ‘KIS’)

1 UCL welcomes the opportunity to comment on the proposals. However, we would note that in providing public information on programmes, steps should be taken by those developing the KIS dataset/document to ensure that the information is clear and easily understood by prospective students. Institutions have traditionally used prospectuses to provide information on programmes and modules in a format that is more easily digestible and aimed specifically at those who will not have a full understanding of institutional practice and university workings. It would be unfortunate if the KIS were to inhibit clear understanding on the part of its target audience and cause confusion.

2 We would also like to draw attention to the potential resource burden for HEIs in implementing the KIS. At the recent HEFCE conference on public information (18 February 2011) a number of concerns were expressed by HE representatives regarding the additional resource which would be required to provide the KIS, particularly at a time when HEIs are operating within severe financial constraints. Operating and maintaining the KIS dataset will require major investment. Some participants from HEIs involved in the pilot schemes for the KIS have reported that providing the KIS data for a small percentage of their provision has proved to be an onerous undertaking and costly in terms of resource and staff time. As we noted in our response to the consultation on the evaluation of the Academic Infrastructure in May 2010, ‘we trust that the lessons of the past have been learned and that the eventual dataset agreed upon will not involve the commitment of large amounts of scarce institutional resource to the preparation and maintenance of qualitative data’.

QUESTION 1

Are the three key purposes of public information outlined in paragraph 42 still appropriate? If not, what additional or alternative purposes should a public information set seek to address?

3 Yes, the three key purposes seem broadly appropriate.

QUESTION 2

Do you think the KIS fulfils our objective of providing the information students have identified as useful, in a place they look for it, in a standardised and complete manner?

4 It is easy to see why the survey participants opted for the three categories of employment data as detailed in Table 1. However, there are issues with this data which may make it misleading to prospective students.

In Annex E, the pie chart contains data about actual destinations and level of destination (graduate/non-graduate). There are a number of issues with this. ‘Work and Study’ does not appear to be categorised at graduate or non-graduate level. This may lead to misleading results and is confusing. Table 1 in the document states that graduate-level work is only considered for full-time roles, which raises the question: where do those who are in graduate-level part-time roles appear in the pie chart? Study is not broken down into graduate and non-graduate level, meaning that those who are studying for a PhD are given equal status with those who are taking a much lower level qualification. Again this is misleading.

For these reasons, we feel it is essential that the level of destination is separate from the actual destination. If details are to be given about the graduate/non-graduate level of destinations, this should include both those in employment and those in further study. The pie chart as it stands seems to send the message that employment is a more valid destination than further study.

Average salary is a very questionable measure of career success and programme quality. In addition, salary levels offered to new entrants can be dependent not only on sector but also location, previous experience etc.
In a simple KIS, it is doubtful whether any of this will be taken into account. In addition, the salary data is obtained, along with all other information, from the graduates themselves in the DLHE survey. This is unverified and could be open to abuse. Paragraph 19 of the consultation document rightly states that ‘public information should be robust’. We do not feel that salary is a true measure of programme quality, and it should not be published as such. However, if this data is to be used in such a high profile way, there should be some form of verification system built into its collection. It may be that the DLHE survey is not the best vehicle for this. For repayment of student fees (assuming the proposed system goes ahead), there will be a central system for monitoring graduate salaries. This will, we assume, be reliable. In addition, if the data is to be presented correctly, it should be made clear that all of it is taken at six months, not ‘in first year’.

QUESTION 3

Do you agree that links should be provided to the KIS from the UCAS web-site?

5 The UCAS website would seem a sensible place to hold this information. We think it is important to have one central reference point. If students instead have to look for the information on institutional websites, this may be more difficult in practice as there will be no standardised repository. It will also be very difficult to monitor, and it makes direct comparisons between programmes more difficult, as the user is required to look in numerous places to gather the information.

QUESTION 4

Given that we want the production of the KIS to be as efficient as possible, are there particular administrative or logistical issues which the pilot phase should consider?

6 The report states that the NSS data will be presented at ‘course’ level (which we assume means ‘degree programme’) where possible, or otherwise at the most refined JACS subject level available (paragraphs 55 and 63).
There are presentational issues to consider relating to the potential difficulties in using NSS data broken down at both programme and subject level (see question 6 below). A number of logistical and administrative implications also arise. Even with high response rates, many programmes with low population sizes would struggle to meet the necessary publication threshold (23 responses and a 50% response rate) to appear in the published results. For some programmes this would be impossible.

7 There are also difficulties in attributing the JACS subject data to specific programmes, as response data from programmes/subjects under the threshold may only appear in wider subject-level categories (and in some cases, not obviously relevant categories). Broadly speaking, the wider the JACS subject level shown, the harder it is to identify groups of students or produce meaningful data, and relating these to programmes with small numbers could be problematic. Where a programme relates to more than one subject area (i.e. an interdisciplinary programme), it is not obvious which subject area should be used if the programme is below the threshold. Some programmes are published under odd, often unexpected, JACS subject areas due to the way in which their students are reported in the HESA returns which are a requirement for their funding.

8 The practice of aggregating two years’ worth of data in order for it to be published (this is already done on Unistats) remains controversial, as it combines two separate student cohorts and makes it difficult to identify trends between different years.

9 The ‘hours per week’ for each programme may prove difficult to state, as this may vary from week to week and may be dependent on which modules have been selected. It would be more effective to have it linked to the ECTS ‘value’ of the year or the programme overall, translated into average hours per week. It is also hard to divide the time across learning and teaching as this will be module-dependent.
Where a programme has a degree of student selection, this could vary quite significantly.

10 There are a number of difficulties with the assessment information. As with the HEAR, we have found that the overarching generic terms are not always helpful, especially if an institution has, as we have, a significant number of different assessment types (e.g. coursework, orals, practicals, examinations of different types seen and unseen, multiple choice and written etc.). Institutions may also have a level of granularity of assessment data which may make it difficult to summarise in the overarching categories stated. Also, in a healthy institution, assessment methods change all the time. At UCL, proposals for amendment are submitted in the March prior to the September in which the modules are first made available. At the time the KIS dataset is populated, therefore, data may be inaccurate as these amendments are underway.

QUESTION 5

Should the information set to be published on institutional web-sites (shown at Annex F) include short, up-to-date employability statements for prospective students, in addition to information about links with employers?

11 Employability statements may sound appealing and useful to prospective students but we would question their effectiveness in reality. A statement linked to the KIS would be a reasonably easy action to implement. However, it would be helpful to know how useful prospective students found the employability statements published by HEIs on the Unistats website in August 2010. Information about links with employers would potentially be helpful for students but this might vary widely across an institution from programme to programme. Clear guidelines would therefore need to be given to institutions to ensure that students were not given misleading information.

QUESTION 6

Does Annex F set out the right information items for inclusion in the wider published information set (subject to agreement on the inclusion of employability statements as proposed in Question 5)? If you think items should be added/removed, please tell us about them.

12 The presentation of the NSS scores, either by programme or by subject area, has the potential to confuse prospective students. If the KIS dataset were to be applied to the 2010 NSS results, there would inevitably be mixed use of the programme and subject data across an institution. Students would find feedback data identified by programme in one place and by subject area in another (at three possible levels of granularity) and this might be compounded by some programmes or subjects aggregating two years’ worth of data. Recent experience at UCL shows that some UCL staff new to the NSS find the split between subject and programme confusing (despite explanatory material). There is therefore a danger that this might confuse, or even frustrate, prospective students if not carefully presented.

13 We do not consider that ‘results of internal student surveys’ should be included. A survey looking at just one institution will not be particularly useful. It would also be read out of context, creating a confused picture. If institutions choose not to publish any negative survey results (if this is an optional element of the information set), this will give a skewed picture. If an institution were to publish negative results, even where issues have been resolved, this old information would remain in the public domain. If this information is published, then institutions should be allowed to indicate what steps have been taken to address the issues. Institutions will not be happy having to publish information which damages recruitment. It should also be remembered that such surveys are often used internally to identify areas of concern and areas of success.
They are part of the feedback for improvement. Their use in the wider information set may therefore detract from this.

14 We are also concerned about the proposal to include information on ‘students continuing/completing/leaving without awards’. Most institutions have students who transfer from one programme to another; without an accompanying explanation, a programme might appear to have lost a large number of students when this is not the case. Students may also leave for reasons unrelated to their satisfaction with the programme (e.g. financial reasons, personal issues, or non-continuation due to legal constraints such as visa expiry). There are students who do not leave but repeat, and students who finish their registration without an award but who achieve it in the next year while not in attendance. How would these situations be captured?

15 Employment, salary and destination data would be meaningless at programme level, because samples would be too small. We would suggest that data for a sample of fewer than 100 respondents should NOT be provided, or should be provided with a health warning stating ‘small sample’.

QUESTION 7

Do you agree that the list of items for the information set should be maintained on HEFCE’s web-site and updated as necessary on advice from HEPISG and QHE Group?

16 We agree that this seems appropriate.

QUESTION 8

Do you agree that student unions should be able to nominate one optional question bank in their institution’s NSS each year?

17 We understand that this is intended to encourage HEIs to take up the optional questions (only half do at present) and to help student unions to increase student involvement with the NSS. Reluctance on the part of an HEI to do this could make it appear unwilling to engage with students.
However, we would like to note that an HEI could be justified in not taking up the optional questions on the grounds that the value added by the additional data provided might not justify the additional resource required for its analysis. Nevertheless, UCL would wish to encourage any proposal which leads to greater student involvement with the NSS, and we therefore have no objection to this.

QUESTION 9

Do you have any other comments on the proposals in this document, or further suggestions for what we might do?

18 See our introductory comments at paragraphs 1 and 2 above.

19 On the issue of comparability of NSS data, the Institute of Education research warns that simplistic comparisons between different HEIs do not take into account varying subject mix and student profiles¹. HEFCE intends to change the format of publishing 2011 data to avoid such comparisons being made. HEFCE recognises the validity of careful comparison of the same subject areas at different HEIs, but states that it is not valid to compare different subject areas or to ‘construct league tables of institutions’ (paragraph 124). It has endorsed the Institute of Education’s suggestion (Annex B, Recommendation 5) that guidance on appropriate use of the results be widely available. This is not inconsistent with HEFCE’s previous statements on using the NSS in league tables, but is perhaps its strongest indication to date that the survey should not be used for these purposes.

20 We are concerned that some of the information made available would be effectively a set of content-free platitudes, much of it unverifiable (such as mission statements). We would also reiterate that some potentially useful information would be statistically meaningless at programme level because it would be drawn from small samples.

¹ Enhancing and Developing the National Student Survey: Report to HEFCE by the Centre for Higher Education Studies at the Institute of Education (paragraph 16.3).