Heuristic Planning with Time and Resources P@trik Haslum Héctor Geffner


Proceedings of the Sixth European Conference on Planning

Heuristic Planning with Time and Resources

P@trik Haslum

Héctor Geffner

Linköping University, Sweden

(Now at Australian National University & NICTA.

firstname.lastname@anu.edu.au.)

Universitat Pompeu Fabra, Spain

hector.geffner@upf.edu

Abstract

We present an algorithm for planning with time and resources, based on heuristic search. The algorithm minimizes makespan using an admissible heuristic derived automatically from the problem instance. Estimators for resource consumption are derived in the same way. The goals are twofold: to show the flexibility of the heuristic search approach to planning and to develop a planner that combines expressivity and performance. Two main issues are the definition of regression in a temporal setting and the definition of the heuristic estimating completion time. A number of experiments are presented for assessing the performance of the resulting planner.

Introduction

Recently, heuristic state space search has been shown to be a good framework for developing different kinds of planning algorithms. It has been most successful in non-optimal sequential planning, e.g., HSP (Bonet and Geffner 2001) and FF (Hoffmann 2000), but has been applied also to optimal and parallel planning (Haslum and Geffner 2000).

We continue this thread of research by developing a domain-independent planning algorithm for domains with metric time and certain kinds of resources. The algorithm relies on regression search guided by a heuristic that estimates completion time and which is derived automatically from the problem representation. The algorithm minimizes the overall execution time of the plan, commonly known as the makespan.

A few effective domain-independent planners exhibit common features: TGP (Smith and Weld 1999) and TPSys (Garrido, Onaindia, and Barber 2001) handle actions with duration and optimize makespan, but not resources. RIPP (Koehler 1998) and GRT-R (Refanidis and Vlahavas 2000) handle resources, and are in this respect more expressive than our planner. Sapa (Do and Kambhampati 2001) deals with both time and resources, but non-optimally.

Among planners that exceed our planner in expressivity, e.g., Zeno (Penberthy and Weld 1994), IxTeT (Ghallab and Laruelle 1994) and HSTS (Muscettola 1994), none have reported significant domain-independent performance (Jonsson et al. (2000) describe the need for sophisticated engineering of domain dependent search control for the HSTS planner). Many highly expressive planners, e.g., O-Plan (Tate, Drabble, and Dalton 1996), ASPEN (Fukunaga et al. 1997) or TALplanner (Kvarnström and Doherty 2000), are “knowledge intensive”, relying on user-provided problem decompositions, evaluation functions or search constraints.¹

Action Model and Assumptions

The action model we use is propositional STRIPS with extensions for time and resources. As in GRAPHPLAN (Blum and Furst 1997) and many other planners, the action set is enriched with a no-op for each atom p which has p as its only precondition and effect. Apart from having a variable duration, a no-op is viewed and treated like a regular action.

Time

When planning with time each action $a$ has a duration, $\mathrm{dur}(a) > 0$. We take the time domain to be $\mathbb{R}^+$. In most planning domains we could use the positive integers, but we have chosen the reals to highlight the fact that the algorithm does not depend on the existence of a least indivisible time unit. Like Smith and Weld (1999), we make the following assumptions: For an action $a$ executed over an interval $[t, t + \mathrm{dur}(a)]$

(i) the preconditions $\mathrm{pre}(a)$ must hold at $t$, and preconditions not deleted by $a$ must hold throughout $[t, t + \mathrm{dur}(a)]$ and

(ii) the effects $\mathrm{add}(a)$ and $\mathrm{del}(a)$ take place at some point in the interior of the interval and can be used only at the end point $t + \mathrm{dur}(a)$.

Two actions, $a$ and $b$, are compatible iff they can be safely executed in overlapping time intervals. The assumptions above lead to the following condition for compatibility: $a$ and $b$ are compatible iff for each atom $p \in \mathrm{pre}(a) \cup \mathrm{add}(a)$, $p \notin \mathrm{del}(b)$ and vice versa (i.e., $p \in \mathrm{pre}(b) \cup \mathrm{add}(b)$ implies $p \notin \mathrm{del}(a)$).
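The compatibility condition can be checked directly from the precondition, add and delete sets of the two actions. The following is a minimal Python sketch of that test. The `Action` class, its field names (`dele` stands in for del, which is a reserved word in Python) and the function name `compatible` are illustrative assumptions and not part of the planner described in this paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A propositional STRIPS action with a duration (hypothetical layout)."""
    name: str
    dur: float
    pre: frozenset = frozenset()
    add: frozenset = frozenset()
    dele: frozenset = frozenset()  # delete list; "del" is a Python keyword


def compatible(a: Action, b: Action) -> bool:
    """True iff a and b may be executed in overlapping time intervals.

    Neither action may delete an atom that the other one requires as a
    precondition or adds as an effect.
    """
    a_conflicts = (a.pre | a.add) & b.dele
    b_conflicts = (b.pre | b.add) & a.dele
    return not a_conflicts and not b_conflicts
```

For instance, an action that loads a package onto a truck at location A is not compatible with driving that truck away from A, because the drive action deletes a precondition of the load action.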

¹ The distinction is sometimes hard to make. For instance, parcPlan (Lever and Richards 1994) domain definitions appear to differ from plain STRIPS only in that negative effects of actions are modeled indirectly, by providing a set of constraints, instead of explicitly as “deletes”. parcPlan has shown good performance in certain resource constrained domains, but domain definitions are not available for comparison.

Copyright © 2014, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.


Resources

The planner handles two types of resources: renewable and consumable. Renewable resources are needed during the execution of an action but are not consumed (e.g., a machine). Consumable resources, on the other hand, are consumed or produced (e.g., fuel). All resources are treated as real valued quantities; the division into unary, discrete and continuous is determined by the way the resource is used. Formally, a planning problem is extended with sets $R_P$ and $C_P$ of renewable and consumable resource names. For each resource name $r \in R_P \cup C_P$, $\mathrm{avail}(r)$ is the amount initially available and for each action $a$, $\mathrm{use}(a, r)$ is the amount used or consumed by $a$.

Planning with Time

We describe first the algorithm for planning with time, not considering resources. In this case, a plan is a set of action instances with starting times such that no incompatible actions overlap in time, action preconditions hold over the required intervals and goals are achieved on completion. The cost of a plan is the total execution time, or makespan. We describe each component of the search scheme: the search space, the branching rule, the heuristic, and the search algorithm.

Search Space

Regression in the classical setting is a search in the space of “plan tails”, i.e., partial plans that achieve the goals provided that the preconditions of the partial plan are met. A regression state, i.e., a set of atoms, summarizes the plan tail: if $s$ is the state obtained by regressing the goal through the plan tail $P'$ and $P$ is a plan that achieves $s$ from the initial state, then the concatenation of $P$ and $P'$ is a valid plan. A similar decomposition is exploited in the forward search for plans.

In a temporal setting, a set of atoms is no longer sufficient to summarize a plan tail or plan head. For example, the set $s$ of all atoms made true by a plan head $P$ at time $t$ holds no information about the actions in $P$ that have started but not finished before $t$. Then, if a plan tail $P'$ maps $s$ to a goal state, the combination of $P$ and $P'$ is not necessarily a valid plan. To make the decomposition valid, search states have to be extended with the actions under execution and their completion times. Thus, in a temporal setting states become pairs $s = (E, F)$, where $E$ is a set of atoms and $F = \{(a_1, \delta_1), \ldots, (a_n, \delta_n)\}$ is a set of actions $a_i$ with time increments $\delta_i$.

An alternative representation for plans will be useful: instead of a set of time-stamped action instances, a plan is represented by a sequence $\langle (A_0, \delta_0), \ldots, (A_m, \delta_m) \rangle$ of action sets $A_i$ and positive time increments $\delta_i$. Actions in $A_0$ begin executing at time $t_0 = 0$ and actions in $A_i$, $i = 1 \ldots m$, at time $t_i = \sum_{0 \leq j < i} \delta_j$ (i.e., $\delta_i$ is the time to wait between the beginning of actions $A_i$ and the beginning of actions $A_{i+1}$).

State Representation A search state $s = (E, F)$ is a pair consisting of a set of atoms $E$ and a set of actions with corresponding time increments $F = \{(a_1, \delta_1), \ldots, (a_n, \delta_n)\}$, $0 < \delta_i \leq \mathrm{dur}(a_i)$. A plan $P$ achieves $s = (E, F)$ at time $t$ if $P$ makes all the atoms in $E$ true at $t$ and schedules the actions $a_i$ at time $t - \delta_i$. The initial search state is $s_0 = (G_P, \emptyset)$, where $G_P$ is the goal set of the planning problem. Final states are all $s = (E, \emptyset)$ such that $E \subseteq I_P$.

Branching Rule A successor to a state $s = (E, F)$ is constructed by selecting for each atom $p \in E$ an establisher (i.e., a regular action or no-op $a$ with $p \in \mathrm{add}(a)$), subject to the constraints that the selected actions are compatible with each other and with each action $b \in F$, and that at least one selected action is not a no-op. Let $SE$ be the set of selected establishers and let

$$F_{\mathrm{new}} = \{(a, \mathrm{dur}(a)) \mid a \in SE\}.$$

The new state $s' = (E', F')$ is defined as the atoms $E'$ that must be true and the actions $F'$ that must be executing before the last action in $F \cup F_{\mathrm{new}}$ begins. This will happen in a time increment $\delta_{\mathrm{adv}}$:

$$\delta_{\mathrm{adv}} = \min\{\delta \mid (a, \delta) \in F \cup F_{\mathrm{new}} \text{ and } a \text{ is not a no-op}\}$$

where no-op actions are excluded from consideration since they have variable duration (the meaning of the action no-op($p$) in $s$ is that $p$ has persisted in the last time slice). Setting the duration of no-ops in $F_{\mathrm{new}}$ equal to $\delta_{\mathrm{adv}}$, the state $s' = (E', F')$ that succeeds $s = (E, F)$ becomes

$$E' = \{\mathrm{pre}(a) \mid (a, \delta_{\mathrm{adv}}) \in F \cup F_{\mathrm{new}}\}$$

$$F' = \{(a, \delta - \delta_{\mathrm{adv}}) \mid (a, \delta) \in F \cup F_{\mathrm{new}},\ \delta > \delta_{\mathrm{adv}}\}$$

The cost of the transition from $s$ to $s'$ is $c(s, s') = \delta_{\mathrm{adv}}$ and the fragment of the plan tail that corresponds to the transition is $P(s, s') = (A, \delta_{\mathrm{adv}})$, where $A = \{a \mid (a, \delta_{\mathrm{adv}}) \in F \cup F_{\mathrm{new}}\}$. The accumulated cost (plan tail) along a state-path is obtained by adding up (concatenating) the transition costs (plan fragments) along the path. The accumulated cost of a state is the minimum cost along all the paths leading to $s$. The evaluation function used in the search algorithm adds up this cost and the heuristic cost defined below.

Properties The branching rule is sound in the sense that it generates only valid plans, but it does not generate all valid plans. This is actually a desirable feature.² It is optimality preserving in the sense that it generates some optimal plan. This, along with soundness, is all that is needed for optimality (provided an admissible search algorithm and heuristic are used).

² The plans generated are such that a regular action is executing during any given time interval and no-ops begin only at the times that some regular action starts. This is due to the way the temporal increments $\delta_{\mathrm{adv}}$ are defined. Completeness could be achieved by working on the rational time line and setting $\delta_{\mathrm{adv}}$ to the gcd of all action durations, but as mentioned above this is not needed for optimality.

Heuristic

As in previous work (Haslum and Geffner 2000), we derive an admissible heuristic by introducing approximations in the recursive formulation of the optimal cost function.

For any state $s = (E, F)$, the optimal cost is $H^*(s) = t$ iff $t$ is the least time $t$ such that there is a plan $P$ that achieves


s at t. The optimal cost function, $H^*$, is the solution to Bellman’s (1957) equation:

$$H^*(s) = \begin{cases} 0 & \text{if } s \text{ is final} \\ \min_{s' \in R(s)} c(s, s') + H^*(s') & \text{otherwise} \end{cases} \qquad (1)$$

where $R(s)$ is the regression set of $s$, i.e., the set of states that can be constructed from $s$ by the branching rule.

Approximations. Since equation (1) cannot be solved in practice, we derive a lower bound on $H^*$ by considering some inequalities. First, since a plan that achieves the state $s = (E, F)$, for $F = \{(a_i, \delta_i)\}$, at time $t$ must achieve the preconditions of the actions $a_i$ at time $t - \delta_i$ and these must remain true until $t$, we have

$$H^*(E, F) \geq \max_{(a_k, \delta_k) \in F} H^*\Big(\bigcup_{(a_i, \delta_i) \in F,\ \delta_i \geq \delta_k} \mathrm{pre}(a_i),\ \emptyset\Big) + \delta_k \qquad (2)$$

$$H^*(E, F) \geq H^*\Big(E \cup \bigcup_{(a_i, \delta_i) \in F} \mathrm{pre}(a_i),\ \emptyset\Big) \qquad (3)$$

Second, since achieving a set of atoms $E$ implies achieving each subset $E'$ of $E$ we also have

$$H^*(E, \emptyset) \geq \max_{E' \subseteq E,\ |E'| \leq m} H^*(E', \emptyset) \qquad (4)$$

where $m$ is any positive integer.

Temporal Heuristic $H_T^m$. We define a lower bound $H_T^m$ on the optimal function $H^*$ by transforming the above inequalities into equalities. A family of admissible temporal heuristics $H_T^m$ for arbitrary $m = 1, 2, \ldots$ is then defined by

$$H_T^m(E, \emptyset) = \begin{cases} 0 & \text{if } E \subseteq I_P \quad (5) \\ \min_{s' \in R(s = (E, \emptyset))} c(s, s') + H_T^m(s') & \text{if } |E| \leq m \quad (6) \\ \max_{E' \subseteq E,\ |E'| \leq m} H_T^m(E', \emptyset) & \text{if } |E| > m \quad (7) \end{cases}$$

and

$$H_T^m(E, F) = \max\Big[ \max_{(a_k, \delta_k) \in F} H_T^m\Big(\bigcup_{(a_i, \delta_i) \in F,\ \delta_i \geq \delta_k} \mathrm{pre}(a_i),\ \emptyset\Big) + \delta_k,\ \ H_T^m\Big(E \cup \bigcup_{(a_i, \delta_i) \in F} \mathrm{pre}(a_i),\ \emptyset\Big) \Big] \qquad (8)$$

The relaxation is a result of the last two equations; the first two are also satisfied by the optimal cost function. Unfolding the right-hand side of equation (6) using (8), the first two equations define the function $H_T^m(E, F)$ completely for $F = \emptyset$ and $|E| \leq m$. From an implementation point of view, this means that for a fixed $m$, $H_T^m(E, \emptyset)$ can be solved and precomputed for all sets of atoms with $|E| \leq m$, and equations (7) and (8) used at run time to compute the heuristic value of arbitrary states. The precomputation is a simple variation of a shortest-path problem and its complexity is a low order polynomial in $|A|^m$, where $|A|$ is the number of atoms.

For a fixed $m$, equation (6) can be simplified because only a limited set of states can appear in the regression set. For example, for $m = 1$, the state $s$ in (6) must have the form $s = (\{p\}, \emptyset)$ and the regression set $R(s)$ contains only states $s' = (\mathrm{pre}(a), \emptyset)$ for actions $a$ such that $p \in \mathrm{add}(a)$. As a result, for $m = 1$, (6) becomes

$$H_T^1(\{p\}, \emptyset) = \min_{a\,:\,p \in \mathrm{add}(a)} \mathrm{dur}(a) + H_T^1(\mathrm{pre}(a), \emptyset) \qquad (9)$$

The corresponding equations for $H_T^2$ can be found in our earlier workshop paper (Haslum and Geffner 2001).

Search Algorithm

Any admissible search algorithm, e.g., A*, IDA* or DFS branch-and-bound (Korf 1999), can be used with the search scheme described above to find optimal solutions.

The planner uses IDA* with some standard enhancements (cycle checking and a transposition table) and an optimality preserving pruning rule explained below. The heuristic used is $H_T^2$, precomputed for sets of at most two atoms as described above.

Incremental Branching In the implementation of the branching scheme, the establishers in $SE$ are not selected all at once. Instead, this set is constructed incrementally, one action at a time. After each action is added to the set, the cost of the resulting “partial” state is estimated so that dead-ends (states whose cost exceeds the bound) are detected early. A similar idea is used in GRAPHPLAN. In a temporal setting, things are a bit more complicated because no-ops have a duration ($\delta_{\mathrm{adv}}$) that is not fixed until the set of establishers is complete. Still, a lower bound on this duration can be derived from the regular actions selected so far and in the state being regressed.

Selecting the Atom to Regress The order in which atoms are regressed makes no difference for completeness, but does affect the size of the resulting search tree. We regress the atoms in order of decreasing “difficulty”: the difficulty of an atom $p$ is given by the estimate $H_T^2(\{p\}, \emptyset)$.

Right-Shift Pruning Rule In a temporal plan there are almost always some actions that can be shifted forward or backward in time without changing the plan’s structure or makespan (i.e., there is some “slack”). A right-shifted plan is one in which such movable actions are scheduled as late as possible.

As mentioned above, it is not necessary to consider all valid plans in order to guarantee optimality. In the implemented planner, non-right-shifted plans are excluded by the following rule: If $s'$ is a successor to $s = (E, F)$, an action $a$ compatible with all actions in $F$ may not be used to establish an atom in $s'$ when all the atoms in $E'$ that $a$ adds have been obtained from $s$ by no-ops. The reason is that $a$ could have been used to support the same atoms in $E$, and thus could have been shifted to the right (delayed).
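To make the precomputation of the heuristic described above concrete, the case m = 1 can be sketched in Python as a fixpoint iteration in the style of the Bellman-Ford shortest-path algorithm. This is a minimal sketch under stated assumptions and not the planner's own implementation: actions are passed as hypothetical `(dur, pre, add)` triples, no-ops are left out (at least one establisher must be a regular action, so a single atom is always established by a regular action), and the names `h1_table` and `h1` are invented for the example. The table stores the value of equation (9) for every atom, and larger atom sets are evaluated by maximizing over single atoms as in equation (7).

```python
import math


def h1_table(atoms, init, actions):
    """Compute the m = 1 estimate for every single atom.

    atoms:   iterable of atom names
    init:    set of atoms true in the initial state (estimate 0)
    actions: iterable of (dur, pre, add) triples with dur > 0
    """
    h = {p: (0.0 if p in init else math.inf) for p in atoms}
    changed = True
    while changed:  # repeat until no estimate can be lowered any further
        changed = False
        for dur, pre, add in actions:
            # Cost of the precondition set: the most expensive single atom.
            cost = dur + max((h[q] for q in pre), default=0.0)
            for p in add:
                if cost < h[p]:
                    h[p] = cost
                    changed = True
    return h


def h1(h, atom_set):
    """Estimate for a set of atoms: maximum over its single atoms."""
    return max((h[p] for p in atom_set), default=0.0)
```

Because all durations are positive, estimates only decrease and the loop terminates; atoms that no action sequence can establish keep the value infinity.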

Planning with Resources

Next, we show how the planning algorithm deals with renewable and consumable resources.


Renewable Resources

provided as part of the domain definition and is set to 0 by

default. Since the duration of a no-op is variable, we interpret use(maintain(p), r) as the rate of consumption. For

the rest, maintenance actions are treated as regular actions,

and no other changes are needed in the planning algorithm.3

Renewable resources limit the set of actions that can be executed concurrently and therefore need to enter the planning

algorithm only in the branching rule. When regressing a

state s = (E, F ), we must have that

X

use(ai , r) 6 avail(r)

(10)

Experimental Results

(ai ,δi )∈F ∪Fnew

We have implemented the algorithm for planning with time

and resources described above, including maintenance actions but with the restriction that consumable resources are

monotonically decreasing, in a planner called TP 4.4 The

planner uses IDA∗ with some standard enhancements and

the HT2 heuristic. The resource consumption estimators consider only single atoms.

for every renewable resource r ∈ RP .

Heuristic The HTm heuristics remain admissible in the

presence of renewable resources, but in order to get better lower bounds we exclude from the regression set any

set of actions that violates a resource constraint. For unary

resources (capacity 1) this heuristic is informative, but for

multi-capacity resources it tends to be weak.

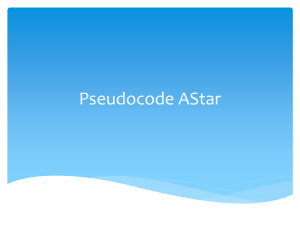

Non-Temporal Planning

First, we compare TP 4 to three optimal parallel planners,

IPP , BLACKBOX and STAN , on standard planning problems

without time or resources. The test set comprises 60 random

problems from the 3-operator blocksworld domain, ranging

in size from 10 to 12 blocks, and 40 random logistics problems with 5 – 6 deliveries. Blocksworld problems were generated using Slaney & Thiebaux’s (2001) BWSTATES program.

Figure 1(a) presents the results in the form of runtime distributions. Clearly TP 4 is not competitive with non-temporal

planners, which is expected considering the overhead involved in handling time. Performance in the logistics domain, however, is very poor (e.g., TP 4 solves less than 60%

of the problems within 1000 seconds, while all other planners solve 90% within only 100 seconds), indicating that

other causes are involved (most likely the branching rule,

see below).

Consumable Resources

To ensure that resources are not over-consumed, a state s

must contain the remaining amount of each consumable resource r. For the initial state, this is rem(s0 , r) = avail(r),

and for a state s0 resulting from s

X

use(ai , r)

(11)

rem(s0 , r) = rem(s, r) −

(ai ,ti )∈Fnew

for each r ∈ CP .

Heuristic The heuristics HTm remain admissible in the

presence of consumable resources, but become less useful

since they predict completion time but not conflicts due to

overconsumption. If, however, consumable resources are restricted to be monotonically decreasing (i.e., consumed but

not produced), then a state s can be pruned if the amount of

any resource r needed to achieve s from the initial situation

exceeds rem(s, r), the amount remaining in s. The amount

needed is estimated by a function needm (s, r) defined in a

way analogous to the function HTm (s) that estimates time.

The planner implements need1 (s, r).

Because resource consumption is treated separately from

time, this solution is weak when the time and resources

needed to achieve a goal interact in complex ways. The

HTm estimator considers only the fastest way of achieving

the goal regardless of resource cost, while the needm estimator considers the cheapest way to achieve the goal regardless of time (and other resources). To overcome this

problem, the estimates of time and resources would have to

be integrated, as in, for example, Refanidis’ and Vlahavas’

(2000) work. Integrated estimates could also be used to optimize some combination of time and resources, as opposed

to time alone.

Temporal Planning

To test TP 4 in a temporal planning domain, we make a small

extension to the logistics domain5 , in which trucks are allowed to drive between cities as well as within and actions

are assigned durations as follows:

Action

Load/Unload

Drive truck (between cities)

Drive truck (within city)

Fly airplane

Duration

1

12

2

3

3

This treatment of maintenance actions is not complete in general. Recall that the branching rule does not generate all valid

plans: in the presence of maintenance actions it may happen that

some of the plans that are not generated demand less resources than

the plans that are. When this happens, the algorithm may produce

non-optimal plans or even fail to find a plan when one exists. This

is a subtle issue that we will address in the future.

4

TP 4 is implemented in C. Planner, problems, problem generators and experiment scripts are available from the authors. Experiments were run on a Sun Ultra 10.

5

The goal in the logistics domain is to transport a number of

packages between locations in different cities. Trucks are used for

transports within a city and airplanes for transports between cities.

The standard domain is available, e.g., as part of the AIPS 2000

Competition set .

Maintenance Actions

In planning, it is normally assumed that no explicit action is

needed to maintain the truth of a fact once it has been established, but in many cases this assumption is not true. We

refer to no-ops that consume resources as maintenance actions. Incorporating maintenance actions in the branching

scheme outlined above is straightforward: For each atom p

and each resource r, a quantity use(maintain(p), r) can be


[Figure 1: runtime-distribution plots. (a) Two panels, “Blocksworld” and “Logistics”, with curves for TP4, IPP, Blackbox and STAN; x-axis: Time (seconds), logarithmic; y-axis: Problems solved (%). (b) One panel with curves for TP4, TGP (normal memo), TGP (int. backtrack) and Sapa; x-axis: Runtime (seconds), 0 to 1000; y-axis: Problems solved (%).]

Figure 1: (a) Runtime distributions for TP4 and optimal non-temporal parallel planners on standard planning problems. A point ⟨x, y⟩ on the curve indicates that y percent of the problems were solved in x seconds or less. Note that the time axis is logarithmic. (b) Runtime distributions for TP4, TGP and Sapa on problems from the simple temporal logistics domain. Sapa’s solution makespan tends to be around 1.25 – 2.75 times optimal.
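A runtime distribution of the kind shown in Figure 1 can be derived from a list of per-problem runtimes. The following Python sketch illustrates the definition of a point ⟨x, y⟩ given in the caption. The function name, its parameters and the convention of listing only the solved problems are assumptions made for the example, and the numbers in the usage comment below are made up rather than taken from the experiments.

```python
def runtime_distribution(runtimes, num_problems, time_points):
    """Return points (x, y): y percent of the problems solved in x seconds or less.

    runtimes:     solve times in seconds, one entry per solved problem
    num_problems: size of the whole test set, including unsolved problems
    time_points:  x values at which the curve is sampled
    """
    points = []
    for x in time_points:
        solved = sum(1 for r in runtimes if r <= x)
        points.append((x, 100.0 * solved / num_problems))
    return points
```

For a hypothetical test set of five problems of which four are solved, in 0.5, 3, 40 and 700 seconds, sampling the curve at 1, 10, 100 and 1000 seconds gives 20, 40, 60 and 80 percent; the curve never reaches 100 percent because one problem remains unsolved.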

This is a simple example of a domain where makespan-minimal plans tend to be different from minimal-step parallel plans.

For comparison, we ran also TGP and Sapa.⁶ The test set comprised 80 random problems with 4 – 5 deliveries. Results are in figure 1(b). We ran two versions of TGP, one using plain GRAPHPLAN-like memoization and the other minimal conflict set memoization and “intelligent backtracking” (Kambhampati 2000). TP4 shows a behaviour similar to the plain version of TGP, though somewhat slower. As the top curve shows, the intelligent backtracking mechanism is very effective in this domain (this was indicated also by Kambhampati, 2000).

⁶ We did not run Sapa ourselves. Results were provided by Minh B. Do, and were obtained using a different, but approximately equivalent, computer.

Planning with Time and Resources

Finally, for a test domain involving both time and non-trivial resource constraints we have used a scheduling problem, called multi-mode resource constrained project scheduling (MRCPS) (Sprecher and Drexl 1996). The problem is to schedule a set of tasks and to select for each task a mode of execution so as to minimize project makespan, subject to precedence constraints among the tasks and global resource constraints. For each task, each mode has different duration and resource requirements. Resources include renewable and (monotonically decreasing) consumable. Typically, modes represent different trade-offs between time and resource use, or between use of different resources. This makes finding optimal schedules very hard, even though the planning aspect of the problem is quite simple.

The test comprised sets of problems with 12, 14 and 16 tasks and approximately 550 instances in each (sets J12, J14 and J16 from PSPLib). A specialized scheduling algorithm solves all problems in the set, the hardest in just below 300 seconds (Sprecher and Drexl 1996). TP4 solves 59%, 41% and 31%, respectively, within the same time limit.

Conclusions

We have developed an optimal, heuristic search planner that handles concurrent actions, time and resources, and minimizes makespan. The two main issues we have addressed are the formulation of an admissible heuristic estimating completion time and a branching scheme for actions with durations. In addition, the planner incorporates an admissible estimator for consumable resources that allows more of the search space to be avoided. Similar ideas can be used to optimize a combination of time and resources as opposed to time alone.

The planner achieves a tradeoff between performance and expressivity. While it is not competitive with either the best parallel planners or specialized schedulers, it accommodates problems that do not fit into either class. An approach for improving performance that we plan to explore in the future is the combination of the lower bounds provided by the admissible heuristics $H_T^m$ with a different branching scheme.

Acknowledgments

We’d like to thank David Smith for much help with TGP, and Minh Binh Do for providing results for Sapa. This research has been supported by the Wallenberg Foundation and the ECSEL/ENSYM graduate study program.


References

2000. AIPS competition. http://www.cs.toronto.edu/aips2000/.

Bellman, R. 1957. Dynamic Programming. Princeton University Press.

Blum, A., and Furst, M. 1997. Fast planning through graph analysis. Artificial Intelligence 90(1-2):281–300.

Bonet, B., and Geffner, H. 2001. Planning as heuristic search. Artificial Intelligence 129(1-2):5–33.

Do, M., and Kambhampati, S. 2001. Sapa: A domain-independent heuristic metric temporal planner. In Proc. 6th European Conference on Planning (ECP’01), 109–120.

Fukunaga, A.; Rabideau, G.; Chien, S.; and Yan, D. 1997. ASPEN: A framework for automated planning and scheduling of spacecraft control and operations. In Proc. International Symposium on AI, Robotics and Automation in Space.

Garrido, A.; Onaindia, E.; and Barber, F. 2001. Time-optimal planning in temporal problems. In Proc. 6th European Conference on Planning (ECP’01), 397–402.

Ghallab, M., and Laruelle, H. 1994. Representation and control in IxTeT, a temporal planner. In Proc. 2nd International Conference on AI Planning Systems (AIPS’94), 61–67.

Haslum, P., and Geffner, H. 2000. Admissible heuristics for optimal planning. In Proc. 5th International Conference on Artificial Intelligence Planning and Scheduling (AIPS’00), 140–149. AAAI Press.

Haslum, P., and Geffner, H. 2001. Heuristic planning with time and resources. In Proc. IJCAI Workshop on Planning with Resources. http://www.ida.liu.se/~pahas/hsps/.

Hoffmann, J. 2000. A heuristic for domain independent planning and its use in an enforced hill-climbing algorithm. In Proc. 12th International Symposium on Methodologies for Intelligent Systems (ISMIS’00), 216–227.

Jonsson, A.; Morris, P.; Muscettola, N.; Rajan, K.; and Smith, B. 2000. Planning in interplanetary space: Theory and practice. In Proc. 5th International Conference on Artificial Intelligence Planning and Scheduling (AIPS’00), 177–186.

Kambhampati, S. 2000. Planning graph as a (dynamic) CSP: Exploiting EBL, DDB and other CSP search techniques in Graphplan. Journal of AI Research 12:1–34.

Koehler, J. 1998. Planning under resource constraints. In Proc. 13th European Conference on Artificial Intelligence (ECAI’98), 489–493.

Korf, R. 1999. Artificial intelligence search algorithms. In Handbook of Algorithms and Theory of Computation. CRC Press. chapter 36.

Kvarnström, J., and Doherty, P. 2000. TALplanner: A temporal logic based forward chaining planner. Annals of Mathematics and Artificial Intelligence 30(1):119–169.

Lever, J., and Richards, B. 1994. parcPLAN: A planning architecture with parallel actions, resources and constraints. In Proc. 9th International Symposium on Methodologies for Intelligent Systems (ISMIS’94), 213–222.

Muscettola, N. 1994. Integrating planning and scheduling. In Zweben, M., and Fox, M., eds., Intelligent Scheduling. Morgan-Kaufmann.

Penberthy, J., and Weld, D. 1994. Temporal planning with continuous change. In Proc. 12th National Conference on Artificial Intelligence (AAAI’94), 1010–1015.

PSPLib: The project scheduling problem library. http://www.bwl.uni-kiel.de/Prod/psplib/library.html.

Refanidis, I., and Vlahavas, I. 2000. Heuristic planning with resources. In Proc. 14th European Conference on Artificial Intelligence (ECAI’00), 521–525.

Slaney, J., and Thiebaux, S. 2001. Blocks world revisited. Artificial Intelligence 125. http://users.cecs.anu.edu.au/~jks/bw.html.

Smith, D., and Weld, D. 1999. Temporal planning with mutual exclusion reasoning. In Proc. 16th International Joint Conference on Artificial Intelligence (IJCAI’99), 326–333.

Sprecher, A., and Drexl, A. 1996. Solving multi-mode resource-constrained project scheduling problems by a simple, general and powerful sequencing algorithm. I: Theory & II: Computation. Technical Report 385 & 386, Institut für Betriebswirtschaftslehre der Universität Kiel.

Tate, A.; Drabble, B.; and Dalton, J. 1996. O-Plan: a knowledge-based planner and its application to logistics. In Advanced Planning Technology. AAAI Press.
