Team Familiarity, Role Experience, and Performance: Evidence from Indian Software Services

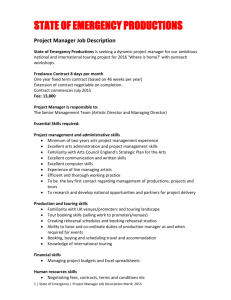
