TDDB68

Concurrent programming

and operating systems

Synchronization

[SGG 7e, 8e, 9e] Chapter 6

Contents

The Critical-Section Problem
  Disable interrupts – for small critical sections and 1 CPU
  Peterson’s Solution for 2 processes
Hardware Support for Synchronization
  Atomic TestAndSet, Atomic Swap
Semaphores and Locks
  Why and How to Avoid Busy Waiting
Classic Problems for Studying Synchronization
  Bounded Buffer, Readers/Writers, Dining Philosophers
Monitors
Condition variables
Atomic Transactions

Copyright Notice: The lecture notes are partly based on Silberschatz’s, Galvin’s and Gagne’s book (“Operating System Concepts”, 7th ed., Wiley, 2005). No part of the lecture notes may be reproduced in any form, due to the copyrights reserved by Wiley. These lecture notes should only be used for internal teaching purposes at Linköping University.

Christoph Kessler, IDA,
Linköpings universitet

The Synchronization Problem

Concurrent access to shared data (with at least one update) may result in data inconsistency
  See non-updated or only partly updated data structures
  And nondeterminism – result may depend on relative speed of processes involved (”race conditions”)
Maintaining data consistency requires mechanisms to ensure the orderly execution of cooperating processes
Concerns both processes sharing memory and threads
  (in the following we only write ”processes”, for brevity)

Example: Producer-Consumer Problem (cf. Lecture 3)

[Figure: Producer and Consumer connected by a buffer, with indices in and out]

Add a counting mechanism to keep track of the number of items in the buffer.
  A shared integer variable count
  Initially, count is set to 0.
  Producer increments count after it produces a new item
  Consumer decrements count after it consumes an item.

Producer/Consumer with Counter

Added shared integer variable count, initialized to 0, in order to count #items in buffer

Producer:
    while (true) {
        // produce an item and put in nextProduced
        while (count == BUFFER_SIZE)
            ;   // do nothing
        buffer[in] = nextProduced;
        in = (in+1)%BUFFER_SIZE;
        count++;
    }

Consumer:
    while (1) {
        while (count == 0)
            ;   // do nothing
        nextConsumed = buffer[out];
        out = (out + 1) % BUFFER_SIZE;
        count--;
        // … consume item in nextConsumed
    }

Race Conditions lead to Nondeterminism

count++ could be implemented in machine code as
    39: register1 = count              (load r1, _count)
    40: register1 = register1 + 1      (add r1, r1, #1)
    41: count = register1              (store _count, r1)
Not atomic!

count-- could be implemented as
    22: register2 = count
    23: register2 = register2 - 1
    24: count = register2

Consider this execution interleaving, with “count = 5” initially:
    39: producer executes register1 = count           { register1 = 5 }
    40: producer executes register1 = register1 + 1   { register1 = 6 }
        (context switch)
    22: consumer executes register2 = count           { register2 = 5 }
    23: consumer executes register2 = register2 - 1   { register2 = 4 }
        (context switch)
    41: producer executes count = register1           { count = 6 }
        (context switch)
    24: consumer executes count = register2           { count = 4 }

Compare to a different interleaving, e.g., 39,40,41,switch,22,23,24…


Critical Section

Critical Section: A set of instructions, operating on shared data or resources, that should be executed by a single process at a time without interruption
  Atomicity of execution
  Mutual exclusion: At most one process should be allowed to operate inside at any time
  Consistency: inconsistent intermediate states of shared data not visible to other processes outside
May consist of different program parts for different processes
  Ex: the producer and consumer code accessing count together form 1 critical section
General structure, with structured control flow:
    ...
    Entry of critical section C
    … critical section C: operation on shared data
    Exit of critical section C
    …

Requirements on a Solution to the Critical-Section Problem

1. Mutual Exclusion
   If process Pi is executing in critical section C, then no other processes can be executing in C (accessing the same shared data/resource).
   Absence of race conditions in C
2. Progress
   If no process is executing in critical section C and there exist some processes that wish to enter C, then the selection of the process that will enter C next cannot be postponed indefinitely.
   Absence of deadlocks
3. Bounded Waiting
   A bound must exist on the number of times that other processes are allowed to enter critical section C after a process has made a request to enter C and before that request is granted.
   Fairness of admission to C
Assume that each process executes at a nonzero speed
No assumption concerning relative speed of the N processes

A First Attempt…

For 2 processes P0 P1
Assumes that Load and Store execute atomically

Shared variables:
    boolean flag[2] = { false, false };
    flag[i] == TRUE: Pi ready to enter its critical section, for i = 0, 1

Code for process Pi (Notation: j = 1 – i ):
    do {
        flag[i] = TRUE;
        while (flag[j]) ;
        … critical section
        flag[i] = FALSE;
        … remainder section
    } while (TRUE);

Satisfies mutual exclusion, but not progress requirement.
    P0 sets flag[0]=TRUE; context switch; P1 sets flag[1]=TRUE; … both processes now spin in their while loops forever (infinite loop)

Peterson’s Solution

For 2 processes P0 P1
Assumes that Load and Store instructions execute atomically

Shared variables:
    int turn;
    boolean flag[2] = { false, false };
The variable turn indicates whose turn it is to enter the critical section.
flag[i] = true: process Pi is ready to enter, for i=0,1.

Code for process Pi ( j = 1 – i ):
    do {
        flag[i] = TRUE;
        turn = j;
        while ( flag[j] && turn == j) ;
        …. CRITICAL SECTION
        flag[i] = FALSE;
        …. REMAINDER SECTION
    } while (TRUE);

Hardware Support for Synchronization

Most systems provide hardware support for protecting critical sections
Uniprocessors – could disable interrupts
  Currently running code would execute without preemption
  Generally too inefficient on multiprocessor systems
  Operating systems using this are not broadly scalable
Modern machines provide special atomic instructions
  TestAndSet: test memory word and set value atomically
  Swap: swap contents of two memory words atomically
  Atomic = non-interruptible
If multiple TestAndSet or Swap instructions are executed simultaneously (each on a different CPU in a multiprocessor), then they take effect sequentially in some arbitrary order.

TestAndSet Instruction

Definition in pseudocode:

    boolean TestAndSet (boolean *target)
    {                              // body executes atomically
        boolean rv = *target;
        *target = TRUE;
        return rv;                 // return the OLD value
    }

Solution using TestAndSet

Shared boolean variable lock, initialized to FALSE (= unlocked)

    do {
        while ( TestAndSet (&lock) )
            ;   // do nothing but spinning on the lock (busy waiting)
        // … critical section
        lock = FALSE;
        // … remainder section
    } while (TRUE);

Swap Instruction

Definition in pseudocode:

    void Swap (boolean *a, boolean *b)
    {                              // body executes atomically
        boolean temp = *a;
        *a = *b;
        *b = temp;
    }

Solution using Swap

Shared Boolean variable lock initialized to FALSE;
Each process has a local Boolean variable tmp.

    do {
        tmp = TRUE;
        while ( tmp == TRUE )
            Swap (&lock, &tmp);    // busy waiting…
        // critical section
        lock = FALSE;
        // remainder section
    } while (TRUE);

Semaphore

Semaphore S:
  shared integer variable
  two standard atomic operations to modify S: wait() and signal(), originally called P() and V()
  Provides mutual exclusion

    wait (S) {
        while (S <= 0)
            ;                      // busy waiting…
        S--;
    }

    signal (S) {                   // atomic
        S++;
    }

Semaphores and Locks

Counting semaphore – integer value, increment/decrement
Binary semaphore – value in { 0, 1 } resp. { FALSE, TRUE };
  can be simpler to implement (e.g., using TestAndSet)
  Also known as (mutex) locks
  Called spinlocks if using busy waiting
  Can implement a counting semaphore S using a lock
Provides mutual exclusion:
    Semaphore S;    // initialized to 1
    wait (S);
    … Critical Section code
    signal (S);

Semaphore Implementation using a Lock

Must guarantee that no two processes can execute wait(S) and signal(S) on the same semaphore S at the same time
Thus, implementation becomes the critical section problem where the wait and signal code are placed in the critical section.
  Protect this critical section by a mutex lock L.

Busy Waiting?

Up to now, we used busy waiting in critical section implementations (spinning on semaphore / lock)
  Mostly OK on multiprocessors
  OK if critical section is very short and rarely occupied
  But accesses shared memory frequently …
For longer critical sections or high congestion, waste of CPU time
  As applications may spend lots of time in critical sections, this is not a good solution.

Eliminate Busy Waiting

With each semaphore there is an associated waiting queue.
Each entry in a waiting queue contains:
  Process table index, e.g. pid
  Pointer to next entry
Two operations:
  block – place the process invoking the operation on the appropriate waiting queue.
  wakeup – remove one of the processes in the waiting queue and place it in the ready queue.

Semaphore Implementation w/o Busy Waiting

    typedef struct { int value; struct process *wqueue; } semaphore;

    void wait ( semaphore *S ) {
        S->value--;
        if (S->value < 0) {
            add this process to S->wqueue;
            block();    // I release the lock on the critical section for S …
                        // … and release the CPU
        }
    }

    void signal ( semaphore *S ) {
        S->value++;
        if (S->value <= 0) {
            remove a process P from S->wqueue;
            wakeup (P);    // append P to ready queue
        }
    }

Synchronization with a Semaphore

Initially:
    S->value = 1;       // for 1 resource to protect
    S->wqueue = NULL;
Semaphore queue administration routines on S->wqueue: add, remove, block(), wakeup()

    wait:    S->value--;
             if (S->value < 0)
                 { add( me, S->wqueue ); block(); }

             CRITICAL SECTION protected by semaphore S

    signal:  S->value++;
             if (S->value <= 0)
                 { P = remove( S->wqueue ); wakeup(P); }

Implicit critical section for the accesses to S (atomic by implem. of wait and signal)
Remark: If S->value is negative, its magnitude is the number of threads waiting in S->wqueue

Problems: Deadlock and Starvation

Deadlock – two or more processes are waiting indefinitely for an event that can be caused only by one of the waiting processes
Typical example: Nested critical sections
  Guarded by semaphores S and Q, initialized to 1

    time    P0              P1
      |     wait (S);       wait (Q);
      |     wait (Q);       wait (S);
      |     …               …
      |     signal (S);     signal (Q);
      v     signal (Q);     signal (S);

Starvation – indefinite blocking. A process may never be removed from the semaphore queue in which it is suspended.

Classical Problems of Synchronization

Bounded-Buffer Problem
Readers and Writers Problem
Dining-Philosophers Problem

Bounded-Buffer Problem

[Figure: Producer and Consumer connected by a buffer, with indices in and out]

Buffer with space for N items
Semaphore mutex initialized to the value 1
  Protects accesses to out, in, buffer
Semaphore full initialized to the value 0
  Counts number of filled buffer places
Semaphore empty initialized to the value N
  Counts number of free buffer places

Bounded Buffer

Producer:
    do {
        // … produce an item
        wait (empty);
        wait (mutex);
        // … add item to buffer
        signal (mutex);
        signal (full);
    } while (true);

Consumer:
    do {
        wait (full);       // atomic decrement; block if no place filled (all empty)
        wait (mutex);
        // … remove an item from buffer
        signal (mutex);
        signal (empty);    // atomic incr.
        // … consume the removed item
    } while (true);

Readers-Writers Problem

Variant 1: Priority for readers

W

W

W

R

R

No writer

waiting

Reader inside? Reader can

enter, bypassing waiting writers:

W

W

W

R

R

R

R

R

R

R

R

R

Readers may starve.

Writers may starve.

C. Kessler, IDA, Linköpings universitet.

27

Readers – only read accesses, no updates

Writers – can both read and write.

Relaxed Mutual Exclusion Problem:

No writer

waiting

Reader inside? Waiting writers

have priority:

W

R

TDDB68 Concurrent Programming and Operating Systems

2 types of processes accessing a shared data set:

R

W

} while (true);

26

Readers-Writers Problem

Variant 2: Priority for writers

R

C. Kessler, IDA, Linköpings universitet.

TDDB68 Concurrent Programming and Operating Systems

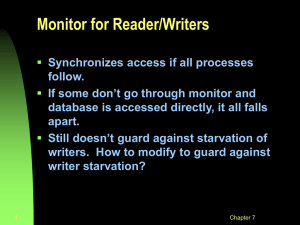

Readers-Writers Problem (Cont.)

allow either multiple readers or one single writer

to access the shared data at the same time.

Solution: use

Semaphore mutex initialized to 1.

// to protect readcount

Semaphore wrt initialized to 1.

// 0: someone inside

Integer readcount initialized to 0.

C. Kessler, IDA, Linköpings universitet.

28

// count readers inside

TDDB68 Concurrent Programming and Operating Systems

Readers-Writers Problem (Cont.)

The structure of a writer process:
    do {
        wait (wrt) ;            // writers queue on wrt
        // … writing is performed
        signal (wrt) ;
    } while (true);

The structure of a reader process:
    do {
        wait (mutex) ;
        readcount ++ ;
        if (readcount == 1) wait (wrt) ;    // first reader queues on wrt,
        signal (mutex) ;                    // and the others on mutex.
        // … reading is performed
        wait (mutex) ;
        readcount - - ;
        if (readcount == 0) signal (wrt) ;
        signal (mutex) ;
    } while (true);

This solution favors the readers. Writers may starve.

Dining-Philosophers Problem

Shared data
  Bowl of rice (data set)
  Array of 5 semaphores chopstick [5], all initialized to 1

The structure of Philosopher i:
    do {
        wait ( chopstick[ i ] );
        wait ( chopstick[ (i + 1) % 5] );
        // … eat …
        signal ( chopstick[ i ] );
        signal ( chopstick[ (i + 1) % 5] );
        // … think …
    } while (true) ;

3+1 Deadlock-Free Solutions of the Dining-Philosophers Problem

Allow at most four philosophers to be sitting simultaneously at the table
Allow a philosopher to pick up her/his chopsticks only if both chopsticks are available (both in the same critical section)
Odd philosophers pick up left chopstick first; even philosophers pick up right chopstick first (Asymmetric solution)
In Fork: Represent chopsticks as bits in a bitvector (integer) and use bit-masked atomic operations to grab both chopsticks simultaneously. Works for up to bitsizeof(int) philosophers.
  [Keller, K., Träff: Practical PRAM Programming, Wiley 2001]

Pitfalls with Semaphores

Correct use of semaphore operations:
  Protect all possible entries/exits of control flow into/from critical section:
    wait (mutex) …. signal (mutex)
Possible sources of synchronization errors:
  Omitting wait (mutex) or signal (mutex) (or both) ??
  wait (mutex) …. wait (mutex) ??
  wait (mutex1) …. signal (mutex2) ??
  Multiple semaphores with different orders of wait() calls ??
    Example: Each philosopher first grabs the chopstick to its left: risk for deadlock!

Monitors

Monitor: high-level abstraction à la OOP that provides a convenient and effective mechanism for process synchronization
Encapsulate shared data, protecting semaphore, and all operations in a single abstract data type
Only one process may be active within the monitor at a time

    monitor monitor-name
    {
        … // shared variable declarations
        initialization ( ….) { … }
        procedure P1 (…) { …. }
        …
        procedure Pn (…) {……}
    }

In Java realized as classes with synchronized methods

Conditional Critical Sections

  Condition Variables
  Conditions with mutex locks
  Conditions with monitors

Condition Variables

condition c;
  Associated with a critical section (mutex-protected region or monitor)
  Waiting room (e.g. process queue) associated with each condition variable
Operations on a condition variable c:
  wait(c) or c.wait ()
    – process/thread that invokes the operation is suspended (and releases the mutex guarding the critical section)
  signal(c) or c.signal ()
    – unblocks one of the processes/threads (if any) that invoked wait (c)
  signal_all(c) – unblocks all processes/threads waiting for c

Condition variable with mutex lock

Condition must be associated with a specific mutex-lock

    // shared:
    int ct = ... ;          // counter
    mutex m;
    condition ct_zero;      // models event that ct is zero
    ...
    void main(...) { ...
        // ... create several threads repeatedly calling decr, incr, and (1) guard_zero
    }

    void incr()
    { ...
        lock( m );
        ct ++;
        unlock( m );
        ...
    }

    void decr()
    { ...
        lock( m );
        ct --;
        if (ct == 0)
            signal( ct_zero );
        unlock( m );
    }

    void guard_zero()
    { ...
        lock( m );
        while ( ct != 0 )
            wait ( ct_zero, m );
        unlock( m );
        take_action();
    }

    wait ( c, m ) {
        add (me, c->queue);
        unlock(m);
        block();
        lock(m);
        return;
    }

Condition variable with mutex lock: What about using if instead of while here?

    void guard_zero()
    { ...
        lock( m );
        if ??? ( ct != 0 )
            wait ( ct_zero, m );
        unlock( m );
        take_action();
    }

Not OK – condition needs to be reevaluated after lock m has been re-acquired by the awoken guard thread
Ex:
  T2: decr: ct 1 → 0, signal(ct_zero), unlock(m)
  but before guard-thread T3 can get the lock:
  T1: incr: ... acquire m, set ct 0 → 1, unlock(m)
  T3: guard_zero: ... acquire m, but now ct==1, aargh!
Double-checking conditions is recommended!

The example in pthreads

Condition must be associated with a specific mutex-lock

    // shared:
    int ct = ... ;             // counter
    pthread_mutex_t m;
    pthread_cond_t ct_zero;    // models event that ct is zero
    ...
    void main(...) { ...
        pthread_mutex_init( &m, NULL );
        pthread_cond_init( &ct_zero, NULL);
        // ... create several pthreads repeatedly calling decr, incr, and guard_zero
    }

    void incr()
    { ...
        pthread_mutex_lock(&m);
        ct ++;
        pthread_mutex_unlock(&m);
        ...
    }

    void decr()
    { ...
        pthread_mutex_lock(&m);
        ct --;
        if (ct == 0)
            pthread_cond_signal(&ct_zero);
        pthread_mutex_unlock(&m);
    }

    void guard_zero()
    { ...
        pthread_mutex_lock(&m);
        while ( ct != 0 )
            pthread_cond_wait( &ct_zero, &m);
        pthread_mutex_unlock(&m);
        take_action(); ... }

Conditions in pthreads

pthread_cond_signal( &c )
  cf. signal, Java notify
  wakes up 1 thread waiting for c
pthread_cond_broadcast( &c )
  cf. signal_all, Java notifyAll
  wakes up all threads waiting for c
pthread_cond_wait( &c, &m )
pthread_cond_timedwait( &c, &m, abstime )    wait with timeout
Signals are not remembered
  threads must already be waiting for a signal to receive it
pthread_cond_signal(&c) or pthread_cond_broadcast(&c) must be within the mutex-protected region
  otherwise, e.g. a guard thread may miss a signal between (ct!=0) and pthread_cond_wait()

Monitor with Condition Variables

[Figure: monitor with the waiting queue of a condition variable x]

The mutex lock is implicit in the monitor construct

Monitor with Condition Variables

Awoken process(es)/thread(s) must re-apply for entry into the monitor.
Two scenarios:
  Signal and wait:
    signal’ing thread steps aside for resumed thread
  Signal and continue:
    signal’ing thread continues while resumed thread waits for signal’er (and then possibly for further conditions)

Example: Monitor with condition variable

    monitor {
        // Simple resource allocation monitor in pseudocode
        boolean inUse = false;     // simple shared state variable
        condition available;       // condition variable

        initialization { inUse = false; }

        procedure getResource ( ) {
            while (inUse) wait( available );    // wait until available is signaled
            inUse = true;                       // resource is now in use
        }

        procedure returnResource ( ) {
            inUse = false;
            signal( available );    // signal a waiting thread to proceed
        }
    }

Exercise: Generalize this to a monitor administrating a countable resource (e.g. a set of fixed-sized memory blocks – getResource(k) allocates k blocks).

Monitor Solution to Dining Philosophers

    monitor DP {
        enum { THINKING, HUNGRY, EATING } state [5] ;
        condition self [5];

        void pickup ( int i ) {
            state[i] = HUNGRY;
            test ( i );
            if (state[i] != EATING)
                self [i].wait();
        }

        void putdown ( int i ) {
            state[i] = THINKING;
            test((i+4)%5);    // left neighbor
            test((i+1)%5);    // right neighbor
        }

        void test ( int i ) {
            if (    (state[(i+4)%5] != EATING)
                 && (state[i] == HUNGRY)
                 && (state[(i+1)%5] != EATING) ) {
                state[i] = EATING ;
                self[i].signal () ;
            }
        }

        initialization_code() {
            for (int i = 0; i < 5; i++)
                state[i] = THINKING;
        }
    }

Synchronization API Examples

Pthreads
Solaris
Windows XP
Linux
Java threads
see [SGG7/8] 6.8 and pp. 238-241 (7e) / pp. 276-280 (8e)

Atomic Transactions

Transactional Programming
Transactional Memory
(Short introduction)

Atomic Transactions, Serializability

Serialization (in some arbitrary order, dep. on scheduler) implies race-free-ness, but not vice versa.
Serialization is overly restrictive.
Non-serialized execution can still be correct if it is serializable, i.e. could be converted into serialized form by swapping non-conflicting memory accesses
Two memory accesses are in conflict if both access the same data item and at least one of them is a write access.

Example: Shared variables A, B; transactions T0, T1 e.g. { a=A=..A..; B=..B..a..; }

Serialized as T0T1, e.g. using a mutex lock:
    T0:          T1:
    …            …
    read(A)
    write(A)
    read(B)
    write(B)
                 read(A)
                 write(A)
                 read(B)
                 write(B)

Concurrent, but serializable as T0T1 [SGG7/8 Sec. 6.9.4.1]:
    T0:          T1:
    …            …
    read(A)
    write(A)
                 read(A)
                 write(A)
    read(B)
    write(B)
                 read(B)
                 write(B)

Incorrect – not serializable, not atomic:
    T0:          T1:
    …            …
    read(A)
                 read(A)
    write(A)
                 write(A)
    read(B)
    write(B)
                 read(B)
                 write(B)

Atomic Transactions

For atomic computations on multiple shared memory words
Abstracts from locking and mutual exclusion
  coarse-grained locking does not scale
  declarative rather than hardcoded atomicity
  enables lock-free concurrent data structures
Transaction either commits or fails (then to be repeated)

Transactional Memory / Transactional Programming
  Variant 1: atomic { ... } marks transactional code
  Variant 2: special transactional instructions
    e.g. LT, LTX, ST; COMMIT; ABORT
  Implem.: Speculate on serializability of unprotected execution, track time-stamped values of memory cells, and roll-back+retry transaction if speculation was wrong
    Software transactional memory (high overhead)
    Hardware TM, implemented e.g. as extension of cache coherence protocols [Herlihy,Moss’93], Sun Rock processor

STM Example: Lock-based vs. Transactional Map (Hashtable) based Data Structure

    class LockBasedMap implements Map
    {
        Object mutex;
        Map m;

        LockBasedMap (Map m) {
            this.m = m;
            mutex = new Object();
        }

        public Object get() {
            synchronized (mutex) {
                return m.get();
            }
        }
        // other Map methods. . .
    }

Coarse-grained locking à la Java ”synchronized” does not scale up

    class AtomicMap implements Map
    {
        Map m;

        AtomicMap (Map m) {
            this.m = m;
        }

        public Object get() {
            atomic {
                return m.get();
            }
        }
        // other Map methods. . .
    }

Source: A. Adl-Tabatabai, C. Kozyrakis, B. Saha: Unlocking Concurrency: Multicore Programming with Transactional Memory. ACM Queue Dec/Jan 2006-2007.

Example: Thread-safe composite operation

Move a value from one concurrent hash map to another
Threads see each key occur in exactly one hash map at a time

    void move (Object key) {
        synchronized (mutex ) {
            map2.put ( key, map1.remove(key));
        }
    }

Requires (coarse-grain) locking (does not scale)
or rewrite hashmap for fine-grained locking (error-prone)

    void move (Object key) {
        atomic {
            map2.put (key, map1.remove( key));
        }
    }

Any 2 threads can work in parallel as long as different hash table buckets are accessed.

Source: A. Adl-Tabatabai, C. Kozyrakis, B. Saha: Unlocking Concurrency: Multicore Programming with Transactional Memory. ACM Queue Dec/Jan 2006-2007.

Transactions vs. Locks

[Figure: Times for a sequence of Java HashMap insert, delete, update operations, run on a 16-processor shared memory multiprocessor]

Fine-grained locking scales here equally well as atomic transactions, but is more low-level, susceptible to bugs leading to deadlocks or races

Source: A. Adl-Tabatabai, C. Kozyrakis, B. Saha: Unlocking Concurrency: Multicore Programming with Transactional Memory. ACM Queue Dec/Jan 2006-2007. © ACM

Software Transactional Memory Implementation Idea

User code:
    int foo (int arg)
    {
        ...
        atomic
        {
            b = a + 5;
        }
        ...
    }

Compiled code:
    int foo (int arg)
    {
        jmpbuf env;      // checkpoint current execution context for case of roll-back
        …
        do {
            if (setjmp(&env) == 0) {
                stmStart();
                temp = stmRead(&a);
                temp1 = temp + 5;
                stmWrite(&b, temp1);
                stmCommit();
                break;
            }
        } while (1);
        …
    }

Instrumented with calls to STM library functions.
In case of abort, control returns to checkpointed context by a longjmp()

Source: A. Adl-Tabatabai, C. Kozyrakis, B. Saha: Unlocking Concurrency: Multicore Programming with Transactional Memory. ACM Queue Dec/Jan 2006-2007.

Literature on Transactional Memory

Good introduction:
  A. Adl-Tabatabai, C. Kozyrakis, B. Saha: Unlocking Concurrency: Multicore Programming with Transactional Memory. ACM Queue Dec/Jan 2006-2007.
Transaction concept comes from database systems
  Atomic Transactions [SGG7/8] Sect. 6.9; [SGG9] – (Sect. 6.10.1)
    Single transactions (atomicity despite system failure)
      Log-based recovery, Checkpointing
    Concurrent atomic transactions [SGG7/8] Sect. 6.9.4
      Serializability, Two-phase locking protocol
Alternative to TM: Lock-free data structures, non-blocking synchronization
  More: TDDD56 Multicore and GPU Computing