A Simple, Combinatorial Algorithm for Solving SDD Systems in Nearly-Linear Time Lorenzo Orecchia,

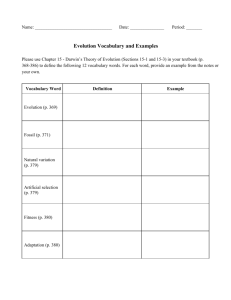

advertisement

A Simple, Combinatorial Algorithm

for Solving SDD Systems

in Nearly-Linear Time

Lorenzo Orecchia, MIT Math

Joint work with Jonathan Kelner, Aaron Sidford and Zeyuan Allen Zhu

Problem Definition: SDD Systems

PROBLEM INPUT: $A \in \mathbb{R}^{n \times n}$, $b \in \mathbb{R}^n$

SQUARE SYSTEM OF LINEAR EQUATIONS: $Ax = b$

$$\begin{pmatrix} a_{11} & a_{12} & \cdots & a_{1n} \\ a_{12} & a_{22} & \cdots & a_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ a_{1n} & a_{2n} & \cdots & a_{nn} \end{pmatrix} \begin{pmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{pmatrix} = \begin{pmatrix} b_1 \\ b_2 \\ \vdots \\ b_n \end{pmatrix}$$

GOAL: solve for x

Restriction: A is Symmetric Diagonally-Dominant

• Symmetry: $A^T = A$

• Diagonal Dominance: for all rows (and columns) i, the diagonal entry dominates: $a_{ii} \ge \sum_{j \ne i} |a_{ij}|$

WHY SDD? Practically important subcase of positive semidefinite matrices

• A IS SDD implies $A \succeq 0$

• Easy-to-identify null space
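The two conditions in the SDD definition can be checked entry by entry. The following is a minimal sketch, assuming the matrix is given as a dense list of rows; the function name `is_sdd` and the example matrix are illustrative and not part of the talk.

```python
def is_sdd(a):
    """Return True if the square matrix `a` (a list of rows) is symmetric
    and each diagonal entry is at least the absolute off-diagonal row sum."""
    n = len(a)
    if any(len(row) != n for row in a):
        return False
    symmetric = all(a[i][j] == a[j][i] for i in range(n) for j in range(i))
    dominant = all(
        a[i][i] >= sum(abs(a[i][j]) for j in range(n) if j != i)
        for i in range(n)
    )
    return symmetric and dominant


# Hypothetical example: symmetric, and each diagonal entry dominates its row.
example = [[3, -1, 2], [-1, 2, 0], [2, 0, 2]]
print(is_sdd(example))
```

Note that positive off-diagonal entries are allowed in an SDD matrix; only their absolute values enter the dominance test.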

Problem Definition: SDD Systems

PROBLEM INPUT: $A \in \mathbb{R}^{n \times n}$, $b \in \mathbb{R}^n$, $b \in \mathrm{Im}(A)$

nnz(A): number of non-zero entries of A

GOAL: solve $Ax = b$ for x

• approximately: $\|x - x^*\|_A \le \epsilon \|x^*\|_A$

• in nearly-linear time in the sparsity nnz(A) and $\log(1/\epsilon)$, i.e.

$$\mathrm{RunTime} = O\left(\mathrm{nnz}(A) \cdot \mathrm{polylog}(n) \cdot \log(1/\epsilon)\right)$$

Reduction to Laplacian Systems

SDD SYSTEM: $Ax = b$

LAPLACIAN SYSTEM: $Lv = \chi$

[Gremban'96]

Reduction preserves approximation and sparsity

9

Graph Laplacian

G = (V, E, w): weighted undirected graph with n vertices and m edges

[Figure: example GRAPH G with weighted edges, next to its GRAPH LAPLACIAN L(G)]

• Diagonal entry of L(G): degree of the vertex (e.g. entry (2,2) is the degree of vertex 2)

• Off-diagonal entry of L(G): minus the weight of the edge (e.g. entry (2,3) is the negated weight of edge {2,3})
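The rule "weighted degree on the diagonal, minus the edge weight off the diagonal" translates directly into code. This is a small sketch under the assumption that vertices are numbered from 0 and edges are given as `(i, j, w)` triples; the triangle used here is a made-up example, not the graph from the slide.

```python
def laplacian(n, edges):
    """Build the dense graph Laplacian of an undirected weighted graph.

    `edges` is a list of (i, j, w) triples with 0-based vertex indices.
    """
    lap = [[0] * n for _ in range(n)]
    for i, j, w in edges:
        lap[i][i] += w  # each edge adds its weight to both endpoint degrees
        lap[j][j] += w
        lap[i][j] -= w  # and subtracts it from the two off-diagonal entries
        lap[j][i] -= w
    return lap


# Hypothetical weighted triangle.
triangle = [(0, 1, 1), (1, 2, 2), (0, 2, 3)]
L = laplacian(3, triangle)
for row in L:
    print(row)
```

Every row of the result sums to zero, which is why the all-ones vector is always in the null space.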

Laplacian as Sum of Edges

$$L(G) = \sum_{e=\{i,j\} \in E} w_e L_e$$

Edge Matrix $L_e$: the $n \times n$ matrix with entries $+1$ at positions $(i,i)$ and $(j,j)$, $-1$ at positions $(i,j)$ and $(j,i)$, and $0$ elsewhere.

Edge Matrix $L_e$ has rank 1:

$$L_e = \chi_e \chi_e^T, \qquad \chi_e = (0, \ldots, 0, \underbrace{+1}_{i}, 0, \ldots, 0, \underbrace{-1}_{j}, 0, \ldots, 0)^T$$

$$L(G) = \sum_{e=\{i,j\} \in E} w_e \chi_e \chi_e^T$$

ARBITRARY ORIENTATION OF EDGES: WILL BE USEFUL WHEN DISCUSSING FLOWS

More Graph Matrices: Incidence Matrix

G = (V, E, w): weighted undirected graph with n vertices and m edges

The incidence matrix B has m rows (one per edge) and n columns (one per vertex):

$$B = \begin{pmatrix} \chi_{e_1}^T \\ \chi_{e_2}^T \\ \vdots \\ \chi_{e_i}^T \\ \vdots \\ \chi_{e_m}^T \end{pmatrix}$$

$$L = \sum_{e=\{i,j\} \in E} w_e \chi_e \chi_e^T = B^T W B, \qquad W = \mathrm{diag}(w)$$

$W^{1/2} B$ is square root of L
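The identity $L = B^T W B$ can be checked numerically on a small graph. The sketch below assumes each edge `(i, j, w)` is oriented from `i` to `j`, so its row of B has +1 at `i` and -1 at `j`; the helper names and the triangle example are illustrative only.

```python
def incidence(n, edges):
    """Edge-vertex incidence matrix: one row per edge, +1 at tail, -1 at head."""
    rows = []
    for i, j, _w in edges:
        row = [0] * n
        row[i] = 1
        row[j] = -1
        rows.append(row)
    return rows


def bt_w_b(n, edges):
    """Compute B^T W B as a sum of weighted rank-one edge matrices."""
    out = [[0] * n for _ in range(n)]
    for row, (_i, _j, w) in zip(incidence(n, edges), edges):
        for p in range(n):
            for q in range(n):
                out[p][q] += w * row[p] * row[q]
    return out


# Hypothetical weighted triangle, edges oriented arbitrarily.
triangle = [(0, 1, 1), (1, 2, 2), (0, 2, 3)]
product = bt_w_b(3, triangle)
for row in product:
    print(row)
```

Flipping the orientation of any edge negates its row of B but leaves the product unchanged, which is why the orientation can be chosen arbitrarily.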

Action of Incidence Matrix

G =(V,E,w) weighted undirected graph with n vertices and m edges

[Figure: example graph with edge endpoints labeled +1 and -1]

Action of $B^T$ on a vector $f \in \mathbb{R}^m$:

$(B^T f)_i$ = flow out of i - flow into i = net flow into graph at i

Action of $B$ on a vector $v \in \mathbb{R}^n$:

$(Bv)_{e=(i,j)} = v_i - v_j$ = change in v along (i, j)

20

Graphs as Electrical Circuits

[Figure: example circuit graph with labeled edge conductances and resistances; one unit of electrical flow into vertex 1 and out of vertex 3]

Edge conductances $w_e$

Edge resistances $r_e = 1/w_e$

Q: What is the resulting electrical flow on the graph?

1. Ohm's Law: For every edge $e = (i,j) \in E$:

$$f_{(i,j)} = \frac{v_i - v_j}{r_{ij}}, \qquad \text{i.e.} \quad f = R^{-1} B v = W B v$$

2. Kirchhoff's Conservation Law: For every vertex $i \in V$:

flow out of i - flow into i = net flow into network at i

$$B^T f = e_s - e_t$$

Example above: Current source s is vertex 1. Current sink t is vertex 3

General net flow vector $\chi$ allowed:

$$B^T f = \chi, \qquad \chi^T \vec{1} = 0$$

Laplacian Systems as Electrical Problems

Edge conductances $w_e$, edge resistances $r_e = 1/w_e$

General net flow vector $\chi$: $\chi^T \vec{1} = 0$

Q: What is the resulting electrical flow on the graph?

$$f = R^{-1} B v = W B v, \quad B^T f = \chi \quad \Longrightarrow \quad B^T W B v = L v = \chi$$

Pseudo-inverse: $v = L^+ \chi$

Given input currents $\chi$, finding voltages is equivalent to solving a Laplacian system of linear equations
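To make the voltage/flow correspondence concrete, here is a minimal sketch that solves $Lv = \chi$ exactly on a tiny circuit. It assumes a connected graph and fixes the last voltage to 0 instead of using the pseudo-inverse, so the result differs from $L^+\chi$ only by a constant shift of all voltages. The triangle with unit resistances and the function name `solve_grounded` are illustrative choices.

```python
from fractions import Fraction


def solve_grounded(lap, chi):
    """Solve lap @ v = chi for a connected graph, fixing v[n-1] = 0.

    Drops the last row and column and runs Gauss-Jordan elimination
    in exact rational arithmetic.
    """
    m = len(lap) - 1
    aug = [[Fraction(lap[r][c]) for c in range(m)] + [Fraction(chi[r])]
           for r in range(m)]
    for col in range(m):
        pivot_row = next(r for r in range(col, m) if aug[r][col] != 0)
        aug[col], aug[pivot_row] = aug[pivot_row], aug[col]
        pivot = aug[col][col]
        aug[col] = [x / pivot for x in aug[col]]
        for r in range(m):
            if r != col and aug[r][col] != 0:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [aug[r][m] for r in range(m)] + [Fraction(0)]


# Hypothetical triangle with unit conductances; one unit enters at 0, leaves at 2.
lap = [[2, -1, -1], [-1, 2, -1], [-1, -1, 2]]
chi = [1, 0, -1]
v = solve_grounded(lap, chi)

# Ohm's Law with unit resistances: flow on (i, j) is v[i] - v[j].
edges = [(0, 1), (1, 2), (0, 2)]
flow = {(i, j): v[i] - v[j] for i, j in edges}
print(v, flow)
```

The direct edge carries 2/3 of the current and the two-edge detour carries 1/3, matching the usual parallel-resistor rule.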

Energy Interpretation

$Lv = \chi$ is the optimality condition of

$$\min_{x \perp \vec{1}} \; \tfrac{1}{2} x^T L x - x^T \chi$$

Equivalent up to scaling:

$$\min_{x \perp \vec{1}} \; \tfrac{1}{2} x^T L x \quad \text{s.t.} \quad x^T \chi = 1, \qquad \text{where} \quad x^T L x = \sum_{(i,j) \in E} \frac{(x_i - x_j)^2}{r_{ij}}$$

Minimize energy for a fixed voltage gap

Why it matters

• Direct Applications

  • Modeling electrical networks

  • Simulating random walks

  • PageRank

Aggregate behavior of lazy random walk started at $\chi$

• Numerical Applications

  • Finite element [BHV08]

  • Matrix exponential [OSV12]

  • Largest eigenvalue [ST12]

  • Image Smoothing

Why it matters: Faster Graph Algorithms

“The Laplacian Paradigm”

• Maximum flow [CKM+11, LRS13, KOLS12]

• Multicommodity flow [KMP12, KOLS12]

• Random spanning trees [KM09]

• Graph sparsification [SS11]

• Lossy flow, min-cost flow [DS08]

• Balanced partitioning [OSV12]

• Oblivious routing [KOLS12]

• … and more

36

Highlights of Previous Work

• Direct solvers for general matrices

• Iterative methods for PSD matrices: Conjugate Gradient, Chebyshev Method, …

• On various subclasses: Multigrid, …

• For SDD/Laplacians: Spielman-Teng, … many many others … O(m polylog n)

37

Our Result

Solve $Lx = b$ in time $\tilde{O}\left(m \log^2 n \, \log \tfrac{1}{\epsilon}\right)$

• Very different approach

• Simple and intuitive algorithm

• Proof fits on a single blackboard

• Easily shown to be numerically stable

Which computational model?

Q: How do we account for running time of arithmetic operations?

A: Constant time for operations on word-size sequence of bits.

Previous works: words of $O(\log^c n)$ bits. Size of Word: Polynomial in size of input

Our work: words of $O(\log n)$ bits. Size of Word: Linear in size of input

UNIT-COST RAM MODEL: MORE REALISTIC MODEL

The Philosophy of This Talk

PROBLEM REPRESENTATION

• Not all representations are created equal: e.g. eigenvalue decomposition

• Good representation often requires combinatorial insight

ITERATIVE METHOD

• Leverage large body of techniques in continuous optimization:

  • Regularization

  • Gradient Descent

  • Accelerated Gradient Descent

  • Randomized Coordinate Descent

Efficiency and simplicity of the algorithm rely on combining these two tools in the right way

Techniques and Challenges in the

Solution of Laplacian Systems

46

Changing Representation:

Gaussian Elimination

Simple Graphic Interpretation:

[Figure: star graph on 5 vertices; eliminate (pivot) the center node]

$$L = \begin{pmatrix} 4 & -1 & -1 & -1 & -1 \\ -1 & 1 & 0 & 0 & 0 \\ -1 & 0 & 1 & 0 & 0 \\ -1 & 0 & 0 & 1 & 0 \\ -1 & 0 & 0 & 0 & 1 \end{pmatrix}$$

After eliminating the first vertex:

$$\begin{pmatrix} 1 & 0 & 0 & 0 & 0 \\ 0 & \tfrac{3}{4} & -\tfrac{1}{4} & -\tfrac{1}{4} & -\tfrac{1}{4} \\ 0 & -\tfrac{1}{4} & \tfrac{3}{4} & -\tfrac{1}{4} & -\tfrac{1}{4} \\ 0 & -\tfrac{1}{4} & -\tfrac{1}{4} & \tfrac{3}{4} & -\tfrac{1}{4} \\ 0 & -\tfrac{1}{4} & -\tfrac{1}{4} & -\tfrac{1}{4} & \tfrac{3}{4} \end{pmatrix}$$

The lower-right block is a Laplacian on n-1 vertices
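The elimination step on the star example can be reproduced as a Schur complement. This is a small sketch in exact arithmetic; the function name `eliminate_vertex` is an assumption made for illustration.

```python
from fractions import Fraction


def eliminate_vertex(lap, k):
    """Schur complement of `lap` after eliminating (pivoting on) vertex k."""
    keep = [i for i in range(len(lap)) if i != k]
    pivot = Fraction(lap[k][k])
    return [
        [Fraction(lap[i][j]) - Fraction(lap[i][k]) * lap[k][j] / pivot
         for j in keep]
        for i in keep
    ]


# Laplacian of the star graph with center 0 and four leaves.
star = [
    [4, -1, -1, -1, -1],
    [-1, 1, 0, 0, 0],
    [-1, 0, 1, 0, 0],
    [-1, 0, 0, 1, 0],
    [-1, 0, 0, 0, 1],
]
reduced = eliminate_vertex(star, 0)
for row in reduced:
    print([str(x) for x in row])
```

Eliminating the center turns four leaves with no edges between them into a complete graph on four vertices with edge weight 1/4, a small instance of the fill-in that makes intermediate Laplacians dense.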

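Cholesky Decomposition

Simple Graphic Interpretation:

$$L = \underbrace{\begin{pmatrix} 2 & 0 & 0 & 0 & 0 \\ -\tfrac{1}{2} & 1 & 0 & 0 & 0 \\ -\tfrac{1}{2} & 0 & 1 & 0 & 0 \\ -\tfrac{1}{2} & 0 & 0 & 1 & 0 \\ -\tfrac{1}{2} & 0 & 0 & 0 & 1 \end{pmatrix}}_{\text{EASY TO INVERT}} \begin{pmatrix} 1 & 0 & 0 & 0 & 0 \\ 0 & \tfrac{3}{4} & -\tfrac{1}{4} & -\tfrac{1}{4} & -\tfrac{1}{4} \\ 0 & -\tfrac{1}{4} & \tfrac{3}{4} & -\tfrac{1}{4} & -\tfrac{1}{4} \\ 0 & -\tfrac{1}{4} & -\tfrac{1}{4} & \tfrac{3}{4} & -\tfrac{1}{4} \\ 0 & -\tfrac{1}{4} & -\tfrac{1}{4} & -\tfrac{1}{4} & \tfrac{3}{4} \end{pmatrix} \underbrace{\begin{pmatrix} 2 & -\tfrac{1}{2} & -\tfrac{1}{2} & -\tfrac{1}{2} & -\tfrac{1}{2} \\ 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 0 & 1 \end{pmatrix}}_{\text{EASY TO INVERT}}$$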

Changing Representation:

Gaussian Elimination

Advantages:

• Gives exact algorithm

• Computes implicit representation of inverse matrix (can then be used on any b)

• Can choose elimination order

Disadvantages:

• Intermediate Laplacians can be very dense (SPARSE input, DENSE intermediate graphs)

Gaussian Elimination

and Nested Dissection

Combinatorial arguments can help preserve sparsity by selecting good order:

[Figure: graph G split by a small vertex separator; each side is eliminated recursively]

Size of existing separators bounds sparsity

Works well if small separators always exist (and are easy to find)

E.G.: Planar Graphs

Gaussian Elimination: the bad case

G sparse expander

All separators are large

Any elimination order requires producing a dense graph.

59

Changing Representation:

Gaussian Elimination

Advantages:

• Gives exact algorithm

• Computes implicit representation of inverse matrix

(can then be used on any b)

• Can choose elimination order to minimize running time

Disadvantages:

• Intermediate Laplacians can be very dense

• Very slow in worst case: $O(n^3)$, or $O(n^\omega)$ using fast matrix multiplication

FAR FROM NEARLY LINEAR!

60

Gaussian Elimination: the good case

Easily linear time for some graphs:

PATHS: each vertex is eliminated in time O(1), for O(n) time in total

This works more generally for trees, by recursively eliminating a leaf.
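Leaf elimination on a tree can be sketched in two linear passes: one from the leaves up to push net currents toward the root, and one from the root down to read off voltages via Ohm's Law. The sketch assumes vertices are numbered so that `parent[i] < i` with root 0, that `resistance[i]` is the resistance of the edge between `i` and `parent[i]`, and that the root voltage is fixed to 0; these conventions and the name `solve_tree` are illustrative.

```python
def solve_tree(parent, resistance, chi):
    """Solve L v = chi on a tree in O(n) time, with v[0] = 0.

    parent[i] < i is the parent of vertex i (parent[0] is ignored),
    resistance[i] is the resistance of edge (i, parent[i]),
    chi[i] is the net current injected at vertex i (entries sum to 0).
    """
    n = len(parent)
    demand = list(chi)
    flow_to_parent = [0] * n
    # Eliminate leaves first: all current injected in a subtree must
    # leave through the edge connecting the subtree to its parent.
    for i in range(n - 1, 0, -1):
        flow_to_parent[i] = demand[i]
        demand[parent[i]] += demand[i]
    # Back-substitute: Ohm's Law gives v[i] - v[parent] = r * flow.
    voltage = [0] * n
    for i in range(1, n):
        voltage[i] = voltage[parent[i]] + resistance[i] * flow_to_parent[i]
    return voltage


# Hypothetical path 0-1-2-3 with unit resistances; one unit in at 0, out at 3.
v = solve_tree([-1, 0, 1, 2], [0, 1, 1, 1], [1, 0, 0, -1])
print(v)
```

Each vertex is touched a constant number of times, which is the O(n) behavior claimed for paths and trees.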

Iterative Methods: Gradient Descent

Consider energy interpretation:

$$\min_{x \perp \vec{1}} \; f(x) = \tfrac{1}{2} x^T L x - x^T \chi$$

$$\nabla f(x) = Lx - \chi, \qquad \nabla^2 f(x) = L, \qquad \lambda_2 D \preceq \nabla^2 f(x) \preceq 2D$$

Use degree norm $\|\cdot\|_D$

This is a convex optimization problem on which we can apply gradient descent techniques.

Construct iterative solutions: $x^{(0)}, x^{(1)}, x^{(2)}, \ldots, x^{(t)}, \ldots$

$$x^{(t+1)} = x^{(t)} - h D^{-1} \nabla f(x^{(t)}), \qquad h = \text{step length}$$

By standard gradient descent analysis $h = 1/2$:

$$x^{(t+1)} = x^{(t)} - \tfrac{1}{2} D^{-1} \nabla f(x^{(t)})$$

For a quadratic function, the step length can be optimized at every step.

UNRAVEL RECURSION TO OBTAIN TRUNCATED SERIES: t steps of random walk

$$x^{(t+1)} = \sum_{j=0}^{t} \left( \frac{I + W}{2} \right)^j \chi$$

(starting from $x^{(0)} = 0$, with $W$ here denoting the random-walk matrix and $\chi$ taken up to normalization by the degrees)

Iterations necessary to converge to $\epsilon$-approximate solution:

$$T = O\left( \frac{2}{\lambda_2} \log \frac{n}{\epsilon} \right)$$
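The degree-scaled gradient step with $h = 1/2$ can be sketched in a few lines. The example assumes a dense Laplacian given as a list of rows and starts from the zero vector; the triangle with unit weights is an illustrative choice for which the exact minimizer orthogonal to the all-ones vector is $(1/3, 0, -1/3)$.

```python
def gradient_descent(lap, chi, steps):
    """Run x <- x - (1/2) D^{-1} (L x - chi), starting from x = 0."""
    n = len(lap)
    x = [0.0] * n
    for _ in range(steps):
        grad = [sum(lap[i][j] * x[j] for j in range(n)) - chi[i]
                for i in range(n)]
        # D is the diagonal of the Laplacian (the weighted degrees).
        x = [x[i] - 0.5 * grad[i] / lap[i][i] for i in range(n)]
    return x


# Hypothetical triangle with unit weights; one unit of current from 0 to 2.
lap = [[2, -1, -1], [-1, 2, -1], [-1, -1, 2]]
chi = [1, 0, -1]
x = gradient_descent(lap, chi, 50)
print(x)
```

Each step costs one matrix-vector product, so the total running time is the iteration count times O(m) on a sparse graph; the triangle converges quickly because it is well connected, unlike a long path.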

Bad Example for Gradient Descent

[Figure: path from s to t; GAMBLER'S RUIN, the walk moves left or right with probability 1/2 each]

PATH: $\lambda_2 = \frac{1}{n^2}$

Iterations necessary to converge to $\epsilon$-approximate solution:

$$T = O\left( \frac{2}{\lambda_2} \log \frac{n}{\epsilon} \right) = O\left( n^2 \log \frac{n}{\epsilon} \right)$$

Each iteration is a matrix-vector multiplication by $\frac{I+W}{2}$, requiring time O(m)

$$\mathrm{RunTime} = O\left( m n^2 \log \frac{n}{\epsilon} \right)$$

ESSENTIALLY TIGHT

Accelerated Gradient Descent

Consider energy interpretation:

$$\min_{x \perp \vec{1}} \; \tfrac{1}{2} x^T L x - x^T \chi, \qquad \nabla f(x) = Lx - \chi, \qquad \lambda_2 D \preceq \nabla^2 f(x) \preceq 2D$$

This is a convex optimization problem on which we can apply gradient descent techniques.

Accelerated gradient techniques achieve better convergence:

• CHEBYSHEV'S ITERATION

• CONJUGATE GRADIENT

Improved iteration count:

$$T = O\left( \sqrt{\frac{2}{\lambda_2}} \, \log \frac{n}{\epsilon} \right)$$

75

Still no luck, but …

IT TAKES n STEPS FOR CHARGE TO TRAVEL ACROSS

PATH: $\lambda_2 = \frac{1}{n^2}$

$$T = O\left( n \log \frac{n}{\epsilon} \right)$$

$$\mathrm{RunTime} = O\left( m n \log \frac{n}{\epsilon} \right)$$

BEST POSSIBLE USING GRADIENT APPROACH

Combining Representation and Iteration

Gaussian elimination and gradient methods seem complementary

• Gaussian elimination is nearly-linear on paths (and trees),

but slow on expanders.

• Gradient methods are nearly-linear time on expanders,

but slow on paths.

MAIN APPROACH TO FAST SOLVERS:

Combine Gaussian elimination and gradient methods

to obtain best of both worlds

78

Combining Representation and Iteration:

Combinatorial Preconditioning

$$Lv = \chi$$

Gradient methods fail when condition number is large.

IDEA: modify system to improve condition number

$$L_H^+ L v = L_H^+ \chi$$

where H is a preconditioner graph.

DESIRED PROPERTIES OF H:

1. New matrix $L_H^+ L$ is well conditioned: IMPROVES ITERATION COUNT

2. Linear systems in $L_H$ can be solved quickly by Gaussian elimination: KEEPS ITERATIONS LINEAR TIME

Preconditioning: Low-Stretch Trees

[Figure: graph G with spanning tree T; an edge e and the tree path $P_e$ joining its endpoints]

Stretch of e:

$$\mathrm{st}(e) = \frac{1}{r_e} \sum_{e' \in P_e} r_{e'}$$

Examples shown: $\mathrm{st}(e) = 5$; $\mathrm{st}(e) = \sqrt{n}$

Total stretch of T:

$$\mathrm{st}(T) = \sum_{e \in E} \mathrm{st}(e)$$

Example shown: $\mathrm{st}(T) = O(n^{1.5})$
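The stretch definition can be evaluated by walking the tree path between the endpoints of an edge. The following is a sketch, assuming the tree is given as an adjacency list of `(neighbor, resistance)` pairs; the 5-cycle with a path as its spanning tree is a made-up example.

```python
def tree_path_resistance(tree_adj, u, v):
    """Sum of resistances on the unique tree path from u to v (iterative DFS)."""
    stack = [(u, None, 0)]
    while stack:
        node, prev, dist = stack.pop()
        if node == v:
            return dist
        for nxt, r in tree_adj[node]:
            if nxt != prev:
                stack.append((nxt, node, dist + r))
    raise ValueError("v is not reachable from u in the tree")


def stretch(tree_adj, edge):
    """Stretch of edge (u, v, r_e): tree path resistance divided by r_e."""
    u, v, r_e = edge
    return tree_path_resistance(tree_adj, u, v) / r_e


# Hypothetical 5-cycle with unit resistances; spanning tree is the path 0-1-2-3-4.
graph_edges = [(0, 1, 1), (1, 2, 1), (2, 3, 1), (3, 4, 1), (4, 0, 1)]
tree_adj = {
    0: [(1, 1)],
    1: [(0, 1), (2, 1)],
    2: [(1, 1), (3, 1)],
    3: [(2, 1), (4, 1)],
    4: [(3, 1)],
}
total = sum(stretch(tree_adj, e) for e in graph_edges)
print(stretch(tree_adj, (4, 0, 1)), total)
```

Tree edges always have stretch 1, so the total stretch summed over all edges is at least n - 1; the off-tree edges decide how much larger it gets.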

Preconditioning: Low-Stretch Trees

Q: How does this help?

A: Can help to bound condition number of system preconditioned by T

$$1 \cdot I \preceq L_T^+ L_G \preceq \mathrm{st}(T) \cdot I \qquad \text{[BH01], [SW09]}$$

• Lower bound: spanning tree property

• Upper bound: low-stretch-tree property

EASY PROOF:

$$\lambda_{\max}\left(L_T^+ L_G\right) \le \mathrm{Tr}\left(L_T^+ L_G\right) = \sum_{e \in E} w_e \chi_e^T L_T^+ \chi_e = \mathrm{st}(T)$$

• Inequality: trace bounds eigenvalue

• Last equality: tree resistance is path length

Preconditioning: Partial Results

1. Preconditioning by low-stretch spanning trees [BH01]

$$\mathrm{RunTime} = \tilde{O}\left( \sqrt{m \log n} \, \log \frac{n}{\epsilon} \right) \cdot \left[ O(m) + O(n) \right]$$

First factor: condition number. Second factor: multiplication by $L_T^+ L$

2. Improved analysis using Conjugate Gradient and trace bound [SW09]

$$\mathrm{RunTime} = \tilde{O}\left( m^{4/3} \, \mathrm{polylog}\, n \right)$$

NB: Low-stretch is a trace bound, stronger than eigenvalue bound!

Recursive Preconditioning:

Spielman-Teng and Koutis-Miller-Peng

Nearly-linear-time algorithms at last: [ST04], [KMP10], [KMP11]

IDEA: Low-stretch trees do not provide good enough condition number. Use better preconditioner graphs

$$L_{G_1}^+ L v = L_{G_1}^+ \chi$$

PROBLEM: Hard to solve system for $G_1$.

SOLUTION: Solve recursively.

$$L_{G_2}^+ L_{G_1} x = L_{G_2}^+ b$$

MAIN IDEA: At every recursive level, $G_i$ becomes smaller as some low-degree vertices are eliminated via Gaussian elimination.

Our Algorithm (at last)

103

Choosing the Right Representation

Solve for Electrical Flow

All algorithms discussed so far aim to solve the voltage problem:

$$\min_{v \perp \vec{1}} \; \tfrac{1}{2} v^T L v - v^T \chi$$

This is particularly problematic for gradient-based methods: CURSE OF LONG PATHS

Our algorithm targets the minimum-energy flow problem:

$$\min \; f^T R f \quad \text{(minimize energy)} \qquad \text{s.t.} \quad B^T f = \chi \quad \text{(route correct net flow)}$$

This choice opens up different representational questions:

[Figure: path whose edges each carry flow 1]

Could this be a flow path in our basic representation?

Optimality Conditions for Flow Problem

1. Ohm's Law: $\exists v: \; f = R^{-1} B v$

2. Kirchhoff's Conservation Law: $B^T f = \chi$

We eliminate dependence on voltages in Ohm's Law:

Kirchhoff's Cycle Law (KCL): For any cycle C in G, the optimal electrical flow has

$$\sum_{e \in C} r_e f_e = 0$$

Flow-induced voltage drop along cycle is 0.

Fact: Ohm's Law $\Leftrightarrow$ Kirchhoff's Cycle Law

[Figure: example with unit resistances and flow values that obey KCL; voltages are set using a spanning tree]

KCL ensures that off-tree edges respect Ohm's Law
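Kirchhoff's Cycle Law is easy to test on a candidate flow: sum $r_e f_e$ around a cycle, counting an edge negatively when the cycle traverses it against its orientation. The sketch below uses a hypothetical triangle with unit resistances and compares the electrical flow with a non-electrical flow routing the same demand; the names are illustrative.

```python
from fractions import Fraction


def cycle_voltage_drop(flow, resistance, cycle):
    """Sum of sign * r_e * f_e around a cycle.

    `cycle` is a list of (edge, sign) pairs; sign is +1 when the cycle
    traverses the edge along its orientation and -1 otherwise.
    """
    return sum(sign * resistance[e] * flow[e] for e, sign in cycle)


# Triangle on vertices 0, 1, 2; edge (u, v) is oriented from u to v.
resistance = {(0, 1): 1, (1, 2): 1, (0, 2): 1}
# Cycle 0 -> 1 -> 2 -> 0 traverses edge (0, 2) backwards.
cycle = [((0, 1), 1), ((1, 2), 1), ((0, 2), -1)]

# Electrical flow for one unit of current from vertex 0 to vertex 2.
electrical = {(0, 1): Fraction(1, 3), (1, 2): Fraction(1, 3), (0, 2): Fraction(2, 3)}
# Another flow with the same net demands, sending everything on edge (0, 2).
direct = {(0, 1): 0, (1, 2): 0, (0, 2): 1}

print(cycle_voltage_drop(electrical, resistance, cycle))
print(cycle_voltage_drop(direct, resistance, cycle))
```

Both flows satisfy conservation, but only the electrical one has zero drop around the cycle, which is the property the algorithm restores cycle by cycle.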

Algorithmic Approach

1. Kirchhoff's Cycle Law: For any cycle C in G, the optimal electrical flow has $\sum_{e \in C} r_e f_e = 0$

ITERATIVELY FIX BY ADDING/REMOVING FLOW ALONG CYCLES

2. Kirchhoff's Conservation Law: $B^T f = \chi$

MAINTAIN SATISFIED

115

Algorithmic Approach

Initialize: [Figure: one unit of flow routed from s to t along a path; all other edges carry flow 0]

Improve:

• Pick a cycle

• Fix it: send $\Delta / R$ flow in the opposite direction, where $\Delta$ is the voltage drop around the cycle and $R$ is the total resistance of the cycle [Figure: a cycle update by 1/4]

• Repeat

Output: [Figure: final flow values on all edges]

Which cycle? How many iterations? How to implement?

IDEA: A nearly-linear number of cheap (i.e. O(log n)) iterations!
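The initialize / pick a cycle / fix it / repeat loop can be sketched on a toy graph. This is only an illustration under simplifying assumptions: the cycles are the fundamental cycles of a fixed spanning tree, written out by hand; cycles are sampled uniformly at random rather than by any stretch-based distribution; and each update walks the whole cycle instead of using a fast data structure. All names and the 4-vertex graph are hypothetical.

```python
import random

# Hypothetical graph on vertices 0..3 with unit resistances.
# Edge (u, v) is oriented u -> v; the spanning tree is the path 0-1-2-3.
TREE_EDGES = [(0, 1), (1, 2), (2, 3)]
RESISTANCE = {(0, 1): 1.0, (1, 2): 1.0, (2, 3): 1.0, (0, 2): 1.0, (1, 3): 1.0}
# Fundamental cycle of each off-tree edge: the edge itself (+1), then the
# tree path back to its tail (tree edges traversed backwards get -1).
CYCLES = {
    (0, 2): [((0, 2), 1), ((1, 2), -1), ((0, 1), -1)],
    (1, 3): [((1, 3), 1), ((2, 3), -1), ((1, 2), -1)],
}


def cycle_drop(flow, cycle):
    """Flow-induced voltage drop around the cycle (Delta)."""
    return sum(sign * RESISTANCE[e] * flow[e] for e, sign in cycle)


def fix_cycle(flow, cycle):
    """Send Delta / R units of flow against the cycle so its drop becomes 0."""
    delta = cycle_drop(flow, cycle)
    total_r = sum(RESISTANCE[e] for e, _ in cycle)
    for e, sign in cycle:
        flow[e] -= sign * delta / total_r


def simple_solver(iterations, seed=0):
    rng = random.Random(seed)
    # Initialize: route one unit from s = 0 to t = 3 along the tree path.
    flow = {e: 0.0 for e in RESISTANCE}
    for e in TREE_EDGES:
        flow[e] = 1.0
    off_tree = list(CYCLES)
    for _ in range(iterations):
        fix_cycle(flow, CYCLES[rng.choice(off_tree)])
    return flow


flow = simple_solver(200)
print(flow)
```

Adding flow around a cycle never changes the net flow at any vertex, so conservation holds throughout and only the cycle constraints need to be driven to zero.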

Choosing the Right Representation:

Cycle Space

The feasible set $\{f : B^T f = \chi\}$ is an affine translation of the span of cycles (cycle space $C_G$):

$$C_G = \{c : B^T c = 0\}$$

[Figure: cycle updates move $f_0, f_1, f_2, \ldots$ within the affine space $B^T f = \chi$ toward $f^*$, the point where $f = R^{-1} B v$]

RESTRICT OUR ATTENTION TO CYCLE SPACE

GOAL: FIND A GOOD BASIS TO WORK ON

Choosing the Right Representation:

Basis of Cycle Space

Fix spanning tree T

Fact: Fundamental cycles of spanning tree give a basis of cycle space

[Figure: off-tree edge e and its fundamental cycle $c_e$]

Our New Representation

ORIGINAL PROBLEM

$\forall c \in C_G : \; c^T R (f_0 + f) = 0$

$B^T f = 0$

Use spanning tree T: basis for $C_G$

$\forall e \in E \setminus T : \; c_e^T R (f_0 + f) = 0$

$f = \sum_{e \in E \setminus T} f_e c_e$

m - n + 1 constraints

m - n + 1 variables

Iteratively fix fundamental cycles of T

WHAT ITERATIVE METHOD IS THIS?
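"Fixing" a single cycle can be sketched as follows. This is an illustration under assumptions, not the talk's code: it uses the standard exact step that adds the multiple of $c$ making $c^T R f = 0$, and `fix_cycle` and `energy` are hypothetical names. Exact rational arithmetic keeps the example easy to verify by hand.

```python
from fractions import Fraction

def fix_cycle(f, r, c):
    """Add the multiple of cycle c to flow f that makes c^T R f = 0."""
    drop = sum(ci * ri * fi for ci, ri, fi in zip(c, r, f))  # c^T R f
    cycle_res = sum(ci * ci * ri for ci, ri in zip(c, r))    # c^T R c
    alpha = Fraction(drop) / cycle_res
    return [fi - alpha * ci for fi, ci in zip(f, c)]

def energy(f, r):
    """Energy f^T R f of a flow."""
    return sum(ri * fi * fi for ri, fi in zip(r, f))

# Triangle with edges (0,1), (1,2), (0,2) and unit resistances.
r = [1, 1, 1]
f0 = [0, 0, 1]    # one unit from vertex 0 to vertex 2 on the direct edge
c = [1, 1, -1]    # the cycle 0 -> 1 -> 2 -> 0
f1 = fix_cycle(f0, r, c)
```

Here a single update moves the flow from `[0, 0, 1]` to `[1/3, 1/3, 2/3]` and lowers the energy from 1 to 2/3. Because $c$ is a circulation, the update leaves $B^T f$ unchanged.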


Choosing the Right Iteration: Gradient Descent?

[Figure: the current iterate $f_t$ in the set $B^T f = \chi$, an affine translation of the span of cycles (cycle space $C_G = \{c : B^T c = 0\}$) with its basis of fundamental cycles; the gradient $\nabla f$; the target $f^*$ on the set $f = R^{-1} B v$; the origin is marked 0]

Gradient descent would update all cycles at once.

Requires linear time at each iteration.

Choosing the Right Iteration: Randomized Coordinate Descent!

[Figure: the current iterate $f_t$ in the set $B^T f = \chi$, an affine translation of the span of cycles (cycle space $C_G = \{c : B^T c = 0\}$) with its basis of fundamental cycles; the target $f^*$ on the set $f = R^{-1} B v$; the origin is marked 0]

Move along one coordinate direction at a time.

Choose basis vector $c_e$ w.p. proportional to

$\frac{c_e^T R c_e}{r_e} = \mathrm{st}(e) + 1$

Convergence analysis? [SV09]
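The sampling rule can be sketched on a toy instance. Everything concrete below is an assumption made for illustration: the spanning tree is a path 0-1-2-3, the resistances are made up, and `stretch` and `sample_cycle` are hypothetical names.

```python
import random
from fractions import Fraction

# Path-shaped spanning tree 0-1-2-3; tree_r[i] is the resistance of tree edge (i, i+1).
tree_r = [1, 2, 1]
# Off-tree edges as (u, v, resistance), with u < v.
off_tree = [(0, 3, 2), (0, 2, 1), (1, 3, 6)]

def stretch(u, v, r_e):
    """st(e): resistance of the tree path between u and v, divided by r_e."""
    return Fraction(sum(tree_r[u:v]), r_e)

# c_e^T R c_e / r_e = (r_e + tree path resistance) / r_e = st(e) + 1
weights = [stretch(u, v, r_e) + 1 for (u, v, r_e) in off_tree]
total = sum(weights)
probs = [w / total for w in weights]

def sample_cycle(rng):
    """Draw the index of an off-tree edge with probability proportional to st(e) + 1."""
    return rng.choices(range(len(off_tree)), weights=[float(w) for w in weights])[0]

picked = sample_cycle(random.Random(0))
```

For this instance the weights are 3, 4 and 3/2, so the three fundamental cycles are chosen with probabilities 6/17, 8/17 and 3/17.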

Coordinate Descent: Convergence

$\min_{x \perp \vec{1}} \; \tfrac{1}{2} \cdot x^T A x - b^T x$

$\forall e \in E \setminus T : \; c_e^T R (f_0 + f) = 0$

$f = \sum_{e \in E \setminus T} f_e c_e$

Iteration count for gradient descent:

$T = O\left( \frac{\lambda_{\max}(A)}{\lambda_{\min}(A)} \log\left(\frac{n}{\epsilon}\right) \right)$

Iteration count for randomized coordinate descent:

$T = O\left( \frac{\mathrm{Tr}(A)}{\lambda_{\min}(A)} \log\left(\frac{n}{\epsilon}\right) \right)$

Worse by a factor of m - n + 1 in our case
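One way to read the "factor of m - n + 1" remark, offered as a sketch rather than the speaker's derivation: assuming $A$ is positive semidefinite with one row per fundamental cycle (m - n + 1 of them), its trace is the sum of its eigenvalues, so it is sandwiched by the largest eigenvalue.

```latex
\lambda_{\max}(A) \;\le\; \mathrm{Tr}(A) \;=\; \sum_{i=1}^{m-n+1} \lambda_i(A) \;\le\; (m - n + 1)\,\lambda_{\max}(A)
```

Hence the coordinate-descent iteration count is at most m - n + 1 times the gradient-descent count, while each coordinate step touches only one cycle instead of all of them.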

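Convergence

$\min_{x \perp \vec{1}} \; \tfrac{1}{2} \cdot x^T A x - b^T x$

$\forall e \in E \setminus T : \; c_e^T R (f_0 + f) = 0$

$f = \sum_{e \in E \setminus T} f_e c_e$

$\lambda_{\min}(A) = 1$

$\lambda_{\max}(A) \le \mathrm{Tr}(A) = \mathrm{st}(T) + O(n)$, where $\mathrm{st}(T)$ is the tree stretch

Same calculation as for voltage preconditioning.

Iteration count for randomized coordinate descent:

$T = O\left( \frac{\mathrm{Tr}(A)}{\lambda_{\min}(A)} \log\left(\frac{n}{\epsilon}\right) \right)$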

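New Condition Numbers

$\min_{x \perp \vec{1}} \; \tfrac{1}{2} \cdot x^T A x - b^T x$

$\forall e \in E \setminus T : \; c_e^T R (f_0 + f) = 0$

$f = \sum_{e \in E \setminus T} f_e c_e$

$\lambda_{\min}(A) = 1$

$\lambda_{\max}(A) \le \mathrm{Tr}(A) = \mathrm{st}(T) + O(n)$, where $\mathrm{st}(T)$ is the tree stretch

Same calculation as for voltage preconditioning.

Iteration count for randomized coordinate descent:

$T = O\left( \frac{\mathrm{Tr}(A)}{\lambda_{\min}(A)} \log\left(\frac{n}{\epsilon}\right) \right)$

Worse by a factor of m - n + 1 in our case

We start with a trace guarantee, not an eigenvalue one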

Final Convergence

$\min_{x \perp \vec{1}} \; \tfrac{1}{2} \cdot x^T A x - b^T x$

$\forall e \in E \setminus T : \; c_e^T R (f_0 + f) = 0$

$f = \sum_{e \in E \setminus T} f_e c_e$

$\lambda_{\min}(A) = 1$

$\lambda_{\max}(A) \le \mathrm{Tr}(A) = \mathrm{st}(T) + O(n)$, where $\mathrm{st}(T)$ is the tree stretch

Same calculation as for voltage preconditioning.

Pick spanning tree T to be low stretch.

Iteration count for randomized coordinate descent:

$T = O\left( \frac{\mathrm{Tr}(A)}{\lambda_{\min}(A)} \log\left(\frac{n}{\epsilon}\right) \right) = \tilde{O}\left( m \log n \log\left(\frac{n}{\epsilon}\right) \right)$

NEARLY-LINEAR


Implement updates efficiently

[Figure: example tree with edge values in sevenths (0, 2/7, -2/7, 3/7, 5/7)]

• When the tree is balanced

• Otherwise decompose the tree recursively, i.e. nested dissection, gives O(log n) sparse representation
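To give a feel for O(log n) updates, here is a sketch for a deliberately simplified case. It is not the recursive tree decomposition named on the slide: it assumes the spanning tree is a path, so that every fundamental cycle covers a contiguous range of tree edges, and it swaps in a pair of Fenwick trees. The class `PathFlow` and its methods are hypothetical names.

```python
class PathFlow:
    """Flows on the edges of a path tree: O(log n) range add and weighted range sum."""

    def __init__(self, r):
        self.n = len(r)
        self.P = [0.0]                   # P[k] = r[0] + ... + r[k-1]
        for x in r:
            self.P.append(self.P[-1] + x)
        self.d = [0.0] * (self.n + 1)    # Fenwick tree over differences d_j of the flow
        self.dp = [0.0] * (self.n + 1)   # Fenwick tree over d_j * P[j]

    def _add(self, tree, j, val):
        """Point update at 0-based index j."""
        j += 1
        while j <= self.n:
            tree[j] += val
            j += j & -j

    def _sum(self, tree, k):
        """Sum of the entries at 0-based indices < k."""
        s = 0.0
        while k > 0:
            s += tree[k]
            k -= k & -k
        return s

    def add(self, lo, hi, alpha):
        """Add alpha to the flow on tree edges lo, ..., hi-1."""
        for j, a in ((lo, alpha), (hi, -alpha)):
            if j < self.n:
                self._add(self.d, j, a)
                self._add(self.dp, j, a * self.P[j])

    def drop(self, lo, hi):
        """Return the sum of r[i] * f[i] over tree edges lo, ..., hi-1."""
        def prefix(k):
            # sum_{i<k} r_i f_i = P[k] * sum_{j<k} d_j - sum_{j<k} d_j * P[j]
            return self.P[k] * self._sum(self.d, k) - self._sum(self.dp, k)
        return prefix(hi) - prefix(lo)

pf = PathFlow([1, 2, 1, 3])
pf.add(0, 3, 1.0)    # flows become [1, 1, 1, 0]
pf.add(1, 4, 0.5)    # flows become [1, 1.5, 1.5, 0.5]
```

A cycle update then needs one `drop` query along the tree path of the sampled edge and one `add` along the same path, each in O(log n) time for this path-shaped case.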

Resulting Algorithm

• Pick a cycle

• Fix it

• Repeat

Which cycle? Fundamental cycle from low-stretch spanning tree T, sampled with probability proportional to stretch.

How many iterations? $\tilde{O}\left( m \log n \log\left(\frac{n}{\epsilon}\right) \right)$ (randomized coordinate descent analysis)

How to implement? $O(\log n)$, exploiting separators of size 1 in tree

TOTAL RUNNING TIME: $\tilde{O}\left( m \log^2 n \log\left(\frac{n}{\epsilon}\right) \right)$
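The three steps can be put together in a short end-to-end sketch. It simplifies on purpose and is not the algorithm with the stated running time: it assumes a BFS spanning tree instead of a low-stretch one, and each update costs time linear in the number of edges instead of O(log n). The function name `solve_flow` is hypothetical.

```python
import random
from collections import deque

def solve_flow(n, edges, r, s, t, iters=500, seed=0):
    """Approximate the unit s-t electrical flow by randomized cycle updates.

    edges[i] = (u, v) is oriented u -> v; r[i] > 0 is its resistance.
    """
    m = len(edges)
    adj = [[] for _ in range(n)]
    for i, (u, v) in enumerate(edges):
        adj[u].append((v, i))
        adj[v].append((u, i))
    # BFS spanning tree rooted at s (assumption: NOT a low-stretch tree).
    parent, depth, queue = {s: (None, None)}, {s: 0}, deque([s])
    while queue:
        u = queue.popleft()
        for v, i in adj[u]:
            if v not in parent:
                parent[v], depth[v] = (u, i), depth[u] + 1
                queue.append(v)
    tree = {i for (_, i) in parent.values() if i is not None}

    def tree_path(a, b):
        """Signed edge vector of the tree path from a to b."""
        p = [0] * m
        while a != b:
            if depth[a] >= depth[b]:
                pa, i = parent[a]
                p[i] += 1 if edges[i][0] == a else -1
                a = pa
            else:
                pb, i = parent[b]
                p[i] += 1 if edges[i][0] == pb else -1
                b = pb
        return p

    off = [i for i in range(m) if i not in tree]
    cycles = []
    for i in off:
        c = tree_path(edges[i][1], edges[i][0])
        c[i] = 1
        cycles.append(c)
    f = [float(x) for x in tree_path(s, t)]  # initialize: one unit along the tree
    if not cycles:
        return f, cycles
    # Sampling weight c_e^T R c_e / r_e = st(e) + 1 for each off-tree edge e.
    weights = [sum(ci * ci * ri for ci, ri in zip(c, r)) / r[i]
               for c, i in zip(cycles, off)]
    rng = random.Random(seed)
    for _ in range(iters):
        c = rng.choices(cycles, weights=weights)[0]               # pick a cycle
        drop = sum(ci * ri * fi for ci, ri, fi in zip(c, r, f))   # c^T R f
        res = sum(ci * ci * ri for ci, ri in zip(c, r))           # c^T R c
        f = [fi - drop / res * ci for fi, ci in zip(f, c)]        # fix it
    return f, cycles

edges = [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2)]
flow, cycles = solve_flow(4, edges, [1, 1, 1, 1, 1], s=0, t=2)
```

On this 4-vertex example with unit resistances, the three routes from 0 to 2 have resistances 2, 2 and 1, so the electrical flow sends 1/4, 1/4 and 1/2 along them; in the edge orientations above that is approximately `[0.25, 0.25, -0.25, -0.25, 0.5]`.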

Running Time Improvements

$\tilde{O}\left( m \log^2 n \log\left(\frac{n}{\epsilon}\right) \right)$

Multiple phases with restarts:

$\tilde{O}\left( m \log^2 n \log\left(\frac{1}{\epsilon}\right) \right)$

Lee, Sidford at FOCS'13, Accelerated Coordinate Descent:

$\tilde{O}\left( m \log^{1.5} n \log\left(\frac{1}{\epsilon}\right) \right)$

Open Questions

RELATED TO THIS ALGORITHM:

• Can this algorithm be parallelized? Practically important.

• Can it be made deterministic? Random order works on any RHS with high probability.

MORE GENERAL:

• Other applications of the idea of many cheap iterations?

E.g. cycle update view of undirected maximum flow result?

• Other applications of underlying idea: right representation + right iterative method

Almost-linear-time undirected maximum flow falls in this framework

Can we tackle other important graph problems?

e.g. directed maximum flow, better multicommodity flows, …
