Case study • IBM Blue Gene/L system • InfiniBand

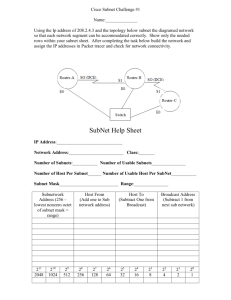

Interconnect family share for the 06/2011 Top 500 supercomputers

| Interconnect Family | Count | Share % | Rmax Sum (GF) | Rpeak Sum (GF) | Processor Sum |
|---|---|---|---|---|---|
| Myrinet | 4 | 0.80 % | 384451 | 524412 | 55152 |
| Quadrics | 1 | 0.20 % | 52840 | 63795 | 9968 |
| Gigabit Ethernet | 232 | 46.40 % | 11796979 | 22042181 | 2098562 |
| Infiniband | 206 | 41.20 % | 22980393 | 32759581 | 2411516 |
| Mixed | 1 | 0.20 % | 66567 | 82944 | 13824 |
| NUMAlink | 2 | 0.40 % | 107961 | 121241 | 18944 |
| SP Switch | 1 | 0.20 % | 75760 | 92781 | 12208 |
| Proprietary | 29 | 5.80 % | 9841862 | 13901082 | 1886982 |
| Fat Tree | 1 | 0.20 % | 122400 | 131072 | 1280 |
| Custom | 23 | 4.60 % | 13500813 | 15460859 | 1271488 |
| Totals | 500 | 100% | 58930025.59 | 85179949.00 | 7779924 |

Overview of the IBM Blue Gene/L System Architecture
• Design objectives
• Hardware overview
  – System architecture
  – Node architecture
  – Interconnect architecture

Highlights
• A 64K-node highly integrated supercomputer based on system-on-a-chip technology
  – Two ASICs
    • Blue Gene/L compute (BLC), Blue Gene/L Link (BLL)
• Distributed memory, massively parallel processing (MPP) architecture.
• Uses the message passing programming model (MPI).
• 360 Tflops peak performance
• Optimized for cost/performance

Design objectives
• Objective 1: 360-Tflops supercomputer
  – Earth Simulator (Japan, fastest supercomputer from 2002 to 2004): 35.86 Tflops
• Objective 2: power efficiency
  – Performance/rack = performance/watt * watt/rack
    • Watt/rack is a constant of around 20kW
    • Performance/watt determines performance/rack
• Power efficiency:
  – 360 Tflops => 20 megawatts with conventional processors
  – Need low-power processor design (2-10 times better power efficiency)

Design objectives (continued)
• Objective 3: extreme scalability
  – Optimized for cost/performance => use low-power, less powerful processors => need a lot of processors
    • Up to 65536 processors.
  – Interconnect scalability

Blue Gene/L system components

Blue Gene/L Compute ASIC
• 2 PowerPC 440 cores with floating-point enhancements
  – 700MHz
  – Everything of a typical superscalar processor
    • Pipelined microarchitecture with dual instruction fetch and decode, and out-of-order issue, dispatch, execution, and completion, etc.
  – 1 W each through extensive power management

Memory system on a BGL node
• BG/L only supports the distributed memory paradigm.
• No need for efficient support for cache coherence on each node.
  – Coherence enforced by software if needed.
• The two cores operate in one of two modes:
  – Communication coprocessor mode
    • Needs coherence, managed in system level libraries
  – Virtual node mode
    • Memory is physically partitioned (not shared).

Blue Gene/L networks
• Five networks.
  – 100 Mbps Ethernet control network for diagnostics, debugging, and some other things.
  – 1000 Mbps Ethernet for I/O
  – Three high-bandwidth, low-latency networks for data transmission and synchronization.
    • 3-D torus network for point-to-point communication
    • Collective network for global operations
    • Barrier network
• All network logic is integrated in the BG/L node ASIC
  – Memory mapped interfaces from user space

3-D torus network
• Supports point-to-point (p2p) communication
• Link bandwidth 1.4Gb/s, 6 bidirectional links per node (1.2GB/s).
• 64x32x32 torus: diameter 32+16+16=64 hops, worst case hardware latency 6.4us.
• Cut-through routing
• Adaptive routing

Collective network
• Binary tree topology, static routing
• Link bandwidth: 2.8Gb/s
• Maximum hardware latency: 5us
• With arithmetic and logical hardware: can perform integer operations on the data
  – Efficient support for reduce, scan, global sum, and broadcast operations
  – Floating point operations can be done with 2 passes.

Barrier network
• Hardware support for global synchronization.
• 1.5us for a barrier on 64K nodes.

IBM BlueGene/L summary
• Optimize cost/performance
  – Limiting applications.
  – Use low power design
    • Lower frequency, system-on-a-chip
    • Great performance per watt metric
• Scalability support
  – Hardware support for global communication and barrier
  – Low latency, high bandwidth support

• Case 2: InfiniBand architecture
  – Specification (InfiniBand architecture specification release 1.2.1, January 2008/Oct. 2006) available at InfiniBand Trade Association (http://www.infinibandta.org)

• InfiniBand architecture overview
  – Components:
    • Links
    • Channel adaptors
    • Switches
    • Routers
  – The specification allows InfiniBand wide area networks, but InfiniBand is mostly adopted as a system/storage area network.
  – Topology:
    • Irregular
    • Regular: Fat tree
  – Link speed:
    • Single data rate (SDR): 2.5Gbps (1X), 10Gbps (4X), and 30Gbps (12X).
    • Double data rate (DDR): 5Gbps (1X), 20Gbps (4X)
    • Quad data rate (QDR): 40Gbps (4X)

• Layers: somewhat similar to TCP/IP
  – Physical layer
  – Link layer
    • Error detection (CRC checksum)
    • Flow control (credit based)
    • Switching, virtual lanes (VL)
    • Forwarding table computed by the subnet manager
      – Single path deterministic routing (not adaptive)
  – Network layer: across subnets.
    • No use for the cluster environment
  – Transport layer
    • Reliable/unreliable, connection/datagram
  – Verbs: interface between adaptors and OS/Users

• InfiniBand link layer packet format:
  • Local Route Header (LRH): 8 bytes. Used for local routing by switches within an IBA subnet
  • Global Route Header (GRH): 40 bytes. Used for routing between subnets
  • Base Transport Header (BTH): 12 bytes, for IBA transport
  • Extended transport header
    – Reliable datagram extended transport header (RDETH): 4 bytes, just for reliable datagram
    – Datagram extended transport header (DETH): 8 bytes
    – RDMA extended transport header (RETH): 16 bytes
    – Atomic, ACK, Atomic ACK
  • Immediate data extended transport header: 4 bytes, optimized for small packets.
  • Invariant CRC and variant CRC:
    – One CRC covers the fields that do not change in transit (invariant); the other covers the fields that may change (variant).
  • Local Route Header:
    – Switching based on the destination port address (LID)
    – Multipath switching by allocating multiple LIDs to one port

Subnet management
• Initialize the network
  – Discover subnet topology and topology changes, compute the paths, assign LIDs, distribute the routes, configure devices.
  – Related devices and entities
    • Devices: Channel Adapters (CA), Host Channel Adapters, switches, routers
    • Subnet manager (SM): discovering, configuring, activating and managing the subnet
    • A subnet management agent (SMA) in every device generates and responds to control packets (subnet management packets (SMPs)), and configures local components for subnet management
    • The SM exchanges control packets with the SMAs through the subnet management interface (SMI).
• Subnet management phases:
  – Topology discovery: sending direct routed SMPs to every port and processing the responses.
  – Path computation: computing valid paths between each pair of end nodes
  – Path distribution: configuring the forwarding tables

• Base transport header:

• Verbs
  – OS/Users access the adaptor through verbs
  – Communication mechanism: Queue Pair (QP)
    • Users can queue up a set of instructions that the hardware executes.
    • A pair of queues in each QP: one for send, one for receive.
    • Users can post send requests to the send queue and receive requests to the receive queue.
    • Three types of send operations: SEND, RDMA (WRITE, READ, ATOMIC), MEMORY-BINDING
    • One receive operation (matching SEND)
• To communicate:
  – Make system calls to set up everything (open QP, bind QP to port, bind completion queues, connect local QP to remote QP, register memory, etc.).
  – Post send/receive requests as user level instructions.
  – Check completion.
• InfiniBand has an almost perfect software/network interface:
  – The network subsystem realizes most user level functionality.
    • Network supports in-order delivery and fault tolerance.
    • Buffer management is pushed out to the user.
  – OS bypass: user-level access to the network interface. A few machine instructions accomplish the transmission task without involving the OS.
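
The queue pair workflow described under Verbs (set up, post requests, check completion) can be illustrated with a toy in-memory model. This is only a sketch in Python under stated assumptions: it is not the InfiniBand verbs API, and every name in it (`ToyQueuePair`, `Completion`, `run_hardware`, `demo`) is hypothetical. It shows only the SEND/receive matching and the user-owned buffer; RDMA, memory registration, and error handling in real hardware are not modeled.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Completion:
    """One completion-queue entry (hypothetical layout, not the verbs struct)."""
    opcode: str   # "SEND" or "RECV"
    nbytes: int   # payload size in bytes


class ToyQueuePair:
    """Toy in-memory queue pair: a send queue, a receive queue, a completion queue."""

    def __init__(self, name):
        self.name = name
        self.send_queue = deque()        # payloads waiting to be transmitted
        self.recv_queue = deque()        # user-supplied buffers waiting for data
        self.completion_queue = deque()  # finished work requests
        self.peer = None                 # remote QP, set by connect()

    def connect(self, other):
        """Setup step: pair this QP with a remote QP."""
        self.peer = other
        other.peer = self

    def post_recv(self, buffer):
        """Post a receive request; the user owns and supplies the buffer."""
        self.recv_queue.append(buffer)

    def post_send(self, payload):
        """Post a SEND request; nothing moves until the 'hardware' runs."""
        self.send_queue.append(payload)

    def run_hardware(self):
        """Stand-in for the adapter: match each queued SEND with a posted receive, in order."""
        if self.peer is None:
            raise RuntimeError("QP is not connected")
        while self.send_queue:
            if not self.peer.recv_queue:
                raise RuntimeError("no receive buffer posted on the remote QP")
            if len(self.send_queue[0]) > len(self.peer.recv_queue[0]):
                raise ValueError("posted receive buffer is too small")
            payload = self.send_queue.popleft()
            buffer = self.peer.recv_queue.popleft()
            buffer[:len(payload)] = payload  # copy into the user's buffer
            self.completion_queue.append(Completion("SEND", len(payload)))
            self.peer.completion_queue.append(Completion("RECV", len(payload)))

    def poll_completion(self):
        """Return the oldest completion, or None if nothing has finished."""
        return self.completion_queue.popleft() if self.completion_queue else None


def demo():
    """Set up two QPs, post one receive and one send, then check completions."""
    a, b = ToyQueuePair("a"), ToyQueuePair("b")
    a.connect(b)                 # setup (system calls in a real stack)
    buffer = bytearray(16)
    b.post_recv(buffer)          # receiver posts its buffer first
    a.post_send(b"hello")        # sender posts a SEND
    a.run_hardware()             # the adapter does the transfer
    return bytes(buffer[:5]), a.poll_completion(), b.poll_completion()
```

In this model only `connect` corresponds to the setup phase that needs the OS; posting requests and polling for completions touch nothing but the queues, which is the point of OS bypass. The receive buffer is allocated by the caller, mirroring the note that buffer management is pushed out to the user.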