Introduction Andy Wang CIS 5930-03 Computer Systems

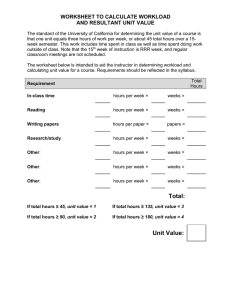

Introduction
Andy Wang
CIS 5930-03
Computer Systems Performance Analysis

Overview of Analysis of Software Systems
• Introduction
• Common mistakes made in system software analysis
  – And how to avoid them
• Selection of techniques and metrics

Introduction
• Why do we care?
• Why is it hard?
• What tools can we use to understand system performance?

Why Do We Care?
• Performance is almost always a key issue in software
• Everyone wants best possible performance
• Cost of achieving performance also key
• Reporting performance is necessary in many publication venues
  – Both academic and industry

Importance of Performance in Research
• Performance is key in CS research
• A solution that doesn’t perform well isn’t a solution at all
• Must prove performance characteristics to a skeptical community

State of Performance Evaluation in the Field
• Generally regarded as poor
• Many systems have little performance data presented
  – Measured by improper criteria
  – Experiments poorly designed
  – Results badly or incorrectly presented
  – Replication not generally respected

What’s the Result?
• Can’t always trust what you read in a research paper
• Authors may have accidentally or intentionally misled you
  – Overstating performance
  – Hiding problems
  – Not answering the important questions

Where Does This Problem Come From?
• Mostly from ignorance of proper methods of measuring and presenting performance
• Abetted by reader’s ignorance of what questions they should be asking

But the Field Is Improving
• People are taking performance measurement more seriously
• Quality of published experiments is increasing
• Yours had better be of high quality, too
  – Publishing is tough
  – Be at the top of the heap of papers

What Do You Need To Know?
• Selection of proper experiment characteristics
• Proper performance measurement techniques
• Proper statistical techniques
• Proper data presentation techniques

Selecting Appropriate Experiment Characteristics
• Evaluation techniques
• Performance metrics
• Workloads

Evaluation Techniques
• What’s the best technique for getting an answer to a performance question?
• Modeling?
• Simulation?
• Experimentation?

The Role of Modeling and Simulation
• Measurement not always best tool
• Modeling might be better
• Simulation might be better
• But that’s not what this class is about
• We will discuss when to use those techniques, though

Performance Metrics
• The criteria used to evaluate the performance of a system
• E.g., response time, transactions per second, bandwidth delivered, etc.
• Choosing the proper metrics is key to a real understanding of system performance

Workloads
• The requests users make on a system
• Without a proper workload, you aren’t measuring what users will experience
• Typical workloads:
  – Types of queries
  – Jobs submitted to an OS
  – Messages sent through a protocol

Proper Performance Measurement Techniques
• You need at least two components to measure performance:
  1. A load generator
     • To apply a workload to the system
  2. A monitor
     • To find out what happened

Proper Statistical Techniques
• Most computer performance measurements not deterministic
• Most performance evaluations weigh effects of different alternatives
• How to separate meaningless variations from vital data in measurements?
• Requires proper statistical techniques

Minimizing Your Work
• Unless you design carefully, you’ll measure a lot more than you need to
• A careful design can save you from doing lots of measurements
  – Should identify critical factors
  – And determine smallest number of experiments that gives sufficiently accurate answer

Proper Data Presentation Techniques
• You’ve got pertinent, statistically accurate data that describes your system
• Now what?
• How to present it
  – Honestly
  – Clearly
  – Convincingly

Why Is Performance Analysis Difficult?
• Because it’s an art - it’s not mechanical
• You can’t just apply a handful of principles and expect good results
• You’ve got to understand your system
• You’ve got to select your measurement techniques and tools properly
• You’ve got to be careful and honest

Bad Example: Ratio Game
• Throughput in transactions per second

| System | Workload 1 | Workload 2 | Average |
|--------|------------|------------|---------|
| A      | 20         | 10         | 15      |
| B      | 10         | 20         | 15      |

Bad Example: Ratio Game
• Throughput normalized to system B

| System | Workload 1 | Workload 2 | Average |
|--------|------------|------------|---------|
| A      | 2          | 0.5        | 1.25    |
| B      | 1          | 1          | 1       |

• Throughput normalized to system A

| System | Workload 1 | Workload 2 | Average |
|--------|------------|------------|---------|
| A      | 1          | 1          | 1       |
| B      | 0.5        | 2          | 1.25    |

Example
• Suppose you’ve built an OS for a special-purpose Internet browsing box
• How well does it perform?
• Indeed, how do you even begin to answer that question?

Starting on an Answer
• What’s the OS supposed to do?
• What demands will be put on it?
• What HW will it work with, and what are that hardware’s characteristics?
• What performance metrics are most important?
  – Response time?
  – Delivered bandwidth?
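
Returning to the ratio game: the sketch below recomputes the three "Average" columns from the throughput figures on those slides, to show how the choice of normalization base decides which system appears to win. The names `throughput`, `normalized`, and `average_ratio` are illustrative only and do not come from the slides.

```python
from statistics import mean

# Throughput in transactions per second, taken from the Ratio Game slides.
throughput = {"A": [20, 10], "B": [10, 20]}


def normalized(base):
    """Divide every system's per-workload throughput by the base system's."""
    return {
        name: [value / ref for value, ref in zip(values, throughput[base])]
        for name, values in throughput.items()
    }


def average_ratio(base):
    """Arithmetic mean of the normalized throughputs for each system."""
    return {name: mean(ratios) for name, ratios in normalized(base).items()}


raw_average = {name: mean(values) for name, values in throughput.items()}

print("raw averages:      ", raw_average)
print("normalized to B:   ", average_ratio("B"))
print("normalized to A:   ", average_ratio("A"))
```

On the raw numbers the two systems tie. Averaging ratios makes whichever system is not the base look 25% better, so the same data supports opposite conclusions.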

Some Common Mistakes in Performance Evaluation
• List is long (nearly infinite)
• We’ll cover the most common ones
…and how to avoid them

No Goals
• If it’s not clear what you’re trying to find out, you won’t find out anything useful
• It’s very hard to design good general-purpose experiments and frameworks
• So know what you want to discover
• Think before you start

Biased Goals
• Don’t set out to show OUR system is better than THEIR system
  – Doing so biases you towards using certain metrics, workloads, and techniques
  – Which may not be the right ones
• Try not to let your prejudices dictate the way you measure a system
• Try to disprove your hypotheses

Unsystematic Approach
• Avoid scattershot approaches
• Work from a plan
• Follow through on the plan
• Otherwise, you’re likely to miss something
  – And everything will take longer, in the end

Analysis Without Understanding the Problem
• Properly defining what you’re trying to do is a major component of the performance evaluation
• Don’t rush into gathering data till you understand what’s important
• This is the most common mistake in this class

Incorrect Performance Metrics
• If you don’t measure what’s important, your results don’t shed much light
  – Example: instruction rates of CPUs with different architectures
• Avoid choosing the metric that’s easy to measure (but isn’t helpful) over the one that’s hard to measure (but captures the performance)

Unrepresentative Workload
• If workload isn’t like what normally happens, results aren’t useful
• E.g., for Web browser, it’s wrong to measure its performance against
  – Just text pages
  – Just pages stored on the local server

Wrong Evaluation Technique
• Not all performance problems are properly understood by measurement
  – E.g., issues of scaling, or testing for rare cases
• Measurement is labor-intensive
• Sometimes hard to measure peak or unusual conditions
• Decide whether you should model/simulate before designing a measurement experiment

Overlooking Important Parameters
• Try to make a complete list of system/workload characteristics that affect performance
• Don’t just guess at a couple of interesting parameters
• Despite your best efforts, you may miss one anyway
  – But the better you understand the system, the less likely you will

Ignoring Significant Factors
• Factor: parameter you vary
• Not all parameters equally important
• More factors mean more experiments
  – But don’t ignore significant ones
  – Give weight to those that users can vary

Inappropriate Experiment Design
• Too few test runs
• Wrong values for parameters
• Interacting factors can complicate proper design of experiments

Inappropriate Level of Detail
• Be sure you’re investigating what’s important
• Examining at too high a level may oversimplify or miss important factors
• Going too low wastes time and may cause you to miss forest for trees

No Analysis
• Raw data isn’t too helpful
• Remember, final result is analysis that describes performance
• Preferably in compact form easily understood by others
  – Especially for non-technical audiences
• Common mistake in this class (trying to satisfy page minimum in final report)

Erroneous Analysis
• Often based on misunderstanding of how statistics should be handled
• Or on not understanding transient effects in experiments
• Or on careless handling of the data
• Many other possible problems

No Sensitivity Analysis
• Rarely is one number or one curve fully descriptive of a system’s performance
• How different will things be if parameters are varied?
• Sensitivity analysis addresses this problem

Ignoring Errors in Input
• Particularly a problem when you have limited control over the parameters
• If you can’t measure an input parameter directly, need to understand any bias in indirect measurement

Improper Treatment of Outliers
• Sometimes particular data points are far outside range of all others
• Sometimes they’re purely statistical
• Sometimes they indicate a true, different behavior of system
• You must determine which are which

Assuming Static Systems
• A previous experiment may be useless if workload changes
• Or if key system parameters change
• Don’t rely blindly on old results
  – E.g. Ousterhout’s old study of file servers
  – Or old results suggesting superiority of diskless workstations

Ignoring Variability
• Often, showing only mean does not paint true picture of a system
• If variability is high, analysis and data presentation must make that clear

Overly Complex Analysis
• Choose simple explanations over complicated ones
• Choose simple experiments over complicated ones
• Choose simple explanations of phenomena
• Choose simple presentations of data

Improper Presentation of Results
• Real performance experiments are run to convince others
  – Or help others make decisions
• If your results don’t convince or assist, you haven’t done any good
• Often, manner of presenting results makes the difference

Ignoring Social Aspects
• Raw graphs, tables, and numbers aren’t always enough for many people
• Need clear explanations
• Present in terms your audience understands
• Be especially careful if going against audience’s prejudices!

Omitting Assumptions and Limitations
• Tell your audience about assumptions and limitations
• Assumptions and limitations are usually necessary in real performance study
• But:
  – Recognize them
  – Be honest about them
  – Try to understand how they affect results

A Systematic Approach To Performance Evaluation
1. State goals and define the system
2. List services and outcomes
3. Select metrics
4. List parameters
5. Select factors to study
6. Select evaluation technique
7. Select workload

Systematic Approach, Cont.
8. Design experiments
9. Analyze and interpret data
10. Present results
Usually, it is necessary to repeat some steps to get necessary answers

State Goals and Define the System
• Decide what you want to find out
• Put boundaries around what you’re going to consider
• This is a critical step, since everything afterwards is built on this foundation

List Services and Outcomes
• What services does system provide?
• What are possible results of requesting each service?
• Understanding these helps determine metrics

Select Metrics
• Metrics: criteria for determining performance
• Usually related to speed, accuracy, availability
• Studying inappropriate metrics makes a performance evaluation useless

List Parameters
• What system characteristics affect performance?
• System parameters
• Workload parameters
• Try to make it complete
• But expect you’ll add to list later

Select Factors To Study
• Factors: parameters that will be varied during study
• Best to choose factors most likely to be key to performance
• Also select levels for each factor
• Keep list and number of levels short
  – Because it directly affects work involved

Select Evaluation Technique
• Analytic modeling?
• Simulation?
• Measurement?
• Or perhaps some combination
• Right choice depends on many issues

Select Workload
• List of service requests to be presented to system
• For measurement, usually workload is list of actual requests to system
• Must be representative of real workloads!

Design Experiments
• How to apply chosen workload?
• How to vary factors to their various levels?
• How to measure metrics?
• All with minimal effort
• Pay attention to interaction of factors

Analyze and Interpret Data
• Data gathered from measurement tend to have a random component
• Must extract the real information from the random noise
• Interpretation is key to getting value from experiment
  – And is not at all mechanical
  – Do this well in your project

Present Results
• Make them easy to understand
  – For the broadest possible audience
• Focus on most important results
• Use graphics
• Give maximum information with minimum presentation
• Make sure you explain actual implications
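
As a rough illustration of the "Analyze and Interpret Data" step, the sketch below summarizes repeated measurements with a mean and a confidence interval rather than a single number. It is only one possible approach and is not taken from the slides. The `response_ms` values are hypothetical, the function name `confidence_interval` is made up for this example, and the interval uses a normal approximation, which is an assumption that tends to understate the interval width when there are only a few runs.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def confidence_interval(samples, confidence=0.95):
    """Return (mean, low, high) for the sample mean.

    Assumption: uses a normal approximation; with few runs a
    Student's t quantile would give a wider, more honest interval.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two measurements")
    centre = mean(samples)
    # Two-sided quantile, e.g. about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    half_width = z * stdev(samples) / sqrt(n)
    return centre, centre - half_width, centre + half_width


# Hypothetical response times in milliseconds from ten repeated runs.
response_ms = [102.0, 98.5, 101.2, 99.8, 100.4, 97.9, 103.1, 100.0, 99.1, 101.5]

avg, low, high = confidence_interval(response_ms)
print(f"mean = {avg:.2f} ms, 95% CI = [{low:.2f}, {high:.2f}] ms")
```

If the intervals for two alternatives overlap heavily, the measured difference may be nothing more than the random component the slide warns about.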