The EM Algorithm for Layered Dynamic Textures


Antoni Chan and Nuno Vasconcelos

Department of Electrical and Computer Engineering

University of California, San Diego

Technical Report SVCL-TR-2005-03

June 3rd, 2005

Abstract

A layered dynamic texture is an extension of the dynamic texture that contains multiple

state processes, and hence can model video with multiple regions of different motion. In this

technical report, we derive the EM algorithm for the two models of layered dynamic textures.

1 Introduction

A layered dynamic texture is an extension of the dynamic texture [1] that can model multiple regions (layers) of different motion. The motion in each layer is abstracted using a time-evolving state process, and each pixel is formed from one of the state processes, which is assigned through a layer assignment variable. In Section 2 and Section 3, we derive the EM algorithm for learning the layered dynamic texture (LDT) and the approximate layered dynamic texture (ALDT), respectively.

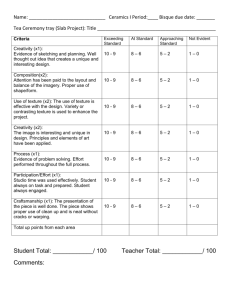

Figure 1: The layered dynamic texture (left), and the approximate layered dynamic texture (right). The observed pixel process $y_i$ is dependent on the underlying state processes $x^{(j)}$. Each pixel $y_i$ is assigned to one of the state processes through the layer assignment variables $z_i$, which are the elements of $Z$.

2 Layered dynamic texture

The model for the layered dynamic texture is shown in Figure 1 (left). Each of the $K$ layers has a Gauss-Markov state process $x^{(j)}$ that abstracts the motion of the layer. The $N$ observed pixels are assigned to one of the layers through the assignment variables $z_i$, which are the elements of $Z$. The linear system equations for this model are

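\begin{equation}
\begin{cases}
x_t^{(j)} = A^{(j)} x_{t-1}^{(j)} + B^{(j)} v_t^{(j)}, & j \in \{1, \cdots, K\} \\
y_{i,t} = C_i^{(z_i)} x_t^{(z_i)} + \sqrt{r^{(z_i)}}\, w_{i,t}, & i \in \{1, \cdots, N\}
\end{cases}
\tag{1}
\end{equation}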

where $C_i^{(j)} \in \mathbb{R}^{1 \times n}$ is the transformation from the hidden state to the observed pixel domain for each pixel $y_i$ and layer $j$, the noise processes are $B^{(j)} v_t^{(j)} \sim_{iid} \mathcal{N}(0, Q^{(j)})$ and $\sqrt{r^{(j)}}\, w_{i,t} \sim_{iid} \mathcal{N}(0, r^{(j)})$, and the initial state is drawn from $x_1^{(j)} \sim \mathcal{N}(\mu^{(j)}, S^{(j)})$. In the remainder of this section, we discuss the modeling of $Z$, derive the joint log-likelihood function, and derive the EM algorithm for layered dynamic textures.
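To make the generative reading of the model concrete, the following is a minimal sketch of drawing a short video from the linear system in (1). It is an illustration under stated assumptions rather than the authors' code: the function names, the 0-based layer indices, and the `S_sqrt` input (any factor with $S^{(j)} = S_{sqrt} S_{sqrt}^T$) are hypothetical, and matrices are plain nested lists so that only the Python standard library is needed.

```python
import math
import random


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def sample_ldt(A, B, C, r, mu, S_sqrt, z, tau, rng):
    """Draw tau frames of N pixels from the LDT equations in (1).

    A[j], B[j]  : n x n matrices of layer j (so that Q = B B^T)
    C[j][i]     : length-n row mapping the state of layer j to pixel i
    r[j]        : pixel noise variance of layer j
    mu[j]       : mean of the initial state of layer j
    S_sqrt[j]   : assumed factor with S = S_sqrt S_sqrt^T (sketch-only input)
    z[i]        : layer index (0-based) assigned to pixel i
    """
    K, n = len(A), len(mu[0])
    # x_1^{(j)} ~ N(mu^{(j)}, S^{(j)})
    x = []
    for j in range(K):
        noise = matvec(S_sqrt[j], [rng.gauss(0.0, 1.0) for _ in range(n)])
        x.append([m + e for m, e in zip(mu[j], noise)])
    frames = []
    for t in range(tau):
        if t > 0:
            # x_t^{(j)} = A^{(j)} x_{t-1}^{(j)} + B^{(j)} v_t^{(j)}
            for j in range(K):
                v = [rng.gauss(0.0, 1.0) for _ in range(n)]
                x[j] = [a + b for a, b in zip(matvec(A[j], x[j]), matvec(B[j], v))]
        # y_{i,t} = C_i^{(z_i)} x_t^{(z_i)} + sqrt(r^{(z_i)}) w_{i,t}
        frame = []
        for i, j in enumerate(z):
            mean = sum(c * s for c, s in zip(C[j][i], x[j]))
            frame.append(mean + math.sqrt(r[j]) * rng.gauss(0.0, 1.0))
        frames.append(frame)
    return frames


# Two layers with scalar states (n = 1) and three pixels.
video = sample_ldt(
    A=[[[0.9]], [[-0.5]]], B=[[[0.1]], [[0.1]]],
    C=[[[1.0], [1.0], [1.0]], [[2.0], [2.0], [2.0]]],
    r=[0.01, 0.01], mu=[[1.0], [1.0]], S_sqrt=[[[0.1]], [[0.1]]],
    z=[0, 0, 1], tau=5, rng=random.Random(0),
)
print(len(video), len(video[0]))
```

Pixels that share a layer index read from the same state sequence, which is what couples their motion in the LDT.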

2.1 Markov Random Field

In order to ensure spatial consistency of each layer, the elements of the layer assignment $Z$ are modeled as a Markov Random Field (MRF) [2], given by the distribution

\begin{equation}
p(Z) = \frac{1}{\mathcal{Z}} \prod_{i} \psi_i(z_i) \prod_{(i,j) \in E} \psi_{i,j}(z_i, z_j)
\tag{2}
\end{equation}

where $E$ is the set of edges in the MRF grid, $\psi_i$ and $\psi_{i,j}$ are the potential functions, and $\mathcal{Z}$ is the normalization constant (partition function) that makes the distribution sum to 1. The potential functions $\psi_i$ and $\psi_{i,j}$ are

\begin{equation}
\psi_i(z_i) =
\begin{cases}
\alpha_1, & z_i = 1 \\
\;\vdots & \;\vdots \\
\alpha_K, & z_i = K
\end{cases}
\qquad
\psi_{i,j}(z_i, z_j) =
\begin{cases}
\gamma_1, & z_i = z_j \\
\gamma_2, & z_i \neq z_j
\end{cases}
\tag{3}
\end{equation}

which encode, respectively, a prior likelihood for each layer and a preference for neighboring pixels to belong to the same layer. While the parameters of the potential functions could be learned for each model, we instead treat them as constants, which can be obtained by estimating their values over a corpus of manually segmented images.
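As an illustration of how the potentials in (2) and (3) score a labeling, the sketch below evaluates the unnormalized log-prior of a small label grid. The 4-connected neighborhood, the 0-based layer indices, and the function name are assumptions of this example; the report only specifies an MRF grid with edge set $E$.

```python
import math


def mrf_log_potential(labels, alpha, gamma_same, gamma_diff):
    """Unnormalized log p(Z) from Eqs. (2)-(3) on a 4-connected grid.

    labels     : 2-D list of layer indices in {0, ..., K-1}
    alpha      : list of K self potentials (alpha_1, ..., alpha_K)
    gamma_same : pairwise potential when neighbours agree (gamma_1)
    gamma_diff : pairwise potential when neighbours differ (gamma_2)
    The partition function is omitted, since it does not depend on the labels.
    """
    rows, cols = len(labels), len(labels[0])
    total = 0.0
    for u in range(rows):
        for v in range(cols):
            total += math.log(alpha[labels[u][v]])
            # Visit the right and down neighbours so each edge is counted once.
            for du, dv in ((0, 1), (1, 0)):
                uu, vv = u + du, v + dv
                if uu < rows and vv < cols:
                    same = labels[u][v] == labels[uu][vv]
                    total += math.log(gamma_same if same else gamma_diff)
    return total


smooth = mrf_log_potential([[0, 0], [0, 0]], [0.5, 0.5], 0.9, 0.1)
checker = mrf_log_potential([[0, 1], [1, 0]], [0.5, 0.5], 0.9, 0.1)
print(smooth > checker)
```

With $\gamma_1 > \gamma_2$, the uniform labeling scores higher than the checkerboard, which is the spatial-consistency preference described above.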

2.2 Log-likelihood function

The joint log-likelihood factors as

\begin{align}
\ell(X, Y, Z) &= \log p(X, Y, Z) \tag{4} \\
&= \log p(Y|X, Z) + \log p(X) + \log p(Z) \tag{5} \\
&= \sum_{i,j} z_i^{(j)} \log p(y_i | x^{(j)}, z_i = j) + \sum_{j} \log p(x^{(j)}) + \log p(Z) \tag{6} \\
&= \sum_{i,j} z_i^{(j)} \sum_{t=1}^{\tau} \log p(y_{i,t} | x_t^{(j)}, z_i = j) + \sum_{j} \left( \sum_{t=2}^{\tau} \log p(x_t^{(j)} | x_{t-1}^{(j)}) + \log p(x_1^{(j)}) \right) + \log p(Z) \tag{7}
\end{align}

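where $z_i^{(j)}$ is the indicator variable for $z_i = j$. Substituting for the probability distributions and dropping the constant terms, we have

\begin{align}
\ell(X, Y, Z) = {} & -\frac{1}{2} \sum_{i,j} z_i^{(j)} \sum_{t=1}^{\tau} \left( \left\| y_{i,t} - C_i^{(j)} x_t^{(j)} \right\|^2_{r^{(j)}} + \log r^{(j)} \right) \nonumber \\
& -\frac{1}{2} \sum_{j} \left( \sum_{t=2}^{\tau} \left( \left\| x_t^{(j)} - A^{(j)} x_{t-1}^{(j)} \right\|^2_{Q^{(j)}} + \log \left| Q^{(j)} \right| \right) + \left\| x_1^{(j)} - \mu^{(j)} \right\|^2_{S^{(j)}} + \log \left| S^{(j)} \right| \right) + \log p(Z) \tag{8} \\
= {} & -\frac{1}{2} \sum_{i,j} z_i^{(j)} \sum_{t=1}^{\tau} \frac{1}{r^{(j)}} \left( y_{i,t}^2 - 2 C_i^{(j)} x_t^{(j)} y_{i,t} + \operatorname{tr}\left( C_i^{(j)} x_t^{(j)} (x_t^{(j)})^T (C_i^{(j)})^T \right) \right) - \frac{\tau}{2} \sum_{i,j} z_i^{(j)} \log r^{(j)} \nonumber \\
& -\frac{1}{2} \sum_{j} \sum_{t=2}^{\tau} \operatorname{tr}\left( (Q^{(j)})^{-1} \left( x_t^{(j)} (x_t^{(j)})^T - x_t^{(j)} (x_{t-1}^{(j)})^T (A^{(j)})^T - A^{(j)} x_{t-1}^{(j)} (x_t^{(j)})^T + A^{(j)} x_{t-1}^{(j)} (x_{t-1}^{(j)})^T (A^{(j)})^T \right) \right) - \frac{\tau - 1}{2} \sum_{j} \log \left| Q^{(j)} \right| \nonumber \\
& -\frac{1}{2} \sum_{j} \operatorname{tr}\left( (S^{(j)})^{-1} \left( x_1^{(j)} (x_1^{(j)})^T - x_1^{(j)} (\mu^{(j)})^T - \mu^{(j)} (x_1^{(j)})^T + \mu^{(j)} (\mu^{(j)})^T \right) \right) - \frac{1}{2} \sum_{j} \log \left| S^{(j)} \right| + \log p(Z) \tag{9}
\end{align}

where $\|x\|^2_{\Sigma} = x^T \Sigma^{-1} x$.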

2.3 Learning with EM

The parameters of the model are learned using the EM algorithm [3], which is an iterative algorithm with the following steps

• E-Step: $Q(\Theta; \hat{\Theta}) = E_{X,Z|Y;\hat{\Theta}}(\ell(X, Y, Z; \Theta))$

• M-Step: $\hat{\Theta}' = \arg\max_{\Theta} Q(\Theta; \hat{\Theta})$

In the remainder of this section, we derive the E and M steps for the layered dynamic texture.

2.3.1 E-Step

Looking at the log-likelihood function (8), we see that the E-step requires the computation of the following conditional expectations:

\begin{equation}
\begin{aligned}
\hat{x}_t^{(j)} &= E_{X|Y}(x_t^{(j)}) & \hat{x}_{i,t}^{(j)} &= E_{Z,X|Y}(z_i^{(j)} x_t^{(j)}) \\
\hat{P}_{t,t}^{(j)} &= E_{X|Y}(x_t^{(j)} (x_t^{(j)})^T) & \hat{P}_{i|t,t}^{(j)} &= E_{Z,X|Y}(z_i^{(j)} x_t^{(j)} (x_t^{(j)})^T) \\
\hat{P}_{t,t-1}^{(j)} &= E_{X|Y}(x_t^{(j)} (x_{t-1}^{(j)})^T) & \hat{z}_i^{(j)} &= E_{Z|Y}(z_i^{(j)})
\end{aligned}
\tag{10}
\end{equation}

Unfortunately these expectations are intractable to compute in closed-form, since it is not known

which layer each pixel yi is assigned to, and hence we must marginalize over the configurations of

Z.

2.3.2 Inference using the Gibbs sampler

Using the Gibbs sampler [4], the expectations can be approximated by averaging over samples from the posterior distribution $p(X, Z|Y)$. Noting that it is much easier to sample conditionally on the full collections of variables $X$ and $Z$ than on any individual $x^{(j)}$ or $z_i$, the Gibbs sampler is as follows

1. Initialize X ∼ p(X)

2. Repeat

(a) Sample Z ∼ p(Z|X, Y )

(b) Sample X ∼ p(X|Y, Z)

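The distribution of layer assignments $p(Z|X, Y)$ is given by

\begin{align}
p(Z|X, Y) &= \frac{p(Y, X|Z)\, p(Z)}{p(Y, X)} \tag{11} \\
&= \frac{p(Y|X, Z)\, p(X|Z)\, p(Z)}{p(Y|X)\, p(X)} \tag{12} \\
&\propto p(Y|X, Z)\, p(Z) \tag{13} \\
&\propto p(Z) \prod_{i} p(y_i | X, z_i) \tag{14}
\end{align}

where (13) follows because the state processes are a priori independent of the layer assignments, i.e. $p(X|Z) = p(X)$, and the factors that do not depend on $Z$ are dropped. If the $z_i$ are modeled as independent multinomials, then sampling $z_i$ involves sampling from the posterior of the multinomial, $p(z_i | X, y_i) \propto p(y_i | X, z_i)\, p(z_i)$. If $Z$ is modeled as an MRF, then the $p(y_i | X, z_i)$ terms are absorbed into the self potentials $\psi_i$ of the MRF, and sampling can be done using the MCMC algorithm [2].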
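One common way to realize the MRF sampling step is a single-site Gibbs update, in which each $z_i$ is redrawn from its conditional given its neighbors and the data. The sketch below shows one such update. The function names are hypothetical, the per-layer log-likelihoods $\log p(y_i | X, z_i = j)$ are assumed to be precomputed, and the pairwise potentials follow (3) with 0-based layer indices.

```python
import math
import random


def label_posterior(loglik, neighbor_labels, alpha, gamma_same, gamma_diff):
    """Conditional p(z_i = j | neighbours, X, y_i) for one pixel.

    loglik[j] is log p(y_i | X, z_i = j), assumed to be precomputed.
    """
    logp = []
    for j in range(len(loglik)):
        lp = loglik[j] + math.log(alpha[j])        # likelihood absorbed into psi_i
        for zk in neighbor_labels:                 # pairwise potentials psi_{i,k}
            lp += math.log(gamma_same if zk == j else gamma_diff)
        logp.append(lp)
    top = max(logp)                                # subtract max for stability
    weights = [math.exp(lp - top) for lp in logp]
    total = sum(weights)
    return [w / total for w in weights]


def sample_label(probs, rng):
    """Draw a layer index from the conditional distribution."""
    return rng.choices(range(len(probs)), weights=probs)[0]


rng = random.Random(0)
probs = label_posterior([0.0, 0.0], [0, 0, 0, 0], [0.5, 0.5], 0.9, 0.1)
z_i = sample_label(probs, rng)
```

Sweeping this update over all pixels yields one sample of $Z$ for step (a) of the sampler; working in the log domain avoids underflow when the likelihood terms are products over many frames.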

The state processes are independent of each other when conditioned on the video and the pixel assignments, i.e.

\begin{equation}
p(X|Y, Z) = \prod_{j} p(x^{(j)} | Y, Z) = \prod_{j} p(x^{(j)} | Y_j)
\tag{15}
\end{equation}

where $Y_j = \{y_i | z_i = j\}$ are all the pixels that are assigned to layer $j$. Using the Markovian structure of the state process, the joint probability factors into the conditional probabilities,

\begin{equation}
p(x_1^{(j)}, \cdots, x_\tau^{(j)} | Y_j) = p(x_1^{(j)} | Y_j) \prod_{t=2}^{\tau} p(x_t^{(j)} | x_{t-1}^{(j)}, Y_j)
\tag{16}
\end{equation}

The parameters of each conditional Gaussian are obtained with the conditional Gaussian theorem [5],

\begin{align}
E(x_t^{(j)} | x_{t-1}^{(j)}, Y_j) &= \mu_t^{(j)} + \Sigma_{t,t-1}^{(j)} (\Sigma_{t-1,t-1}^{(j)})^{-1} (x_{t-1}^{(j)} - \mu_{t-1}^{(j)}) \tag{17} \\
\operatorname{cov}(x_t^{(j)} | x_{t-1}^{(j)}, Y_j) &= \Sigma_{t,t}^{(j)} - \Sigma_{t,t-1}^{(j)} (\Sigma_{t-1,t-1}^{(j)})^{-1} \Sigma_{t-1,t}^{(j)} \tag{18}
\end{align}

where the marginal mean, marginal covariance, and one-step covariance are

\begin{align}
\mu_t^{(j)} &= E(x_t^{(j)} | Y_j) \tag{19} \\
\Sigma_{t,t}^{(j)} &= \operatorname{cov}(x_t^{(j)} | Y_j) \tag{20} \\
\Sigma_{t,t-1}^{(j)} &= \operatorname{cov}(x_t^{(j)}, x_{t-1}^{(j)} | Y_j) \tag{21}
\end{align}

which can be obtained using the Kalman smoothing filter [7, 8]. The sequence $x^{(j)} | Y_j$ is then sampled by drawing $x_1^{(j)} | Y_j$, followed by drawing $x_2^{(j)} | x_1^{(j)}, Y_j$, and so on.
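The sequential draw described above can be sketched for a scalar state ($n = 1$), where the matrix inverse in (17) and (18) reduces to a division. This is an illustrative sketch, not the authors' implementation: the function names are hypothetical, and the smoothed moments are assumed to come from a Kalman smoother that is not shown.

```python
import math
import random


def conditional_params(mu_t, mu_prev, var_t, var_prev, cov_lag, x_prev):
    """Mean and variance of x_t | x_{t-1}, Y_j from Eqs. (17)-(18), scalar case."""
    gain = cov_lag / var_prev
    mean = mu_t + gain * (x_prev - mu_prev)
    var = var_t - gain * cov_lag
    return mean, var


def sample_state_sequence(mu, var, cov_lag, rng):
    """Draw x_1, ..., x_tau sequentially from the smoothed moments.

    mu[t], var[t] : smoothed mean and variance of x_t
    cov_lag[t]    : smoothed covariance of (x_t, x_{t-1}); cov_lag[0] is unused
    """
    x = [rng.gauss(mu[0], math.sqrt(var[0]))]
    for t in range(1, len(mu)):
        mean, v = conditional_params(mu[t], mu[t - 1], var[t], var[t - 1],
                                     cov_lag[t], x[-1])
        x.append(rng.gauss(mean, math.sqrt(max(v, 0.0))))  # guard against round-off
    return x


xs = sample_state_sequence([0.0, 0.5, 0.8], [1.0, 0.9, 0.9], [0.0, 0.6, 0.6],
                           random.Random(0))
```

For $n > 1$ the same recursion applies with matrix products and a Cholesky factor of the conditional covariance in place of the square root.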

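2.3.3 M-Step

The optimization in the M-Step is obtained by taking the partial derivative of the Q function with respect to each of the parameters and setting it to zero. For convenience, we first define the following quantities,

\begin{equation}
\begin{aligned}
\phi_1^{(j)} &= \sum_{t=1}^{\tau-1} \hat{P}_{t,t}^{(j)} & \phi_2^{(j)} &= \sum_{t=2}^{\tau} \hat{P}_{t,t}^{(j)} & \Phi^{(j)} &= \sum_{t=1}^{\tau} \hat{P}_{t,t}^{(j)} \\
\Phi_i^{(j)} &= \sum_{t=1}^{\tau} \hat{P}_{i|t,t}^{(j)} & \psi^{(j)} &= \sum_{t=2}^{\tau} \hat{P}_{t,t-1}^{(j)} & \Gamma_i^{(j)} &= \sum_{t=1}^{\tau} y_{i,t}\, \hat{x}_{i,t}^{(j)} \\
\hat{N}_j &= \sum_{i} \hat{z}_i^{(j)} & \Lambda_i^{(j)} &= \sum_{t=1}^{\tau} \hat{z}_i^{(j)} y_{i,t}^2
\end{aligned}
\tag{22}
\end{equation}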

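The parameter updates are

• Transition matrix:

\begin{align}
\frac{\partial Q}{\partial A^{(j)}} &= -\frac{1}{2} \sum_{t=2}^{\tau} \left( -2 (Q^{(j)})^{-1} \hat{P}_{t,t-1}^{(j)} + 2 (Q^{(j)})^{-1} A^{(j)} \hat{P}_{t-1,t-1}^{(j)} \right) \tag{23} \\
\Rightarrow A^{(j)*} &= \left( \sum_{t=2}^{\tau} \hat{P}_{t,t-1}^{(j)} \right) \left( \sum_{t=2}^{\tau} \hat{P}_{t-1,t-1}^{(j)} \right)^{-1} = \psi^{(j)} (\phi_1^{(j)})^{-1} \tag{24}
\end{align}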

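• State noise covariance:

\begin{align}
\frac{\partial Q}{\partial Q^{(j)}} &= -\frac{1}{2} \sum_{t=2}^{\tau} \left[ -(Q^{(j)})^{-T} \left( \hat{P}_{t,t}^{(j)} - \hat{P}_{t,t-1}^{(j)} (A^{(j)})^T - A^{(j)} \hat{P}_{t-1,t}^{(j)} + A^{(j)} \hat{P}_{t-1,t-1}^{(j)} (A^{(j)})^T \right) (Q^{(j)})^{-T} \right] - \frac{\tau - 1}{2} (Q^{(j)})^{-T} \tag{25} \\
\Rightarrow Q^{(j)*} &= \frac{1}{\tau - 1} \left( \sum_{t=2}^{\tau} \hat{P}_{t,t}^{(j)} - A^{(j)*} \left( \sum_{t=2}^{\tau} \hat{P}_{t,t-1}^{(j)} \right)^T \right) = \frac{1}{\tau - 1} \left( \phi_2^{(j)} - A^{(j)*} (\psi^{(j)})^T \right) \tag{26}
\end{align}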

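• Initial state:

\begin{align}
\frac{\partial Q}{\partial \mu^{(j)}} &= -\frac{1}{2} (S^{(j)})^{-1} \left( -2 \hat{x}_1^{(j)} + 2 \mu^{(j)} \right) \tag{27} \\
\Rightarrow \mu^{(j)*} &= \hat{x}_1^{(j)} \tag{28}
\end{align}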

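• Initial covariance:

\begin{align}
\frac{\partial Q}{\partial S^{(j)}} &= -\frac{1}{2} \left[ -(S^{(j)})^{-T} \left( \hat{P}_{1,1}^{(j)} - \hat{x}_1^{(j)} (\mu^{(j)})^T - \mu^{(j)} (\hat{x}_1^{(j)})^T + \mu^{(j)} (\mu^{(j)})^T \right) (S^{(j)})^{-T} + (S^{(j)})^{-T} \right] \tag{29} \\
\Rightarrow S^{(j)*} &= \hat{P}_{1,1}^{(j)} - \mu^{(j)*} (\mu^{(j)*})^T \tag{30}
\end{align}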

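• Transformation matrix:

\begin{align}
\frac{\partial Q}{\partial C_i^{(j)}} &= -\frac{1}{2} \sum_{t} \left( -2 (r^{(j)})^{-1} y_{i,t} (\hat{x}_{i,t}^{(j)})^T + 2 (r^{(j)})^{-1} C_i^{(j)} \hat{P}_{i|t,t}^{(j)} \right) \tag{31} \\
\Rightarrow C_i^{(j)*} &= \left( \sum_{t} y_{i,t}\, \hat{x}_{i,t}^{(j)} \right)^T \left( \sum_{t} \hat{P}_{i|t,t}^{(j)} \right)^{-1} = (\Gamma_i^{(j)})^T (\Phi_i^{(j)})^{-1} \tag{32}
\end{align}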

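• Pixel noise variance:

\begin{align}
\frac{\partial Q}{\partial r^{(j)}} &= -\frac{1}{2} \sum_{i,t} \left[ -(r^{(j)})^{-2} \left( \hat{z}_i^{(j)} y_{i,t}^2 - 2 C_i^{(j)} \hat{x}_{i,t}^{(j)} y_{i,t} + C_i^{(j)} \hat{P}_{i|t,t}^{(j)} (C_i^{(j)})^T \right) \right] - \frac{\tau \hat{N}_j}{2} (r^{(j)})^{-1} \tag{33} \\
\Rightarrow r^{(j)*} &= \frac{1}{\tau \hat{N}_j} \left( \sum_{i,t} \hat{z}_i^{(j)} y_{i,t}^2 - 2 \sum_{i,t} C_i^{(j)} \hat{x}_{i,t}^{(j)} y_{i,t} + \sum_{i,t} C_i^{(j)} \hat{P}_{i|t,t}^{(j)} (C_i^{(j)})^T \right) \tag{34} \\
&= \frac{1}{\tau \hat{N}_j} \sum_{i} \left( \Lambda_i^{(j)} - C_i^{(j)*} \Gamma_i^{(j)} \right) \tag{35}
\end{align}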

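In summary, the M-step is

\begin{equation}
\begin{aligned}
A^{(j)*} &= \psi^{(j)} (\phi_1^{(j)})^{-1} & Q^{(j)*} &= \frac{1}{\tau - 1} \left( \phi_2^{(j)} - A^{(j)*} (\psi^{(j)})^T \right) \\
\mu^{(j)*} &= \hat{x}_1^{(j)} & S^{(j)*} &= \hat{P}_{1,1}^{(j)} - \mu^{(j)*} (\mu^{(j)*})^T \\
C_i^{(j)*} &= (\Gamma_i^{(j)})^T (\Phi_i^{(j)})^{-1} & r^{(j)*} &= \frac{1}{\tau \hat{N}_j} \sum_{i=1}^{N} \left( \Lambda_i^{(j)} - C_i^{(j)*} \Gamma_i^{(j)} \right)
\end{aligned}
\tag{36}
\end{equation}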
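The updates in (36) can be sketched for a single layer with a scalar state ($n = 1$), where every matrix inverse becomes a division. The function names and argument layout are hypothetical, and the inputs are assumed to be the E-step expectations of (10) for one layer $j$ and one pixel $i$.

```python
def m_step_state(x_hat, P, P_lag):
    """State-parameter updates of (36) for one layer, scalar state.

    x_hat[t] : E[x_t | Y]
    P[t]     : E[x_t^2 | Y]
    P_lag[t] : E[x_t x_{t-1} | Y]; P_lag[0] is unused
    """
    tau = len(x_hat)
    phi1 = sum(P[:-1])       # sum over t = 1, ..., tau-1
    phi2 = sum(P[1:])        # sum over t = 2, ..., tau
    psi = sum(P_lag[1:])     # sum over t = 2, ..., tau
    A = psi / phi1
    Q = (phi2 - A * psi) / (tau - 1)
    mu = x_hat[0]
    S = P[0] - mu * mu
    return A, Q, mu, S


def m_step_pixel(y, x_hat_i, P_i, z_hat_i):
    """C_i update and the pixel's term (Lambda_i - C_i Gamma_i) of r, scalar state.

    x_hat_i[t] : E[z_i x_t | Y]
    P_i[t]     : E[z_i x_t^2 | Y]
    z_hat_i    : E[z_i | Y]
    """
    gamma_i = sum(yt * xt for yt, xt in zip(y, x_hat_i))
    phi_i = sum(P_i)
    lambda_i = sum(z_hat_i * yt * yt for yt in y)
    C_i = gamma_i / phi_i
    return C_i, lambda_i - C_i * gamma_i


# Noise-free sanity check: x_t = 0.5 x_{t-1} and y_t = 3 x_t.
x = [2.0, 1.0, 0.5, 0.25]
A, Q, mu, S = m_step_state(x, [v * v for v in x],
                           [0.0] + [x[t] * x[t - 1] for t in range(1, 4)])
C_i, resid = m_step_pixel([3.0 * v for v in x], x, [v * v for v in x], 1.0)
```

Summing the second return value of `m_step_pixel` over all pixels and dividing by $\tau \hat{N}_j$ gives $r^{(j)*}$.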

3 Approximate layered dynamic texture

We now consider a slightly different model, shown in Figure 1 (right). Each pixel $y_i$ is associated with its own state process $x_i$, whose parameters are chosen using the layer assignment $z_i$. The new model is given by the following linear system equations

\begin{equation}
\begin{cases}
x_{i,t} = A^{(z_i)} x_{i,t-1} + B^{(z_i)} v_{i,t} \\
y_{i,t} = C_i^{(z_i)} x_{i,t} + \sqrt{r^{(z_i)}}\, w_{i,t}
\end{cases}
, \quad i \in \{1, \cdots, N\}
\tag{37}
\end{equation}

where the noise processes are $w_{i,t} \sim_{iid} \mathcal{N}(0, 1)$ and $v_{i,t} \sim_{iid} \mathcal{N}(0, I_n)$. The log-likelihood factors as

\begin{equation}
\log p(X, Y, Z) = \log p(Y|X, Z) + \log p(X|Z) + \log p(Z)
\tag{38}
\end{equation}

If the layer assignments $z_i$ are drawn independently from a common multinomial distribution, then the model is similar to a mixture of dynamic textures [6]. The only difference is that $C_i^{(j)}$ is different for each pixel and each layer, rather than for each state. Again, we will model $Z$ as an MRF grid as in the previous section.

3.1 Joint log-likelihood

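The joint log-likelihood of the model is given by

\begin{align}
\ell(X, Y, Z) &= \log p(Y|X, Z) + \log p(X|Z) + \log p(Z) \tag{39} \\
&= \log \prod_{i,j} p(y_i | x_i, z_i = j)^{z_i^{(j)}} + \log \prod_{i,j} p(x_i | z_i = j)^{z_i^{(j)}} + \log p(Z) \tag{40} \\
&= \sum_{i,j} \sum_{t=1}^{\tau} z_i^{(j)} \log p(y_{i,t} | x_{i,t}, z_i = j) + \sum_{i,j} z_i^{(j)} \left( \log p(x_{i,1} | z_i = j) + \sum_{t=2}^{\tau} \log p(x_{i,t} | x_{i,t-1}, z_i = j) \right) + \log p(Z) \tag{41}
\end{align}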

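Substituting for each of the distributions and ignoring the constant terms, we have

\begin{align}
\ell(X, Y, Z) = {} & -\frac{1}{2} \sum_{i,j} \sum_{t=1}^{\tau} z_i^{(j)} \left( \log r^{(j)} + \left\| y_{i,t} - C_i^{(j)} x_{i,t} \right\|^2_{r^{(j)}} \right) \nonumber \\
& -\frac{1}{2} \sum_{i,j} \sum_{t=2}^{\tau} z_i^{(j)} \left( \log \left| Q^{(j)} \right| + \left\| x_{i,t} - A^{(j)} x_{i,t-1} \right\|^2_{Q^{(j)}} \right) \nonumber \\
& -\frac{1}{2} \sum_{i,j} z_i^{(j)} \left( \log \left| S^{(j)} \right| + \left\| x_{i,1} - \mu^{(j)} \right\|^2_{S^{(j)}} \right) + \log p(Z) \tag{42} \\
= {} & -\frac{1}{2} \sum_{i,j} z_i^{(j)} \sum_{t=1}^{\tau} \frac{1}{r^{(j)}} \left( y_{i,t}^2 - 2 C_i^{(j)} x_{i,t} y_{i,t} + \operatorname{tr}\left( C_i^{(j)} x_{i,t} x_{i,t}^T (C_i^{(j)})^T \right) \right) - \frac{\tau}{2} \sum_{i,j} z_i^{(j)} \log r^{(j)} \nonumber \\
& -\frac{1}{2} \sum_{i,j} z_i^{(j)} \sum_{t=2}^{\tau} \operatorname{tr}\left( (Q^{(j)})^{-1} \left( x_{i,t} x_{i,t}^T - x_{i,t} x_{i,t-1}^T (A^{(j)})^T - A^{(j)} x_{i,t-1} x_{i,t}^T + A^{(j)} x_{i,t-1} x_{i,t-1}^T (A^{(j)})^T \right) \right) - \frac{\tau - 1}{2} \sum_{i,j} z_i^{(j)} \log \left| Q^{(j)} \right| \nonumber \\
& -\frac{1}{2} \sum_{i,j} z_i^{(j)} \operatorname{tr}\left( (S^{(j)})^{-1} \left( x_{i,1} x_{i,1}^T - x_{i,1} (\mu^{(j)})^T - \mu^{(j)} x_{i,1}^T + \mu^{(j)} (\mu^{(j)})^T \right) \right) - \frac{1}{2} \sum_{i,j} z_i^{(j)} \log \left| S^{(j)} \right| + \log p(Z) \tag{43}
\end{align}

where $\|x\|^2_{\Sigma} = x^T \Sigma^{-1} x$.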

3.2 Learning with EM

The parameters of the simplified model are learned using the EM algorithm. In the remainder of this section we derive the E and M steps for the ALDT model.

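3.2.1 E-Step

The E-step for the ALDT is very similar to that of the mixture of dynamic textures [6]. The expectations that need to be computed are

\begin{align}
E_{X,Z|Y}(z_i^{(j)} x_{i,t}) &= \hat{z}_i^{(j)} \hat{x}_{i,t}^{(j)} \tag{44} \\
E_{X,Z|Y}(z_i^{(j)} x_{i,t} (x_{i,t})^T) &= \hat{z}_i^{(j)} \hat{P}_{i|t,t}^{(j)} \tag{45} \\
E_{X,Z|Y}(z_i^{(j)} x_{i,t} (x_{i,t-1})^T) &= \hat{z}_i^{(j)} \hat{P}_{i|t,t-1}^{(j)} \tag{46}
\end{align}

where

\begin{align}
\hat{x}_{i,t}^{(j)} &= E_{x_i^{(j)} | y_i, z_i^{(j)} = 1}\left( x_{i,t}^{(j)} \right) \tag{47} \\
\hat{P}_{i|t,t}^{(j)} &= E_{x_i^{(j)} | y_i, z_i^{(j)} = 1}\left( x_{i,t}^{(j)} (x_{i,t}^{(j)})^T \right) \tag{48} \\
\hat{P}_{i|t,t-1}^{(j)} &= E_{x_i^{(j)} | y_i, z_i^{(j)} = 1}\left( x_{i,t}^{(j)} (x_{i,t-1}^{(j)})^T \right) \tag{49} \\
\hat{z}_i^{(j)} &= p(z_i = j | Y) \tag{50}
\end{align}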

The first three terms can be computed using the Kalman smoothing filter [7, 8]. The posterior of $z_i$ can be estimated by averaging over samples drawn from the full MRF posterior $p(Z|Y)$ using Markov-Chain Monte Carlo (MCMC) [2],

\begin{align}
p(Z|Y) &\propto p(Y|Z)\, p(Z) \tag{51} \\
&= \prod_{i} p(y_i | z_i)\, p(Z) \tag{52} \\
&\propto \prod_{i} p(y_i | z_i)\, \psi_i(z_i) \prod_{(i,j) \in E} \psi_{i,j}(z_i, z_j) \tag{53}
\end{align}

where $p(y_i | z_i)$ is computed using the innovations of the Kalman filter [7].
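The innovations form of the likelihood can be sketched for a scalar state as follows. This is a minimal illustration under stated assumptions: the function name and argument names are hypothetical, and the scalars `a`, `q`, `c`, `r`, `mu`, `s` stand in for $A^{(j)}$, $Q^{(j)}$, $C_i^{(j)}$, $r^{(j)}$, $\mu^{(j)}$, $S^{(j)}$ of one candidate layer.

```python
import math


def innovations_loglik(y, a, q, c, r, mu, s):
    """log p(y_i | z_i = j) for one pixel under a scalar-state model.

    The log-likelihood is accumulated from the Kalman filter innovations
    e_t = y_t - c * E[x_t | y_1..y_{t-1}] and their variances f_t.
    """
    m, p = mu, s                              # predicted mean / variance of x_1
    ll = 0.0
    for t, yt in enumerate(y):
        if t > 0:
            m, p = a * m, a * a * p + q       # time update (prediction)
        e = yt - c * m                        # innovation
        f = c * c * p + r                     # innovation variance
        ll += -0.5 * (math.log(2.0 * math.pi * f) + e * e / f)
        k = p * c / f                         # Kalman gain
        m, p = m + k * e, (1.0 - k * c) * p   # measurement update
    return ll


# Compare two candidate layers for the same pixel trajectory.
y = [1.0, 0.9, 0.81]
logliks = [innovations_loglik(y, a, 0.01, 1.0, 0.01, 1.0, 0.01) for a in (0.9, -0.9)]
```

The resulting per-layer values play the role of $\log p(y_i | z_i = j)$ in (53), so they can be added to the log self potentials before sampling $Z$.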

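3.2.2 M-Step

Most of the M-step is similar to that of the mixture of dynamic textures, except that $C_i^{(j)}$ is different for each pixel and layer. For convenience, we define the quantities,

\begin{equation}
\begin{aligned}
\Phi^{(j)} &= \sum_{i=1}^{N} \hat{z}_i^{(j)} \sum_{t=1}^{\tau} \hat{P}_{i|t,t}^{(j)} & \phi_1^{(j)} &= \sum_{i=1}^{N} \hat{z}_i^{(j)} \sum_{t=1}^{\tau-1} \hat{P}_{i|t,t}^{(j)} \\
\phi_2^{(j)} &= \sum_{i=1}^{N} \hat{z}_i^{(j)} \sum_{t=2}^{\tau} \hat{P}_{i|t,t}^{(j)} & \psi^{(j)} &= \sum_{i=1}^{N} \hat{z}_i^{(j)} \sum_{t=2}^{\tau} \hat{P}_{i|t,t-1}^{(j)} \\
\Gamma_i^{(j)} &= \hat{z}_i^{(j)} \sum_{t=1}^{\tau} y_{i,t}\, \hat{x}_{i,t}^{(j)} & \Phi_i^{(j)} &= \hat{z}_i^{(j)} \sum_{t=1}^{\tau} \hat{P}_{i|t,t}^{(j)}
\end{aligned}
\tag{54}
\end{equation}

and $\hat{N}_j = \sum_{i} \hat{z}_i^{(j)}$ and $\Lambda_i^{(j)} = \sum_{t=1}^{\tau} \hat{z}_i^{(j)} y_{i,t}^2$.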

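The updates for the parameters are

• State Transition Matrix

\begin{align}
\frac{\partial Q}{\partial A^{(j)}} = -\frac{1}{2} \sum_{i} \sum_{t=2}^{\tau} \hat{z}_i^{(j)} \left[ -2 (Q^{(j)})^{-1} \hat{P}_{i|t,t-1}^{(j)} + 2 (Q^{(j)})^{-1} A^{(j)} \hat{P}_{i|t-1,t-1}^{(j)} \right] &= 0 \tag{55} \\
\sum_{i} \sum_{t=2}^{\tau} \hat{z}_i^{(j)} \hat{P}_{i|t,t-1}^{(j)} - A^{(j)} \sum_{i} \sum_{t=2}^{\tau} \hat{z}_i^{(j)} \hat{P}_{i|t-1,t-1}^{(j)} &= 0 \tag{56} \\
\Rightarrow A^{(j)*} &= \psi^{(j)} (\phi_1^{(j)})^{-1} \tag{57}
\end{align}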

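• State Noise Covariance

\begin{align}
\frac{\partial Q}{\partial Q^{(j)}} = {} & \frac{1}{2} \sum_{i} \sum_{t=2}^{\tau} \hat{z}_i^{(j)} (Q^{(j)})^{-1} \left[ \hat{P}_{i|t,t}^{(j)} - \hat{P}_{i|t,t-1}^{(j)} (A^{(j)})^T - A^{(j)} (\hat{P}_{i|t,t-1}^{(j)})^T + A^{(j)} \hat{P}_{i|t-1,t-1}^{(j)} (A^{(j)})^T \right] (Q^{(j)})^{-1} \nonumber \\
& - \frac{\tau - 1}{2} \sum_{i} \hat{z}_i^{(j)} (Q^{(j)})^{-1} = 0 \tag{58} \\
\Rightarrow {} & \sum_{i} \sum_{t=2}^{\tau} \hat{z}_i^{(j)} \hat{P}_{i|t,t}^{(j)} - \sum_{i} \sum_{t=2}^{\tau} \hat{z}_i^{(j)} \hat{P}_{i|t,t-1}^{(j)} (A^{(j)})^T - \sum_{i} \sum_{t=2}^{\tau} \hat{z}_i^{(j)} A^{(j)} (\hat{P}_{i|t,t-1}^{(j)})^T \tag{59} \\
& + \sum_{i} \sum_{t=2}^{\tau} \hat{z}_i^{(j)} A^{(j)} \hat{P}_{i|t-1,t-1}^{(j)} (A^{(j)})^T - (\tau - 1) \hat{N}_j Q^{(j)} = 0 \tag{60} \\
\Rightarrow Q^{(j)} = {} & \frac{1}{(\tau - 1) \hat{N}_j} \left[ \phi_2^{(j)} - \psi^{(j)} (A^{(j)})^T - A^{(j)} (\psi^{(j)})^T + A^{(j)} \phi_1^{(j)} (A^{(j)})^T \right] \tag{61} \\
Q^{(j)*} = {} & \frac{1}{(\tau - 1) \hat{N}_j} \left[ \phi_2^{(j)} - A^{(j)*} (\psi^{(j)})^T \right] \tag{62}
\end{align}

• Initial State Mean

\begin{align}
\frac{\partial Q}{\partial \mu^{(j)}} = \frac{1}{2} \sum_{i} \hat{z}_i^{(j)} \left[ 2 (S^{(j)})^{-1} \hat{x}_{i,1}^{(j)} - 2 (S^{(j)})^{-1} \mu^{(j)} \right] &= 0 \nonumber \\
\Rightarrow \sum_{i} \hat{z}_i^{(j)} \hat{x}_{i,1}^{(j)} - \sum_{i} \hat{z}_i^{(j)} \mu^{(j)} &= 0 \tag{63} \\
\Rightarrow \mu^{(j)*} &= \frac{1}{\hat{N}_j} \sum_{i} \hat{z}_i^{(j)} \hat{x}_{i,1}^{(j)} \tag{64}
\end{align}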

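• Initial State Covariance

\begin{align}
\frac{\partial Q}{\partial S^{(j)}} = {} & \frac{1}{2} \sum_{i} \hat{z}_i^{(j)} (S^{(j)})^{-1} \left[ \hat{P}_{i|1,1}^{(j)} - \hat{x}_{i,1}^{(j)} (\mu^{(j)})^T - \mu^{(j)} (\hat{x}_{i,1}^{(j)})^T + \mu^{(j)} (\mu^{(j)})^T \right] (S^{(j)})^{-1} - \frac{1}{2} \sum_{i} \hat{z}_i^{(j)} (S^{(j)})^{-1} = 0 \tag{65} \\
\Rightarrow {} & \sum_{i} \hat{z}_i^{(j)} \left[ \hat{P}_{i|1,1}^{(j)} - \hat{x}_{i,1}^{(j)} (\mu^{(j)})^T - \mu^{(j)} (\hat{x}_{i,1}^{(j)})^T + \mu^{(j)} (\mu^{(j)})^T \right] - \sum_{i} \hat{z}_i^{(j)} S^{(j)} = 0 \tag{66} \\
\Rightarrow S^{(j)} = {} & \frac{1}{\hat{N}_j} \sum_{i} \hat{z}_i^{(j)} \left[ \hat{P}_{i|1,1}^{(j)} - \hat{x}_{i,1}^{(j)} (\mu^{(j)})^T - \mu^{(j)} (\hat{x}_{i,1}^{(j)})^T + \mu^{(j)} (\mu^{(j)})^T \right] \tag{67} \\
S^{(j)*} = {} & \frac{1}{\hat{N}_j} \left( \sum_{i} \hat{z}_i^{(j)} \hat{P}_{i|1,1}^{(j)} \right) - \mu^{(j)*} (\mu^{(j)*})^T \tag{68}
\end{align}

• Transformation matrix:

\begin{align}
\frac{\partial Q}{\partial C_i^{(j)}} = -\frac{1}{2} \sum_{t} \frac{\hat{z}_i^{(j)}}{r^{(j)}} \left[ -2 \hat{x}_{i,t}^{(j)} y_{i,t} + 2 \hat{P}_{i|t,t}^{(j)} (C_i^{(j)})^T \right] &= 0 \tag{69} \\
\Rightarrow C_i^{(j)*} = \left( \sum_{t} y_{i,t}\, \hat{x}_{i,t}^{(j)} \right)^T \left( \sum_{t} \hat{P}_{i|t,t}^{(j)} \right)^{-1} &= (\Gamma_i^{(j)})^T (\Phi_i^{(j)})^{-1} \tag{70}
\end{align}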

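• Pixel noise variance:

\begin{align}
\frac{\partial Q}{\partial r^{(j)}} = -\frac{\tau}{2} \sum_{i} \hat{z}_i^{(j)} \frac{1}{r^{(j)}} + \frac{1}{2} \sum_{i,t} \frac{\hat{z}_i^{(j)}}{(r^{(j)})^2} \left( y_{i,t}^2 - 2 C_i^{(j)} \hat{x}_{i,t}^{(j)} y_{i,t} + C_i^{(j)} \hat{P}_{i|t,t}^{(j)} (C_i^{(j)})^T \right) &= 0 \tag{71} \\
\Rightarrow r^{(j)} = \frac{1}{\tau \hat{N}_j} \sum_{i,t} \hat{z}_i^{(j)} \left( y_{i,t}^2 - 2 C_i^{(j)} \hat{x}_{i,t}^{(j)} y_{i,t} + C_i^{(j)} \hat{P}_{i|t,t}^{(j)} (C_i^{(j)})^T \right) & \tag{72} \\
\Rightarrow r^{(j)*} = \frac{1}{\tau \hat{N}_j} \sum_{i} \left( \hat{z}_i^{(j)} \Big( \sum_{t} y_{i,t}^2 \Big) - C_i^{(j)*} \hat{z}_i^{(j)} \Big( \sum_{t} \hat{x}_{i,t}^{(j)} y_{i,t} \Big) \right) &= \frac{1}{\tau \hat{N}_j} \sum_{i} \left( \Lambda_i^{(j)} - C_i^{(j)*} \Gamma_i^{(j)} \right) \tag{73}
\end{align}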

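In summary, the M-step is

\begin{equation}
\begin{aligned}
A^{(j)*} &= \psi^{(j)} (\phi_1^{(j)})^{-1} & Q^{(j)*} &= \frac{1}{(\tau - 1) \hat{N}_j} \left( \phi_2^{(j)} - A^{(j)*} (\psi^{(j)})^T \right) \\
\mu^{(j)*} &= \frac{1}{\hat{N}_j} \sum_{i} \hat{z}_i^{(j)} \hat{x}_{i,1}^{(j)} & S^{(j)*} &= \frac{1}{\hat{N}_j} \left( \sum_{i=1}^{N} \hat{z}_i^{(j)} \hat{P}_{i|1,1}^{(j)} \right) - \mu^{(j)*} (\mu^{(j)*})^T \\
C_i^{(j)*} &= (\Gamma_i^{(j)})^T (\Phi_i^{(j)})^{-1} & r^{(j)*} &= \frac{1}{\tau \hat{N}_j} \sum_{i=1}^{N} \left( \Lambda_i^{(j)} - C_i^{(j)*} \Gamma_i^{(j)} \right)
\end{aligned}
\tag{74}
\end{equation}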
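Unlike the LDT updates, the ALDT updates pool per-pixel statistics, weighted by the posterior assignment probabilities $\hat{z}_i^{(j)}$. The sketch below shows this weighting for the initial-state parameters of one layer with a scalar state ($n = 1$). The function name and argument layout are hypothetical.

```python
def aldt_initial_state_update(z_hat, x1_hat, P11):
    """Weighted updates of mu^{(j)} and S^{(j)} from (74), scalar state.

    z_hat[i]  : posterior probability that pixel i belongs to layer j
    x1_hat[i] : E[x_{i,1} | y_i, z_i = j]
    P11[i]    : E[x_{i,1}^2 | y_i, z_i = j]
    """
    n_hat = sum(z_hat)                                        # N_hat_j
    mu = sum(z * x for z, x in zip(z_hat, x1_hat)) / n_hat
    S = sum(z * p for z, p in zip(z_hat, P11)) / n_hat - mu * mu
    return mu, S


# The third pixel has zero posterior weight, so it does not affect the result.
mu, S = aldt_initial_state_update([1.0, 1.0, 0.0], [1.0, 3.0, 100.0], [1.5, 9.5, 0.0])
```

The remaining updates in (74) follow the same pattern, with the weighted sums of (54) taking the place of the unweighted sums of (22).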

References

[1] G. Doretto, A. Chiuso, Y. N. Wu, and S. Soatto. Dynamic textures. International Journal of Computer Vision, vol. 2, pp. 91-109, 2003.

[2] S. Geman and D. Geman. Stochastic relaxation, Gibbs distribution, and the Bayesian restoration of images. IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 6(6), pp. 721-41, 1984.

[3] A. P. Dempster, N. M. Laird, and D. B. Rubin. Maximum likelihood from incomplete data via the EM algorithm. Journal of the Royal Statistical Society B, vol. 39, pp. 1-38, 1977.

[4] D. J. C. MacKay. Introduction to Monte Carlo Methods. In Learning in Graphical Models, pp. 175-204, Kluwer Academic Press, 1998.

[5] S. M. Kay. Fundamentals of Statistical Signal Processing: Estimation Theory. Prentice-Hall Signal Processing Series, 1993.

[6] A. B. Chan and N. Vasconcelos. Mixtures of dynamic textures. In IEEE International Conference on Computer Vision, vol. 1, pp. 641-47, 2005.

[7] R. H. Shumway and D. S. Stoffer. An approach to time series smoothing and forecasting using the EM algorithm. Journal of Time Series Analysis, vol. 3(4), pp. 253-64, 1982.

[8] S. Roweis and Z. Ghahramani. A unifying review of linear Gaussian models. Neural Computation, vol. 11, pp. 305-45, 1999.
