Chapter 1: Drawing Statistical Conclusions


• Define statistical inference.

• Why do statistical statements need a measure of uncertainty?

• Inferences depend on the study design.

Table 1: Statistical inference permitted by study designs

                      Randomized Experiment    Observational Study
Random Sampling
NO Random Sampling

• What are the TWO Scope of Inference Questions?

How are they used?

• Why does randomization allow for causal statements?

• Are observational studies useless?

• Experiments

Identify the experimental units: the independent units to which the treatments are applied.

Where did the units come from? Were they randomly selected from a larger population?

What difference does that make for inference?

What does random sampling attempt to ensure?

What can you say about results from a convenience sample?

• Sampling

A Simple Random Sample gives every possible sample of size n the same probability of being selected.

Other random sampling procedures: Systematic, Random cluster sampling, variable probability sampling, adaptive sampling, etc.

• Does random sampling apply to randomized experiments or observational studies or both?

• Random sampling or random assignment provides the chance mechanism needed for a probability model, which statistical analysis requires. The probability model provides measures of uncertainty to accompany inferential conclusions.

• Uncertainties (or confidence levels) only cover variation due to random sampling or random allocation, NOT variation due to other factors such as measurement error. Any bias in the data collection method will carry over into the statistical conclusions.

A statistical analysis cannot salvage a poorly designed study.

• Random assignment

1. helps ensure that the overall response of the group is due to the treatment and not

some confounding variable.

2. allows us to formulate a probability model, e.g. An additive treatment effect model.


• Some terminology and definitions:

parameter

statistic

test statistic

estimate

mean (µ)

average (X̄)

population standard deviation (σ)

sample standard deviation (s)

experimental unit

Null and Alternative Hypotheses: statements about parameters.

Randomization distribution: imagine all possible ways to randomly assign treatments, and obtain a test statistic from each.

p-value

– exact

– approximate

– mathematical

Observational studies (no group assignment). Sample from two populations or take one sample

and observe group membership of each individual.

• Chance mechanism from random sample selection provides the same distribution of a

test statistic as random treatment allocation.

Permutation distribution:

• Null hypothesis of no difference means we could re-randomize group membership and look

at how the difference in averages varies.

• The p-value computation: Count the number of re-randomizations whose difference in sample averages is as extreme or more extreme than the one observed, and divide by the total number of ways to re-randomize.
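The p-value computation above can be sketched in Python by listing every regrouping. This is a minimal illustration, not course code: the function name, the two-sided definition of "extreme" (absolute difference in averages), and the data are all assumptions made for the example.

```python
from itertools import combinations

def randomization_p_value(group1, group2):
    """Two-sided p-value from the full randomization distribution.

    Assumption: 'extreme' is measured by the absolute difference in averages.
    """
    pooled = list(group1) + list(group2)
    n1 = len(group1)
    n2 = len(pooled) - n1
    total = sum(pooled)

    def diff_in_averages(indices):
        s1 = sum(pooled[i] for i in indices)
        return s1 / n1 - (total - s1) / n2

    observed = abs(diff_in_averages(range(n1)))
    count = 0
    n_regroupings = 0
    # Every way of choosing which n1 units go to group 1
    for indices in combinations(range(len(pooled)), n1):
        n_regroupings += 1
        # Small tolerance guards against floating-point ties
        if abs(diff_in_averages(indices)) >= observed - 1e-12:
            count += 1
    return count / n_regroupings

# Made-up data, for illustration only
treatment = [12, 15, 14]
control = [8, 9, 11]
p_value = randomization_p_value(treatment, control)
print(p_value)
```

With 3 units per group there are only 20 regroupings, so full enumeration is cheap; for larger groups the count grows quickly and a random subset of regroupings is usually used instead.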

Graphical Methods (be able to interpret and compare)

• Histograms

• Stem-and-Leaf Diagrams

• Box plots

• Scatter plots


Chapter 2: Inference Using t-Distributions

• Every statistic varies from sample to sample, giving a distribution of possible values called

the sampling distribution.

• Center of the sampling distribution of the sample average is µ, the population mean.

• Standard deviation of the sampling distribution of the sample average is $\sigma/\sqrt{n}$, the population standard deviation divided by the square root of the sample size.

• Central Limit Theorem: As n increases, the sampling distribution of $\bar{y}$ approaches a normal distribution, no matter how skewed the data are to start with.

• The standard error of a statistic is an estimate of the standard deviation of its sampling distribution. For the sample average, it is $s/\sqrt{n}$.

• The Degrees of Freedom (d.f.) is a measure of the amount of information used to

estimate variability.

• A 95% Confidence Interval for the Mean

Meaning?

Is there a 95% chance that µ is in the interval we made?

• Generic 95% confidence interval:

estimate ± (a multiplier) × (standard error of estimate)

• Formula for a CI for µ: $\bar{y} \pm t_{df}(1 - \alpha/2)\,SE(\bar{y}) = \bar{y} \pm t_{df}(1 - \alpha/2)\, s/\sqrt{n}$

• Z-ratio: Use when the population standard deviation is known.

$z = \dfrac{\bar{y} - \mu_0}{\sigma/\sqrt{n}}$

Assumes normality, known variance, independent observations.

• One-Sample t-Tools and the Paired t-Test (on differences)

$t = \dfrac{\bar{y} - \mu_0}{s/\sqrt{n}}$, with df = n − 1

Assumptions: normal distribution or large n. Independent observations.

Use with null hypothesis: H0 : µ = µ0 .
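The one-sample t-ratio and the confidence interval for µ can be worked through numerically as follows. This is a hedged sketch: the function name and data are invented for illustration, and the multiplier t_df(1 − α/2) is passed in by hand (2.776 is the usual table value for 4 df at 95%) because the Python standard library has no t quantile function.

```python
import math
import statistics

def one_sample_t_tools(data, mu0, t_multiplier):
    """Return (t-ratio, df, confidence interval) for the mean.

    t_multiplier is t_df(1 - alpha/2), looked up in a t-table or software.
    """
    n = len(data)
    y_bar = statistics.mean(data)
    se = statistics.stdev(data) / math.sqrt(n)   # s / sqrt(n), s uses divisor n - 1
    t_ratio = (y_bar - mu0) / se
    ci = (y_bar - t_multiplier * se, y_bar + t_multiplier * se)
    return t_ratio, n - 1, ci

# Made-up data, for illustration only
data = [4.0, 5.0, 6.0, 7.0, 8.0]
t_ratio, df, ci = one_sample_t_tools(data, mu0=5.0, t_multiplier=2.776)
print(t_ratio, df, ci)
```

For paired data, the same function applies to the list of within-pair differences.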

Two sample tests:

• Assume equal variance and use pooled estimate of σ:

$s_p = \sqrt{\dfrac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}}$

Standard Error for the Difference

$SE(\bar{Y}_2 - \bar{Y}_1) = s_p \sqrt{\dfrac{1}{n_1} + \dfrac{1}{n_2}}$

Confidence interval for µ2 − µ1 is

$\bar{y}_2 - \bar{y}_1 \pm t_{df}(1 - \alpha/2)\, s_p \sqrt{1/n_1 + 1/n_2}$, with df = n1 + n2 − 2

t-test for H0 : µ2 − µ1 = δ0 :

$t = \dfrac{\bar{y}_2 - \bar{y}_1 - \delta_0}{s_p \sqrt{1/n_1 + 1/n_2}}$, with df = n1 + n2 − 2

Has trouble if the ratio of standard errors is more than 2 or less than .5.

• Don’t assume equal variance and use Welch’s t-test. Confidence interval for µ2 − µ1 is

$\bar{y}_2 - \bar{y}_1 \pm t_{df}(1 - \alpha/2) \sqrt{s_1^2/n_1 + s_2^2/n_2}$, df from formula

t-test for H0 : µ2 − µ1 = δ0 :

$t = \dfrac{\bar{y}_2 - \bar{y}_1 - \delta_0}{\sqrt{s_1^2/n_1 + s_2^2/n_2}}$, df from formula

Can confuse a change in spread with a change in means.

Find p-values and t quantiles from a t-table or using R.
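As a rough illustration of the equal-variance two-sample calculation, the sketch below computes the pooled SD, the standard error of the difference, and the t-ratio. The function name and the data are made up; the p-value would still come from a t-table or R using the returned df.

```python
import math
import statistics

def pooled_two_sample_t(y1, y2, delta0=0.0):
    """Return (t-ratio, df, s_p, SE) for H0: mu2 - mu1 = delta0, assuming equal variances."""
    n1, n2 = len(y1), len(y2)
    s1_sq = statistics.variance(y1)   # sample variances (divisor n - 1)
    s2_sq = statistics.variance(y2)
    sp = math.sqrt(((n1 - 1) * s1_sq + (n2 - 1) * s2_sq) / (n1 + n2 - 2))
    se = sp * math.sqrt(1 / n1 + 1 / n2)
    t_ratio = (statistics.mean(y2) - statistics.mean(y1) - delta0) / se
    return t_ratio, n1 + n2 - 2, sp, se

# Made-up data, for illustration only
group1 = [1.0, 2.0, 3.0]
group2 = [3.0, 4.0, 5.0, 6.0]
t_ratio, df, sp, se = pooled_two_sample_t(group1, group2)
print(t_ratio, df, sp, se)
```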

The p-value for a t-test is the probability of obtaining a t-ratio as extreme or more extreme

than the observed t-statistic assuming the null hypothesis is true.

• Small p-values occur if

1.

2.

• The ______ the p-value, the stronger is the evidence that the null hypothesis is incorrect.

• A large p-value ⇒ the study is not capable of excluding the null hypothesis as a possible explanation. (CANNOT say the null hypothesis is true!) Possible wording: “the data are consistent with the hypothesis being true.”

• Use one-sided p-value when the researcher knows that violations of the null hypothesis

occur in one direction only.

Important: Always report whether the p-value is one-sided or two-sided!

t-based Inference in a Two-Treatment Randomized Experiment

• Randomization used to assign units to two groups.

• The t-distribution approximates the randomization distribution.

• p-values and confidence intervals based on the t-distribution are approximations to the

correct values calculated from a randomization distribution.

• Hypothesis tests:


– Calculations are the same as for random sampling situations.

– Phrase conclusions in terms of treatment effects and causation, instead of differences

in population means and association.

– Test if δ = 0 rather than if (µ1 − µ2 ) = 0.

• Confidence interval for a treatment effect:

– Calculations are the same as for the difference between population means.

– Based on randomization distribution:

– Use the relationship between a confidence interval and a p-value

– RULE: the 100(1 − α)% confidence interval lists possible values for our parameter

which would not be rejected at significance level α in a two-sided test where H0

supposes the value in question is the true parameter value.

• A p-value is NOT the probability of the null hypothesis being correct. The probability

arises from uncertainty in the data and not uncertainty in the parameter value.

Chapter 3: A Closer Look at Assumptions

Robustness: a statistical procedure is robust to departures from a particular assumption if it

is still valid even when the assumption is not met.

• valid means uncertainty measures (CIs and p-values) are nearly equal to the stated rates.

• Evaluate robustness separately for each assumption.

• Assumptions of the two-sample t-tools?

1. Normality - t-tools remain reasonably valid in large samples because of the central

limit theorem. See display 3.4. Can be a problem with small sample sizes, and

long-tailed or skewed distributions.

2. Equal standard deviations - Can be a serious problem when sample sizes are unequal.

See Display 3.5. Welch’s modifications work, but then we might be confusing a shift

in the mean with one in spread.

3. Independence - clusters in time or space reduce the effective sample size, which can

change inference. Don’t use the regular t-tools.

Resistance: a statistical procedure is resistant if it does not change much when a small part

of the data changes, perhaps drastically.

• Outlier: an observation judged to be far from its group average.

• Averages and standard deviations are not resistant to outliers. Medians are resistant.

• Outliers may indicate

1. a long-tailed distribution

2. contamination by an observation from another population.

• Implication: One or two outliers can affect a CI or p-value enough to change a conclusion.

A conclusion based on one or two data points is fragile!


Strategies for the Two-sample problem

1. Consider Serial and Cluster Effects (Independence)

If such effects are present, you could average over the clusters (which greatly reduces df) or use a more sophisticated statistical tool.

2. Evaluate the Suitability of the t-Tools:

Plot the data – look for symmetric distributions and equal spread. Outliers appear more

often in bigger samples (but exert weaker effects)

If you see violations: consider a transformation or alternative methods with fewer assumptions.

3. A Strategy for Dealing with Outliers

Does it matter? If not, leave it in.

Is it an error? If yes, fix it.

If those options don’t take care of it, try a resistant analysis.

If need be, report the analysis with and without the outlier – let the reader decide.

Transformations of the Data

Log transformations are useful for positive data.

• Preserves the ordering of the original data

• Results can still be presented on the original scale (after backtransforming).

• Common scale in some scientific fields

• Using natural log (ln(x)) or base 10 log gives the same results after backtransforming

• Use when the ratio of the largest to smallest observations in a group is greater than 10.

• Use if both distributions are skewed and larger average goes with the larger spread.

• Backtransform to the original scale for interpretation

Use exp() to backtransform natural log differences

Interpret exp(log.avg1 - log.avg2) as the estimated ratio of the group 1 median to the group 2 median, i.e. the multiplicative shift in medians between the groups. Similarly, apply exp to each end of a confidence interval to get a CI for the multiplicative shift in medians.

Do not backtransform individual averages or measures of spread.
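The back-transformation step can be illustrated with a small sketch. The function names, the data, and the log-scale interval (0.2, 1.2) are hypothetical, chosen only to show the mechanics: exp is applied to the difference of log averages and to each end of the log-scale interval, never to the individual group averages.

```python
import math
import statistics

def log_scale_difference(group1, group2):
    """Difference of average natural logs: log.avg1 - log.avg2."""
    log_avg1 = statistics.mean(math.log(x) for x in group1)
    log_avg2 = statistics.mean(math.log(x) for x in group2)
    return log_avg1 - log_avg2

def backtransform(log_diff, log_ci):
    """Estimated ratio of medians (group 1 over group 2) and its CI."""
    return math.exp(log_diff), (math.exp(log_ci[0]), math.exp(log_ci[1]))

# Made-up positive data, for illustration only
group1 = [2.0, 8.0]   # average log = log(4)
group2 = [1.0, 4.0]   # average log = log(2)
log_diff = log_scale_difference(group1, group2)

# Hypothetical CI for the difference on the log scale
ratio, ratio_ci = backtransform(log_diff, (0.2, 1.2))
print(ratio, ratio_ci)
```

Here the estimated median for group 1 is about twice the group 2 median.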

Other Transformations for Positive Measurements:

• Use Square Root on counts, or measurements of area.

• Reciprocal for waiting times (converts time to speed)

• Arcsine square root for Proportions

• Logit for Proportions

Choosing a transformation:

Plot the data. Look for a scale in which the 2 groups have equal spread.

Also consider ease of interpretation.

Formally testing for equal variance or normality is generally overkill because the tests are not

robust and exact normality is not needed.


Chapter 4: Alternatives to the t-Tools

The Rank-Sum Test or Wilcoxon test, or Mann-Whitney test

• The rank-sum test is a resistant alternative to the two-sample t-test.

• Replace each observation by its rank in the combined sample – all data regardless of group.

(Ties all get the average of available ranks). This removes the effect of an outlier and can

handle censored observations.

• The test statistic T = the sum of the ranks in one group

• Null hypothesis: the medians are equal.

• If the null hypothesis is true then the sample of n1 ranks in group 1 is a random sample

from the n1 + n2 available ranks.

• p-value

1. EXACT: Compute exact p-values using the randomization/permutation distribution

(smaller n or lots of ties). pwilcox() in R.

2. NORMAL APPROXIMATION when n > 5, and few ties.

$\text{Mean}(T) = n_1 \bar{R}$, where $\bar{R}$ = average of the combined set of ranks,

$\text{SD}(T) = s_R \sqrt{\dfrac{n_1 n_2}{n_1 + n_2}}$, where $s_R$ = sample SD of the combined set of ranks

$Z\text{-statistic} = \dfrac{T - \text{Mean}(T)}{\text{SD}(T)}$

Continuity correction: Add or subtract 0.5 from the numerator of the Z-statistic to make it closer to zero.

• Confidence interval? Use guess and check. Add value v to the group with smaller median

and run the test again. If you fail to reject the null, then v belongs in the CI, if not it’s

outside the interval. Keep trying values until you locate the endpoints.
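The normal approximation for the rank-sum statistic described above can be sketched as follows. Function names and data are invented for illustration. The continuity correction is implemented as shrinking the numerator's magnitude by 0.5, stopping at zero; that stopping rule is an assumption of this sketch.

```python
import math
import statistics

def average_ranks(values):
    """Rank values 1..n; tied values all get the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1          # average of ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def rank_sum_z(group1, group2):
    """Return (T, Mean(T), SD(T), continuity-corrected Z) for group 1."""
    n1, n2 = len(group1), len(group2)
    ranks = average_ranks(list(group1) + list(group2))
    T = sum(ranks[:n1])                               # sum of ranks in group 1
    mean_T = n1 * statistics.mean(ranks)              # n1 * R-bar
    sd_T = statistics.stdev(ranks) * math.sqrt(n1 * n2 / (n1 + n2))
    numerator = T - mean_T
    # Move the numerator 0.5 closer to zero (never past zero)
    corrected = math.copysign(max(abs(numerator) - 0.5, 0.0), numerator)
    return T, mean_T, sd_T, corrected / sd_T

# Made-up data, for illustration only
group1 = [1.1, 2.3, 3.0, 4.8, 5.2, 6.1]
group2 = [5.9, 7.4, 8.0, 9.5, 10.1, 11.3]
T, mean_T, sd_T, z = rank_sum_z(group1, group2)
print(T, mean_T, sd_T, z)
```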

Permutation Test

• p-value is the proportion of all possible regroupings of the observed n1 + n2 numbers into

two groups of size n1 and n2 that lead to test statistics as extreme or more extreme than

the observed one.

• Require no distributional assumptions or special conditions

• Are always available (though may require too much computational effort)

• STEPS:

1. Decide on a test statistic.

2. Compute its value from the 2 samples (=observed test statistic)

3. List all regroupings of the n1 + n2 numbers into groups of size n1 and n2 .

4. Recompute the test statistic for each regrouping.


5. Count the number of regroupings that produce test statistics as extreme or more extreme than the observed test statistic.

6. Calculate the p-value by dividing the count in 5. by the total number of regroupings.

• Combinations, written $C_{n,k}$ or $\binom{n}{k}$:

$C_{n,k} = \dfrac{n!}{k!(n-k)!} = \dfrac{n(n-1)(n-2)\cdots 1}{k(k-1)\cdots 1 \times (n-k)(n-k-1)\cdots 1}$

Counts the number of ways we can choose k objects out of n. For example, if we have letters {A, B, C, D} there are six possible pairs of letters, {AB, AC, AD, BC, BD, CD} where order is not considered; BD and DB are the same set.
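The letter example can be checked directly in Python. `math.comb` (Python 3.8+) evaluates the combinations formula and `itertools.combinations` lists the sets themselves. The group sizes in the last line are made up to show how quickly the number of regroupings in a permutation test grows.

```python
import math
from itertools import combinations

letters = ["A", "B", "C", "D"]

# List every unordered pair of letters
pairs = ["".join(pair) for pair in combinations(letters, 2)]
print(pairs)

# Count them with the formula n! / (k! (n - k)!)
n_pairs = math.comb(4, 2)
print(n_pairs)

# Number of regroupings for a permutation test with hypothetical n1 = 10, n2 = 12
n_regroupings = math.comb(10 + 12, 10)
print(n_regroupings)
```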

Welch’s t-Test for Comparing Two Normal Populations with Unequal Spreads

• Avoids the assumption of equal variance.

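$SE_W(\bar{Y}_2 - \bar{Y}_1) = \sqrt{\dfrac{s_2^2}{n_2} + \dfrac{s_1^2}{n_1}}$

• New formula for the degrees of freedom (Satterthwaite’s approximation):

$\text{d.f.}_W = \dfrac{[SE_W(\bar{Y}_2 - \bar{Y}_1)]^4}{\dfrac{[SE(\bar{Y}_2)]^4}{n_2 - 1} + \dfrac{[SE(\bar{Y}_1)]^4}{n_1 - 1}}$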

• Compute the t-test and confidence intervals as usual for the two-sample t-test using SEW

and d.f.W .

• The exact distribution of Welch’s t-ratio is unknown. It is approximately a t-distribution with d.f.W degrees of freedom.

• Danger: if variances differ, then a comparison of means involves variances as well as means.
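A short sketch of the Welch standard error and Satterthwaite degrees of freedom, using invented data and a hypothetical function name. It relies on [SE(Ȳ)]² = s²/n for each group, so [SE(Ȳ)]⁴ is just that quantity squared.

```python
import math
import statistics

def welch_se_and_df(y1, y2):
    """Return (SE_W, d.f._W) for the difference in averages, unequal variances allowed."""
    n1, n2 = len(y1), len(y2)
    se1_sq = statistics.variance(y1) / n1   # [SE(Ybar_1)]^2 = s1^2 / n1
    se2_sq = statistics.variance(y2) / n2   # [SE(Ybar_2)]^2 = s2^2 / n2
    se_w = math.sqrt(se2_sq + se1_sq)
    df_w = se_w ** 4 / (se2_sq ** 2 / (n2 - 1) + se1_sq ** 2 / (n1 - 1))
    return se_w, df_w

# Made-up data, for illustration only
group1 = [1.0, 2.0, 3.0]
group2 = [3.0, 4.0, 5.0, 6.0]
se_w, df_w = welch_se_and_df(group1, group2)
print(se_w, df_w)
```

The resulting d.f. is generally not an integer; it falls between the smaller of n1 − 1 and n2 − 1 and the pooled value n1 + n2 − 2.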

Alternatives for Paired Data

Sign Test

• Null hypothesis: the differences are equally likely to be positive or negative.

• Test statistic: K =# of positive differences (discard ties) out of n - # ties.

• What do we expect K to be if the null hypothesis is true?

• Exact p-value: Under null, K ∼ Binomial(n, p = 0.5)

The chance of obtaining exactly k positive differences: $C_{n,k}\,(0.5)^n$

p-value = sum of these chances for all values of k that are as extreme or more extreme

than the observed K.

• Normal approximation p-value: $Z = \dfrac{K - n/2}{\sqrt{n/4}}$

continuity correction: add or subtract 0.5 from numerator to make it closer to zero.
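The exact sign-test p-value can be computed by summing binomial chances. In this sketch the function name and data are invented, and "as extreme or more extreme" is taken two-sided, meaning at least as far from n/2 as the observed K; a one-sided version would sum one tail only.

```python
import math

def sign_test_p_value(differences):
    """Return (K, n, two-sided exact p-value); zero differences are discarded."""
    nonzero = [d for d in differences if d != 0]
    n = len(nonzero)                              # n = pairs remaining after dropping ties
    K = sum(1 for d in nonzero if d > 0)          # number of positive differences
    # Chance of exactly k positives under the null: C(n, k) * 0.5^n
    chances = [math.comb(n, k) * 0.5 ** n for k in range(n + 1)]
    p_value = sum(c for k, c in enumerate(chances)
                  if abs(k - n / 2) >= abs(K - n / 2))
    return K, n, p_value

# Made-up paired differences, for illustration only
diffs = [1.2, 0.8, 2.1, 0.0, 0.5, 1.9, -0.3, 1.1, 0.7]
K, n, p_value = sign_test_p_value(diffs)
print(K, n, p_value)
```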

Wilcoxon Signed-Rank Test: a more powerful alternative to the sign test

• Take differences and rank the absolute values.

Keep track of which were positive and negative. Drop any zeroes.

S = sum of the ranks from the pairs with positive difference.


• Exact p-value = the number of possible random assignments with sum of positive ranks as extreme or more extreme than the one observed, divided by $2^n$.

• Normal approximation: (for n > 20)

$\text{Mean}(S) = \dfrac{n(n+1)}{4}$, $\quad \text{SD}(S) = \sqrt{\dfrac{n(n+1)(2n+1)}{24}}$, $\quad Z = \dfrac{S - \text{Mean}(S)}{\text{SD}(S)}$

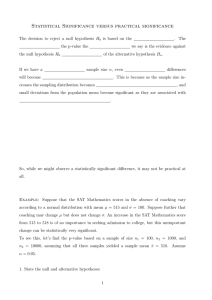
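For the signed-rank test described above, a small-sample sketch can enumerate all 2^n sign assignments. The function name and data are invented, the absolute differences are assumed to have no ties (to keep the ranking step short), and "extreme" is taken two-sided as distance from Mean(S).

```python
import math
from itertools import product

def signed_rank_exact(differences):
    """Return (S, Mean(S), SD(S), two-sided exact p-value).

    Assumption: no ties among the absolute nonzero differences.
    """
    nonzero = [d for d in differences if d != 0]          # drop zeroes
    n = len(nonzero)
    order = sorted(range(n), key=lambda i: abs(nonzero[i]))
    ranks = [0] * n
    for rank, i in enumerate(order, start=1):
        ranks[i] = rank
    S = sum(r for r, d in zip(ranks, nonzero) if d > 0)   # ranks of positive differences
    mean_S = n * (n + 1) / 4
    sd_S = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    # Under the null, each rank is equally likely to carry a + or - sign
    count = 0
    for signs in product([0, 1], repeat=n):
        s = sum(r for r, positive in zip(range(1, n + 1), signs) if positive)
        if abs(s - mean_S) >= abs(S - mean_S):
            count += 1
    return S, mean_S, sd_S, count / 2 ** n

# Made-up paired differences, for illustration only
diffs = [1.5, -0.4, 2.2, 0.9, 3.1, 1.1]
S, mean_S, sd_S, p_value = signed_rank_exact(diffs)
print(S, mean_S, sd_S, p_value)
```

With n = 6 the exact count is the right tool; Mean(S) and SD(S) are returned only to show the quantities that enter the normal approximation for larger n.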

Practical and Statistical Significance

• p-values indicate statistical significance (strength of evidence against a null hypothesis)

• Practical significance refers to the practical importance of the effect in question:

• With a big enough sample size we can make any difference statistically significant.

• Fixed levels like 0.05 and 0.01 have no general validity.

• Confidence intervals are better summaries than p-values.

Levene’s Test for Equality of Two Variances

• To test for equal variances in two populations, don’t use an F test. Not robust to nonnormality. Not resistant to outliers.

• Levene’s Test is robust

Do a t-test on the squared differences from the group averages. R code: leveneTest() in the car package (called levene.test() in older versions).

Survey Sampling: selecting members of a specific (finite) population for survey inclusion.

• Simple random sample (SRS) (often unrealistic to find list of entire population)

• Stratified: separate SRS’s selected from each stratum

• Multistage: SRS within a SRS

• Cluster: SRS of clusters and then all members of cluster are included

• The Finite population correction (FPC)

Large populations: sampling with versus without replacement makes little difference. In small populations we don’t want to pick the same unit twice, so we need sampling without replacement.

Variance gets smaller by a factor of $FPC = \dfrac{N - n}{N}$

• Usual SEs are inappropriate for data from complex sampling designs, and often are smaller

than they should be.

Moral : If you have a finite (known N) population that you are randomly sampling from,

consult a sampling textbook for correct standard error formulas.

• Non-response bias: Individuals who tend to respond to surveys often have much different

views than those who do not choose to respond.

Question: Is there something about the units we missed (or that didn’t respond) that is

related to the response?


Chapter 5: Comparing Several Means Using ANOVA

• Additive versus multiplicative shift.

• Assumptions

1. Normal distributions for each population

2. Equal standard deviations for the populations

3. Independent observations within each group AND among groups.

• We assume equal spread in each group, so it makes sense to use a pooled estimate of

variance.

$s_p^2 = \dfrac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2 + \ldots + (n_I - 1)s_I^2}{(n_1 - 1) + (n_2 - 1) + \ldots + (n_I - 1)}$ with d.f. = n − I

• Compare any 2 means for groups j and k using $SE(\bar{Y}_j - \bar{Y}_k) = s_p \sqrt{1/n_j + 1/n_k}$

Test H0 : µj = µk with $t = (\bar{Y}_j - \bar{Y}_k)/(s_p \sqrt{1/n_j + 1/n_k})$ or

use a CI: $\bar{Y}_j - \bar{Y}_k \pm t_{n-I}(1 - \alpha/2)\, s_p \sqrt{1/n_j + 1/n_k}$.

Know where the df come from.

• Extra SSQ F test

– Reduced model (here a single mean) is a special case of Full model (here, separate

means for each group.)

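$\text{ESS F-statistic} = \dfrac{(\text{Reduced SS} - \text{Full SS})/(\text{Reduced d.f.} - \text{Full d.f.})}{\text{Full SS}/\text{Full d.f.}} = \dfrac{\text{Extra SS}/\text{Extra d.f.}}{\hat{\sigma}^2_{\text{Full}}}$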

– Reduced SS is the sum of squared residuals from the reduced model.

– Full SS is the sum of squared residuals from the full model.

– Null hypothesis is: the reduced model is adequate. Alternative: the full model is

needed.

– Dist’n of F-stat under H0 is $F_{\text{Extra d.f.},\,\text{Full d.f.}}$. If H0 is true, F should be near 1. If F is large, we’ll get a small p-value and reject H0.

• ANOVA table

Source of Variation   Sum of Squares   d.f.         Mean Square           F*
Between Groups        Extra SS         Extra d.f.   Extra SS/Extra d.f.   F-stat = Extra MS/Full MS
Within Groups         Full SS          Full d.f.    Full SS/Full d.f.
Total                 Red. SS          Red. d.f.

Be able to fill in missing parts of the table.

– For the Spock Juries example, we used the ESS F test first to test to see if all means

were equal, and rejected that null.

– Secondly, we compared the full 7 means model to the reduced model with 2 means:

1 for Spock’s judge, and one for all other judges. This test had a small F and a large

p-value so we failed to reject the null model.
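The entries of the ANOVA table can be reproduced numerically for the "single mean versus separate means" comparison. This sketch uses made-up data (not the Spock juries data) and a hypothetical function name. The p-value is left out because the Python standard library has no F distribution; it would come from a table or R.

```python
import statistics

def extra_ss_f(groups):
    """Extra-sum-of-squares F for a single-mean (reduced) vs separate-means (full) model."""
    all_obs = [y for g in groups for y in g]
    n, I = len(all_obs), len(groups)
    grand_mean = statistics.mean(all_obs)
    # Sum of squared residuals around one common mean
    reduced_ss = sum((y - grand_mean) ** 2 for y in all_obs)
    # Sum of squared residuals around each group's own mean
    full_ss = sum((y - statistics.mean(g)) ** 2 for g in groups for y in g)
    reduced_df, full_df = n - 1, n - I
    extra_ss = reduced_ss - full_ss
    extra_df = reduced_df - full_df
    f_stat = (extra_ss / extra_df) / (full_ss / full_df)
    return {"extra_ss": extra_ss, "extra_df": extra_df,
            "full_ss": full_ss, "full_df": full_df,
            "reduced_ss": reduced_ss, "reduced_df": reduced_df,
            "f_stat": f_stat}

# Made-up data for three groups, for illustration only
groups = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]]
table = extra_ss_f(groups)
print(table)
```

The "Between Groups" row is the extra SS and d.f., "Within Groups" is the full-model row, and "Total" is the reduced-model row.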


• Assumptions:

– Normality is not critical.

– Observations within and between groups need to be independent.

– SD’s are equal across groups.

– No outliers

• Use diagnostic plots of residuals to assess violations of assumptions.

– Residuals versus fitted. Look for non-constant spread, outliers.

– Residuals versus time of collection. Look for a trend.

– Normal quantile plot of the residuals should show a straight line.

• If outliers are present we can use the Kruskal-Wallis test. Convert the response to ranks

and run anova on the ranks.

• Fixed versus Random effects:

Levels of a factor are fixed effects if these are the only ones of interest.

Random Effects are not repeatable, and are drawn from some bigger population of effects.

Inference for random effects relates to estimating the variances between them instead of

estimating particular shifts.

Chapter 6

• Linear combinations:

γ = C1 µ1 + C2 µ2 + . . . + CI µI

• We often use contrasts where the Ci ’s add up to zero.

• Estimate:

$g = C_1 \bar{Y}_1 + C_2 \bar{Y}_2 + \ldots + C_I \bar{Y}_I$

• which has standard error:

$SE(g) = s_p \sqrt{\dfrac{C_1^2}{n_1} + \dfrac{C_2^2}{n_2} + \ldots + \dfrac{C_I^2}{n_I}}$

using $s_p = \sqrt{MSE}$ from the ANOVA table and df = n − I.

• Inferences:

– Test H0 : γ = 0 using

$t = \dfrac{g - 0}{SE(g)}$

– CI:

$g \pm t_{n-I}(1 - \alpha/2)\, SE(g)$
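A contrast estimate, its standard error, and the t-ratio can be worked through as below. The data, the coefficients (average of the first two groups minus the third), and the function name are all invented for illustration; s_p is computed from the within-group sum of squares, which is the square root of MSE.

```python
import math
import statistics

def contrast_t(groups, coefs):
    """Return (g, SE(g), t-ratio, df) for the linear combination with coefficients coefs."""
    n = sum(len(g) for g in groups)
    I = len(groups)
    within_ss = sum((y - statistics.mean(g)) ** 2 for g in groups for y in g)
    sp = math.sqrt(within_ss / (n - I))                     # sqrt(MSE)
    g_hat = sum(c * statistics.mean(g) for c, g in zip(coefs, groups))
    se = sp * math.sqrt(sum(c ** 2 / len(g) for c, g in zip(coefs, groups)))
    return g_hat, se, (g_hat - 0) / se, n - I

# Made-up data for three groups, for illustration only
groups = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]]
coefs = [0.5, 0.5, -1.0]          # coefficients add up to zero, so this is a contrast
g_hat, se, t_ratio, df = contrast_t(groups, coefs)
print(g_hat, se, t_ratio, df)
```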


Chapter 7

• Model for linear regression: $y_i = \beta_0 + \beta_1 x_i + \epsilon_i$ or $\mu(y|x) = \beta_0 + \beta_1 x$

• Assumptions:

– Normality: $\epsilon_i \sim \text{iid } N(0, \sigma)$ or $y \sim N(\beta_0 + \beta_1 x, \sigma)$. Exact normality is not critical if the sample size is moderately large and there are no outliers.

– Observations are independent.

– Constant variance.

– Means fall on a straight line.

• Interpret intercept and slope. Extract tests and CI’s from summary of R output.

• Dangers of interpolation and extrapolation.
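To make the intercept and slope concrete, here is a minimal least-squares fit of the straight-line model. Least squares is the standard estimation method for this model, but the function name and data are made up for illustration, and the sketch gives only the point estimates, not the tests and intervals reported in R's summary output.

```python
import statistics

def least_squares_line(x, y):
    """Return (b0, b1): the least-squares intercept and slope."""
    x_bar, y_bar = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b1 = sxy / sxx                # estimated change in mean of y per unit increase in x
    b0 = y_bar - b1 * x_bar       # estimated mean of y when x = 0
    return b0, b1

# Made-up data, for illustration only
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]
b0, b1 = least_squares_line(x, y)

# Estimated mean response at x = 3, which lies inside the observed range of x
fitted_at_3 = b0 + b1 * 3.0
print(b0, b1, fitted_at_3)
```

Using the fitted line at an x value far outside 1 to 5 would be extrapolation: the straight-line assumption has not been checked there.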
