18-447: Computer Architecture Lecture 27: Multi-Core Potpourri Carnegie Mellon University


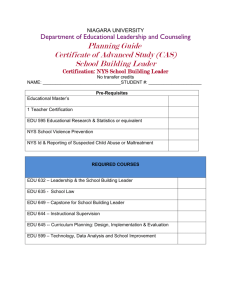

Prof. Onur Mutlu
Carnegie Mellon University
Spring 2012, 5/2/2012

Labs 6 and 7
• Lab 7: MESI cache coherence protocol (extra credit: better protocol)
  • Due May 4
  • You can use 2 additional days without any penalty
  • No additional days at all after May 6
• Lab 6
  • Binary for golden solution released
  • You can debug your lab
  • Extended deadline: same due date as Lab 7, but with a 20% penalty
  • We’ll multiply your grade by 0.8 if you turn in by the new due date
  • No late Lab 6’s accepted after May 6

Lab 6 Grades
[Figure: histogram of Lab 6 grades in 100-point bins from 600 to 3000; vertical axis is the number of students, 0 to 9.]
• Total number of students: 40
• Fully correct: 6
• Attempted EC: 2
• Average: 2059
• Median: 2215
• Max: 2985
• Min: 636
• Max possible (w/o EC): 2595

Lab 6 Honors
• Extra credit
  • Jason Lin: stride prefetcher for D-cache misses, next-line prefetcher for I-cache misses
• Full credit
  • Eric Brunstad
  • Jason Lin
  • Justin Wagner
  • Rui Cai
  • Tyler Huberty

Final Exam
• May 10
• Comprehensive (over all topics in course)
• Three cheat sheets allowed
• We will have a review session
• Remember this is 30% of your grade
  • I will take into account your improvement over the course
  • Know the previous midterm concepts by heart

Final Exam Preparation
• Homework 7
  • For your benefit
• Past exams
  • This semester
  • And, relevant questions from the exams on the course website
• Review session

A Note on 742, Research, Jobs
• I am teaching Parallel Computer Architecture next semester (Fall 2012)
  • Deep dive into many topics we covered
  • And, many topics we did not cover: systolic arrays, speculative parallelization, nonvolatile memories, deep dataflow, more multithreading, …
  • Research oriented with an open-ended research project
  • Cutting-edge research and topics in HW/SW interface
• If you enjoy 447 and do well in class, you can take it: talk with me
• If you are excited about Computer Architecture research or looking for a job in this area: talk with me

Course Evaluations
• Please do not forget to fill out the course evaluations
• Your feedback is very important
• I read these very carefully, and take into account every piece of feedback
  • And, improve the course for the future
• Please take the time to write out feedback
  • State the things you liked, topics you enjoyed, and what we can improve on: both the good and the not-so-good
• Due May 15

Last Lecture
• Wrap up cache coherence
  • VI, MSI, MESI, MOESI, ?
  • Directory vs. snooping tradeoffs
• Interconnects
  • Why important?
  • Topologies
  • Handling contention

Today
• Interconnection networks wrap-up
• Handling serial and parallel bottlenecks better
• Caching in multi-core systems

Interconnect Basics

Handling Contention in a Switch
• Two packets trying to use the same link at the same time
• What do you do?
  • Buffer one
  • Drop one
  • Misroute one (deflection)
• Tradeoffs?

Multi-Core Design

Many Cores on Chip
• Simpler and lower power than a single large core
• Large scale parallelism on chip
• Examples:
  • AMD Barcelona: 4 cores
  • Intel Core i7: 8 cores
  • IBM Cell BE: 8+1 cores
  • IBM POWER7: 8 cores
  • Sun Niagara II: 8 cores
  • Nvidia Fermi: 448 “cores”
  • Intel SCC: 48 cores, networked
  • Tilera TILE Gx: 100 cores, networked

With Many Cores on Chip
• What we want: N times the performance with N times the cores when we parallelize an application on N cores
• What we get: Amdahl’s Law (serial bottleneck)

Caveats of Parallelism
• Amdahl’s Law
  • f: Parallelizable fraction of a program
  • N: Number of processors

$$\text{Speedup} = \frac{1}{(1 - f) + \frac{f}{N}}$$

  • Amdahl, “Validity of the single processor approach to achieving large scale computing capabilities,” AFIPS 1967.
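As a quick numeric check of Amdahl's Law as stated above, the following minimal Python sketch evaluates the speedup for a parallelizable fraction f on N processors. The function names `amdahl_speedup` and `max_speedup` are illustrative and not part of the lecture, and the model assumes a perfectly parallel portion with no overheads.

```python
def amdahl_speedup(f: float, n: int) -> float:
    """Speedup = 1 / ((1 - f) + f / n) for parallelizable fraction f on n processors."""
    if not 0.0 <= f <= 1.0:
        raise ValueError("f must be in [0, 1]")
    if n < 1:
        raise ValueError("n must be at least 1")
    return 1.0 / ((1.0 - f) + f / n)


def max_speedup(f: float) -> float:
    """Limit of the speedup as n grows without bound: 1 / (1 - f)."""
    return float("inf") if f == 1.0 else 1.0 / (1.0 - f)


if __name__ == "__main__":
    # Illustrative sweep: even with 90% of the program parallelizable,
    # the speedup saturates well below the core count.
    for n in (1, 4, 16, 64, 1024):
        print(n, round(amdahl_speedup(0.9, n), 2))
```

For f = 0.9 the speedup can never exceed 10, however many processors are added, because the serial 10% of the program bounds the total execution time.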
• Maximum speedup limited by serial portion: serial bottleneck
• Parallel portion is usually not perfectly parallel
  • Synchronization overhead (e.g., updates to shared data)
  • Load imbalance overhead (imperfect parallelization)
  • Resource sharing overhead (contention among N processors)

Demands in Different Code Sections
• What we want:
  • In a serial code section: one powerful “large” core
  • In a parallel code section: many wimpy “small” cores
• These two conflict with each other:
  • If you have a single powerful core, you cannot have many cores
  • A small core is much more energy and area efficient than a large core

“Large” vs. “Small” Cores
Large core:
• Out-of-order
• Wide fetch, e.g., 4-wide
• Deeper pipeline
• Aggressive branch predictor (e.g., hybrid)
• Multiple functional units
• Trace cache
• Memory dependence speculation
Small core:
• In-order
• Narrow fetch, e.g., 2-wide
• Shallow pipeline
• Simple branch predictor (e.g., Gshare)
• Few functional units
Large cores are power inefficient: e.g., 2x performance for 4x area (power)

Large vs. Small Cores
• Grochowski et al., “Best of both Latency and Throughput,” ICCD 2004.

Meet Large: IBM POWER4
• Tendler et al., “POWER4 system microarchitecture,” IBM J R&D, 2002.
• Another symmetric multi-core chip…
• But, fewer and more powerful cores

IBM POWER4
• 2 cores, out-of-order execution
• 100-entry instruction window in each core
• 8-wide instruction fetch, issue, execute
• Large, local+global hybrid branch predictor
• 1.5MB, 8-way L2 cache
• Aggressive stream based prefetching

IBM POWER5
• Kalla et al., “IBM Power5 Chip: A Dual-Core Multithreaded Processor,” IEEE Micro 2004.

Meet Small: Sun Niagara (UltraSPARC T1)
• Kongetira et al., “Niagara: A 32-Way Multithreaded SPARC Processor,” IEEE Micro 2005.

Niagara Core
• 4-way fine-grain multithreaded, 6-stage, dual-issue in-order
• Round robin thread selection (unless cache miss)
• Shared FP unit among cores

Remember the Demands
• What we want:
  • In a serial code section: one powerful “large” core
  • In a parallel code section: many wimpy “small” cores
• These two conflict with each other:
  • If you have a single powerful core, you cannot have many cores
  • A small core is much more energy and area efficient than a large core
• Can we get the best of both worlds?

Performance vs. Parallelism
Assumptions:
1. A small core takes an area budget of 1 and has a performance of 1
2. A large core takes an area budget of 4 and has a performance of 2

Tile-Large Approach
[Figure: “Tile-Large” chip made of four large cores.]
• Tile a few large cores
• IBM Power 5, AMD Barcelona, Intel Core2Quad, Intel Nehalem
+ High performance on single thread, serial code sections (2 units)
- Low throughput on parallel program portions (8 units)

Tile-Small Approach
[Figure: “Tile-Small” chip made of sixteen small cores.]
• Tile many small cores
• Sun Niagara, Intel Larrabee, Tilera TILE (tile ultra-small)
+ High throughput on the parallel part (16 units)
- Low performance on the serial part, single thread (1 unit)

Can we get the best of both worlds?
• Tile Large
  + High performance on single thread, serial code sections (2 units)
  - Low throughput on parallel program portions (8 units)
• Tile Small
  + High throughput on the parallel part (16 units)
  - Low performance on the serial part, single thread (1 unit), reduced single-thread performance compared to existing single thread processors
• Idea: Have both large and small cores on the same chip (performance asymmetry)

Asymmetric Chip Multiprocessor (ACMP)
[Figure: “Tile-Large” (four large cores), “Tile-Small” (sixteen small cores), and ACMP (one large core plus twelve small cores) chips side by side.]
• Provide one large core and many small cores
+ Accelerate serial part using the large core (2 units)
+ Execute parallel part on small cores and large core for high throughput (12+2 units)

Accelerating Serial Bottlenecks
[Figure: ACMP approach, with the single thread of a serial section running on the large core.]

ACMP Performance vs. Parallelism
Area budget = 16 small cores

| | “Tile-Large” | “Tile-Small” | ACMP |
|---|---|---|---|
| Large cores | 4 | 0 | 1 |
| Small cores | 0 | 16 | 12 |
| Serial performance | 2 | 1 | 2 |
| Parallel throughput | 2 x 4 = 8 | 1 x 16 = 16 | 1 x 2 + 1 x 12 = 14 |

Caveats of Parallelism, Revisited
• Amdahl’s Law
  • f: Parallelizable fraction of a program
  • N: Number of processors

$$\text{Speedup} = \frac{1}{(1 - f) + \frac{f}{N}}$$

  • Amdahl, “Validity of the single processor approach to achieving large scale computing capabilities,” AFIPS 1967.
• Maximum speedup limited by serial portion: serial bottleneck
• Parallel portion is usually not perfectly parallel
  • Synchronization overhead (e.g., updates to shared data)
  • Load imbalance overhead (imperfect parallelization)
  • Resource sharing overhead (contention among N processors)

Accelerating Parallel Bottlenecks
• Serialized or imbalanced execution in the parallel portion can also benefit from a large core
• Examples:
  • Critical sections that are contended
  • Parallel stages that take longer than others to execute
• Idea: Identify the code portions that cause serialization and execute them on a large core

An Example: Accelerated Critical Sections
• Problem: Synchronization and parallelization are difficult for programmers; critical sections are a performance bottleneck
• Idea: HW/SW ships critical sections to a large, powerful core in an asymmetric multi-core architecture
• Benefit:
  • Reduces serialization due to contended locks
  • Reduces the performance impact of hard-to-parallelize sections
  • Programmer does not need to (heavily) optimize parallel code: fewer bugs, improved productivity
• Suleman et al., “Accelerating Critical Section Execution with Asymmetric Multi-Core Architectures,” ASPLOS 2009, IEEE Micro Top Picks 2010.
• Suleman et al., “Data Marshaling for Multi-Core Architectures,” ISCA 2010, IEEE Micro Top Picks 2011.

Contention for Critical Sections
[Figure: execution timelines of Threads 1 to 4 over times t1 to t7, showing parallel, critical-section, and idle phases; a second timeline shows the same threads when critical sections execute 2x faster.]
• Accelerating critical sections not only helps the thread executing the critical sections, but also the waiting threads

Impact of Critical Sections on Scalability
• Contention for critical sections increases with the number of threads and limits scalability
• Example critical section from MySQL (oltp-1):
    LOCK_openAcquire()
    foreach (table locked by thread)
        table.lockrelease()
        table.filerelease()
        if (table.temporary)
            table.close()
    LOCK_openRelease()
[Figure: speedup (0 to 8) vs. chip area in cores (0 to 32) for MySQL (oltp-1).]

Accelerated Critical Sections
Example critical section:
    EnterCS()
    PriorityQ.insert(…)
    LeaveCS()
1. P2 encounters a critical section (CSCALL)
2. P2 sends CSCALL Request to CSRB
3. P1 executes Critical Section
4. P1 sends CSDONE signal
[Figure: cores P1 to P4 on an on-chip interconnect; P1 is the core executing critical sections and holds the Critical Section Request Buffer (CSRB).]

Accelerated Critical Sections (ACS)
Small core, conventional locking:
    A = compute()
    LOCK X
    result = CS(A)
    UNLOCK X
    print result
Small core, with ACS:
    A = compute()
    PUSH A
    CSCALL X, Target PC
    … (waits for the CSDONE response)
    POP result
    print result
Large core:
    CSCALL request arrives (small core sends X, TPC, STACK_PTR, CORE_ID)
    Request waits in the Critical Section Request Buffer (CSRB)
    TPC: Acquire X
    POP A
    result = CS(A)
    PUSH result
    Release X
    CSRET X
    CSDONE response is sent to the small core
• Suleman et al., “Accelerating Critical Section Execution with Asymmetric Multi-Core Architectures,” ASPLOS 2009.
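
The CSCALL/CSDONE flow above can be mimicked in software to make the message sequence concrete. The following is only a minimal sketch and a loose analogy for a hardware mechanism: the class names `CSCallRequest` and `LargeCore`, the method names, and the FIFO service order of the CSRB are all assumptions made for illustration, not details taken from the lecture or the cited paper. A Python callable stands in for the target PC, and a plain argument stands in for the value passed on the stack.

```python
import heapq
from collections import deque
from dataclasses import dataclass
from typing import Any, Callable, Deque, List, Tuple


@dataclass
class CSCallRequest:
    """One CSCALL message from a small core (field names are illustrative)."""
    lock_id: str                   # X: the lock guarding the critical section
    target: Callable[[Any], Any]   # stands in for the target PC (TPC)
    arg: Any                       # stands in for the value pushed on the stack
    core_id: int                   # CORE_ID of the requesting small core


class LargeCore:
    """Software stand-in for the large core and its request buffer (CSRB)."""

    def __init__(self) -> None:
        self.csrb: Deque[CSCallRequest] = deque()  # assumed FIFO buffer

    def cscall(self, request: CSCallRequest) -> None:
        """A small core sends a CSCALL request; it waits in the CSRB."""
        self.csrb.append(request)

    def service_all(self) -> List[Tuple[int, Any]]:
        """Run queued critical sections one at a time, in arrival order.

        Returns one (core_id, result) pair per request, standing in for
        the CSDONE responses sent back to the small cores.
        """
        responses = []
        while self.csrb:
            request = self.csrb.popleft()
            # Only this core runs critical sections in the sketch, so the
            # shared data and lock X never move to another core.
            result = request.target(request.arg)
            responses.append((request.core_id, result))
        return responses


def demo() -> Tuple[List[Tuple[int, Any]], List[int]]:
    """Three small cores insert into a shared priority queue via CSCALL."""
    priority_q: List[int] = []  # shared data, touched only on the large core

    def insert(item: int) -> int:
        heapq.heappush(priority_q, item)
        return len(priority_q)  # result handed back to the caller

    large = LargeCore()
    for core_id, value in [(2, 30), (3, 10), (4, 20)]:
        large.cscall(CSCallRequest("X", insert, value, core_id))
    return large.service_all(), priority_q
```

Calling `demo()` services the three requests in arrival order and leaves the smallest item at the head of the queue. The sketch omits everything that makes the real mechanism interesting for performance, such as the latency of the request and response messages and the faster execution on the large core.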

ACS Comparison Points
[Figure: three equal-area chip layouts built from Niagara-like small cores: SCMP with small cores only, and ACMP and ACS with one large core in place of four small cores.]
• SCMP
  • All small cores
  • Conventional locking
• ACMP
  • One large core (area-equal to 4 small cores)
  • Conventional locking
• ACS
  • ACMP with a CSRB
  • Accelerates critical sections

ACS Performance
• Chip area = 32 small cores
• SCMP = 32 small cores
• ACMP = 1 large and 28 small cores
• Equal-area comparison
• Number of threads = best threads
[Figure: bar chart of speedup over SCMP (axis 0 to 160) for pagemine, puzzle, qsort, sqlite, tsp, iplookup, oltp-1, oltp-2, specjbb, webcache, and hmean, grouped into coarse-grain-lock and fine-grain-lock workloads; bars are split into “Accelerating Sequential Kernels” and “Accelerating Critical Sections”; three bars exceed the axis range and are labeled 269, 180, and 185.]

ACS Performance Tradeoffs
• Fewer threads vs. accelerated critical sections
  • Accelerating critical sections offsets loss in throughput
  • As the number of cores (threads) on chip increases:
    • Fractional loss in parallel performance decreases
    • Increased contention for critical sections makes acceleration more beneficial
• Overhead of CSCALL/CSDONE vs. better lock locality
  • ACS avoids “ping-ponging” of locks among caches by keeping them at the large core
• More cache misses for private data vs. fewer misses for shared data

Cache misses for private data
• Puzzle benchmark: PriorityHeap.insert(NewSubProblems)
• Private data: NewSubProblems
• Shared data: the priority heap

ACS Performance Tradeoffs
• More cache misses for private data vs. fewer misses for shared data
  • Cache misses reduce if shared data > private data

Equal-Area Comparisons
• Number of threads = number of cores
[Figure: twelve panels plotting speedup over a small core vs. chip area (small cores, 0 to 32) for SCMP, ACMP, and ACS: (a) ep, (b) is, (c) pagemine, (d) puzzle, (e) qsort, (f) tsp, (g) sqlite, (h) iplookup, (i) oltp-1, (j) oltp-2, (k) specjbb, (l) webcache.]
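
The equal-area results above are measurements from the cited evaluation. As a rough analytic complement, the lecture's earlier assumptions (a small core has area 1 and performance 1; a large core has area 4 and performance 2) can be combined with Amdahl's Law for the three 16-unit chips. The sketch below is hypothetical: the `Chip` class, the `speedup` function, and the formula 1 / ((1 - f) / serial performance + f / parallel throughput) are assumptions of this example, and the model ignores synchronization, load imbalance, and contention.

```python
from dataclasses import dataclass

# Lecture assumptions: area and performance of each core type.
SMALL_AREA, SMALL_PERF = 1, 1
LARGE_AREA, LARGE_PERF = 4, 2


@dataclass(frozen=True)
class Chip:
    name: str
    large_cores: int
    small_cores: int

    def area(self) -> int:
        """Total area in units of one small core."""
        return self.large_cores * LARGE_AREA + self.small_cores * SMALL_AREA

    def serial_perf(self) -> int:
        """Serial code runs on the fastest single core available."""
        return LARGE_PERF if self.large_cores > 0 else SMALL_PERF

    def parallel_throughput(self) -> int:
        """Parallel code uses every core on the chip."""
        return self.large_cores * LARGE_PERF + self.small_cores * SMALL_PERF


def speedup(chip: Chip, f: float) -> float:
    """Speedup over one small core for parallelizable fraction f."""
    if not 0.0 <= f <= 1.0:
        raise ValueError("f must be in [0, 1]")
    return 1.0 / ((1.0 - f) / chip.serial_perf() + f / chip.parallel_throughput())


CHIPS = [Chip("Tile-Large", 4, 0), Chip("Tile-Small", 0, 16), Chip("ACMP", 1, 12)]

if __name__ == "__main__":
    for f in (0.5, 0.9, 0.99):
        print(f, {chip.name: round(speedup(chip, f), 2) for chip in CHIPS})
```

Under these assumptions, at f = 0.9 the ACMP reaches a speedup of 8.75, compared with 6.4 for Tile-Small and about 6.15 for Tile-Large. Tile-Small only overtakes the ACMP when f is very close to 1 (above roughly 0.98 in this model), where its higher parallel throughput of 16 versus 14 dominates.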