#32 - Machine Learning 11/07/07 BCB 444/544 Machine Learning


Lecture 32
Machine Learning
BCB 444/544

Required Reading (before lecture)

Fri Nov 2 - Lecture 30
Phylogenetics – Distance-Based Methods
• Chp 11 - pp 142 – 169

Mon Nov 5 - Lecture 31
Phylogenetics – Parsimony and ML
• Chp 11 - pp 142 – 169

Wed Nov 7 - Lecture 32
Machine Learning
#32_Nov07

Fri Nov 9 - Lecture 33
Functional and Comparative Genomics
• Chp 17 and Chp 18

BCB 444/544 F07 ISU Terribilini #32- Machine Learning


BCB 544 Only:
New Homework Assignment
544 Extra#2
Due:
√PART 1 - ASAP
PART 2 - meeting prior to 5 PM Fri Nov 2

Part 1 - Brief outline of Project, email to Drena & Michael
after response/approval, then:
Part 2 - More detailed outline of project
Read a few papers and summarize status of problem
Schedule meeting with Drena & Michael to discuss ideas

Seminars this Week

BCB List of URLs for Seminars related to Bioinformatics:
http://www.bcb.iastate.edu/seminars/index.html

• Nov 7 Wed - BBMB Seminar 4:10 in 1414 MBB
• Sharon Roth Dent, MD Anderson Cancer Center
• Role of chromatin and chromatin modifying proteins in regulating gene expression

• Nov 8 Thurs - BBMB Seminar 4:10 in 1414 MBB
• Jianzhi George Zhang, U. Michigan
• Evolution of new functions for proteins

• Nov 9 Fri - BCB Faculty Seminar 2:10 in 102 SciI
• Amy Andreotti, ISU
• Something about NMR


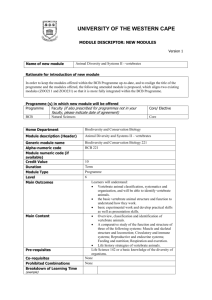

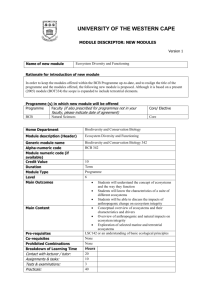

Chp 11 – Phylogenetic Tree Construction Methods and Programs

Distance-Based Methods
Character-Based Methods
Phylogenetic Tree Evaluation
Phylogenetic Programs

Xiong: Chp 11 Phylogenetic Tree Construction Methods and Programs
SECTION IV MOLECULAR PHYLOGENETICS

BCB 444/544 Fall 07 Dobbs

Phylogenetic Tree Evaluation

• Bootstrapping
• Jackknifing
• Bayesian Simulation
• Statistical difference tests (are two trees significantly different?)
  • Kishino-Hasegawa Test (paired t-test)
  • Shimodaira-Hasegawa Test (χ2 test)

Bootstrapping

• A bootstrap sample is obtained by sampling sites randomly with replacement
• Obtain a data matrix with the same number of taxa and the same number of characters as the original one
• Construct a tree for each sample
• For each branch in the original tree, compute the fraction of bootstrap samples in which that branch appears
• This assigns a bootstrap support value to each branch
• Idea: if a grouping has a lot of support, it will be supported by at least some positions in most of the bootstrap samples

Bootstrapping Comments

• Bootstrapping doesn’t really assess the accuracy of a tree; it only indicates the consistency of the data
• To get reliable statistics, bootstrapping needs to be done on your tree 500 – 1000 times, which is a big problem if your tree took a few days to construct
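The resampling and support-counting steps of bootstrapping can be sketched in Python. This is a minimal illustration, not any particular program's implementation: building the trees is left out, so `replicate_clades` is a hypothetical stand-in for the clades (sets of taxa) that a tree-building program would report for each bootstrap tree. The toy alignment, the function names, and the seed are all hypothetical.

```python
import random


def bootstrap_sample(alignment, rng):
    """Resample alignment sites (columns) randomly with replacement.

    alignment: dict mapping taxon name -> aligned sequence (all the same length).
    Returns an alignment with the same taxa and the same number of sites.
    """
    n_sites = len(next(iter(alignment.values())))
    columns = [rng.randrange(n_sites) for _ in range(n_sites)]
    return {taxon: "".join(seq[c] for c in columns) for taxon, seq in alignment.items()}


def branch_support(clade, replicate_clades):
    """Fraction of bootstrap trees in which `clade` (a frozenset of taxa) appears.

    replicate_clades: one set of clades per bootstrap tree (hypothetical input;
    in practice these would come from the tree-building program).
    """
    hits = sum(1 for clades in replicate_clades if clade in clades)
    return hits / len(replicate_clades)


# Hypothetical toy alignment: 3 taxa, 6 sites.
aln = {"A": "ACGTAC", "B": "ACGTTC", "C": "TCGAAC"}
sample = bootstrap_sample(aln, random.Random(0))
```

Because columns are drawn with replacement, some sites appear more than once in a replicate and others not at all; repeating this 500 – 1000 times and counting how often each branch recurs gives its support value.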


Jackknifing

• Another resampling technique
• Randomly delete half of the sites in the dataset
• Construct a new tree with this smaller dataset and see how often taxa are grouped together
• Advantage – sites aren’t duplicated
• Disadvantage – again, it really only measures the consistency of the data

Bayesian Simulation

• Using a Bayesian ML method to produce a tree automatically calculates the probability of many trees during the search
• Most trees sampled in the Bayesian ML search are near an optimal tree

Phylogenetic Programs

• Huge list at: http://evolution.genetics.washington.edu/phylip/software.html
• PAUP* - one of the most popular programs, commercial, Mac and Unix only, nice user interface
• PHYLIP – free, multiplatform, a bit difficult to use but web servers make it easier
• WebPhylip – another interface for PHYLIP online
• TREE-PUZZLE – uses a heuristic to allow ML on large datasets, also available as a web server
• PHYML – web based, uses genetic algorithm
• MrBayes – Bayesian program, fast and can handle large datasets, multiplatform download
• BAMBE – web based Bayesian program
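The jackknife resampling step (randomly deleting half of the sites) differs from the bootstrap only in sampling sites without replacement. A minimal Python sketch, assuming the alignment is stored as a dict of equal-length aligned sequences; the toy data, function name, and seeds are hypothetical, and tree construction is again left out.

```python
import random


def jackknife_sample(alignment, rng, keep_fraction=0.5):
    """Randomly delete sites, keeping `keep_fraction` of them (no site is duplicated).

    alignment: dict mapping taxon name -> aligned sequence (all the same length).
    Kept sites stay in their original order.
    """
    n_sites = len(next(iter(alignment.values())))
    n_keep = int(n_sites * keep_fraction)
    kept = sorted(rng.sample(range(n_sites), n_keep))  # sampling WITHOUT replacement
    return {taxon: "".join(seq[i] for i in kept) for taxon, seq in alignment.items()}


# Hypothetical toy alignment: 3 taxa, 8 sites, so each replicate keeps 4 sites.
aln = {"A": "ACGTACGT", "B": "ACGTTCGA", "C": "TCGAACGT"}
replicates = [jackknife_sample(aln, random.Random(seed)) for seed in range(100)]
```

Each replicate would then be passed to a tree-building program, and the grouping frequencies counted exactly as for bootstrap support.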


Final Comments on Phylogenetics

• No method is perfect
• Different methods make very different assumptions
• If multiple methods using different assumptions come up with similar results, we should trust those results more than the results of any single method

Machine Learning

• What is learning?
• What is machine learning?
• Learning algorithms
• Machine learning applied to bioinformatics and computational biology

• Some slides adapted from Dr. Vasant Honavar and Dr. Byron Olson

What is Learning?

• Learning is a process by which the learner improves its performance on a task or a set of tasks as a result of experience within some environment

What is Machine Learning?

• Machine learning is an area of artificial intelligence concerned with the development of techniques that allow computers to “learn”
• Machine learning is a method for creating computer programs by the analysis of data sets
• We understand a phenomenon when we can write a computer program that models it at the desired level of detail

Contributing Disciplines

• Computer Science – artificial intelligence, algorithms and complexity, databases, data mining
• Statistics – statistical inference, experimental design, exploratory data analysis
• Mathematics – abstract algebra, logic, information theory, probability theory
• Psychology and neuroscience – behavior, perception, learning, memory, problem solving
• Philosophy – ontology, epistemology, philosophy of mind, philosophy of science

Types of Learning

• Rote learning – useful when it is less expensive to store and retrieve some information than to compute it
• Learning from instruction – transform instructions into useful knowledge
• Learning from examples – extract predictive or descriptive regularities from data
• Learning from deduction – generalize instances of deductive problem-solving
• Learning from exploration – learn to choose actions that maximize reward
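Rote learning, which pays off when storing and retrieving an answer is cheaper than recomputing it, can be illustrated by memoization (caching). This Python sketch is only an analogy under that reading; `make_rote_learner` and `slow_square` are hypothetical names.

```python
def make_rote_learner(compute):
    """Wrap an expensive function so each answer is computed once, then stored and retrieved."""
    memory = {}

    def lookup(x):
        if x not in memory:  # not seen before: compute and store
            memory[x] = compute(x)
        return memory[x]  # seen before: retrieve instead of recomputing

    lookup.memory = memory
    return lookup


calls = []  # records each real computation (for illustration only)


def slow_square(x):
    """Hypothetical stand-in for an expensive computation."""
    calls.append(x)
    return x * x


square = make_rote_learner(slow_square)
first = square(4)  # computed and stored
second = square(4)  # retrieved from memory; slow_square is not called again
```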

Machine Learning Applications

• Bioinformatics and Computational Biology
• Environmental Informatics
• Medical Informatics
• Cognitive Science
• E-Commerce
• Human Computer Interaction
• Robotics
• Engineering

Machine Learning Algorithms

• Many types of algorithms differing in the structure of the learning problem as well as the approach to learning used
• Regression vs. Classification
• Supervised vs. Unsupervised
• Generative vs. Discriminative
• Linear vs. Non-Linear

Machine Learning Algorithms

• Generative vs. Discriminative

• Philosophical difference

• Generative models attempt to recreate or

understand the process that generated the data

• Discriminative models attempt to simply separate

or determine the class of input data without regard

to the process


Machine Learning Algorithms

• Supervised vs. Unsupervised
• Data difference
• Supervised learning involves using pairs of input/output relationships to learn an input-output mapping (called labeled pairs, often denoted {X,Y})
• Unsupervised learning involves examining input data to find patterns (clustering)
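The data difference between supervised and unsupervised learning can be made concrete with a toy 1-D example. In this hedged Python sketch, the supervised learner sees labeled pairs {X,Y} and learns one centroid per label (a nearest-centroid classifier), while the unsupervised learner sees only the inputs and groups them with k-means. Both algorithm choices, the data, and the labels "neg"/"pos" are illustrative assumptions.

```python
def fit_centroids(pairs):
    """Supervised: learn one mean input value per label from labeled (x, y) pairs."""
    sums, counts = {}, {}
    for x, y in pairs:
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {y: sums[y] / counts[y] for y in sums}


def predict(centroids, x):
    """Assign x the label of the nearest centroid."""
    return min(centroids, key=lambda y: abs(x - centroids[y]))


def kmeans_1d(xs, k=2, n_iter=20):
    """Unsupervised: group unlabeled inputs into k clusters (Lloyd's k-means)."""
    centers = sorted(xs)[:: max(1, len(xs) // k)][:k]  # simple deterministic start
    for _ in range(n_iter):
        groups = [[] for _ in centers]
        for x in xs:
            j = min(range(len(centers)), key=lambda i: abs(x - centers[i]))
            groups[j].append(x)
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return centers


# Hypothetical data: the supervised learner gets the labels, k-means does not.
pairs = [(1.0, "neg"), (1.2, "neg"), (5.0, "pos"), (5.4, "pos")]
centroids = fit_centroids(pairs)
label = predict(centroids, 4.0)
clusters = kmeans_1d([x for x, _ in pairs], k=2)
```

k-means finds the same two groups here, but it cannot name them; only the labeled pairs tell the supervised learner which group is "pos".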

Machine Learning Algorithms

• Regression vs. Classification
• Structural difference
• Regression algorithms attempt to map inputs to numeric, typically continuous, outputs (e.g., real numbers)
• Classification algorithms attempt to map inputs into one of a set of classes (colors, cellular locations, good and bad credit risks, etc.)
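The structural difference can be seen by running the same input through a regression model and a classifier built on it. In this hedged sketch, the least-squares line produces a number, while the classifier maps that number to one of two classes by a threshold. The data, the threshold, and the class names "high"/"low" are hypothetical.

```python
def fit_line(xs, ys):
    """Regression: least-squares fit of y = a*x + b (continuous output)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, my - a * mx


def classify_by_threshold(x, a, b, threshold):
    """Classification: map the same input to one of a set of classes."""
    return "high" if a * x + b >= threshold else "low"


a, b = fit_line([0, 1, 2, 3], [1, 3, 5, 7])  # the points lie on y = 2x + 1
value = a * 2.5 + b  # regression output: 6.0
category = classify_by_threshold(2.5, a, b, threshold=5.0)  # classification output: "high"
```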

• Linear vs. Non-linear

• Modeling difference

• Linear models involve only linear combinations of

input variables

• Non-linear models are not restricted in their form

(commonly include exponentials or quadratic terms)
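A short Python sketch of the modeling difference, using squared terms as the non-linear ingredient. The "inside the unit circle" task and all weights are hypothetical: the quadratic score 1 - x1² - x2² is positive exactly inside the circle, something no linear combination of x1 and x2 can express, since no single straight line separates the inside of a circle from its outside.

```python
def linear_model(x, w, b):
    """Only a linear combination of the inputs: w1*x1 + ... + wn*xn + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def quadratic_model(x, w, b):
    """Non-linear: uses squared input terms, w1*x1**2 + ... + wn*xn**2 + b."""
    return sum(wi * xi ** 2 for wi, xi in zip(w, x)) + b


# Hypothetical task: is a 2-D point inside the unit circle?
inside = quadratic_model((0.5, 0.5), (-1.0, -1.0), 1.0)  # 1 - 0.25 - 0.25 = 0.5
outside = quadratic_model((2.0, 0.0), (-1.0, -1.0), 1.0)  # 1 - 4 - 0 = -3
line_score = linear_model((1.0, 2.0), (0.5, -1.0), 0.0)  # 0.5 - 2.0 = -1.5
```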


Summary of Machine Learning Algorithms

• This is only the tip of the iceberg
• No single algorithm works best for every application
• Some simple algorithms are effective on many data sets
• Better results can be obtained by preprocessing the data to suit the algorithm or adapting the algorithm to suit the characteristics of the data

Trade Off Between Specificity and Sensitivity

• Classification threshold controls a trade off between specificity and sensitivity
• High specificity – predict fewer instances with higher confidence
• High sensitivity – predict more instances with lower confidence
• Commonly shown as a Receiver Operating Characteristic (ROC) curve
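The threshold trade-off can be computed directly. This hedged Python sketch counts true/false positives and negatives at a given score threshold, derives sensitivity (true positive rate) and specificity (true negative rate), and sweeps the threshold to get ROC points (1 - specificity, sensitivity). It also shows why accuracy alone misleads: with 95 negatives and 5 positives, predicting everything negative scores 95% accuracy with 0 sensitivity. All scores and labels are hypothetical.

```python
def confusion(scores, labels, threshold):
    """Count TP, FP, TN, FN when predicting positive for score >= threshold."""
    tp = fp = tn = fn = 0
    for s, y in zip(scores, labels):
        if s >= threshold:
            if y == 1:
                tp += 1
            else:
                fp += 1
        elif y == 1:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def metrics(scores, labels, threshold):
    """Return (sensitivity, specificity, accuracy) at one threshold."""
    tp, fp, tn, fn = confusion(scores, labels, threshold)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0  # true positive rate
    specificity = tn / (tn + fp) if tn + fp else 0.0  # true negative rate
    accuracy = (tp + tn) / len(labels)
    return sensitivity, specificity, accuracy


def roc_points(scores, labels):
    """(1 - specificity, sensitivity) for each distinct threshold, high to low."""
    points = []
    for t in sorted(set(scores), reverse=True):
        sens, spec, _ = metrics(scores, labels, t)
        points.append((1 - spec, sens))
    return points


# Hypothetical imbalanced data: 95 negatives, 5 positives.
labels = [0] * 95 + [1] * 5
scores = [0.1] * 95 + [0.9] * 5
all_negative = metrics(scores, labels, threshold=1.0)  # no score reaches 1.0

# Hypothetical overlapping scores: lowering the threshold trades specificity for sensitivity.
s = [0.9, 0.8, 0.7, 0.6, 0.2]
y = [1, 0, 1, 0, 0]
strict = metrics(s, y, 0.75)
loose = metrics(s, y, 0.55)
```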

Measuring Performance

• Using any single measure of performance is problematic
• Accuracy can be misleading – when 95% of examples are negative, we can achieve 95% accuracy by predicting all negative. We are 95% accurate, but 100% wrong on positive examples

Machine Learning in Bioinformatics

• Gene finding
• Binding site identification
• Protein structure prediction
• Protein function prediction
• Genetic network inference
• Cancer diagnosis
• etc.

Some Examples of Algorithms

• Naïve Bayes
• Neural network
• Support Vector Machine

Sample Learning Scenario – Protein Function Prediction

• Problem: Given an amino acid sequence, classify each residue as RNA binding or non-RNA binding
• Input to the classifier is a string of amino acid identities
• Output from the classifier is a class label, either binding or not

Bayes Theorem

$$P(A \mid B) = \frac{P(A)\,P(B \mid A)}{P(B)}$$

P(A) = prior probability
P(A|B) = posterior probability

Bayes Theorem Applied to Classification

Predicting RNA binding sites in proteins

$$P(\text{binding} \mid \text{aa seq}) = \frac{P(\text{binding})\,P(\text{aa seq} \mid \text{binding})}{P(\text{aa seq})}$$

Naïve Bayes Algorithm

$$P(c = 1 \mid X = x) = \frac{P(c = 1)\,P(X = x \mid c = 1)}{P(X = x)}$$

$$P(c = 0 \mid X = x) = \frac{P(c = 0)\,P(X = x \mid c = 0)}{P(X = x)}$$

Taking the ratio cancels P(X = x), and the naïve assumption that the features X_1, ..., X_n are conditionally independent given the class turns the joint likelihood into a product:

$$\frac{P(c = 1 \mid X = x)}{P(c = 0 \mid X = x)} = \frac{P(X_1 = x_1, \ldots, X_n = x_n \mid c = 1)\,P(c = 1)}{P(X_1 = x_1, \ldots, X_n = x_n \mid c = 0)\,P(c = 0)} = \frac{P(c = 1)\prod_{i=1}^{n} P(X_i = x_i \mid c = 1)}{P(c = 0)\prod_{i=1}^{n} P(X_i = x_i \mid c = 0)}$$

Naïve Bayes Algorithm

Assign c = 1 if

$$\frac{P(c = 1 \mid X = x)}{P(c = 0 \mid X = x)} \ge \theta$$

Example

ARG 6

TSKKKRQRGSR

$$\frac{p(X_1 = T \mid c = 1)\,p(X_2 = S \mid c = 1) \cdots}{p(X_1 = T \mid c = 0)\,p(X_2 = S \mid c = 0) \cdots}$$
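The naïve Bayes decision rule above can be sketched in Python. This is a minimal illustration, not the method that produced the L15 predictions: the 3-residue toy windows, the alphabet, and the add-one pseudocount (so that a residue never seen in training does not give a zero probability) are all assumptions. The ratio is compared with θ in log space, which gives the same decision because log is increasing and avoids underflow when many small probabilities are multiplied.

```python
import math
from collections import Counter


def train_naive_bayes(windows, labels, alphabet, pseudocount=1.0):
    """Estimate P(c) and P(X_i = a | c) from labeled windows, with pseudocount smoothing."""
    n = len(windows[0])
    class_counts = Counter(labels)
    priors = {c: class_counts[c] / len(labels) for c in class_counts}
    # counts[c][i][a] = number of class-c windows with residue a at position i
    counts = {c: [Counter() for _ in range(n)] for c in class_counts}
    for w, c in zip(windows, labels):
        for i, a in enumerate(w):
            counts[c][i][a] += 1
    cond = {}
    for c in class_counts:
        denom = class_counts[c] + pseudocount * len(alphabet)
        cond[c] = [{a: (counts[c][i][a] + pseudocount) / denom for a in alphabet}
                   for i in range(n)]
    return priors, cond


def log_ratio(window, priors, cond):
    """log of P(c=1) prod_i P(X_i=x_i|c=1) / (P(c=0) prod_i P(X_i=x_i|c=0))."""
    score = math.log(priors[1]) - math.log(priors[0])
    for i, a in enumerate(window):
        score += math.log(cond[1][i][a]) - math.log(cond[0][i][a])
    return score


def classify(window, priors, cond, theta=1.0):
    """Assign c = 1 if the posterior ratio is >= theta (compared in log space)."""
    return 1 if log_ratio(window, priors, cond) >= math.log(theta) else 0


# Hypothetical toy training windows; 1 = RNA binding, 0 = not binding.
windows = ["KRK", "RKR", "KKR", "AGA", "GAG", "AAG"]
labels = [1, 1, 1, 0, 0, 0]
priors, cond = train_naive_bayes(windows, labels, alphabet="AGKR")
```

Raising θ makes the classifier predict "binding" less often, which is the same threshold trade-off between sensitivity and specificity discussed under Measuring Performance.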

Predictions for Ribosomal protein L15
PDB ID 1JJ2:K
[Figure: Actual vs. Predicted]

Neural networks

• The most successful methods for predicting secondary structure are based on neural networks. The overall idea is that neural networks can be trained to recognize amino acid patterns in known secondary structure units, and to use these patterns to distinguish between the different types of secondary structure.
• Neural networks classify “input vectors” or “examples” into categories (2 or more).
• They are loosely based on biological neurons.

Biological Neurons

Dendrites receive inputs, Axon gives output
Image from Christos Stergiou and Dimitrios Siganos
http://www.doc.ic.ac.uk/~nd/surprise_96/journal/vol4/cs11/report.html

Artificial Neuron – “Perceptron”

Image from Christos Stergiou and Dimitrios Siganos
http://www.doc.ic.ac.uk/~nd/surprise_96/journal/vol4/cs11/report.html

The perceptron

• The input is a vector X and the weights can be stored in another vector W.
• The perceptron computes the dot product S = X·W
• The output F is a function of S: it is often discrete (i.e., 1 or 0), in which case the function is the step function.
• For continuous output, a sigmoid is often used:

$$F(S) = \frac{1}{1 + e^{-S}}$$

[Figure: sigmoid curve rising from 0 to 1, equal to 1/2 at S = 0]

The perceptron

Input (X_1, X_2, ..., X_N, weighted by w_1, w_2, ..., w_N) → Threshold Unit (threshold T) → Output

$$S = \sum_{i=1}^{N} X_i w_i, \qquad F = \begin{cases} 1 & S > T \\ 0 & S < T \end{cases}$$

The perceptron classifies the input vector X into two categories.

If the weights and threshold T are not known in advance, the perceptron must be trained. Ideally, the perceptron must be trained to return the correct answer on all training examples, and perform well on examples it has never seen.

The training set must contain both types of data (i.e., with “1” and “0” output).
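A minimal Python sketch of the perceptron's output computation: the dot product S = X·W, then either the step function against threshold T or the sigmoid. The weights and threshold below are hypothetical, chosen so that the step version computes logical AND of two binary inputs. The slide leaves the case S = T undefined; mapping it to 0 here is an assumption.

```python
import math


def weighted_sum(x, w):
    """S = X . W (dot product of inputs and weights)."""
    return sum(xi * wi for xi, wi in zip(x, w))


def step_output(x, w, T):
    """Discrete output: 1 if S > T, else 0 (S == T mapped to 0, an assumption)."""
    return 1 if weighted_sum(x, w) > T else 0


def sigmoid_output(x, w):
    """Continuous output F(S) = 1 / (1 + e^(-S))."""
    return 1.0 / (1.0 + math.exp(-weighted_sum(x, w)))


# Hypothetical weights and threshold: the step perceptron computes AND.
w, T = [0.5, 0.5], 0.7
outputs = [step_output(x, w, T) for x in ([0, 0], [0, 1], [1, 0], [1, 1])]
```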

Biological Neural Network

Image from http://en.wikipedia.org/wiki/Biological_neural_network

The perceptron

Training a perceptron:

Find the weights W that minimize the error function:

$$E = \sum_{i=1}^{P} \left(F(X_i \cdot W) - t(X_i)\right)^2$$

P: number of training data
X_i: training vectors
F(X_i·W): output of the perceptron
t(X_i): target value for X_i

Use steepest descent:
- compute gradient:

$$\nabla E = \left(\frac{\partial E}{\partial w_1}, \frac{\partial E}{\partial w_2}, \frac{\partial E}{\partial w_3}, \ldots, \frac{\partial E}{\partial w_N}\right)$$

- update weight vector:

$$W_{new} = W_{old} - \varepsilon \nabla E$$

- iterate

(ε: learning rate)
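The steepest-descent training loop can be sketched in Python. Assumptions, none of them from the slides: F is the sigmoid (the step function has zero gradient almost everywhere, so it cannot be trained this way), the threshold is folded into a weight on a constant input of 1, the training data is the logical OR of two inputs, and ε = 0.5 with 10000 iterations are illustrative choices. By the chain rule, each example contributes 2(F - t)F(1 - F)x_j to ∂E/∂w_j.

```python
import math


def sigmoid(s):
    return 1.0 / (1.0 + math.exp(-s))


def squared_error(X, targets, w):
    """E = sum_i (F(X_i . W) - t(X_i))^2 with a sigmoid output F."""
    return sum((sigmoid(sum(xj * wj for xj, wj in zip(x, w))) - t) ** 2
               for x, t in zip(X, targets))


def train_perceptron(X, targets, epsilon=0.5, n_iter=10000):
    """Steepest descent: W_new = W_old - epsilon * grad E, repeated n_iter times."""
    w = [0.0] * len(X[0])
    for _ in range(n_iter):
        grad = [0.0] * len(w)
        for x, t in zip(X, targets):
            f = sigmoid(sum(xj * wj for xj, wj in zip(x, w)))
            # Chain rule: this example adds 2 (F - t) F (1 - F) x_j to dE/dw_j
            common = 2.0 * (f - t) * f * (1.0 - f)
            for j, xj in enumerate(x):
                grad[j] += common * xj
        w = [wj - epsilon * gj for wj, gj in zip(w, grad)]
    return w


# Hypothetical training set: first entry is a constant 1 (acts as the threshold weight).
X = [[1, 0, 0], [1, 0, 1], [1, 1, 0], [1, 1, 1]]
targets = [0, 1, 1, 1]  # logical OR of the last two inputs
w = train_perceptron(X, targets)
```

The training set contains both "1" and "0" targets; with only one kind, the weights would simply drift toward always outputting that value.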

Artificial Neural Network

A complete neural network is a set of perceptrons interconnected such that the outputs of some units become the inputs of other units. Many topologies are possible!

Neural networks are trained just like the perceptron, by minimizing an error function:

$$E = \sum_{i=1}^{N_{data}} \left(NN(X_i) - t(X_i)\right)^2$$

Support Vector Machines - SVMs

Image from http://en.wikipedia.org/wiki/Support_vector_machine

SVM finds the maximum margin hyperplane

Image from http://en.wikipedia.org/wiki/Support_vector_machine

Kernel Function
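The Kernel Function slides are figures only, so here is a hedged numeric illustration of what a kernel computes. A kernel K(x, z) returns the dot product of x and z after mapping them into a feature space, without building that space explicitly. For 2-D inputs, the degree-2 polynomial kernel (x·z)² equals the dot product under the explicit map φ(x) = (x1², √2·x1·x2, x2²). The sketch also computes the margin width 2/‖w‖ between the hyperplanes w·x + b = +1 and w·x + b = -1, which is the quantity an SVM maximizes. The kernel choice and all numbers are illustrative.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def poly2_kernel(x, z):
    """Degree-2 polynomial kernel for 2-D inputs: K(x, z) = (x . z)^2."""
    return dot(x, z) ** 2


def phi(x):
    """Explicit 3-D feature map with phi(x) . phi(z) == (x . z)^2."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)


def margin_width(w):
    """Distance between the hyperplanes w . x + b = +1 and w . x + b = -1: 2 / ||w||."""
    return 2.0 / math.sqrt(dot(w, w))


x, z = (1.0, 2.0), (3.0, 4.0)
k_implicit = poly2_kernel(x, z)  # (1*3 + 2*4)^2 = 121
k_explicit = dot(phi(x), phi(z))  # same value, computed in the feature space
width = margin_width((3.0, 4.0))  # 2 / 5 = 0.4
```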

Take Home Messages

• Must consider how to set up the learning problem

(supervised or unsupervised, generative or

discriminative, classification or regression, etc.)

• Lots of algorithms out there

• No algorithm performs best on all problems
