IEEE TRANSACTIONS ON COMPUTER-AIDED DESIGN OF INTEGRATED CIRCUITS AND SYSTEMS, VOL. 31, NO. 2, FEBRUARY 2012

Physically-Aware N-Detect Test

Yen-Tzu Lin, Member, IEEE, Osei Poku, Student Member, IEEE, Naresh K. Bhatti,

R. D. (Shawn) Blanton, Fellow, IEEE, Phil Nigh, Peter Lloyd, Vikram Iyengar, Member, IEEE

Abstract—Physically-aware N-detect (PAN-detect) test improves defect coverage by exploiting defect locality. This paper

presents physically-aware test selection (PATS) to efficiently generate PAN-detect tests for large industrial designs. Compared to

traditional N-detect test, the quality resulting from PAN-detect is

enhanced without any increase in test execution cost. Experiment

results from an IBM in-production application-specific integrated

circuit demonstrate the effectiveness of PATS in improving defect

coverage. Moreover, utilizing novel test-metric evaluation, we

compare the effectiveness of traditional N-detect and PAN-detect,

and demonstrate the impact of automatic test pattern generation

parameters on the effectiveness of PAN-detect.

Index Terms—N-detect, physically-aware, test effectiveness,

test generation, test selection.

I. Introduction

With the advance of integrated circuit (IC) manufacturing technology, the transistor count and density

of an IC have dramatically increased, which enables the integration of more functionality into a single chip. The increased

circuit complexity, however, brings new challenges to IC

testing, whose objective is to detect defective chips. As manufacturing technology improves, defect behaviors become more

complicated and harder to characterize [1]; the distribution and

types of defects change as well [2].

Test methodologies have been evolving to capture the

changing characteristics of chip failures. Tests generated based

on stuck-at faults are widely used, but it has been shown that

the stuck-at fault model alone is not sufficient for defects

now encountered in advanced manufacturing processes [3]–[6]. Other test methods that are complex and target some specific defect behaviors have been developed and employed to improve defect coverage [3], [7]–[14]. Among existing test methods, physically-aware N-detect (PAN-detect) enhances test quality without significantly increasing test development cost. Specifically, PAN-detect test improves test quality by utilizing layout information and exploring conditions that are more likely to activate and detect unmodeled defects. PAN-detect test is general in that it does not presume specific defect behaviors and therefore is not limited to existing fault models. It, however, subsumes other static test methods through the concept of physical neighborhoods.

Manuscript received August 2, 2011; accepted September 9, 2011. Date of current version January 20, 2012. This work was supported in part by the National Science Foundation, under Award CCF-0427382, and in part by the SRC, under Contract 1246.001. This paper was recommended by Associate Editor S. S. Sapatnekar.

Y.-T. Lin was with the Department of Electrical and Computer Engineering, Carnegie Mellon University, Pittsburgh, PA 15213 USA. She is now with NVIDIA Corporation, Santa Clara, CA 95050 USA (e-mail: yenlin@nvidia.com).

O. Poku and R. D. (Shawn) Blanton are with the Department of Electrical and Computer Engineering, Carnegie Mellon University, Pittsburgh, PA 15213 USA (e-mail: opoku@ece.cmu.edu; blanton@ece.cmu.edu).

N. K. Bhatti was with the Department of Electrical and Computer Engineering, Carnegie Mellon University, Pittsburgh, PA 15213 USA. He is now with Mentor Graphics, Fremont, CA 94538 USA (e-mail: naresh bhatti@mentor.com).

P. Nigh and P. Lloyd are with IBM, Essex Junction, VT 05452 USA (e-mail: nigh@us.ibm.com; ptlloyd@us.ibm.com).

V. Iyengar is with IBM Test Methodology, Pittsburgh, PA 15212 USA (e-mail: vikrami@us.ibm.com).

Color versions of one or more of the figures in this paper are available online at http://ieeexplore.ieee.org.

Digital Object Identifier 10.1109/TCAD.2011.2168526

This paper presents a physically-aware test selection (PATS)

method that creates compact, PAN-detect test sets [15]–[17].

The objective of PATS is to efficiently generate a PAN-detect

test set from a large, traditional M-detect test set (M > N)

in the absence of a physically-aware automatic test pattern

generation (ATPG) tool. Specifically, PATS can be used to

create a test set that is more effective than a traditional

N-detect test set in exercising physical neighborhoods that

surround targeted lines without increasing test-execution costs.

Additionally, if a PAN-detect test set is provided, PATS can

be used as a static compaction technique since it is possible

that tests generated later in the ATPG process may be more

effective than those generated earlier.

We examine the effectiveness of PAN-detect test using

two approaches. In the first approach, tester responses from

failing IBM application-specific integrated circuits (ASICs)

are analyzed to compare the PAN-detect tests with other traditional tests that include stuck-at, quiescent current (IDDQ),

logic built-in self-test (BIST), and delay tests. In the second

approach, diagnostic results from the failure logs of an LSI test

chip are used to compare traditional N-detect and PAN-detect.

Results from both experiments demonstrate the effectiveness

of PAN-detect. This paper subsumes and extends existing

work [16], [17] by: 1) providing a thorough introduction

to PAN-detect (Sections II and III-A); 2) conducting new

experiments to quantitatively demonstrate the effectiveness of

PAN-detect over N-detect (Section V-C); and 3) analyzing the

impact of ATPG parameters on the effectiveness of PAN-detect

(Section VI).

The remainder of this paper is organized as follows. Section II describes previous work relevant to PAN-detect test.

In Section III, we introduce PAN-detect test and qualitatively

compare it with some other test methods. An efficient, PATS

approach for generating PAN-detect test sets is described

in Section IV. Section V presents experiment results that

c 2012 IEEE

1051-8215/$31.00 © 2012 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media,

including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution

to servers or lists, or reuse of any copyrighted component of this work in other works.

LIN et al.: PHYSICALLY-AWARE N-DETECT TEST

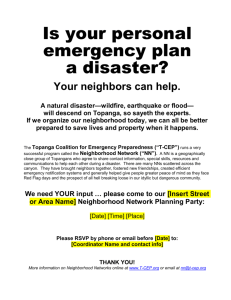

Fig. 1. (a) Example gate-level circuit and (b) its interconnect layout illustrating the physical proximity of signals l6 and l7 .

demonstrate the effectiveness of both PATS and PAN-detect.

Section VI analyzes the sensitivity of PAN-detect effectiveness

on various ATPG parameters. Finally, in Section VII, conclusions are drawn.

II. Background

The stuck-at fault model [18] assumes that a single signal

line in a faulty circuit is permanently fixed to either logic 0

or 1. It has been widely used as the basis of test generation

and evaluation because of its low cost and low complexity. The

stuck-at fault model alone however is not sufficient for capturing emerging defects encountered in advanced manufacturing

processes. To reduce test escapes, complex fault models have

been developed to closely emulate possible defect behavior,

such as bridge [7], open [19], [20], net [9], nasty polysilicon

defect [21], transition [12], and input-pattern [10] fault models.

Specifically, in defect-based test [1], physical information is

analyzed to identify possible defect sites. The behavior of the

defects is mapped to the gate level to form the fault models.

By targeting complex fault models in ATPG, it is expected that

the resulting test set will improve defect coverage. The large

variety of defect behaviors and their associated fault models

however make it impractical to generate tests for all of them.

N-detect test was proposed to improve defect coverage

without drastically increasing complexity in terms of modeling

and test generation [3]. In N-detect test, each stuck-at fault

is detected at least N times by N distinct test patterns. One

advantage of N-detect test over other defect-based models is

that existing approaches to ATPG for the stuck-at fault model

can be easily modified to generate N-detect test patterns.

The effectiveness of N-detect test can be easily understood

by its impact on defect activation; the detection of an

unmodeled defect depends on whether the defect is

fortuitously activated by tests targeting existing fault models.

N-detect test increases the probability of detecting an

arbitrary defect that affects a targeted line since more “circuit

states” and therefore more defect excitation conditions are

established. Here, a circuit state is the set of logic values

established on all the signal lines within the circuit under the

application of a test pattern. Any (single) error established

by the fortuitous activation of the defect can be propagated

to an observation point (i.e., a primary output or scanned

memory element) by the sensitized path(s) established by the

N-detect test pattern. Consequently, N-detect test has a greater

likelihood of activating and detecting unmodeled defects as

compared to traditional stuck-at (i.e., 1-detect) test, which

only requires the targeted fault be detected once.
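The N-detect requirement can be checked mechanically once detection data is available. The following is a minimal sketch, assuming a fault simulator (run without fault dropping) has produced a list of (pattern, fault) detection pairs; the function name `n_detect_shortfall`, the fault labels, and the data layout are hypothetical and not taken from any particular tool.

```python
from collections import defaultdict


def n_detect_shortfall(detections, faults, n):
    """Return {fault: count} for every fault detected by fewer than n distinct patterns.

    detections: iterable of (pattern, fault) pairs (hypothetical simulator output).
    faults:     all targeted stuck-at faults, including undetected ones.
    """
    patterns_per_fault = defaultdict(set)
    for pattern, fault in detections:
        # A set keeps only distinct patterns, matching the N-detect definition.
        patterns_per_fault[fault].add(pattern)
    return {
        fault: len(patterns_per_fault[fault])
        for fault in faults
        if len(patterns_per_fault[fault]) < n
    }


detections = [("0101", "l7/SA0"), ("0111", "l7/SA0"), ("0101", "l6/SA1")]
shortfall = n_detect_shortfall(detections, ["l6/SA1", "l7/SA0", "l9/SA0"], n=2)
print(shortfall)
```

Here only l7/SA0 meets a 2-detect target; the other two faults are reported with their current detection counts.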

Various experiments have demonstrated the ability of N-detect to improve defect detection. Experiments in the original

work on N-detect [3] showed that a 5-detect and a 15-detect

test set reduced the defective parts per million shipped (DPPM)

of a test chip by 75% and 100%, respectively. Subsequent

work provided quality measurements based on the number of

times each stuck-at fault is detected [13], [22]–[26] and the

number of established circuit states on lines physically close

to the targeted line [15]. Experiments with real products [3],

[5], [6], [13], [22], [27]–[29] also showed that N-detect test

improves defect coverage.

The original requirement for N-detect test is to ensure every

stuck-at fault is detected by at least N “different” test patterns.

Two test patterns t1 and t2 are different if at least one input

(primary or scan) has opposite logic values applied. Under this

definition, it is possible that t1 and t2 detect the same stuck-at

fault with different circuit inputs but the application of t2 does

not increase the likelihood of detecting some defect affecting

the targeted stuck line. For example, consider a logic circuit

and a portion of its layout in metal layers two and three, as

illustrated in Fig. 1. Suppose that the adjacent lines l6 and

l7 are affected by a defect, causing the logic value of l6 to

dominate the value of l7 . If an N-detect test set alters the

logic value of l6 while detecting a stuck-at fault affecting l7 ,

then the defect has a higher probability of being activated and

detected. However, an N-detect test set may target l7 multiple

times with l6 fixed while changing values applied to input l3 ,

l4 , or l5 , resulting in no increase in the likelihood of defect

activation. This illustrates how physical information can be

used to enhance a test set based on the N-detect criteria for

improving defect coverage.

III. PAN-Detect

In this section, we first introduce the PAN-detect metric.

Then, we compare PAN-detect with traditional N-detect and

defect-based test. Finally, issues with PAN-detect ATPG and

their mitigation are discussed.

A. Improving N-Detect

PAN-detect test improves test quality achieved by traditional

N-detect by exploiting defect locality. In [15], a PAN-detect

metric was proposed to guide test generation. The metric has

since been generalized and defines the physical neighborhood

of a targeted line to include: 1) physical neighbors, signal lines

(and/or their drivers) that are within some specified, physical

distance d from the targeted line in every routing layer that

the targeted line exists (signal lines on different routing layers

are not considered); 2) driver-gate inputs, inputs of the gate

that drives the targeted line; and 3) receiver-side inputs, side

inputs of the gates that receive the targeted line [15], [16],

[30]. Given a defect that affects the functionality of a targeted

line li , the signal lines in the neighborhood of li are those

deemed to most likely influence li . Ensuring these signal lines

are exercised during test ensures the exploration of a richer

set of defect activation and propagation conditions. The logic

values of the neighborhood lines established by a test pattern

that sensitizes the targeted line is defined as a neighborhood

state. For PAN-detect, the objective is to detect every stuck-at fault N times, where each detection is associated with a unique neighborhood state. In other words, a test for a given stuck-at fault is counted under the PAN-detect metric if and only if: 1) it detects the targeted stuck-at fault, and 2) it establishes a distinct neighborhood state.

TABLE I

Possible Neighborhood States for the Stuck-at Faults Involving Targeted Line l6, Which Has Two Neighbors l5 and l7

| Stuck-at Fault | l5 | l7 | Preferred |
|---|---|---|---|
| l6 SA0 | 0 | 0 | yes |
| l6 SA0 | 0 | 1 | |
| l6 SA0 | 1 | 0 | |
| l6 SA0 | 1 | 1 | |
| l6 SA1 | 0 | 0 | |
| l6 SA1 | 0 | 1 | |
| l6 SA1 | 1 | 0 | |
| l6 SA1 | 1 | 1 | yes |

The preferred state for each targeted stuck-at fault is marked in the last column.

The following example is used to illustrate the PAN-detect

metric. Again, consider signal lines l6 and l7 in Fig. 1. Suppose

the distance between l6 and l7 is d1 , and the distance used for

extracting physical neighbors is d2 . Then line l7 is deemed

to be in the neighborhood of l6 if d1 ≤ d2 . Assume signal

lines l5 and l7 are the neighbors of the targeted line l6 .

For both l6 stuck-at-0 and stuck-at-1, there are four possible

neighborhood states, as shown in Table I. A physically-aware

4-detect test set should have at least eight tests, four of which

detect line l6 stuck-at-0 with four unique neighborhood states,

while the remaining four detect l6 stuck-at-1 with four unique

neighborhood states.
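The difference between counting detections and counting detections with unique neighborhood states can be made concrete with a short sketch. It assumes each detection is available as a (test, fault, neighborhood state) record; the record layout, the test names, and the function `pan_detect_counts` are illustrative assumptions.

```python
def pan_detect_counts(records):
    """Count, per fault, the detections that establish a distinct neighborhood state.

    records: iterable of (test_id, fault, state) tuples, one per detection,
             where state is a bit string over the fault's neighbors (assumed format).
    """
    states = {}
    for _test, fault, state in records:
        states.setdefault(fault, set()).add(state)
    return {fault: len(seen) for fault, seen in states.items()}


records = [
    ("t1", "l6/SA0", "00"),
    ("t2", "l6/SA0", "00"),  # same neighborhood state as t1, so it adds nothing
    ("t3", "l6/SA0", "10"),
    ("t4", "l6/SA1", "11"),
]
counts = pan_detect_counts(records)
print(counts)
```

Under the traditional metric l6 stuck-at-0 is detected three times, but under the PAN-detect metric it is credited with only two detections because t1 and t2 establish the same state on l5 and l7.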

The neighborhood state definition, as previously described,

implicitly assumes that all possible neighborhood states are of

equal importance. Both failure analysis results and intuition

reveal that certain neighborhood states are more likely to cause

defect activation [31]. If a list of “preferred” states is provided,

the test generation/selection procedure can be biased toward

achieving those states since it is too cost prohibitive to consider

all states especially when the number of neighbors is larger

than three or four. We define a class of neighborhood states,

called preferred states, which are believed to have a greater

likelihood of activating a defect affecting the targeted line. For

a targeted stuck-at-0 (1) fault, the preferred states include those

that maximize the number of logic zeroes (ones) achievable

on the neighborhood lines. Note that we use maximum since

the all-0 and all-1 states may be impossible to achieve due

to the constraints imposed by the circuit structure. For the

stuck-at faults involving l6 in Fig. 1, all the neighborhood

states shown in Table I are achievable. Then the preferred

state for l6 stuck-at-0 is “00” and that for l6 stuck-at-1 is

“11,” as highlighted in Table I. Intuitively, these states produce

a neighborhood environment that has a greater capability of

activating the associated defect behavior, and therefore are

targeted first before other neighborhood states.
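The preferred-state rule can be sketched in a few lines. This is an illustration under stated assumptions: achievable states are given as bit strings over the neighborhood lines, and the helper name `preferred_states` is hypothetical.

```python
def preferred_states(achievable, stuck_value):
    """Return the achievable states with the most neighbors at the stuck value.

    achievable:  set of bit strings, one character per neighborhood line.
    stuck_value: 0 for a stuck-at-0 fault, 1 for a stuck-at-1 fault.
    """
    if not achievable:
        return set()
    target = str(stuck_value)
    best = max(state.count(target) for state in achievable)
    return {state for state in achievable if state.count(target) == best}


table_one = {"00", "01", "10", "11"}       # all states achievable, as in Table I
sa0 = preferred_states(table_one, 0)
sa1 = preferred_states(table_one, 1)
# Hypothetical case where the circuit structure rules out the all-0 state.
constrained = preferred_states({"01", "10", "11"}, 0)
print(sa0, sa1, constrained)
```

When the all-0 state is unachievable, the rule falls back to the states with the largest achievable number of zeroes, which is why more than one preferred state can exist for a fault.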

Driver-gate and receiver-side inputs can be obtained directly

from analyzing the logical description of the circuit. Physical

neighbors can be obtained in a variety of ways. For instance,

one approach can use critical area [32], while another, less

accurate approach, can utilize parasitic-extraction data. Critical area is a measure of design sensitivity to random, spot

defects, and is defined as the region in the layout where

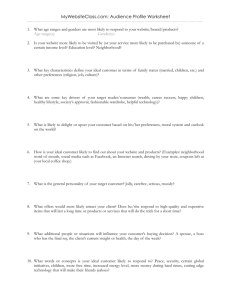

Fig. 2. Example gate-level circuits with signal lines affected by defects,

where (a) l1 is a bridged net, (b) l5 an opened net, and (c) l9 is driven by a

defective gate.

the occurrence of a defect with radius r can lead to chip

failure. Consider a bridge defect that affects the targeted line.

The signal lines that have a nonzero critical area with the

targeted line for the considered defect radius are those likely

to be involved in the defect, and are deemed the physical

neighbors of the targeted line. Critical-area analysis is, in

general, a computationally intensive task, especially when the

required accuracy is high [33]. Some efficient approaches have

been developed however to reduce the runtime of critical-area

analysis [34]–[36]. On the other hand, information obtained

from parasitic extraction includes the coupling capacitance

between a targeted line and each neighboring signal line.

Signal lines that have some coupling capacitance with the

targeted line can also be used as the physical neighbors.
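As a rough illustration of the parasitic-extraction route, the sketch below collects the physical neighbors of a targeted line from a list of coupling capacitances. The (net_a, net_b, capacitance) tuple format, the units, and the `min_cap` threshold are assumptions made for this example; real extraction netlists use tool-specific formats that would need to be parsed first.

```python
def physical_neighbors(couplings, target, min_cap=0.0):
    """Return the nets whose coupling capacitance to target exceeds min_cap.

    couplings: iterable of (net_a, net_b, capacitance) tuples (assumed format).
    min_cap:   hypothetical threshold for filtering negligible coupling.
    """
    neighbors = set()
    for net_a, net_b, cap in couplings:
        if cap <= min_cap:
            continue
        if net_a == target:
            neighbors.add(net_b)
        elif net_b == target:
            neighbors.add(net_a)
    return sorted(neighbors)


couplings = [
    ("l6", "l7", 0.8),
    ("l5", "l6", 0.3),
    ("l1", "l2", 0.5),   # does not involve the targeted line
    ("l6", "l9", 0.0),   # no coupling, so l9 is not a neighbor
]
nbrs = physical_neighbors(couplings, "l6")
print(nbrs)
```

The complete neighborhood of a line would additionally include its driver-gate inputs and receiver-side inputs, which come from the logical netlist rather than from extraction data.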

The upper bound on the number of unique neighborhood states for a targeted line li with k neighbors is $2^k$. However, the structure of the circuit may prevent some states from being established. Also, these “impossible” states may be stuck-at-fault dependent, i.e., a given state may be possible for li stuck-at-0 but not for li stuck-at-1. For example, consider

line l9 in Fig. 1. Assume signal lines l3 and l10 are the

neighbors of l9 . The achievable neighborhood states for l9

stuck-at-0 include l3 l10 ∈ {00, 01, 11}, while those for l9

stuck-at-1 include l3 l10 ∈ {10, 11}. The number of achievable

neighborhood states for a stuck-at fault provides a feasible

upper bound of the value N, the targeted number of stuck-at

fault detections. Traditional N-detect tests simply ignore the

actual number of possible neighborhood states. ATPG tools

that generate traditional N-detect tests may produce tests that

detect a stuck-at fault N times even though the fault has fewer

than N achievable neighborhood states. In contrast, PAN-detect allows test generation resources to be redirected when

more unique neighborhood states cannot be achieved. In other

words, realizing that some states are impossible means that

tests that do not or cannot achieve additional states for a

given stuck-at fault can be retargeted to other lines. Thus,

test resources are better utilized when the locality of defects

is exploited.
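The feasible detection target for each fault follows directly from its achievable states. The sketch below caps N at the number of achievable neighborhood states, using the l9 states quoted in the text; the function name `effective_targets` and the dictionary layout are assumptions for illustration.

```python
def effective_targets(achievable, n):
    """Cap the detection target N at the number of achievable neighborhood states.

    achievable: dict mapping each fault to its set of achievable states.
    """
    return {fault: min(n, len(states)) for fault, states in achievable.items()}


# Achievable states for l9 with neighbors l3 and l10, as given in the text.
achievable = {
    "l9/SA0": {"00", "01", "11"},
    "l9/SA1": {"10", "11"},
}
targets = effective_targets(achievable, n=4)
print(targets)
```

With N = 4, neither fault can reach four unique states, so any test effort beyond three (stuck-at-0) or two (stuck-at-1) state-distinct detections could be redirected to other lines.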

B. N-Detect Versus PAN-Detect

Here we use examples to illustrate why PAN-detect test is

believed to be more effective than traditional N-detect. Fig. 2

shows three gate-level circuits. In Fig. 2(a), l1 is a bridged net,

and the logic value of l1 is assumed to be controlled by its

physical neighbors, i.e., l2 , and l3 . In PAN-detect test, the logic

values of l2 and l3 will be driven to the all-0 and all-1 states for

at least two tests that establish the preferred states and sensitize

l1 for both stuck-at-0 and stuck-at-1. For this situation, the

bridged net l1 is more likely to be driven to the faulty value

if indeed the neighborhood lines control activation.

In Fig. 2(b), the defective line l5 is an opened net, and

its floating fanout, l5a , l5b , and l5c , is assumed to behave

like a multiple stuck-at fault that can vary from pattern to

pattern [9]. Recall that receiver-side inputs are included in

the neighborhood of a targeted line. Thus, the neighborhood

of l5 includes l6 , l7 , and l8 . For this neighborhood, there is

a test that sensitizes one of the fanout lines (e.g., l5a ) that

establishes a neighborhood state (e.g., l6 l7 l8 = 101) that blocks

error propagation for two fanout lines thus transforming the

multiple stuck-at fault to a single stuck-at fault that is now

easily detected by the pattern.

In Fig. 2(c), l9 is driven by a defective logic gate causing l9

to be faulty under certain gate input combinations. Among all

possible input combinations for the AND gate driving l9 , the

one that has more than one controlling value, i.e., state l11 l12 =

00, is not normally targeted during ATPG. Since driver-gate

inputs are included in the neighborhood, exploring multiple

neighborhood states increases the likelihood of detecting gate

defects. By including driver-gate inputs as neighbors, PAN-detect test subsumes gate exhaustive test [14], which requires

all possible input combinations to be applied to each gate while

its output is sensitized.

By explicitly considering physical neighbors, driver-gate

inputs, and receiver-side inputs in the physical neighborhoods,

and by exploring multiple neighborhood states of a targeted

line, PAN-detect test increases the likelihood of activating

and detecting unmodeled defects. Since traditional N-detect

test is physically unaware, it is less likely to establish the

neighborhood states necessary to activate a bridge, open, or

gate defect that affects the targeted line. In later sections, we

support this hypothesis concerning PAN-detect with test data

taken from actual failing ICs.

C. Defect-Based Test Versus PAN-Detect Test

In defect-based test, defect behaviors are characterized as

fault models, e.g., bridge and open faults [1]. These fault

models, which presume specific defect behaviors, are then

targeted in ATPG. Nevertheless, the behaviors of bridges and

opens depend on many factors and are not known a priori.

To ensure completeness, a plethora of defect behaviors must

be considered, which is impractical. On the other hand, in

PAN-detect test, establishing all neighborhood states subsumes

any static activation conditions of any potential gate, bridge,

or open defect behavior, and accomplishes this task concisely

through the neighborhood concept. It should be noted however

that PAN-detect test may establish neighborhood states that are

not necessary for defect detection.

Although PAN-detect test and defect-based test both utilize

layout information, the layout usage of the former is very

limited. Specifically, in PAN-detect test, physical neighbors

are required for each targeted line. This type of information

is essentially already available in netlists generated during

parasitic extraction. Thus, there is no additional cost related to the use of layout.

Fig. 3. Overview of PATS.

Other neighborhood information involving

driver-gate inputs and receiver-side inputs is easily obtained

from the logical netlist, so layout is not required in those

cases. On the contrary, defect-based models such as opens and

bridges require precise analysis of the layout to identify their

location. The complexity and cost associated with defect-based

test is therefore higher than that of PAN-detect test.

D. Test Generation and Compaction

In [37], a PAN-detect ATPG algorithm has been developed.

Each stuck-at fault is systematically targeted multiple times

with different neighborhood states. This approach, however, is

time consuming and therefore not applicable to large industrial

designs. On the other hand, a significant issue that arises with

N-detect is the expanded test-set size that results for both

the traditional N-detect [3], [38], [24] and PAN-detect [37]

metrics. While increasing the number of detections improves

defect coverage, the test-set size also grows approximately

linearly [39]. The DO-RE-ME [22], [23] and $(n, n_2, n_3)$-detection [40] test generation methods were proposed to intelligently guide the N-detect ATPG process and have the capability to limit the generated test-set size. Other techniques

were also introduced to generate smaller N-detect test sets

directly [38], [41]–[44]. Nevertheless, these approaches do

not consider physical information. Although the test-set size

can be limited or reduced by using these approaches, the test

quality can be further enhanced by employing the PAN-detect

metric. An efficient approach for generating compact PAN-detect test sets is therefore needed.

IV. Physically-Aware Test Selection

To efficiently generate high-quality test patterns, we develop

a PATS procedure. PATS explores the neighborhood states

most likely to affect a targeted line, increasing the likelihood of

activating and detecting unmodeled defects affecting the target.

The greedy, selection-based approach inherent in PATS enhances test-generation efficiency, and therefore enables PAN-detect test generation for large industrial designs. In the following sections, we present an overview and the detailed steps

of PATS (Sections IV-A–IV-D), followed by descriptions of the

heuristics created for handling large designs (Sections IV-E–

IV-G).

A. PATS Overview

PATS utilizes the PAN-detect metric described in Section III-A to generate a compact test set. The objective of PATS is to maximize neighborhood state coverage for all targeted lines for a user-provided, test-set size constraint. Fig. 3 provides an overview of the PATS flow. Besides a logic-level circuit description and a list of all the uncollapsed stuck-at faults, PATS requires several inputs that include: 1) the neighborhood for each signal line; 2) a large M-detect test set TM (M > N); 3) the desired number of detections N; and 4) a test-set size constraint. TM is fault simulated to extract the neighborhood states established by TM for each detected stuck-at fault fi. Using the neighborhood state information, tests in TM are weighted based on the number of faults detected with unique neighborhood states. PATS greedily selects heavily weighted tests from TM until the size of the selected test set reaches the user-provided limit. The output is a compact, PAN-detect test set, TN, whose size satisfies the given size constraint.

Fig. 4 shows a flowchart describing detailed steps of PATS. Once the neighborhood states established by TM are obtained, tests are weighted and selected from TM based on the number of faults detected with neighborhood states not yet established by previously selected tests. The main procedure of test selection consists of two phases: 1) preferred-state test selection, and 2) generic-state test selection. Tests that establish the preferred states are given priority in the selection process to improve the detection capability of the selected test set. The generic-state test selection phase is similar to the preferred-state test selection phase, except that the bias toward preferred states is removed. Initially, the selected test set TN is empty. Tests are then selected one at a time and removed from TM and added to TN. After each test is selected, the weights of the remaining tests in TM are updated. The one-at-a-time test selection process repeats until the size of TN reaches the constraint.

Fig. 4. Detailed steps of the PATS procedure, which consists of preferred-state and generic-state test selection.

B. Neighborhood States

The first step of PATS is to obtain the neighborhood states established by the M-detect test set TM given the neighborhood of each targeted line in the circuit under test. TM is a pregenerated test set that provides a pool of tests that PATS uses to derive TN. All stuck-at faults are simulated, without fault dropping, over the test set TM. For each test tj that detects a fault fi, the neighborhood state established for fi is recorded. Let nbr(fi, tj) denote the neighborhood state of fault fi under the application of test tj. If tj does not detect fi, then nbr(fi, tj) is empty. The set of neighborhood states established for each stuck-at fault fi by TM, Snbr(fi), is the union of the neighborhood states for fi over each test tj ∈ TM that detects fi as follows:

$$S_{nbr}(f_i) = \bigcup_{j} \mathrm{nbr}(f_i, t_j), \quad j = 1, \ldots, |T_M|.$$

The size of Snbr(fi), i.e., |Snbr(fi)|, represents the number of times the fault fi is detected with a unique neighborhood state. Note that the selected N-detect test set can only establish up to |Snbr(fi)| neighborhood states for a given fault fi. The quality of the original test set therefore affects the quality of the selected test set TN. A larger M-detect test set TM (i.e., a larger M) may have a higher |Snbr(fi)| for each fault fi, providing more tests and a larger search space for selection. Therefore, the value of M should be sufficiently large to ensure high-quality results. The neighborhood state coverage of the 1-detect test set of the circuit under test can serve as the reference for determining the value M.
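The extraction of neighborhood states can be sketched as follows, assuming a fault simulator provides, for each test, the fault-free logic value on every line and the set of faults it detects. The data layout, the test names, and the function `extract_snbr` are hypothetical; an actual flow would obtain these values from the simulator's own interface.

```python
def extract_snbr(line_values, detected, neighborhood):
    """Build Snbr(f) for every detected fault f.

    line_values:  dict test -> dict line -> logic value (0 or 1) under that test.
    detected:     dict test -> set of (line, stuck_value) faults the test detects.
    neighborhood: dict line -> ordered list of neighborhood lines.
    """
    s_nbr = {}
    for test, faults in detected.items():
        values = line_values[test]
        for line, stuck in faults:
            # nbr(f, t): values on the neighbors while the fault is detected.
            state = "".join(str(values[nbr]) for nbr in neighborhood[line])
            s_nbr.setdefault((line, stuck), set()).add(state)
    return s_nbr


line_values = {
    "t1": {"l5": 0, "l6": 1, "l7": 0},
    "t2": {"l5": 0, "l6": 1, "l7": 0},
    "t3": {"l5": 1, "l6": 1, "l7": 0},
    "t4": {"l5": 1, "l6": 0, "l7": 1},
}
detected = {
    "t1": {("l6", 0)},
    "t2": {("l6", 0)},
    "t3": {("l6", 0)},
    "t4": {("l6", 1)},
}
neighborhood = {"l6": ["l5", "l7"]}
s_nbr = extract_snbr(line_values, detected, neighborhood)
print(s_nbr)
```

In this toy data, three tests detect l6 stuck-at-0 but establish only two distinct states, so |Snbr| for that fault is two.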

C. Test Weighting

PATS greedily selects the most effective tests from the

original test set TM . To evaluate the effectiveness of a test in

exercising the physical neighborhood of each detected fault,

we define the weight of each fault and test using (1) and (2),

respectively, as follows:

$$\mathrm{FW}(f_i) = \bigl(N - \mathrm{AS}(f_i)\bigr)^{s} \tag{1}$$

$$\mathrm{TW}(t_j) = \sum_{i=1}^{F} \mathrm{FW}(f_i) \cdot \mathrm{NS}(f_i, t_j) \cdot P(f_i, t_j) \tag{2}$$

where

$F$ = total number of stuck-at faults

$$\mathrm{NS}(f_i, t_j) = \begin{cases} 1, & \text{if test } t_j \text{ detects fault } f_i \text{ and the state has not yet been established by tests currently placed into } T_N \\ 0, & \text{otherwise} \end{cases}$$

$$P(f_i, t_j) = \begin{cases} 1, & \text{if } t_j \text{ establishes a preferred state of } f_i \\ 0, & \text{otherwise.} \end{cases}$$

The weight of a fault fi , denoted by FW(fi ), is a dynamic

function that changes as the tests are selected. It is an

exponential whose base is the difference between N (the target

number of detections, each with a unique state) and AS(fi )

(the number of neighborhood states of fi established by the

current set of tests placed into TN ). TN is initially empty and

therefore AS(fi ) = 0 initially for all i. Both AS(fi ) and FW(fi )

are updated (if necessary) after each test is selected and added

to TN .

The exponent s in (1) is a constant used to spread the

distribution of weights for faults that have a similar number

of unestablished states. For example, suppose at some time in

the selection process, fault f1 has two unique neighborhood

states established by the tests in TN , and fault f2 has three.

That is, AS(f1 ) = 2 and AS(f2 ) = 3. Let the target number

of detections, N, be five. By setting s to one, fault weights for f1 and f2 become $(5 - 2)^1 = 3$ and $(5 - 3)^1 = 2$, respectively. The difference between FW(f1) and FW(f2) is therefore small at 3 − 2 = 1. When s, however, is set to three, the difference becomes $(5 - 2)^3 - (5 - 3)^3 = 27 - 8 = 19$.

With a larger s, a fault with fewer established neighborhood

states will have a much higher weight than a fault with

more established states. Since fault weights are used in the

calculation of test weights, tests that detect faults with fewer

established states will have much greater test weights and

therefore be selected with higher priority. Tests selected into

TN will therefore establish approximately the same number

of unique neighborhood states for each stuck-at fault (if

structurally possible). In our experiments, s = 3 is empirically

chosen as an appropriate spreading exponent.
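The effect of the spreading exponent can be reproduced with a small sketch of the fault weight in (1). The function name `fault_weight` is illustrative, and clamping the base at zero once a fault has reached N established states is an assumption added here, not something the text specifies.

```python
def fault_weight(n, established, s=3):
    """Fault weight (N - AS)^s from (1).

    The base is clamped at zero when AS >= N (an assumption for this sketch).
    """
    return max(n - established, 0) ** s


# Worked example from the text: N = 5, AS(f1) = 2, AS(f2) = 3.
gap_s1 = fault_weight(5, 2, s=1) - fault_weight(5, 3, s=1)
gap_s3 = fault_weight(5, 2, s=3) - fault_weight(5, 3, s=3)
print(gap_s1, gap_s3)
```

Raising s from one to three widens the gap between the two faults from 1 to 19, which is what steers selection toward tests that help the fault with fewer established states.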

The weight for a test tj , denoted by TW(tj ) in (2), is the

sum of the weights of faults that are detected by tj . The

parameter NS(fi , tj ) indicates whether test tj establishes a

neighborhood state for fault fi that has not yet been established

by the tests already selected and placed into TN . Specifically,

if test tj generates a duplicate neighborhood state for fi , i.e.,

the state has been established by some other test in TN , then

NS(fi , tj ) = 0 resulting in the removal of FW(fi ) from TW(tj ).

Conversely, NS(fi , tj ) is set to one if adding tj to TN will

increase AS(fi ). In the preferred-state test selection phase, the

function P(fi , tj ) represents whether the state established by

tj is a preferred state. In the generic-state test selection stage,

P(fi , tj ) defaults to 1 for all i and j.
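The test-weight computation described above can be sketched as follows, assuming that (2) is the sum over detected faults of FW(fi) · NS(fi, tj) · P(fi, tj). The data layout (one neighborhood state string per detected fault) and the name `weight_of_test` are hypothetical choices for illustration only.

```python
from typing import Dict, Optional, Set


def weight_of_test(
    states_by_fault: Dict[str, str],
    fault_weights: Dict[str, int],
    established: Dict[str, Set[str]],
    preferred: Optional[Dict[str, Set[str]]] = None,
) -> int:
    """Assumed form of (2): TW(t) = sum of FW(f) * NS(f, t) * P(f, t).

    states_by_fault maps each fault detected by the test to the neighborhood
    state the test establishes for it. preferred=None models the generic-state
    phase, where P(f, t) is 1 for every fault and test.
    """
    total = 0
    for fault, state in states_by_fault.items():
        # NS is 0 when the state duplicates one already established in TN.
        ns = 0 if state in established.get(fault, set()) else 1
        # P is 1 only for preferred states during the preferred-state phase.
        p = 1 if preferred is None else int(state in preferred.get(fault, set()))
        total += fault_weights[fault] * ns * p
    return total


weights = {"f1": 27, "f2": 8}
test_states = {"f1": "101", "f2": "010"}
print(weight_of_test(test_states, weights, established={}))
print(weight_of_test(test_states, weights, established={"f1": {"101"}}))
```

In the second call the state for f1 is a duplicate, so only FW(f2) contributes to the weight.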

D. Test Selection

Given the neighborhood states established by the test set

TM and the test weights previously described, PATS performs

preferred-state test selection followed by generic-state test

selection, as illustrated in Fig. 4. In the preferred-state test

selection phase, only tests establishing the preferred states are

considered using P(fi , tj ) as described in (2).

Initially, when TN = ∅, the weights of all the faults are

equal to $N^s$. The test selected, tsel , is the one that detects

the most faults with preferred states. Test tsel is removed

from TM and added to TN . Fault and test weights are then

updated as described next. For each fault fi detected by

test tsel , if tsel establishes a new neighborhood state for fi

that has not been established by tests already selected into

TN , then the neighborhood state is marked as covered. The

number of established neighborhood states AS(fi ) is also

incremented by one, thereby reducing the weight FW(fi ) of

fault fi . The weights of detected faults are removed from

the weights of any currently-unselected tests that establish the

same neighborhood states. For instance, suppose tests t2 and

tsel (t2 ≠ tsel ) both detect f1 with a neighborhood state not

established by tests in TN . When tsel is chosen and added

to TN , the neighborhood state of f1 is marked as covered.

Test t2 no longer establishes a new neighborhood state for f1 ,

so FW(f1 ) is removed from TW(t2 ) by setting NS(f1 , t2 ) to

zero. Whenever a test is chosen from TM and added to TN ,

the fault and test weights are updated as just described. Ties

among tests with the highest weight are broken by arbitrarily

choosing one test. The preferred-state test selection procedure

continues selecting tests with the highest weight in the test set

TM until all testable faults have preferred states established,

which implies that each stuck-at fault is detected at least

once.

After preferred-state test selection, PATS enters the generic-state test selection phase. This phase is similar to the preferred-state test selection phase, except the termination condition and

the use of P(fi , tj ) are changed. Each P(fi , tj ) is set to one

permanently in the generic-state test selection phase. That is,

the weight of fault fi is added to the weight of test tj as long

as tj detects fi and establishes a unique neighborhood state

not yet established by tests in TN . The test weights are updated

before this phase begins due to the change in value of P(fi , tj ).

As in the preferred-state test selection phase, the procedure

continues by repeatedly selecting the highest-weighted test

followed by an update of the weights of the remaining tests.

The process stops however when the size of TN reaches the

user-provided constraint.
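The two-phase greedy procedure of this section can be sketched as below. This is an illustrative simplification under several assumptions that are not from the paper: weights are recomputed from scratch in every iteration rather than updated incrementally, the preferred-state phase ends when no remaining test has a positive weight, the size limit `max_tests` is enforced in both phases, and ties are broken by pool order. The function name `pats_select` and the data layout are hypothetical.

```python
from typing import Dict, List, Optional, Set

TestStates = Dict[str, str]  # fault -> neighborhood state established by a test


def pats_select(
    pool: Dict[str, TestStates],
    n_target: int,
    max_tests: int,
    s: int = 3,
    preferred: Optional[Dict[str, Set[str]]] = None,
) -> List[str]:
    """Greedy sketch: preferred-state phase (if given), then generic-state phase."""
    remaining = dict(pool)
    selected: List[str] = []
    established: Dict[str, Set[str]] = {}

    def fault_weight(fault: str) -> int:
        return max(n_target - len(established.get(fault, ())), 0) ** s

    def weight(states: TestStates, pref: Optional[Dict[str, Set[str]]]) -> int:
        total = 0
        for fault, state in states.items():
            if state in established.get(fault, ()):
                continue  # NS = 0: duplicate neighborhood state
            if pref is not None and state not in pref.get(fault, ()):
                continue  # P = 0: not a preferred state in this phase
            total += fault_weight(fault)
        return total

    phases = [preferred, None] if preferred is not None else [None]
    for pref in phases:
        while remaining and len(selected) < max_tests:
            best = max(remaining, key=lambda t: weight(remaining[t], pref))
            if weight(remaining[best], pref) == 0:
                break  # nothing left to gain in this phase
            selected.append(best)
            for fault, state in remaining.pop(best).items():
                established.setdefault(fault, set()).add(state)
    return selected


pool = {
    "t1": {"f1": "00", "f2": "01"},
    "t2": {"f1": "00"},
    "t3": {"f1": "11", "f2": "10"},
}
print(pats_select(pool, n_target=2, max_tests=2))
print(pats_select(pool, n_target=2, max_tests=2, preferred={"f1": {"11"}}))
```

In the first call t2 is never chosen because, once t1 is selected, its only neighborhood state is a duplicate. In the second call t3 is picked first because it is the only test that establishes a preferred state.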

E. Fault List Management

Initially, the fault list includes all uncollapsed stuck-at faults.

An uncollapsed fault list is used since the physical nets corresponding to a set of equivalent faults do not necessarily have

the same neighborhoods. Hence, it is necessary to consider

the neighborhood for each uncollapsed fault separately. For

an industrial design, the size of the fault list can therefore be

quite significant. To reduce fault simulation and test selection

time, the fault types listed below can be excluded.

1) Faults affecting clock signals: Faults affecting clocks are

likely to result in failures that are easy to detect, and thus

do not require explicit attention.

2) Faults detected by tests for the scan chain: These faults

affect the scan paths and will alter the logic values on the

fault sites in every scan-in/scan-out operation. Therefore,

faults detected by tests aimed at the scan chain are

relatively easy to detect and can also be safely ignored.

3) Faults affecting global lines: Here global lines are defined to be signal lines that have a large fanout. In this

paper, lines with fanout greater than eight are deemed

global. A line with a large fanout is likely to have a

strong drive capability, and therefore is less likely to

be controlled by its neighborhood. For example, if a

bridge defect affects a global line and one or more of

its neighbors, the defect is likely detected when the fault

involving the neighbor is sensitized. For a global line

affected by an open, it is likely that one of the many

floating fanout lines will be detected. For these reasons,

global lines are excluded from explicit consideration

during PATS.
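The three exclusion rules above amount to a simple filter over the uncollapsed fault list. The sketch below assumes a hypothetical `Fault` record whose flags (`on_clock`, `detected_by_scan_chain_test`) would have to be supplied by the design database and a scan-chain fault simulation; only the fanout threshold of eight comes from the text.

```python
from dataclasses import dataclass
from typing import Iterable, List

GLOBAL_FANOUT_THRESHOLD = 8  # lines with fanout greater than eight are deemed global


@dataclass(frozen=True)
class Fault:
    """Hypothetical record for one uncollapsed stuck-at fault."""
    net: str
    stuck_at: int
    fanout: int
    on_clock: bool = False
    detected_by_scan_chain_test: bool = False


def keep_fault(fault: Fault) -> bool:
    """Return True if the fault stays in the list considered by PATS."""
    if fault.on_clock:
        return False  # rule 1: faults affecting clock signals
    if fault.detected_by_scan_chain_test:
        return False  # rule 2: faults detected by scan-chain tests
    if fault.fanout > GLOBAL_FANOUT_THRESHOLD:
        return False  # rule 3: faults affecting global lines
    return True


def prune_fault_list(faults: Iterable[Fault]) -> List[Fault]:
    return [f for f in faults if keep_fault(f)]


faults = [
    Fault("clk_buf", 0, fanout=120, on_clock=True),
    Fault("scan_q17", 1, fanout=2, detected_by_scan_chain_test=True),
    Fault("rst_n", 0, fanout=9),
    Fault("alu_sum3", 1, fanout=3),
]
print([f.net for f in prune_fault_list(faults)])
```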

F. Neighborhood Management

As mentioned in Section III-A, the neighborhood of a

targeted line includes physical neighbors, driver-gate inputs,

and receiver-side inputs. For some neighborhoods, including

all three types of signal lines in a neighborhood may significantly increase the overall number of neighbors. To keep the

neighborhood size tractable for test selection, we construct

neighborhoods based on the following rules.

1) Include up to 16 physical neighbors with maximum

critical area: Some neighbors are more likely to be

problematic than others. Specifically, one neighbor may

have long parallel runs next to the targeted line while

another only “enters” the neighborhood with a small

footprint. We utilize critical area information [32] to

measure the physical proximity of two wires. Physical

neighbors are ordered according to their critical area

involving the targeted line. Up to 16 neighbors with the

greatest critical-area values are considered.

2) Include all the driver-gate inputs: Since a single cell

(gate) drives a targeted line, the number of driver-gate

inputs to be included in a neighborhood is usually small.

Therefore, driver-gate inputs are always included in the

neighborhoods.

3) Include receiver-side inputs only if the target is not a

global line: Excluding global lines also has the effect of

constraining the number of receiver-side inputs. Therefore, if the target is not a global line, all the receiver-side

inputs are included in the neighborhood.
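The neighborhood construction rules can be sketched as follows. The function name, the argument layout (a mapping from physical neighbor to its critical area with the targeted line), and the removal of duplicate lines are assumptions made for illustration; the limit of 16 physical neighbors and the fanout threshold of eight are the values stated in the text.

```python
from typing import Dict, List, Sequence


def build_neighborhood(
    physical: Dict[str, float],
    driver_inputs: Sequence[str],
    receiver_inputs: Sequence[str],
    target_fanout: int,
    max_physical: int = 16,
    global_fanout: int = 8,
) -> List[str]:
    """Apply rules 1 to 3 to one targeted line.

    physical maps each physical neighbor to its critical area with the target.
    """
    # Rule 1: keep the physical neighbors with the largest critical area.
    ranked = sorted(physical, key=physical.get, reverse=True)[:max_physical]
    neighborhood = list(ranked)
    # Rule 2: always include every driver-gate input.
    neighborhood += [n for n in driver_inputs if n not in neighborhood]
    # Rule 3: include receiver-side inputs only for non-global targets.
    if target_fanout <= global_fanout:
        neighborhood += [n for n in receiver_inputs if n not in neighborhood]
    return neighborhood


areas = {f"n{i}": float(i) for i in range(20)}
hood = build_neighborhood(areas, ["d0", "d1"], ["r0"], target_fanout=3)
print(len(hood), hood[:3])
```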

G. Top-Off Test Selection

In cases where PATS is applied together with other tests

such as stuck-at test, PATS can be used to “top-off” (augment)

the existing tests to maximize defect detection of the overall

tests. Top-off test selection is easily accomplished by: 1)

grading the existing tests using the PAN-detect metric, and 2)

using those results as the starting point for PATS. In other

words, in top-off test selection, TN is initialized using the

baseline tests. Next, among all tests in the test pool, only those

that establish neighborhood states not yet established by

the existing tests in TN are considered by PATS.
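A minimal sketch of the top-off starting point is given below. It assumes the baseline tests have already been graded, i.e., that the neighborhood state each baseline test establishes for each detected fault is known. The name `top_off_start` and the dictionary layout are hypothetical.

```python
from typing import Dict, Set, Tuple

TestStates = Dict[str, str]  # fault -> neighborhood state established by a test


def top_off_start(
    baseline: Dict[str, TestStates],
    pool: Dict[str, TestStates],
) -> Tuple[Dict[str, Set[str]], Dict[str, TestStates]]:
    """Seed the established states from the baseline and filter the pool."""
    established: Dict[str, Set[str]] = {}
    for states in baseline.values():
        for fault, state in states.items():
            established.setdefault(fault, set()).add(state)
    # Keep only pool tests that add at least one state not yet established.
    candidates = {
        test: states
        for test, states in pool.items()
        if any(state not in established.get(fault, set())
               for fault, state in states.items())
    }
    return established, candidates


baseline = {"b1": {"f1": "00"}}
pool = {"p1": {"f1": "00"}, "p2": {"f1": "01"}}
established, candidates = top_off_start(baseline, pool)
print(sorted(candidates))
```

Here p1 is dropped because the only state it establishes is already covered by the baseline test b1.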

V. Experiment Results

In this section, we present simulation and actual IC experiment results from applying PATS to two industrial designs, an

LSI test chip and an IBM ASIC. In addition, the effectiveness

of traditional N-detect and PAN-detect test is compared using

a fine-grained approach that uses diagnosis of failing test chips

from LSI.

A. LSI 90 nm Test Chip

The LSI test chip consists of seven blocks with a total of 352

64-bit arithmetic-logic units (ALUs), fabricated using 90 nm

technology. The design employs full-scan, where each block

has its own scan chain and scan ports. One ALU of a given

block is tested at a time, and consequently each ALU can be

deemed an independent combinational circuit from the testing

point of view. The experiment is therefore performed on one

ALU, consisting of 5684 logic gates, as a representative.

In applying PATS to the LSI test chip, a traditional 50-detect

test set is generated as the test pool using FastScan from

Mentor Graphics [45]. The test-set size constraint comes from

the size of a traditional 10-detect test set, which consists of

1243 patterns. The techniques described in Sections IV-E–

IV-G are not employed in this experiment due to the small

size of the ALU design. PATS is used to select a separate

physically-aware 10-detect test set (i.e., TN = T10 ) from the

50-detect test set (i.e., TM = T50 ) with a size no larger than

the traditional 10-detect test set. Physical neighborhoods are

extracted from the layout using a distance of 300 nm, which

is assumed to completely contain any defect affecting the

targeted line. Neighborhood state information achieved by the

50-detect test set is obtained by fault simulating the test set

using the fault tuple [46] simulator FATSIM [47]. Preferred-state test selection is performed followed by generic-state test

selection. In the rest of this paper, Nd and Ns will be used

in place of N when it is necessary to distinguish between the

number of detections (Nd ) and the number of detections with

unique neighborhood states (Ns ).

Table II shows a comparison of the 1-detect, 10-detect,

PATS, and 50-detect test sets. Although the PATS test set

performs slightly worse than the traditional 10-detect test set

based on the traditional N-detect metric (0.4% fewer faults are

detected with Nd ≥ 10 by the PATS test set), it significantly

outperforms the 10-detect test set based on the PAN-detect

metric. The number of neighborhood states established by the

10-detect test set is 3.85 million, while the PATS test set covers

4.55 million states, an 18.0% increase. Our test set also detects

4.7% more faults that satisfy the 10-detect requirement based

on the PAN-detect metric (i.e., Ns ≥ 10). These results show

that neighborhood state coverage can be significantly increased

over traditional N-detect with no increase in test execution cost

(i.e., test-set size is maintained). It should be noted that we

do not explicitly consider preferred states when calculating

the neighborhood state coverage or the value of Ns for the

considered faults. In other words, all the unique neighborhood

states are weighted the same.
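The percentage changes quoted above follow directly from the fault counts listed in Table II. The short check below uses only those counts; the helper name `percent_change` is an illustrative choice. The state counts are reported in rounded millions, so recomputing the 18.0% figure from 3.85 and 4.55 would differ slightly in the last digit.

```python
def percent_change(new: float, baseline: float) -> float:
    """Relative change of `new` with respect to `baseline`, in percent."""
    return 100.0 * (new - baseline) / baseline


# Fault counts for the 10-detect and PATS test sets, taken from Table II.
faults_nd = {"10-detect": 91_590, "PATS": 91_199}  # faults with Nd >= 10
faults_ns = {"10-detect": 53_090, "PATS": 55_604}  # faults with Ns >= 10

print(round(percent_change(faults_nd["PATS"], faults_nd["10-detect"]), 1))
print(round(percent_change(faults_ns["PATS"], faults_ns["10-detect"]), 1))
```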

Fig. 5 plots the distribution of the number of faults against

the number of neighborhood states established by each test

set. Specifically, the chart shows the number of faults (y-axis)

that satisfy the physically-aware Ns -detect metric for various

values of Ns (x-axis). The numbers from the 1-detect test

set form the lower bound, and the upper bound stems from

the 50-detect test set. Comparing the PATS test set with the

10-detect, it can be seen that for every established neighborhood state count (i.e., for every possible value of Ns ), the PATS

test set has an equal or greater number of faults. This implies

that the PATS test set detects more faults that satisfy the

physically-aware Ns -detect metric for each Ns , and the average

number of established neighborhood states is increased. The

result also shows that the overall neighborhood state count

is increased without reducing the state count achieved for

individual faults. Consequently, the PATS test set is more

effective than the traditional 10-detect test set when measured

by the PAN-detect metric.

Fig. 5. Comparison of the test sets based on the distribution of the number of faults versus the number of established states.

TABLE II

Comparison of Test-Set Sizes and Effectiveness of Traditional N-Detect and PATS

Test Set Properties                 | 1-Detect | 10-Detect | PATS   | 50-Detect | Percentage Change (%), PATS Versus 1-Detect | Percentage Change (%), PATS Versus 10-Detect
Test-set size                       | 165      | 1243      | 1243   | 5753      | 653.3                                       | 0
No. of established states (million) | 1.21     | 3.85      | 4.55   | 9.01      | 277.4                                       | 18.0
No. of faults with Nd ≥ 10          | 69 162   | 91 590    | 91 199 | 91 590    | 31.9                                        | −0.4
No. of faults with Ns ≥ 10          | 35 997   | 53 090    | 55 604 | 57 172    | 54.6                                        | 4.7

The PATS test set is used for wafer sort of the LSI test

chips. Each ALU in a chip is tested with the scan-chain flush,

1-detect, and PATS test sets, regardless of whether any other

ALU has failed. Among ∼140 dice that went through this flow,

the PATS test set uniquely failed one die. The die however also

failed the 1-detect test set during retest.¹ Since a scan retest is

not normally performed in a production test environment, the

DPPM reduction that could be achieved by PATS is ∼7000.

¹ The original and retest results could be different for many reasons, which include: 1) tester marginality; 2) defect behavior marginality; 3) circuit marginality; and/or 4) soft failures become hard failures under some previously applied tests.

TABLE III

Comparison of Test-Set Sizes and the Number of Neighborhood States Established

Test Set Properties        | 1-Detect    | PATS        | 20-Detect       | Percentage Change, PATS Versus 1-Detect | Percentage Change, 20-Detect Versus PATS
Test-set size              | 3594 (3431) | 5000 (5000) | 16 358 (15 328) | 39.12% (45.73%)                         | 227.16% (206.56%)
No. of established states  | 155.80M     | 243.48M     | 500.79M         | 56.28%                                  | 105.68%
No. of faults with Nd ≥ 10 | 3.00M       | 3.70M       | 3.89M           | 23.33%                                  | 5.14%
No. of faults with Ns ≥ 10 | 1.35M       | 1.70M       | 1.81M           | 25.93%                                  | 6.47%

Fig. 6. Distribution of neighborhood sizes.

B. IBM 130 nm ASIC

PATS is also applied to an IBM production ASIC, fabricated

using 130 nm technology. The chip design has nearly a million

gates, and the physical neighborhood information includes all

the signal lines within 0.6 μm of the targeted line. PATS is

used to select a physically-aware Ns =10-detect test set of

5000 test patterns from an Nd =20-detect test set of 16 358

patterns. Cadence Encounter Test [48] was used to generate the

20-detect test set. It was also used to fault simulate and extract

neighborhood states for both the IBM stuck-at test set and

the 20-detect test set. Techniques described in Sections IV-E–

IV-G are employed. Specifically, we use PATS to top-off the

existing stuck-at test patterns in order to maximize defect

detection during slow, structural scan-test. After applying the

rules described in Section IV-E, approximately four million

uncollapsed stuck-at faults are considered.

Fig. 6 shows the distribution of neighborhood sizes, i.e., the

number of faults (y-axis) that have the specified number of

neighbors (x-axis). Since driver-gate and receiver-side inputs

are included along with the actual physical neighbors, every

fault has at least one neighbor. From Fig. 6, it is evident that a

majority of faults have fewer than 20 neighbors. The plot would

have a much longer tail, however, if all faults and neighbors

were included (i.e., if techniques described in Section IV-F

were not applied).

TABLE IV

Production Test Details for the IBM ASIC

Test Type | No. of Patterns | Test Speed (MHz) | Fault Coverage (%)
Stuck-at  | 3594            | 33–50            | 98.73
IDDQ      | 4               | −                | −
PATS      | 5000            | 33–50            | 43.5
Delay (a) | 19 258          | 77–143           | 66.89

(a) Applied at low VDD.

For a design with scan, the neighborhood state of a targeted

line should be extracted after the scan-in operation but before

the capture-clock pulse. Some test patterns generated by

Encounter Test however involve multiple capture clocks [49]–

[51]. Determining fault detection when multiple capture clocks

are used is difficult and makes neighborhood state extraction

extremely complex. Sequential fault simulation over multiple clock cycles would be required to extract states of the

detected faults, a process that would increase analysis time

tremendously. We instead choose to ignore test patterns with

these characteristics in this experiment since the percentage

of faults uniquely detected by these complex patterns is very

small (< 0.01%).

A comparison of the 1-detect, physically-aware 10-detect,

and the 20-detect test sets for the IBM chip is shown in

Table III. The last two columns of Table III provide the

percentage of change for various test-set attributes for PATS

with respect to the original (1-detect) stuck-at test applied

by IBM and the 20-detect test pool used by PATS. The

size of each test set is given in the second row, where the

number in parentheses gives the number of test patterns whose

neighborhood states are easily extractable. The third row is

the total number of neighborhood states established by each

test set. Table III reveals that PATS achieves 56% more states

with only a 39% increase in test-set size over the 1-detect test

set. The traditional 20-detect test set establishes 1.06× more

neighborhood states than the PATS test set but at the cost of

a 2.27× increase in the number of tests. This means there are

patterns within the 20-detect test set that do not establish new

neighborhood states, implying inefficient use of test resources

in the generation and application of traditional N-detect tests.

The fourth and fifth rows show the number of faults that have

Nd ≥ 10 and Ns ≥ 10, respectively. Although the 20-detect

test set has more faults reported for both metrics, its advantage

over PATS is not significant and comes at the cost of a much

larger pattern count.

Fig. 7 plots the number of faults (y-axis) that have ≥Ns

detections with unique states for various values of Ns (x-axis).

For clarity, the tails of the curves are not shown in Fig. 7. It can

be observed that PATS and the 20-detect test set are very close

for Ns ≤ 10, thus demonstrating the ability of PATS to boost

neighborhood state counts for faults with fewer established

states. For Ns > 10, PATS gradually departs from the 20-detect test set as expected since we do not target faults that

have Ns ≥ 10. Test resources are therefore not wasted on

well-tested faults that are not targeted.

Fig. 7. Analysis of N-detect and PATS test sets based on the distribution of

the number of faults versus Ns .

Fig. 8. IBM test results.

The production test for the IBM chip, applied in the

following sequence, included scan-based stuck-at test, IDDQ,

PATS, and delay test. These tests are applied in a stop-on-first-fail environment, i.e., once a chip fails any test,

subsequent tests are not applied to it. Features of the various test

sets are shown in Table IV. The stuck-at and PATS test sets

are applied with a latch launch-capture speed between 33 MHz

and 50 MHz. Delay test is applied at different speeds, mostly

ranging from 77 MHz to 143 MHz, but is performed at low

supply voltage (VDD). For IDDQ, four measurements are

taken. Fault coverage, where known, is reported using the

appropriate test metric. For stuck-at tests, coverage is simply

the percentage of stuck-at faults detected. For delay test, it is

the percentage of transition faults detected. Finally, for PATS,

coverage is the percentage of stuck-at faults that have Ns ≥ 10

or $N_s = 2^n < 10$, where n is the number of neighbors of a

targeted line.
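The PATS coverage criterion can be expressed as a small predicate. This sketch assumes the second condition means that a fault whose neighborhood has fewer than ten possible states (2^n < 10 for n neighbors) counts as covered once all 2^n states are established. The function name `pats_covered` is hypothetical.

```python
def pats_covered(ns: int, n_neighbors: int, target: int = 10) -> bool:
    """Return True if a fault counts toward PATS coverage.

    ns is the number of unique neighborhood states established for the fault,
    and n_neighbors is the number of neighbors of its targeted line.
    """
    max_states = 2 ** n_neighbors
    if ns >= target:
        return True
    # Small neighborhoods: every possible state has been established.
    return max_states < target and ns == max_states


print(pats_covered(ns=10, n_neighbors=5))  # reaches the target of ten states
print(pats_covered(ns=8, n_neighbors=3))   # all 2**3 = 8 states established
print(pats_covered(ns=8, n_neighbors=4))   # 8 of 16 states, below the target
```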

The outcome of the production test for nearly 100 000 IBM

chips is described in Fig. 8. Of the 99 718 parts that passed the

stuck-at test, 57 parts failed the IDDQ test, 16 failed the PATS

tests, and 330 failed delay test. The 16 chips that failed the

PATS tests were subjected to retest using the transition-fault

delay tests and logic BIST. Logic BIST runs at-speed at about

300 MHz under nominal VDD without any signal weighting,

and applies 42 000 test patterns. Eight of the 16 chips that

failed the PATS tests were detected by the delay test which

means its application in production reduced DPPM by about

80. Of the remaining eight chips, five were detected by logic

BIST. Thus, the addition of logic BIST to the production flow

implies that DPPM reduction solely due to PATS would be

approximately 30.
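The DPPM figures quoted in this section are consistent with dividing the number of uniquely failing parts by the tested population and scaling to one million. The sketch below assumes the 99 718 parts that passed the stuck-at test as the IBM population and the roughly 140 dice as the LSI population; the choice of denominator is an assumption, since the text does not state it explicitly.

```python
def dppm(unique_fails: int, population: int) -> float:
    """Defective parts per million attributable to a set of unique fails."""
    return unique_fails / population * 1_000_000


ibm_population = 99_718  # parts that passed the stuck-at test

# Eight PATS fails were also caught by the delay test on retest.
print(round(dppm(8, ibm_population)))
# Three PATS fails were caught by neither the delay test nor logic BIST.
print(round(dppm(3, ibm_population)))
# LSI test chip: one uniquely failing die among about 140 dice.
print(round(dppm(1, 140)))
```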

Together, logic BIST and delay test apply over 60 000

patterns which is 11× more than the number of PATS tests.

Even with this large difference, PATS is able to uniquely fail

three chips. With respect to the delay test patterns alone, eight

chips are uniquely failed even though nearly 3× more patterns

are applied. It is likely the effectiveness of the 5000 PATS test

patterns can be further improved if, along with the stuck-at

tests, the delay test and BIST patterns are graded and used as

the starting point for PATS.

C. N-Detect Versus PAN-Detect

In this experiment, we directly compare the effectiveness

of traditional N-detect and PAN-detect using a fine-grained,

test-metric evaluation technique [17], [52], [53]. Specifically,

diagnosis is used to identify the defective site(s) that established the first evidence of failure. The test patterns that

sensitize this site are then examined to compare the traditional

N-detect and PAN-detect test. This approach to measuring

the effectiveness of a test methodology is quite general and

very precise, and can be used to examine the utility of a

test method without requiring additional test

patterns. This characteristic is demonstrated using fail logs

from LSI test chips (note that this design is different from the

one used in Section V-A). This chip consists of 384 64-bit

ALUs that are interconnected through multiplexers and scan

chains, and is fabricated using a 110 nm process. Each ALU

has approximately 3000 gates, 10 220 stuck-at faults, and is

subjected to scan-chain integrity test, scan-based stuck-at test,

and IDDQ test. The stuck-at test of an ALU consists of ∼260

scan-test patterns, achieving >99% stuck-at fault coverage.

We first select failing chips that are suitable for our analysis.

These failing chips must be: 1) diagnosable, and 2) hard

to detect. Here diagnosable means that suspect site(s) that

established the first evidence of failure can be identified, and

hard to detect means that the identified suspect was sensitized

at least once before the chip failed, i.e., the chip would not

necessarily be detected by a 1-detect test set. These chips are

the target of N-detect and PAN-detect test, and therefore are the

subject of our analysis. The chip selection results are shown

in Fig. 9. From the 2533 chips in the stuck-at failure logs, 720

chips are diagnosable and 87 of them are hard-to-detect. The

87 chips are partitioned into two categories: 28 chips have only

one suspect, and 59 have two or more suspects, each of which

could alone cause the chip’s first failing pattern (FFP). The 28

failing chips are of particular interest since we have significant

confidence in the failure location identified by diagnosis. The

59 failing chips with multiple suspects are also of interest,

however, since best-, worst-, and average-case analyses can be

performed.

Fig. 9. LSI failing chip selection.

TABLE V

Number of Detections Nd and the Number of Unique States Ns for the 26 LSI Chip Failures That Have One Suspect for the Chip’s FFP

Nd           | 2  | 3 | 3 | 4 | 5 | 5 | 7 | 7 | 9 | 9 | 10 | 30
Ns           | 2  | 2 | 3 | 4 | 4 | 5 | 6 | 7 | 8 | 9 | 9  | 30
No. of Chips | 11 | 1 | 3 | 2 | 1 | 1 | 1 | 1 | 1 | 1 | 2  | 1

For two of the 28 single-suspect failing chips, the chips’

FFPs established a neighborhood state that has been established by some previously applied test patterns. In other words,

the two chips failed due to some mechanism that is not

accounted for by the PAN-detect test. If the driver-gate inputs

of physical neighbors are included in the neighborhoods,

however, the FFPs of the two hard-to-detect chips establish

new neighborhood states for the suspects of these chips. This

implies that the two chips are likely to be affected by bridge

defects whose manifestation is determined by the driving

strength of the bridged neighboring nets of the identified

suspect. N-detect test is considered effective in detecting these

two particular chips since the chips’ FFPs increase the number

of detections for the suspects and detect the chip failures.

For the remaining 26 single-suspect failing chips, the chips’

FFPs established a new neighborhood state that has never been

established by any of the previously applied test patterns. PAN-detect test is deemed effective in detecting these failing chips.

We further examine the test of the suspects with respect to Nd

and Ns using the test patterns up to and including the FFP.

Table V shows the distribution of Nd and Ns for the 26

failing chips. For each chip, multiple states were encountered

before the chip failed. We further examine one failing chip that

has Nd = 10 and Ns = 9. Table VI shows the neighborhood

states established by the applied test patterns up to and including the FFP (i.e., test 19) for this particular chip. The suspect

was sensitized ten times with nine different neighborhood

states, and the chip did not fail until test 19 was applied and

established a new neighborhood state. Note that none of these

26 hard-to-detect failing chips would necessarily be detected

by a traditional N-detect test set.

TABLE VI

Established Neighborhood States and the Detection Status for a Sample Failing Chip

Test ID | Suspect Sensitized | State   | Pass/Fail
6       | Yes                | 1101111 | Pass
7       | Yes                | 1111110 | Pass
8       | Yes                | 1000100 | Pass
9       | Yes                | 1111101 | Pass
10      | Yes                | 1110110 | Pass
11      | Yes                | 1101100 | Pass
12      | Yes                | 1110100 | Pass
13      | Yes                | 1000100 | Pass
14      | Yes                | 1010100 | Pass
19      | Yes                | 0111011 | FAIL

Fig. 10. Histograms of the ratio R for the 59 multiple-suspect failing chips.

For the 59 chips with multiple suspects, we calculate a

ratio R for each chip, where R is the percentage of suspects

for which the chip’s FFP establishes a new neighborhood

state among all the suspects of the chip. Fig. 10 shows the

histogram of R for the 59 multiple-suspect failing chips. It can

be observed that for 39 failing chips, the chips’ FFPs establish

new neighborhood states for all the suspects. The FFPs of the

remaining 20 failing chips establish new neighborhood states

for at least one but not all of the suspects.
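The ratio R for one multiple-suspect chip can be computed as sketched below. The data layout (the neighborhood state the FFP establishes for each suspect, and the set of states established for each suspect by earlier patterns) and the name `ratio_r` are illustrative assumptions.

```python
from typing import Dict, Set


def ratio_r(ffp_states: Dict[str, str], prior_states: Dict[str, Set[str]]) -> float:
    """Percentage of suspects for which the FFP establishes a new state."""
    if not ffp_states:
        raise ValueError("a chip needs at least one suspect")
    new = sum(
        1
        for suspect, state in ffp_states.items()
        if state not in prior_states.get(suspect, set())
    )
    return 100.0 * new / len(ffp_states)


# Two suspects: the FFP state is new for s2 but a repeat for s1.
ffp_states = {"s1": "101", "s2": "110"}
prior_states = {"s1": {"101", "001"}, "s2": {"000"}}
print(ratio_r(ffp_states, prior_states))
```

A chip with R = 100 corresponds to the 39 chips for which the FFP establishes new neighborhood states for all suspects.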

We draw the following conclusions from analyzing Tables V

and VI, and Fig. 10.

1) For defective chips that are hard to detect, it appears

there is some particular neighborhood state required for

defect activation. PAN-detect test is therefore needed to

detect these chips.

2) For any of the failing chips, it is possible that some state

before the chip’s FFP activated the defect and sensitized

the defect site to some observable point but did not cause

failure due to timing issues. However, these cases are

beyond the scope of this paper since N-detect inherently

focuses only on static defects, i.e., the defects that do

not depend on the timing or sequence of the applied test

patterns.

3) Similar observations are made for the chips from Fig. 9

not successfully diagnosed. All of these chips, for at

least one failing pattern, appeared to have suffered from

at least two, simultaneously faulty lines. Because of this

characteristic, they too are not directly targeted by N-detect.

VI. Sensitivity to ATPG Parameters

For some test methods, the parameters used for ATPG can

have significant impact on test effectiveness, such as the bridge

fault model (e.g., distance used for extracting bridges) [7], [8],

[54] and the input pattern fault model (e.g., hierarchy level

at which the gate/cell is exhaustively tested) [10]. For PAN-detect test, the definition of the neighborhood will affect test

effectiveness. The neighborhood of a targeted line includes the

physical neighbors, driver-gate inputs, and receiver-side inputs.

Any signal line within a distance d from the targeted line is

deemed to be a physical neighbor. If d is small, the extracted

physical neighbors may not include all the signal lines that

are likely to influence the targeted line. With a large d, the

neighborhood likely includes all the signal lines that could

affect the targeted line, but may also include some extraneous

lines that will likely never cause the targeted line to fail. One

approach to guide the selection of d is to use the test-metric

evaluation technique applied in Section V-C. Another option

is to analyze the defect size distribution (if available) of the

manufacturing process used to fabricate the circuit under test.

The neighborhood is not limited to the three types of signal

lines used in this paper but can include other types of neighbors as well. For example, diagnosis procedures described

in [30] and [55] use driver-gate inputs of physical neighbors

instead of the physical neighbors themselves. It is known that

driver-gate input values can have an impact on the voltage

level (i.e., its strength) of a signal line [8]. Using driver-gate

inputs of physical neighbors instead of the physical neighbors

therefore increases the likelihood of detecting defects where

drive strength influences defect manifestation.

The types of signal lines included in the neighborhood, as

well as the distance d used, will impact the effectiveness

of PAN-detect. In the following, we utilize the test-metric

evaluation technique [17], [52], [53] and extend the analysis

described in Section V-C to demonstrate how existing test data

can be exploited to determine the value of d.

The 28 hard-to-detect and single-suspect failing chips analyzed in Section V-C are further analyzed in this experiment.

The objective is to identify the smallest distance dc, s where

PAN-detect test is effective in detecting a defect that affects

suspect s of chip c. In other words, the FFP of chip c should

establish a new neighborhood state for suspect s of c, where

the neighborhood of s includes the driver-gate inputs, receiver-side inputs, and physical neighbors that are within distance dc, s

of the suspect.

The procedure for identifying dc, s is as follows. For suspect

s of a hard-to-detect, single-suspect failing chip c, the physical

neighbors of s are extracted using the smallest distance dmin .

Next, we examine whether the neighborhood state established

by the FFP has been established by previous test patterns. If the

neighborhood state is newly established, then the distance used

is recorded as dc, s and the process terminates. Otherwise, d is

increased by dstep and the aforementioned steps are repeated.

Fig. 11. Distribution of the distance dc, s for the suspects of the 28 hard-to-detect and single-suspect failing ALU chips fabricated in 110 nm technology.

Fig. 12. Distribution of the distance dc, s for all the suspects of the 2533

failing ALU chips.

The procedure continues until dc, s is found, i.e., the PAN-detect metric captures failing chip c by considering physical

neighbors extracted using dc, s .
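The search for dc, s can be sketched as a simple loop over increasing distances. In this sketch, `state_at` is a hypothetical callback that returns the neighborhood state of the suspect under a given test when physical neighbors are extracted at distance d; the upper bound `d_max` and the toy data are assumptions for illustration, not values from the experiment.

```python
from typing import Callable, Hashable, Iterable, Optional


def smallest_effective_distance(
    state_at: Callable[[str, int], Hashable],
    ffp: str,
    prior_tests: Iterable[str],
    d_min: int = 0,
    d_step: int = 20,
    d_max: int = 1000,
) -> Optional[int]:
    """Return the smallest d (in nm) at which the FFP state is new, else None."""
    prior = list(prior_tests)
    d = d_min
    while d <= d_max:
        ffp_state = state_at(ffp, d)
        # The state is newly established if no earlier test produced it.
        if all(state_at(test, d) != ffp_state for test in prior):
            return d
        d += d_step
    return None


# Toy data: line "a" is a driver-gate input (distance 0); "b" and "c" are
# physical neighbors at 40 nm and 100 nm from the suspect.
DIST_NM = {"a": 0, "b": 40, "c": 100}
VALUES = {
    "t1": {"a": 1, "b": 0, "c": 0},
    "ffp": {"a": 1, "b": 0, "c": 1},
}


def toy_state_at(test: str, d: int):
    return tuple(VALUES[test][n] for n in sorted(DIST_NM) if DIST_NM[n] <= d)


d_cs = smallest_effective_distance(toy_state_at, "ffp", ["t1"])
print(d_cs)
```

In the toy data the FFP differs from the earlier test only on line c, so the state becomes new once the extraction distance reaches 100 nm.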

In this experiment, we use dmin = 0 and dstep = 20 nm.

Using d = dmin = 0 corresponds to the case where only

driver-gate inputs and receiver-side inputs are included in the

neighborhood. Physical neighbors are considered for d > 0.

The distribution for dc, s for the 28 single-suspect LSI ALU

test chips is shown in Fig. 11. The blue curve represents

the number of suspects (i.e., the number of chips) where

PAN-detect is effective using the corresponding distance (x-axis). Fig. 11 shows that the distance required for PAN-detect

test to capture all single-suspect failing chips is 600 nm (i.e.,

max dc, s = 600 nm), although using a distance of 220 nm

is sufficient for the majority of the chips. To analyze the

effect of including different types of neighbors, we repeat the

aforementioned procedure but replace physical neighbors with

driver-gate inputs of physical neighbors. The results are shown

as the red curve in Fig. 11. It can be observed that when

driver-gate inputs of physical neighbors are used, the distance

required for PAN-detect to be effective is smaller at 220 nm.

For statistically significant results, we perform the same

analysis for all available failing chips. In this experiment, a

site is deemed a suspect if the stuck-at fault response of the

site sensitized by the FFP exactly matches the failing-pattern

tester response. The distribution for dc, s for the suspects of all

the LSI ALU failing chips is shown in Fig. 12. The peak at

distance 0 is due to the suspects for the easy-to-detect failing

chips that exhibit stuck-at behavior. For other chips, the trend

is similar to what we observed for the 28 hard-to-detect, single-suspect

failing chips. Note that the value of dc, s for about 5000

suspects examined in the experiment is greater than 1000 nm.

The count of these suspects is shown as the rightmost data

point on the x-axis. It is likely that these suspects are not the

real defect sites, and therefore altering the neighborhoods for

these suspects does not affect defect detection.

Fig. 13. Neighborhood size as a function of distance d averaged over all the signal lines in the LSI test chip.

It can be expected that as d increases, the size of neighborhood for a signal line increases as well. On the other hand,

for the same distance, including driver-gate inputs of physical

neighbors is expected to result in a larger neighborhood size

than just including physical neighbors. This is because a

signal line typically has two or more driver-gate inputs (except

the outputs of buffers or inverters). To show the impact of

increasing d, we plot the neighborhood size as a function of

d for all the signal lines in the LSI test chip, and calculate

the average over all the signal lines. The blue and the red

curves in Fig. 13 show the average neighborhood size as a

function of d when physical neighbors and driver-gate inputs

of physical neighbors are used, respectively. In general, the

average neighborhood size constructed using driver-gate inputs

of physical neighbors is approximately 50%–60% larger than

the one constructed using physical neighbors except when

d ≤ 200 nm. Using driver-gate inputs of physical neighbors

extracted with d ≥ 220 nm leads to a larger neighborhood

size than using physical neighbors extracted with d = 700 nm.

Although including driver-gate inputs of physical neighbors

in the neighborhood allows a smaller distance for PAN-detect

test to capture all the failing chips, the resulting neighborhoods

are still larger than those constructed using physical neighbors

extracted with a larger d.
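The comparison of the two neighborhood constructions can be illustrated with a small sketch. It assumes a hypothetical data layout in which `distances` maps each signal line to the distance (in nm) to every other line, `driver_inputs` maps a line to the inputs of its driving gate, and `base` holds the logical neighbors that are always included (the d = 0 case); none of these names come from the original flow.

```python
from typing import Dict, List, Set, Tuple


def average_neighborhood_sizes(
    distances: Dict[str, Dict[str, float]],
    driver_inputs: Dict[str, List[str]],
    base: Dict[str, Set[str]],
    d: float,
) -> Tuple[float, float]:
    """Average neighborhood size over all lines for the two constructions.

    Returns (average using physical neighbors,
             average using driver-gate inputs of physical neighbors).
    """
    phys_total = 0
    drv_total = 0
    for line, dist in distances.items():
        # Physical neighbors: lines routed within distance d of this line.
        physical = {n for n, nm in dist.items() if nm <= d}
        # Alternative: replace each physical neighbor by its driver-gate inputs.
        drivers: Set[str] = set()
        for n in physical:
            drivers.update(driver_inputs.get(n, ()))
        drivers.discard(line)  # a line is not its own neighbor
        always = base.get(line, set())
        phys_total += len(always | physical)
        drv_total += len(always | drivers)
    count = len(distances)
    return phys_total / count, drv_total / count


# Hypothetical three-line layout.
distances = {
    "x": {"y": 100, "z": 300},
    "y": {"x": 100, "z": 150},
    "z": {"x": 300, "y": 150},
}
driver_inputs = {"x": ["a", "b"], "y": ["c", "d"], "z": ["e"]}
base = {"x": {"a", "b"}, "y": {"c", "d"}, "z": {"e"}}
avg_physical, avg_driver = average_neighborhood_sizes(distances, driver_inputs, base, d=200)
```

Because most gates have two or more inputs, each physical neighbor tends to contribute more than one line under the second construction, which is why that neighborhood grows faster with d.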

VII. Conclusion

A PATS method was described for generating compact

PAN-detect test sets. PATS utilized the PAN-detect metric,

which exploits defect locality but does not presume specific

defect behaviors, to guide the selection of test patterns. By

exploring conditions that were more likely to activate and

detect arbitrary, unmodeled defects, the defect detection

characteristics of the resulting test set can be improved.

Compared to traditional N-detect test sets, the quality of

PATS test sets was enhanced without any increase in test

execution cost. The effectiveness of PATS and PAN-detect

was evaluated using tester responses from LSI test chips

and an IBM ASIC. Results demonstrated that utilizing the

PAN-detect metric in ATPG helps improve defect detection.

PATS can be easily integrated into an existing testing flow,

and can be used to generate new or top-off test sets. If a

PAN-detect test set is provided, PATS can be used as a

static compaction technique. PATS can also be used to improve

diagnosis accuracy by creating additional test patterns for

suspect sites. Specifically, knowledge of which neighborhood

states lead to defect activation and which ones do not enables

the extraction of various defect characteristics [30].

The greedy, selection-based approach inherent in PATS,

together with the heuristics for handling large fault lists and

neighborhoods, makes PATS efficient and therefore applicable

to large, industrial designs. Moreover, the PAN-detect

metric, which is at the center of PATS, is quite general

in that it subsumes all other static test metrics, and can be

easily extended to handle all sequence-dependent defects

as well. PATS therefore provides a cost-effective approach

for generating compact, PAN-detect test sets, which have

better detection capability for emerging, unmodeled defects.

For products that require high quality (i.e., defect level

<100 DPPM), PATS and PAN-detect can be effectively used

to augment traditional test methods.

Acknowledgment

The authors would like to thank B. Keller from Cadence

Design Systems, Inc., San Jose, CA, for his valuable feedback

on Encounter Test. The authors would also like to thank LSI

Corporation, Milpitas, CA, for providing design and tester data

for the ALU test chips. We would like to thank M. Kassab for

his work in extracting the neighborhood information for the

IBM ASIC, and C. Fontaine for performing all of the retest and

characterization experiments of the failing IBM chips, both

from IBM Microelectronics, Essex Junction, VT. Finally, the

authors would like to thank J. Brown from Carnegie Mellon

University, Pittsburgh, PA, for his help with extracting physical

neighborhood information for the LSI test chips.

References

[1] S. Sengupta, S. Kundu, S. Chakravarty, P. Parvathala, R. Galivanche,

G. Kosonocky, M. Rodgers, and T. M. Mak, “Defect-based test: A key

enabler for successful migration to structural test,” Intel Technol. J., pp.

1–14, Jan.–Mar. 1999.

[2] International Technology Roadmap for Semiconductors. (2009). Test and

Test Equipment [Online]. Available: http://www.itrs.net

[3] S. C. Ma, P. Franco, and E. J. McCluskey, “An experimental chip to

evaluate test techniques experiment results,” in Proc. Int. Test Conf.,

Oct. 1995, pp. 663–672.

[4] P. Nigh, W. Needham, K. Butler, P. Maxwell, R. Aitken, and W. Maly,

“So what is an optimal test mix? A discussion of the SEMATECH

methods experiment,” in Proc. Int. Test Conf., Nov. 1997, pp. 1037–

1038.

[5] E. J. McCluskey and C.-W. Tseng, “Stuck-fault tests vs. actual defects,”

in Proc. Int. Test Conf., Oct. 2000, pp. 336–342.

[6] M. E. Amyeen, S. Venkataraman, A. Ojha, and S. Lee, “Evaluation of

the quality of N-detect scan ATPG patterns on a processor,” in Proc. Int.

Test Conf., Oct. 2004, pp. 669–678.

[7] K. C. Y. Mei, “Bridging and stuck-at faults,” IEEE Trans. Comput., vol.

C-23, no. 7, pp. 720–727, Jul. 1974.

[8] J. M. Acken and S. D. Millman, “Accurate modeling and simulation of

bridging faults,” in Proc. Custom Integr. Circuits Conf., May 1991, pp.

12–15.

[9] S. Venkataraman and S. Drummonds, “A technique for logic fault

diagnosis of interconnect open defects,” in Proc. VLSI Test Symp., Apr.–

May 2000, pp. 313–318.

[10] R. D. Blanton and J. P. Hayes, “Properties of the input pattern fault

model,” in Proc. Int. Conf. Comput. Des., Oct. 1997, pp. 372–380.

[11] Y. Levendel and P. Menon, “Transition faults in combinational circuits:

Input transition test generation and fault simulation,” in Proc. Fault

Tolerant Comput. Symp., 1986, pp. 278–283.

[12] J. A. Waicukauski, E. Lindbloom, B. K. Rosen, and V. S. Iyengar,

“Transition fault simulation,” IEEE Des. Test Comput., vol. 4, no. 2,

pp. 32–38, Apr. 1987.

[13] B. Benware, C. Schuermyer, N. Tamarapalli, K.-H. Tsai, S. Ranganathan, R. Madge, J. Rajski, and P. Krishnamurthy, “Impact of

multiple-detect test patterns on product quality,” in Proc. Int. Test Conf.,

Sep.–Oct. 2003, pp. 1031–1040.

[14] K. Y. Cho, S. Mitra, and E. J. McCluskey, “Gate exhaustive testing,” in

Proc. Int. Test Conf., Nov. 2005, pp. 771–777.

[15] R. D. Blanton, K. N. Dwarakanath, and A. B. Shah, “Analyzing the

effectiveness of multiple-detect test sets,” in Proc. Int. Test Conf., Sep.–

Oct. 2003, pp. 876–885.

[16] Y.-T. Lin, O. Poku, N. K. Bhatti, and R. D. Blanton, “Physically-aware

N-detect test pattern selection,” in Proc. Des., Automat. Test Eur., Mar.

2008, pp. 634–639.

[17] Y.-T. Lin, O. Poku, R. D. Blanton, P. Nigh, P. Lloyd, and V. Iyengar,

“Evaluating the effectiveness of physically-aware N-detect test using real

silicon,” in Proc. Int. Test Conf., Oct. 2008, pp. 1–9.

[18] M. L. Bushnell and V. D. Agrawal, Essentials of Electronic Testing

for Digital, Memory, and Mixed-Signal VLSI Circuits. Boston, MA:

Kluwer, 2000.

[19] S. Spinner, I. Polian, P. Engelke, B. Becker, M. Keim, and W.-T. Cheng,

“Automatic test pattern generation for interconnect open defects,” in

Proc. VLSI Test Symp., May 2008, pp. 181–186.

[20] X. Lin and J. Rajski, “Test generation for interconnect opens,” in Proc.