EYP102607_FINAL - Northwestern University Information

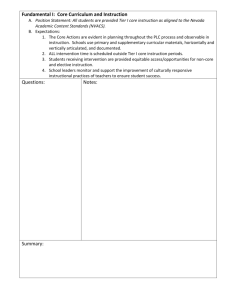


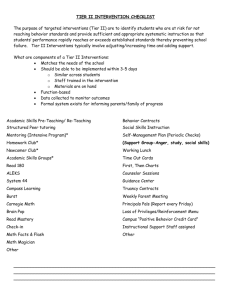

Northwestern University Dialogue on October 26, 2007

EYP Mission Critical Facilities, Inc.
200 West Adams Street, Suite 2750
Chicago, IL 60606
312-846-8500

Albany – Atlanta – Chicago – Dallas – London – Los Angeles – Middletown – New York City – San Francisco – Washington DC – White Plains

Agenda
- Firm Overview and Experience
- Research University Data Center Trends
- Q&A

Firm Overview
- EYP A&E founded in 1972; EYP MCF becomes stand-alone firm in 2001
- 300-person international MEP/FP Engineering Design, MEP/FP Systems Operations and IT Consulting firm
- 95% of current projects are data centers
- Best-in-class experience in planning, design, construction, migration and project management of HPC and Tiered Data Centers
- HPC and high-density cooling thought leadership
- Designed fifteen 15+ MW data centers, including five 35+ MW data centers
- In-house building performance simulation tools to develop cost/benefit strategies and reduce unnecessary costs
  - CFD Modeling
  - Reliability Modeling
  - Energy Modeling
  - Cost Modeling

EYP MCF Firm Overview
- Designed 32 million sq. ft. of data centers
- Planned and designed 50+ Greenfield data centers since 2001
- 800 MW power/back-up power systems design since 2001
- 200,000 tons of critical cooling design in the last 5 years
- 23 million sq. ft. of data center risk, reliability & feasibility assessments
- Commissioned 15 million sq. ft.
of critical facilities
- Breadth of experience to bring multiple options to the table
- Designing in flexibility & scalability
- We know higher costs don’t equate with higher reliability & efficiency
- Team very driven by client satisfaction, trust, communication, and long-term relationships

EYP MCF Strategic Industry Leadership
- Collaborating with IBM, HP and Dell, we understand the evolution taking place in computing technologies – also assessing & designing this trio’s own data centers
- LBNL data center energy consumption benchmarking study
- Close working relationship with the Uptime Institute/Computer Site Engineering
- Vendor, contractor and real estate relationships
- Industry and International Publications

EYP MCF Chicago Office, est. January 2004
- Office at 200 West Adams Street, one block east of Sears Tower
- EYP MCF Center of Excellence re:
  - Strategic Consulting for IT and facilities infrastructure
  - Greenfield data centers
  - High-performance computing
  - Sustainability/energy efficiency/LEED
  - Building performance simulation
- EYP MCF Chicago’s accomplishments over the last 3 years:
  - 200+ projects equating to $2+ Billion in construction
  - 60 corporate and institutional clients
  - 40 talented professionals on staff
  - 30 invitations from national organizations to present and publish our thought-leader expertise in the design of high-reliability and high-performance facilities

Representative Clients

Representative Clients – Higher Education

Representative Clients – HPC

Representative Experience

University of Illinois
- Providing strategic consulting services
- Determining the best options for data center consolidation
- Determining the requirements for a purpose-built data center to accommodate future enterprise computing needs
- Schematic design and ROM cost estimates
- Location: Urbana-Champaign, IL

NCSA/UIUC
- New Greenfield 81,000 sq. ft. building housing 28,200 sq. ft.
of scalable HPC machine rooms
- Master planning, feasibility study, programming, data center layout, development of power and cooling loads and high-level design concepts, and ROM construction cost estimating
- Requires in excess of 8,000 tons of chilled water and in excess of 25 MW of power

Indiana University
- 80,000 sq. ft. data center part of new Greenfield Cyber Infrastructure Building complex
- Provided programming, conceptual design, order of magnitude cost estimating, design development-level drawings, construction documents peer reviews, and construction administration services
- 20,000 sq. ft. of research computing raised floor for academic research computing
- 10,000 sq. ft. of Tier III raised floor for enterprise data center
- Anticipated load densities of 100-300 W/sq. ft.
- Also, data center risk assessments and feasibility studies of three existing sites to support future research computing expansion scenarios
- Location: Bloomington, IN

Children’s Memorial Hospital, Chicago, IL
- Overall Technology Infrastructure Consultant for a new 1 million square foot replacement hospital building
- Performing a variety of services throughout the planning, design, construction, and migration phases
  - Delivering IT/network and medical technology consulting
  - Conceptual designs
  - Final designs
  - Migration planning/execution
  - Project management of the entire technology infrastructure build-out and the eventual migration efforts

San Diego Supercomputer Center at UCSD
- 13,800 sq. ft. supercomputing machine room
- Data Center Assessment, CFD Modeling, and Master Planning for multiple upgrade/expansion scenarios
- Analysis of load increase from 100 W/sq. ft. to 150 W/sq. ft. to 300+ W/sq. ft.
- Location: San Diego, CA

Rensselaer Polytechnic Institute (opened September 2007)
- Ranked #7 on TOP500 June 2007 list
- New 70 TFlops Computational Center for Nanotechnology Innovation (CCNI)
- Conversion of existing manufacturing building into a new, state-of-the-art computing facility with BlueGene/L machine and
blade servers
- 5,000 sq. ft. of 48” raised floor area at 250-300 W/sq. ft. (zones approaching 600 W/sq. ft.)
- 7,500 sq. ft. of office/hoteling area and 7,500 sq. ft. of mechanical/electrical support space
- Location: Troy, NY

Argonne National Laboratory (opened October 10, 2007)
- Goal is to be in Top 3 on TOP500 June 2008 list
- New Interim Supercomputing Support Facility (ISSF) within existing high-bay lab building
- Supports a BlueGene/P supercomputer with 44 kW per rack loads
- ISSF will house a 100 TFlops system, expandable to 500 TFlops and then to a 1 PFlop system
- Master planning, feasibility study, and conceptual design for new Greenfield 160,000 sq. ft. Theory & Computational Sciences facility to house a system in the 10s of PFlops

Lawrence Berkeley Laboratory Computational Research and Theory Building – LEED Silver*

Research University Data Center Trends

University Benchmarking: Academic Research Computing
- Quality of research computing facilities is increasingly a point of separation for top institutions.
- Existing data centers cannot accommodate the projected high growth rates, especially for power and cooling.
- New researchers often require research computing resources immediately. (ND Engineering college stated 50% of their new hires require new research computing resources.)
- Top researchers also bring funding opportunities if computing facilities are available.

University Benchmarking: University Conceptual Scenario 1
Administrative and other non-HPC (~100 Watts/sq ft)
- Usually combined into a single data center
- Administrative space utilizes more traditional corporate enterprise computing reliability model
- Slower growth rates

University Benchmarking: University Conceptual Scenario 2
HPC for research only use (> 200 Watts/sq ft)
- Separate purpose-built HPC data center facility only
- Very high power and cooling density with lower reliability goals
- Usually associated with a specific NSF grant (e.g., Track 1 and Track 2 grants)

University Benchmarking: University Conceptual Scenario 3
Combined general University and HPC facility (~100 to 200+ Watts/sq ft)
- Usually combined into a single data center
- Typically a blended reliability/power density infrastructure addressing both general university and HPC needs
- More cost-effective versus stand-alone facilities
- Requires separation of general university and research raised floor areas for security, accessibility and reliability

University Trends Matrix (All AAU Member Institutions)

| Institution | Administrative (ft²) | Research (ft²) | Power (watts/ft²) | Redundancy |
|---|---|---|---|---|
| UIUC (Planned) | 13,200 | 7,500 | 130 | N+1/N |
| Indiana University | 10,000 | 20,000 | 166 | Tier 3/Tier 2 HPC Standalone |
| University of Chicago | 6,000 | 3,200 | Unknown | Tier 2/Tier 1 |
| University of Texas | 15,000 | 10,000 | 125 | Tier 3/Tier 1 Regional DC |
| University of Minnesota | 6,000 | 4,000 | 150-200 | Tier 3/Tier 1 |
| Stanford | 17,000 | 33,000 | Unknown | Unknown |
| Cornell | 8,500 | 20,000 | Unknown | Tier 3 |
| Princeton | 5,000 | 10,000 | Unknown | Unknown |
| University of Washington | 6,000 | 12,000 | 150 | Tier 2/Tier 1 or NT |

Holistic View of Data Center Design

The Growth of Power Consumption
[Chart, annotated "This is where we are now". Source: IBM Corporation]

Four Tier System

Tier 1 – Basic Non-Redundant Data Center
- Single path for power and cooling distribution without redundant components

Tier 2 – Basic Redundant Data Center
- Single path for power and cooling distribution with redundant components

Tier 3 –
Concurrently Maintainable Data Center
- Multiple paths for power and cooling distribution with only one path active and with redundant components

Tier 4 – Fault Tolerant Data Center
- Multiple active power and cooling distribution paths with redundant components and fault tolerant

Site Availability

| | Tier 1 | Tier 2 | Tier 3 | Tier 4 |
|---|---|---|---|---|
| Site availability | 99.67% | 99.75% | 99.98% | 99.99% |
| Outage over 5 years | 144 hours | 110 hours | 8 hours | 4 hours |

Sample - Rough Order of Magnitude Cost Estimate*

| Infrastructure concept | Tier 1 | Tier 2 | Tier 3 |
|---|---|---|---|
| Day 1: 7,500 ft² with 2,500 ft² shell, 80 watts/ft², 600 kW total | $8,200,000 | $8,800,000 | $14,200,000 |
| Ultimate build-out: 10,000 ft², 120 watts/ft², 1,200 kW total | $14,200,000 | $15,400,000 | $26,200,000 |

\* Utilizing the Uptime Institute data center cost estimation methodology
\* Actual data center cost may vary widely (more than 20%) based on final design

Public Law 109-431
To study and promote the use of energy-efficient computer servers in the United States

Questions/Discussion
- Michael Thomas, 312.846.8515, mthomas@eypmcf.com
- Bill Kosik, PE, CEM, LEED AP, 312.846.8510, wkosik@eypmcf.com
- Tom Kutz, 312.846.8546, tkutz@eypmcf.com
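
The outage hours in the Site Availability figures and the kW totals in the sample cost estimate follow from simple arithmetic. The sketch below is a minimal illustration, assuming 8,760-hour years; the function names and that constant are assumptions made here, not part of the presentation. Its results land close to the rounded slide values rather than matching them digit for digit.

```python
# Assumption: a year is 365 days (8,760 hours); leap days are ignored.
HOURS_PER_YEAR = 8760


def outage_hours(availability_pct: float, years: float = 5) -> float:
    """Expected downtime in hours over `years` for a site availability in percent."""
    unavailable_fraction = 1 - availability_pct / 100
    return unavailable_fraction * HOURS_PER_YEAR * years


def total_load_kw(area_sqft: float, watts_per_sqft: float) -> float:
    """Total critical load in kW for a raised-floor area at a given power density."""
    return area_sqft * watts_per_sqft / 1000


# Availability percentages as quoted on the Site Availability slide.
tier_availability = {"Tier 1": 99.67, "Tier 2": 99.75, "Tier 3": 99.98, "Tier 4": 99.99}

for tier, pct in tier_availability.items():
    print(f"{tier}: {outage_hours(pct):.1f} hours of outage over 5 years")

# Day 1 and ultimate build-out loads from the sample cost estimate.
print(f"Day 1 load: {total_load_kw(7500, 80):.0f} kW")
print(f"Ultimate build-out load: {total_load_kw(10000, 120):.0f} kW")
```

The same two functions can be reused to test other scenarios, for example a different availability target or a higher watts/ft² density for an HPC room.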