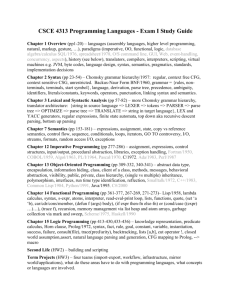


Chapter 4

Lexical and Syntax Analysis

Chapter 4 Topics

4.1 Introduction

4.2 Lexical Analysis

4.3 The Parsing Problem

4.4 Recursive-Descent Parsing

4.5 Bottom-Up Parsing

4.1 Introduction

• Language implementation systems must analyze source code, regardless of the specific implementation approach

• Nearly all syntax analysis is based on a formal description of the syntax of the source language (BNF)

Syntax Analysis

• The syntax analysis portion of a language processor nearly always consists of two parts:

  – A low-level part called a lexical analyzer (mathematically, a finite automaton based on a regular grammar)

  – A high-level part called a syntax analyzer, or parser (mathematically, a push-down automaton based on a context-free grammar, or BNF)

Advantages of Using BNF to Describe Syntax

• BNF provides a clear and concise syntax description, both for humans and for the software systems that use it.

• A BNF description can be used as the direct basis for the syntax analyzer.

• Implementations based on BNF are relatively easy to maintain because of their modularity.

Reasons to Separate Lexical and Syntax Analysis

• Simplicity - less complex approaches can be used for lexical analysis; separating the two simplifies the parser

• Efficiency - separation allows optimization of the lexical analyzer

• Portability - parts of the lexical analyzer may not be portable, but the parser is always portable

4.2 Lexical Analysis

• A lexical analyzer is a pattern matcher for character strings.

• A lexical analyzer is a “front-end” for the parser. It is part of the syntax analyzer and performs syntax analysis at the lowest level of program structure.

• An input program appears to a compiler as a single string of characters. The lexical analyzer collects characters into logical groupings and assigns internal codes to the groupings.

• Logical groupings are named lexemes. Internal codes for categories of these groupings are named tokens.

Example: result = oldsum - value / 100;

Token        Lexeme
IDENT        result
ASSIGN_OP    =
IDENT        oldsum
SUB_OP       -
IDENT        value
DIV_OP       /
INT_LIT      100
SEMICOLON    ;

Lexical Analysis (continued)

• The lexical analyzer is usually a function that is called by the parser when it needs the next token.

• Three approaches to building a lexical analyzer:

  – Write a formal description of the tokens and use a software tool that constructs table-driven lexical analyzers given such a description

  – Design a state diagram that describes the tokens and write a program that implements the state diagram

  – Design a state diagram that describes the tokens and hand-construct a table-driven implementation of the state diagram

Lexical Analysis (cont’d): State Diagram Design

– A naïve state diagram would have a transition from every state on every character in the source language - such a diagram would be very large!

Lexical Analysis (cont.)

• In many cases, transitions can be combined to simplify the state diagram

  – When recognizing an identifier, all uppercase and lowercase letters are equivalent

    • Use a character class that includes all 52 letters

  – When recognizing an integer literal, all digits are equivalent - use a digit class that includes all 10 digits
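The character-class idea can be sketched in C as follows. This is a minimal illustration, not the code from front.c: the enum names and the function name `classify` are assumptions, and the standard `<ctype.h>` tests stand in for the letter and digit classes (in the default "C" locale, `isalpha` accepts exactly the 52 letters).

```c
#include <ctype.h>

/* Character classes; the names are illustrative assumptions. */
enum CharClass { LETTER, DIGIT, OTHER, END_OF_INPUT };

/* Map one input character to its class, so the state diagram needs
   one transition per class instead of one per character. */
enum CharClass classify(int ch) {
    if (ch == '\0') return END_OF_INPUT;
    if (isalpha((unsigned char)ch)) return LETTER;  /* all 52 letters */
    if (isdigit((unsigned char)ch)) return DIGIT;   /* all 10 digits */
    return OTHER;                                   /* operators, parentheses, ... */
}
```

With this mapping, a state that recognizes identifiers needs a single LETTER transition rather than 52 separate ones.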


Lexical Analysis (cont.)

• Reserved words and identifiers can be recognized together (rather than having a part of the diagram for each reserved word)

  – Use a table lookup to determine whether a possible identifier is in fact a reserved word
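A table lookup of this kind might look like the sketch below. The reserved words chosen, their codes, and the value of `IDENT` are all assumptions made for illustration; a real language would supply its own table.

```c
#include <string.h>

/* Token codes; all values here are assumed for illustration. */
#define IDENT      11
#define FOR_CODE   30
#define IF_CODE    31
#define ELSE_CODE  32
#define WHILE_CODE 33

struct Reserved { const char *word; int code; };

/* Hypothetical reserved-word table. */
static const struct Reserved reserved[] = {
    { "for", FOR_CODE },   { "if", IF_CODE },
    { "else", ELSE_CODE }, { "while", WHILE_CODE },
};

/* Return the reserved-word code for lexeme, or IDENT if the lexeme
   is an ordinary identifier. */
int lookup(const char *lexeme) {
    size_t n = sizeof reserved / sizeof reserved[0];
    for (size_t i = 0; i < n; i++)
        if (strcmp(lexeme, reserved[i].word) == 0)
            return reserved[i].code;
    return IDENT;
}
```

The state diagram recognizes every name the same way; only after the whole lexeme has been collected does `lookup` decide between a reserved word and an identifier.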


Lexical Analysis (cont.)

• Convenient utility subprograms:

  – getChar - gets the next character of input, puts it in nextChar, determines its class and puts the class in charClass

  – addChar - puts the character from nextChar into the place the lexeme is being accumulated, lexeme

  – lookup - determines whether the string in lexeme is a reserved word (returns a code)

State Diagram for Recognizing Arithmetic Expressions

Lexical Analyzer Implementation:

SHOW front.c (pp. 176-181)

- Following is the output of the lexical analyzer of front.c when used on (sum + 47) / total

Next token is: 25 Next lexeme is (
Next token is: 11 Next lexeme is sum
Next token is: 21 Next lexeme is +
Next token is: 10 Next lexeme is 47
Next token is: 26 Next lexeme is )
Next token is: 24 Next lexeme is /
Next token is: 11 Next lexeme is total
Next token is: -1 Next lexeme is EOF
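For readers without the listing at hand, the following is a condensed sketch of a lexical analyzer that yields the same token codes for this input. It is not front.c: it reads from a string rather than a file, and `setInput`, `lookupOp`, `END_TOKEN`, and `UNKNOWN` are hypothetical names. The codes 10, 11, 21, 24, 25, 26, and -1 are taken from the output shown; 22 and 23 are assumed values for the operators that do not appear in it.

```c
#include <assert.h>
#include <ctype.h>
#include <stdio.h>

/* Token codes (22, 23, and 99 are assumptions; see the text above). */
#define INT_LIT     10
#define IDENT       11
#define ADD_OP      21
#define SUB_OP      22
#define MULT_OP     23
#define DIV_OP      24
#define LEFT_PAREN  25
#define RIGHT_PAREN 26
#define END_TOKEN   (-1)
#define UNKNOWN     99   /* hypothetical code for unrecognized characters */

#define MAX_LEXEME 100

static const char *input;        /* remaining source text */
static char lexeme[MAX_LEXEME];  /* lexeme being accumulated */
static int lexLen;
static int nextToken;

/* addChar: append one character to lexeme. */
static void addChar(char c) {
    assert(lexLen < MAX_LEXEME - 1);   /* lexeme must fit in the buffer */
    lexeme[lexLen++] = c;
    lexeme[lexLen] = '\0';
}

/* lookupOp: token code for a single-character operator or parenthesis. */
static int lookupOp(char c) {
    switch (c) {
    case '(': return LEFT_PAREN;
    case ')': return RIGHT_PAREN;
    case '+': return ADD_OP;
    case '-': return SUB_OP;
    case '*': return MULT_OP;
    case '/': return DIV_OP;
    default:  return UNKNOWN;
    }
}

/* setInput: choose the string to be analyzed. */
void setInput(const char *src) { input = src; }

/* lex: skip blanks, collect the next lexeme, and return its token code. */
int lex(void) {
    lexLen = 0;
    lexeme[0] = '\0';
    while (isspace((unsigned char)*input)) input++;

    if (*input == '\0') {                         /* end of input */
        addChar('E'); addChar('O'); addChar('F');
        nextToken = END_TOKEN;
    } else if (isalpha((unsigned char)*input)) {  /* identifier */
        while (isalnum((unsigned char)*input)) addChar(*input++);
        nextToken = IDENT;
    } else if (isdigit((unsigned char)*input)) {  /* integer literal */
        while (isdigit((unsigned char)*input)) addChar(*input++);
        nextToken = INT_LIT;
    } else {                                      /* operator or parenthesis */
        nextToken = lookupOp(*input);
        addChar(*input++);
    }
    printf("Next token is: %d Next lexeme is %s\n", nextToken, lexeme);
    return nextToken;
}
```

Calling `setInput("(sum + 47) / total")` and then `lex()` repeatedly until it returns `END_TOKEN` walks through the eight tokens in order.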


4.3 The Parsing Problem

• Goals of the parser, given an input program:

  – Find all syntax errors; for each, produce an appropriate diagnostic message and recover quickly

  – Produce the parse tree, or at least a trace of the parse tree, for the program

4.3.1 Introduction to Parsing

• Two categories of parsers

  – Top down - produce the parse tree, beginning at the root

    • Order is that of a leftmost derivation

    • Traces or builds the parse tree in preorder

  – Bottom up - produce the parse tree, beginning at the leaves

    • Order is that of the reverse of a rightmost derivation

• Useful parsers look only one token ahead in the input

A set of notational conventions for grammar symbols

1. Terminals: lowercase letters at the beginning of the alphabet (a, b, …)

2. Nonterminals: uppercase letters at the beginning of the alphabet (A, B, …)

3. Terminals or nonterminals: uppercase letters at the end of the alphabet (W, X, Y, Z)

4. Strings of terminals: lowercase letters at the end of the alphabet (w, x, y, z)

5. Mixed strings (terminals and/or nonterminals): lowercase Greek letters (α, β, δ, γ)

4.3.2 Top-Down Parsers

• Top-down parsing is a strategy that starts at the highest level of the parse tree and works down the tree by applying the rewriting rules of a formal grammar.

• For example, if the current sentential form is

  xAα

  and the A-rules are A → bB, A → cBb, and A → a, a top-down parser must choose among these three rules to get the next sentential form, which could be xbBα, xcBbα, or xaα

Top-Down Parsers (cont’d)

• The most common top-down parsing algorithms:

  – Recursive-descent parser - a coded implementation based directly on the BNF description of the syntax of the language.

  – The most common alternative to recursive descent is to use a parsing table to implement the BNF rules.

• Both are LL algorithms (left-to-right scan, leftmost derivation)

4.3.3 Bottom-Up Parsers

• A bottom-up parser constructs a parse tree by beginning at the leaves and progressing toward the root.

• In terms of derivations, it can be described as follows: given a right sentential form, α, determine what substring of α is the right-hand side of the rule in the grammar that must be reduced to produce the previous sentential form in the rightmost derivation

• The most common bottom-up parsing algorithms are in the LR family (left-to-right scan, rightmost derivation), such as LR(0), LALR, and canonical LR (LR(1)) parsers

4.3.4 The Complexity of Parsing

• Parsers that work for any unambiguous grammar are complex and inefficient (O(n³), where n is the length of the input)

• All algorithms used for the syntax analyzers of commercial compilers have complexity O(n).

4.4 Recursive-Descent Parsing

4.4.1 Recursive-Descent Parsing Process

• A recursive-descent parser is so named because it consists of a collection of subprograms, many of which are recursive, and it produces a parse tree in top-down order.

• EBNF is ideally suited to being the basis for a recursive-descent parser, because EBNF minimizes the number of nonterminals

Recursive-Descent Parsing (cont.)

• A grammar for simple arithmetic expressions:

<expr> → <term> {(+ | -) <term>}
<term> → <factor> {(* | /) <factor>}
<factor> → id | int_constant | ( <expr> )

Note: In EBNF, additional metacharacters

– { } for a series of zero or more

– ( ) for a list, must pick one

– [ ] for an optional list; pick none or one

Recursive-Descent Parsing (cont.)

• Assume we have a lexical analyzer named lex, which puts the next token code in nextToken

• The coding process:

  – For each terminal symbol in the RHS, compare it with nextToken. If they match, continue; otherwise there is a syntax error

  – For each nonterminal symbol in the RHS, call its associated parsing subprogram

Recursive-Descent Parsing (cont.)

/* Function expr
   Parses strings in the language generated by the rule:
   <expr> → <term> {(+ | -) <term>}
*/
void expr() {
    /* Parse the first term */
    term();
    /* As long as the next token is + or -, call lex to get
       the next token and parse the next term */
    while (nextToken == ADD_OP || nextToken == SUB_OP) {
        lex();
        term();
    }
}

Recursive-Descent Parsing (cont.)

• This particular routine does not detect errors

• Convention: Every parsing routine leaves the next token in nextToken

Recursive-Descent Parsing (cont.)

• A nonterminal that has more than one RHS requires an initial process to determine which RHS it is to parse.

  – The correct RHS is chosen on the basis of the next token of input (the lookahead)

  – The next token is compared with the first token that can be generated by each RHS until a match is found

  – If no match is found, it is a syntax error

Recursive-Descent Parsing (cont.)

/* Function term
   Parses strings in the language generated by the rule:
   <term> -> <factor> {(* | /) <factor>}
*/
void term() {
    printf("Enter <term>\n");
    /* Parse the first factor */
    factor();
    /* As long as the next token is * or /, call lex to get
       the next token and parse the next factor */
    while (nextToken == MULT_OP || nextToken == DIV_OP) {
        lex();
        factor();
    }
    printf("Exit <term>\n");
} /* End of function term */

Recursive-Descent Parsing (cont.)

/* Function factor
   Parses strings in the language generated by the rule:
   <factor> -> id | int_constant | (<expr>)
*/
void factor() {
    /* Determine which RHS */
    if (nextToken == ID_CODE || nextToken == INT_CODE)
        /* For the RHS id or int_constant, just call lex */
        lex();
    /* If the RHS is (<expr>), call lex to pass over the left
       parenthesis, call expr, and check for the right parenthesis */
    else if (nextToken == LP_CODE) {
        lex();
        expr();
        if (nextToken == RP_CODE)
            lex();
        else
            error();
    } /* End of else if (nextToken == ... */
    else
        error(); /* No RHS matches */
}
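The three routines can be combined into a small self-contained sketch. To keep it short, it makes some assumptions that differ from the slides: the lexical analyzer is replaced by a stand-in `lex` that steps through an array of token codes, `error` only counts errors, the token names follow the lexer output rather than ID_CODE/LP_CODE, and `parse` is a hypothetical driver that also rejects leftover input.

```c
#include <stddef.h>

/* Token codes: values follow the lexer output shown earlier where
   available; SUB_OP and MULT_OP are assumed. */
enum { INT_LIT = 10, IDENT = 11, ADD_OP = 21, SUB_OP = 22, MULT_OP = 23,
       DIV_OP = 24, LEFT_PAREN = 25, RIGHT_PAREN = 26, END_TOKEN = -1 };

static const int *tokens;  /* token stream, terminated by END_TOKEN */
static size_t pos;
static int nextToken;
static int errors;         /* number of syntax errors seen */

/* Stand-in for the lexical analyzer: advance to the next token code. */
static void lex(void) {
    if (tokens[pos] != END_TOKEN) pos++;
    nextToken = tokens[pos];
}

static void error(void) { errors++; }

static void expr(void);

/* <factor> -> id | int_constant | ( <expr> ) */
static void factor(void) {
    if (nextToken == IDENT || nextToken == INT_LIT) {
        lex();
    } else if (nextToken == LEFT_PAREN) {
        lex();
        expr();
        if (nextToken == RIGHT_PAREN) lex();
        else error();
    } else {
        error();
    }
}

/* <term> -> <factor> {(* | /) <factor>} */
static void term(void) {
    factor();
    while (nextToken == MULT_OP || nextToken == DIV_OP) {
        lex();
        factor();
    }
}

/* <expr> -> <term> {(+ | -) <term>} */
static void expr(void) {
    term();
    while (nextToken == ADD_OP || nextToken == SUB_OP) {
        lex();
        term();
    }
}

/* Parse a whole token stream; returns 1 if it is a valid expression. */
int parse(const int *toks) {
    tokens = toks;
    pos = 0;
    errors = 0;
    nextToken = tokens[0];
    expr();
    if (nextToken != END_TOKEN) error();  /* trailing input */
    return errors == 0;
}
```

Each routine follows the convention that it leaves the next unconsumed token in `nextToken`. That convention is what lets `factor` test for the right parenthesis immediately after `expr` returns.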


Recursive-Descent Parsing (cont.)

- Trace of the lexical and syntax analyzers on (sum + 47) / total

Next token is: 25 Next lexeme is (
Enter <expr>
Enter <term>
Enter <factor>
Next token is: 11 Next lexeme is sum
Enter <expr>
Enter <term>
Enter <factor>
Next token is: 21 Next lexeme is +
Exit <factor>
Exit <term>
Next token is: 10 Next lexeme is 47
Enter <term>
Enter <factor>
Next token is: 26 Next lexeme is )
Exit <factor>
Exit <term>
Exit <expr>
Next token is: 24 Next lexeme is /
Exit <factor>
Next token is: 11 Next lexeme is total
Enter <factor>
Next token is: -1 Next lexeme is EOF
Exit <factor>
Exit <term>
Exit <expr>

Parse tree for (sum + 47) / total:

<expr>
  <term>
    <factor>
      (
      <expr>
        <term>
          <factor>
            <id>
              sum
        +
        <term>
          <factor>
            int_constant
              47
      )
    /
    <factor>
      <id>
        total

<expr> → <term> {(+ | -) <term>}
<term> → <factor> {(* | /) <factor>}
<factor> → <id> | int_constant | ( <expr> )

4.4.2 The LL Grammar Class

The Left Recursion Problem

• If a grammar has left recursion, either direct or indirect, it causes a catastrophic problem for LL parsers: the subprogram for the left-recursive nonterminal calls itself again before consuming any input, so the recursion never ends.

  e.g., A → A + B

• A grammar can be modified to remove direct left recursion. For each nonterminal, A,

  1. Group the A-rules as A → Aα1 | … | Aαm | β1 | β2 | … | βn, where none of the β’s begins with A

  2. Replace the original A-rules with

     A → β1A’ | β2A’ | … | βnA’

     A’ → α1A’ | α2A’ | … | αmA’ | ε

  (Note: ε specifies the empty string)

• Example Grammar with Left Recursion (Grammar 1)

E → E + T | T
T → T * F | F
F → (E) | id

• Complete Replacement Grammar (Grammar 2)

E → T E’
E’ → + T E’ | ε
T → F T’
T’ → * F T’ | ε
F → (E) | id

E-rules: α1 = +T, β1 = T (m = 1, n = 1)

T-rules: α1 = *F, β1 = F (m = 1, n = 1)

Grammar 2 generates the same language as Grammar 1, but it is not left recursive.
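Because Grammar 2 has no left recursion, each of its rules can be turned directly into a subprogram. The sketch below is one way to do that, under simplifying assumptions: the input is a string of single-character tokens in which 'i' stands for id, and the names `accepts`, `Eprime`, and `Tprime` are hypothetical.

```c
/* Input is a string of single-character tokens: 'i' stands for id, and
   '+', '*', '(', ')' stand for themselves (an assumption that keeps the
   sketch free of a separate lexer). */
static const char *p;  /* next unread token */
static int ok;         /* cleared on the first syntax error */

static void E(void);

/* F -> ( E ) | id */
static void F(void) {
    if (*p == 'i') {
        p++;
    } else if (*p == '(') {
        p++;
        E();
        if (*p == ')') p++;
        else ok = 0;
    } else {
        ok = 0;
    }
}

/* T' -> * F T' | ε */
static void Tprime(void) {
    if (*p == '*') { p++; F(); Tprime(); }
    /* otherwise choose the ε alternative and consume nothing */
}

/* T -> F T' */
static void T(void) { F(); Tprime(); }

/* E' -> + T E' | ε */
static void Eprime(void) {
    if (*p == '+') { p++; T(); Eprime(); }
    /* otherwise choose the ε alternative and consume nothing */
}

/* E -> T E' */
static void E(void) { T(); Eprime(); }

/* Returns 1 if s is a sentence of Grammar 2, 0 otherwise. */
int accepts(const char *s) {
    p = s;
    ok = 1;
    E();
    return ok && *p == '\0';
}
```

Every recursive call in this sketch happens only after a token has been consumed, or on a path that must consume one next, so the parser always terminates. A routine written the same way for the Grammar 1 rule E → E + T would call `E` as its very first action and never return.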


• Indirect Left Recursion Problem

  e.g., A → B a A
        B → A b

An algorithm for removing indirect left recursion is given in Aho et al. (2006).

Left Recursion Problem (cont’d)

Two issues determine whether a grammar is suitable for top-down parsing:

• Left recursion disallows top-down parsing;

• The parser must always be able to choose the correct RHS on the basis of the next token of input, using only the first token generated by the leftmost nonterminal in the current sentential form.

Solution for the second issue: conduct a Pairwise Disjointness Test

• Pairwise Disjointness Test: a test, applied to a non-left-recursive grammar, of whether the correct RHS can always be chosen in this way.

Pairwise disjointness test

• Define: FIRST(α) = { a | α =>* aβ } (If α =>* ε, ε is in FIRST(α))

• For each nonterminal, A, that has more than one RHS, for each pair of rules, A → αi and A → αj, if FIRST(αi) ∩ FIRST(αj) = ∅, this pair passes the test; otherwise it fails the test.

Pairwise disjointness test

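Example: Perform the pairwise disjointness test for the following rules:

A → aB | bAb | Bb
B → cB | d

Sol: FIRST(aB) = {a}, FIRST(bAb) = {b}, FIRST(Bb) = {c, d}

FIRST(aB) ∩ FIRST(bAb) = ∅
FIRST(aB) ∩ FIRST(Bb) = ∅
FIRST(bAb) ∩ FIRST(Bb) = ∅

All three pairs of A-rules pass the test. The B-rules pass as well, since FIRST(cB) = {c} and FIRST(d) = {d}.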
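Once the FIRST sets are known, the test itself is mechanical. The sketch below assumes the FIRST sets have already been worked out by hand and that every terminal is a lowercase letter; `TermSet`, `set_of`, and `pairwise_disjoint` are hypothetical names, and the ε case is not handled.

```c
#include <stddef.h>

/* A FIRST set over the terminals 'a'..'z', stored as a bitmask
   (bit 0 = 'a', bit 1 = 'b', ...). */
typedef unsigned long TermSet;

/* Build a set from a string of lowercase terminals, e.g. "cd" -> {c, d}. */
TermSet set_of(const char *terminals) {
    TermSet s = 0;
    for (; *terminals; terminals++)
        s |= 1UL << (*terminals - 'a');
    return s;
}

/* Return 1 if every pair of FIRST sets is disjoint, 0 otherwise. */
int pairwise_disjoint(const TermSet *first, size_t n) {
    for (size_t i = 0; i < n; i++)
        for (size_t j = i + 1; j < n; j++)
            if (first[i] & first[j])
                return 0;  /* a shared terminal: one token cannot pick the RHS */
    return 1;
}
```

For the A-rules in the example, the three sets would be built as `set_of("a")`, `set_of("b")`, and `set_of("cd")`. A pair such as {a} and {a, b} shares the terminal a and therefore fails.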
