CS-605 Advanced Computer Architecture (ACA)

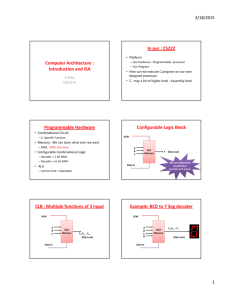

GEC Group Of College
Advanced Computer Architecture CS-605
By: Asst. Prof. Anchal Bhatt, CS Dept (GIIT)

UNIT-1

Flynn Classification: Flynn's taxonomy is a classification of computer architectures proposed by Michael J. Flynn in 1966. It classifies machines by the number of concurrent instruction streams and data streams they handle. The classification has endured and is still used as a tool in the design of modern processors and their functionality. Since the rise of multiprocessing CPUs, a multiprogramming context has evolved as an extension of the classification system.

1. Single Instruction Single Data (SISD): A single instruction stream operates on a single data stream in sequential order. Conventional uniprocessor computers are based on this architecture, and the von Neumann machine fits into this category. Data are processed sequentially (one item at a time).
Fig: SISD

2. Single Instruction Multiple Data (SIMD): A single instruction is performed on multiple data items at the same time. A loop that applies the same operation to every element of an array illustrates the idea: the instruction is the same, but each processing element works on a different data element.
Fig: SIMD

3. Multiple Instruction Single Data (MISD): N processors execute different instruction streams on the same data stream. Few, if any, commercial computers of this kind have been built; fault-tolerant systems such as the Space Shuttle flight-control computers, which run redundant computations on the same data, are sometimes cited as an example.
Fig: MISD

4. Multiple Instruction Multiple Data (MIMD): N processors each execute their own instruction stream on their own data stream, interacting through shared memory or an interconnection network. A group of interconnected computers cooperating on a common task is an example. If the degree of interaction among the processors is high, the system is called tightly coupled; if it is low, it is called loosely coupled. Most multiprocessors and multicomputers fit into this category.
Fig: MIMD

Terms to measure the performance of a computer:
1.
Clock Rate & CPI (Cycles Per Instruction): The CPU of a modern computer is driven by a clock with a constant cycle time $\tau$ (in nanoseconds). The clock rate $f = 1/\tau$ (usually quoted in megahertz or gigahertz) is the inverse of the cycle time. The size of a program is measured by its instruction count ($I_c$). Different machine instructions may need different numbers of clock cycles to execute, so the CPI (the average number of clock cycles per instruction) is an important parameter for finding the time required to run each instruction.

2. MIPS (Million Instructions Per Second): Let $C$ be the total number of clock cycles required to run a program. The CPU time is then

$$T = C \times \tau = \frac{C}{f}$$

and, since $CPI = C / I_c$,

$$T = I_c \times CPI \times \tau = \frac{I_c \times CPI}{f}$$

CPU speed is often measured in MIPS; this is called the MIPS rate of a given processor:

$$\text{MIPS rate} = \frac{I_c}{T \times 10^6} = \frac{f}{CPI \times 10^6} = \frac{f \times I_c}{C \times 10^6}$$

where $I_c$ is the instruction count and $f$ is the clock rate.

3. Throughput Rate: The system throughput $W_s$ (in programs/second) measures how many programs a system can execute per unit time. In a multiprogrammed system, the system throughput is usually lower than the CPU throughput $W_p$, defined as

$$W_p = \frac{f}{I_c \times CPI}$$

4. Performance Factors: Let $I_c$ be the number of instructions in a given program (the instruction count). The CPU time $T$ (in seconds/program) needed to execute the program can be estimated as the product of three contributing factors:

$$T = I_c \times CPI \times \tau$$

Parallel Computer Models:
1. Multiprocessors: Multiprocessing is the use of two or more central processing units (CPUs) within a single computer system. The term also refers to the ability of a system to support more than one processor and/or to allocate tasks between them. There are many variations on this basic theme, and the definition of multiprocessing can vary with context, mostly as a function of how CPUs are defined.

2. Multicomputers: Multicomputers have unshared, distributed memory. The system is composed of multiple computers, known as nodes, interconnected by a message-passing network.
Each node is an autonomous computer consisting of a processor, local memory and sometimes attached disks or I/O peripherals. The message-passing network provides point-to-point static connections among the nodes. All local memories are private and are accessible only by their local processors. For this reason, traditional multicomputers have been referred to as no-remote-memory-access (NORMA) machines.

Shared-Memory Multiprocessors:
1. UMA (Uniform Memory Access): The physical memory is uniformly shared by all the processors. All processors have equal access time to all memory words, which is why the model is called UMA. Each processor may use a private cache, and peripherals are also shared in some manner. Because of the high degree of resource sharing, multiprocessors are known as "tightly coupled systems". When all processors have equal access to all peripheral devices, the system is called a "symmetric multiprocessor". When only one processor or a subset of processors is executive-capable, the system is called an "asymmetric multiprocessor". The remaining processors, which have no I/O capability, are called "attached processors (APs)".

2. NUMA (Non-Uniform Memory Access): Non-uniform memory access (NUMA) is a computer memory design used in multiprocessing, where the memory access time depends on the memory location relative to the processor. Under NUMA, a processor can access its own local memory faster than non-local memory (memory local to another processor or memory shared between processors). The benefits of NUMA are limited to particular workloads, notably on servers where the data are often strongly associated with certain tasks or users. NUMA architectures logically follow in scaling from symmetric multiprocessing (SMP) architectures. They were developed commercially during the 1990s.
3. COMA (Cache-Only Memory Architecture): Cache-only memory architecture (COMA) is a computer memory organization for multiprocessors in which the local memories (typically DRAM) at each node are used as cache. This is in contrast to using the local memories as actual main memory, as in NUMA organizations. In NUMA, each address in the global address space is typically assigned a fixed home node. When processors access some data, a copy is made in their local cache, but space remains allocated in the home node. With COMA, by contrast, there is no home. An access from a remote node may cause the data to migrate. Compared to NUMA, this reduces the number of redundant copies and may allow more efficient use of the memory resources. On the other hand, it raises the problems of how to find a particular piece of data (there is no longer a home node) and what to do if a local memory fills up (migrating some data into the local memory then requires evicting other data, which has no home to go to). Hardware memory coherence mechanisms are typically used to implement the migration.
Fig: COMA

Data Dependence: Data dependence indicates the ordering relationship between statements.

(a) Flow Dependence: A statement S2 is flow-dependent on S1 if and only if S1 modifies a resource that S2 reads and S1 precedes S2 in execution. The following is an example of a flow dependence (RAW: read after write):
S1: x := 10
S2: y := x + c

(b) Antidependence: A statement S2 is antidependent on S1 if and only if S2 modifies a resource that S1 reads and S1 precedes S2 in execution. The following is an example of an antidependence (WAR: write after read):
S1: x := y + c
S2: y := 10
Here, S2 sets the value of y, but S1 reads a prior value of y.

(c) Output Dependence: A statement S2 is output-dependent on S1 if and only if S1 and S2 modify the same resource and S1 precedes S2 in execution.
The following is an example of an output dependence (WAW: write after write):
S1: x := 10
S2: x := 20
Here, S2 and S1 both set the variable x.

(d) Input Dependence: A statement S2 is input-dependent on S1 if and only if S1 and S2 read the same resource and S1 precedes S2 in execution. The following is an example of an input dependence (RAR: read after read):
S1: y := x + 3
S2: z := x + 5
Here, S2 and S1 both access the variable x. Unlike the three dependences above, this dependence does not prohibit reordering.

Control Dependence: Control dependence is a situation in which a program instruction executes only if a previous instruction evaluates in a way that allows its execution. A statement S2 is control-dependent on S1 if and only if S2's execution is conditionally guarded by S1. The following is an example of such a control dependence:
S1: if x > 2 go to L1
S2: y := 3
S3: L1: z := y + 1
Here, S2 runs only if the predicate in S1 is false.

Resource Dependence: Resource dependence arises from conflicts in using shared resources, such as integer units, floating-point units, registers and memory areas, among parallel events. If the conflicting resource is an ALU, it is called ALU dependence. When the conflicts involve workplace storage, it is called storage dependence. In the storage-dependence case, each task must work on independent storage locations or use protected access (such as locks or monitors) to shared writable data.

Hardware Parallelism: Hardware parallelism is defined by the machine architecture and hardware multiplicity. It is a function of cost and performance trade-offs. It displays the resource utilization patterns of simultaneously executable operations and can also indicate the peak performance of the processor. One way to characterize the parallelism in a processor is by the number of instruction issues per machine cycle. A processor that issues k instructions per machine cycle is called a k-issue processor.
A multiprocessor system built with n k-issue processors should be able to handle a maximum of nk threads of instructions simultaneously.

Software Parallelism: Software parallelism is defined by the control and data dependences of programs. It is a function of the algorithm, programming style and compiler optimization. The degree of parallelism is revealed in the program profile or in the program flow graph. The flow graph displays the patterns of simultaneously executable operations. Parallelism in a program varies during the execution period. Two types of software parallelism are commonly distinguished. The first is control parallelism, which allows two or more operations to be performed simultaneously. The second is data parallelism, in which almost the same operation is performed over many data elements by many processors simultaneously.

Grain Size: A measure of the amount of computation involved in a software process is called the grain size or granularity. Grain size determines the basic program segment chosen for parallel processing.

Latency: A time measure of the communication overhead incurred between machine subsystems is called latency. For instance, the time required by a processor to access memory is called the memory latency. The synchronization latency is the time required for two processes to synchronize with each other.

Control Flow: Control-flow computers use shared memory to hold program instructions and data objects. Variables in the shared memory are updated by many instructions. Because memory is shared, the execution of one instruction may produce side effects on other instructions. In many cases these side effects prevent parallel processing from taking place. In fact, because it uses a control-driven mechanism, a uniprocessor computer is inherently sequential.

Data Flow: In a dataflow computer, the execution of an instruction is driven by data availability instead of being guided by a program counter.
In principle, any instruction should be ready for execution whenever its operands become available. The instructions in a data-driven program are not ordered in any way. Instead of being stored in a shared memory, data are held directly inside instructions.

Static Interconnection & Dynamic Networks: Static networks use direct links, which are fixed once built. This type of network is suitable for building computers where the communication patterns are predictable or can be implemented with static connections. Dynamic networks, by contrast, use configurable paths and do not have a processor associated with each node; processors are connected dynamically through switches. For multipurpose or general-purpose applications, dynamic connections are needed, since they can implement all communication patterns based on program demands.

Bus System: A system bus is a single computer bus that connects the major components of a computer system. The technique was developed to reduce costs and improve modularity. It combines the functions of a data bus to carry information, an address bus to determine where it should be sent, and a control bus to determine its operation. Although the single system bus was popular in the 1970s and 1980s, modern computers use a variety of separate buses adapted to more specific needs.

In the von Neumann architecture, a central control unit and an arithmetic logic unit (ALU, which von Neumann called the central arithmetic part) were combined with computer memory and I/O functions to form a stored-program computer. The report presented a general organization and theoretical model of the computer, but not the implementation of that model.[2] Soon, designs integrated the control unit and ALU into what became known as the CPU.

Computers in the 1950s and 1960s were generally constructed in an ad hoc fashion. For example, the CPU, memory and input/output units were each one or more cabinets connected by cables.
Engineers used the common technique of standardized bundles of wires and extended the concept as backplanes were used to hold printed circuit boards in these early machines. The name "bus" was already used for "bus bars" that carried electrical power to the various parts of electric machines, including early mechanical calculators. The advent of integrated circuits vastly reduced the size of each computer unit, and buses became more standardized. Standard modules could be interconnected in more uniform ways and were easier to develop and maintain.

Internal Bus: The internal bus, also known as the internal data bus, memory bus, system bus or front-side bus, connects all the internal components of a computer, such as the CPU and memory, to the motherboard. Internal data buses are also referred to as local buses, because they are intended to connect local devices. This bus is typically rather fast and is independent of the rest of the computer's operations.

External Bus: The external bus, or expansion bus, is made up of the electronic pathways that connect external devices, such as printers, to the computer.
Fig: Bus-connected multiprocessor system

Crossbar Switch Organization: A crossbar switch is an assembly of individual switches between a set of inputs and a set of outputs, arranged in a matrix. If the crossbar switch has M inputs and N outputs, the matrix has M × N cross-points, the places where the connections cross. At each cross-point is a switch; when closed, it connects one of the inputs to one of the outputs. A single crossbar is a single-layer, non-blocking switch. Non-blocking means that existing connections do not prevent other free inputs from being connected to other free outputs. Collections of crossbars can be used to implement multi-layer and blocking switches. A crossbar switching system is also called a coordinate switching system.
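The non-blocking property can be illustrated with a small state model. The Python sketch below is a hypothetical teaching aid, not a hardware description: the class name `Crossbar` and its methods are our own, and it ignores timing, arbitration and electrical detail. It keeps one switch per cross-point and refuses a connection only when the requested input or output is already in use, never because of connections between other input/output pairs.

```python
class Crossbar:
    """Hypothetical state model of an M x N crossbar switch.

    closed[i][j] is True when the switch at cross-point (i, j) is closed,
    i.e. input i is currently connected to output j.
    """

    def __init__(self, m_inputs: int, n_outputs: int) -> None:
        self.m = m_inputs
        self.n = n_outputs
        # One switch per (input, output) pair, all initially open.
        self.closed = [[False] * n_outputs for _ in range(m_inputs)]

    def crosspoints(self) -> int:
        """Number of cross-points (switches) in the matrix: M x N."""
        return self.m * self.n

    def input_busy(self, i: int) -> bool:
        return any(self.closed[i])

    def output_busy(self, j: int) -> bool:
        return any(row[j] for row in self.closed)

    def connect(self, i: int, j: int) -> bool:
        """Close cross-point (i, j) if input i and output j are both free.

        Each pair has its own switch, so a free input can always reach a
        free output whatever other connections exist (non-blocking).
        """
        if self.input_busy(i) or self.output_busy(j):
            return False  # endpoint already in use
        self.closed[i][j] = True
        return True

    def disconnect(self, i: int, j: int) -> None:
        """Open cross-point (i, j)."""
        self.closed[i][j] = False


if __name__ == "__main__":
    xb = Crossbar(4, 4)
    print(xb.crosspoints())   # 16 cross-points for a 4 x 4 crossbar
    print(xb.connect(0, 2))   # True
    print(xb.connect(1, 3))   # True: unaffected by the 0 -> 2 connection
    print(xb.connect(2, 2))   # False: output 2 is already taken
```

The price of this flexibility is the M × N switch count, which grows quadratically as inputs and outputs are added.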
The matrix layout of a crossbar switch is also used in some semiconductor devices. Here the "bars" are extremely thin metal "wires", and the "switches" are fusible links. The fuses are blown (opened) using high voltage and read using low voltage. Such devices are called programmable read-only memories (PROMs). Furthermore, matrix arrays are fundamental to modern flat-panel displays. Thin-film-transistor LCDs have a transistor at each cross-point, so they could be considered to include a crossbar switch as part of their structure.

For video switching in home and professional theater applications, a crossbar switch (more commonly called a matrix switch in this application) is used to make the output of multiple video appliances available simultaneously to every monitor or every room throughout a building. In a typical installation, all the video sources are located on an equipment rack and are connected as inputs to the matrix switch. The matrix switch enables the signals to be re-routed at will, allowing the establishment to purchase or rent only the boxes needed to cover the total number of unique programs viewed anywhere in the building. It also makes it easier to control and route sound from any program to the overall speaker/sound system.

Multiport Memory Organization: In a multiport memory, each memory module has several ports, and each processor (or I/O channel) is connected to its own port; control logic inside the module resolves conflicts when several ports request the same module at the same time. One design for program-directed memory access patterns includes a memory system with a memory, a memory controller and a virtual memory management system. The memory includes a plurality of memory devices organized into one or more physical groups accessible via associated buses for transferring data and control information. The memory controller receives and responds to memory access requests that contain application access information to control the access pattern and data organization within the memory. Responding to a memory access request includes accessing one or more memory devices.
The virtual memory management system includes: a plurality of page table entries for mapping virtual memory addresses to real addresses in the memory; a hint state, responsive to application access information, indicating how real memory for the associated pages is to be physically organized within the memory; and a means for conveying the hint state to the memory controller.

Multistage Interconnection Networks: Multistage interconnection networks (MINs) are a class of high-speed computer networks usually composed of processing elements (PEs) on one end of the network and memory elements (MEs) on the other end, connected by switching elements (SEs). The switching elements themselves are usually connected to each other in stages, hence the name. Such networks include omega networks and many other types. MINs are typically used in high-performance or parallel computing as a low-latency interconnection (as opposed to traditional packet-switching networks), though they could be implemented on top of a packet-switching network. Although the network is typically used for routing, it could also be used as a co-processor to the actual processors.

UNIT-2

Instruction Set: An instruction set, or instruction set architecture (ISA), is the part of the computer architecture related to programming, including the native data types, instructions, registers, addressing modes, memory architecture, interrupt and exception handling, and external I/O. An ISA includes a specification of the set of opcodes (machine language) and the native commands implemented by a particular processor. Instruction set architecture is distinguished from the microarchitecture, which is the set of processor design techniques used to implement the instruction set. Computers with different microarchitectures can share a common instruction set.
For example, the Intel Pentium and the AMD Athlon implement nearly identical versions of the x86 instruction set but have radically different internal designs.

Complex Instruction Set: A CISC instruction set typically contains about 120 to 350 instructions using variable instruction/data formats, uses a small set of 8 to 24 general-purpose registers, and executes a large number of memory-reference operations based on more than a dozen addressing modes. In a CISC architecture, many high-level language (HLL) statements are implemented directly in hardware/firmware. This can enhance execution efficiency, simplify compiler development, and permit an extension from scalar instructions to vector and symbolic instructions.

CISC Scalar Processors: A scalar processor executes with scalar data. The simplest scalar processor executes integer instructions using fixed-point operands. A CISC scalar processor, based on a complex instruction set, may be built either with a single chip or with multiple chips mounted on a processor board. In the ideal case, a CISC scalar processor should perform about as well as the base scalar processor.

RISC Sets: Reduced instruction set computing, or RISC (pronounced "risk"), is a CPU design strategy based on the insight that a simplified instruction set (as opposed to a complex set) provides higher performance when combined with a microprocessor architecture capable of executing those instructions using fewer cycles per instruction. A computer based on this strategy is a reduced instruction set computer, also called RISC. The opposing architecture is called complex instruction set computing, i.e. CISC. Various suggestions have been made regarding a precise definition of RISC, but the general concept is that of a system that uses a small, highly optimized set of instructions, rather than the more versatile set of instructions often found in other types of architecture.
Another common trait is that RISC systems use a load/store architecture, in which memory is normally accessed only through specific instructions (loads and stores) rather than as part of other instructions.

RISC Scalar Processors: Generic RISC processors are called scalar RISC because they are designed to issue one instruction per cycle, similar to the base scalar processor. In theory, both RISC and CISC scalar processors should perform about the same if they run with the same clock rate and with equal program length. However, these two assumptions are not always valid, because the architecture affects the quality and density of the code generated by compilers.

Architectural Distinction between RISC & CISC Processors:

| CISC Computer | RISC Computer |
|---|---|
| The acronym is variously used. Read as "CISC computer", it means a computer that has a CISC chip as its CPU. | The acronym is variously used. Read as "RISC computer", it means a computer that has a RISC chip as its CPU. |
| It is also referred to as CISC computing. | It is also referred to as RISC computing. |
| It is sometimes called a CISC "chip". The last two words may look tautological, but the phrase can be read simply as a chip that implements CISC. | It is sometimes called a RISC "chip". The last two words may look tautological, but the phrase can be read simply as a chip that implements RISC. |
| CISC chips have a growing number of components and an ever-increasing instruction set, and so tend to be slower and less powerful at executing "common" instructions. | RISC chips have fewer components and a smaller instruction set, allowing faster execution of "common" instructions. |
| CISC chips typically execute an instruction in two to ten machine cycles. | RISC chips typically execute an instruction in one machine cycle. |
| CISC chips do all of the processing themselves. | RISC chips distribute some of their processing to other chips. |
| CISC chips are more common in computers that have a wider range of instructions to execute. | RISC chips are finding their way into components that need faster processing of a limited number of instructions, such as printers and games machines. |

Fig: Comparison between RISC & CISC

VLIW Processor Architecture: Very long instruction word (VLIW) processors have instruction words with fixed "slots" for operations that map to the available functional units. This makes the instruction issue unit much simpler, but places an enormous burden on the compiler to allocate useful work to every slot of every instruction. VLIW refers to processor architectures designed to take advantage of instruction-level parallelism (ILP). Whereas conventional processors mostly allow programs to specify only instructions that will be executed in sequence, a VLIW processor allows programs to explicitly specify instructions that will be executed at the same time (that is, in parallel). This type of processor architecture is intended to allow higher performance without the inherent complexity of some other approaches.

Pipelining in VLIW Processors: In an ideal VLIW processor, each instruction specifies multiple operations, which proceed through the pipeline together. VLIW machines behave much like superscalar machines. In a VLIW architecture, data movement and instruction parallelism are fully specified at compile time.
Therefore, run-time resource scheduling and synchronization are, in theory, completely eliminated. A VLIW processor can be viewed as an extreme form of superscalar processor in which all unrelated or independent operations are already synchronously compacted together in advance. The CPI of a VLIW processor can be even lower than that of a superscalar processor.

Memory Hierarchy Technology: The term memory hierarchy is used in computer architecture when discussing performance issues in architectural design, algorithm predictions, and lower-level programming constructs such as those involving locality of reference. A memory hierarchy in computer storage distinguishes each level by its response time. Since response time, complexity and capacity are related, the levels may also be distinguished by their controlling technology.

The many trade-offs in designing for high performance include the structure of the memory hierarchy, i.e. the size and technology of each component. The various components can therefore be viewed as forming a hierarchy of memories (M1, M2, ..., Mn) in which each member Mi is, in a sense, subordinate to the next-highest member Mi+1 of the hierarchy. To limit waiting by higher levels, a lower level responds by filling a buffer and then signaling to activate the transfer.

There are four major storage levels:
1. Internal: processor registers and cache.
2. Main: the system RAM and controller cards.
3. On-line mass storage: secondary storage.
4. Off-line bulk storage: tertiary and off-line storage.

This is a general structuring of the memory hierarchy; many other structures are useful. For example, a paging algorithm may be considered as a level for virtual memory when designing a computer architecture.

(a) Registers & Caches: Registers are part of the processor; multi-level caches are built either on the processor chip or on the processor board. The cache is controlled by the MMU and is transparent to the programmer.
Caches can be implemented at one or multiple levels, depending on speed and application requirements.

(b) Main Memory: Main memory is sometimes called the primary memory of a computer system. It is usually much larger than the cache and is often implemented with the most cost-effective RAM chips, such as DDR SDRAMs (double data rate synchronous dynamic RAMs). The main memory is managed by an MMU in cooperation with the operating system.

(c) Disk Drives & Backup Storage: Disk storage is considered the highest level of on-line memory. It holds system programs such as the OS and compilers, as well as user programs and their data sets.

(d) Peripheral Technology: The high demand for multimedia I/O, such as images, speech, video and music, has resulted in further advances in I/O technology.

Inclusion, Coherence & Locality: Information stored in a memory hierarchy (M1, M2, ..., Mn) satisfies three important properties: inclusion, coherence and locality.

(a) Inclusion Property: The inclusion property is stated as

$$M_1 \subset M_2 \subset M_3 \subset \dots \subset M_n$$

The set inclusion relationship implies that all information items are originally stored in the outermost level Mn. During processing, subsets of Mn are copied into Mn-1; similarly, subsets of Mn-1 are copied into Mn-2, and so on. Information transfer between the CPU and the cache is in terms of words (4 or 8 bytes each, depending on the word length of the machine). The cache (M1) is divided into cache blocks, also called cache lines by some authors. Each block is typically 32 bytes (8 words). Blocks are the units of data transfer between the cache and main memory, or between the L1 and L2 caches, and so on.

(b) Coherence Property: In computer science, cache coherence is the consistency of shared resource data that ends up stored in multiple local caches. When clients in a system maintain caches of a common memory resource, problems may arise with inconsistent data. This is particularly true of CPUs in a multiprocessing system.
For example, if one client has a copy of a memory block from a previous read and another client changes that memory block, the first client could be left with an invalid cached copy without any notification of the change. Cache coherence is intended to manage such conflicts and maintain consistency between cache and memory.

(c) Locality of Reference: In computer science, locality of reference, also known as the principle of locality, is the phenomenon of the same value, or related storage locations, being accessed frequently. There are two basic types of reference locality: temporal and spatial locality. Temporal locality refers to the reuse of specific data and/or resources within a relatively short time. Spatial locality refers to the use of data elements within relatively close storage locations. Sequential locality, a special case of spatial locality, occurs when data elements are arranged and accessed linearly, such as when traversing the elements of a one-dimensional array. Locality is merely one type of predictable behaviour that occurs in computer systems. Systems that exhibit strong locality of reference are good candidates for performance optimization through techniques such as caching, instruction prefetching for memory, or advanced branch prediction in the processor pipeline.

Interleaved Memory Organisation: In computing, interleaved memory is a design that compensates for the relatively slow speed of DRAM or core memory by spreading memory addresses evenly across memory banks. That way, contiguous memory reads and writes use each memory bank in turn, resulting in higher memory throughput because less time is spent waiting for memory banks to become ready for the desired operations. It differs from multi-channel memory architectures primarily in that interleaved memory does not add more channels between the main memory and the memory controller.
However, channel interleaving is also possible, for example in Freescale i.MX6 processors, which allow interleaving to be done between two channels. Main memory (random-access memory, RAM) is usually composed of a collection of DRAM chips, and a number of chips can be grouped together to form a memory bank. With a memory controller that supports interleaving, these memory banks can then be laid out so that they are interleaved.

In traditional (flat) layouts, each memory bank is allocated a contiguous block of memory addresses. This is very simple for the memory controller and, in completely random access scenarios, gives the same performance as interleaving. In reality, however, memory reads are rarely random because of locality of reference, and optimizing for close-together accesses gives far better performance in interleaved layouts.

Memory Interleaving: Memory interleaving is a way to distribute individual addresses over memory modules. Its aim is to keep as many modules as possible busy as computations proceed. With low-order interleaving, the low-order k bits of the memory address select one of the 2^k modules, and the remaining high-order bits give the word address within that module.
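The address split used by low-order interleaving can be made concrete with a short sketch. The Python functions below are hypothetical helpers (their names, the choice of 4 modules and the 256-word module size are illustrative assumptions, not values from these notes); they compare where consecutive word addresses land under low-order interleaving and under a flat layout.

```python
def low_order_interleave(address: int, k: int) -> tuple[int, int]:
    """Map a word address to (module, word within module) for 2**k modules.

    The low-order k bits select the module; the remaining high-order bits
    give the word offset inside that module.
    """
    module = address & ((1 << k) - 1)  # low-order k bits
    offset = address >> k              # remaining high-order bits
    return module, offset


def flat_layout(address: int, words_per_module: int) -> tuple[int, int]:
    """Flat (contiguous) layout: each module owns one block of addresses."""
    return address // words_per_module, address % words_per_module


if __name__ == "__main__":
    k = 2  # hypothetical: 4 modules
    for addr in range(8):
        print(addr, low_order_interleave(addr, k), flat_layout(addr, 256))
```

Under low-order interleaving, addresses 0 to 7 fall in modules 0, 1, 2, 3, 0, 1, 2, 3, so a sequential scan can overlap accesses to different modules; under the flat layout all eight addresses fall in module 0 and must be served one after another.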