Slides

advertisement

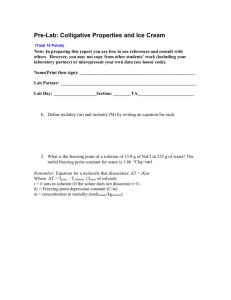

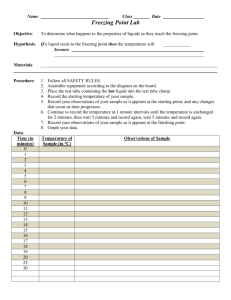

VLDB 2011, Seattle, WA

Optimizing and Parallelizing Ranked Enumeration
Konstantin Golenberg (The Hebrew University of Jerusalem)
Benny Kimelfeld (IBM Research – Almaden)
Yehoshua Sagiv (The Hebrew University of Jerusalem)

Background: DB Search at HebrewU
[Screenshot of the search system: demo in SIGMOD’10, implementation in SIGMOD’08, algorithms in PODS’06]
• Initial implementation was too slow…
• Purchased a multi-core server
• Didn’t help: cores were usually idle
  – Due to the inherent flow of the enumeration technique we used
• Needed a deeper understanding of ranked enumeration to benefit from parallelization
  – This paper

Outline
• Lawler-Murty’s Ranked Enumeration
• Optimizing by Progressive Bounds
• Parallelization / Core Utilization
• Conclusions

Ranked Enumeration
Problem → best answer, 2nd-best answer, 3rd-best answer, . . .
• Huge number (e.g., 2^|Problem|) of ranked answers (can’t afford to instantiate all answers)
• “Complexity”:
  – What is the delay between successive answers?
  – How much time to get the top-k?
Examples:
• Various graph optimizations
  – Shortest paths
  – Smallest spanning trees
  – Best perfect matchings
• Top results of keyword search on DBs (graph search)
• Most probable answers in probabilistic DBs
• Best recommendations for schema integration

Abstract Problem Formulation
• A collection of objects O = { … }
• A = the set of answers a ⊆ O: huge, described by a condition on the subsets of O
• score(a): score(a) is high ⇔ a is of high quality
• Goal: find the top-k answers a1, a2, a3, …, ak

Graph Search in the Abstraction
• Data graph G; set Q of keywords
• O = the edges of G
• Answers a ⊆ O: subtrees (edge sets) a containing all the keywords in Q (w/o redundancy, see [GKS 2008])
• score(a): 1/weight(a), IR measures, etc.
• Goal: find the top-k answers

What is the Challenge?
[Figure: from start, the 1st (top) answer, the 2nd answer, …, the j-th answer]
The j-th answer is the solution of an optimization problem with constraints:
• ≠ the previous (j−1) answers
• the best remaining answer
How to handle these constraints? (j may be large!)
Conceivably, much more complicated than top-1!
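To make the abstraction concrete, here is a minimal Python sketch on a hypothetical toy instance (not from the talk): objects are (group, item) pairs, an answer picks exactly one item from each group, and score(a) is the sum of the chosen values. The naive approach instantiates every answer and sorts, which is exactly what ranked enumeration cannot afford, since the number of answers is the product of the group sizes. The names `GROUPS`, `score`, and `naive_top_k` are illustrative only.

```python
from itertools import product
from typing import List, Tuple

# Hypothetical toy instance: objects O are (group, item) pairs; an answer
# a ⊆ O picks exactly one item per group; score(a) sums the chosen values.
GROUPS: List[List[int]] = [[40, 20, 0], [10, 5], [3, 1]]

Answer = Tuple[Tuple[int, int], ...]


def score(answer: Answer) -> int:
    return sum(GROUPS[g][i] for g, i in answer)


def naive_top_k(k: int) -> List[Tuple[int, Answer]]:
    """Instantiate every answer, sort by score, keep the best k.

    The number of answers is the product of the group sizes, so on real
    inputs this is exactly what ranked enumeration has to avoid."""
    choices = product(*(range(len(values)) for values in GROUPS))
    answers = [tuple(enumerate(choice)) for choice in choices]
    return sorted(((score(a), a) for a in answers), reverse=True)[:k]


print([s for s, _ in naive_top_k(3)])  # [53, 51, 48]
```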
Lawler-Murty’s Procedure [Murty, 1968] [Lawler, 1972]
Lawler-Murty’s gives a general reduction: if finding the top-1 answer under simple constraints is in PTIME, then finding the top-k answers is in PTIME.
• We understand (top-1) optimization much better!
• Often, it amounts to classical optimization, e.g., shortest path (but sometimes it may get involved, e.g., [KS 2006])
• Another general top-k procedure: [Hamacher & Queyranne 84], very similar!

Among the Uses of Lawler-Murty’s
Graph/Combinatorial Algorithms:
• Shortest simple paths [Yen 1972]
• Minimum spanning trees [Gabow 1977, Katoh et al. 1981]
• Best solutions in resource allocation [Katoh et al. 1981]
• Best perfect matchings, best cuts [Hamacher & Queyranne 1985]
• Minimum Steiner trees [KS 2006]
Bioinformatics:
• Yen’s algorithm to find sets of metabolites connected by chemical reactions [Takigawa & Mamitsuka 2008]
Data Management:
• ORDER-BY queries [KS 2006, 2007]
• Graph/XML search [GKS 2008]
• Generation of forms over integrated data [Talukdar et al. 2008]
• Course recommendation [Parameswaran & Garcia-Molina 2009]
• Querying Markov sequences [K & Ré 2010]

Lawler-Murty’s Method: Conceptual
1. Find & Print the Top Answer
   In principle, at this point we should find the second-best answer. But instead…
2. Partition the Remaining Answers
   The partition is defined by a set of simple constraints:
   • Inclusion constraint: “must contain (a given object)”
   • Exclusion constraint: “must not contain (a given object)”
3. Find the Top of Each Set
4. Find & Print the Second Answer
   The next answer is the best among the top answers of all the partitions.
5. Further Divide the Chosen Partition
   … and so on (until k answers are printed)

Lawler-Murty’s: Actual Execution
[Figure: “Output” holds the answers printed already (34, 30); “Partition Reps. + Best of Each” holds each partition with its best answer (e.g., 24, 19, 18), and the best of these is printed next]
• For each new partition, a task finds its best answer.
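The five steps above can be sketched in Python on the same kind of hypothetical toy instance (one item per group, additive score), chosen because finding the top-1 answer under inclusion/exclusion constraints is trivial there: a group with an included object must use it, otherwise it takes its best non-excluded item. This is a minimal sketch, not the authors’ implementation; `top1` and `lawler_murty` are hypothetical names. The split follows the usual scheme: if the printed answer has free (not yet included) objects e1, …, em, child i includes e1, …, e(i−1) and excludes ei. That covers every remaining answer exactly once here because all answers have the same size; other problems may need more care.

```python
import heapq
from typing import FrozenSet, List, Optional, Tuple

# Hypothetical toy instance: pick one item per group; score = sum of values.
GROUPS: List[List[int]] = [[40, 20, 0], [10, 5], [3, 1]]
Obj = Tuple[int, int]  # (group, item index)


def top1(incl: FrozenSet[Obj], excl: FrozenSet[Obj]) -> Optional[Tuple[int, Tuple[Obj, ...]]]:
    """Best answer that contains every object in incl and none in excl."""
    answer = []
    for g, values in enumerate(GROUPS):
        forced = [o for o in incl if o[0] == g]
        allowed = [i for i in range(len(values)) if (g, i) not in excl]
        if forced:
            answer.append(forced[0])
        elif allowed:
            answer.append((g, max(allowed, key=lambda i: values[i])))
        else:
            return None  # the partition is empty
    return sum(GROUPS[g][i] for g, i in answer), tuple(answer)


def lawler_murty(k: int) -> List[int]:
    heap = []  # one entry per partition: (-best score, id, best answer, incl, excl)
    out: List[int] = []
    best = top1(frozenset(), frozenset())
    if best:
        heapq.heappush(heap, (-best[0], 0, best[1], frozenset(), frozenset()))
    next_id = 1
    while heap and len(out) < k:
        neg, _, answer, incl, excl = heapq.heappop(heap)
        out.append(-neg)  # steps 1 and 4: print the best remaining answer
        # Step 5: split the chosen partition minus {answer}; child i includes
        # the first i free objects of the answer and excludes object i.
        free = [o for o in answer if o not in incl]
        for i, o in enumerate(free):
            c_incl, c_excl = incl | frozenset(free[:i]), excl | {o}
            child = top1(c_incl, c_excl)  # step 3: the top of each new set
            if child:
                heapq.heappush(heap, (-child[0], next_id, child[1], c_incl, c_excl))
                next_id += 1
    return out


print(lawler_murty(5))  # [53, 51, 48, 46, 33]
```

The priority queue plays the role of “Partition Reps. + Best of Each”: each entry is a partition (its constraints) together with its best answer, and every new partition costs one full top-1 computation.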
Typical Bottleneck
[Figure: output 34, 30; partition reps. with their best answers (24, 14, 12); new tasks compute the best answers 22, 20, 15 in full. In top k?]

Progressive Upper Bound
• Throughout the execution, an optimization algorithm often upper-bounds its final solution’s score
• Progressive: the bound gets smaller over time
• Often, nontrivial bounds, e.g.,
  – Dijkstra’s algorithm: the distance at the top of the queue
    (similarly: some Steiner-tree algorithms [DreyfusWagner72])
  – Viterbi algorithms: the max intermediate probability
  – Primal-dual methods: the value of the dual LP solution
[Figure: over time, the bound shrinks (≤24, ≤22, ≤18, ≤14) toward the final score, 12]

Freezing Tasks (Simplified)
• A task that computes a partition’s best answer reports progressive bounds (≤24, ≤23, ≤22, …)
• Once its bound falls below an answer already computed (e.g., ≤20, while 22 is available: 22 > 20), the task is frozen
• A frozen task is resumed only when it becomes the best candidate; e.g., the task frozen at ≤20 continues (≤18, ≤16, ≤15) and ends with 15
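Freezing can be sketched by turning the top-1 search into a resumable task: here a Python generator that yields a progressive upper bound after each step. For the hypothetical toy problem (one item per group, additive score), the bound is the score of the items chosen so far plus the maximum of every unprocessed group, a stand-in for bounds such as Dijkstra’s queue-top distance. One queue holds both computed answers (exact scores) and frozen tasks (bounds). As a simplification, a task is frozen as soon as its bound drops below the best key in the queue, whether that key is a computed answer or another frozen task’s bound, and it is resumed only when it reaches the top. All names are hypothetical.

```python
import heapq
import itertools
from typing import List

# Hypothetical toy instance: pick one item per group; score = sum of values.
GROUPS = [[40, 20, 0], [10, 5], [3, 1]]


def top1_task(incl, excl):
    """Resumable top-1 search; after each group it yields a progressive upper
    bound (chosen values + max of every unprocessed group), which never grows."""
    chosen, rest = [], sum(max(values) for values in GROUPS)
    for g, values in enumerate(GROUPS):
        rest -= max(values)
        forced = [o for o in incl if o[0] == g]
        allowed = [i for i in range(len(values)) if (g, i) not in excl]
        if forced:
            chosen.append(forced[0])
        elif allowed:
            chosen.append((g, max(allowed, key=lambda i: values[i])))
        else:
            return None  # the partition is empty
        yield sum(GROUPS[h][i] for h, i in chosen) + rest
    return sum(GROUPS[h][i] for h, i in chosen), tuple(chosen)


def lawler_murty_freezing(k: int) -> List[int]:
    ids = itertools.count()
    heap: list = []  # (-key, id, kind, payload, incl, excl); kind is "done" or "frozen"
    out: List[int] = []

    def run(task, incl, excl):
        """Advance a task until it finishes or its bound drops below the queue's best key."""
        while True:
            try:
                bound = next(task)
            except StopIteration as fin:
                if fin.value is not None:
                    score, answer = fin.value
                    heapq.heappush(heap, (-score, next(ids), "done", answer, incl, excl))
                return
            if heap and bound < -heap[0][0]:
                # Freeze: the queue already holds something better than this task can reach.
                heapq.heappush(heap, (-bound, next(ids), "frozen", task, incl, excl))
                return

    run(top1_task(frozenset(), frozenset()), frozenset(), frozenset())
    while heap and len(out) < k:
        neg, _, kind, obj, incl, excl = heapq.heappop(heap)
        if kind == "frozen":
            run(obj, incl, excl)  # resume a frozen task only once it reaches the top
            continue
        out.append(-neg)  # every frozen task's bound is no higher than this score
        free = [o for o in obj if o not in incl]
        for i, o in enumerate(free):
            c_incl, c_excl = incl | frozenset(free[:i]), excl | {o}
            run(top1_task(c_incl, c_excl), c_incl, c_excl)
    return out


print(lawler_murty_freezing(5))  # [53, 51, 48, 46, 33]
```

A computed answer is printed only from the top of the queue, so no frozen task can still produce a better one; the output matches plain Lawler-Murty’s, while hopeless tasks stop early.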
Improvement of Freezing
Experiments: Graph Search
2 Intel Xeon processors (2.67GHz), 4 cores each (8 total); 48GB memory
[Charts: running time (ms) of simple Lawler-Murty vs. Lawler-Murty w/ freezing on Mondial, DBLP (part), and DBLP (full), for k = 10 and k = 100]
On average, freezing saved 56% of the running time

Straightforward Parallelization
[Figure: “Output” (34, 30, …) and a pool of “Awaiting Tasks” that threads run in parallel (e.g., 24, 22, 20, 15, 14, 12)]

Not so fast…
• Typical: reduced the running time by 30%
• Same for 2, 3, …, 8 threads!

Idle Cores while Waiting
[Figure: output 34, 30, 24; cores marked “idle” while the awaiting tasks (22, 20, 15, 14, 12) are pending]

Early Popping
[Figure: “Output” (34, 30, 24) and “Awaiting Tasks” annotated with progressive bounds (≤24, ≤23, ≤22, ≤20, ≤19); the computed answer 22 is compared with the bounds (22 > 20)]
Skipped issues:
• Thread synchronization: semaphores, locking, etc.
• Correctness

Improvement of Early Popping
Experiments: Graph Search
[Charts: running time as % of Lawler-Murty vs. number of threads (1, 2, 4, 6, 8), for short, medium-size & long queries; panels: Mondial, DBLP (part)]

Early Popping vs.
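A sketch of straightforward parallelization with early popping, using Python threads on the same hypothetical toy problem and generator-based tasks. Each unfinished task publishes its current bound in a shared map, and the main thread pops the best computed answer as soon as it is at least every published bound, instead of waiting for all awaiting tasks to finish. New tasks are registered with an infinite bound before they start, so nothing can be popped past them. A single coarse lock (`threading.Condition`) stands in for the involved synchronization the talk skips, and under CPython’s global interpreter lock this shows the control flow, not a speedup. All names are hypothetical.

```python
import heapq
import itertools
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List

# Hypothetical toy instance: pick one item per group; score = sum of values.
GROUPS = [[40, 20, 0], [10, 5], [3, 1]]
INF = float("inf")


def top1_task(incl, excl):
    """Resumable top-1 search under constraints; yields non-increasing upper bounds."""
    chosen, rest = [], sum(max(values) for values in GROUPS)
    for g, values in enumerate(GROUPS):
        rest -= max(values)
        forced = [o for o in incl if o[0] == g]
        allowed = [i for i in range(len(values)) if (g, i) not in excl]
        if forced:
            chosen.append(forced[0])
        elif allowed:
            chosen.append((g, max(allowed, key=lambda i: values[i])))
        else:
            return None  # the partition is empty
        yield sum(GROUPS[h][i] for h, i in chosen) + rest
    return sum(GROUPS[h][i] for h, i in chosen), tuple(chosen)


def early_popping(k: int, threads: int = 4) -> List[int]:
    cond = threading.Condition()
    done: list = []                # computed answers: (-score, id, answer, incl, excl)
    bounds: Dict[int, float] = {}  # unfinished task id -> its current upper bound
    ids = itertools.count()

    def worker(tid, incl, excl):
        gen = top1_task(incl, excl)
        while True:
            try:
                b = next(gen)  # one step of the search, outside the lock
            except StopIteration as fin:
                with cond:
                    del bounds[tid]
                    if fin.value is not None:
                        heapq.heappush(done, (-fin.value[0], tid, fin.value[1], incl, excl))
                    cond.notify_all()
                return
            with cond:
                bounds[tid] = b
                cond.notify_all()

    def spawn(pool, incl, excl):  # caller holds cond
        tid = next(ids)
        bounds[tid] = INF  # registered before it runs, so nothing is popped past it
        pool.submit(worker, tid, incl, excl)

    def can_pop():
        # Early popping: the best computed answer beats every unfinished task's bound.
        return bool(done) and -done[0][0] >= max(bounds.values(), default=-INF)

    out: List[int] = []
    with ThreadPoolExecutor(max_workers=threads) as pool:
        with cond:
            spawn(pool, frozenset(), frozenset())
            while len(out) < k:
                cond.wait_for(lambda: can_pop() or not (done or bounds))
                if not done:
                    break  # every answer has been printed
                neg, _, ans, incl, excl = heapq.heappop(done)
                out.append(-neg)
                if len(out) == k:
                    break
                free = [o for o in ans if o not in incl]
                for i, o in enumerate(free):
                    spawn(pool, incl | frozenset(free[:i]), excl | {o})
    return out


print(early_popping(5))
```

Replacing `can_pop` with “no unfinished tasks” gives the wait-for-all flow of straightforward parallelization, where cores sit idle until the slowest awaiting task finishes.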
(Serial) Freezing
Experiments: Graph Search
[Charts: running time as % of serial freezing vs. number of threads (1, 2, 4, 6, 8), for short, medium-size & long queries; panels: Mondial, DBLP (part)]
• Need 4 threads to start gaining
• And even then, fairly poor…

Combining Freezing & Early Popping
• We discuss additional ideas and techniques to further utilize the cores
  – Not here, see the paper
• Main speedup by combining early popping with freezing
  – Cores kept busy… on high-potential tasks
  – Thread synchronization is quite involved
• At the high level, the final algorithm has the following flow:

Combining: General Idea
• Partition reps. are kept as frozen tasks (frozen + new tasks)
• Threads work on frozen tasks
• Computed answers wait in a to-print queue (“Computed Answers (to-print)”)
• The main task just pops computed results to print
  – … but validates: no better results can come from frozen tasks
[Figure: panels “Output”, “Computed Answers (to-print)”, and “Partition Reps. as Frozen Tasks”]

Combined vs.
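The combined flow can be sketched by keeping every partition representative in a priority queue of frozen tasks ordered by bound. Worker threads repeatedly take the highest-bound task, advance it one step at a time, and re-freeze it whenever a frozen task has a higher bound; finished tasks drop their answers into a to-print queue. The main thread pops a computed answer only after validating that no running or frozen task has a higher bound. This is a hypothetical Python sketch on the same toy problem, with one coarse lock rather than the paper’s synchronization; under CPython’s GIL it illustrates the flow rather than performance.

```python
import heapq
import itertools
import threading
from typing import List

# Hypothetical toy instance: pick one item per group; score = sum of values.
GROUPS = [[40, 20, 0], [10, 5], [3, 1]]
INF = float("inf")


def top1_task(incl, excl):
    """Resumable top-1 search under constraints; yields non-increasing upper bounds."""
    chosen, rest = [], sum(max(values) for values in GROUPS)
    for g, values in enumerate(GROUPS):
        rest -= max(values)
        forced = [o for o in incl if o[0] == g]
        allowed = [i for i in range(len(values)) if (g, i) not in excl]
        if forced:
            chosen.append(forced[0])
        elif allowed:
            chosen.append((g, max(allowed, key=lambda i: values[i])))
        else:
            return None  # the partition is empty
        yield sum(GROUPS[h][i] for h, i in chosen) + rest
    return sum(GROUPS[h][i] for h, i in chosen), tuple(chosen)


def combined(k: int, threads: int = 4) -> List[int]:
    cond, stop, ids = threading.Condition(), threading.Event(), itertools.count()
    frozen: list = []  # partition reps. as frozen tasks: (-bound, id, generator, incl, excl)
    running = {}       # task id -> current bound of a task some thread is advancing
    done: list = []    # computed answers, to print: (-score, id, answer, incl, excl)

    def worker():
        while True:
            with cond:
                cond.wait_for(lambda: stop.is_set() or bool(frozen))
                if stop.is_set():
                    return
                negb, tid, gen, incl, excl = heapq.heappop(frozen)  # highest bound first
                running[tid] = -negb
            while True:
                try:
                    b = next(gen)  # one step of the top-1 search, outside the lock
                except StopIteration as fin:
                    with cond:
                        del running[tid]
                        if fin.value is not None:
                            heapq.heappush(done, (-fin.value[0], tid, fin.value[1], incl, excl))
                        cond.notify_all()
                    break
                with cond:
                    running[tid] = b
                    if frozen and b < -frozen[0][0]:  # a frozen task has more potential
                        del running[tid]
                        heapq.heappush(frozen, (-b, tid, gen, incl, excl))
                        cond.notify_all()
                        break
                    cond.notify_all()

    def can_pop():  # validate: no running or frozen task can beat the best computed answer
        if not done:
            return False
        others = list(running.values()) + [-f[0] for f in frozen]
        return -done[0][0] >= max(others, default=-INF)

    def add_task(incl, excl):  # caller holds cond; a new task starts with an infinite bound
        heapq.heappush(frozen, (-INF, next(ids), top1_task(incl, excl), incl, excl))
        cond.notify_all()

    pool = [threading.Thread(target=worker) for _ in range(threads)]
    for t in pool:
        t.start()
    out: List[int] = []
    with cond:
        add_task(frozenset(), frozenset())
        while len(out) < k:
            cond.wait_for(lambda: can_pop() or not (done or frozen or running))
            if not done:
                break  # every answer has been printed
            neg, _, ans, incl, excl = heapq.heappop(done)
            out.append(-neg)  # the main task only pops validated answers
            if len(out) == k:
                break
            free = [o for o in ans if o not in incl]
            for i, o in enumerate(free):
                add_task(incl | frozenset(free[:i]), excl | {o})
        stop.set()
        cond.notify_all()
    for t in pool:
        t.join()
    return out


print(combined(5))
```

Here threads never wait for the main task: whenever a frozen task looks more promising than the one being advanced, they switch to it, which is one way to read “cores kept busy… on high-potential tasks”.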
(Serial) Freezing
Experiments: Graph Search
[Charts: running time as % of serial freezing vs. number of threads (1, 2, 4, 6, 8), for short, medium & long queries; panels: Mondial, DBLP]
Now, a significant gain (≈50%) already with 2 threads

Improvement of Combined
Experiments: Graph Search
[Charts: running time as % of Lawler-Murty vs. number of threads (1, 2, 4, 6, 8), for short, medium & long queries; panels: Mondial (annotated 4%-5%), DBLP (annotated 3%-10%)]
On average, with 8 threads we got 5.7% of the original running time

Conclusions
• Considered Lawler-Murty’s ranked enumeration
  – Theoretical complexity guarantees
  – …but a direct implementation is very slow
  – Straightforward parallelization poorly utilizes the cores
• Ideas: progressive bounds, freezing, early popping
  – In the paper: additional ideas, combination of ideas
• Most significant speedup by combining these ideas
  – Flow substantially differs from the original procedure
  – 20x faster on 8 cores
• Test case: graph search; focus: general apps
  – Future: additional test cases

Questions?