Lecture 17


Engineering Analysis ENG 3420 Fall 2009

Dan C. Marinescu

Office: HEC 439 B

Office hours: Tu-Th 11:00-12:00

Lecture 17

Reading assignment: Chapters 10 and 11, Linear Algebra Class Notes

Last time:

Today:

Symmetric matrices; Hermitian matrices.

Matrix multiplication

Linear algebra functions in Matlab

The inverse of a matrix

Vector products

Tensor algebra

Characteristic equation, eigenvectors, eigenvalues

Norm

Matrix condition number

Next Time

More on

LU Factorization

Cholesky decomposition


Matrix analysis in MATLAB

norm       Matrix or vector norm
normest    Estimate the matrix 2-norm
rank       Matrix rank
det        Determinant
trace      Sum of diagonal elements
null       Null space
orth       Orthogonalization
rref       Reduced row echelon form
subspace   Angle between two subspaces

Eigenvalues and singular values

eig        Eigenvalues and eigenvectors
svd        Singular value decomposition
eigs       A few eigenvalues
svds       A few singular values
poly       Characteristic polynomial
polyeig    Polynomial eigenvalue problem
condeig    Condition number for eigenvalues
hess       Hessenberg form
qz         QZ factorization
schur      Schur decomposition

Matrix functions

expm       Matrix exponential
logm       Matrix logarithm
sqrtm      Matrix square root
funm       Evaluate general matrix function

Linear systems of equations

\ and /    Linear equation solution
inv        Matrix inverse
cond       Condition number for inversion
condest    1-norm condition number estimate
chol       Cholesky factorization
cholinc    Incomplete Cholesky factorization
linsolve   Solve a system of linear equations
lu         LU factorization
ilu        Incomplete LU factorization
luinc      Incomplete LU factorization
qr         Orthogonal-triangular decomposition
lsqnonneg  Nonnegative least-squares
pinv       Pseudoinverse
lscov      Least squares with known covariance

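The inverse of a square matrix

If [A] is a nonsingular square matrix (det[A] ≠ 0), there is another matrix $[A]^{-1}$, called the inverse of [A], for which

$$[A][A]^{-1} = [A]^{-1}[A] = [I]$$

The inverse can be computed in a column-by-column fashion by generating solutions with unit vectors as the right-hand-side constants:

$$[A]\{x_1\} = \begin{Bmatrix}1\\0\\0\end{Bmatrix}, \qquad [A]\{x_2\} = \begin{Bmatrix}0\\1\\0\end{Bmatrix}, \qquad [A]\{x_3\} = \begin{Bmatrix}0\\0\\1\end{Bmatrix}$$

The solution vectors are the columns of the inverse:

$$[A]^{-1} = \begin{bmatrix}\{x_1\} & \{x_2\} & \{x_3\}\end{bmatrix}$$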

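Canonical basis of an n-dimensional vector space: the n unit vectors, which are also the columns of [I]:

$$\begin{aligned}
e_1 &= (1, 0, 0, \dots, 0, 0, 0)\\
e_2 &= (0, 1, 0, \dots, 0, 0, 0)\\
e_3 &= (0, 0, 1, \dots, 0, 0, 0)\\
&\;\;\vdots\\
e_{n-2} &= (0, 0, 0, \dots, 1, 0, 0)\\
e_{n-1} &= (0, 0, 0, \dots, 0, 1, 0)\\
e_n &= (0, 0, 0, \dots, 0, 0, 1)
\end{aligned}$$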
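As a small worked example (the matrix is chosen only for illustration), the column-by-column procedure for a 2 × 2 matrix solves one system per unit vector and assembles the solutions as columns:

$$[A] = \begin{bmatrix}2 & 1\\ 1 & 1\end{bmatrix}$$

$$[A]\{x_1\} = \begin{Bmatrix}1\\0\end{Bmatrix} \Rightarrow \{x_1\} = \begin{Bmatrix}1\\-1\end{Bmatrix}, \qquad [A]\{x_2\} = \begin{Bmatrix}0\\1\end{Bmatrix} \Rightarrow \{x_2\} = \begin{Bmatrix}-1\\2\end{Bmatrix}$$

$$[A]^{-1} = \begin{bmatrix}1 & -1\\ -1 & 2\end{bmatrix}, \qquad [A][A]^{-1} = \begin{bmatrix}2-1 & -2+2\\ 1-1 & -1+2\end{bmatrix} = [I]$$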

Matrix Inverse (cont)

LU factorization can be used to efficiently evaluate a system for multiple right-hand-side vectors; thus, it is ideal for evaluating the multiple unit vectors needed to compute the inverse.
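A minimal plain-Python sketch of this idea, under stated assumptions: the helper names lu_decompose, lu_solve and inverse are hypothetical (not library calls), and the factorization is Doolittle LU with partial pivoting. The key point is that [A] is factored once and the cheap substitution step is repeated for each unit vector, one column of the inverse at a time.

```python
def lu_decompose(a):
    """Doolittle LU with partial pivoting (hypothetical helper).

    Returns (lu, perm): below the diagonal, lu holds the multipliers of [L]
    (its unit diagonal is implicit); on and above it, lu holds [U].
    perm[i] is the original row index that ended up in row i.
    """
    n = len(a)
    lu = [list(map(float, row)) for row in a]   # work on a copy
    perm = list(range(n))
    for k in range(n - 1):
        # Partial pivoting: move the largest |a_ik| into the pivot position.
        p = max(range(k, n), key=lambda i: abs(lu[i][k]))
        if lu[p][k] == 0.0:
            raise ValueError("matrix is singular")
        lu[k], lu[p] = lu[p], lu[k]
        perm[k], perm[p] = perm[p], perm[k]
        for i in range(k + 1, n):
            factor = lu[i][k] / lu[k][k]
            lu[i][k] = factor                    # store the multiplier of [L]
            for j in range(k + 1, n):
                lu[i][j] -= factor * lu[k][j]
    if lu[n - 1][n - 1] == 0.0:
        raise ValueError("matrix is singular")
    return lu, perm


def lu_solve(lu, perm, b):
    """Solve [A]{x} = {b} reusing the factors from lu_decompose."""
    n = len(lu)
    # Forward substitution with unit-diagonal [L], on the permuted {b}.
    d = [0.0] * n
    for i in range(n):
        d[i] = b[perm[i]] - sum(lu[i][j] * d[j] for j in range(i))
    # Back substitution with [U].
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (d[i] - sum(lu[i][j] * x[j] for j in range(i + 1, n))) / lu[i][i]
    return x


def inverse(a):
    """Build [A]^-1 column by column: column j solves [A]{x_j} = {e_j}."""
    n = len(a)
    lu, perm = lu_decompose(a)                   # factor once ...
    columns = [lu_solve(lu, perm, [1.0 if i == j else 0.0 for i in range(n)])
               for j in range(n)]                # ... reuse for every unit vector
    # Rearrange the list of columns into a row-major matrix.
    return [[columns[j][i] for j in range(n)] for i in range(n)]


A = [[2.0, 1.0], [1.0, 1.0]]
print(inverse(A))  # [[1.0, -1.0], [-1.0, 2.0]]
```

Storing [L] and [U] in one array mirrors how the elimination overwrites [A]; only the permutation has to be kept alongside it.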

The response of a linear system

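The response of a linear system to some stimuli can be found using the matrix inverse. Writing the system as

$$[A]\{r\} = \{s\}$$

where [A] describes the interactions, {r} is the response, and {s} is the stimuli, multiply both sides by $[A]^{-1}$:

$$[A]^{-1}[A]\{r\} = [A]^{-1}\{s\}$$

Since $[A]^{-1}[A] = [I]$,

$$\{r\} = [A]^{-1}\{s\}$$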

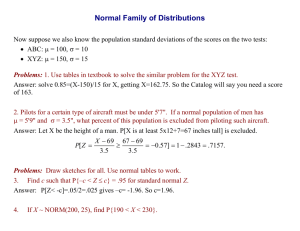
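A small sketch of {r} = [A]^{-1}{s} in Python, using exact fractions and the 2 × 2 adjugate formula for the inverse (an illustrative choice; the matrix, the stimuli and the helper names inverse_2x2 and mat_vec are made up for the example). It also shows why the inverse is informative here: column j of [A]^{-1} is the response to a unit stimulus in component j alone, and because the system is linear any response is a weighted sum of those columns.

```python
from fractions import Fraction


def inverse_2x2(a):
    """Inverse of a nonsingular 2x2 matrix via the adjugate formula."""
    (a11, a12), (a21, a22) = a
    det = a11 * a22 - a12 * a21
    if det == 0:
        raise ValueError("matrix is singular")
    return [[a22 / det, -a12 / det], [-a21 / det, a11 / det]]


def mat_vec(m, v):
    """Matrix-vector product [M]{v}."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


# Hypothetical interaction matrix [A] and stimuli {s} (exact rationals).
A = [[Fraction(2), Fraction(1)], [Fraction(1), Fraction(1)]]
s = [Fraction(3), Fraction(5)]

A_inv = inverse_2x2(A)
r = mat_vec(A_inv, s)          # response {r} = [A]^-1 {s}
print(r)                       # [Fraction(-2, 1), Fraction(7, 1)]
print(mat_vec(A, r) == s)      # True: the response reproduces the stimuli

# Response to a unit stimulus in component 0 alone = column 0 of [A]^-1.
print(mat_vec(A_inv, [1, 0]))  # [Fraction(1, 1), Fraction(-1, 1)]
```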

Distance and norms

Metric space: a set on which the "distance" between elements is defined, e.g., the 3-dimensional Euclidean space. The Euclidean metric defines the distance between two points as the length of the straight line connecting them.

Norm: a real-valued function that provides a measure of the size or "length" of an element of a vector space.
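The two ideas meet in the Euclidean case: the distance between two points is the Euclidean norm (length) of their difference. A tiny Python sketch (the helper name euclidean_distance and the sample points are illustrative; math.dist is the standard-library equivalent, available since Python 3.8):

```python
import math


def euclidean_distance(x, y):
    """Length of the straight segment from x to y, i.e. the 2-norm of x - y.

    Assumes x and y have the same number of coordinates.
    """
    return math.sqrt(sum((xi - yi) ** 2 for xi, yi in zip(x, y)))


P = (0.0, 0.0, 0.0)
Q = (1.0, 2.0, 2.0)
print(euclidean_distance(P, Q))  # 3.0
print(math.dist(P, Q))           # 3.0
```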

Vector Norms

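The p-norm of a vector X is:

$$\|X\|_p = \left(\sum_{i=1}^{n} |x_i|^p\right)^{1/p}$$

Important examples of vector p-norms include:

p = 1: sum of the absolute values

$$\|X\|_1 = \sum_{i=1}^{n} |x_i|$$

p = 2: Euclidean norm (length)

$$\|X\|_2 = \|X\|_e = \sqrt{\sum_{i=1}^{n} x_i^2}$$

p = ∞: maximum magnitude

$$\|X\|_\infty = \max_{1 \le i \le n} |x_i|$$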
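The three special cases can be checked with a short Python sketch of the general formula (the function name p_norm is hypothetical; p = 2 goes through math.sqrt and p = ∞ is handled as the limit case, the maximum magnitude):

```python
import math


def p_norm(x, p):
    """p-norm of vector x: (sum |x_i|^p)^(1/p); p = math.inf gives max |x_i|."""
    if p == math.inf:
        return max(abs(xi) for xi in x)              # maximum magnitude
    if p < 1:
        raise ValueError("p must be >= 1 for a norm")
    if p == 2:
        return math.sqrt(sum(xi * xi for xi in x))   # Euclidean length
    return sum(abs(xi) ** p for xi in x) ** (1.0 / p)


X = [3.0, -4.0, 12.0]
print(p_norm(X, 1))         # 19.0  sum of absolute values
print(p_norm(X, 2))         # 13.0  Euclidean norm
print(p_norm(X, math.inf))  # 12.0  maximum magnitude
```

For any vector the values are ordered: the ∞-norm is never larger than the 2-norm, which is never larger than the 1-norm.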

Matrix Norms

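Common matrix norms for an n × n matrix [A] include:

Column-sum norm:

$$\|A\|_1 = \max_{1 \le j \le n} \sum_{i=1}^{n} |a_{ij}|$$

Row-sum norm:

$$\|A\|_\infty = \max_{1 \le i \le n} \sum_{j=1}^{n} |a_{ij}|$$

Frobenius norm:

$$\|A\|_f = \sqrt{\sum_{i=1}^{n} \sum_{j=1}^{n} a_{ij}^2}$$

Spectral norm (2-norm):

$$\|A\|_2 = \left(\mu_{\max}\right)^{1/2}$$

Note: $\mu_{\max}$ is the largest eigenvalue of $[A]^T[A]$.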
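A plain-Python sketch of the four matrix norms above (the function names are hypothetical). For the spectral norm it estimates $\mu_{\max}$ by power iteration on $[A]^T[A]$; that is an illustrative choice, not how production libraries compute it, and it assumes the start vector is not orthogonal to the dominant eigenvector.

```python
import math


def norm_1(a):
    """Column-sum norm: largest sum of |a_ij| down any column."""
    return max(sum(abs(row[j]) for row in a) for j in range(len(a[0])))


def norm_inf(a):
    """Row-sum norm: largest sum of |a_ij| across any row."""
    return max(sum(abs(x) for x in row) for row in a)


def norm_fro(a):
    """Frobenius norm: square root of the sum of all squared entries."""
    return math.sqrt(sum(x * x for row in a for x in row))


def norm_2(a, iters=500, tol=1e-13):
    """Spectral norm sqrt(mu_max), mu_max = largest eigenvalue of [A]^T[A],
    estimated by power iteration (illustrative choice)."""
    n = len(a[0])
    # B = [A]^T[A], with b_ij = sum_k a_ki * a_kj (symmetric, PSD).
    b = [[sum(row[i] * row[j] for row in a) for j in range(n)] for i in range(n)]
    v = [1.0 + i for i in range(n)]        # arbitrary nonzero start vector
    mu = 0.0
    for _ in range(iters):
        w = [sum(b[i][j] * v[j] for j in range(n)) for i in range(n)]
        w_len = math.sqrt(sum(x * x for x in w))
        if w_len == 0.0:
            return 0.0                     # B annihilates v (e.g. [A] = 0)
        v = [x / w_len for x in w]         # renormalize the iterate
        converged = abs(w_len - mu) <= tol * w_len
        mu = w_len                         # ||B v|| -> mu_max for unit v
        if converged:
            break
    return math.sqrt(mu)


A = [[1.0, 2.0], [3.0, 4.0]]
print(norm_1(A))                # 6.0
print(norm_inf(A))              # 7.0
print(round(norm_fro(A), 4))    # 5.4772
print(round(norm_2(A), 4))      # 5.465
```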

Matrix Condition Number

The matrix condition number Cond[A] is obtained by calculating

$$\operatorname{Cond}[A] = \|A\| \cdot \|A^{-1}\|$$

It can be shown that:

$$\frac{\|\Delta X\|}{\|X\|} \le \operatorname{Cond}[A]\,\frac{\|\Delta A\|}{\|A\|}$$

The relative error in the norm of the computed solution can be as large as the relative error in the norm of the coefficients of [A] multiplied by the condition number.

If the coefficients of [A] are known to t-digit precision, the solution [X] may be valid to only $t - \log_{10}(\operatorname{Cond}[A])$ digits.
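A sketch of the digit-loss estimate for the 3 × 3 Hilbert matrix, a classic ill-conditioned example, using the row-sum norm. It is written in Python with exact fractions so the inverse carries no round-off; the helper names are hypothetical, and t = 16 is an assumption standing in for roughly the decimal precision of an IEEE double.

```python
import math
from fractions import Fraction


def inverse_exact(a):
    """Gauss-Jordan inverse in exact rational arithmetic (no round-off)."""
    n = len(a)
    aug = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
           for i, row in enumerate(a)]
    for k in range(n):
        p = next((i for i in range(k, n) if aug[i][k] != 0), None)
        if p is None:
            raise ValueError("matrix is singular")
        aug[k], aug[p] = aug[p], aug[k]
        pivot = aug[k][k]
        aug[k] = [x / pivot for x in aug[k]]          # scale pivot row to 1
        for i in range(n):
            if i != k and aug[i][k] != 0:             # clear column k elsewhere
                f = aug[i][k]
                aug[i] = [x - f * y for x, y in zip(aug[i], aug[k])]
    return [row[n:] for row in aug]


def row_sum_norm(a):
    return max(sum(abs(x) for x in row) for row in a)


def cond_inf(a):
    """Cond[A] = ||A|| * ||A^-1|| using the row-sum norm."""
    return row_sum_norm(a) * row_sum_norm(inverse_exact(a))


# 3x3 Hilbert matrix: h_ij = 1/(i + j - 1) with 1-based indices.
n = 3
H = [[Fraction(1, i + j + 1) for j in range(n)] for i in range(n)]
c = cond_inf(H)
print(c)                                      # 748
t = 16                                        # assumed digits of precision
print(round(t - math.log10(float(c)), 1))     # 13.1
```

Here the row-sum norms are 11/6 for [H] and 408 for its inverse, so about 3 of the 16 digits may be lost.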

Built-in functions to compute norms and condition numbers

norm(X,p)  Compute the p-norm of vector X, where p can be any number, inf, or 'fro' (for the Euclidean norm)

norm(A,p)  Compute a norm of matrix A, where p can be 1, 2, inf, or 'fro' (for the Frobenius norm)

cond(A,p)  Calculate the condition number of matrix A using the norm specified by p (1, 2, inf, or 'fro'; the default is 2)

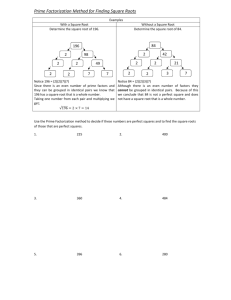

LU Factorization

LU factorization involves two steps:

Step 1 (decomposition): factor the [A] matrix into the product of a lower triangular matrix [L], with 1 for each entry on the diagonal, and an upper triangular matrix [U].

Step 2 (substitution): use [L] and [U] to solve for {x}.

Gauss elimination can be implemented using LU factorization. The forward-elimination step of Gauss elimination comprises the bulk of the computational effort; LU factorization methods separate this time-consuming elimination of the matrix [A] from the manipulations of the right-hand side {b}.
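Step 1 can be sketched in plain Python as Gauss forward elimination that keeps its multipliers (Doolittle form). This is a minimal sketch under stated assumptions: no pivoting, so it assumes no zero pivot appears, and the names doolittle_lu and mat_mul are hypothetical; the example matrix is illustrative.

```python
def doolittle_lu(a):
    """Factor [A] = [L][U] by Gauss elimination, without pivoting.

    [L] is unit lower triangular and stores the elimination multipliers;
    [U] is the upper triangular matrix left by forward elimination.
    Assumes no zero pivot occurs (this sketch does not pivot).
    """
    n = len(a)
    u = [[float(x) for x in row] for row in a]
    l = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for k in range(n - 1):                   # eliminate below pivot a_kk
        if u[k][k] == 0.0:
            raise ZeroDivisionError("zero pivot; pivoting would be required")
        for i in range(k + 1, n):
            factor = u[i][k] / u[k][k]
            l[i][k] = factor                 # keep the multiplier in [L]
            u[i][k] = 0.0                    # entry eliminated exactly
            for j in range(k + 1, n):
                u[i][j] -= factor * u[k][j]
    return l, u


def mat_mul(x, y):
    """Plain matrix product, used here only to check [L][U] = [A]."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y))) for j in range(len(y[0]))]
            for i in range(len(x))]


A = [[2, 1, 1], [4, -6, 0], [-2, 7, 2]]
L, U = doolittle_lu(A)
print(L)              # [[1.0, 0.0, 0.0], [2.0, 1.0, 0.0], [-1.0, -1.0, 1.0]]
print(U)              # [[2.0, 1.0, 1.0], [0.0, -8.0, -2.0], [0.0, 0.0, 1.0]]
print(mat_mul(L, U))  # reproduces A (as floats)
```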

Gauss Elimination as LU Factorization

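To solve [A]{x}={b}, first decompose [A] to get [L][U]{x}={b}.

MATLAB's lu function can be used to generate the [L] and [U] matrices:

[L, U] = lu(A)

MATLAB's lu uses partial pivoting, so with two outputs [L] is a row permutation of a unit lower triangular matrix; [L, U, P] = lu(A) returns a true lower triangular [L] with [P][A] = [L][U].

Step 1: solve [L]{y}={b} for {y} using forward substitution.

Step 2: solve [U]{x}={y} for {x} using backward substitution.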

In MATLAB:

[L, U] = lu(A);   % factor [A] once
y = L\b;          % Step 1: solve [L]{y} = {b}
x = U\y;          % Step 2: solve [U]{x} = {y}

LU factorization requires the same number of floating point operations (flops) as Gauss elimination.

Advantage: once [A] is decomposed, the same [L] and [U] can be used for multiple {b} vectors.
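Steps 1 and 2 can be sketched in plain Python, reusing one factorization for two right-hand sides. The factors below are those of the illustrative matrix [A] = [[2, 1, 1], [4, -6, 0], [-2, 7, 2]], hardcoded as an assumption so the block stands alone; the function names are hypothetical. Each solve costs only the two substitutions, not a new elimination.

```python
def forward_substitution(l, b):
    """Step 1: solve [L]{y} = {b} from the top row down."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(l[i][j] * y[j] for j in range(i))) / l[i][i]
    return y


def back_substitution(u, y):
    """Step 2: solve [U]{x} = {y} from the bottom row up."""
    n = len(y)
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (y[i] - sum(u[i][j] * x[j] for j in range(i + 1, n))) / u[i][i]
    return x


# Factors of [A] = [[2, 1, 1], [4, -6, 0], [-2, 7, 2]], computed once.
L = [[1.0, 0.0, 0.0], [2.0, 1.0, 0.0], [-1.0, -1.0, 1.0]]
U = [[2.0, 1.0, 1.0], [0.0, -8.0, -2.0], [0.0, 0.0, 1.0]]

# Only the cheap substitutions are repeated for each {b}.
for b in ([5.0, -2.0, 9.0], [4.0, -2.0, 7.0]):
    y = forward_substitution(L, b)
    x = back_substitution(U, y)
    print(x)
# [1.0, 1.0, 2.0]
# [1.0, 1.0, 1.0]
```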

Cholesky Factorization

A symmetric matrix is a square matrix [A] that is equal to its transpose (T stands for transpose):

$$[A] = [A]^T$$

The Cholesky factorization is based on the fact that a symmetric positive definite matrix can be decomposed as:

$$[A] = [U]^T[U]$$

where [U] is upper triangular. The rest of the process is similar to LU decomposition and Gauss elimination, except that only one matrix, [U], needs to be stored.

Cholesky factorization with the built-in chol command:

U = chol(A)
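A plain-Python sketch of the factorization, returning an upper triangular [U] with [A] = [U]^T[U] (the same convention as U = chol(A) above). The name cholesky_upper and the example matrix are illustrative; the sketch assumes [A] is symmetric and raises an error when a diagonal term would need the square root of a non-positive number, i.e. when [A] is not positive definite.

```python
import math


def cholesky_upper(a):
    """Return upper triangular [U] with [A] = [U]^T [U].

    Assumes [A] is symmetric; raises ValueError if it is not positive definite.
    """
    n = len(a)
    u = [[0.0] * n for _ in range(n)]
    for i in range(n):
        # Diagonal: u_ii = sqrt(a_ii - sum_{k<i} u_ki^2)
        s = a[i][i] - sum(u[k][i] ** 2 for k in range(i))
        if s <= 0.0:
            raise ValueError("matrix is not positive definite")
        u[i][i] = math.sqrt(s)
        # Rest of row i: u_ij = (a_ij - sum_{k<i} u_ki * u_kj) / u_ii
        for j in range(i + 1, n):
            u[i][j] = (a[i][j] - sum(u[k][i] * u[k][j] for k in range(i))) / u[i][i]
    return u


A = [[4.0, 2.0, -2.0], [2.0, 10.0, 2.0], [-2.0, 2.0, 3.0]]
print(cholesky_upper(A))  # [[2.0, 1.0, -1.0], [0.0, 3.0, 1.0], [0.0, 0.0, 1.0]]
```

Only the upper triangle of [A] is read and only [U] is produced, which is where the storage saving mentioned above comes from.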

MATLAB's left division operator \ examines the system to see which method will most efficiently solve the problem. This includes trying banded solvers, back and forward substitutions, and Cholesky factorization for symmetric systems (which succeeds only if the matrix is also positive definite). If these do not work and the system is square, Gauss elimination with partial pivoting is used.
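A toy Python sketch of that kind of dispatch for a square matrix. This is a simplified, hypothetical decision procedure that follows only the checks listed above (banded detection is omitted). It is not MATLAB's actual algorithm, and it only names a method rather than solving the system; the function name choose_solver is illustrative.

```python
def choose_solver(a):
    """Name a solution method for square [A] (simplified, hypothetical)."""
    n = len(a)
    upper = all(a[i][j] == 0 for i in range(n) for j in range(i))
    lower = all(a[i][j] == 0 for i in range(n) for j in range(i + 1, n))
    if upper:
        return "back substitution"
    if lower:
        return "forward substitution"
    symmetric = all(a[i][j] == a[j][i] for i in range(n) for j in range(i + 1, n))
    if symmetric and all(a[i][i] > 0 for i in range(n)):
        # A positive diagonal is necessary but not sufficient for positive
        # definiteness, so a real solver would attempt Cholesky and fall back
        # to LU if the factorization breaks down.
        return "Cholesky (fall back to LU if it fails)"
    return "LU with partial pivoting"


print(choose_solver([[2, 1], [0, 3]]))   # back substitution
print(choose_solver([[4, 1], [1, 3]]))   # Cholesky (fall back to LU if it fails)
print(choose_solver([[1, 2], [3, 4]]))   # LU with partial pivoting
```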