Feature ranking - UIC - Computer Science

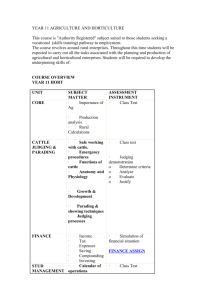

Extracting and Ranking Product Features in Opinion Documents

Lei Zhang #, Bing Liu #, Suk Hwan Lim *, Eamonn O’Brien-Strain * (# University of Illinois at Chicago, * HP Labs)

1. Introduction

An important task of opinion mining is to extract people’s opinions on the features (attributes) of an entity. For example, the sentence “I love the GPS function of Motorola Droid” expresses a positive opinion on the “GPS function” of the Motorola phone. “GPS function” is the feature.

2. Existing Techniques

2.1 Double Propagation (Qiu et al., 2010)

Double propagation is a recently proposed unsupervised technique for extracting features from reviews.

Observation: Opinion words are often used to modify features, and opinion words and features themselves also have relations in opinionated expressions.

Method: Double propagation assumes that features are nouns or noun phrases and that opinion words are adjectives. Opinion words can be recognized through identified features, and features can be identified through known opinion words. The extracted opinion words and features are used to identify new opinion words and new features, which are used again to extract more opinion words and features. This propagation (bootstrapping) process ends when no more opinion words or features can be found. The relations between opinion words and features are identified with a dependency parser based on dependency grammar.

3.2 Feature Ranking

The basic idea is to rank the extracted feature candidates by feature importance. A feature candidate that is correct and important should be ranked high. An unimportant feature or noise should be ranked low.

We identify two major factors affecting feature importance.

Feature relevance: how likely a feature candidate is to be a correct feature.

Feature frequency: a feature is important if it appears frequently in opinion documents.

We find that there is a mutual enforcement relation between the feature indicators (opinion words, part-whole relation patterns and the “no” pattern) and features.
If an adjective modifies many correct features, it is very likely to be a good opinion word. Similarly, if a feature candidate can be extracted by many opinion words, part-whole patterns, or the “no” pattern, it is also very likely to be a correct feature. The Web page ranking algorithm HITS is therefore applicable.

Figure: Bipartite graph and HITS algorithm

2.2 Dependency Grammar

Dependency grammar describes the dependency relations between words in a sentence.

Direct relations: one word depends on the other word directly, or both words depend on a third word directly (e.g. “The camera has a good lens”).

Indirect relations: one word depends on the other word through other words, or both words depend on a third word indirectly.

Figure: Dependency relations

Parsing indirect relations is error-prone for Web corpora. Thus we use only direct relations to extract opinion words and feature candidates in our application.

2.3 Weakness

For large corpora, double propagation may introduce a lot of noise (error propagation). For small corpora, it may miss some important features (features that are not modified by any opinion word).

3. Proposed Techniques

To deal with these problems of double propagation, we propose a novel method to mine features, which consists of two steps: feature extraction and feature ranking.

Figure: Feature extraction and ranking. Labels: corpora, opinion lexicon, class concept word. Algorithms: feature extraction (double propagation, part-whole relation, “no” pattern) and feature ranking (HITS algorithm). Ranked features: video, picture quality, GPS function, ...

3.3 The Whole Algorithm

Step 1: Extract product features using double propagation, part-whole patterns and the “no” pattern.

Step 2: Compute the feature score using HITS, without considering frequency.

Step 3: Compute the final score, which takes the feature frequency into account:

S = S(f) * log(freq(f))

Here freq(f) is the frequency count of feature f, and S(f) is the authority score of feature f.
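Steps 2 and 3 can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: it assumes the bipartite graph is given as (indicator, feature) pairs, runs a fixed number of HITS iterations with L2 normalization, and uses the natural logarithm. The names `hits_authority`, `rank_features` and the toy data are hypothetical.

```python
import math

def _normalize(scores):
    # L2-normalize a score dictionary (guarding against an all-zero vector).
    norm = math.sqrt(sum(v * v for v in scores.values())) or 1.0
    return {k: v / norm for k, v in scores.items()}

def hits_authority(edges, iterations=50):
    """Authority score per feature on a bipartite indicator -> feature graph.

    edges: iterable of (indicator, feature) pairs, where an indicator is an
    opinion word, a part-whole pattern or the "no" pattern (assumed encoding).
    """
    edges = list(edges)
    indicators = {i for i, _ in edges}
    features = {f for _, f in edges}
    hub = dict.fromkeys(indicators, 1.0)
    auth = dict.fromkeys(features, 1.0)
    for _ in range(iterations):
        # Authority of a feature: sum of hub scores of indicators extracting it.
        new_auth = dict.fromkeys(features, 0.0)
        for i, f in edges:
            new_auth[f] += hub[i]
        auth = _normalize(new_auth)
        # Hub of an indicator: sum of authority scores of features it extracts.
        new_hub = dict.fromkeys(indicators, 0.0)
        for i, f in edges:
            new_hub[i] += auth[f]
        hub = _normalize(new_hub)
    return auth

def rank_features(edges, freq):
    """Rank features by S(f) * log(freq(f)); freq values must be >= 1."""
    auth = hits_authority(edges)
    scored = [(f, auth[f] * math.log(freq[f])) for f in auth]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Toy data (made up for illustration only).
edges = [("good", "screen"), ("great", "screen"), ("no", "screen"),
         ("good", "battery"), ("great", "thing")]
freq = {"screen": 10, "battery": 5, "thing": 1}
ranked = rank_features(edges, freq)
```

Note that with this score function a candidate that occurs only once gets a final score of 0, because log(1) = 0, regardless of its authority score.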
3.1 Feature Extraction

We still adopt the double propagation idea to populate feature candidates, but we make two improvements, based on part-whole relation patterns and a “no” pattern, to find features that double propagation cannot find. So there are three kinds of feature indicators:

Double propagation

Part-whole relation pattern: A part-whole pattern indicates that one object is part of another object. It is a good indicator for features if the class concept word (the “whole” part) is known. In the patterns below, NP is a noun phrase and CP is the class concept word.

(1) Phrase patterns

NP + Prep + CP (e.g. battery of the camera)

CP + with + NP (e.g. mattress with a cover)

NP CP or CP NP (e.g. mattress pad)

(2) Sentence pattern

CP verb NP (e.g. the phone has a big screen)

“no” pattern: a specific pattern for product reviews and forum posts. People often express their comments or opinions on features with this short pattern (e.g. no noise).

4. Experiments

We used 4 diverse corpora to evaluate the techniques. They were obtained from a commercial company. The data were crawled and extracted from multiple online message boards and blogs discussing different products and services.

Table 1. Descriptions of the 4 corpora

Table 2. Results of 1000 sentences

Table 3. Precision at top 50 feature candidates

5. Conclusions

We propose a new method to deal with the problems of double propagation. Part-whole and “no” patterns are used to increase recall. The extracted feature candidates are then ranked by feature importance, which is determined by feature relevance and frequency. HITS is applied to compute feature relevance.
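To make the part-whole phrase patterns and the “no” pattern of Section 3.1 concrete, here is a rough Python sketch. It is a simplification under stated assumptions: it matches single lowercase words with regular expressions instead of parsed noun phrases, handles only the preposition “of” for the NP + Prep + CP pattern, and will produce false positives (for instance on “no one”). The function name `extract_candidates` is hypothetical.

```python
import re

def extract_candidates(sentence, class_word):
    """Return feature candidates matched by simple surface patterns.

    class_word is the known class concept word (the "whole"), e.g. "camera".
    Single words stand in for noun phrases in this sketch.
    """
    text = sentence.lower()
    cw = re.escape(class_word.lower())
    found = []
    # NP + Prep + CP, restricted to "of": "battery of the camera" -> battery
    found += re.findall(rf"\b([a-z]+) of (?:the |my |this )?{cw}\b", text)
    # CP + with + NP: "mattress with a cover" -> cover
    found += re.findall(rf"\b{cw} with (?:a |an |the )?([a-z]+)\b", text)
    # "no" pattern: "no noise" -> noise
    found += re.findall(r"\bno ([a-z]+)\b", text)
    return found

# Example sentences built from the phrases used in Section 3.1.
camera_features = extract_candidates(
    "The battery of the camera is great, no noise", "camera")
mattress_features = extract_candidates(
    "I bought a mattress with a cover", "mattress")
```

A real pipeline would run these patterns over POS-tagged or dependency-parsed text so that multi-word noun phrases such as “picture quality” are captured as a unit.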