
ADDRESSING QUANTITATIVE REASONING AND ANALYTICAL WRITING SKILLS IMPROVEMENT USING GLOBAL AND LOCAL DATA SETS IN AN INTRODUCTORY GLOBAL CLIMATE CHANGE COURSE

NIEMITZ, JEFFREY W., Department of Geology, Dickinson College, P.O. Box 1773, Carlisle, PA 17013, niemitz@dickinson.edu

ABSTRACT # 63108

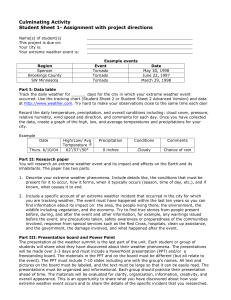

Many undergraduate students cannot adequately interpret large, complex datasets even when presented in graphical form. The need to improve our students’ quantitative reasoning and analytical writing skills has led to the development of a series of integrated exercises in our introductory global climate change course. Global climate datasets are excellent resources for helping students improve their quantitative reasoning skills and their understanding of temporally and spatially interactive global processes. To provide formative assessment of student progress in both of these critical skills, labs start with simple data extraction from newspapers and hand graphing and culminate in analyses of large, complex databases using Excel, with computer graphing skills and basic statistics integrated into short written assignments. In advance of the first exercise, students gather a week’s worth of data from their hometown newspapers. Then the students find their state climatologist’s website and download the same data from the year before. They graph these data for both time periods, compare them, and turn their data and reasoned interpretations into a two-page paper.

The following week a few students’ examples are highlighted to show the range of weather and climate change. By analyzing student results anonymously, all learn the kinds of misinterpretations that can result and the depth of analysis that can be done even with a small dataset. Dataset size and complexity increase in subsequent labs, which use climate phenomena such as ENSO, monsoon intensity, and drought to explore the relationships between global climate change and local manifestations of those changes over time. Datasets come from websites including NCDC climate, USGS stream gauge, and LTRR tree ring records. Besides learning the basic functions of Excel, students carry out data analyses that include regression and basic spectral analysis. Improved quantitative and written skills do translate to other courses and, hopefully, to the quantitative literacy all citizens need in the 21st century.

INTRODUCTION

Over the last two decades the sciences at Dickinson College have reformed their curricula from a traditionally separated lecture and laboratory to an integrated active learning experience in which students learn fundamental scientific principles and concepts by inductive reasoning. In Geology, we use topics of broad geologic interest (e.g., History of Life, Plate Tectonics, Oceanography) as a context for giving students practice in honing basic life skills, specifically writing and quantitative reasoning. Topical courses allow significant depth in the content and thus lend themselves to using large datasets as vehicles for teaching fundamental principles and quantitative reasoning. The following discussion uses our introductory Global Climate Change course as an example. While the course is traditional in its content (meteorology, climatology, paleoclimatology), the difference between weather and climate, the interactions between regional climate phenomena via teleconnections, and the evidence for and substantiation of long-term climate change can be inferred using large datasets available for the most part on the World Wide Web. We have found that the introduction and statistical manipulation of large datasets needs to be progressive in nature. Starting with simple exercises using EXCEL as a tool gives the students confidence when more complex datasets and statistical analyses are introduced. In addition, we found that initially most students were unfamiliar with the basic functions of EXCEL. As time goes on we see less and less need for remedial spreadsheet instruction and can “raise the bar” with regard to the complexity of the datasets and exercise objectives. Moreover, as we track those who take introductory classes and continue on to other electives or courses in the major, we are finding that the students readily transfer the EXCEL and data analysis skills to other classes. Here we present three exercises that require the acquisition and analysis of different datasets and increase in complexity over time. Each exercise requires a certain amount of dataset extraction, manipulation, and analysis as substantiation of inferences made in a 2-3 page fully formatted analytical paper.

EXERCISE I: UNDERSTANDING WEATHER AND CLIMATE

ASSIGNMENT:

Collect one week of local weather data (predicted and actual temperature and precipitation) from your hometown newspaper.

The data collected are typical for most newspapers, i.e., the max., min., and average temperatures for the day; the normal max., min., and average temperatures for that day; the extreme temperatures for the day; and the precipitation and record rainfall for the day. Students are informed that they will need to have this done in time for the first class of the semester.

During the first class we talk about the difference between weather and climate. Students are then asked to find the climate data for the same week from any other year in the climate record for their hometown or a nearby city. This requires them to search the Web for historical climate data for their town.

OBJECTIVES:

1) To start collecting and analyzing weather data; 2) to begin searching the Web for the required climate data; 3) to learn to graphically present all data subsets using EXCEL; 4) to begin to understand basic statistics such as maximum, minimum, and averages, and the concept of standard deviation; 5) to appreciate the difficulty of predicting weather even 24 hours in advance; and 6) to understand the difference between weather and climate in terms of time and meteorological variability.

EXAMPLE:

WEATHER DATA FOR HARRISBURG, PA (JANUARY 15-22, 2000)

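| Day | Max Temp | Min Temp | Mean | Norm mean | Last yr hi | Last yr lo | Record hi | Record lo | Pred hi | Pred lo |
|---|---|---|---|---|---|---|---|---|---|---|
| 15-Jan | 33 | 18 | 26 | 28 | 25 | 13 | 67 | -3 | | |
| 16-Jan | 54 | 27 | 41 | 28 | 32 | 17 | 62 | -4 | 42 | 24 |
| 17-Jan | 24 | 12 | 18 | 28 | 40 | 15 | 65 | -6 | 26 | 13 |
| 18-Jan | 19 | 7 | 13 | 28 | 52 | 25 | 66 | -6 | 25 | 18 |
| 19-Jan | 39 | 17 | 28 | 28 | 44 | 32 | 66 | 14 | 32 | 22 |
| 20-Jan | 31 | 26 | 29 | 28 | 47 | 31 | 68 | -16 | 32 | 16 |
| 21-Jan | 19 | 12 | 16 | 28 | 42 | 29 | 64 | -22 | 25 | 0 |
| 22-Jan | 23 | 7 | 15 | 28 | 39 | 27 | 64 | -9 | 26 | 14 |

| Day | PPT | Ppt to date (MDTppt) | Norm ppt |
|---|---|---|---|
| 15-Jan | 0 | 0.97 | 1.26 |
| 16-Jan | 0 | 0.97 | 1.35 |
| 17-Jan | 0 | 0.97 | 1.44 |
| 18-Jan | 0 | 0.97 | 1.53 |
| 19-Jan | 0 | 0.97 | 1.62 |
| 20-Jan | 0.01 | 0.98 | 1.71 |
| 21-Jan | 0.18 | 1.16 | 1.80 |
| 22-Jan | 0 | 1.16 | 1.89 |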
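The basic statistics named in the objectives (maximum, minimum, average, standard deviation) are computed in EXCEL in the course itself. As a rough equivalent outside the spreadsheet, the following minimal Python sketch summarizes the daily maximum temperatures from the Harrisburg example. The function name `summarize` is an illustrative assumption, and the sample form of the standard deviation is assumed.

```python
import statistics

# Daily maximum temperatures for Harrisburg, PA, 15-22 Jan 2000,
# as read from the example table for this exercise.
max_temps = [33, 54, 24, 19, 39, 31, 19, 23]


def summarize(values):
    """Return the basic statistics named in the exercise objectives."""
    return {
        "max": max(values),
        "min": min(values),
        "mean": statistics.mean(values),
        # Sample standard deviation (n - 1 in the denominator); assumed here.
        "stdev": statistics.stdev(values),
    }


summary = summarize(max_temps)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

The same function can be applied to the minimum or mean temperature columns to compare their variability over the week.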

[Plot A: Max-Min-Mean Temperatures, 15-Jan to 22-Jan. Series: Max Temp, Min Temp, Daily, Norm.]

[Plot B: Means-Extreme Temperature, 15-Jan to 22-Jan. Series: Daily, Norm, Record hi, Record lo, with the year of each record labeled.]

Plot A shows a typical data set for one week in January. Students would recognize that the max., min., average, and range of temperatures vary even over a short time period.

Plot B shows the standard deviation of temperatures for one week, including the extreme range. Note that several of the record lows and highs were set in the same year.

[Plot C: Max-Min-Predicted Temperatures, 15-Jan to 22-Jan. Series: Max Temp, Min Temp, pred hi, pred lo.]

Plot C shows the differences between predicted and actual high and low temperatures. Students note the difficulty of even short-term forecasts.
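One way to put a number on the forecast difficulty that Plot C illustrates is the mean absolute error between predicted and actual highs. The sketch below is not part of the original exercise. It assumes that the predicted values in the example table align with 16-22 January (no prediction is listed for 15 January), and the function names are illustrative.

```python
# Actual and predicted daily highs for Harrisburg, PA, 16-22 Jan 2000,
# as read from the example table (alignment of columns is an assumption).
actual_hi = [54, 24, 19, 39, 31, 19, 23]
pred_hi = [42, 26, 25, 32, 32, 25, 26]


def forecast_errors(predicted, actual):
    """Signed error for each day: predicted minus actual."""
    return [p - a for p, a in zip(predicted, actual)]


def mean_absolute_error(predicted, actual):
    """Average size of the forecast miss, ignoring its sign."""
    errors = forecast_errors(predicted, actual)
    return sum(abs(e) for e in errors) / len(errors)


errors = forecast_errors(pred_hi, actual_hi)
mae = mean_absolute_error(pred_hi, actual_hi)
print("errors:", errors)
print(f"mean absolute error: {mae:.1f}")
```

The signed errors show whether the forecast ran warm or cold on a given day, while the mean absolute error gives a single figure for the week.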

[Plot D: Precipitation, 15-Jan to 22-Jan. Series: Ppt to date and Norm ppt (left scale), PPT (right scale).]

Plot D (precipitation) shows that in the long-term record it has rained every day, and that in the year-to-date record Harrisburg was behind and in fact in a prolonged drought.

[Plot E: Mean Temperatures, 15-Jan to 22-Jan. Series: Daily, Norm, Last yr.]

Plot E shows the students the variability of daily mean temperatures from one year to the next compared to the long-term mean.

[Plots F, G, H: Harrisburg Max T, Min T, and Avg. T, Dec. 1999, 2000. Series: 2000, 1999, norm. x-axis: Dates in December.]

When comparing the months of December for 1999 and 2000, the maximum (F), minimum (G), and average (H) temperatures for 1999 are significantly warmer than for December 2000. December 1999 was one of the warmest on record for Harrisburg while December 20

EXERCISE II: ANALYSIS OF EFFECTS OF LATITUDE, ALTITUDE, AND CONTINENTALITY USING HISTORICAL TEMPERATURE AND PRECIPITATION RECORDS

ASSIGNMENT:

Using the National Climatic Data Center’s Global Historical Climatology Network, find historical temperature and precipitation data for two cities, one in North America and one on any continent but North America.

Your two cities should be at least 40° of latitude apart. One city should be on a coastline, the other at least 500 km from the coast.

OBJECTIVES:

1) to determine if regional climate phenomena have global control of weather historically 2) to use EXCEL to do regression an

OBJECTIVES:

1) A geography lesson in finding appropriate cities; 2) Finding good historical records in a dataset with 7000+ stations with records back to 1800; 3) manipulating, graphing, and analyzing a large dataset in EXCEL; 4) synthesizing the data to determine the

EXAMPLES: SNOHOMISH, WA and TOMBSTONE, AZ

EXAMPLE:

Students are given a list of weather stations for which there are associated USGS gauging stations in the states of Arizona, California, Oregon, and Washington. Road maps of these states help the students to find the location of the locale they choose. The students already know how to get to the NCDC site; now they must find a site with good ENSO data and explore the USGS Water Resources site to ascertain historical stream flow data. Frequently the range of dates for each station

DATA SHEET

City #1: PORT TOWNSEND, WASHINGTON, USA

Longitude: 122.75° W

Latitude: 48.1° N

Elevation (meters): 0 m

Distance from coast (km): 0 km

Years of record: Temp: 86; PPT: 86

Other geographically interesting facts: In rain shadow of Olympic Mts.; on the Straits of Juan de Fuca

City #2: BOULIA, AUSTRALIA

Longitude: 139.9° E

Latitude: 22.9° S

Elevation (meters): 146 m

Distance from coast (km): 780 km

Years of record: Temp: 86; PPT: 86

Other geographically interesting facts: Middle of Great Australian Desert
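The geographic constraints of the assignment (at least 40° of latitude apart, one city on a coastline, the other at least 500 km inland) can be checked mechanically against a completed data sheet. The following is a minimal sketch using the two example cities. The `City` class and `meets_assignment` function are hypothetical names, "on a coastline" is assumed to mean a coast distance of 0 km, and the continent requirement is not checked.

```python
from dataclasses import dataclass


@dataclass
class City:
    name: str
    latitude: float           # degrees; north positive, south negative
    coast_distance_km: float  # distance from the nearest coast


def latitude_separation(a: City, b: City) -> float:
    """Absolute difference in latitude, in degrees."""
    return abs(a.latitude - b.latitude)


def meets_assignment(a: City, b: City) -> bool:
    """Check the latitude and continentality rules of the assignment."""
    far_enough_apart = latitude_separation(a, b) >= 40
    one_coastal = min(a.coast_distance_km, b.coast_distance_km) == 0
    one_inland = max(a.coast_distance_km, b.coast_distance_km) >= 500
    return far_enough_apart and one_coastal and one_inland


# Values taken from the example data sheet.
port_townsend = City("Port Townsend, Washington, USA", 48.1, 0)
boulia = City("Boulia, Australia", -22.9, 780)

print(f"{latitude_separation(port_townsend, boulia):.1f} degrees apart")
print("meets assignment:", meets_assignment(port_townsend, boulia))
```

Treating southern latitudes as negative numbers is what makes the separation of a northern and a southern city come out as the sum of the two latitudes.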

Global Historical Climatology Network (Version 1 precipitation data: rainfall data through 1990; Version 2 temperature data: regularly updated max/min temperature data*) http://www.ncdc.noaa.gov/oa/climate/ghcn/ghcn.SELECT.html

Southern Oscillation Index (SOI) http://www.cgd.ucar.edu/cas/catalog/climind/soi.html

Water Resources of the United States http://water.usgs.gov./

Plots above come from the CLIMVIS website. Students would work in EXCEL to average the max and min temperatures and with precipitation graph the monthly values for the period of record. This would be discussed in the paper in terms of relative temperature range and amount of precipitation at each site. Extreme and average years for Temp and Ppt for each city would also be compared (see plots A and B below) graphically.

[Plot A: Average and Extreme Temp. Years, JAN to DEC. Series: 1944 Pt Town Temp, 1950 Pt Town Temp, 1915 Boul Temp, 1961 Boul Temp. Legend notes: EXTREME YR, AVERAGE YR.]

[Plot B: Average and Extreme Precipitation, JAN to DEC. Series: 1874 Pt Town Ppt, 1942 Pt Town Ppt, 1914 Boul Ppt, 1974 Boul Ppt. Legend notes: RIGHT SCALE, EXTREME YRS, AVERAGE YRS.]

[Plot A: Tombstone Q vs PPT (Rain-Discharge Relationship). x-axis: PRECIPITATION (in.).]

[Plot B: TOMBSTONE Q VS SOI, 1937-1982. Series: SOI, Q Tombstone. x-axis: YEARS.]

[Plot C: TOMBSTONE TEMP VS SOI, 1937-1982. Series: Temp Tombstone, SOI. x-axis: YEARS.]

[Plot D: Snohomish Q vs. PPT (Snow Melt-Discharge Relationship, Rain-Discharge Relationship). x-axis: PRECIPITATION (in.).]

[Plot E: SNOHOMISH Q VS SOI, 1937-1982. Series: Q Snohomish, SOI, 12 per. Mov. Avg. (SOI). x-axis: YEARS.]

Two examples of the association of SOI with precipitation, temperature, and stream discharge show a relatively weak correlation for Tombstone, AZ and a relatively strong correlation for Snohomish, WA. Plot A shows good correlation of rainfall with discharge because rains tend to be monsoonal with significant runoff potential. However, in Plot B discharge and SOI do not show consistent correlation. Monsoonal rains are correlated to El Nino and La Nina events. Plot C shows small temperature range variations. Years with abnormally low high and low temperatures show inconsistent correlation with La Nina events (note circled years). Rain-discharge relations in Snohomish (Plot D) are attributable to runoff and snowmelt depending on time of year. Both discharge and rainfall versus SOI show strong correlations, with abnormally high rain and discharge being correlated with La Nina events and low rain and discharge correlated with El Nino events. 12-point moving averages help in delineating trends.

[Plot F: SNOHOMISH PPT VS SOI, 1937-1982. Series: SOI, Ppt Snohomish, 12 per. Mov. Avg. (SOI), 12 per. Mov. Avg. (Ppt Snohomish). x-axis: YEARS.]
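The 12-point moving averages and the correlations discussed for these plots are produced in EXCEL in the course. For readers working outside a spreadsheet, the following is a minimal Python sketch of both calculations. It assumes a trailing moving average and the Pearson correlation coefficient; the function names are illustrative, and the short series at the end are hypothetical placeholder values, not data from the exercise.

```python
import math


def moving_average(values, window=12):
    """Trailing moving average; returns len(values) - window + 1 points."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i - window + 1:i + 1]) / window
        for i in range(window - 1, len(values))
    ]


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical placeholder series for illustration only.
index_values = [-1.2, 0.4, 1.1, -0.3, 0.9, -1.5, 0.2, 1.3]
discharge = [820, 1100, 1350, 990, 1280, 760, 1050, 1400]

smoothed = moving_average(index_values, window=4)
print("smoothed index:", [round(v, 2) for v in smoothed])
print(f"r = {pearson_r(index_values, discharge):.2f}")
```

Smoothing both series with the same window before comparing them keeps the two curves aligned in time, which is what makes trends easier to see in plots of this kind.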

DISCUSSION AND CONCLUSIONS:

At Dickinson College we have used large datasets obtained from the World Wide Web for a variety of disciplines including climate change, environmental geology, oceanography, geochemistry, geomorphology, and others. Large data sets can also be generated from long-term studies of various geologic processes, for example, stream chemistry and changes in meanders of small streams. I have also used tree ring and ice core databases to do quantitative reasoning in my climate course.

With each analysis a paper is required, which asks the students to formulate the problem, describe the methodologies used to obtain and synthesize the data, and discuss the data analysis. In this way the students receive practice in analytical writing. By the third or fourth exercise the students have become quite good at manipulating data in EXCEL and better at synthesizing data. They learn, as we see in the SOI vs. climate of the Western US exercise, that positive results will not always be achieved and that frequently the data are not easy to analyze. However, there is always an explanation for the data, which may require a change in one’s hypothesis.

One other benefit we have found is that the statistical analysis skills have translated to other courses, minimizing the time spent reteaching basic EXCEL techniques.

There are some pitfalls to these kinds of exercises:

• When teaching EXCEL as a statistical analysis tool, instructions must be very clear and detailed; otherwise the students will be lost before encountering the data from which we want them to learn about process. A primer in EXCEL will help those who are totally unfamiliar with EXCEL and be a good refresher for those who are familiar with it.

• Add new statistical techniques gradually and re-use the familiar techniques. Some redundancy is good.

• Increase the sophistication of the databases from week to week to challenge students.

• Make sure the databases used do not contain search complexities that would confuse introductory students. If they do and are still useful, the instructor may need to tailor the database searching, as was done in Exercise III. This requires a lot of pre-lab trial-and-error experimentation on the part of the instructor.

• The expected outcomes of exercises can be obtained more rapidly if part of the database search is done for the students ahead of time. This is particularly useful if you are using data from a dataset searched in previous labs and re-searching would be unnecessarily time consuming.