Kappa-Mathalon2005

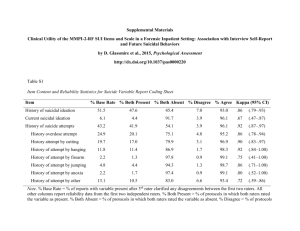

Inter-rater Reliability of Clinical Ratings: A Brief Primer on Kappa

Daniel H. Mathalon, Ph.D., M.D.
Department of Psychiatry
Yale University School of Medicine

Inter-rater Reliability of Clinical Interview Based Measures

• Ratings of clinical severity for specific symptom domains (e.g., PANSS, BPRS, SAPS, SANS)
  – Continuous scales
  – Use intraclass correlations to assess inter-rater reliability.
• Diagnostic Assessment
  – Categorical Data / Nominal Scale Data
  – How do we quantify reliability between diagnosticians?
  – Percent Agreement, Chi-Square, Kappa

Two raters classify n cases into k mutually exclusive categories.

| Rater 1 ↓ / Rater 2 → | 1 | 2 | … | j | … | k | $\sum_j n_{ij}$ |
|---|---|---|---|---|---|---|---|
| 1 | $n_{11}$ | $n_{12}$ | … | | | | $n_{1.}$ |
| 2 | $n_{21}$ | $n_{22}$ | … | | | | $n_{2.}$ |
| … | | | | | | | |
| i | | | | $n_{ij}$ | | | $n_{i.}$ |
| … | | | | | | | |
| k | | | | | | | |
| $\sum_i n_{ij}$ | $n_{.1}$ | $n_{.2}$ | … | $n_{.j}$ | | | $n_{..}$ |

$n_{ij}$ = number of cases falling into cell ij = frequency of joint event ij
$n_{ii}$ = number of cases in diagonal cell i, where both raters chose category i
$n_{..}$ = total number of cases
$p_{ij} = n_{ij} / n_{..}$ = proportion of cases falling into a particular cell.

Reliability by Percentage Agreement:

$$\sum_i p_{ii} = \frac{1}{n} \sum_i n_{ii}, \qquad n = n_{..}$$

Percent Agreement Fails to Consider Agreement by Chance

| Rater 1 ↓ / Rater 2 → | Schiz | Other | Total |
|---|---|---|---|
| Schiz | .81 | .09 | .90 |
| Other | .09 | .01 | .10 |
| Total | .90 | .10 | 1.0 |

.90 × .90 = .81
.10 × .10 = .01
Proportion Agreement = .81 + .01 = .82

• Assume that two raters whose judgments are completely independent (i.e., not influenced by the true diagnostic status of the patient) each diagnose 90% of cases as having schizophrenia and 10% of cases as not having schizophrenia (i.e., Other).
• Expected agreement by chance for each category is obtained by multiplying the marginal probabilities together.
• Can get Percentage Agreement of 82% strictly by chance.

Chi-Square Test of Association as Proposed Solution

• Can perform a Chi-Square Test of Association to test the null hypothesis that the two raters' judgments are independent.
• To reject independence, show that observed agreement departs from what would be expected by chance alone.

$$\chi^2 = \sum_{\text{cells}} \frac{(\text{Observed} - \text{Expected})^2}{\text{Expected}}$$

• Problem: In the example below, we have a perfect association between the raters with zero agreement.
Chi-Square is a test of Association, not Agreement. It is sensitive to any departure from chance agreement, even when the dependency between the raters' judgments involves perfect non-agreement.
• So, we cannot use the Chi-Square Test to assess agreement between raters.

| Rater 1 ↓ / Rater 2 → | Sz | BP | Other | Total |
|---|---|---|---|---|
| Sz | 0 | 5 | 0 | 5 |
| BP | 0 | 0 | 5 | 5 |
| Other | 5 | 0 | 0 | 5 |
| Total | 5 | 5 | 5 | n = 15 |

Kappa Coefficient (Cohen, 1960)

• High reliability requires that the frequencies along the diagonal be greater than chance and the off-diagonal frequencies be less than chance.
• Use marginal frequencies/probabilities to estimate chance agreement.

Proportion agreement observed:

$$p_o = \sum_i p_{ii} = \frac{1}{n} \sum_i n_{ii}$$

Proportion agreement expected by chance:

$$p_c = \sum_i p_{i.} \times p_{.i}$$

$$K = \frac{p_o - p_c}{1 - p_c}$$

Diagonal cells list, in order: observed count, observed proportion, (chance-expected count), chance-expected proportion.

| Rater 1 ↓ / Rater 2 → | Sz | BP | Other | $n_{i.}$ | $p_{i.}$ |
|---|---|---|---|---|---|
| Sz | 106, .53, (78), .39 | 10 | 4 | 120 | .6 |
| BP | 22 | 28, .14, (15), .075 | 10 | 60 | .3 |
| Other | 2 | 12 | 6, .03, (2), .01 | 20 | .1 |
| $n_{.j}$ | 130 | 50 | 20 | 200 | |
| $p_{.j}$ | .65 | .25 | .1 | | 1 |
| $p_{i.} \times p_{.i}$ | .39 | .075 | .01 | | |

$$p_o = .53 + .14 + .03 = .70$$

$$p_c = .39 + .075 + .01 = .475$$

$$K = \frac{.70 - .475}{1 - .475} = .429$$

K = 1, perfect agreement
K = 0, chance agreement
K < 0, agreement worse than chance.

• Interpretations of Kappa

K = P(agreement | no agreement by chance)

$1 - p_c = 1 - .475 = .525$ of cases are those where there is no agreement by chance.
$p_o - p_c = .70 - .475 = .225$ of cases are those non-chance agreement cases where observers agreed.

Kappa is the probability that judges will agree given no agreement by chance.

Can test $H_0$ that Kappa = 0. Kappa is normally distributed with large samples, so its significance can be tested using the normal distribution. Can construct confidence intervals for Kappa.

Weighted Kappa Coefficient

Can assign weights, $w_{ij}$, to classification errors according to their seriousness using ratio scale weights.

$$K_w = \frac{p_{o(w)} - p_{c(w)}}{1 - p_{c(w)}}$$

Each cell lists, in order: count $n_{ij}$ / observed proportion $p_{ij}$ / chance-expected proportion $p_{i.} \times p_{.j}$ / weight $w_{ij}$.

| Rater 1 ↓ / Rater 2 → | Schizophrenia | Other Psychosis | Personality Disorder | $n_{i.}$ | $p_{i.}$ |
|---|---|---|---|---|---|
| Schizophrenia | 106 / .53 / .39 / 0 | 10 / .05 / .15 / 1 | 4 / .02 / .06 / 6 | 120 | .6 |
| Other Psychosis | 22 / .11 / .195 / 1 | 28 / .14 / .075 / 0 | 10 / .05 / .03 / 3 | 60 | .3 |
| Personality Disorder | 2 / .01 / .065 / 6 | 12 / .06 / .025 / 3 | 6 / .03 / .01 / 0 | 20 | .1 |
| $n_{.j}$ | 130 | 50 | 20 | 200 | |
| $p_{.j}$ | .65 | .25 | .1 | | 1.0 |

Kappa Rules of Thumb

• K ≥ .75 is considered excellent agreement.
• K ≤ .46 is considered poor agreement.

Weighted Kappa and the ICC

• Weighted Kappa is an intraclass correlation coefficient (except for a factor of 1/n) when the weights have the following property:

$$w_{ij} = 1 - \frac{(i - j)^2}{(k - 1)^2}$$

Problems with Kappa

• Affected by base rates of diagnoses.
  – Can't easily compare across studies that have different base rates, either in the population or in the reliability study.
• Chance agreement is a problem?
  – When the null hypothesis of rater independence is not met (which is most of the time), the estimate of chance agreement is inaccurate and possibly inappropriate.
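
The worked examples above can be checked with a few lines of code. What follows is a minimal Python sketch, not part of the original slides. The function names `cohen_kappa` and `chi_square` are hypothetical. The slides do not define $p_{o(w)}$ and $p_{c(w)}$, so the sketch assumes they are weighted sums over all cells using agreement weights (1 on the diagonal). Under that assumption, the seriousness weights in the table (0 on the diagonal, larger for worse errors) are first rescaled as 1 − w / max(w).

```python
def cohen_kappa(counts, weights=None):
    """Return (po, pc, K) for a square table (rows: Rater 1, columns: Rater 2).

    `weights` are assumed to be agreement weights in [0, 1] with 1 on the
    diagonal; the default (identity weights) gives unweighted kappa.
    Assumes chance agreement pc is strictly less than 1.
    """
    k = len(counts)
    n = sum(sum(row) for row in counts)
    row_p = [sum(row) / n for row in counts]                        # p_i.
    col_p = [sum(row[j] for row in counts) / n for j in range(k)]   # p_.j
    if weights is None:
        weights = [[1.0 if i == j else 0.0 for j in range(k)] for i in range(k)]
    cells = [(i, j) for i in range(k) for j in range(k)]
    po = sum(weights[i][j] * counts[i][j] / n for i, j in cells)
    pc = sum(weights[i][j] * row_p[i] * col_p[j] for i, j in cells)
    return po, pc, (po - pc) / (1 - pc)


def chi_square(counts):
    """Pearson chi-square statistic; assumes every marginal total is nonzero."""
    k = len(counts)
    n = sum(sum(row) for row in counts)
    row_t = [sum(row) for row in counts]
    col_t = [sum(row[j] for row in counts) for j in range(k)]
    total = 0.0
    for i in range(k):
        for j in range(k):
            expected = row_t[i] * col_t[j] / n
            total += (counts[i][j] - expected) ** 2 / expected
    return total


# Diagnostic table from the kappa example (Sz, BP/Other Psychosis, Other/PD).
diagnoses = [[106, 10, 4], [22, 28, 10], [2, 12, 6]]
po, pc, kappa = cohen_kappa(diagnoses)
print(round(po, 3), round(pc, 3), round(kappa, 3))  # 0.7 0.475 0.429

# Seriousness weights from the weighted-kappa table, rescaled to agreement
# weights (an assumption of this sketch, see the paragraph above).
seriousness = [[0, 1, 6], [1, 0, 3], [6, 3, 0]]
w_max = max(max(row) for row in seriousness)
agreement_w = [[1 - w / w_max for w in row] for row in seriousness]
po_w, pc_w, kappa_w = cohen_kappa(diagnoses, agreement_w)
print(round(kappa_w, 3))  # 0.468

# Perfect association with zero agreement (the n = 15 chi-square example).
disagree = [[0, 5, 0], [0, 0, 5], [5, 0, 0]]
chi2 = chi_square(disagree)
_, _, kappa_dis = cohen_kappa(disagree)
print(round(chi2, 3), round(kappa_dis, 3))  # 30.0 -0.5
```

On the n = 15 table the chi-square statistic is large (30 on 4 degrees of freedom) while kappa is negative, which illustrates why a test of association cannot stand in for a measure of agreement.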