CA660_DA_L5_2013_2014


DATA ANALYSIS

Module Code: CA660

Lecture Block 5

ESTIMATION /H.T.

Rationale, Other Features & Alternatives

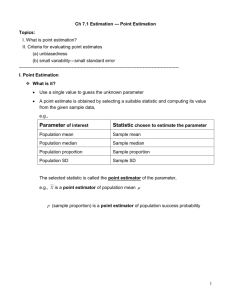

• Estimator validity – how good?

• Based on statistical properties (variance, bias, distributional etc.)

• Bias = $E(\hat\theta) - \theta$, where $\hat\theta$ is the point estimate and $\theta$ the true parameter. Bias can be positive, negative or zero.

It permits calculation of other properties, e.g. $\mathrm{MSE} = E(\hat\theta - \theta)^2 = \mathrm{Var}(\hat\theta) + \mathrm{Bias}^2$, so this quantity and the variance of the estimator are the same if the estimator is unbiased.

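Bias can be obtained both analytically and by "bootstrap methods". Analytically, for a discrete variable,

$$\text{Bias} = \sum_j \hat\theta_j\, f(x_j) - \theta$$

Similarly, for continuous variables,

$$\text{Bias} = \int \hat\theta\, f(x)\,dx - \theta$$

or, for $b$ bootstrap replications,

$$\text{Bias}_B = \frac{1}{b}\sum_{i=1}^{b}\hat\theta_i - \hat\theta$$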
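A minimal sketch of the bootstrap bias estimate $\text{Bias}_B$ above, assuming Python 3.8+ (for `statistics.fmean`). The helper names `plug_in_variance` and `bootstrap_bias` are illustrative, not taken from the module. The divide-by-n variance is used only because its bias is known, so the bootstrap answer can be checked: it should land near $-\hat\sigma^2/n$.

```python
import random
from statistics import fmean


def plug_in_variance(xs):
    """Variance estimator dividing by n (biased downwards by the factor (n-1)/n)."""
    m = fmean(xs)
    return sum((x - m) ** 2 for x in xs) / len(xs)


def bootstrap_bias(xs, estimator, b=5000, seed=0):
    """Bias_B: mean of b bootstrap replicate estimates minus the original estimate."""
    rng = random.Random(seed)          # seeded so the result is reproducible
    theta_hat = estimator(xs)          # estimate from the observed sample
    replicates = [
        estimator(rng.choices(xs, k=len(xs)))   # resample n values with replacement
        for _ in range(b)
    ]
    return fmean(replicates) - theta_hat


data = list(range(1, 11))
print(bootstrap_bias(data, plug_in_variance))
```

For the data 1, ..., 10 the plug-in variance is 8.25, so the bootstrap bias should come out close to -0.825.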

Estimation/H.T. Rationale etc. - contd.

• For any estimator $\hat\theta$, even an unbiased one, there is a difference between the estimator and the true parameter: the sampling error.

Hence the need for probability statements around $\hat\theta$:

$$P\{T_1 \le \theta \le T_2\} = 1 - \alpha$$

The confidence limits (C.L.) for the estimator are $(T_1, T_2)$, similarly to before, and $1 - \alpha$ is the confidence coefficient. If the estimator is unbiased, in other words, $1 - \alpha$ = P{the true parameter falls into the interval}.

• In general, confidence intervals can be determined using parametric and non-parametric approaches. Parametric construction needs a pivotal quantity: a variable that is a function of the parameter and the data, but whose distribution does not depend on the parameter.
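As an illustration of a pivotal quantity, a sketch assuming a Normal mean with known $\sigma$: $Z = (\bar X - \mu)/(\sigma/\sqrt n)$ is $N(0,1)$ whatever $\mu$ is, so $P\{-U \le Z \le U\} = 1 - \alpha$ rearranges into limits $(T_1, T_2)$. The function name `z_interval` is hypothetical; the numbers ($\sigma = 3.6$, $n = 100$) are borrowed from the example that follows.

```python
from statistics import NormalDist


def z_interval(xbar, sigma, n, alpha=0.05):
    """Confidence interval for a Normal mean with known sigma, built from a pivot.

    Z = (Xbar - mu) / (sigma / sqrt(n)) is N(0, 1) for every mu, so
    P{-U <= Z <= U} = 1 - alpha rearranges into limits (T1, T2) for mu.
    """
    u = NormalDist().inv_cdf(1 - alpha / 2)   # upper alpha/2 point of N(0, 1); about 1.96 for alpha = 0.05
    half_width = u * sigma / n ** 0.5
    return xbar - half_width, xbar + half_width


print(z_interval(17.5, 3.6, 100))   # roughly (16.79, 18.21)
```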


Related issues in Hypothesis Testing - POWER of the TEST

• Probability of false positive and false negative errors, e.g. a false positive if linkage between two genes is declared when they are really independent.

| Hypothesis test result | Fact: H0 true | Fact: H0 false |
|---|---|---|
| Accept H0 | $1 - \alpha$ | False negative = Type II error = $\beta$ |
| Reject H0 | False positive = Type I error = $\alpha$ | Power of the test = $1 - \beta$ |

• Power of the test, or statistical power, is the probability of rejecting H0 when it is correct to do so (i.e. when H0 is false). It relates strictly to the alternative hypothesis and to $\beta$.


Example on Type II Error and Power

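• Suppose we have a variable with known population S.D. $\sigma = 3.6$. From the population, a random sample (r.s.) of size $n = 100$ is used to test, at $\alpha = 0.05$, that

$$H_0: \mu = \mu_0 = 17.5 \qquad \text{against} \qquad H_1: \mu \neq 17.5$$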

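• The critical values of $\bar x$ (C.I.) for a 2-sided test are:

$$\bar x_i = \mu_0 \pm U_{\alpha/2}\frac{\sigma}{\sqrt n} = \mu_0 \pm 1.96\frac{\sigma}{\sqrt n}$$

for $\alpha = 0.05$, where for $\bar x_i$, $i$ = upper or lower, and $\mu_0$ is the mean under H0.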

• So substituting our values (with $\sigma/\sqrt n = 3.6/10 = 0.36$) gives:

$$\bar x_U = 17.50 + 1.96(0.36) = 18.21; \qquad \bar x_L = 17.50 - 1.96(0.36) = 16.79$$

But if H0 is false, $\mu$ is not 17.5 but some other value, e.g. 16.5, say?


Example contd.

• We want the new distribution with mean $\mu = 16.5$, i.e. the new distribution is shifted with respect to the old.

• Thus the probability of the Type II error (failing to reject a false H0) is the area under the curve of the new distribution that overlaps the non-rejection region specified under H0.

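• So, this is

$$\beta = P\left\{\frac{16.79 - 16.5}{0.36} \le U \le \frac{18.21 - 16.5}{0.36}\right\} = P\left\{\frac{0.29}{0.36} \le U \le \frac{1.71}{0.36}\right\} = P\{0.81 \le U \le 4.75\} \approx 1 - 0.7910 = 0.209$$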

• Thus, the probability of taking the appropriate action (rejecting H0 when it is false) is $1 - \beta = 0.791$ = Power.
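The calculation above can be sketched as follows, assuming a two-sided z-test with known $\sigma$. The function name `two_sided_type2` is hypothetical. It uses unrounded critical values, so results may differ from the slide figures in the third decimal place (about 0.207 rather than 0.209 here).

```python
from statistics import NormalDist


def two_sided_type2(mu0, mu1, sigma, n, alpha=0.05):
    """Return (beta, power) for a two-sided z-test of H0: mu = mu0 when the true mean is mu1."""
    se = sigma / n ** 0.5
    u = NormalDist().inv_cdf(1 - alpha / 2)
    x_lower, x_upper = mu0 - u * se, mu0 + u * se   # non-rejection region under H0
    alt = NormalDist(mu1, se)                        # sampling distribution of the mean if mu = mu1
    beta = alt.cdf(x_upper) - alt.cdf(x_lower)       # area of the shifted curve inside that region
    return beta, 1 - beta


beta, power = two_sided_type2(17.5, 16.5, 3.6, 100)
print(beta, power)

# Repeating over other candidate values of mu gives the power table on the next slides
for mu1 in (16.0, 16.5, 17.0, 18.0, 18.5, 19.0):
    print(mu1, two_sided_type2(17.5, mu1, 3.6, 100))
```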


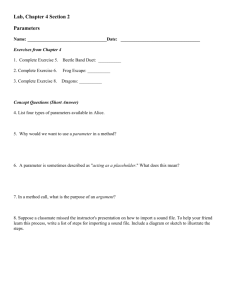

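Figure: Shifting the distributions. The null density $f(\bar x_0)$ is centred at 17.5, with the non-rejection region between 16.79 and 18.21 and a rejection region of area $\alpha/2$ in each tail; the alternative density $f(\bar x_1)$ is centred at 16.5.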

Example contd.

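Power under the alternative for given $\alpha$ (= 0.05):

| Possible values of $\mu$ under H1 (H0 false) | $\beta$ | Power $1 - \beta$ |
|---|---|---|
| 16.0 | 0.0143 | 0.9857 |
| 16.5 | 0.2090 | 0.7910 |
| 17.0 | 0.7190 | 0.2810 |
| 18.0 | 0.7190 | 0.2810 |
| 18.5 | 0.2090 | 0.7910 |
| 19.0 | 0.0143 | 0.9857 |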

• Balancing $\alpha$ and $\beta$: $\beta$ tends to be large compared with $\alpha$ unless the original hypothesis is way off. So a decision based on a rejected H0 is more conclusive than one based on H0 not rejected, as the probability of being wrong is larger in the latter case.


SAMPLE SIZE DETERMINATION

• Example: Suppose we wanted to design a genetic mapping experiment, or a comparative product survey. Conventional experimental design (ANOVA), genetic marker type (or product type) and sample size are all considered.

Questions might include:

What is the statistical power to detect linkage for a certain progeny size? (or common 'shared' consumer preferences, say)

What is the precision of the estimated R.F. (recombination fraction) (or grouped preferences) when the sample size is N?

• Sample size needed for specific Statistical Power

• Sample size needed for specific Confidence Interval


Sample size - calculation based on C.I.

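For some parameter $\theta$, where the Normal approximation approach is valid, the C.I. is

$$\hat\theta - U_{\alpha/2}\,\sigma_{\hat\theta} \;\le\; \theta \;\le\; \hat\theta + U_{\alpha/2}\,\sigma_{\hat\theta}$$

where $U$ is the standardized normal deviate (S.N.D.). The range from the lower to the upper limit, i.e. for 95% limits,

$$d_{LU} = 3.92\,\sigma_{\hat\theta} = 2 \times 1.96\,\sigma_{\hat\theta}$$

is just a precision measurement for the estimator.

Given a true parameter $\theta$,

$$\sigma^2_{\hat\theta} = \frac{\sigma^2}{n} \qquad \text{or, in "Information" terms,} \qquad \sigma^2_{\hat\theta} = \frac{1}{n\,I(\theta)}$$

where $I(\theta)$ is the information in a single observation. So manipulation gives:

$$n = \frac{(2U)^2\,\sigma^2}{d_{LU}^2} = \frac{3.92^2\,\sigma^2}{d_{LU}^2}\ \ (95\%) \qquad \text{or} \qquad n = \frac{(2U)^2}{d_{LU}^2\, I(\theta)}$$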
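A sketch of the "Information" form of the sample-size formula, $n = (2U)^2 / (d_{LU}^2 I(\theta))$, rounded up to a whole number. The function name `n_for_ci_width` is hypothetical. Two assumed cases: a Normal mean with $\sigma = 3.6$ (so $I = 1/\sigma^2$) and a target width of 1.0; and a proportion such as an R.F., assumed to be about 0.2 (Bernoulli information $I = 1/(p(1-p))$), with a target width of 0.1.

```python
import math
from statistics import NormalDist


def n_for_ci_width(d_lu, info_per_obs, conf=0.95):
    """Smallest n giving a C.I. of total width d_lu: n = (2U)^2 / (d_lu^2 * I(theta)).

    info_per_obs is I(theta), the information in ONE observation.
    """
    u = NormalDist().inv_cdf(1 - (1 - conf) / 2)   # U = 1.96 for 95% limits, so 2U = 3.92
    return math.ceil((2 * u) ** 2 / (d_lu ** 2 * info_per_obs))


print(n_for_ci_width(1.0, 1 / 3.6 ** 2))        # Normal mean, sigma = 3.6 (assumed example)
print(n_for_ci_width(0.1, 1 / (0.2 * 0.8)))     # proportion near 0.2 (assumed example)
```

Halving the required width $d_{LU}$ quadruples $n$, since $n$ scales with $1/d_{LU}^2$.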

Sample size - calculation based on Power

(firstly, what affects power?)

• Suppose $\alpha = 0.05$, $\sigma = 3.5$, $n = 100$, testing H0: $\mu_0 = 25$ when the true $\mu = 24$; assume H1: $\mu_1 < 25$. The sample mean is found to be 24.45.

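One-tailed test ($U = 1.645$): the shift is small, and the lower limit of the original distribution virtually coincides with the actual sample value:

$$\bar x_L = \mu_0 - U\frac{\sigma}{\sqrt n} = 25 - 1.645(0.35) = 24.424; \qquad U_{alt} = \frac{\bar x_L - \mu_1}{\sigma/\sqrt n} = \frac{24.424 - 24}{0.35} \approx 1.21$$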

Under H1, Power = 0.50 + 0.39 = 0.89, i.e. the correct decision is made 89% of the time.

Note: a two-sided test at $\alpha = 0.05$ gives critical values under H0 of $\bar x_L = 24.31$, $\bar x_U = 25.69$; equivalently $U_L = +0.89$, $U_U = +4.82$ under H1.

In general: substitute the $\bar x$ limits and then recalculate for the new $\mu = \mu_1$.

So P{do not reject H0: $\mu = 25$ when the true mean $\mu = 24$} = 0.1867 = $\beta$ (Type II error).

Thus, Power = 1 - 0.1867 = 0.8133.


Sample Size and Power contd.

• Suppose $n = 25$, other values the same, and a 1-tailed test again: $\bar x_L = 23.85$, $U_L = -0.22$, so

Power = 0.4129

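• Suppose instead $\alpha = 0.01$ (with $n = 100$): the 2-tailed critical values are $\bar x_L = 24.10$, $\bar x_U = 25.90$, with, equivalently, $U_L = +0.29$, $U_U = +5.43$.

So P{do not reject H0: $\mu = 25$ when the true mean $\mu = 24$} = $P\{0.29 \le U \le 5.43\}$ = 0.3859

Power = 0.6141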
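The four power values in this example can be reproduced with a short sketch, assuming z-tests with known $\sigma$ and, for the one-sided case, H1: $\mu < \mu_0$. The function name `z_power` is hypothetical; unrounded critical values give answers within about 0.005 of the slide figures.

```python
from statistics import NormalDist


def z_power(mu0, mu1, sigma, n, alpha=0.05, two_sided=True):
    """Power of a z-test of H0: mu = mu0 when the true mean is mu1 (sigma known)."""
    std = NormalDist()
    se = sigma / n ** 0.5
    alt = NormalDist(mu1, se)   # sampling distribution of the mean when mu = mu1
    if two_sided:
        u = std.inv_cdf(1 - alpha / 2)
        # reject if the mean falls below the lower or above the upper critical value
        return alt.cdf(mu0 - u * se) + (1 - alt.cdf(mu0 + u * se))
    # one-sided with H1: mu < mu0, so all of alpha sits in the lower tail
    u = std.inv_cdf(1 - alpha)
    return alt.cdf(mu0 - u * se)


print(z_power(25, 24, 3.5, 100, 0.05, two_sided=False))   # 1-tailed, n = 100
print(z_power(25, 24, 3.5, 100, 0.05))                    # 2-tailed, n = 100
print(z_power(25, 24, 3.5, 25, 0.05, two_sided=False))    # 1-tailed, n = 25
print(z_power(25, 24, 3.5, 100, 0.01))                    # 2-tailed, alpha = 0.01
```

Each line changes one factor at a time: sidedness, $n$ or $\alpha$, which is the comparison the slide draws.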

FACTORS: $\alpha$, $n$, type of test (1- or 2-sided) and the true parameter value. These combine in

$$n = \frac{\sigma^2\,(U_\alpha + U_\beta)^2}{(\mu_0 - \mu_1)^2}$$

where subscripts 0 and 1 refer to the null and alternative, and the $\alpha$ value is taken as 'generic' (either all in one tail, for a 1-sided test/limit, or split between the two tails, for a 2-sided test/limit).
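A sketch of the sample-size formula, using the same setting as the example ($\mu_0 = 25$, $\mu_1 = 24$, $\sigma = 3.5$) and an assumed target power of 0.80. The function name `n_for_power` is hypothetical. For the 2-sided case the formula, and hence this code, ignores the negligible far-tail rejection probability.

```python
import math
from statistics import NormalDist


def n_for_power(mu0, mu1, sigma, alpha=0.05, power=0.80, two_sided=False):
    """n = sigma^2 (U_alpha + U_beta)^2 / (mu0 - mu1)^2, rounded up."""
    std = NormalDist()
    # 'generic' alpha: all in one tail, or split as alpha/2 for a 2-sided test
    u_alpha = std.inv_cdf(1 - (alpha / 2 if two_sided else alpha))
    u_beta = std.inv_cdf(power)   # upper point for beta = 1 - power
    n = sigma ** 2 * (u_alpha + u_beta) ** 2 / (mu0 - mu1) ** 2
    return math.ceil(n)


print(n_for_power(25, 24, 3.5))                   # 1-sided
print(n_for_power(25, 24, 3.5, two_sided=True))   # 2-sided needs a larger sample
```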


‘Other’ Estimation/Test Methods

NON-PARAMETRICS/DISTN FREE

• Standard pdfs cannot be assumed for the data, sampling distributions or test statistics, which are uncertain due to small or unreliable data sets, non-independence etc. Parameter estimation is not the key issue.

• Example/empirical basis. Weaker assumptions. Less 'information' is used, e.g. the median. Simple hypothesis testing as opposed to estimation. Power and efficiency are issues.

Counts: nominal, ordinal (the natural non-parametric data type/measure).

• Nonparametric hypothesis tests (with parallels to the parametric case). E.g. H.T. of locus orders requires a complex 'test statistic' distribution, so we need to construct an empirical pdf. Usually, we assume the null hypothesis and use re-sampling techniques, e.g. permutation tests, bootstrap, jack-knife.
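A minimal sketch of a permutation test, one of the re-sampling techniques listed above: under H0 the group labels are exchangeable, so shuffling them builds the empirical null distribution of the statistic. Assumptions: two independent groups, the difference in medians as the statistic, 10,000 random shuffles as a Monte Carlo approximation to full enumeration, and hypothetical data. Function names are illustrative.

```python
import random
from statistics import median


def median_diff(a, b):
    return median(a) - median(b)


def permutation_test(x, y, stat=median_diff, n_perm=10_000, seed=1):
    """Two-sided Monte Carlo permutation test; returns (observed statistic, p-value)."""
    rng = random.Random(seed)
    observed = stat(x, y)
    pooled = list(x) + list(y)
    n_x = len(x)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)   # relabel at random, as H0 allows
        if abs(stat(pooled[:n_x], pooled[n_x:])) >= abs(observed):
            extreme += 1
    # the +1 counts the observed labelling itself, so p is never exactly 0
    return observed, (extreme + 1) / (n_perm + 1)


x = [12.1, 13.4, 11.8, 14.2, 13.9, 12.7]   # hypothetical group 1
y = [9.8, 10.4, 11.1, 9.5, 10.9, 10.2]     # hypothetical group 2
print(permutation_test(x, y))
```

Here only 12 of the 924 possible 6/6 splits give a median difference as extreme as the observed one, so the Monte Carlo p-value should be roughly 0.013.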


LIKELIHOOD METHOD - DEFINITIONS

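• Suppose X can take a set of values $x_1, x_2, \ldots$ with

$$L(\boldsymbol\theta) = P\{X = x \mid \boldsymbol\theta\}$$

where $\boldsymbol\theta$ is a vector of parameters affecting the observed x's.

• e.g. $\boldsymbol\theta = (\mu, \sigma^2)$. So we can say something about P{X} if we know, say, $X \sim N(\mu, \sigma^2)$.

• But this is not usually the case, i.e. we observe the x's, knowing nothing of $\boldsymbol\theta$.

• Assuming the x's are a random sample of size n from a known distribution, the likelihood for $\boldsymbol\theta$ is

$$L(\boldsymbol\theta) = L(\boldsymbol\theta \mid x_1, x_2, \ldots, x_n) = \prod_{i=1}^{n} L(\boldsymbol\theta \mid x_i)$$

• Finding the most likely $\theta$ or $\theta$s for the given data is equivalent to maximising the likelihood function (where the M.L.E. is $\hat\theta$).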
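A small sketch of "maximising the likelihood", assuming a Normal sample with $\sigma$ treated as known (1.0) and hypothetical data. The product of densities becomes a sum of log-densities, and a crude grid search over $\mu$ stands in for a proper optimiser; its maximiser should agree with the sample mean, the known M.L.E. for $\mu$.

```python
import math

xs = [4.2, 5.1, 3.8, 4.9, 5.6, 4.4]   # hypothetical sample
SIGMA = 1.0                           # assumed known for this sketch


def normal_log_likelihood(mu, sigma, data):
    """log of prod_i f(x_i | mu, sigma) = sum_i log f(x_i | mu, sigma)."""
    return sum(
        -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)
        for x in data
    )


grid = [i / 1000 for i in range(3000, 7001)]   # candidate mu values 3.000 .. 7.000
mu_hat = max(grid, key=lambda m: normal_log_likelihood(m, SIGMA, xs))
print(mu_hat, sum(xs) / len(xs))
```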


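LIKELIHOOD – SCORE and INFO. CONTENT

• The log-likelihood is a support function [$S(\theta)$], evaluated at a point $\theta'$, say.

• The support function for any other point, say $\theta''$, can also be obtained; this is the basis for computational iterations for the MLE, e.g. using Newton-Raphson.

• SCORE = first derivative of the support function w.r.t. the parameter:

$$\frac{d\,S(\theta)}{d\theta} \qquad \text{or, numerically/discretely,} \qquad \frac{S(\theta'') - S(\theta')}{\theta'' - \theta'}$$

• INFORMATION CONTENT evaluated at (i) an arbitrary point = Observed Info.; (ii) the support function maximum = Expected Info.

$$I(\theta) = E\left[\left(\frac{\partial \log L(\theta \mid x)}{\partial \theta}\right)^2\right] = -E\left[\frac{\partial^2 \log L(\theta \mid x)}{\partial \theta^2}\right]$$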
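A sketch of the score and (observed) information computed numerically from the support function, assuming a Normal sample with known $\sigma = 1$ and hypothetical data. A central difference is used for the score rather than the one-sided form on the slide, and a second difference for the information. For this model the exact values are known: score $= \sum_i (x_i - \mu)/\sigma^2$ and information $= n/\sigma^2$, which gives a check.

```python
import math

xs = [4.2, 5.1, 3.8, 4.9, 5.6, 4.4]   # hypothetical sample
SIGMA = 1.0                           # assumed known


def support(mu):
    """Support function S(mu): the Normal log-likelihood with sigma fixed."""
    return sum(
        -0.5 * math.log(2 * math.pi * SIGMA ** 2) - (x - mu) ** 2 / (2 * SIGMA ** 2)
        for x in xs
    )


def score(mu, h=1e-5):
    """Numerical dS/dmu by a central difference."""
    return (support(mu + h) - support(mu - h)) / (2 * h)


def observed_info(mu, h=1e-3):
    """Minus the second derivative of S, by a second difference."""
    return -(support(mu + h) - 2 * support(mu) + support(mu - h)) / h ** 2


print(score(4.0), observed_info(4.0))   # exact values: sum(x - 4) = 4.0 and n / sigma^2 = 6.0
print(score(sum(xs) / len(xs)))         # score is (numerically) zero at the M.L.E.
```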


Example - Binomial variable

(e.g. use of the Score and Expected Info. Content to determine the type of mapping population and sample size for genomics experiments)

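Likelihood function (with $\theta = p$):

$$L(\theta) = L(n, p) = P\{X = x \mid n, p\} = \binom{n}{x} p^{x} (1 - p)^{n - x}$$

Log-likelihood:

$$\log L(\theta) = \log\binom{n}{x} + x\log p + (n - x)\log(1 - p)$$

Assume n is constant, so the first term can be ignored for given x (it is invariant):

$$\log L(p) = x\log p + (n - x)\log(1 - p)$$

Maximising w.r.t. p, i.e. setting the derivative of S w.r.t. p to 0, gives the

SCORE

$$\frac{x}{\hat p} - \frac{n - x}{1 - \hat p} = 0$$

so the M.L.E. is $\hat\theta = \hat p = \dfrac{x}{n}$.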

How does it work, why bother?
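One answer to "how does it work": a Newton-Raphson sketch that finds the binomial M.L.E. iteratively from the score and observed information, then uses the expected information $I(p) = n/(p(1-p))$ for a standard error. The binomial case has the closed form $x/n$, so it serves as a check; the same loop applies when no closed form exists. The data ($x = 23$, $n = 100$), starting value and step-halving safeguard are assumptions of this sketch.

```python
import math


def binomial_mle_newton(x, n, p=0.1, tol=1e-10, max_iter=100):
    """Newton-Raphson for the binomial M.L.E.; returns (p_hat, standard error)."""
    if not 0 < x < n:
        raise ValueError("needs 0 < x < n so the maximum lies inside (0, 1)")
    for _ in range(max_iter):
        score = x / p - (n - x) / (1 - p)                  # dS/dp
        observed_info = x / p ** 2 + (n - x) / (1 - p) ** 2  # -d2S/dp2
        step = score / observed_info
        while not 0 < p + step < 1:                        # halve the step if it would leave (0, 1)
            step /= 2
        p += step
        if abs(step) < tol:
            break
    expected_info = n / (p * (1 - p))                      # I(p) for n binomial trials
    return p, math.sqrt(1 / expected_info)


p_hat, se = binomial_mle_newton(x=23, n=100)
print(p_hat, se)   # p_hat should match x / n = 0.23
```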


Numerical Examples

See some data sets and test examples:

Basics:

http://www.unc.edu/~monogan/computing/r/MLE_in_R.pdf

http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.74.671&rep=rep1&type=pdf

Context:

http://statgen.iop.kcl.ac.uk/bgim/mle/sslike_1.html (all sections useful, but especially the examples, sections 1-3 and 6)

Also, e.g. for R

http://www.montana.edu/rotella/502/binom_like.pdf

for SPSS – see e.g. the tutorial for data sets, or

http://www.spss.ch/upload/1126184451_Linear%20Mixed%20Effects%20Modeling%20in%20SPSS.pdf (general, for mixed linear models)

For SAS – of possible interest also for Newton-Raphson

http://blogs.sas.com/content/iml/2011/10/12/maximum-likelihoodestimation-in-sasiml/


Bayesian Estimation- in context

• Parametric estimation: in the "classical approach", $f(x, \theta)$ for a r.v. X of density $f(x)$, with the unknown parameter $\theta$, expresses the dependency of the distribution on the parameter to be estimated.

• Bayesian estimation: $\theta$ is a random variable ($\Theta$), so we can consider the density as conditional and write $f(x \mid \theta)$.

Given a random sample $X_1, X_2, \ldots, X_n$, the sample random variables are jointly distributed with the parameter r.v. $\Theta$. So the joint pdf is

$$f_{X_1, X_2, \ldots, X_n, \Theta}(x_1, x_2, \ldots, x_n, \theta)$$

• Objective: to form an estimator that gives a value of $\theta$ dependent on observations of the sample random variables. Thus the conditional density of $\Theta$ given $X_1, X_2, \ldots, X_n$ also plays a role. This is the posterior density.


Bayes - contd.

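• Posterior density

$$f(\theta \mid x_1, x_2, \ldots, x_n)$$

• Relationship between prior and posterior:

$$f(\theta \mid x_1, x_2, \ldots, x_n) = \frac{\pi(\theta)\prod_{k=1}^{n} f(x_k \mid \theta)}{\int \pi(\theta)\prod_{k=1}^{n} f(x_k \mid \theta)\,d\theta}$$

where $\pi(\theta)$ is the prior density of $\Theta$.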

• Value: close to the MLE for large n, or for small n if the sample values are compatible with the prior distribution. It also has a strong sample basis (and is simpler to calculate than the M.L.E.).
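A sketch of the prior-to-posterior relationship evaluated numerically: prior times likelihood on a grid of $\theta$ values, divided by a midpoint-rule approximation of the integral in the denominator. Assumptions: a binomial likelihood ($x = 23$ successes in $n = 100$ trials, hypothetical) and a grid of 10,000 cells. The binomial coefficient cancels between numerator and denominator, so it is omitted. With a uniform prior the posterior mode equals the MLE $x/n$, while the posterior mean is pulled slightly towards the prior.

```python
import math


def grid_posterior(x, n, prior, m=10_000):
    """Posterior of a binomial p on a midpoint grid; returns (grid, cell masses, posterior mean)."""
    thetas = [(i + 0.5) / m for i in range(m)]
    # log of prior(theta) * prod_k f(x_k | theta); the binomial coefficient cancels
    log_unnorm = [math.log(prior(t)) + x * math.log(t) + (n - x) * math.log(1 - t) for t in thetas]
    peak = max(log_unnorm)
    weights = [math.exp(v - peak) for v in log_unnorm]   # rescaled to avoid underflow
    total = sum(weights)                                  # midpoint-rule stand-in for the integral
    post = [w / total for w in weights]                   # posterior probability per grid cell
    mean = sum(t * w for t, w in zip(thetas, post))
    return thetas, post, mean


thetas, post, mean = grid_posterior(23, 100, prior=lambda t: 1.0)   # uniform prior
mode = thetas[post.index(max(post))]
print(mode, mean)   # mode near the MLE 0.23; mean slightly larger
```

With a uniform prior the posterior here is a Beta(24, 78) density, whose mean is 24/102, so the grid answer can be checked against a closed form.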


Estimator Comparison in brief.

• Classical: uses objective probabilities, intuitive estimators and additional assumptions for sampling distributions; good properties for some estimators.

• Moments: {less calculation, less efficient. Despite analytical solutions and low bias, not well used for large-scale data because of less good asymptotic properties; even simple solutions may not be unique.}

• Bayesian: subjective prior knowledge plus sample info.; close to the MLE under certain conditions (see earlier).

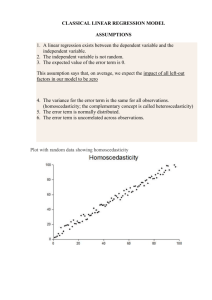

• LSE: if the assumptions are met, the $\beta$'s are unbiased and their variances are obtained from $\sigma^2 (X^{T}X)^{-1}$. Few assumptions are needed for the response variable distributions, just expectations and the variance-covariance structure (unlike MLE, where we need to specify the joint prob. distribution of the variables). Requires additional assumptions for sampling distributions. Close to MLE if these are met. Computation easier.

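$$\hat{\boldsymbol\beta} \sim N\left(\boldsymbol\beta,\; I(\boldsymbol\beta)^{-1}\right), \qquad I(\boldsymbol\beta) = \frac{1}{\sigma^2}X^{T}X$$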
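A pure-Python sketch of least squares for a straight line, showing where $(X^TX)^{-1}$ enters both the estimates and their variances. Assumptions: a design matrix with an intercept column and one predictor (so $X^TX$ is 2x2 and can be inverted by hand), hypothetical data, and $\sigma^2$ replaced by the usual unbiased residual estimate $s^2$.

```python
def ols_two_param(xs, ys):
    """Least squares for y = b0 + b1 x; returns ((b0, b1), estimated covariance matrix)."""
    n = len(xs)
    # X has a column of ones and a column of xs, so X^T X = [[n, sx], [sx, sxx]]
    sx, sxx = sum(xs), sum(x * x for x in xs)
    sy, sxy = sum(ys), sum(x * y for x, y in zip(xs, ys))   # X^T y = [sy, sxy]
    det = n * sxx - sx * sx
    inv = [[sxx / det, -sx / det], [-sx / det, n / det]]    # (X^T X)^{-1}
    b0 = inv[0][0] * sy + inv[0][1] * sxy                   # beta_hat = (X^T X)^{-1} X^T y
    b1 = inv[1][0] * sy + inv[1][1] * sxy
    resid = [y - (b0 + b1 * x) for x, y in zip(xs, ys)]
    s2 = sum(r * r for r in resid) / (n - 2)                # unbiased estimate of sigma^2
    cov = [[s2 * v for v in row] for row in inv]            # s^2 (X^T X)^{-1}
    return (b0, b1), cov


(b0, b1), cov = ols_two_param([1, 2, 3, 4, 5], [2.1, 3.9, 6.2, 7.8, 10.1])   # hypothetical data
print(b0, b1, cov[1][1] ** 0.5)   # intercept, slope, standard error of the slope
```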
