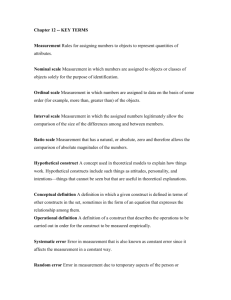

Reliability and Objectivity Chapter 3


Now that you know what assessment is, you know that it begins with a test.

Ch 4

Questions to ask yourself:
• How can you make sure your test is trustworthy?
• Will its measurement be true?
• If not, what does that say about your evaluation and thus your assessment?

Your Test Must Have
• Sensitivity
• Reliability
• Objectivity
• Validity
To Be a Good Test

Sensitivity
The ability to detect a true difference.

Sensitivity
• A measurement device or tool…
  – Used to apply a number or value (scale)
  – Indicates and separates differences (scale)
  – You can only be sure of your measurement when it fits the scale
  – Potential for error is high when sensitivity is poor
  – Examples

What Happens When Sensitivity Is Poor?
• Too high?
  – Detects everything
  – Unable to discriminate between what you’re searching for and everything else you find
  – Example: signal vs. signal
• Too low?
  – Can’t detect enough
  – Unable to discriminate between what you’re looking for and nothing
  – Example: signal vs. noise, or signal vs. silence
• In either case, the result is incorrect.
• An incorrect result leads to an incorrect judgment.
• An incorrect judgment leads to an incorrect intervention.

Sensitivity: Measurement Result vs. True State

| Measurement Result | True State: True | True State: False |
|---|---|---|
| True | True Positive | False Positive |
| False | False Negative | True Negative |

Reliability
• Reliability refers to the consistency of a test
  – A test given on one day should yield the same results on the next day

Can One Test Trial Give Reliable Scores?

Selecting a Criterion Score
• Mean Score vs. Best Score
  – Mean: take the average score of multiple trials
  – Best: take the best score of multiple trials

Selecting a Norm-Referenced Score
• Comparison against…
  – Individuals
    • Best example?
  – Tabled Values
    • Match the group?
Types of Reliability
• Stability
  – Use the Test-Retest Method
    • Two administrations of a test, with a calculation of how similar the results are

Types of Reliability
• Internal Consistency
  – Use the Split-Half Method
    • The test is divided into odd-numbered and even-numbered components (or questions); each participant’s score on the odd half is compared with their score on the even half, with a calculation of how similar they are

Factors Affecting Reliability
• Method of scoring – an objective format is more reliable than a subjective format
• Homogeneity of the group tested – a more varied (heterogeneous) group tends to yield a higher reliability coefficient, because a narrow range of scores lowers the correlation
• Length of test – longer tests (more questions/elements) are more reliable than shorter tests
• Administrative procedures – clear directions, technique, motivation of subjects, good environment

Objectivity
• Objectivity is also referred to as rater reliability
• Objectivity is the close agreement between scores assigned by two or more judges

Juggler, please…

Judges’ scores…

Factors Affecting Objectivity
• The clarity of the scoring system
  – An unclear system, for example:
    Neatness = 37% × difficulty factor (.87 / prime rate)
    Style = 42.489349834 − (IQ × shoe size)
    Originality = 3.14 × (E = mc²) / date of birth

Factors Affecting Objectivity
• The degree to which judges can assign scores accurately (fairly, without bias)
  – Can you say “French skating judge,” eh?

Validity
• Validity is considered the most important characteristic of a test

Types of Validity
• Logical (content) validity – validity that indicates a measurement instrument (test) actually measures the capacities about which conclusions are drawn
  – Examples:
    • Tennis serve for accuracy, versus
    • 40 yd. run for time/speed in football or baseball

Types of Validity
• Construct validity – validity for an instrument that measures a variable or factor that is not directly observable
  – Examples:
    • Attitudes, feelings, motivation
    • Learning new skills

Factors Affecting Validity
• Student characteristics
  – Examples: sex, age, experience
• Administrative procedures
  – Examples: unclear directions, poor weather
• Reliability
  – Example: same results after repeated tests

When a Test Is Not Valid…
• It is a waste of everyone’s time.
• A true judgment (evaluation) cannot be made.
• So… what’s the point?

A True Professional
• Values his/her field.
• Seeks the truth.
• Knows that bad data are worse than no data.
• Wastes no one’s time.

Summary
A Test Must Have
• Sensitivity
• Reliability
• Objectivity
• Validity
To Be a Good Test

Now to the testing process…

Tests and Their Administration
• Test Characteristics
• Administrative Concerns
• Participant Concerns
• Pretest Procedures
• Administering the Test
• Posttest Procedures
• Individuals with Disabilities

Tests and Their Administration: Test Characteristics
• Validity, Reliability, Objectivity
• Content-Related Attributes
  – Important Attributes – a sample, not everything
  – Discrimination – separating best from better from good
  – Resemblance to the Activity – similarity
  – Specificity – single attribute vs. multiple attributes; identifies limitations
  – Unrelated Measures – a battery covering different aspects (“independence”)

Test Administration: Administrative Concerns
• Mass Testability
• Minimal Practice
• Minimal Equipment
• Minimal Personnel
• Ease of Preparation
• Adequate Directions
• Norms / Criterion
• Useful Scores

Test Administration: Participant Concerns
• Appropriateness to Participants
• Individual Scores
• Enjoyable
• Safety
• Confidentiality and Privacy
• Motivation

Test Administration: Pretest Procedures
• Knowing the Test
• Equipment and Facilities
• Developing Test Procedures
• Score Sheet (Recording)
• Developing Directions
• Estimating Time Requirements
• Preparing the Participants
• Planning Warm-up and Test Trials

Test Administration: Administering the Test
• Preparation
• Motivation
• Safety
• Recording Test Results

Test Administration: Posttest Procedures
• Analyze Test Scores
• “Share” Information – highs vs. lows

Test Administration: Individuals with Disabilities
• Cannot be compared to the typical student/person
• Few norm-referenced standards for evaluation
• Disabilities are varied, even within categories

Test Administration: Individuals with Disabilities
• Disabilities include:
  – Mental Retardation – mild, moderate, severe
  – Serious Emotional Disturbance – autism, schizophrenia
  – Orthopedic Impairment – neurological, musculoskeletal, trauma
  – Other Health Impaired – asthma, cardiovascular disorders, diabetes, epilepsy, obesity

Test Administration: Individuals with Disabilities
• Measure physical ability or capacity
  – Not learning, cognition, or language acquisition
  – Find norm-referenced standards or validated tests

Summary: Tests and Their Administration
• Test Characteristics
• Administrative Concerns
• Participant Concerns
• Pretest Procedures
• Administering the Test
• Posttest Procedures
• Individuals with Disabilities

Questions?

End Ch 4