The Unsupervised Learning of Natural Language Structure

Learning Language from

Distributional Evidence

Christopher Manning

Depts of CS and Linguistics

Stanford University

Workshop on Where Does Syntax Come From?, MIT, Oct 2007

There’s a lot to agree on!

[C. D. Yang. 2004. UG, statistics or both? Trends in CogSci 8(10)]

Both endowment (priors/biases) and learning from

experience contribute to language acquisition

“To be effective, a learning algorithm … must have an

appropriate representation of the relevant … data.”

Languages, and hence models of language, have intricate

structure, which must be modeled.

2

More points of agreement

Learning language structure requires priors or

biases, to work at all, but especially at speed

Yang is “in favor of probabilistic learning

mechanisms that may well be domain-general”

I am too.

Probabilistic methods (and especially Bayesian prior +

likelihood methods) are perfect for this!

Probabilistic models can achieve and explain:

gradual learning and robustness in acquisition

non-homogeneous grammars of individuals

gradual language change over time

[and also other stuff, like online processing]

3

The disagreements are important,

but two levels down

In his discussion of Saffran, Aslin, and Newport (1998),

Yang contrasts “statistical learning” (of syllable bigram

transition probabilities) from use of UG principles such as

one primary stress per word.

I would place both parts in the same probabilistic model

Stress is a cue for word learning, just as syllable transition

probabilities are a cue (and it’s very subtle in speech!!!)

Probability theory is an effective means of combining multiple,

often noisy, sources of information with prior beliefs

Yang keeps probabilities outside of the grammar, by

suggesting that the child maintains a probability

distribution over a collection of competing grammars

I would place the probabilities inside the grammar

It is more economical, explanatory, and effective.
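The syllable transition probability cue discussed above can be sketched in a few lines. This is a minimal illustration, not the procedure of Saffran, Aslin, and Newport or of Yang: the three nonsense words, the word order, and the 0.9 threshold are all made up here, and the stress cue is not modeled.

```python
from collections import Counter

def transition_probs(syllables):
    """Estimate P(next | current) for adjacent syllables by relative frequency."""
    pair_counts = Counter(zip(syllables, syllables[1:]))
    first_counts = Counter(syllables[:-1])
    return {(a, b): n / first_counts[a] for (a, b), n in pair_counts.items()}

def segment(syllables, probs, threshold):
    """Place a word boundary wherever the transition probability dips below threshold."""
    words, current = [], [syllables[0]]
    for a, b in zip(syllables, syllables[1:]):
        if probs[(a, b)] < threshold:
            words.append("".join(current))
            current = []
        current.append(b)
    words.append("".join(current))
    return words

# Hypothetical lexicon and word order, for illustration only.
lexicon = {"A": ["bi", "da", "ku"], "B": ["pa", "do", "ti"], "C": ["go", "la", "bu"]}
order = "ABCACBABCBACCAB"
stream = [syl for w in order for syl in lexicon[w]]

probs = transition_probs(stream)
print(segment(stream, probs, threshold=0.9))
```

Within a word every transition is fully predictable in this toy stream, while transitions across word boundaries are not, so boundaries fall at the dips. In a single probabilistic model, a stress cue would enter as one more factor alongside these probabilities.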

4

The central questions

1. What representations are appropriate for human languages?

2. What biases are required to learn languages successfully?

Linguistically informed biases – but perhaps fairly general ones are enough

3. How much of language structure can be acquired from the linguistic input?

This gives a lower bound on how much is innate.

5

1. A mistaken meme: language as a

homogeneous, discrete system

Joos (1950: 701–702):

“Ordinary mathematical techniques fall mostly into two

classes, the continuous (e.g., the infinitesimal calculus)

and the discrete or discontinuous (e.g., finite group

theory). Now it will turn out that the mathematics

called ‘linguistics’ belongs to the second class. It does

not even make any compromise with continuity as

statistics does, or infinite-group theory. Linguistics is a

quantum mechanics in the most extreme sense. All

continuities, all possibilities of infinitesimal gradation,

are shoved outside of linguistics in one direction or the

other.”

[cf. Chambers 1995]

6

The quest for homogeneity

Bloch (1948: 7):

“The totality of the possible utterances of one

speaker at one time in using a language to

interact with one other speaker is an idiolect.

… The phrase ‘with one other speaker’ is

intended to exclude the possibility that an

idiolect might embrace more than one style

of speaking.”

Sapir (1921: 147)

“Everyone knows that language is variable”

7

Variation is everywhere

The definition of an idiolect fails, as

variation occurs even internal to the usage

of a speaker in one style.

As least as:

Black voters also turned out at least as well

as they did in 1996, if not better in some

regions, including the South, according to

exit polls. Gore was doing as least as well

among black voters as President Clinton did

that year.

(Associated Press, 2000)

8

Linguistic Facts vs. Linguistic

Theories

Weinreich, Labov and Herzog (1968) see 20th

century linguistics as having gone astray by

mistakenly searching for homogeneity in

language, on the misguided assumption that

only homogeneous systems can be

structured

Probability theory provides a method for

describing structure in variable systems!

9

The need for probability

models inside syntax

The motivation comes from two sides:

Categorical linguistic theories claim too much:

They place a hard categorical boundary of

grammaticality, where really there is a fuzzy edge,

determined by many conflicting constraints and issues

of conventionality vs. human creativity

Categorical linguistic theories explain too little:

They say nothing at all about the soft constraints

which explain how people choose to say things

Something that language educators, computational NLP

people – and historical linguists and sociolinguists

dealing with real language – usually want to know about

10

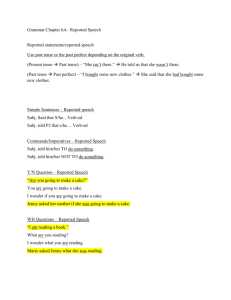

Clausal argument

subcategorization frames

Problem: in context, language is used more flexibly

than categorical constraints suggest:

E.g., most subcategorization frame ‘facts’ are wrong

Pollard and Sag (1994) inter alia [regard vs. consider]:

*We regard Kim to be an acceptable candidate

We regard Kim as an acceptable candidate

The New York Times:

As 70 to 80 percent of the cost of blood tests, like

prescriptions, is paid for by the state, neither physicians nor

patients regard expense to be a consideration.

Conservatives argue that the Bible regards homosexuality to

be a sin.

And the same pattern repeats for many verbs and frames….

11

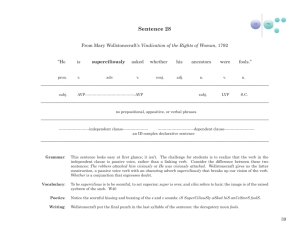

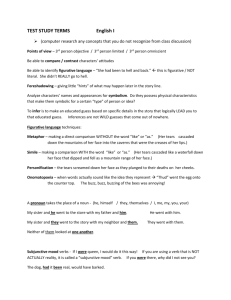

Probability mass functions:

subcategorization of regard

[Figure: probability of each subcategorization frame of regard (axis 0.0% to 80.0%). Frames shown: __ NP as NP, __ NP NP, __ NP as AdjP, __ NP as PP, __ NP as VP[ing], __ NP VP[inf]]

12

Bresnan and Nikitina (2003)

on the Dative Alternation

Pinker (1981), Krifka (2001): verbs of instantaneous force

allow dative alternation but not “verbs of continuous

imparting of force” like push

“As Player A pushed him the chips, all hell broke loose at the

table.”

Pinker (1981), Levin (1993), Krifka (2001): verbs of

instrument of communication allow dative shift but not

verbs of manner of speaking

“Hi baby.” Wade says as he stretches. You just mumble him

an answer. You were comfy on that soft leather couch.

Besides …

www.cardplayer.com/?sec=afeature&art id=165

www.nsyncbitches.com/thunder/fic/break.htm

In context such usages are unremarkable!

It’s just the productivity and context-dependence of

language

Examples are rare → because these are gradient constraints.

Here data is gathered from a really huge corpus: the web.

13

The disappearing hard constraints of

categorical grammars

We see the same thing over and over

Another example is constraints on Heavy NP Shift

[cf. Wasow 2002]

You start with a strong categorical theory, which is

mostly right.

People point out exceptions and counterexamples

You either weaken it in the face of counterexamples

Or you exclude the examples from consideration

Either way you end up without an interesting theory

There’s little point in aiming for explanatory

adequacy when the descriptive adequacy of the used

representations [as opposed to particular

descriptions] just isn’t there.

There is insight in the probability distributions!

14

Explaining more:

What do people say?

What people say has two parts:

Contingent facts about the world

People have been talking a lot about Iraq lately

The way speakers choose to express ideas

using the resources of the language

People don’t often put that clauses pre-verbally:

It appears almost certain that we will have to take a

loss

That we will have to take a loss appears almost

certain

The latter is properly part of people’s

Knowledge of Language. Part of syntax.

15

Variation is part of competence

[Labov 1972: 125]

“The variable rules themselves require at so

many points the recognition of grammatical

categories, of distinctions between

grammatical boundaries, and are so closely

interwoven with basic categorical rules, that

it is hard to see what would be gained by

extracting a grain of performance from this

complex system. It is evident that [both the

categorical and the variable rules proposed]

are a part of the speaker’s knowledge of

language.”

16

What do people say?

Simply delimiting a set of grammatical sentences provides

only a very weak description of a language, and of the

ways people choose to express ideas in it

Probability densities over sentences and sentence

structures can give a much richer view of language

structure and use

In particular, we find that (apparently) categorical

constraints in one language often reappear as the same

soft generalizations and tendencies in other languages

[Givón 1979, Bresnan, Dingare, and Manning 2001]

Linguistic theory should be able to uniformly capture

these constraints, rather than only recognizing them when

they are categorical

17

Explaining more: what determines

ditransitive vs. NP PP for dative verb

[Bresnan, Cueni, Nikitina, and Baayen 2005]

Build mixed effects [logistic regression] model over a

corpus of examples

Model is able to pull apart the correlations between

various predictive variables

Explanatory variables:

Discourse accessibility, definiteness, pronominality, animacy

(Thompson 1990, Collins 1995)

Differential length in words of recipient and theme (Arnold et al.

2000, Wasow 2002, Szmrecsanyi 2004b)

Structural parallelism in dialogue (Weiner and Labov 1983,

Bock 1986, Szmrecsanyi 2004a)

Number, person (Aissen 1999, 2003; Haspelmath 2004; Bresnan

and Nikitina 2003)

Concreteness of theme

Broad semantic class of verb (transfer, prevent, communicate, …)
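As a sketch of the general shape of such a model: a mixed-effects logistic regression combines weighted predictors with a group-level random effect. The notation below is assumed for illustration and is not taken from the slides: $x_k$ are the explanatory variables, $\beta_k$ their fixed-effect coefficients, and $u_g$ a random intercept for a grouping factor $g$ (for example, the verb).

```latex
P(\text{double object} \mid x, g)
  = \frac{1}{1 + \exp\!\bigl(-(\beta_0 + \sum_{k} \beta_k x_k + u_g)\bigr)},
\qquad u_g \sim \mathcal{N}(0, \sigma^2)
```

Because every predictor has its own coefficient, the fitted model can attribute an effect to, say, animacy while holding length and definiteness fixed, which is what lets it pull apart correlated variables.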

18

Explaining more: what determines

ditransitive vs. NP PP for dative verb

[Bresnan, Cueni, Nikitina, and Baayen 2005]

What does one learn?

Almost all the predictive variables have independent significant effects

Only a couple fail to: e.g., number of recipient

This shows that reductionist theories of the phenomenon, which try to reduce things to one or two factors, are wrong

First object NP is preferred to be:

Given, animate, definite, pronoun, shorter

Model can predict whether to use a double object or NP

PP construction correctly 94% of the time.

It captures much of what is going on in this choice

These factors exceed in importance differences from

individual variation

19

Statistical parsing models also give

insight into processing

[Levy 2004]

A realistic model of human sentence processing must explain:

Robustness to arbitrary input, accurate disambiguation

Inference on the basis of incomplete input [Tanenhaus et al

1995, Altmann and Kamide 1999, Kaiser and Trueswell 2004]

Sentence processing difficulty is differential and localized

On the traditional view, resource limitations, especially

memory, drive processing difficulty

Locality-driven processing [Gibson 1998, 2000]: multiple

and/or more distant dependencies are harder to process

Processing:

the reporter who attacked the senator (easy)

the reporter who the senator attacked (hard)

20

Expectation-driven processing

[Hale 2001, Levy 2005]

Alternative paradigm: Expectation-based

models of syntactic processing

Expectations are weighted averages over

probabilities

Structures we expect are easy to process

Modern computational linguistics techniques of statistical parsing → precise psycholinguistic model

Model matches empirical results of many

recent experiments better than traditional

memory-limitation models

21

Example: Verb-final domains

Locality predictions and empirical results

[Konieczny 2000] looked at reading times at German final

verbs

Locality-based models (Gibson 1998) predict difficulty for

longer clauses

But Konieczny found that final verbs were read faster in

longer clauses

Sentence: locality prediction / observed result

Er hat die Gruppe geführt (He led the group): easy / slow

Er hat die Gruppe auf den Berg geführt (He led the group to the mountain): hard / fast

Er hat die Gruppe auf den sehr schönen Berg geführt (He led the group to the very beautiful mountain): hard / fastest

22

Deriving Konieczny’s results

Seeing more = having more information

More information = more accurate expectations

[Tree diagram: S over NP (Er), Vfin (hat) and VP; the VP contains NP (die Gruppe), PP (auf den Berg) and V (geführt). Candidate next constituents: NP? PP-goal? PP-loc? ADVP? Verb?]

Once we’ve seen a PP goal we’re unlikely to see

another

So the expectation of seeing anything else goes up

Rigorously tested: for pi(w), using a PCFG derived

empirically from a syntactically annotated corpus of

German (the NEGRA treebank)
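The argument above can be illustrated with a toy calculation of surprisal (negative log probability). The probabilities below are invented for illustration and are not estimates from the NEGRA treebank; treating a second goal PP as impossible, rather than merely unlikely, is also a simplifying assumption.

```python
import math

def surprisal(dist, outcome):
    """Negative log2 probability of an outcome under a distribution, in bits."""
    return -math.log2(dist[outcome])

def condition_out(dist, seen):
    """Drop an option that has already been used and renormalize the rest."""
    rest = {k: v for k, v in dist.items() if k != seen}
    total = sum(rest.values())
    return {k: v / total for k, v in rest.items()}

# Hypothetical distribution over what follows "Er hat die Gruppe".
next_constituent = {"NP": 0.10, "PP-goal": 0.30, "PP-loc": 0.15, "ADVP": 0.15, "Verb": 0.30}

before = surprisal(next_constituent, "Verb")
after_pp = surprisal(condition_out(next_constituent, "PP-goal"), "Verb")

print(f"verb surprisal with no PP seen:  {before:.2f} bits")
print(f"verb surprisal after a goal PP: {after_pp:.2f} bits")
```

Removing an option that has already been used shifts probability mass onto the remaining ones, so the final verb becomes less surprising. That is the direction of Konieczny's reading-time result.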

23

Predictions from the

Hale 2001/Levy 2004 model

[Figure: for Er hat die Gruppe (auf den (sehr schönen) Berg) geführt in the No PP, Short PP and Long PP conditions, the plot compares reading time at the final verb (ms, axis 450 to 520), negative log probability (axis 14.8 to 16.2) and locality-based difficulty (ordinal, 1 to 3)]

Locality-based models (e.g., Gibson 1998, 2000) would violate monotonicity

24

2.&3. Learning sentence structure

from distributional evidence

Start with raw language, learn syntactic structure

Some have argued that learning syntax from positive data alone is impossible:

Gold, 1967: Non-identifiability in the limit

Chomsky, 1980: The poverty of the stimulus

Many others have felt it should be possible:

Lari and Young, 1990

Carroll and Charniak, 1992

Alex Clark, 2001

Mark Paskin, 2001

… but it is a hard problem

25

Language learning idea 1:

Lexical affinity models

Words select other words on syntactic grounds

congress narrowly passed the amended bill

Link up pairs with high mutual information

[Yuret, 1998]: Greedy linkage

[Paskin, 2001]: Iterative re-estimation with EM

Evaluation: compare linked pairs (undirected) to a gold standard

Method: Accuracy

Paskin, 2001: 39.7

Random: 41.7
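To make "pairs with high mutual information" concrete, here is a minimal sketch that scores word pairs by pointwise mutual information over sentence-level co-occurrence. It is not Yuret's greedy linker or Paskin's EM model: the corpus is invented, and counting co-occurrence per sentence is an assumption made to keep the example short.

```python
import math
from collections import Counter
from itertools import combinations

def pmi_table(sentences):
    """Pointwise mutual information (in bits) for word pairs sharing a sentence."""
    n = len(sentences)
    word_counts, pair_counts = Counter(), Counter()
    for sent in sentences:
        words = sorted(set(sent.split()))
        word_counts.update(words)
        # Pairs are stored in alphabetical order, so each pair has one key.
        pair_counts.update(combinations(words, 2))
    return {
        (a, b): math.log2((c / n) / ((word_counts[a] / n) * (word_counts[b] / n)))
        for (a, b), c in pair_counts.items()
    }

# Hypothetical toy corpus.
corpus = [
    "congress passed the bill",
    "congress passed the budget",
    "the senate rejected the bill",
    "the court rejected the appeal",
]

table = pmi_table(corpus)
for pair, score in sorted(table.items(), key=lambda kv: -kv[1])[:5]:
    print(pair, round(score, 2))
```

A word such as the, which appears in every sentence, carries no information about its neighbours, so its pairs score zero, while congress and passed score higher because they always appear together.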

26

Idea: Word Classes

Mutual information between words does not necessarily

indicate syntactic selection.

expect brushbacks but no beanballs

Individual words like brushbacks are entwined with

semantic facts about the world.

Syntactic classes, like NOUN and ADVERB are bleached of

word-specific semantics.

We could build dependency models over word classes.

[cf. Carroll and Charniak, 1992]

[Diagram: dependency structure drawn over the word classes of the tagged sentence]

congress/NOUN narrowly/ADVERB passed/VERB the/DET amended/PARTICIPLE bill/NOUN

27

Problems: Word Class Models

Random: 41.7

Carroll and Charniak, 92: 44.7

Adjacent Words: 53.2

Issues:

Too simple a model – doesn’t work much better supervised

No representation of valence/distance of arguments

[Diagrams: dependency analyses of congress narrowly passed the amended bill, and of stock prices fell tagged NOUN NOUN VERB]

28

Bias: Using better dependency

representations [Klein and Manning 2004]

[Diagram: a head, its argument (arg), and the distance between them]

Model: Classes? / Distance? / Local factor

Paskin 01: no / no / P(a | h)

Carroll & Charniak 92: yes / no / P(c(a) | c(h))

Klein/Manning (DMV): yes / yes / P(c(a) | c(h), d)

Adjacent Words: 55.9

Klein/Manning (DMV): 63.6

29

Idea: Can we learn phrase structure

constituency as distributional clustering

the president said that the downturn was over

Word: contexts

president: the __ of, the __ said

governor: the __ of, the __ appointed

said: sources __, president __ that

reported: sources __

Resulting clusters: {president, governor}, {the, a}, {said, reported}

[Finch and Chater 92, Schütze 93, many others]
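The idea of clustering words by their contexts can be sketched as follows. This is a simplified illustration rather than the method of any of the cited papers: each word gets a count vector over (left word, right word) contexts, and words are compared by cosine similarity. The toy corpus and the boundary symbols `<s>` and `</s>` are assumptions made here.

```python
import math
from collections import Counter, defaultdict

def context_vectors(sentences):
    """Map each word to a count vector over (left word, right word) contexts."""
    vectors = defaultdict(Counter)
    for sent in sentences:
        tokens = ["<s>"] + sent.split() + ["</s>"]
        for left, word, right in zip(tokens, tokens[1:], tokens[2:]):
            vectors[word][(left, right)] += 1
    return vectors

def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0

# Hypothetical toy corpus.
corpus = [
    "the president said that the downturn was over",
    "the governor said that the downturn was over",
    "the president reported that the downturn was over",
    "sources said the governor appointed the president",
]

vecs = context_vectors(corpus)
print("president ~ governor:", round(cosine(vecs["president"], vecs["governor"]), 2))
print("said ~ reported:     ", round(cosine(vecs["said"], vecs["reported"]), 2))
print("president ~ said:    ", round(cosine(vecs["president"], vecs["said"]), 2))
```

Words of the same class share contexts and so come out similar, while a noun and a verb share none in this corpus. A clustering algorithm run on these vectors would group them accordingly.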

30

Distributional learning

There is much debate in the child language

acquisition literature about distributional learning

and the possibility of kids successfully using it:

[Maratsos & Chalkley 1980] suggest it

[Pinker 1984] suggests it's impossible (too many

correlations, too abstract properties needed)

[Redington et al. 1998] say it succeeds because there

are dominant cues relevant to language

[Mintz et al. 2002] look at distributional structure of

input

Speaking in terms of engineering, let me just tell you

that it works really well!

It’s one of the most successful techniques that we have

– my group uses it everywhere for NLP.

31

Idea: Distributional Syntax?

[Klein and Manning NIPS 2003]

Can we use distributional clustering for

learning syntax?

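[Tree diagram: S over NP (factory payrolls) and VP; the VP contains fell and PP (in september)]

factory payrolls fell in september

Span: fell in september; Context: payrolls __

Span: payrolls fell in; Context: factory __ sept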
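The span and context pairs above can be enumerated mechanically. The sketch below lists every contiguous span of a sentence together with the words on either side of it. The function name and the edge markers `<s>` and `</s>` are choices made here for illustration, and the example works over words as on the slide.

```python
def spans_with_contexts(words):
    """Yield (span, context) for every contiguous span of the sentence.

    The context is the pair (word before the span, word after the span),
    with "<s>" and "</s>" standing in at the sentence edges.
    """
    padded = ["<s>"] + words + ["</s>"]
    n = len(words)
    for i in range(n):
        for j in range(i + 1, n + 1):
            yield tuple(words[i:j]), (padded[i], padded[j + 1])

sentence = "factory payrolls fell in september".split()
for span, (left, right) in spans_with_contexts(sentence):
    print(f"{' '.join(span):35s} {left} __ {right}")
```

A sentence of n words has n(n+1)/2 spans, only some of which are constituents in any given tree. Telling the two kinds apart from their yields and contexts is the learning problem.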

32

Problem: Identifying Constituents

Distributional classes are easy to find…

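[Figure: sequences plotted against Principal Component 1 and Principal Component 2, grouped into NP-, VP- and PP-like clusters. Example sequences:]

the final vote, two decades, most people

the final, the initial, two of the

of the, with a, without many

in the end, on time, for now

decided to, took most of, go with

… but figuring out which are constituents is hard.

[Figure: the same principal-component plot, with constituents (+) and non-constituents (-) marked]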

33

A Nested Distributional Model:

Constituent-Context Model (CCM)

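P(S|T) = the product of the factors shown on the tree for factory payrolls fell in september (words abbreviated to their initials; + marks a constituent):

P(fpfis|+) P(__|+)

P(fp|+) P(__ fell|+)

P(fis|+) P(p __|+)

P(is|+) P(fell __|+)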
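Written out in general form, the factors above follow one pattern: each span contributes a term for its yield and a term for its context, both conditioned on whether the tree brackets that span. The notation is assumed here for illustration: $\alpha_{ij}$ is the yield of the span from $i$ to $j$, $x_{ij}$ is its context, and $b_{ij} \in \{+,-\}$ records whether $T$ makes that span a constituent.

```latex
P(S \mid T) = \prod_{\langle i,j \rangle} P(\alpha_{ij} \mid b_{ij}) \, P(x_{ij} \mid b_{ij})
```

The slide displays only the factors for constituent (+) spans. In the full product the remaining spans also contribute, with $b_{ij} = -$.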

34

Initialization: A little UG?

Tree Uniform

Split Uniform

35

Results: Constituency

van Zaanen, 00: 35.6

Right-Branch: 70.0

Our Model (CCM): 81.6

[Figure: Treebank parse compared with CCM parse]

36

Combining the two models

[Klein and Manning ACL 2004]

Dependency Evaluation

Random: 45.6

DMV: 62.7

CCM + DMV: 64.7

Constituency Evaluation

Random: 39.4

CCM: 81.0

CCM + DMV: 88.0 (!)

Supervised PCFG constituency recall is at 92.8

Qualitative improvements

Subject-verb groups gone, modifier placement improved

37

Beyond surface syntax…

[Levy and Manning, ACL 2004]

Syntactic category, parent, grandparent (subj vs obj extraction; VP finiteness)

Head words (wanted vs to vs eat)

Presence of daughters (NP under S)

Syntactic path (Gildea & Jurafsky 2002): <SBAR,S,VP,S,VP>

[Diagram label: origin?]

Plus: feature conjunctions, specialized features for expletive

subject dislocations, passivizations, passing featural

information properly through coordinations, etc., etc.

cf. Campbell (2004 ACL) – a lot of linguistic knowledge

38

Evaluation on dependency metric:

gold-standard input trees

[Bar chart: accuracy (F1), axis 70 to 100, for ADVP, SBAR, ADJP, VP, S, NP and Overall, comparing Levy & Manning with gold input trees against CF gold tree deps]

39

Might we also learn a linking to

argument structure distributionally?

Why it might be possible:

Instances of open:

She opened the door with a key.

The door opened as they approached.

The bottle opened easily.

Fortunately, the corkscrew opened the bottle.

He opened the bottle with a corkscrew.

She opened the bottle very carefully.

This key opens the door of the cottage.

He opened the door.

Word Distributions:

agent: she, he

patient: door, bottle

instrument: key, corkscrew

Allowed Linkings:

{ agent=subject, patient=object }

{ patient=subject }

{ agent=subject, patient=object, instrument=obl_with }

{ instrument=subject, patient=object }

40

Probabilistic Model Learning

[Grenager and Manning 2006 EMNLP]

Given a set of observed verb

instances, what are the most

likely model parameters?

Use unsupervised learning in

a structured probabilistic

graphical model

A good application for EM!

M-step:

Trivial computation

E-Step:

We compute conditional

distributions over

possible role vectors for

each instance

And we repeat
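The two steps can be written generically as below. This is the standard form of EM rather than the specific parameterization of the model on this slide, and the symbols are assumed here: $x_i$ is the $i$-th observed verb instance, $r$ ranges over its possible role vectors, and $\theta$ collects the model parameters.

```latex
\text{E-step:}\quad q_i(r) = P(r \mid x_i;\, \theta^{(t)})
\qquad
\text{M-step:}\quad \theta^{(t+1)} = \arg\max_{\theta} \sum_i \sum_r q_i(r)\, \log P(x_i, r;\, \theta)
```

When the model's distributions are multinomials, the M-step reduces to normalizing expected counts, which is the sense in which it is a trivial computation.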

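[Graphical model diagram for one instance of the verb v = give: linking l = { 0=subj, 1=obj2, 2=obj1 }; ordering o = [ 0=subj, M=np, 1=obj2, 2=obj1 ]; for each of four argument positions, a syntactic relation s1 to s4 (subj, np, obj1, obj2), a role r1 to r4 (0, M, 2, 1) and a word w1 to w4 (plunge, today, them, test)]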

41

Semantic role induction results

The model achieves some traction, but it’s hard

Learning becomes harder with greater abstraction

This is the right research frontier to explore!

Verb: give

Linkings:

{0=subj,1=obj2, 2=obj1}: 0.46

{0=subj,1=obj1, 2=to}: 0.19

{0=subj,1=obj1}: 0.05

…

Roles:

0: it, he, bill, they, that, …

1: power, right, stake, …

2: them, it, him, dept., …

…

Verb: pay

Linkings:

{0=subj,1=obj1}: 0.32

{0=subj,1=obj1, 2=for}: 0.21

{0=subj}: 0.07

{0=subj,1=obj1, 2=to}: 0.05

{0=subj,1=obj2, 2=obj1}: 0.05

…

Roles:

0: it, they, company, he, …

1: $, bill, price, tax …

2: stake, gov., share, amt., …

…

42

Conclusions

Probabilistic models give precise descriptions of a variable,

uncertain world

There are many phenomena in syntax that cry out for noncategorical or probabilistic representations of language

Probabilistic models can – and should – be used over rich

linguistic representations

They support effective learning and processing

Language learning does require biases or priors

But a lot more can be learned from modest amounts of

input than people have thought

There’s not much evidence of a poverty of the stimulus

preventing such models being used in acquisition.

43