Experiments to show Mental Images


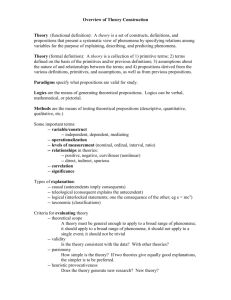

Clarifying what Functionalism is…

[Diagram: Sensory systems (Sight, Hearing, Taste, Smell, Touch, Balance, Heat/cold, …) pass sensory input to Central systems (Categorisation, Attention, Memory, Knowledge representation, Numerical cognition, Thinking, Learning, Language, …), which send motor output to Motor systems (Voice, Limbs, Fingers, Head, …). All of this sits on a Physical Implementation.]

- Functionalism says we can study the information processing tasks (and the algorithms for doing them) independently from the physical level.
- Clarifying what "multiply realisable" means: mental states are able to be manifested in various systems, even perhaps computers, so long as the system performs the appropriate functions (Wikipedia definition).

What about Brooks? (remember the tutorial article)
- Is he a functionalist? Yes! Otherwise he wouldn't be trying to use computers to implement the processing in his robots.
- He would instead be trying to use some organic system, as a non-functionalist would believe that the processing happening in an animal's neurons could not be performed by a computer.

So what was it Brooks was saying about the real world?
- He said the sensory side needs to be connected to the real world, not a simulation.
- e.g. a digital camera getting data from the real world, with noise, messy lighting conditions, etc.
- He said the motor side also needs to be connected to the real world, not a simulation.
- e.g. wheels on the robot, which might slip on the ground or stick on the carpet, etc., i.e. messy.
- He didn't say he had any problem with the algorithms being implemented on a computer.

The Physical Symbol System
- Some sort of Physical Symbol System seems to be needed to explain human abilities.
- Humans are "programmable":
  - We can take on new information and instructions.
  - We can learn to follow new procedures, e.g. a new mathematical procedure.
  - The human mind is very flexible.
- …But this is not true of other animals, even apes.
  - Animals have special solutions for specific tasks, e.g. frog prey location.
- The flexible human Physical Symbol System must have evolved from animals' processing systems.
  - Details of the physical implementation are unknown.
- Let's stick with the Physical Symbol System for now… and see if we can flesh out more details.

The Language of Thought
- What is the language we "think in"? Is it our natural language, e.g. English, or "mentalese"?
- Some introspective arguments against natural language:
  - A word is "on the tip of my tongue", but I can't find it.
  - It is difficult to define concepts in natural language, e.g. dog, anger.
  - We have a feeling of knowing something, but it is hard to translate into language.
- Some observable evidence against natural language:
  - Children reason with concepts before they can speak.
  - We often remember the gist of what is said, not the exact words.
- Cognitive science experiment (recall after a 20-second delay):
  - He sent a letter about it to Galileo, the great Italian scientist.
  - He sent Galileo, the great Italian scientist, a letter about it.
  - A letter about it was sent to Galileo, the great Italian scientist.
  - Galileo, the great Italian scientist, sent him a letter about it.

Represent as Propositions
- Just like the logic we had for AI.
[Diagram: propositions drawn as small trees: likes(john, mary), isa(a, apple), and a gives relation linking john, a and mary.]

Evidence for Propositions
- A cognitive science experiment (Kintsch and Glass).
- Consider two different sentences, both with three "content words":
  - "The settler built the cabin by hand." (one 3-place relation)
  - "The crowded passengers squirmed uncomfortably." (three 1-place relations)
- Subjects recalled the first sentence better.
- This suggests it was simpler in the representation. (Cognitive Science involves a fair bit of guessing!)
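As a rough illustration of "represent as propositions", the sentences above can be hand-encoded as relation/argument tuples. This is a sketch, not part of the original experiments. The `Proposition` type, the relation names (`send`, `build`, `crowded`, …) and the argument order are all assumptions. Modifiers such as "about it" and the appositive are dropped for brevity. The passive sentence does not name the sender, so the sketch assumes context supplies "he". The encoding shows two things. The first three Galileo sentences collapse to one proposition (the "gist"), while the fourth swaps the roles. The settler sentence needs one 3-place relation, whereas the passengers sentence needs three 1-place relations.

```python
from typing import NamedTuple


class Proposition(NamedTuple):
    """A relation applied to ordered arguments, e.g. likes(john, mary)."""
    relation: str
    args: tuple[str, ...]

    def __str__(self) -> str:
        return f"{self.relation}({', '.join(self.args)})"


# Hypothetical hand-coded gists of the four Galileo sentences.
# Assumed argument order: send(sender, recipient, thing).
# The passive sentence leaves the sender implicit; we assume context supplies "he".
GALILEO = {
    "He sent a letter about it to Galileo, the great Italian scientist.":
        Proposition("send", ("he", "galileo", "letter")),
    "He sent Galileo, the great Italian scientist, a letter about it.":
        Proposition("send", ("he", "galileo", "letter")),
    "A letter about it was sent to Galileo, the great Italian scientist.":
        Proposition("send", ("he", "galileo", "letter")),
    "Galileo, the great Italian scientist, sent him a letter about it.":
        Proposition("send", ("galileo", "he", "letter")),
}

# Hypothetical encodings of the Kintsch and Glass sentences.
SETTLER = [Proposition("build", ("settler", "cabin", "hand"))]  # one 3-place relation
PASSENGERS = [                                                    # three 1-place relations
    Proposition("crowded", ("passengers",)),
    Proposition("squirm", ("passengers",)),
    Proposition("uncomfortable", ("passengers",)),
]

if __name__ == "__main__":
    gists = set(GALILEO.values())
    print(f"{len(GALILEO)} sentences, {len(gists)} distinct propositions:")
    for p in sorted(gists):
        print(" ", p)
    print("settler:", [str(p) for p in SETTLER])
    print("passengers:", [str(p) for p in PASSENGERS])
```

Counting propositions like this is one crude way to make "simpler in the representation" concrete. Whether recall really tracks that count is what the experiment tested, and the code makes no claim about it.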
Associative Networks
- Idea: put together the bits of the propositions that are similar.
[Diagram: the propositions likes, isa and gives merged into one network through the shared nodes john, mary, a and apple.]
- Each node has some level of activation.
- Activation spreads in parallel to connecting nodes.
- Activation fades rapidly with time.
- A node's total activation is divided among its links.
  - These rules make sure it doesn't spread everywhere.
- Nodes and links can have different capacities.
  - Important ones are activated very often and have higher capacity.
- These ideas seem to match our intuition from introspection: one thought links to another connected one.
- Cognitive science experiment (McKoon and Ratcliff):
  - They made short paragraphs of connected propositions.
  - Subjects viewed 2 paragraphs for a short time.
  - Subjects were then shown 36 test words in sequence and asked whether each word had occurred in one of the stories.
  - Some of the 36 words were preceded by a word from the same story; others were preceded by a word from the other story.
- A preceding word from the same story helped them remember.
  - …Suggests this is because the words were linked in a network.
- They also showed recall was better if the words were closer in the network.
  - …Suggests activation weakens as it spreads.

Schemas
- Propositional networks can represent specific knowledge, e.g. "John gave the apple to Mary".
- …But what about general knowledge, or commonsense?
  - An apple is an edible fruit, grows on a tree, has a roundish shape, is often red when ripe…
- We could augment our proposition network by adding more propositions to the node for apple.
  - Apple then becomes a concept.
  - The connections to apple are a schema for the concept.
- What about more advanced concepts/schemas, like a trip to a restaurant?...
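The associative-network rules and the apple schema described above can be sketched as a small spreading-activation model. Everything concrete here is an assumption chosen for illustration: the graph, node names such as `likes#1`, the decay factor of 0.5, and the equal split of a node's activation across its links. Node and link capacities are not modelled. Each step is parallel, because every node updates from the previous step's values. A node passes its activation on, divided equally among its links, and the amount received is scaled down so that activation fades.

```python
from collections import defaultdict

# Hypothetical network built from the slide's propositions: each proposition
# becomes a relation node linked to its argument nodes.
EDGES = [
    ("likes#1", "john"), ("likes#1", "mary"),
    ("isa#1", "a"), ("isa#1", "apple"),
    ("gives#1", "john"), ("gives#1", "a"), ("gives#1", "mary"),
    # Assumed general-knowledge links that turn "apple" into a concept (its schema).
    ("apple", "edible fruit"), ("apple", "grows on tree"),
    ("apple", "roundish shape"), ("apple", "red when ripe"),
]


def build_graph(edges: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Undirected adjacency sets: activation can flow either way along a link."""
    graph: dict[str, set[str]] = defaultdict(set)
    for u, v in edges:
        graph[u].add(v)
        graph[v].add(u)
    return dict(graph)


def spread(graph: dict[str, set[str]], activation: dict[str, float],
           decay: float = 0.5) -> dict[str, float]:
    """One parallel step: each node divides its activation among its links,
    and what arrives at the neighbours is scaled by `decay` (fading)."""
    new: dict[str, float] = defaultdict(float)
    for node, level in activation.items():
        links = graph.get(node, set())
        if not links:
            continue  # isolated node: nowhere for activation to go
        share = decay * level / len(links)
        for neighbour in links:
            new[neighbour] += share
    return dict(new)


def total_activation(graph: dict[str, set[str]], source: str,
                     steps: int = 4, decay: float = 0.5) -> dict[str, float]:
    """Activation each node receives, summed over `steps` steps from `source`."""
    current = {source: 1.0}
    totals: dict[str, float] = defaultdict(float)
    for _ in range(steps):
        current = spread(graph, current, decay)
        for node, level in current.items():
            totals[node] += level
    return dict(totals)


def schema(graph: dict[str, set[str]], concept: str) -> list[str]:
    """The connections to a concept node, read as its schema."""
    return sorted(graph.get(concept, set()))


if __name__ == "__main__":
    g = build_graph(EDGES)
    for node, level in sorted(total_activation(g, "john").items(), key=lambda kv: -kv[1]):
        print(f"{node:15s} {level:.4f}")
    print("apple schema:", schema(g, "apple"))
```

Starting from `john`, nearby nodes such as `mary` collect more activation than `a`, which in turn collects more than `apple`. After four steps nothing has reached the apple schema nodes, which mirrors "activation weakens as it spreads". Total activation also halves at every step, so it cannot flood the whole network.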
Scripts
Elements of a script…
- Identifying name or theme, e.g. eating in a restaurant, visiting the doctor.
- Typical roles, e.g. customer, waiter, cook.
- Entry conditions, e.g. customer hungry, has money.
- Sequence of goal-directed scenes: enter, get a table, order, eat, pay bill, leave.
- Sequence of actions within a scene, e.g. for ordering: get menu, read menu, decide order, give order to waiter.

How to represent a script?
- We could use a proposition network for all the parts… but maybe the whole script should be a unit.
  - Introspection suggests that it is activated as a unit, without interference from associated propositions.
- Experimental evidence (Bower, Black, Turner 1979)…
  - They got subjects to read a short story that followed a script but didn't fill in all the details.
  - Subjects were then presented with various sentences, some from the story and some not.
  - Some trick sentences were included: not from the story, but part of the script.
  - Subjects were asked to rate each sentence from 1 (sure I didn't read it) to 7 (sure I did read it).
  - Subjects had a tendency to think they had read the trick sentences.
  - Suggests that they activate the script and fill in the blanks in memory.

…Starting to get a Model of the Mind
- Propositional-schema representations are stored in long-term memory.
- Associative activation is used to retrieve relevant memories.
- …But many details are unspecified. We need more machinery to account for how we:
  - assess retrieved information and see whether it relates to current goals;
  - decompose goals into subgoals;
  - draw conclusions, make decisions and solve problems.
- More importantly: how do new propositions and schemas get into memory?
  - Schemas are often generalised from examples, not taught.
- What about working memory?

Working Memory
- Most long-term memory is not "active" most of the time.
- We just keep a few things in working memory for current processing.
- It is very limited: try multiplying 3-digit numbers without paper.
  - Working memory holds 3-4 chunks at a time.
- Why so limited?
  (It seems useful to have more nowadays.)
  - Maybe complex circuitry is required.
  - Maybe it is costly in energy.
  - Maybe tasks were less complex in the environment of early humans.
  - Or maybe more working memory would cause too many clashes, or be too hard to manage.
- However, the limits can be overcome by skill formation.
- Note also: a limit of 3-4 does not mean other "propositions" are inactive.
  - There could be a lot more going on subconsciously.

Skill Acquisition
- With a lot of practice we can "automate" many tasks.
  - We distinguish this from "controlled processing", which uses working memory.
- Once automated, a task:
  - takes little attention or working memory (these are "freed up");
  - is hard not to perform: we cannot control it well.
- Most advanced skills use a combination: automatic processes under the direction of controlled processes, to meet goals.
  - Examples: a martial arts expert, or a musician.

Is Skill Acquisition Separate?
- Evidence from neuropsychology: people with severe "anterograde amnesia".
  - They cannot learn new facts, i.e. can't get them into long-term propositional memory…
  - …but they can learn new skills.
  - Example: they can learn to solve the Towers of Hanoi with practice, but cannot remember any occasion when they practised it.
- Suggests that a different part of the brain handles each.
  - Skill may reside in the visual and motor systems, rather than the central systems.
- Maybe this is because of evolution: animals often have good skill acquisition.
  - Maybe humans evolved a specific new module for high-level functions.

Mental Images
- Sometimes we seem to evoke visual images in the "mind's eye".
  - Subjective experience suggests the visual image is separate from propositions…
  - …but we need experimental evidence.
- In imagining a scene (example: search a box of blocks for a 3cm cube with two adjacent blue sides):
  - Properties are added to a description.
  - But not as many properties as would be present in a real visual scene (support, illumination, shading, shadows on near surfaces).
  - The image does not include properties not available to visual perception (e.g. the other side of the cube).
- Intuition suggests that the "mind's eye" mimics visual perception.
  - Maybe it uses the same hardware?
  - That would mean the "central system" sends information to the vision system.
- Hypothesis: there is a human "visual buffer".
  - A short-term memory structure.
  - Used in both visual perception and the "mind's eye".
  - Special features/procedures: we can load it, refresh it and perform transformations on it; it has a centre with high resolution; the focus of attention can be moved around.
- Assuming it exists… what good is it?
  - It allows you to pull things out of your visual long-term memory.
  - You can use it to build a scene, with all spatial details filled in.
  - It is useful to plan a route, or a rearrangement of objects.
  - Experiment: how many edges does a cube have? (Assuming the answer is not in long-term memory.)

Experiments to show Mental Images
- Test a special procedure: mental rotation.
- The time taken depended on how much rotation was needed.
  - Suggests that we really rotate in the "visual buffer".
- However… just because we rotate things doesn't necessarily mean that we do it in the "visual buffer". …We need more evidence.
  - PET brain scans have shown that the "occipital cortex" is used.
  - The "occipital cortex" is known to be involved in visual processing.

So far… the "Symbolic" approach to explaining cognition.
An alternative… the "Connectionist" approach…

Connectionist Approach
What is connectionism?
- Concepts are not stored as clean "propositions".
  - They are spread throughout a large network.
  - "Apple" activates thousands of microfeatures.
  - Activation of apple depends on context; there is no single dedicated unit.
- Neural plausibility:
  - Graceful degradation, unlike logical representations.
- Cognitive plausibility:
  - Could explain the entire system, rather than some task in the central system (symbolic accounts can be quite fragmented).
  - Could explain the "pattern matching" that seems to happen everywhere (for example, in retrieval of memories).
  - Explains how human concepts/categories do not have clear-cut definitions: certain attributes increase likelihood (an ANN handles this well), but there are no hard and fast rules.
  - Explains how concepts are learned: adjust weights with experience.

Another Perspective on Cognitive Science / AI
- We have seen multiple models for the mind, and each has an "AI version" too:
  - Propositions: AI's logic statements.
  - Scripts: AI's case-based reasoning.
  - Mental images: some AI work, but not much.
  - Connectionist models: AI's neural networks.
- This gives us another perspective on Cognitive Science / AI: both are working in different directions.
  - An AI person starts with a computer and asks, "How can I make this do something that a mind does?" They may take some inspiration from what a mind does, and how.
  - A Cognitive Science person starts with a mind and asks, "How can I explain something this does, using the 'computer metaphor'?" They may take some inspiration from how computers can do it, especially from how AI people have shown certain things can be done.
- Which model is correct? …Possibly all of them, i.e. all working together.
  - e.g. we have seen that logic could be implemented on top of neurons (it need not be done in a "clean", symbolic way).
  - This would give the opportunity for logical reasoning, while still having "scruffy" intuitions going on in the background.
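Two claims above can both be illustrated with a single artificial neuron: logic could be implemented on top of neurons, and concepts are learned by adjusting weights with experience. This is a hedged sketch that uses the classic perceptron learning rule, a textbook technique swapped in here rather than anything the slides specify. Weights are integers and the learning rate is 1, which keeps the arithmetic exact. The unit is never given the rule for AND or OR. It only sees examples, and after each mistake it adds `error * input` to its weights and bias.

```python
from itertools import product

Example = tuple[tuple[int, int], int]


def predict(weights: list[int], bias: int, x: tuple[int, int]) -> int:
    """A threshold unit: fires (1) only if the weighted input sum exceeds 0."""
    total = weights[0] * x[0] + weights[1] * x[1] + bias
    return 1 if total > 0 else 0


def train_perceptron(examples: list[Example], max_epochs: int = 50) -> tuple[list[int], int]:
    """Perceptron learning rule (learning rate 1): after each mistake,
    add (target - output) * input to each weight, and the error to the bias."""
    weights, bias = [0, 0], 0
    for _ in range(max_epochs):
        mistakes = 0
        for x, target in examples:
            error = target - predict(weights, bias, x)
            if error:
                mistakes += 1
                weights[0] += error * x[0]
                weights[1] += error * x[1]
                bias += error
        if mistakes == 0:  # every example classified correctly: stop early
            break
    return weights, bias


INPUTS = list(product((0, 1), repeat=2))
AND = [(x, x[0] & x[1]) for x in INPUTS]
OR = [(x, x[0] | x[1]) for x in INPUTS]

if __name__ == "__main__":
    for name, data in (("AND", AND), ("OR", OR)):
        w, b = train_perceptron(data)
        print(name, "weights", w, "bias", b, "outputs", [predict(w, b, x) for x, _ in data])
```

The same unit and the same learning rule end up computing different logical functions depending only on the examples it was trained on. That is a small-scale version of "adjust weights with experience".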