6_Online_Assessment_Evaluation


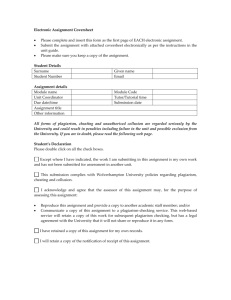

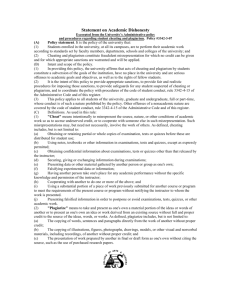

6. Online Assessment and Evaluation Practices
Dr. Curtis J. Bonk
Indiana University and CourseShare.com
http://php.indiana.edu/~cjbonk
cjbonk@indiana.edu

Online Student Assessment

Assessment Takes Center Stage in Online Learning (Dan Carnevale, April 13, 2001, Chronicle of Higher Education)
“One difference between assessment in classrooms and in distance education is that distance-education programs are largely geared toward students who are already in the workforce, which often involves learning by doing.”

Focus of Assessment?
1. Basic Knowledge, Concepts, Ideas
2. Higher-Order Thinking Skills, Problem Solving, Communication, Teamwork
3. Both of the Above!!!
4. Other…

Assessments Possible Online
- Portfolios of Work
- Discussion/Forum Participation
- Online Mentoring
- Weekly Reflections
- Tasks Attempted or Completed, Usage, etc.

More Possible Assessments
- Quizzes and Tests
- Peer Feedback and Responsiveness
- Cases and Problems
- Group Work
- Web Resource Explorations & Evaluations

Sample Portfolio Scoring Dimensions (10 pts each) (see: http://php.indiana.edu/~cjbonk/p250syla.htm)
1. Richness
2. Coherence
3. Elaboration
4. Relevancy
5. Timeliness
6. Completeness
7. Persuasiveness
8. Originality

1. Insightful
2. Clear/Logical
3. Original
4. Learning
5. Feedback/Responsive
6. Format
7. Thorough
8. Reflective
9. Overall Holistic

E-Peer Evaluation Form
Peer Evaluation. Name: ____________________
Rate on a Scale of 1 (low) to 5 (high):
___ 1. Insight: creative, offers analogies/examples, relationships drawn, useful ideas and connections, fosters growth.
___ 2. Helpful/Positive: prompt feedback, encouraging, informative, makes suggestions & advice, finds and shares info.
___ 3. Valuable Team Member: dependable, links group members, there

E-Case Analysis Evaluation
Peer Feedback Criteria (1 pt per item; 5 pts/peer feedback)
(a) Provides additional points that may have been missed.
(b) Corrects a concept, asks for clarification where needed, debates issues, disagrees & explains why.
(c) Ties concepts to another situation or refers to the text or coursepack.
(d) Offers valuable insight based on personal experience.
(e) Overall constructive feedback.

Issues to Consider…
- Bonus pts for participation?
- Peer evaluation of work?
- Assess improvement?
- Is it timed?
- Allow retakes if students lose their connection? How many retakes?
- Give unlimited time to complete?

Issues to Consider…
- Cheating?
- Is it really that student?
- Authenticity?
- Negotiating tasks and criteria?
- How do you measure competency?
- How do you demonstrate learning online?

Increasing Cheating Online ($7-$30/page, http://www.syllabus.com/ January, 2002, Phillip Long, Plagiarism: IT-Enabled Tools for Deceit?)
- http://www.academictermpapers.com/
- http://www.termpapers-on-file.com/
- http://www.nocheaters.com/
- http://www.cheathouse.com/uk/index.html
- http://www.realpapers.com/
- http://www.pinkmonkey.com/ (“you’ll never buy Cliffnotes again”)

Reducing Cheating Online
- Ask yourself, why are they cheating?
- Do they value the assignment?
- Are tasks relevant and challenging?
- What happens to the task after it is submitted: is it reused, woven in, posted?
- Is it due at the end of term?
- Is there a real audience?
- Look at pedagogy before calling the plagiarism police!

Reducing Cheating Online
- Proctored exams
- Vary items in exam
- Make course too hard to cheat
- Try Plagiarism.com ($300)
- Use mastery learning for some tasks
- Random selection of items from an item pool
- Use test passwords, rely on IP# screening
- Assign collaborative tasks

Reducing Cheating Online ($7-$30/page, http://www.syllabus.com/ January, 2002, Phillip Long, Plagiarism: IT-Enabled Tools for Deceit?)
- http://www.plagiarism.org/ (resource)
- http://www.turnitin.com/ (software, $100, free 30-day demo/trial)
- http://www.canexus.com/ (software; essay verification engine, $19.95)
- http://www.plagiserve.com/ (free database of 70,000 student term papers & cliff notes)
- http://www.academicintegrity.org/ (assoc.)
- http://sja.ucdavis.edu/avoid.htm (guide)
- http://www.georgetown.edu/honor/plagiarism.html

Turnitin Testimonials
“Many of my students believe that if they do not submit their essays, I will not discover their plagiarism. I will often type a paragraph or two of their work in myself if I suspect plagiarism. Every time, there was a ‘hit.’ Many students were successful plagiarists in high school. A service like this is needed to teach them that such practices are no longer acceptable and certainly not ethical!”

Online Testing Tools

Test Selection Criteria (Hezel, 1999)
- Easy to Configure Items and Test
- Handles Symbols
- Scheduling of Feedback (immediate?)
- Provides Clear Input of Dates for Exam
- Easy to Pick Items for Randomizing
- Randomizes Answers Within a Question
- Weighting of Answer Options

More Test Selection Criteria
- Recording of Multiple Submissions
- Timed Tests
- Comprehensive Statistics
- Summarize in Portfolio and/or Gradebook
- Confirmation of Test Submission

More Test Selection Criteria (Perry & Colon, 2001)
- Supports multiple item types—multiple choice, true-false, essay, keyword
- Can easily modify or delete items
- Incorporates graphic or audio elements?
- Control over number of times students can submit an activity or test
- Provides feedback for each response

More Test Selection Criteria (Perry & Colon, 2001)
- Flexible scoring—score first, last, or average submission
- Flexible reporting—by individual or by item, and cross tabulations
- Outputs data for further analysis
- Provides item analysis statistics (e.g., Test Item Frequency Distributions)

Web Resources on Assessment
1. http://www.indiana.edu/~best/
2. http://www.indiana.edu/~best/best_suggested_links.shtml
3. http://www.indiana.edu/~best/samsung/

Rubric for evaluating technology projects: http://www.indiana.edu/~tickit/learningcenter/rubric.htm

Online Survey Tools for Assessment

Sample Survey Tools
- Zoomerang (http://www.zoomerang.com)
- IOTA Solutions (http://www.iotasolutions.com)
- QuestionMark (http://www.questionmark.com/home.html)
- SurveyShare (http://SurveyShare.com; from Courseshare.com)
- Survey Solutions from Perseus (http://www.perseusdevelopment.com/fromsurv.htm)
- Infopoll (http://www.infopoll.com)

Web-Based Survey Advantages
- Faster collection of data
- Standardized collection format
- Computer graphics may reduce fatigue
- Computer-controlled branching and skip sections
- Easy to answer by clicking
- Wider distribution of respondents

Web-Based Survey Problems: Why Lower Response Rates?
- Low response rate
- Lack of time
- Unclear instructions
- Too lengthy
- Too many steps
- Can’t find URL

Survey Tool Features
- Support different types of items (Likert, multiple choice, forced ranking, paired comparisons, etc.)
- Maintain email lists and email invitations
- Conduct polls
- Adaptive branching and cross tabulations
- Modifiable templates & library of past surveys
- Publish reports
- Different types of accounts—hosted, corporate, professional, etc.
Web-Based Survey Solutions: Some Tips…
- Send second request
- Make URL link prominent
- Offer incentives near top of request
- Shorten survey, make it attractive and easy to read
- Credible sponsorship—e.g., university
- Disclose purpose, use, and privacy
- E-mail cover letters
- Prenotify of intent to survey

Tips on Authentication
- Check e-mail access against list
- Use password access
- Provide keycode, PIN, or ID #
- (Futuristic Other: Palm Print, fingerprint, voice recognition, iris scanning, facial scanning, handwriting recognition, picture ID)

Evaluation…
Champagne & Wisher (in press): “Simply put, an evaluation is concerned with judging the worth of a program and is essentially conducted to aid in the making of decisions by stakeholders.” (e.g., does it work as effectively as the standard instructional approach?)

Evaluation Purposes
- Cost Savings
- Improved Efficiency/Effectiveness
- Learner Performance/Competency Improvement/Progress

What did they learn?
- Assessing learning impact
- How well do learners use what they learned?
- How much do learners use what they learn?

Kirkpatrick’s 4 Levels
1. Reaction
2. Learning
3. Behavior
4. Results

[Figure 26. How Respondent Organizations Measure Success of Web-Based Learning According to the Kirkpatrick Model. Y-axis: Percent of Respondents (0 to 90). X-axis: Kirkpatrick's Evaluation Level (Learner satisfaction; Change in knowledge, skill, attitude; Job performance; ROI).]

My Evaluation Plan…

Considerations in Evaluation Plan
1. Student
2. Instructor
3. Training
4. Task
5. Tech Tool
6. Course
7. Program
8. University or Organization

What to Evaluate?
1. Student—attitudes, learning, jobs.
2. Instructor—popularity, survival.
3. Training—effectiveness, integratedness.
4. Task—relevance, interactivity, collab.
5. Tool—usable, learner-centered, friendly, supportive.
6. Course—interactivity, completion.
7. Program—growth, model(s), time to build.
8. University—cost-benefit, policies, vision.

1. Measures of Student Success
(Focus groups, interviews, observations, surveys, exams, records)
- Positive Feedback, Recommendations
- Increased Comprehension, Achievement
- High Retention in Program
- Completion Rates or Course Attrition
- Jobs Obtained, Internships
- Enrollment Trends for Next Semester

1. Student Basic Quantitative
- Grades, Achievement
- Number of Posts
- Participated
- Computer Log Activity—peak usage, messages/day, time on task or in system
- Attitude Surveys

1. Student High-End Success
- Message complexity, depth, interactivity, questioning
- Collaboration skills
- Problem finding/solving and critical thinking
- Challenging and debating others
- Case-based reasoning, critical thinking measures
- Portfolios, performances, PBL activities

2. Instructor Success
- Technology training programs
- Adequate funding
- Utilize Web to share teaching
- Positive attitudes, more signing up
- Course recognized in tenure decisions
- Understands how to coach

3. Training Outside Support
- Training (FacultyTraining.net)
- Courses and Certificates (JIU, e-education)
- Reports, Newsletters, & Pubs (e.g., surveys)
- Aggregators of Info (CourseShare, Merlot)
- Global Forums (FacultyOnline.com; GEN: http://www.vu.vlei.com)
- Resources, Guides/Tips, Link Collections, Online Journals, Library Resources (e-global Library)

TELEStraining.com Courses:
1. DWeb: Training the Trainer—Designing, Developing, and Delivering Web-Based Training ($1,200 Canadian) (8 weeks: Technology, design, learning, moderating, assessment, course development, …)
2. Techniques for Online Teaching and Moderation
3. Writing Multimedia Messages for Training

Certified Online Instructor Program
- Walden Institute—12-Week Online Certification (Cost = $995)
- 2 tracks: one for higher ed and one for online corporate trainers
- Online tools and purpose
- Instructional design theory & techniques
- Distance ed evaluation
- Quality assurance
- Collab learning communities

Distance Ed Certificate Program (Univ of Wisconsin-Madison)
- 12-18 month self-paced certificate program, 20 CEUs, $2,500-$3,185
- Integrate into practical experiences
- Combines distance learning formats to cater to busy working professionals
- Open enrollment and self-paced
- Support services
- http://www.utexas.edu/world/lecture/

3. Training Inside Support…
- Instructional Consulting
- Mentoring (strategic planning $)
- Small Pots of Funding
- Facilities
- Summer and Year-Round Workshops
- Office of Distributed Learning
- Colloquiums, Tech Showcases, Guest Speakers
- Newsletters, guides, active learning grants, annual reports, faculty development, brown bags

Technology and Professional Dev: Ten Tips to Make it Better (Rogers, 2000)
1. Offer training
2. Give technology to take home
3. Provide on-site technical support
4. Encourage collegial collaboration
5. Send to prof development conference
6. Stretch the day
7. Encourage research
8. Provide online resources
9. Lunch bytes, faculty institutes
10. Celebrate success

RIDIC5-ULO3US Model of Technology Use
4. Tasks (RIDIC):
- Relevance
- Individualization
- Depth of Discussion
- Interactivity
- Collaboration-Control-Choice-Constructivistic-Community

RIDIC5-ULO3US Model of Technology Use
5. Tech Tools (ULOUS):
- Utility/Usable
- Learner-Centeredness
- Opportunities with Outsiders
- Online
- Ultra Friendly
- Supportive

6. Course Success
- Few technological glitches/bugs
- Adequate online support
- Increasing enrollment trends
- Course quality (interactivity rating)
- Monies paid
- Accepted by other programs

7. Online Program or Course Budget
http://webpages.marshall.edu/~morgan16/onlinecosts/
brian.morgan@marshall.edu
(asks how you pay, how large the course is, tech fees charged, # of courses, tuition rate, etc.)
- Indirect Costs: learner disk space, coordination, phone, admin training, creating student criteria, accreditation, integration with existing technology and procedures, library resources, on-site orientation & tech training, faculty training, office space, supplies
- Direct Costs: courseware, instructor, business manager, help desk, books, seat time, bandwidth and data communications, server, server back-up, course developers, postage

7. Program: Online Content Considerations
- Live mentors?
- Beyond content dumping?
- Interactivity?
- Collaboration?
- Individual or cohort groups?
- Lecture or problem-based learning?
- Record keeping and assessment?

8. Institutional Success
- E-Enrollments from new students, alumni, existing students
- Additional grants
- Press, publication, partners, attention
- Cost-Benefit model
- Faculty attitudes
- Acceptable policies

8. Increase Accessibility
- Make Web material ADA compliant (Bobby)
- Embed interactivity in lessons
- Determine student learning preferences
- Conduct usability testing
- Consider slowest speed systems
- Orientations, training, support materials, e.g., CD-ROM

8. Initial Lessons to Learn
- Start small, be clear, be flexible
- Create standards and policies
- Consider Instructor Compensation: online teaching is not the same
- Look at obstacles and support structures
- Mixed or blended may dominate

8. What steps are needed to get it to work?
- Institutional support/White Paper
- Identify goals, policies, assess plans, resources (hardware, software, support, people)
- Faculty qualifications & compensation
- Audience Needs: student or corporate
- Finding Funding & Partnering
- Test software: usability testing, system compatibility, fits tech plans

8. How long to build a program?
- Year 1: Experimental Stage
- Year 2: Development Stage (hire people, create marketing materials, assess, etc.)
- Year 3: Revision Stage
- Year 4: Move On Stage

Final advice…whatever you do…

Ok, How and What Do You Assess and Evaluate…?