Ch 9: Interests, Creativity, and Nontest Indicators

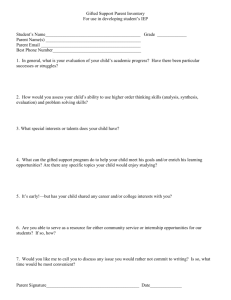


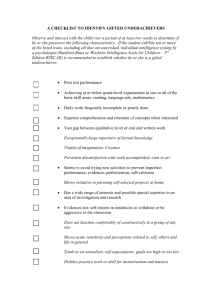

Chapters 9 & 12

“The time has come,” the Walrus said, “To talk of many things . . . .”

As teachers, we tend to believe that how we feel affects how we think . . . of course, cognitive psychology tells us it is the other way around . . . so, let’s explore! For purposes of discussion, let’s use the passé phrase “Affective Domain” for this important sub-surface area related to achievement. In this chapter we explore ways to obtain and analyze this type of useful information.

“Affective Domain” Explorations . . .

- Interest Inventories / Attitude Surveys
- Ability and Aptitude Tests
- Creativity Tests
- Personality Tests
- Non-test Indicators & Unobtrusive Measures

Let’s begin with a little attitude . . .
Satisfaction Surveys / Self-Assessment Reports

- Could be used with individuals in your class, grade level, building, or school district.
- Organizing the survey:
  - Who is the target (students, parents, public)?
  - What questions will be asked (school climate, achievement)?
  - How will it be administered (in class, sent home, telephone)?
- Typical survey statements:
  - I believe I am doing well in class.
  - My child’s teacher really knows my child.
  - Teachers teach me in a way that makes me want to learn.
  - I feel my tax money is being well spent.

Thoughts on . . .
Student Self-Reports and Self-Assessment

- May encourage students to develop skills in self-awareness and self-assessment.
- Like any self-report, honesty is an issue.
- The classroom needs to have a positive atmosphere.
- Don’t use them to determine a student’s grade; anonymous data collection could ensure this.
- Best used for your own feedback.
- In a nonthreatening environment, there is a positive correlation between self-reports of achievement and actual achievement measured on academic tests.
- Most assessments of this nature use a Likert Scale . . . let’s learn a bit about this scale.

We’ve got class, some classroom ideas on using . . .
Inventories/Surveys, and the Likert Scale

The Likert Scale is the most common method used to assess areas in the Affective Domain. It is both simple and flexible. A Likert Scale can be created for any topic on which you want to assess students’ interests, attitudes, opinions, or feelings. Simply:

1. Define an affective domain topic related to your classroom.
2. Think of different facets of the topic.
3. Generate a series of favorable and unfavorable statements regarding the topic. These are sometimes called “survey items,” and the whole group is often called a “survey” or “inventory.”
4. Develop the response scale for the survey.
5. Administer the survey.
6. Score the results.
7. Identify and eliminate items that fail to function in accord with the other items (i.e., look for bad items).

Rensis Likert (1903–1981) (pronounced 'Lick-urt')

Likert, born and raised in Cheyenne, WY, was training to be an engineer with the Union Pacific Railroad when the Great Railroad Strike of 1922 occurred. The lack of communication between the two parties made a profound impression on him and may have led him to study conflict management and organizational theory for most of his life. In 1926, he graduated from the University of Michigan, Ann Arbor. He returned there in 1946 as professor of psychology and sociology. In addition to his famous “Likert Scale,” he is noted for his management dictum that “The greater the loyalty of the members of a group toward the group, the greater is the motivation among the members to achieve the goals of the group, and the greater the probability that the group will achieve its goals.”

A Likert-type item may have many . . .
Response Label Variations

Creating Scores for Likert-type Items

- Provide number values to the scale; add them up to suggest an individual’s overall attitude score. (See below.)
- If you have many people’s opinions, you can also add the numbers by opinion topic, then divide by the number of respondents to get an average attitude score. (See President Concerns, 2008.)

Reverse Wording Option when . . .
Creating and Scoring Likert-type Items

- To keep some students from straight-lining their responses (marking the same answer all the way down), word some statements in the reverse direction.
- Be sure to remember you did this when you total the points. (See below.)
- Also see the Rosenberg Self-Esteem Scale.

Pitfalls to Avoid . . .
when creating a survey for your classroom or school.

- Be sure you and your students know the purpose of the survey and how the information will be used (e.g., Will individual responses be confidential?).
- Keep it short (e.g., generally one page is sufficient).
- Beware of lingo or jargon (e.g., Do you favor inclusion?).
- Watch out for ambiguous meaning (e.g., Which class is best?).
- Do not ask more than one question at a time (e.g., Do you favor more homework and more library assignments?).
- Avoid loaded or leading questions (e.g., Do you believe that it is important to treat your fellow students fairly?).
- Make sure that fixed-response questions have a place for every possible answer (e.g., Would you prefer to study history or economics?).
- Place the more sensitive questions at the end of the survey.
- Run the survey by other professionals before you distribute it. If necessary, obtain clearance from your principal or school district.
- Don't reward or punish students based on their responses.

Interests, Attitudes and Opinion Assessment: . . .
some closing questions

- What about student faking?
  - Students may choose the “socially desirable” response.
  - Students may try to please or shock the teacher.
  - The main remedy is a non-threatening environment.
- How stable are students’ interests, attitudes, and opinions?
  - May depend on the topic and person.
  - We do expect to change them . . . (or do you?).
- What about using constructed or free-response measures?
  - Can be used.
  - Not often used in practice.
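
The Likert scoring ideas from the slides above (number values summed per respondent, reverse-worded statements, averages across respondents, and a check for bad items) can be sketched in Python. This is only an illustration under stated assumptions: the 5-point scale, the three statements, and the four response sets are all made up, and the item-rest correlation is one common way to screen for items that do not behave like the others, not a method named in these slides.

```python
from math import sqrt

# Assumed 5-point scale: 1 = Strongly Disagree ... 5 = Strongly Agree.
SCALE_MAX = 5

# Hypothetical survey items; True marks a reverse-worded (unfavorable) statement.
ITEMS = [
    ("I enjoy reading in this class.", False),
    ("Reading assignments are a waste of time.", True),
    ("I would like more time for reading.", False),
]


def keyed(value, reverse):
    """Reverse-score if needed, so a higher number always means a more favorable attitude."""
    if not 1 <= value <= SCALE_MAX:
        raise ValueError(f"response {value} is outside 1..{SCALE_MAX}")
    return SCALE_MAX + 1 - value if reverse else value


def total_score(responses):
    """Sum one respondent's keyed values into an overall attitude score."""
    return sum(keyed(v, rev) for v, (_, rev) in zip(responses, ITEMS))


def item_means(all_responses):
    """Average keyed value per item: add by item, divide by number of respondents."""
    n = len(all_responses)
    return [
        sum(keyed(r[i], rev) for r in all_responses) / n
        for i, (_, rev) in enumerate(ITEMS)
    ]


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when either list has no variation."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def item_rest_correlations(all_responses):
    """Correlate each item with the sum of the remaining items (low values flag suspect items)."""
    rows = [[keyed(v, rev) for v, (_, rev) in zip(r, ITEMS)] for r in all_responses]
    result = []
    for i in range(len(ITEMS)):
        item = [row[i] for row in rows]
        rest = [sum(row) - row[i] for row in rows]
        result.append(pearson(item, rest))
    return result


# Hypothetical raw responses, one list per student, in item order.
responses = [
    [5, 1, 4],
    [4, 2, 4],
    [2, 4, 3],
    [5, 5, 5],  # a straight-liner: the reverse-worded item lowers this total
]

totals = [total_score(r) for r in responses]
means = item_means(responses)
correlations = item_rest_correlations(responses)

print("Totals per student:", totals)
print("Mean per item:", means)
print("Item-rest correlations:", [round(c, 2) for c in correlations])
```

With so few respondents the correlations are only illustrative; in a real class you would want many more responses before dropping an item.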
And a closing example . . .
Career Interest Inventories

These tests attempt to match a person’s personality and interests with a specific work environment and/or career.

Problems:

- Honesty 1 . . . “Would you rather compute wages for payroll records or read to a blind person?” Which is more socially acceptable?
- Honesty 2 . . . Knowing where the questions are leading . . . I want Special Education, so I know I should choose “read to a blind person.”
- Is there a connection between what one would like to do and what one would really be good at doing?
- What about the idea of “learning to like” on the job and/or developing new interests?

Widely used inventories (taking more than one is recommended):

- Strong
- Kuder

Mental Ability Tests . . .
the usual purpose of ability testing is prediction

- Mental ability is also called intelligence, aptitude, learning ability, academic potential, cognitive ability, ad infinitum.
- We have already discussed the IQ as a normed score . . . Let’s look a little deeper.
- Theories about mental ability:
  - Unitary theory; “g”
  - Multiple, independent abilities (about 7), e.g., verbal, numerical, spatial, perceptual
  - Hierarchical theory: currently dominant [See next slide]

Hierarchical Theory of Mental Ability

Individually Administered Mental Ability Tests

- General features:
  - One-on-one administration
  - Requires advanced training for administration
  - Usually about 1 hour
  - Mixture of items
- Examples:
  - WISC-IV
  - Stanford-Binet

Group Administered Mental Ability Tests

- General features:
  - Administered to any size group
  - Types of items: content similar to individually administered tests, but in multiple-choice format
- Examples:
  - Elementary/secondary
  - Others (e.g., SAT, ACT, GRE)

Learning Disabilities

The basic definition is simple, to wit, there is a discrepancy between measured intelligence and measured achievement.
But enter the Diagnostic and Statistical Manual of Mental Disorders (DSM-IV).

- The DSM-IV organizes each psychiatric diagnosis into five levels (axes) relating to different aspects of disorder or disability.
- Developmental and learning disorders are located on Axis I. Common Axis I disorders include phobias, depression, anxiety disorders, bipolar disorders, learning disabilities (like reading disorder, mathematics disorder, disorder of written expression, ADHD), and communication disorders (like stuttering).
- The DSM-IV states that it is produced to fulfill federal legislative mandates and that its use by people without clinical training can lead to inappropriate application of its contents. Appropriate use of the diagnostic criteria is said to require extensive clinical training, and its contents “cannot simply be applied in a cookbook fashion.”

. . . Among Professionals

- The American Psychiatric Association (APA), which publishes the DSM, has stated clearly that its “diagnostic labels” are primarily for use as a “convenient shorthand” among professionals.
- Do you think the “among professionals” phrase as used by the APA applies to high school teachers whose field is not Special Education?
- Implications?

IDEA 2004 . . .
. . . children with learning disabilities in high school.

- The Individuals with Disabilities Education Act (IDEA) is a law ensuring services to children with disabilities throughout the nation. Children and youth (ages 3-21) receive special education and related services under IDEA Part B.
- In updating the IDEA, Congress found that the education of children with disabilities, including learning disabilities (LD), can be made more effective by having high expectations for such children and ensuring their access to the general education curriculum in the regular classroom to the maximum extent possible.
- If students with LD are going to succeed in school, they must have access to teachers who know the general curriculum, as well as support from teachers trained in instructional strategies and techniques that address their specific learning needs.
- Unfortunately, studies have shown that students with LD are often the victims of a watered-down curriculum and teaching approaches that are neither individualized nor proven to be effective.

Creativity (aka Creative Thinking)

- Definition? More than 60 different definitions of creativity can be found in the psychological literature.
- Other terms one often hears associated with creative thinking are divergent thinking, originality, ingenuity, and unusualness.
- Creative thinking is generally considered to involve the creation or generation of ideas, processes, experiences, or objects. Critical thinking is concerned with their evaluation.
- In measuring creativity, we typically use constructed-response items, looking for one or more of the “creativity” characteristics.
- The format is similar to that of an essay: the student is given a prompt . . . except now we are looking for divergent thinking, not convergent thinking.

Example “Prompts” for creative thinking

- General:
  - All the uses for (a common object)
  - All the words beginning with (a letter)
  - Captions or titles for . . .
- Field specific (tailored to content):
  - How would the U.S. differ today if . . .
  - Different ending for a play or story
  - Diverse descriptions for a work of art

Creativity: Scoring . . .
a menu of five primary ways to score responses.

1. Count: sum the number of ideas or responses.
2. Count with quality rating: each response has a quality rating (e.g., 1-3); sum the ratings.
3. Single best response: scan all responses, find the student’s “best” response, and rate only that response using a quality rating scale (e.g., 1-3).
4. Originality: the response(s) provided is/are infrequently seen (you might need experience to determine this).
5. Different perspectives: count opposing ideas the student generates in responding to a prompt.

Standardized Tests for Creativity

- Nationwide, many school districts use standardized creativity tests for the purpose of screening and identifying gifted students.
- One of the most often used is the Torrance Tests of Creative Thinking (TTCT). It is produced in two forms: “Thinking Creatively with Pictures” and “Thinking Creatively with Words.”
- Check it out at the “Scholastic Testing Service – Gifted” website: http://www.ststesting.com/2005giftttct.html

In the News . . .
Cleveland Plain Dealer, January 22, 2008, post on Gifted Education in Ohio

“No federal law requires school districts to identify or serve gifted students -- unlike special education for children with disabilities. That leaves it up to the individual states, and only 31 of them require districts to provide gifted services, according to the National Association for Gifted Children. Ohio is not among them.”

In Ohio, “districts are only required to identify gifted students. Roughly 16 percent of the state's public school enrollment is classified as gifted. But last school year, only 26 percent of those students received either full or partial services, according to data filed with the Ohio Department of Education . . . . Research shows that while some gifted students do well without special services, the majority need more than the usual classroom experience.”

As Paul Harvey might say,
And now, the rest of the story . . .

- Teachers like to use “rest of” variations in teaching. These tasks (aka tests) are often called “projective techniques.”
- Students are asked to be creative and think about what came before, what might happen next, or how a story might end.
- Before leaving this area, let’s take a look at some “rest of” tests.
- To the top right is an example of the Rorschach Ink Blot Test. What do you think it measures?

The Thematic Apperception Test . . .
What do you think this test measures?
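
Looking back at the creativity scoring menu, the first four methods are mechanical enough to sketch in Python once a teacher has listed and rated the responses. Everything in this sketch is hypothetical: the prompt, the students, the 1-3 quality ratings, and the rule that an idea counts as "original" when only one student in the class offered it. The fifth method (different perspectives) depends on judging which ideas oppose each other, so it is left out.

```python
from collections import Counter

# Hypothetical class data for the prompt "All the uses for a brick".
# Each response is (idea, teacher's quality rating on an assumed 1-3 scale).
class_responses = {
    "Ana": [("doorstop", 1), ("paperweight", 1), ("grind into pigment", 3)],
    "Ben": [("doorstop", 1), ("build a wall", 1)],
    "Cy": [
        ("doorstop", 2),
        ("bookend", 2),
        ("paperweight", 1),
        ("heat it as a bed warmer", 3),
    ],
}


def count_score(responses):
    """Method 1: number of ideas offered."""
    return len(responses)


def rated_score(responses):
    """Method 2: sum of the quality ratings."""
    return sum(rating for _, rating in responses)


def best_score(responses):
    """Method 3: rating of the single best response (0 if there are none)."""
    return max((rating for _, rating in responses), default=0)


def originality_score(student, data, max_students=1):
    """Method 4: count the student's ideas offered by at most `max_students` students.

    The cutoff is an assumption; the slides only say original responses are
    infrequently seen and that judging this may take experience.
    """
    # Count how many students offered each idea (each idea once per student).
    frequency = Counter(
        idea for responses in data.values() for idea in {i for i, _ in responses}
    )
    own_ideas = {idea for idea, _ in data[student]}
    return sum(1 for idea in own_ideas if frequency[idea] <= max_students)


scores = {
    name: {
        "count": count_score(responses),
        "rated": rated_score(responses),
        "best": best_score(responses),
        "original": originality_score(name, class_responses),
    }
    for name, responses in class_responses.items()
}

for name, result in scores.items():
    print(name, result)
```

Note that the four methods can rank students differently, which is one reason to decide on a scoring method before reading the responses.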
The “Draw a Person” Test . . .
What do you think this test measures?

Behavior Rating Scales

- Many professionals express the need to move away from norm-referenced measures and recommend a more functional assessment approach.
- Members of the counseling field are also advocating behavioral assessment alternatives to more formal procedures.
- The following recommendations for educators are appropriate when considering a behavior rating scale:
  1. Have a variety of people who know the child complete the scale (e.g., caregivers, parents, teachers).
  2. Make sure ratings of the child’s behavior are collected from a number of different environments.
  3. Before using a particular rating scale, make sure it reflects the overall goals of the assessment process.
  4. Take care that information about the student is not skewed toward the negative.
  5. Be aware that scales reflect perceptions about students; multiple informants and inter-rater reliability checks can corroborate or contradict these perceptions.

Areas often covered on a . . .
Behavior Rating Scale

- Aggression
- Anger
- Anxiety
- Depression
- Hyperactivity
- Inattention
- Opposition
- Withdrawal

Example of a simple . . .
Behavior Rating Scale

| This student . . . | Never | Sometimes | Often | Always |
| --- | --- | --- | --- | --- |
| 1. Arrives late for class | 0 | 1 | 2 | 3 |
| 2. Daydreams | 0 | 1 | 2 | 3 |
| 3. Does sloppy work | 0 | 1 | 2 | 3 |
| 4. Talks inappropriately | 0 | 1 | 2 | 3 |
| 5. Disrespects authority | 0 | 1 | 2 | 3 |
| 6. Completes work late | 0 | 1 | 2 | 3 |
| 7. Seeks attention | 0 | 1 | 2 | 3 |
| 8. Hits other students | 0 | 1 | 2 | 3 |

Non-test Indicators as . . .
important sources of data on student accomplishment.

- These indicators serve to remind us that schools are pursuing goals other than high test scores.
- The Ohio School Report Card includes both test and non-test indicators.

Examples of Data Collected:

- Routine record indexes: absentee rates; tardiness rates; graduation rates; discipline rates; athletic, club, and volunteer participation rates; which teachers students had for class.
- Destination after high school: what are the connections among grades, test scores, and routine records and later “achievements”?
  - College? Where (selective or open admission)? Scholarship? Stay with it or drop out?
  - Workforce? Type of job? Pay? Fired (why)?
  - Other? (jail, unemployed, etc.) Is school complicit?

IF YOU CAN’T READ THIS, THANK A TEACHER . . . .

Non-test indicators include . . .
Unobtrusive measures

Unobtrusive measures are assessments that occur in the normal environment, with the persons involved unaware that they are being assessed.

Examples:

- Student graffiti: desktops, lockers, restrooms . . .
- Library books checked out in Spanish . . .
- Winners at YSU History Day . . .
- Hits on class website . . .

The best unobtrusive data are gathered daily, in the classroom, by teachers like you, as they teach.

How about . . .
An unobtrusive measure for yourself.

- Stress & the Biodot . . .
- Notice the scale differences on the two cards displayed.

Practical Advice

1. Include interests and attitudes in your objectives and in your assessment.
2. Identify and use a few non-test indicators of student accomplishment.
3. Practice making up simple scales for measuring interests and attitudes using the Likert method. Apply concepts of reliability and validity to all these tests.
4. Gain experience in developing prompts calling for divergent thinking and in scoring responses.

Terms and Concepts to Review and Study on Your Own (1)

- cognitive outcomes
- non-cognitive outcomes
- convergent thinking
- divergent thinking
- faking
- Likert method
- non-test indicator
- unobtrusive measure

Terms and Concepts to Review and Study on Your Own (2)

- behavior rating scale
- DSM-IV
- hierarchical model
- projective technique
- self-report inventory