Document

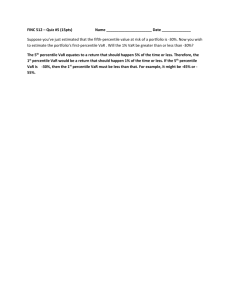

advertisement

Section 2.5

Important definition in the text:

The definition of the moment generating function (m.g.f.)

Definition 2.5-1

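If S is the space for a random variable X with p.m.f. f(x), then

$M(t) = \sum_{x \in S} e^{tx} f(x)$ and $M(0) = \sum_{x \in S} f(x) = 1$

$M'(t) = \sum_{x \in S} x e^{tx} f(x)$ and $M'(0) = \sum_{x \in S} x f(x) = E(X)$

$M''(t) = \sum_{x \in S} x^2 e^{tx} f(x)$ and $M''(0) = \sum_{x \in S} x^2 f(x) = E(X^2)$

$M'''(t) = \sum_{x \in S} x^3 e^{tx} f(x)$ and $M'''(0) = \sum_{x \in S} x^3 f(x) = E(X^3)$

and in general,

$M^{(n)}(t) = \sum_{x \in S} x^n e^{tx} f(x)$ and $M^{(n)}(0) = \sum_{x \in S} x^n f(x) = E(X^n)$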

1. An envelope contains two blue sheets of paper and three green

sheets of paper. Two sheets are randomly selected without

replacement, and the random variable X is defined to be the number

of blue sheets.

(a) Name the type of distribution X has.

X has a hypergeometric distribution with $N = 5$, $N_1 = 2$, $n = 2$.

(b) Find the p.m.f. for X.

$f(x) = \begin{cases} 3/10 & \text{if } x = 0 \\ 3/5 & \text{if } x = 1 \\ 1/10 & \text{if } x = 2 \end{cases}$

(c) Find the m.g.f. for X.

$M(t) = \frac{3 + 6e^{t} + e^{2t}}{10}$ for $-\infty < t < \infty$
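As a quick numerical check of Definition 2.5-1, the sketch below builds M(t) directly from the p.m.f. found in part (b) and compares a finite-difference estimate of M'(0) with E(X). The helper names (`mgf`, `raw_moment`, `derivative_at_zero`) and the step size `h` are illustrative assumptions, not notation from the text.

```python
import math
from fractions import Fraction

# p.m.f. from part (b): number of blue sheets when drawing without replacement
pmf = {0: Fraction(3, 10), 1: Fraction(3, 5), 2: Fraction(1, 10)}

def mgf(pmf, t):
    """M(t) = sum over x in S of e^(tx) f(x)."""
    return sum(math.exp(t * x) * float(p) for x, p in pmf.items())

def raw_moment(pmf, n):
    """E(X^n), computed exactly from the p.m.f."""
    return sum(Fraction(x) ** n * p for x, p in pmf.items())

def derivative_at_zero(pmf, h=1e-5):
    """Central-difference estimate of M'(0); h is an arbitrary small step."""
    return (mgf(pmf, h) - mgf(pmf, -h)) / (2 * h)

print(mgf(pmf, 0.0))            # M(0), the total probability
print(raw_moment(pmf, 1))       # E(X), exact
print(derivative_at_zero(pmf))  # numerical M'(0), should be close to E(X)
```

The exact moments use `Fraction` so that E(X) and E(X^2) can be compared with hand calculations without rounding.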

2. An envelope contains two blue sheets of paper and three green

sheets of paper. Two sheets are randomly selected with replacement,

and the random variable X is defined to be the number of blue

sheets.

(a) Name the type of distribution X has.

X has a b(2, 0.4) distribution.

(b) Find the p.m.f. for X.

$f(x) = \begin{cases} 9/25 & \text{if } x = 0 \\ 12/25 & \text{if } x = 1 \\ 4/25 & \text{if } x = 2 \end{cases}$

(c) Find the m.g.f. for X.

$M(t) = \frac{9 + 12e^{t} + 4e^{2t}}{25}$ for $-\infty < t < \infty$

3. Suppose the random variable X has m.g.f. $M(t) = (e^{-9t} + e^{4t})^3 / 8$. Find P(X < 0).

$M(t) = \frac{(e^{-9t} + e^{4t})^3}{8} = \frac{e^{-27t} + 3e^{-18t}e^{4t} + 3e^{-9t}e^{8t} + e^{12t}}{8} = \frac{e^{-27t} + 3e^{-14t} + 3e^{-t} + e^{12t}}{8}$

$= \frac{1}{8}e^{-27t} + \frac{3}{8}e^{-14t} + \frac{3}{8}e^{-t} + \frac{1}{8}e^{12t} = \sum_{x} e^{tx} f(x)$

The p.m.f. for X must be

$f(x) = \begin{cases} 1/8 & \text{if } x = -27 \\ 3/8 & \text{if } x = -14 \\ 3/8 & \text{if } x = -1 \\ 1/8 & \text{if } x = 12 \end{cases}$

P(X < 0) = 1/8 + 3/8 + 3/8 = 7/8
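The expansion in Exercise 3 can be reproduced mechanically. The sketch below stores each term of an m.g.f. as an exponent-to-coefficient dictionary and multiplies the factors out; it relies on the added observation that $(e^{-9t} + e^{4t})/2$ is itself the m.g.f. of a variable equal to -9 or 4 with probability 1/2 each, so cubing it matches summing three independent copies. The function name `convolve` is a hypothetical helper.

```python
from collections import defaultdict
from fractions import Fraction

def convolve(a, b):
    """Multiply two m.g.f.s stored as {exponent x: coefficient f(x)} dictionaries."""
    out = defaultdict(Fraction)
    for xa, pa in a.items():
        for xb, pb in b.items():
            out[xa + xb] += pa * pb  # e^(xa t) * e^(xb t) = e^((xa + xb) t)
    return dict(out)

# One factor (e^{-9t} + e^{4t}) / 2
step = {-9: Fraction(1, 2), 4: Fraction(1, 2)}

# Cube the factor to recover the p.m.f. read off from M(t)
pmf = convolve(convolve(step, step), step)
p_negative = sum(p for x, p in pmf.items() if x < 0)

print(sorted(pmf.items()))
print(p_negative)
```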

4. A random variable X has a b(n,p) distribution. Find the m.g.f. for X,

and use the m.g.f. to find E(X) and Var(X).

$M(t) = E(e^{tX}) = \sum_{x=0}^{n} e^{tx} \binom{n}{x} p^x (1-p)^{n-x} = \sum_{x=0}^{n} \binom{n}{x} (pe^t)^x (1-p)^{n-x} = (pe^t + 1 - p)^n = (1 - p + pe^t)^n$ for $-\infty < t < \infty$

Since $M(t) = (1 - p + pe^t)^n$,

$M'(t) = n(1 - p + pe^t)^{n-1} pe^t$, and

$M''(t) = n(1 - p + pe^t)^{n-1} pe^t + n(n-1)(1 - p + pe^t)^{n-2} p^2 e^{2t}$,

then

$M'(0) = np$

$M''(0) = np + n(n-1)p^2$

$E(X) = \mu = np$

$\mathrm{Var}(X) = \sigma^2 = E(X^2) - [E(X)]^2 = np + n(n-1)p^2 - (np)^2 = np(1-p)$
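The closed forms np and np(1 - p) can be checked against a direct sum over the binomial p.m.f. The sketch below is only a sanity check using exact fractions; `binomial_pmf` and `mean_and_variance` are illustrative names, and the b(2, 0.4) parameters are taken from Exercise 2.

```python
from fractions import Fraction
from math import comb

def binomial_pmf(n, p):
    """f(x) = C(n, x) p^x (1 - p)^(n - x) for x = 0, 1, ..., n."""
    return {x: comb(n, x) * p**x * (1 - p) ** (n - x) for x in range(n + 1)}

def mean_and_variance(pmf):
    """E(X) and E(X^2) - [E(X)]^2, summed directly from a p.m.f. dictionary."""
    mean = sum(x * f for x, f in pmf.items())
    second = sum(x * x * f for x, f in pmf.items())
    return mean, second - mean**2

n, p = 2, Fraction(2, 5)  # the b(2, 0.4) distribution from Exercise 2
mean, var = mean_and_variance(binomial_pmf(n, p))
print(mean, var)          # compare with n*p and n*p*(1 - p)
```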

Return to Section 2.4

A Bernoulli experiment is one which must result in one of two

mutually exclusive and exhaustive outcomes often labeled in general

as “success” and “failure”. The probability of “success” is often

denoted as p, and the probability of “failure” is often denoted as q, that

is, q = 1 − p. If X is a random variable defined to be one (1) when a

success occurs and zero (0) when a failure occurs, then X is said to

have a Bernoulli distribution with success probability p. The p.m.f. of

X is

$f(x) = p^x (1-p)^{1-x}$ if $x = 0, 1$.

$E(X) = p$

$\mathrm{Var}(X) = p(1-p)$

The m.g.f. of X is $M(t) = 1 - p + pe^t$ for $-\infty < t < \infty$

A sequence of Bernoulli trials is a sequence of independent Bernoulli

experiments where the probability of the outcome labeled “success”,

often denoted as p, remains the same on each trial; the probability of

“failure” is often denoted as q, that is, q = 1 – p. Suppose X1 , X2 , ..., Xn

make up a sequence of Bernoulli trials. If X = X1 + X2 + ... + Xn, then X

is a random variable equal to the number of successes in the sequence

of Bernoulli trials, and X is said to have a binomial distribution with

success probability p, denoted b(n, p). The p.m.f. of X is

$f(x) = \frac{n!}{x!\,(n-x)!}\, p^x (1-p)^{n-x}$ if $x = 0, 1, \ldots, n$

$E(X) = np$

$\mathrm{Var}(X) = np(1-p)$

The m.g.f. of X is $M(t) = (1 - p + pe^t)^n$ for $-\infty < t < \infty$

Return to Class Exercise #3 in Section 2.4

(c) Consider the random variable Q = “the number of clear marbles

when 3 marbles are selected at random with replacement” with

p.m.f. f(q). Find f(q), E(Q), and Var(Q).

$f(q) = \binom{3}{q} \left(\frac{2}{7}\right)^{q} \left(\frac{5}{7}\right)^{3-q}$ if $q = 0, 1, 2, 3$

$E(Q) = \mu_Q = (3)(2/7) = 6/7$

$\mathrm{Var}(Q) = \sigma_Q^2 = (3)(2/7)(5/7) = 30/49$

(d) Consider the random variable V = “the number of clear marbles

when 7 marbles are selected at random with replacement” with

p.m.f. g(v). Find g(v), E(V), and Var(V).

$g(v) = \binom{7}{v} \left(\frac{2}{7}\right)^{v} \left(\frac{5}{7}\right)^{7-v}$ if $v = 0, 1, 2, \ldots, 7$

$E(V) = \mu_V = (7)(2/7) = 2$

$\mathrm{Var}(V) = \sigma_V^2 = (7)(2/7)(5/7) = 10/7$

(e) Consider the random variable W = “the number of colored marbles

when 7 marbles are selected at random with replacement” with

p.m.f. h(w). Find h(w), E(W), and Var(W). (Note that V + W = 7.)

$h(w) = \binom{7}{w} \left(\frac{5}{7}\right)^{w} \left(\frac{2}{7}\right)^{7-w}$ if $w = 0, 1, 2, \ldots, 7$

$E(W) = \mu_W = (7)(5/7) = 5$

$\mathrm{Var}(W) = \sigma_W^2 = (7)(5/7)(2/7) = 10/7$

Return to Section 2.5

5. A random variable X has p.m.f. $f(x) = 2(1/3)^x$ if $x = 1, 2, 3, \ldots$.

(a) Verify that f(x) is a p.m.f.

$\sum_{x=1}^{\infty} 2(1/3)^x = 2\sum_{x=1}^{\infty} (1/3)^x = \frac{2}{3}\sum_{x=0}^{\infty} (1/3)^x = \frac{2}{3} \cdot \frac{1}{1 - 1/3} = 1$

(b) Find the m.g.f. for X.

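$M(t) = E(e^{tX}) = \sum_{x=1}^{\infty} e^{tx}(2)(1/3)^x = 2\sum_{x=1}^{\infty} (e^t/3)^x = 2(e^t/3)\sum_{x=1}^{\infty} (e^t/3)^{x-1}$

$= 2(e^t/3)\left[1 + (e^t/3) + (e^t/3)^2 + (e^t/3)^3 + (e^t/3)^4 + \cdots\right] = 2(e^t/3) \cdot \frac{1}{1 - (e^t/3)} = \frac{2e^t}{3 - e^t}$ for $t < \ln(3)$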

(c) Use the moment generating function to find E(X) and Var(X).

Since $M(t) = \frac{2}{3e^{-t} - 1}$, $M'(t) = 6e^{-t}(3e^{-t} - 1)^{-2}$, and

$M''(t) = -6e^{-t}(3e^{-t} - 1)^{-2} + 36e^{-2t}(3e^{-t} - 1)^{-3}$,

then

$M'(0) = 6/4 = 3/2$

$M''(0) = -6/4 + 36/8 = 3$

$E(X) = \mu = 3/2$

$\mathrm{Var}(X) = \sigma^2 = E(X^2) - [E(X)]^2 = 3 - (3/2)^2 = 3/4$
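The answers to Exercise 5 can be checked numerically by truncating the infinite sums. The sketch below is a rough check, assuming a cutoff of N = 200 terms (the neglected tail is far below floating-point precision) and a test point t = 0.5, which lies inside the region t < ln(3); both choices are arbitrary.

```python
import math

def f(x):
    """p.m.f. from Exercise 5: f(x) = 2 (1/3)^x for x = 1, 2, 3, ..."""
    return 2 * (1 / 3) ** x

N = 200  # assumed truncation point for the infinite sums
support = range(1, N + 1)

total = sum(f(x) for x in support)              # should be close to 1
mean = sum(x * f(x) for x in support)           # should be close to 3/2
second = sum(x * x * f(x) for x in support)     # E(X^2), should be close to 3
var = second - mean**2                          # should be close to 3/4

def mgf_series(t):
    """Truncated series for E(e^{tX})."""
    return sum(math.exp(t * x) * f(x) for x in support)

def mgf_closed(t):
    """Closed form 2 e^t / (3 - e^t), valid for t < ln(3)."""
    return 2 * math.exp(t) / (3 - math.exp(t))

print(total, mean, var)
print(mgf_series(0.5), mgf_closed(0.5))
```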

6. Among all the boxes of cereal produced at a certain factory, 8% are

underweight. Boxes are randomly selected and weighed, and the

following random variables are defined:

X = number of boxes weighed to get the first underweight box

Y = number of boxes weighed to get the third underweight box

V = number of acceptable boxes weighed to get the first

underweight box

W = number of acceptable boxes weighed to get the third

underweight box

S = number of boxes weighed before the third underweight box

(a) Find each of the following:

P(X = 1) = 0.08

P(X = 2) = (0.92)(0.08)

$P(X = 3) = (0.92)^2(0.08)$

$P(X = 4) = (0.92)^3(0.08)$

(b) Find each of the following:

$P(Y = 3) = (0.08)^3$

$P(Y = 4) = \binom{3}{1}(0.92)(0.08)^3$

$P(Y = 5) = \binom{4}{2}(0.92)^2(0.08)^3$

$P(Y = 6) = \binom{5}{3}(0.92)^3(0.08)^3$

(c) Find the p.m.f. of X.

$f_1(x) = (0.92)^{x-1}(0.08)$ if $x = 1, 2, 3, \ldots$

(d) Find the p.m.f. of Y.

$f_2(y) = \binom{y-1}{y-3}(0.92)^{y-3}(0.08)^3$ if $y = 3, 4, 5, \ldots$, where $\binom{y-1}{y-3}$ is the same as $\binom{y-1}{2}$

Suppose independent Bernoulli trials are performed until the rth success

is observed. If the random variable X is defined to be the number of

trials to observe the rth success, then the random variable X is said to

have a negative binomial distribution; in the special case where r = 1,

we say that X has a geometric distribution. The p.m.f. of X is

$f(x) = \binom{x-1}{r-1} p^r (1-p)^{x-r}$ if $x = r, r+1, r+2, \ldots$, where $\binom{x-1}{r-1}$ is the same as $\binom{x-1}{x-r}$

E(X), Var(X), and the m.g.f. of X are derived in Exercise 8.

7. Do Text Exercise 2.5-19.

$R(t) = \ln[M(t)]$

$R'(t) = \frac{M'(t)}{M(t)}$

$R''(t) = \frac{M(t)M''(t) - [M'(t)]^2}{[M(t)]^2}$

$R'(0) = \frac{M'(0)}{M(0)} = \frac{E(X)}{1} = E(X) = \mu$

$R''(0) = \frac{M(0)M''(0) - [M'(0)]^2}{[M(0)]^2} = \frac{E(X^2) - [E(X)]^2}{1^2} = \mathrm{Var}(X) = \sigma^2$

Look at the handout “Some Well-Known Series”:

$\frac{1}{1 - v} = 1 + v + v^2 + v^3 + v^4 + v^5 + v^6 + \cdots$ for $-1 < v < 1$

Take the anti-derivative of both sides to get

$-\ln(1 - v) = v + \frac{1}{2}v^2 + \frac{1}{3}v^3 + \frac{1}{4}v^4 + \frac{1}{5}v^5 + \frac{1}{6}v^6 + \cdots$ for $-1 < v < 1$

Let $w = 1 - v$:

$-\ln(w) = (1 - w) + \frac{(1 - w)^2}{2} + \frac{(1 - w)^3}{3} + \frac{(1 - w)^4}{4} + \frac{(1 - w)^5}{5} + \frac{(1 - w)^6}{6} + \cdots$ for $0 < w < 2$

$\ln(w) = (w - 1) - \frac{(w - 1)^2}{2} + \frac{(w - 1)^3}{3} - \frac{(w - 1)^4}{4} + \frac{(w - 1)^5}{5} - \frac{(w - 1)^6}{6} + \cdots$ for $0 < w < 2$

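$\frac{1}{1 - w} = 1 + w + w^2 + w^3 + w^4 + w^5 + w^6 + \cdots$ for $-1 < w < 1$

Take the derivative of both sides to get

$\frac{1}{(1 - w)^2} = 1 + 2w + 3w^2 + 4w^3 + 5w^4 + 6w^5 + 7w^6 + \cdots = 1 + \binom{2}{1}w + \binom{3}{1}w^2 + \binom{4}{1}w^3 + \binom{5}{1}w^4 + \binom{6}{1}w^5 + \binom{7}{1}w^6 + \cdots$ for $-1 < w < 1$

Take the derivative of both sides to get

$\frac{2}{(1 - w)^3} = 2 + (3)(2)w + (4)(3)w^2 + (5)(4)w^3 + (6)(5)w^4 + (7)(6)w^5 + \cdots$ for $-1 < w < 1$

Divide both sides by 2 to get

$\frac{1}{(1 - w)^3} = 1 + 3w + \frac{(4)(3)}{2}w^2 + \frac{(5)(4)}{2}w^3 + \frac{(6)(5)}{2}w^4 + \frac{(7)(6)}{2}w^5 + \cdots = 1 + \binom{3}{2}w + \binom{4}{2}w^2 + \binom{5}{2}w^3 + \binom{6}{2}w^4 + \binom{7}{2}w^5 + \cdots$ for $-1 < w < 1$

We can recognize the general pattern to be

$\frac{1}{(1 - w)^r} = \sum_{x=r}^{\infty} \binom{x-1}{r-1} w^{x-r}$ for $|w| < 1$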
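The general pattern can be spot-checked numerically before it is used for the negative binomial m.g.f. The sketch below compares a truncated version of the series with the closed form; the function names and the cutoff of 400 terms are illustrative assumptions, and the test values of w are arbitrary points with |w| < 1.

```python
from math import comb

def series(w, r, terms=400):
    """Truncated sum over x = r, r+1, ... of C(x-1, r-1) w^(x-r)."""
    return sum(comb(x - 1, r - 1) * w ** (x - r) for x in range(r, r + terms))

def closed_form(w, r):
    """1 / (1 - w)^r, valid for |w| < 1."""
    return 1 / (1 - w) ** r

for w in (0.3, -0.5):
    for r in (1, 2, 3, 5):
        print(w, r, series(w, r), closed_form(w, r))
```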

8. A random variable X has a negative binomial distribution. Find the

m.g.f. for X, and use the m.g.f. to find E(X) and Var(X).

$M(t) = E(e^{tX}) = \sum_{x=r}^{\infty} e^{tx} \binom{x-1}{r-1} p^r (1-p)^{x-r} = \sum_{x=r}^{\infty} \binom{x-1}{r-1} (pe^t)^r \left[(1-p)e^t\right]^{x-r}$

$= (pe^t)^r \sum_{x=r}^{\infty} \binom{x-1}{r-1} \left[(1-p)e^t\right]^{x-r} = \frac{(pe^t)^r}{\left[1 - (1-p)e^t\right]^r}$ for $t < -\ln(1-p)$

$R(t) = \ln[M(t)] = r\ln(pe^t) - r\ln\left[1 - (1-p)e^t\right] = r\ln(p) + rt - r\ln\left[1 - (1-p)e^t\right]$

$R'(t) = r + \frac{r(1-p)e^t}{1 - (1-p)e^t} = \frac{r}{1 - (1-p)e^t}$

$R''(t) = \frac{r(1-p)e^t}{\left[1 - (1-p)e^t\right]^2}$

$E(X) = \mu = R'(0) = \frac{r}{p}$

$\mathrm{Var}(X) = \sigma^2 = R''(0) = \frac{r(1-p)}{p^2}$
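The results R'(0) = r/p and R''(0) = r(1 - p)/p^2 can be checked with finite differences of R(t) = ln M(t). The sketch below is a numerical sanity check only; the step size h = 1e-4 is an assumption, and the values r = 3, p = 0.08 are borrowed from the cereal-box exercise.

```python
import math

def R(t, r, p):
    """R(t) = ln M(t) for the negative binomial m.g.f.; requires t < -ln(1 - p)."""
    return r * math.log(p) + r * t - r * math.log(1 - (1 - p) * math.exp(t))

def mean_and_variance(r, p, h=1e-4):
    """Central-difference estimates of R'(0) and R''(0)."""
    first = (R(h, r, p) - R(-h, r, p)) / (2 * h)
    second = (R(h, r, p) - 2 * R(0.0, r, p) + R(-h, r, p)) / h**2
    return first, second

mean, var = mean_and_variance(r=3, p=0.08)
print(mean, 3 / 0.08)                  # estimate vs. r / p
print(var, 3 * 0.92 / 0.08**2)         # estimate vs. r (1 - p) / p^2
```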

Suppose independent Bernoulli trials are performed until the rth success

is observed. If the random variable X is defined to be the number of

trials to observe the rth success, then the random variable X is said to

have a negative binomial distribution; in the special case where r = 1,

we say that X has a geometric distribution. The p.m.f. of X is

$f(x) = \binom{x-1}{r-1} p^r (1-p)^{x-r}$ if $x = r, r+1, r+2, \ldots$, where $\binom{x-1}{r-1}$ is the same as $\binom{x-1}{x-r}$

$E(X) = \frac{r}{p}$

$\mathrm{Var}(X) = \frac{r(1-p)}{p^2}$

The m.g.f. of X is $M(t) = \frac{(pe^t)^r}{\left[1 - (1-p)e^t\right]^r}$ for $t < -\ln(1-p)$

Return to Class Exercise #6

(e) Find each of the following:

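$E(X) = \mu_X = \frac{1}{0.08} = \frac{25}{2} = 12.5$

$\mathrm{Var}(X) = \sigma_X^2 = \frac{(1)(0.92)}{(0.08)^2} = \frac{575}{4} = 143.75$

$E(Y) = \mu_Y = \frac{3}{0.08} = \frac{75}{2} = 37.5$

$\mathrm{Var}(Y) = \sigma_Y^2 = \frac{(3)(0.92)}{(0.08)^2} = \frac{1725}{4} = 431.25$

$E(V) = \mu_V = E(X - 1) = \frac{23}{2} = 11.5$

$\mathrm{Var}(V) = \sigma_V^2 = \mathrm{Var}(X - 1) = \mathrm{Var}(X) = \frac{575}{4} = 143.75$

$E(W) = \mu_W = E(Y - 3) = \frac{69}{2} = 34.5$

$\mathrm{Var}(W) = \sigma_W^2 = \mathrm{Var}(Y - 3) = \mathrm{Var}(Y) = \frac{1725}{4} = 431.25$

$E(S) = \mu_S = E(Y - 1) = \frac{73}{2} = 36.5$

$\mathrm{Var}(S) = \sigma_S^2 = \mathrm{Var}(Y - 1) = \mathrm{Var}(Y) = \frac{1725}{4} = 431.25$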

(f) Find each of the following:

the p.m.f. of V

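$f_3(v) = (0.92)^v(0.08)$ if $v = 0, 1, 2, \ldots$

the p.m.f. of W

$f_4(w) = \binom{w+2}{w}(0.92)^w(0.08)^3$ if $w = 0, 1, 2, \ldots$, where $\binom{w+2}{w}$ is the same as $\binom{w+2}{2}$

the p.m.f. of S

$f_5(s) = \binom{s}{s-2}(0.92)^{s-2}(0.08)^3$ if $s = 2, 3, 4, \ldots$, where $\binom{s}{s-2}$ is the same as $\binom{s}{2}$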

(g) Find the expected number of boxes that must be weighed in order

to obtain the third underweight box.

$E(Y) = \mu_Y = 75/2 = 37.5$

(h) Find the probability that exactly 5 boxes must be weighed in order

to find the first underweight box (i.e., the fifth box weighed is the

first underweight box).

$P(X = 5) = (0.92)^4(0.08) = 0.0573$

(i) Find the probability that at least 5 boxes must be weighed in order

to find the first underweight box.

$P(X \ge 5) = P(\text{first 4 boxes weighed are acceptable}) = (0.92)^4 = 0.7164$

(j) Find the probability that at most 5 boxes must be weighed in order

to find the first underweight box.

$P(X \le 5) = 1 - P(X \ge 6) = 1 - P(\text{first 5 boxes weighed are acceptable}) = 1 - (0.92)^5 = 0.3409$

(k) Find the probability that exactly 8 boxes must be weighed in order

to find the third underweight box (i.e. exactly 5 acceptable boxes

are weighed before the third underweight box).

$P(Y = 8) = \binom{7}{2}(0.92)^5(0.08)^3 = 0.0071$

(l) Find the probability that at least 6 boxes must be weighed in order

to find the second underweight box (i.e. at least 4 acceptable

boxes are weighed before the second underweight box).

P(first 5 boxes are all acceptable or contain exactly 1 underweight box) =

P(first 5 boxes are all acceptable) + P(first 5 boxes contain exactly 1 underweight box) =

$(0.92)^5 + \binom{5}{1}(0.92)^4(0.08) = 0.9456$

(m) Find the probability that at most 6 boxes must be weighed in order

to find the second underweight box (at most 4 acceptable boxes

are weighed before the second underweight box).

1 - P(at least 7 boxes must be weighed to find the 2nd underweight box)

$= 1 - \left[(0.92)^6 + \binom{6}{1}(0.92)^5(0.08)\right] = 0.0773$
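The probabilities in parts (h) through (m) can be recomputed from the negative binomial p.m.f. rather than from the complement arguments used above. The sketch below is one way to do that; `neg_binomial_pmf` and the variable names `p_h` through `p_m` are hypothetical labels for the corresponding parts.

```python
from math import comb

def neg_binomial_pmf(x, r, p):
    """P(the r-th success occurs on trial x), for x = r, r + 1, r + 2, ..."""
    return comb(x - 1, r - 1) * p**r * (1 - p) ** (x - r)

p = 0.08  # probability that a box is underweight

p_h = neg_binomial_pmf(5, 1, p)                                # (h) P(X = 5)
p_i = 1 - sum(neg_binomial_pmf(x, 1, p) for x in range(1, 5))  # (i) P(X >= 5)
p_j = sum(neg_binomial_pmf(x, 1, p) for x in range(1, 6))      # (j) P(X <= 5)
p_k = neg_binomial_pmf(8, 3, p)                                # (k) P(Y = 8)
p_l = 1 - sum(neg_binomial_pmf(x, 2, p) for x in range(2, 6))  # (l) 2nd success on trial 6 or later
p_m = sum(neg_binomial_pmf(x, 2, p) for x in range(2, 7))      # (m) 2nd success by trial 6

for name, value in [("h", p_h), ("i", p_i), ("j", p_j), ("k", p_k), ("l", p_l), ("m", p_m)]:
    print(name, round(value, 4))
```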

9. Find E(X) and Var(X), if the moment generating function of X is

(a) $M(t) = \left[\frac{0.64e^t}{1 - 0.36e^t}\right]^{10}$ for $t < -\ln(0.36)$

We recognize this as the moment generating function for a

negative binomial distribution with p = 0.64 and r = 10.

$E(X) = 10/0.64 = 125/8$

$\mathrm{Var}(X) = (10)(0.36)/(0.64)^2 = 1125/128$

(b) $M(t) = (4e^{-t} - 3)^{-1}$ for $t < \ln(4/3)$

Multiplying numerator and denominator by $e^t/4$ gives $M(t) = \frac{(1/4)e^t}{1 - (3/4)e^t}$, which we recognize as the moment

generating function for a geometric distribution with p = 1/4.

E(X) = 4

Var(X) = 12

(c) $M(t) = (4e^{-5t} - 3)^{-1}$ for $t < (1/5)\ln(4/3)$

We do not recognize this moment generating function.

$M'(t) = 20e^{-5t}(4e^{-5t} - 3)^{-2}$

$M''(t) = -100e^{-5t}(4e^{-5t} - 3)^{-2} + 800e^{-10t}(4e^{-5t} - 3)^{-3}$

$M'(0) = 20$

$M''(0) = 700$

$E(X) = 20$

$\mathrm{Var}(X) = 700 - (20)^2 = 300$

(d) $M(t) = 4/(4 - e^t) - 1/3$ for $t < \ln(4)$

We do not recognize this moment generating function.

$M(t) = 4(4 - e^t)^{-1} - 1/3$

$M'(t) = 4e^t(4 - e^t)^{-2}$

$M''(t) = 4e^t(4 - e^t)^{-2} + 8e^{2t}(4 - e^t)^{-3}$

$M'(0) = 4/9$

$M''(0) = 4/9 + 8/27 = 20/27$

$E(X) = 4/9$

$\mathrm{Var}(X) = 20/27 - (4/9)^2 = 44/81$

(e) $M(t) = (0.2 + 0.8e^t)^{27}$ for $-\infty < t < \infty$

We recognize this as the moment generating function for a

binomial distribution with p = 0.8 and n = 27 .

E(X) = (27)(0.8) = 21.6

Var(X) = (27)(0.8)(0.2) = 4.32
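When an m.g.f. is not recognized, as in parts (c) and (d), the derivatives at zero can also be estimated numerically as a check on the calculus. The sketch below uses central differences with an assumed step size h = 1e-4; the function names are illustrative, and the estimates are approximate rather than exact.

```python
import math

def moments_from_mgf(M, h=1e-4):
    """Estimate E(X) = M'(0) and Var(X) = M''(0) - [M'(0)]^2 by central differences."""
    m1 = (M(h) - M(-h)) / (2 * h)
    m2 = (M(h) - 2 * M(0.0) + M(-h)) / h**2
    return m1, m2 - m1**2

def M_c(t):
    """Part (c): (4 e^{-5t} - 3)^{-1}."""
    return 1 / (4 * math.exp(-5 * t) - 3)

def M_d(t):
    """Part (d): 4 / (4 - e^t) - 1/3."""
    return 4 / (4 - math.exp(t)) - 1 / 3

def M_e(t):
    """Part (e): (0.2 + 0.8 e^t)^27."""
    return (0.2 + 0.8 * math.exp(t)) ** 27

for label, M in [("c", M_c), ("d", M_d), ("e", M_e)]:
    print(label, moments_from_mgf(M))
```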

10. Two people, Ms. A and Mr. B, take turns flipping a coin which

has probability p of displaying heads, and Ms. A gets to flip first.

(a) Suppose the person who gets a head first wins the game. Find

the probability that Ms. A wins with a fair coin (i.e., p = 0.5).

$P(\text{Ms. A wins}) = P(H) + P(TTH) + P(TTTTH) + \cdots = (1/2) + (1/2)^3 + (1/2)^5 + (1/2)^7 + \cdots$

$= (1/2)\left\{1 + (1/2)^2 + [(1/2)^2]^2 + [(1/2)^2]^3 + \cdots\right\} = \frac{1}{2} \cdot \frac{1}{1 - 1/4} = \frac{2}{3}$

(b) For what value(s) of p will the game in part (a) be fair (i.e.,

both Ms. A and Mr. B have an equal chance of winning)?

$P(\text{Ms. A wins}) = P(H) + P(TTH) + P(TTTTH) + \cdots = p + (1-p)^2 p + (1-p)^4 p + (1-p)^6 p + \cdots$

$= p\left\{1 + (1-p)^2 + [(1-p)^2]^2 + [(1-p)^2]^3 + \cdots\right\} = \frac{p}{1 - (1-p)^2}$

For what value(s) of p is $\frac{p}{1 - (1-p)^2} = 1/2$ ?

Since $\frac{p}{1 - (1-p)^2} = \frac{1}{2 - p}$, the equation would require p = 0. There are no values of p with $0 < p \le 1$ which make this equation true,

and therefore it is not possible for the game to be fair.

(c) Suppose Ms. A must get a head to win, and Mr. B must get a

tail to win. For what value(s) of p will this game be fair?

$P(\text{Ms. A wins}) = P(H) + P(THH) + P(THTHH) + \cdots = p + (1-p)p^2 + (1-p)^2p^3 + (1-p)^3p^4 + \cdots$

$= p\left\{1 + (1-p)p + [(1-p)p]^2 + [(1-p)p]^3 + \cdots\right\} = \frac{p}{1 - (1-p)p}$

For what value(s) of p is $\frac{p}{1 - (1-p)p} = 1/2$ ?

$p = \frac{3 - \sqrt{5}}{2} \approx 0.382$
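The two winning-probability formulas from Exercise 10 are simple enough to evaluate directly. The sketch below checks the fair-coin value from part (a), confirms that the part (b) formula stays above 1/2 on a grid of p values, and verifies the part (c) solution; the function names and the grid of test values are illustrative assumptions.

```python
import math

def a_wins_first_head(p):
    """Parts (a)/(b): whoever flips the first head wins, Ms. A flips first."""
    return p / (1 - (1 - p) ** 2)

def a_wins_head_vs_tail(p):
    """Part (c): Ms. A needs a head on her turn, Mr. B needs a tail on his."""
    return p / (1 - (1 - p) * p)

p_fair = (3 - math.sqrt(5)) / 2  # candidate solution from part (c)

print(a_wins_first_head(0.5))       # fair coin, part (a)
print(a_wins_head_vs_tail(p_fair))  # should be very close to 1/2

# Part (b): the first player keeps an edge for every p tried on this grid
print(min(a_wins_first_head(k / 100) for k in range(1, 101)))
```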

11. A factory produces pieces of candy in equal proportions of eight

different colors: red, orange, purple, pink, blue, green, brown, and

white.

(a) If random pieces of candy are purchased, find the expected

number of pieces of candy to obtain at least one of each color.

Define the following random variables:

X1 = number of purchases to obtain any color

X2 = number of purchases to obtain a color different from the 1st color

obtained

X3 = number of purchases to obtain a color different from the 1st and 2nd

colors obtained

.

.

.

X8 = number of purchases to obtain a color different from the 1st, 2nd,

3rd, …, and 7th colors obtained

The expected number of purchases to obtain one candy of each color is

E(X1 + X2 + X3 + X4 + X5 + X6 + X7 + X8) = E(X1) + E(X2) + … + E(X8) .

X1 has a geometric distribution with p = 1, that is, X1 = 1.

X2 has a geometric distribution with p = 7/8.

X3 has a geometric distribution with p = 6/8 = 3/4.

X4 has a geometric distribution with p = 5/8.

X5 has a geometric distribution with p = 4/8 = 1/2.

X6 has a geometric distribution with p = 3/8.

X7 has a geometric distribution with p = 2/8 = 1/4.

X8 has a geometric distribution with p = 1/8.

For k = 1, 2, …, 8, Xk has a geometric distribution with p = (9 – k) / 8 ,

and E(Xk) = 8 / (9 – k) .

E(X1) + E(X2) + … + E(X8) = 8/8 + 8/7 + 8/6 + … + 8/2 + 8/1 ≈ 21.743
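The sum of geometric means above can be evaluated exactly. The sketch below generalizes it to any number of equally likely colors; the function name `expected_purchases` is an illustrative choice.

```python
from fractions import Fraction

def expected_purchases(colors):
    """Sum of E(X_k) = colors / (colors - k) over k = 0, 1, ..., colors - 1."""
    return sum(Fraction(colors, colors - k) for k in range(colors))

exact = expected_purchases(8)
print(exact, float(exact))
```

Keeping the result as a `Fraction` shows the exact value behind the rounded decimal.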

(b) Sandy has three pieces of candy in her pocket: a red, an orange,

and a purple. She is carrying a lot of material and only has one

hand free. With her free hand, she reaches into her pocket to

select one piece of candy at random. If it is the purple candy,

she holds onto it. If it is not purple, she holds the selected piece

in her palm while selecting one of the other two, after which she

releases the piece of candy in her palm. She repeats this

process until she obtains the purple piece. Find the expected

number of selections to obtain the purple piece.

X = number of selections to obtain the purple candy

Space of X = {1, 2, 3, …}

$P(X = 1) = 1/3$

$P(X = 2) = (2/3)(1/2)$

$P(X = 3) = (2/3)(1/2)^2$

$P(X = 4) = (2/3)(1/2)^3$

The p.m.f. of X is

$f(x) = \begin{cases} 1/3 & \text{if } x = 1 \\ (2/3)(1/2)^{x-1} & \text{if } x = 2, 3, 4, \ldots \end{cases}$

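$M(t) = E(e^{tX}) = \frac{1}{3}e^t + \sum_{x=2}^{\infty} e^{tx}(2/3)(1/2)^{x-1} = \frac{1}{3}e^t + \frac{e^{2t}}{3}\sum_{x=2}^{\infty} e^{t(x-2)}(1/2)^{x-2}$

$= \frac{1}{3}e^t + \frac{e^{2t}}{3}\left[1 + (e^t/2) + (e^t/2)^2 + (e^t/2)^3 + (e^t/2)^4 + \cdots\right] = \frac{1}{3}e^t + \frac{e^{2t}}{3} \cdot \frac{1}{1 - (e^t/2)} = \frac{e^t}{3} + \frac{2e^{2t}}{6 - 3e^t}$ if $t < \ln(2)$

$M'(t) = e^t/3 + 4e^{2t}(6 - 3e^t)^{-1} + 2e^{2t}(-1)(6 - 3e^t)^{-2}(-3e^t)$

$E(X) = M'(0) = 1/3 + 4/3 + 6/9 = 7/3$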
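The value E(X) = 7/3 can also be checked by simulating Sandy's selection process directly. The sketch below is a Monte Carlo estimate under the stated rules; the seed, the number of trials, and the function name are arbitrary assumptions, and the estimate will only be close to 7/3, not equal to it.

```python
import random

def selections_until_purple(rng):
    """Simulate one run: count selections until the purple piece is chosen."""
    pocket = ["red", "orange", "purple"]
    held = rng.choice(pocket)      # first selection: any of the three pieces
    count = 1
    while held != "purple":
        # The held piece stays in her palm while she picks one of the other two;
        # afterwards the previously held piece is released back into the pocket.
        others = [c for c in pocket if c != held]
        held = rng.choice(others)
        count += 1
    return count

rng = random.Random(0)             # fixed seed so the run is repeatable
trials = 200_000
estimate = sum(selections_until_purple(rng) for _ in range(trials)) / trials
print(estimate, 7 / 3)
```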

12. The random variable X has p.m.f.

$f(x) = \begin{cases} 3/4 & \text{if } x = 0 \\ (1/5)^x & \text{if } x = 1, 2, 3, \ldots \end{cases}$

Verify that f(x) is a p.m.f., and find E(X).

The sum of the probabilities is

$\frac{3}{4} + \frac{1}{5} + \frac{1}{5^2} + \frac{1}{5^3} + \cdots = \frac{3}{4} + \frac{1}{5} \cdot \frac{1}{1 - 1/5} = 1$

so f(x) is a p.m.f.

$E(X) = (0)\frac{3}{4} + (1)\frac{1}{5} + (2)\frac{1}{5^2} + (3)\frac{1}{5^3} + \cdots = \frac{1}{5} \cdot \frac{1}{(1 - 1/5)^2} = \frac{5}{16}$

Note: The mean could also be

found by first finding the m.g.f.