search-uis - UC Berkeley School of Information

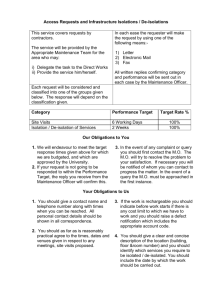

I Learned It the Hard Way: Observations about Search Interface Design and Evaluation
Marti Hearst
UC Berkeley

Outline
• Why is Supporting Search Difficult?
• What Works?
• How to Evaluate?

Search Interface Evaluation
• Timing Data
• Matching Users to Tasks
• Spool’s Treasure Hunt Technique

Highly Motivated Participants
• Jared Spool makes this claim

Fancy Often Fails

Use Topic-Matched Users

Timing
• Information-intensive interfaces are very sensitive to:
  – Task effects
    • Match of task to search results
    • Participants’ familiarity with task topic
    • Task difficulty
      – In general
      – With respect to this system
  – Individual differences
    • Reading ability
    • Reading style (scan vs. read thoroughly)
    • General knowledge and reasoning strategies
      – (CHI Browse-Off)
    • Spatial ability
• Timing isn’t everything
  – Subjective assessment
  – Return usage
  – Longitudinal studies are often quite revealing
• Browsing interfaces: longer can be better

Cool Doesn’t Cut It
• It’s very difficult to design a search interface that users prefer over the standard
• Some ideas have a strong WOW factor
  – Examples:
    • Kartoo
    • Groxis
    • Hyperbolic tree
  – But they don’t pass the “will you use it” test
• Even some simpler ideas fall by the wayside
  – Example:
    • Visual ranking indicators for results set listings

Early Visual Rank Indicators

Metadata Matters
• When used correctly, text to describe text, images, video, etc. works well
• “Searchers” often turn into “browsers” with appropriate links
• However, metadata has many perils
  – The Kosher Recipe Incident

Small Details Matter
• UIs for search especially require great care in small details
  – In part due to the text-heavy nature of search
  – A tension between more information and introducing clutter
• How and where to place things is important
  – People tend to scan or skim
  – Only a small percentage reads instructions

Small Details Matter
• UIs for search especially require endless tiny adjustments
  – In part due to the text-heavy nature of search
• Example:
  – In an earlier version of the Google Spellchecker, people didn’t always see the suggested correction
    • Used a long sentence at the top of the page: “If you didn’t find what you were looking for …”
    • People complained they got results, but not the right results.
    • In reality, the spellchecker had suggested an appropriate correction.
Interview with Marissa Mayer by Mark Hurst: http://www.goodexperience.com/columns/02/1015google.html

Small Details Matter
• The fix:
  – Analyzed logs, saw people didn’t see the correction:
    • clicked on first search result,
    • didn’t find what they were looking for (came right back to the search page)
    • scrolled to the bottom of the page, did not find anything
    • and then complained directly to Google
  – Solution was to repeat the spelling suggestion at the bottom of the page.
• More adjustments:
  – The message is shorter, and different on the top vs. the bottom
Interview with Marissa Mayer by Mark Hurst: http://www.goodexperience.com/columns/02/1015google.html

Small Details Matter
• Layout, font, and whitespace for information-centric interfaces require very careful design
• Example:
  – Photo thumbnails
  – Search results summaries

Query: Seaborg

Query: “Phase II”

TileBars
• Graphical Representation of Term Distribution and Overlap
• Simultaneously Indicate:
  – relative document length
  – query term frequencies
  – query term distributions
  – query term overlap

Query terms: DBMS (Database Systems), Reliability
What roles do they play in retrieved documents?
• Mainly about DBMS & reliability
• Mainly about DBMS, discusses reliability
• Mainly about banking, subtopic discussion on DBMS/Reliability
• Mainly about high-tech layoffs

Pagis Pro 97
• Received three prestigious industry editorial awards
  – Windows Magazine's Win 100 award
  – Home PC selected Pagis Pro for its annual Hit Parade
  – PC Computing rated Pagis Pro over competitors
• Version 2.0:
  – MatchBars dropped!
  – “Our usability testing found that people didn't understand the simple 3-term/3-bar implementation.”
  – Replaced with a simple bar whose length is related to the score returned by Verity.
I believe the problem was in our reduced implementation, and not in the fundamental idea.

Why is Supporting Search Difficult?
• Everything is fair game
• Abstractions are difficult to represent
• The vocabulary disconnect
• Users’ lack of understanding of the technology
• Clutter vs. Information

Everything is Fair Game
• The scope of what people search for is all of human knowledge and experience.
  – Other interfaces are more constrained (word processing, formulas, etc.)
• Interfaces must accommodate human differences in:
  – Knowledge / life experience
  – Cultural background and expectations
  – Reading / scanning ability and style
  – Methods of looking for things (pilers vs. filers)

Abstractions Are Hard to Represent
• Text describes abstract concepts
  – Difficult to show the contents of text in a visual or compact manner
• Exercise:
  – How would you show the preamble of the US Constitution visually?
  – How would you show the contents of Joyce’s Ulysses visually? How would you distinguish it from Homer’s The Odyssey or McCourt’s Angela’s Ashes?
• The point: it is difficult to show text without using text

Vocabulary Disconnect
  – If you ask a set of people to describe a set of things there is little overlap in the results.

The Vocabulary Problem
Data sets examined (and # of participants)
  – Main verbs used by typists to describe the kinds of edits that they do (48)
  – Commands for a hypothetical “message decoder” computer program (100)
  – First word used to describe 50 common objects (337)
  – Categories for 64 classified ads (30)
  – First keywords for each of a set of recipes (24)
Furnas, Landauer, Gomez, Dumais: The Vocabulary Problem in Human-System Communication. Commun. ACM 30(11): 964-971 (1987)

The Vocabulary Problem
These are really bad results
  – If one person assigns the name, the probability of it NOT matching with another person’s is about 80%
  – What if we pick the most commonly chosen words as the standard? Still not good:
Furnas, Landauer, Gomez, Dumais: The Vocabulary Problem in Human-System Communication. Commun. ACM 30(11): 964-971 (1987)

Lack of Technical Understanding
• Most people don’t understand the underlying methods by which search engines work.
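
To make the roughly 80% mismatch figure from the Vocabulary Problem slides above more concrete, here is a minimal sketch. It assumes two people pick a name independently from the same distribution, so the chance they agree is the sum of the squared name probabilities. The word counts are invented for illustration and are not data from Furnas et al.

```python
from collections import Counter


def match_probability(name_counts):
    """Chance that two independent people choose the same name.

    Assumes both draw from the same empirical distribution, so the
    probability of agreement is the sum of squared name probabilities.
    """
    total = sum(name_counts.values())
    return sum((count / total) ** 2 for count in name_counts.values())


# Hypothetical counts: names that 20 people gave to one editing command.
names = Counter({"delete": 6, "remove": 5, "erase": 4, "cut": 3, "kill": 2})

p_match = match_probability(names)
p_miss = 1 - p_match  # probability that the two names do NOT match
print(f"match: {p_match:.3f}, mismatch: {p_miss:.3f}")
```

Even with only five candidate names and a clear favorite, the mismatch rate in this toy distribution stays well above one half, which is the flavor of the result reported on the slide.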

People Don’t Understand Search Technology
A study of 100 randomly-chosen people found:
  – 14% never type a URL directly into the address bar
    • Several tried to use the address bar, but did it wrong
      – Put spaces between words
      – Combinations of dots and spaces
      – “nursing spectrum.com” “consumer reports.com”
  – Several use search form with no spaces
    • “plumber’slocal9” “capitalhealthsystem”
  – People do not understand the use of quotes
    • Only 16% use quotes
    • Of these, some use them incorrectly
      – Around all of the words, making results too restrictive
      – “lactose intolerance –recipies”
        » Here the – excludes the recipes
  – People don’t make use of “advanced” features
    • Only 1 used “find in page”
    • Only 2 used Google cache
Hargittai, Classifying and Coding Online Actions, Social Science Computer Review 22(2), 2004 210-227.

People Don’t Understand Search Technology
Without appropriate explanations, most of 14 people had strong misconceptions about:
• ANDing vs ORing of search terms
  – Some assumed ANDing search engine indexed a smaller collection; most had no explanation at all
• For empty results for query “to be or not to be”
  – 9 of 14 could not offer an explanation that remotely resembled stop word removal
• For term order variation “boat fire” vs. “fire boat”
  – Only 5 out of 14 expected different results
  – Understanding was vague, e.g.:
    » “Lycos separates the two words and searches for the meaning, instead of what’re your looking for. Google understands the meaning of the phrase.”
Muramatsu & Pratt, “Transparent Queries: Investigating Users’ Mental Models of Search Engines”, SIGIR 2001.

What Works?

What Works for Search Interfaces?
• Query term highlighting
  – in results listings
  – in retrieved documents
• Sorting of search results according to important criteria (date, author)
• Grouping of results according to well-organized category labels (see Flamenco)
• DWIM only if highly accurate:
  – Spelling correction/suggestions
  – Simple relevance feedback (more-like-this)
  – Certain types of term expansion
• So far: not really visualization
Hearst et al: Finding the Flow in Web Site Search, CACM 45(9), 2002.

Highlighting Query Terms
• Boldface or color
• Adjacency of terms with relevant context is a useful cue.

Highlighted query term hits using Google toolbar
[Screenshot annotations: “US found!”, “Blackout found!”, “PGA don’t know”, “Microsoft don’t know”]

How to Introduce New Features?
• Example: Yahoo “shortcuts”
  – Search engines now provide groups of enriched content
    • Automatically infer related information, such as sports statistics
  – Accessed via keywords
    • User can quickly specify very specific information
      – united 570 (flight arrival time)
      – map “san francisco”
• We’re heading back to command languages!

Introducing New Features
• A general technique: scaffolding
• Scaffolding:
  – Facilitate a student’s ability to build on prior knowledge and internalize new information.
  – The activities provided in scaffolding instruction are just beyond the level of what the learner can do already.
  – Learning the new concept moves the learner up one “step” on the conceptual “ladder”

Scaffolding Example
• The problem: how do people learn about these fantastic but unknown options?
• Example: scaffolding the definition function
  – Where to put a suggestion for a definition?
  – Google used to simply hyperlink it next to the statistics for the word.
  – Now a hint appears to alert people to the feature.
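
The query term highlighting listed under “What Works for Search Interfaces?” above can be sketched in a few lines. This is an illustrative assumption of how boldface highlighting might be done, not the method of any particular search engine; the function name `highlight_terms` and the choice of `<b>` tags are hypothetical. It matches whole words case-insensitively and HTML-escapes the surrounding text.

```python
import html
import re


def highlight_terms(text, query_terms):
    """Return HTML with whole-word query term matches wrapped in <b> tags."""
    # Longest terms first so a longer term wins over a shorter one it contains.
    terms = sorted({t for t in query_terms if t.strip()}, key=len, reverse=True)
    if not terms:
        return html.escape(text)
    alternatives = "|".join(re.escape(t) for t in terms)
    pattern = re.compile(r"\b(" + alternatives + r")\b", re.IGNORECASE)
    # With one capturing group, re.split alternates: text, match, text, ...
    pieces = pattern.split(text)
    out = []
    for i, piece in enumerate(pieces):
        escaped = html.escape(piece)
        out.append(f"<b>{escaped}</b>" if i % 2 == 1 else escaped)
    return "".join(out)


snippet = highlight_terms("Fire boat & fireboat crews", ["fire", "boat"])
print(snippet)
```

Word-boundary matching keeps “fireboat” unhighlighted here; whether partial matches should count is a design choice that would need testing with users. Terms that begin or end with punctuation would need a different boundary rule.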

Unlikely to notice the function here

Scaffolding to teach what is available

Using DWIM
• DWIM – Do What I Mean
  – Refers to systems that try to be “smart” by guessing users’ unstated intentions or desires
• Examples:
  – Automatically augment my query with related terms
  – Automatically suggest spelling corrections
  – Automatically load web pages that might be relevant to the one I’m looking at
  – Automatically file my incoming email into folders
  – Pop up a paperclip that tells me what kind of help I need.
• THE CRITICAL POINT:
  – Users love DWIM when it really works
  – Users DESPISE it when it doesn’t
    • unless not very intrusive

DWIM that Works
• Amazon’s “customers who bought X also bought Y”
  – And many other recommendation-related features

DWIM Example: Spelling Correction/Suggestion
• Google’s spelling suggestions are highly accurate
• But this wasn’t always the case.
  – Google introduced a version that wasn’t very accurate. People hated it. They pulled it. (According to a talk by Marissa Mayer of Google.)
  – Later they introduced a version that worked well. People love it.
• But don’t get too pushy.
  – For a while if the user got very few results, the page was automatically replaced with the results of the spelling correction
  – This was removed, presumably due to negative responses
Information from a talk by Marissa Mayer of Google

Tutorial Outline
• Introduction
  – What do people search for (and how)?
  – Why is designing for search difficult?
• How to Design for Search
  – HCI and iterative design
  – What works?
  – Small details matter
  – Scaffolding
  – The Role of DWIM
• Core Problems
  – Query specification and refinement
  – Browsing and searching collections
• Information Visualization for Search
• Summary

Query Reformulation
• Query reformulation:
  – After receiving unsuccessful results, users modify their initial queries and submit new ones intended to more accurately reflect their information needs.
• Web search logs show that searchers often reformulate their queries
  – A study of 985 Web user search sessions found
    • 33% went beyond the first query
    • Of these, ~35% retained the same number of terms while 19% had 1 more term and 16% had 1 fewer
Spink, Jansen & Ozmultu, Use of query reformulation and relevance feedback by Excite users, Internet Research 10(4), 2001

Query Reformulation
• Many studies show that if users engage in relevance feedback, the results are much better.
  – In one study, participants did 17-34% better with RF
  – They also did better if they could see the RF terms than if the system did it automatically (DWIM)
• But the effort required for doing so is usually a roadblock.
Koenemann & Belkin, A Case for Interaction: A Study of Interactive Information Retrieval Behavior and Effectiveness, CHI’96

Query Reformulation
• What happens when web search engines suggest new terms?
• Web log analysis study using the Prisma term suggestion system:
Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.

Query Reformulation Study
• Feedback terms were displayed to 15,133 user sessions.
  – Of these, 14% used at least one feedback term
  – For all sessions, 56% involved some degree of query refinement
    • Within this subset, use of the feedback terms was 25%
  – By user id, ~16% of users applied feedback terms at least once on any given day
• Looking at a 2-week session of feedback users:
  – Of the 2,318 users who used it once, 47% used it again in the same 2-week window.
• Comparison was also done to a baseline group that was not offered feedback terms.
  – Both groups ended up making a page-selection click at the same rate.
Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.

Query Reformulation Study
Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.
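
As a quick consistency check on the percentages quoted from the Anick study above, the arithmetic can be worked through as follows. The session counts are approximations derived from the rounded percentages on the slide, and the calculation assumes that every session using a feedback term also counts as a refinement session; neither is stated in the source.

```python
def feedback_summary(sessions, used_rate, refined_rate):
    """Turn the slide's rounded percentages into approximate session counts."""
    used = round(sessions * used_rate)        # sessions using >= 1 feedback term
    refined = round(sessions * refined_rate)  # sessions with any query refinement
    # Share of refining sessions that used feedback terms, assuming
    # feedback use implies refinement (an assumption, not from the slides).
    share_of_refiners = used_rate / refined_rate
    return used, refined, share_of_refiners


used, refined, share = feedback_summary(15_133, 0.14, 0.56)
repeat_users = round(2_318 * 0.47)  # users who applied feedback terms again

print(used, refined, f"{share:.0%}", repeat_users)
```

Under that assumption the 14% and 56% figures imply the 25% uptake among refining sessions that the slide reports, so the three numbers are mutually consistent.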

Query Reformulation Study
• Other observations
  – Users prefer refinements that contain the initial query terms
  – Presentation order does have an influence on term uptake
Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.

Query Reformulation Study
• Types of refinements
Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.

Prognosis: Query Reformulation
• Researchers have always known it can be helpful, but the methods proposed for user interaction were too cumbersome
  – Had to select many documents and then do feedback
  – Had to select many terms
  – Was based on statistical ranking methods which are hard for people to understand
• RF is promising for web-based searching
  – The dominance of AND-based searching makes it easier to understand the effects of RF
  – Automated systems built on the assumption that the user will only add one term now work reasonably well
  – This kind of interface is simple

Supporting the Search Process
• We should differentiate among searching:
  – The Web
  – Personal information
  – Large collections of like information
• Different cues useful for each
• Different interfaces needed
• Examples
  – The “Stuff I’ve Seen” Project
  – The Flamenco Project

The “Stuff I’ve Seen” project
• Did intense studies of how people work
• Used the results to design an integrated search framework
• Did extensive evaluations of alternative designs
  – The following slides are modifications of ones supplied by Sue Dumais, reproduced with permission.
Dumais, Cutrell, Cadiz, Jancke, Sarin and Robbins, Stuff I've Seen: A system for personal information retrieval and re-use. SIGIR 2003.

Searching Over Personal Information
• Many locations, interfaces for finding things (e.g., web, mail, local files, help, history, notes)
Slide adapted from Sue Dumais.

The “Stuff I’ve Seen” project
• Unified index of items touched recently by user
  – All types of information, e.g., files of all types, email, calendar, contacts, web pages, etc.
  – Full-text index of content plus metadata attributes (e.g., creation time, author, title, size)
  – Automatic and immediate update of index
  – Rich UI possibilities, since it’s your content
• Search only over things already seen
  – Re-use vs. initial discovery
Slide adapted from Sue Dumais.

SIS Interface
Slide adapted from Sue Dumais

Search With SIS
Slide adapted from Sue Dumais

Evaluating SIS
• Internal deployment
  – ~1500 downloads
  – Users include: program management, test, sales, development, administrative, executives, etc.
• Research techniques
  – Free-form feedback
  – Questionnaires; Structured interviews
  – Usage patterns from log data
  – UI experiments (randomly deploy different versions)
  – Lab studies for richer UI (e.g., timeline, trends)
    • But even here must work with users’ own content
Slide adapted from Sue Dumais

SIS Usage Data
Detailed analysis for 234 people, 6 weeks usage
• Personal store characteristics
  – 5k–100k items; index <150 meg
• Query characteristics
  – Short queries (1.59 words)
  – Few advanced operators or fielded search in query box (7.5%)
  – Frequent use of query iteration (48%)
    • 50% refined queries involve filters
      – type, date most common
    • 35% refined queries involve changes to query
    • 13% refined queries involve re-sort
• Query content
  – Importance of people
    • 29% of the queries involve people’s names
Slide adapted from Sue Dumais

SIS Usage Data, cont’d
Characteristics of items opened
• File types opened
  – 76% Email
  – 14% Web pages
  – 10% Files
• Age of items opened
  – 7% today
  – 22% within the last week
  – 46% within the last month
• Ease of finding information
  – Easier after SIS for web, email, files
  – Non-SIS search decreases for web, email, files
[Figure: frequency of items opened vs. days since item first seen; fitted line Log(Freq) = -0.68 * log(DaysSinceSeen) + 2.02]
[Figure: pre-usage vs. post-usage, for Files, Email, and Web Pages]
Slide adapted from Sue Dumais

SIS Usage, cont’d
UI Usage
• Small effects of Top/Side, Previews
• Sort order
  – Date by far the most common sort field, even for people who had Okapi Rank as default
  – Importance of time
  – Few searches for “best” match; many other criteria …
[Figure: number of queries issued with Date vs. Rank sort, grouped by starting default sort column (Date, Rank)]
Slide adapted from Sue Dumais

Web Sites and Collections
A report by Forrester Research in 2001 showed that while 76% of firms rated search as “extremely important”, only 24% consider their Web site’s search to be “extremely useful”.
Johnson, K., Manning, H., Hagen, P.R., and Dorsey, M. Specialize Your Site's Search. Forrester Research, (Dec. 2001), Cambridge, MA; www.forrester.com/ER/Research/Report/Summary/0,1338,13322,00

There are many ways to do it wrong
• Examples:
  – Melvyl online catalog:
    • no way to browse enormous category listings
  – Audible.com, BooksOnTape.com, and BrillianceAudio:
    • no way to browse a given category and simultaneously select unabridged versions
  – Amazon.com:
    • has finally gotten browsing over multiple kinds of features working; this is a recent development
    • but still restricted on what can be added into the query

The Flamenco Project
• Incorporating Faceted Hierarchical Metadata into Interfaces for Large Collections
• Key Goals:
  – Support integrated browsing and keyword search
    • Provide an experience of “browsing the shelves”
  – Add power and flexibility without introducing confusion or a feeling of “clutter”
  – Allow users to take the path most natural to them
• Method:
  – User-centered design, including needs assessment and many iterations of design and testing
Yee, Swearingen, Li, Hearst, Faceted Metadata for Image Search and Browsing, Proceedings of CHI 2003.

Some Challenges
• Users don’t like new search interfaces.
• How to show lots more information without overwhelming or confusing?
• Our approach:
  – Integrate the search seamlessly into the information architecture.
  – Use proper HCI methodologies.
  – Use faceted metadata

Example of Faceted Metadata: Medical Subject Headings (MeSH) Facets
1. Anatomy [A]
2. Organisms [B]
3. Diseases [C]
4. Chemicals and Drugs [D]
5. Analytical, Diagnostic and Therapeutic Techniques and Equipment [E]
6. Psychiatry and Psychology [F]
7. Biological Sciences [G]
8. Physical Sciences [H]
9. Anthropology, Education, Sociology and Social Phenomena [I]
10. Technology and Food and Beverages [J]
11. Humanities [K]
12. Information Science [L]
13. Persons [M]
14. Health Care [N]
15. Geographic Locations [Z]

Each Facet Has Hierarchy
1. Anatomy [A]
   – Body Regions [A01]
   – Musculoskeletal System [A02]
   – Digestive System [A03]
   – Respiratory System [A04]
   – Urogenital System [A05]
   – ……
2. [B]   3. [C]   4. [D]   5. [E]   6. [F]   7. [G]
8. Physical Sciences [H]
9. [I]   10. [J]   11. [K]   12. [L]   13. [M]

Descending the Hierarchy
1. Anatomy [A]
   – Body Regions [A01]
     • Abdomen [A01.047]
     • Back [A01.176]
     • Breast [A01.236]
     • Extremities [A01.378]
     • Head [A01.456]
     • Neck [A01.598]
     • ….
   – Musculoskeletal System [A02]
   – Digestive System [A03]
   – Respiratory System [A04]
   – Urogenital System [A05]
   – ……
2. [B]   3. [C]   4. [D]   5. [E]   6. [F]   7. [G]
8. Physical Sciences [H]
9. [I]   10. [J]   11. [K]   12. [L]   13. [M]

Descending the Hierarchy
1. Anatomy [A]
   – Body Regions [A01]
     • Abdomen [A01.047]
     • Back [A01.176]
     • Breast [A01.236]
     • Extremities [A01.378]
     • Head [A01.456]
     • Neck [A01.598]
     • ….
   – Musculoskeletal System [A02]
   – Digestive System [A03]
   – Respiratory System [A04]
   – Urogenital System [A05]
   – ……
2. [B]   3. [C]   4. [D]   5. [E]   6. [F]   7. [G]
8. Physical Sciences [H]
   – Electronics
   – Astronomy
   – Nature
   – Time
   – Weights and Measures
   – ….
9. [I]   10. [J]   11. [K]   12. [L]   13. [M]
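
The faceted hierarchy shown in the MeSH slides above can be modeled with a small data structure. The sketch below is an illustration only and is not the Flamenco implementation. It assumes items are tagged with tree numbers, that descending a facet is a prefix test on those numbers, and that selections in different facets are ANDed. The Anatomy codes come from the slides; the item ids and the `H.*` codes are made-up placeholders, since the slides give no codes for the Physical Sciences subcategories.

```python
def falls_under(tree_number, category):
    """True if a tree number equals the category or lies below it."""
    if len(category) == 1:  # top-level facet letter, e.g. "A"
        return tree_number.startswith(category)
    return tree_number == category or tree_number.startswith(category + ".")


def select(items, constraints):
    """Keep items that have a tag under every chosen category (AND across facets)."""
    return {
        item_id: tags
        for item_id, tags in items.items()
        if all(any(falls_under(t, c) for t in tags) for c in constraints)
    }


def preview_counts(items, categories):
    """Query previews: how many items each candidate refinement would leave."""
    return {
        c: sum(any(falls_under(t, c) for t in tags) for tags in items.values())
        for c in categories
    }


# Hypothetical collection; "H.*" codes are placeholders, not real MeSH numbers.
ITEMS = {
    "item1": ["A01.047", "H.astronomy"],  # Abdomen
    "item2": ["A01.456", "H.time"],       # Head
    "item3": ["A02", "H.astronomy"],      # Musculoskeletal System
    "item4": ["A01.456"],                 # Head
}

body_regions = select(ITEMS, ["A01"])
both_facets = select(ITEMS, ["A01", "H"])
previews = preview_counts(select(ITEMS, ["A"]), ["A01", "A02", "A03"])
print(sorted(body_regions), sorted(both_facets), previews)
```

Computing the preview counts before the user clicks is what lets an interface avoid dead ends: a category with a count of zero does not need to be offered as a refinement.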

The Flamenco Interface
• Hierarchical facets
• Chess metaphor
  – Opening
  – Middle game
  – End game
• Tightly Integrated Search
• Expand as well as Refine
• Intermediate pages for large categories
• For this design, small details *really* matter

What is Tricky About This?
• It is easy to do it poorly
  – Yahoo directory structure
• It is hard to be not overwhelming
  – Most users prefer simplicity unless complexity really makes a difference
• It is hard to “make it flow”
  – Can it feel like “browsing the shelves”?

Using HCI Methodology
• Identify Target Population
  – Architects, city planners
• Needs assessment.
  – Interviewed architects and conducted contextual inquiries.
• Lo-fi prototyping.
  – Showed paper prototype to 3 professional architects.
• Design / Study Round 1.
  – Simple interactive version. Users liked metadata idea.
• Design / Study Round 2:
  – Developed 4 different detailed versions; evaluated with 11 architects; results somewhat positive but many problems identified. Matrix emerged as a good idea.
• Metadata revision.
  – Compressed and simplified the metadata hierarchies

Using HCI Methodology
• Design / Study Round 3.
  – New version based on results of Round 2
  – Highly positive user response
• Identified new user population/collection
  – Students and scholars of art history
  – Fine arts images
• Study Round 4
  – Compare the metadata system to a strong, representative baseline

Most Recent Usability Study
• Participants & Collection
  – 32 Art History Students
  – ~35,000 images from SF Fine Arts Museum
• Study Design
  – Within-subjects
    • Each participant sees both interfaces
    • Balanced in terms of order and tasks
  – Participants assess each interface after use
  – Afterwards they compare them directly
• Data recorded in behavior logs, server logs, paper-surveys; one or two experienced testers at each trial.
• Used 9 point Likert scales.
• Session took about 1.5 hours; pay was $15/hour

The Baseline System
• Floogle
• Take the best of the existing keyword-based image search systems

Comparison of Common Image Search Systems

System      Collection          # Results/page   Categories?   # Familiar
Google      Web                 20               No            27
AltaVista   Web                 15               No            8
Corbis      Photos              9-36             No            8
Getty       Photos, Art         12-90            Yes           6
MS Office   Photos, Clip art    6-100            Yes           N/A
Thinker     Fine arts images    10               Yes           4
BASELINE    Fine arts images    40               Yes           N/A

[Screenshots: query “sword”]

Evaluation Quandary
• How to assess the success of browsing?
  – Timing is usually not a good indicator
  – People often spend longer when browsing is going well.
    • Not the case for directed search
  – Can look for comprehensiveness and correctness (precision and recall) …
  – … But subjective measures seem to be most important here.

Hypotheses
• We attempted to design tasks to test the following hypotheses:
  – Participants will experience greater search satisfaction, feel greater confidence in the results, produce higher recall, and encounter fewer dead ends using FC over Baseline
  – FC will be perceived to be more useful and flexible than Baseline
  – Participants will feel more familiar with the contents of the collection after using FC
  – Participants will use FC to create multi-faceted queries

Four Types of Tasks
  – Unstructured (3): Search for images of interest
  – Structured Task (11-14): Gather materials for an art history essay on a given topic, e.g.
    • Find all woodcuts created in the US
    • Choose the decade with the most
    • Select one of the artists in this period and show all of their woodcuts
    • Choose a subject depicted in these works and find another artist who treated the same subject in a different way.
  – Structured Task (10): compare related images
    • Find images by artists from 2 different countries that depict conflict between groups.
  – Unstructured (5): search for images of interest

Other Points
• Participants were NOT walked through the interfaces.
• The wording of Task 2 reflected the metadata; not the case for Task 3
• Within tasks, queries were not different in difficulty (t’s<1.7, p >0.05 according to post-task questions)
• Flamenco is an order of magnitude slower than Floogle on average.
  – In task 2 users were allowed 3 more minutes in FC than in Baseline.
  – Time spent in tasks 2 and 3 was significantly longer in FC (about 2 min more).

Results
• Participants felt significantly more confident they had found all relevant images using FC (Task 2: t(62)=2.18, p<.05; Task 3: t(62)=2.03, p<.05)
• Participants felt significantly more satisfied with the results (Task 2: t(62)=3.78, p<.001; Task 3: t(62)=2.03, p<.05)
• Recall scores:
  – Task 2a: In Baseline 57% of participants found all relevant results, in FC 81% found all.
  – Task 2b: In Baseline 21% found all relevant, in FC 77% found all.

Post-Interface Assessments
All significant at p<.05 except simple and overwhelming

Perceived Uses of Interfaces
What is the interface useful for?
[Figure: bar chart of ratings (scale 0.00 to 9.00) for Baseline vs. FC on four uses: useful for my coursework; useful for exploring an unfamiliar collection; useful for finding a particular image; useful for seeing relationships b/w images]

Post-Test Comparison

Which Interface Preferable For:             Baseline   FC
Find images of roses                        15         16
Find all works from a given period          2          30
Find pictures by 2 artists in same media    1          29

Overall Assessment:                         Baseline   FC
More useful for your tasks                  4          28
Easiest to use                              8          23
Most flexible                               6          24
More likely to result in dead ends          28         3
Helped you learn more                       1          31
Overall preference                          2          29

Facet Usage
• Facets driven largely by task content
  – Multiple facets 45% of time in structured tasks
• For unstructured tasks,
  – Artists (17%)
  – Date (15%)
  – Location (15%)
  – Others ranged from 5-12%
  – Multiple facets 19% of time
• From end game, expansion from
  – Artists (39%)
  – Media (29%)
  – Shapes (19%)

Qualitative Observations
• Baseline:
  – Simplicity, similarity to Google a plus
  – Also noted the usefulness of the category links
• FC:
  – Starting page “well-organized”, gave “ideas for what to search for”
  – Query previews were commented on explicitly by 9 participants
  – Commented on matrix prompting where to go next
    • 3 were confused about what the matrix shows
  – Generally liked the grouping and organizing
  – End game links seemed useful; 9 explicitly remarked positively on the guidance provided there.
  – Often get requests to use the system in future

Study Results Summary
• Overwhelmingly positive results for the faceted metadata interface.
• Somewhat heavy use of multiple facets.
• Strong preference over the current state of the art.
• This result not seen in similarity-based image search interfaces.
• Hypotheses are supported.
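
One way to gauge how strong a preference split such as those in the Post-Test Comparison above is would be an exact two-sided sign test. This is offered only as an illustrative sketch: it is not an analysis reported in the slides, the function name `sign_test_p` is hypothetical, and it assumes each participant's choice is independent with a 50/50 chance under the null hypothesis.

```python
from math import comb


def sign_test_p(a, b):
    """Two-sided exact binomial (sign) test p-value for a split of a vs. b votes."""
    n = a + b
    k = min(a, b)
    # Number of equally likely outcomes at least as lopsided on one side.
    tail = sum(comb(n, i) for i in range(k + 1))
    return min(1.0, 2 * tail / 2 ** n)


# Counts taken from the Post-Test Comparison slide (Baseline vs. FC).
p_overall = sign_test_p(2, 29)   # overall preference
p_roses = sign_test_p(15, 16)    # "find images of roses"
print(f"overall preference: p = {p_overall:.2g}; roses task: p = {p_roses:.2g}")
```

The contrast between the two rows matches the qualitative reading of the slide: the simple keyword task shows no real preference, while the overall preference is far from an even split.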

Summary
• Usability studies done on 3 collections:
  – Recipes: 13,000 items
  – Architecture Images: 40,000 items
  – Fine Arts Images: 35,000 items
• Conclusions:
  – Users like and are successful with the dynamic faceted hierarchical metadata, especially for browsing tasks
  – Very positive results, in contrast with studies on earlier iterations
  – Note: it seems you have to care about the contents of the collection to like the interface

Clustering Study 3: NIRVE
NIRVE Interface by Cugini et al. 96. Each rectangle is a cluster. Larger clusters closer to the “pole”. Similar clusters near one another. Opening a cluster causes a projection that shows the titles.

Study 3
This study compared:
  – 3D graphical clusters
  – 2D graphical clusters
  – textual clusters
• 15 participants, between-subject design
• Tasks
  – Locate a particular document
  – Locate and mark a particular document
  – Locate a previously marked document
  – Locate all clusters that discuss some topic
  – List more frequently represented topics
Sebrechts, Cugini, Laskowski, Vasilakis and Miller, Visualization of search results: a comparative evaluation of text, 2D, and 3D interfaces, SIGIR ‘99.

Study 3
• Results (time to locate targets)
  – Text clusters fastest
  – 2D next
  – 3D last
  – With practice (6 sessions) 2D neared text results; 3D still slower
  – Computer experts were just as fast with 3D
• Certain tasks equally fast with 2D & text
  – Find particular cluster
  – Find an already-marked document
• But anything involving text (e.g., find title) much faster with text.
  – Spatial location rotated, so users lost context
• Helpful viz features
  – Color coding (helped text too)
  – Relative vertical locations
Sebrechts, Cugini, Laskowski, Vasilakis and Miller, Visualization of search results: a comparative evaluation of text, 2D, and 3D interfaces, SIGIR ‘99.

Summary: Visualizing for Search Using Clusters
• Huge 2D maps may be inappropriate focus for information retrieval
  – cannot see what the documents are about
  – space is difficult to browse for IR purposes
  – (tough to visualize abstract concepts)
• Perhaps more suited for pattern discovery and gist-like overviews

More Recent Attempts
• Analyzing retrieval results
  – KartOO http://www.kartoo.com/
  – Grokker http://www.groxis.com/service/grok
• Networks of Words
  – TextArc http://www.textarc.org
  – VisualThesaurus http://www.visualthesaurus.com

Summary: Viz and Search
• So far no big wins (from a usability point of view)
• Hyperlinks and text, in tandem with careful design of layout, font, etc., can be made to work well
  – Google
  – Stuff I’ve Seen
  – Flamenco
• Perhaps there will be a breakthrough!

What We’ve Covered
• Introduction
  – What do people search for (and how)?
  – Why is designing for search difficult?
• How to Design for Search
  – HCI and iterative design
  – What works?
  – Small details matter
  – Scaffolding
  – The Role of DWIM
• Core Problems
  – Query specification and refinement
  – Browsing and searching collections
• Information Visualization for Search

Final Words
• User interfaces for search remain a fascinating and challenging field
• Search has taken a primary role in the web and internet business
• Thus, we can expect fascinating developments, and maybe some breakthroughs, in the next few years!

Thank you!
Marti Hearst
SIGIR 2004 Tutorial
www.sims.berkeley.edu/~hearst

References
Anick, Using Terminological Feedback for Web Search Refinement – A Log-based Study, SIGIR’03.
Bates, The Berry-Picking Search: UI Design, in “User Interface Design”, Thimbleby (Ed.), Addison-Wesley 1990.
Chen, Houston, Sewell, and Schatz, JASIS 49(7).
Chen and Yu, Empirical studies of information visualization: a meta-analysis, IJHCS 53(5), 2000.
Dumais, Cutrell, Cadiz, Jancke, Sarin and Robbins, Stuff I've Seen: A system for personal information retrieval and re-use. SIGIR 2003.
Furnas, Landauer, Gomez, Dumais: The Vocabulary Problem in Human-System Communication. Commun. ACM 30(11): 964-971 (1987).
Hargittai, Classifying and Coding Online Actions, Social Science Computer Review 22(2), 2004 210-227.
Hearst, English, Sinha, Swearingen, Yee. Finding the Flow in Web Site Search, CACM 45(9), 2002.
Hearst, User Interfaces and Visualization, Chapter 10 of Modern Information Retrieval, Baeza-Yates and Ribeiro-Neto (Eds), Addison-Wesley 1999.
Johnson, Manning, Hagen, and Dorsey. Specialize Your Site's Search. Forrester Research, (Dec. 2001), Cambridge, MA.
Koenemann & Belkin, A Case for Interaction: A Study of Interactive Information Retrieval Behavior and Effectiveness, CHI’96.
Marissa Mayer Interview by Mark Hurst: http://www.goodexperience.com/columns/02/1015google.html
Muramatsu & Pratt, “Transparent Queries: Investigating Users’ Mental Models of Search Engines”, SIGIR 2001.
O’Day & Jeffries, Orienteering in an information landscape: how information seekers get from here to there, Proceedings of InterCHI ’93.
Rose & Levinson, Understanding User Goals in Web Search, Proceedings of WWW’04.
Russell, Stefik, Pirolli, Card, The Cost Structure of Sensemaking, Proceedings of InterCHI ’93.
Sebrechts, Cugini, Laskowski, Vasilakis and Miller, Visualization of search results: a comparative evaluation of text, 2D, and 3D interfaces, SIGIR ‘99.
Swan and Allan, Aspect windows, 3-D visualizations, and indirect comparisons of information retrieval systems, SIGIR 1998.
Spink, Jansen & Ozmultu, Use of query reformulation and relevance feedback by Excite users, Internet Research 10(4), 2001.
Yee, Swearingen, Li, Hearst, Faceted Metadata for Image Search and Browsing, Proceedings of CHI 2003.