Document

advertisement

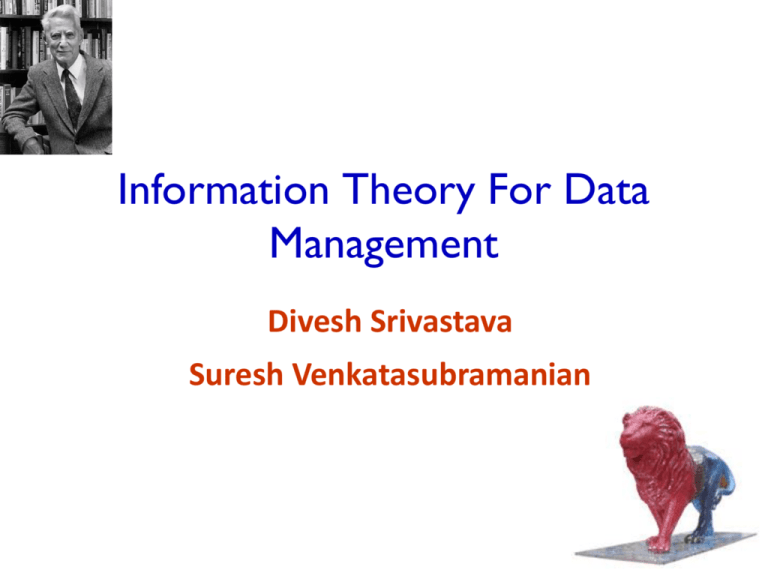

Information Theory For Data Management

Divesh Srivastava

Suresh Venkatasubramanian

Motivation

-- Abstruse Goose (177)

Information Theory is relevant to all of humanity...

Background

Many problems in data management need precise reasoning about information content, transfer and loss
– Structure extraction
– Privacy preservation
– Schema design
– Probabilistic data ?

Information Theory

First developed by Shannon as a way of quantifying capacity of signal channels.

Entropy, relative entropy and mutual information capture intrinsic informational aspects of a signal

Today:
– Information theory provides a domain-independent way to reason about structure in data
– More information = interesting structure
– Less information linkage = decoupling of structures

Tutorial Thesis

Information theory provides a mathematical framework for the quantification of information content, linkage and loss.

This framework can be used in the design of data management strategies that rely on probing the structure of information in data.

Tutorial Goals

– Introduce information-theoretic concepts to VLDB audience
– Give a ‘data-centric’ perspective on information theory
– Connect these to applications in data management
– Describe underlying computational primitives
– Illuminate when and how information theory might be of use in new areas of data management.

Outline

Part 1

Introduction to Information Theory

Application: Data Anonymization

Application: Data Integration

Part 2


Review of Information Theory Basics

Application: Database Design

Computing Information Theoretic Primitives

Open Problems

Histograms And Discrete Distributions

Column of data X: x1, x3, x2, x4, x1, x1, x2, x1

Aggregating counts gives the histogram f(X); normalizing the histogram gives the probability distribution p(X).

| X | f(X) | p(X) |
|---|---|---|
| x1 | 4 | 0.5 |
| x2 | 2 | 0.25 |
| x3 | 1 | 0.125 |
| x4 | 1 | 0.125 |

Histograms And Discrete Distributions

Column of data X: x1, x3, x2, x4, x1, x1, x2, x1

Aggregate counts to get the histogram f(X), reweight each count by w(X), then normalize to get the probability distribution p(X).

| X | f(X) | f(X)*w(X) | p(X) |
|---|---|---|---|
| x1 | 4 | 4*5=20 | 0.667 |
| x2 | 2 | 2*3=6 | 0.2 |
| x3 | 1 | 1*2=2 | 0.067 |
| x4 | 1 | 1*2=2 | 0.067 |

From Columns To Random Variables

We can think of a column of data as “represented” by a random variable:
– X is a random variable
– p(X) is the column of probabilities p(X = x1), p(X = x2), and so on
– Also known (in unweighted case) as the empirical distribution induced by the column X.

Notation:
– X (upper case) denotes a random variable (column)
– x (lower case) denotes a value taken by X (field in a tuple)
– p(x) is the probability p(X = x)
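The column-to-distribution step can be sketched in a few lines. This is a minimal illustration, assuming the column is held as a Python list; the function name `empirical_distribution` is a hypothetical helper, not something defined in the tutorial.

```python
from collections import Counter

def empirical_distribution(column):
    """Aggregate counts (histogram), then normalize to probabilities."""
    counts = Counter(column)          # histogram f(X)
    n = len(column)
    return {value: count / n for value, count in counts.items()}

# The example column from the histogram slide.
column = ["x1", "x3", "x2", "x4", "x1", "x1", "x2", "x1"]
p = empirical_distribution(column)
print(p)
```

A dictionary keyed by value keeps the sketch close to the "column of probabilities" view; a weighted variant would multiply each count by w(x) before normalizing.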

Joint Distributions

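Discrete distribution: probability p(X,Y,Z)

| X | Y | Z | p(X,Y,Z) |
|---|---|---|---|
| x1 | y1 | z1 | 0.125 |
| x1 | y2 | z2 | 0.125 |
| x1 | y1 | z2 | 0.125 |
| x1 | y2 | z1 | 0.125 |
| x2 | y3 | z3 | 0.125 |
| x2 | y3 | z4 | 0.125 |
| x3 | y3 | z5 | 0.125 |
| x4 | y3 | z6 | 0.125 |

| X | Y | p(X,Y) |
|---|---|---|
| x1 | y1 | 0.25 |
| x1 | y2 | 0.25 |
| x2 | y3 | 0.25 |
| x3 | y3 | 0.125 |
| x4 | y3 | 0.125 |

| X | p(X) |
|---|---|
| x1 | 0.5 |
| x2 | 0.25 |
| x3 | 0.125 |
| x4 | 0.125 |

| Y | p(Y) |
|---|---|
| y1 | 0.25 |
| y2 | 0.25 |
| y3 | 0.5 |

p(Y) = ∑x p(X=x,Y) = ∑x ∑z p(X=x,Y,Z=z)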

Entropy Of A Column

Let h(x) = log2 1/p(x)

h(X) is the column of h(x) values.

H(X) = EX[h(x)] = ∑x p(x) log2 1/p(x)

| X | p(X) | h(X) |
|---|---|---|
| x1 | 0.5 | 1 |
| x2 | 0.25 | 2 |
| x3 | 0.125 | 3 |
| x4 | 0.125 | 3 |

H(X) = 1.75 < log2 |X| = 2
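As a small sketch of the definition above, the entropy of the example column can be computed directly from p(X). The function name `entropy` and the dictionary representation are assumptions made for illustration.

```python
import math

def entropy(p):
    """H(X) = sum over x of p(x) * log2(1 / p(x)), in bits."""
    return sum(px * math.log2(1.0 / px) for px in p.values() if px > 0)

p_x = {"x1": 0.5, "x2": 0.25, "x3": 0.125, "x4": 0.125}
h_x = entropy(p_x)
h_max = math.log2(len(p_x))  # entropy of the uniform distribution on 4 values
print(h_x, h_max)
```

The uniform distribution attains the upper bound log2 |X|, which is why the skewed example column falls below 2 bits.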

Two views of entropy

– It captures uncertainty in data: high entropy, more unpredictability
– It captures information content: higher entropy, more information.

Examples

– X uniform over [1, ..., 4]. H(X) = 2
– Y is 1 with probability 0.5, and uniform over [2,3,4] otherwise. H(Y) = 0.5 log 2 + 0.5 log 6 ~= 1.8 < 2
– Y is more sharply defined, and so has less uncertainty.
– Z uniform over [1, ..., 8]. H(Z) = 3 > 2
– Z spans a larger range, and captures more information

[Figure: distributions of X, Y and Z]

Comparing Distributions

How do we measure the difference between two distributions?

[Figure: prior belief, inference mechanism, resulting belief]

Kullback-Leibler divergence:
– dKL(p, q) = Ep[ h(q) – h(p) ] = ∑i pi log(pi/qi)
– dKL(p, q) >= 0
– Captures extra information needed to capture p given q
– Is asymmetric! dKL(p, q) != dKL(q, p)
– Is not a metric (does not satisfy triangle inequality)

There are other measures:
– 2-distance, variational distance, f-divergences, …
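The asymmetry of the KL divergence is easy to see numerically. The sketch below is illustrative only: the two distributions `p` and `q` are made-up values, and `kl_divergence` is a hypothetical helper name.

```python
import math

def kl_divergence(p, q):
    """dKL(p, q) = sum_i p_i * log2(p_i / q_i), in bits.

    Returns infinity if q assigns zero mass where p does not.
    """
    total = 0.0
    for x, px in p.items():
        if px == 0:
            continue
        qx = q.get(x, 0.0)
        if qx == 0:
            return math.inf
        total += px * math.log2(px / qx)
    return total

# Hypothetical distributions chosen only to show the asymmetry.
p = {"a": 0.5, "b": 0.5}
q = {"a": 0.9, "b": 0.1}

d_pq = kl_divergence(p, q)
d_qp = kl_divergence(q, p)
print(d_pq, d_qp)
```

Both values are non-negative, they differ from each other, and the divergence of a distribution from itself is zero.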

Conditional Probability

Given a joint distribution on random variables X, Y, how much information about X can we glean from Y ?

Conditional probability: p(X|Y)
– p(X = x1 | Y = y1) = p(X = x1, Y = y1)/p(Y = y1)

| X | Y | p(X,Y) | p(X\|Y) | p(Y\|X) |
|---|---|---|---|---|
| x1 | y1 | 0.25 | 1.0 | 0.5 |
| x1 | y2 | 0.25 | 1.0 | 0.5 |
| x2 | y3 | 0.25 | 0.5 | 1.0 |
| x3 | y3 | 0.125 | 0.25 | 1.0 |
| x4 | y3 | 0.125 | 0.25 | 1.0 |

| X | p(X) |
|---|---|
| x1 | 0.5 |
| x2 | 0.25 |
| x3 | 0.125 |
| x4 | 0.125 |

| Y | p(Y) |
|---|---|
| y1 | 0.25 |
| y2 | 0.25 |
| y3 | 0.5 |

Conditional Entropy

Let h(x|y) = log2 1/p(x|y)

H(X|Y) = Ex,y[h(x|y)] = ∑x ∑y p(x,y) log2 1/p(x|y)

H(X|Y) = H(X,Y) – H(Y)

| X | Y | p(X,Y) | p(X\|Y) | h(X\|Y) |
|---|---|---|---|---|
| x1 | y1 | 0.25 | 1.0 | 0.0 |
| x1 | y2 | 0.25 | 1.0 | 0.0 |
| x2 | y3 | 0.25 | 0.5 | 1.0 |
| x3 | y3 | 0.125 | 0.25 | 2.0 |
| x4 | y3 | 0.125 | 0.25 | 2.0 |

H(X|Y) = H(X,Y) – H(Y) = 2.25 – 1.5 = 0.75

If X, Y are independent, H(X|Y) = H(X)
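A minimal sketch of the two equivalent ways to compute H(X|Y), using the joint distribution from the table above. The helper name `entropy` and the dictionary-of-pairs representation are illustrative assumptions.

```python
import math
from collections import defaultdict

def entropy(dist):
    """Entropy in bits of a dict mapping outcomes to probabilities."""
    return sum(p * math.log2(1.0 / p) for p in dist.values() if p > 0)

# Joint distribution p(X, Y) from the example table.
joint = {
    ("x1", "y1"): 0.25, ("x1", "y2"): 0.25, ("x2", "y3"): 0.25,
    ("x3", "y3"): 0.125, ("x4", "y3"): 0.125,
}

# Marginal p(Y), obtained by summing out X.
p_y = defaultdict(float)
for (_, y), p in joint.items():
    p_y[y] += p

# Route 1: chain rule, H(X|Y) = H(X,Y) - H(Y).
h_chain = entropy(joint) - entropy(p_y)

# Route 2: definition, sum of p(x,y) * log2(1 / p(x|y)).
h_direct = sum(p * math.log2(p_y[y] / p) for (_, y), p in joint.items())
print(h_chain, h_direct)
```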

Mutual Information

Mutual information captures the difference between the joint distribution on X and Y, and the marginal distributions on X and Y.

Let i(x;y) = log2 p(x,y)/(p(x)p(y))

I(X;Y) = Ex,y[i(x;y)] = ∑x ∑y p(x,y) log2 p(x,y)/(p(x)p(y))

| X | Y | p(X,Y) | h(X,Y) | i(X;Y) |
|---|---|---|---|---|
| x1 | y1 | 0.25 | 2.0 | 1.0 |
| x1 | y2 | 0.25 | 2.0 | 1.0 |
| x2 | y3 | 0.25 | 2.0 | 1.0 |
| x3 | y3 | 0.125 | 3.0 | 1.0 |
| x4 | y3 | 0.125 | 3.0 | 1.0 |

| X | p(X) | h(X) |
|---|---|---|
| x1 | 0.5 | 1.0 |
| x2 | 0.25 | 2.0 |
| x3 | 0.125 | 3.0 |
| x4 | 0.125 | 3.0 |

| Y | p(Y) | h(Y) |
|---|---|---|
| y1 | 0.25 | 2.0 |
| y2 | 0.25 | 2.0 |
| y3 | 0.5 | 1.0 |

Mutual Information: Strength of linkage

I(X;Y) = H(X) + H(Y) – H(X,Y) = H(X) – H(X|Y) = H(Y) – H(Y|X)

If X, Y are independent, then I(X;Y) = 0:
– H(X,Y) = H(X) + H(Y), so I(X;Y) = H(X) + H(Y) – H(X,Y) = 0

I(X;Y) <= min (H(X), H(Y))

Suppose Y = f(X) (deterministically)
– Then H(Y|X) = 0, and so I(X;Y) = H(Y) – H(Y|X) = H(Y)

Mutual information captures higher-order interactions:
– Covariance captures “linear” interactions only
– Two variables can be uncorrelated (covariance = 0) and have nonzero mutual information:
– X chosen uniformly at random from [-1,1], Y = X^2. Cov(X,Y) = 0, and since Y = f(X), I(X;Y) = H(Y) > 0
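The uncorrelated-but-dependent example can be checked numerically. The sketch below assumes a discrete stand-in for the example, with X uniform on {-1, 0, 1} and Y = X^2; that choice and the helper name `mutual_information` are illustrative assumptions.

```python
import math
from collections import defaultdict

def mutual_information(joint):
    """I(X;Y) in bits from a dict {(x, y): p(x, y)}."""
    p_x, p_y = defaultdict(float), defaultdict(float)
    for (x, y), p in joint.items():
        p_x[x] += p
        p_y[y] += p
    return sum(p * math.log2(p / (p_x[x] * p_y[y]))
               for (x, y), p in joint.items() if p > 0)

# Assumed discrete version of the example: X uniform on {-1, 0, 1}, Y = X^2.
joint = {(x, x * x): 1.0 / 3.0 for x in (-1, 0, 1)}

mean_x = sum(p * x for (x, _), p in joint.items())
mean_y = sum(p * y for (_, y), p in joint.items())
cov = sum(p * (x - mean_x) * (y - mean_y) for (x, y), p in joint.items())
mi = mutual_information(joint)
print(cov, mi)
```

Here the covariance vanishes by symmetry, while the mutual information equals H(Y), the entropy of a variable that is 1 with probability 2/3 and 0 with probability 1/3.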

Information-Theoretic Clustering

Clustering takes a collection of objects and groups them.
– Given a distance function between objects
– Choice of measure of complexity of clustering
– Choice of measure of cost for a cluster

Usually,
– Distance function is Euclidean distance
– Number of clusters is measure of complexity
– Cost measure for cluster is sum-of-squared-distance to center

Goal: minimize complexity and cost
– Inherent tradeoff between two

Feature Representation

Let V = {v1, v2, v3, v4}

X is “explained” by distribution over V.

“Feature vector” of X is [0.5, 0.25, 0.125, 0.125]

Column of data X: v1, v3, v2, v4, v1, v1, v2, v1

Aggregating counts gives the histogram f(X); normalizing gives the probability distribution p(X).

| X | f(X) | p(X) |
|---|---|---|
| v1 | 4 | 0.5 |
| v2 | 2 | 0.25 |
| v3 | 1 | 0.125 |
| v4 | 1 | 0.125 |

Feature Representation

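| X \ V | v1 | v2 | v3 | v4 |
|---|---|---|---|---|
| X1 | 0.5 | 0.25 | 0.125 | 0.125 |
| X2 | 0.5 | 0.2 | 0.15 | 0.15 |

Each row is the feature vector of one object; for example, p(v2|X2) = 0.2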

Information-Theoretic Clustering

Clustering takes a collection of objects and groups them.
– Given a distance function between objects
– Choice of measure of complexity of clustering
– Choice of measure of cost for a cluster

In information-theoretic setting
– What is the distance function ?
– How do we measure complexity ?
– What is a notion of cost/quality ?

Goal: minimize complexity and maximize quality
– Inherent tradeoff between two

Measuring complexity of clustering

Take 1: complexity of a clustering = #clusters
– standard model of complexity.

Doesn’t capture the fact that clusters have different sizes.

Measuring complexity of clustering

Take 2: Complexity of clustering = number of bits needed to describe it.

Writing down “k” needs log k bits.

In general, let cluster t ∈ T have |t| elements.
– set p(t) = |t|/n
– #bits to write down cluster sizes = H(T) = ∑t pt log 1/pt

[Figure: two clusterings compared by entropy, H( ) < H( )]

Information-theoretic Clustering (take I)

Given data X = x1, ..., xn explained by variable V, partition X

into clusters (represented by T) such that

H(T) is minimized and quality is maximized

Soft clusterings

In a “hard” clustering, each point is assigned to exactly one cluster.
– Characteristic function: p(t|x) = 1 if x ∈ t, 0 if not.

Suppose we allow points to partially belong to clusters:
– p(T|x) is a distribution.
– p(t|x) is the “probability” of assigning x to t

How do we describe the complexity of a clustering ?

Measuring complexity of clustering

Take 1:
– p(t) = ∑x p(x) p(t|x)
– Compute H(T) as before.

Problem:

| T1 | t1 | t2 |
|---|---|---|
| x1 | 0.5 | 0.5 |
| x2 | 0.5 | 0.5 |
| p(T) | 0.5 | 0.5 |

| T2 | t1 | t2 |
|---|---|---|
| x1 | 0.99 | 0.01 |
| x2 | 0.01 | 0.99 |
| p(T) | 0.5 | 0.5 |

H(T1) = H(T2) !!

Measuring complexity of clustering

By averaging the memberships, we’ve lost useful information.

Take II: Compute I(T;X) !

| X | T1 | p(X,T) | i(X;T) |
|---|---|---|---|
| x1 | t1 | 0.25 | 0 |
| x1 | t2 | 0.25 | 0 |
| x2 | t1 | 0.25 | 0 |
| x2 | t2 | 0.25 | 0 |

| X | T2 | p(X,T) | i(X;T) |
|---|---|---|---|
| x1 | t1 | 0.495 | 0.99 |
| x1 | t2 | 0.005 | -5.64 |
| x2 | t1 | 0.005 | -5.64 |
| x2 | t2 | 0.495 | 0.99 |

I(T1;X) = 0

I(T2;X) = 0.92

Even better: If T is a hard clustering of X, then I(T;X) = H(T)
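A short sketch of the Take II measure, assuming p(x) = 0.5 for both points and the membership tables T1 and T2 given earlier. The helper names are hypothetical. For T2 the sketch fills every (x, t) row from p(t|x), so p(x2,t1) = 0.005 and p(x2,t2) = 0.495.

```python
import math
from collections import defaultdict

def mutual_information(joint):
    """I(X;T) in bits from a dict {(x, t): p(x, t)}."""
    p_x, p_t = defaultdict(float), defaultdict(float)
    for (x, t), p in joint.items():
        p_x[x] += p
        p_t[t] += p
    return sum(p * math.log2(p / (p_x[x] * p_t[t]))
               for (x, t), p in joint.items() if p > 0)

def joint_from_memberships(p_x, p_t_given_x):
    """p(x, t) = p(x) * p(t | x)."""
    return {(x, t): p_x[x] * pt
            for x, row in p_t_given_x.items() for t, pt in row.items()}

p_x = {"x1": 0.5, "x2": 0.5}
T1 = {"x1": {"t1": 0.5, "t2": 0.5}, "x2": {"t1": 0.5, "t2": 0.5}}
T2 = {"x1": {"t1": 0.99, "t2": 0.01}, "x2": {"t1": 0.01, "t2": 0.99}}

i_t1 = mutual_information(joint_from_memberships(p_x, T1))
i_t2 = mutual_information(joint_from_memberships(p_x, T2))
print(i_t1, i_t2)
```

Both clusterings have the same marginal p(T), so H(T) cannot tell them apart, while I(T;X) is zero for the uninformative T1 and close to one bit for the nearly hard T2.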

Information-theoretic Clustering (take II)

Given data X = x1, ..., xn explained by variable V, partition X

into clusters (represented by T) such that

I(T;X) is minimized and quality is maximized

Measuring cost of a cluster

Given objects Xt = {X1, X2, …, Xm} in cluster t,

Cost(t) = (1/m) ∑i d(Xi, C) = ∑i p(Xi) dKL(p(V|Xi), C)

where C = (1/m) ∑i p(V|Xi) = ∑i p(Xi) p(V|Xi) = p(V)

Mutual Information = Cost of Cluster

Cost(t) = (1/m) ∑i d(Xi, C) = ∑i p(Xi) dKL(p(V|Xi), p(V))

∑i p(Xi) dKL(p(V|Xi), p(V)) = ∑i p(Xi) ∑j p(vj|Xi) log p(vj|Xi)/p(vj)

= ∑i,j p(Xi, vj) log p(vj, Xi)/(p(vj)p(Xi))

= I(Xt;V) !!

Cost of a cluster = I(Xt;V)
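The identity between average KL divergence to the cluster center and mutual information can be checked on a tiny cluster. The sketch below uses the two feature vectors X1 and X2 from the feature representation table and assumes equal weights p(Xi) = 1/m; the variable names are illustrative.

```python
import math

def kl(p, q):
    """KL divergence in bits between two aligned probability vectors."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Feature vectors p(V | Xi) for the two objects in the cluster.
features = [[0.5, 0.25, 0.125, 0.125],
            [0.5, 0.2, 0.15, 0.15]]
m = len(features)

# Cluster center C = (1/m) * sum_i p(V | Xi), which is also p(V).
center = [sum(col) / m for col in zip(*features)]

# Cost(t) = (1/m) * sum_i dKL(p(V | Xi), C).
cost = sum(kl(f, center) for f in features) / m

# I(Xt; V) from the joint p(Xi, vj) = (1/m) * p(vj | Xi).
joint = [[fj / m for fj in f] for f in features]
mi = sum(pij * math.log2(pij / ((1.0 / m) * center[j]))
         for row in joint for j, pij in enumerate(row) if pij > 0)
print(cost, mi)
```

The two numbers agree up to floating-point error, which is the content of the derivation above.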

Cost of a clustering

If we partition X into k clusters X1, ..., Xk

Cost(clustering) = ∑i pi I(Xi;V)

(pi = |Xi|/|X|)

Each cluster center t can be “explained” in terms of V:
– p(V|t) = ∑i p(Xi) p(V|Xi)

Suppose we treat each cluster center itself as a point:

We can write down the “cost” of this “cluster”
– Cost(T) = I(T;V)

Key result [BMDG05] :

Cost(clustering) = I(X;V) – I(T;V)

Minimizing cost(clustering) => maximizing I(T;V)

Information-theoretic Clustering (take III)

Given data X = x1, ..., xn explained by variable V, partition X

into clusters (represented by T) such that

I(T;X) – βI(T;V) is minimized

This is the Information Bottleneck Method [TPB98]

Agglomerative techniques exist for the case of ‘hard’ clusterings

β is the tradeoff parameter between complexity and cost

I(T;X) and I(T;V) are in the same units.

Information Theory: Summary

We can represent data as discrete distributions (normalized

histograms)

Entropy captures uncertainty or information content in a

distribution

The Kullback-Leibler divergence captures the difference between distributions

Mutual information and conditional entropy capture linkage

between variables in a joint distribution

We can formulate information-theoretic clustering problems

Outline

Part 1

Introduction to Information Theory

Application: Data Anonymization

Application: Data Integration

Part 2


Review of Information Theory Basics

Application: Database Design

Computing Information Theoretic Primitives

Open Problems

Data Anonymization Using Randomization

Goal: publish anonymized microdata to enable accurate ad hoc analyses, but ensure privacy of individuals’ sensitive attributes

Key ideas:
– Randomize numerical data: add noise from known distribution
– Reconstruct original data distribution using published noisy data

Issues:
– How can the original data distribution be reconstructed?
– What kinds of randomization preserve privacy of individuals?

Information Theory for Data Management - Divesh & Suresh

Data Anonymization Using Randomization

Many randomization strategies proposed [AS00, AA01, EGS03]

Example randomization strategies: X in [0, 10]
– R = X + μ (mod 11), μ is uniform in {-1, 0, 1}
– R = X + μ (mod 11), μ is in {-1 (p = 0.25), 0 (p = 0.5), 1 (p = 0.25)}
– R = X (p = 0.6), R = μ, μ is uniform in [0, 10] (p = 0.4)

Question:
– Which randomization strategy has higher privacy preservation?
– Quantify loss of privacy due to publication of randomized data

Data Anonymization Using Randomization

X in [0, 10], R1 = X + μ (mod 11), μ is uniform in {-1, 0, 1}

| Id | X | μ | R1 |
|---|---|---|---|
| s1 | 0 | -1 | 10 |
| s2 | 3 | 0 | 3 |
| s3 | 5 | 1 | 6 |
| s4 | 0 | 0 | 0 |
| s5 | 8 | 1 | 9 |
| s6 | 0 | -1 | 10 |
| s7 | 6 | 1 | 7 |
| s8 | 0 | 0 | 0 |
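The additive randomization can be sketched as follows. The function name `randomize` is a hypothetical helper; the first call replays the noise values from the table above, and the second draws fresh noise with a seeded generator purely for illustration.

```python
import random

def randomize(x, mu, modulus=11):
    """R = X + mu (mod 11); Python's % keeps the result in [0, modulus)."""
    return (x + mu) % modulus

xs = [0, 3, 5, 0, 8, 0, 6, 0]
mus = [-1, 0, 1, 0, 1, -1, 1, 0]          # noise values from the table
r1 = [randomize(x, mu) for x, mu in zip(xs, mus)]
print(r1)

# A fresh draw: mu uniform in {-1, 0, 1} (seed chosen arbitrarily).
rng = random.Random(0)
fresh = [randomize(x, rng.choice([-1, 0, 1])) for x in xs]
print(fresh)
```

Whatever noise is drawn, each published value differs from the original by -1, 0 or 1 modulo 11, which is what an observer exploits when reasoning about X given R1.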

Data Anonymization Using Randomization

X in [0, 10], R1 = X + μ (mod 11), μ is uniform in {-1, 0, 1}

| Id | X | μ | R1 |
|---|---|---|---|
| s1 | 0 | 0 | 0 |
| s2 | 3 | -1 | 2 |
| s3 | 5 | 0 | 5 |
| s4 | 0 | 1 | 1 |
| s5 | 8 | 1 | 9 |
| s6 | 0 | -1 | 10 |
| s7 | 6 | -1 | 5 |
| s8 | 0 | 1 | 1 |

Reconstruction of Original Data Distribution

X in [0, 10], R1 = X + μ (mod 11), μ is uniform in {-1, 0, 1}
– Reconstruct distribution of X using knowledge of R1 and μ
– EM algorithm converges to MLE of original distribution [AA01]

| Id | X | μ | R1 | X \| R1 |
|---|---|---|---|---|
| s1 | 0 | 0 | 0 | {10, 0, 1} |
| s2 | 3 | -1 | 2 | {1, 2, 3} |
| s3 | 5 | 0 | 5 | {4, 5, 6} |
| s4 | 0 | 1 | 1 | {0, 1, 2} |
| s5 | 8 | 1 | 9 | {8, 9, 10} |
| s6 | 0 | -1 | 10 | {9, 10, 0} |
| s7 | 6 | -1 | 5 | {4, 5, 6} |
| s8 | 0 | 1 | 1 | {0, 1, 2} |

Analysis of Privacy [AS00]

X in [0, 10], R1 = X + μ (mod 11), μ is uniform in {-1, 0, 1}
– If X is uniform in [0, 10], privacy determined by range of μ

| Id | X | μ | R1 | X \| R1 |
|---|---|---|---|---|
| s1 | 0 | 0 | 0 | {10, 0, 1} |
| s2 | 3 | -1 | 2 | {1, 2, 3} |
| s3 | 5 | 0 | 5 | {4, 5, 6} |
| s4 | 0 | 1 | 1 | {0, 1, 2} |
| s5 | 8 | 1 | 9 | {8, 9, 10} |
| s6 | 0 | -1 | 10 | {9, 10, 0} |
| s7 | 6 | -1 | 5 | {4, 5, 6} |
| s8 | 0 | 1 | 1 | {0, 1, 2} |

Analysis of Privacy [AA01]

X in [0, 10], R1 = X + μ (mod 11), μ is uniform in {-1, 0, 1}
– If X is uniform in [0, 1] ∪ [5, 6], privacy smaller than range of μ

| Id | X | μ | R1 | X \| R1 |
|---|---|---|---|---|
| s1 | 0 | 0 | 0 | {10, 0, 1} |
| s2 | 1 | -1 | 0 | {10, 0, 1} |
| s3 | 5 | 0 | 5 | {4, 5, 6} |
| s4 | 6 | 1 | 7 | {6, 7, 8} |
| s5 | 0 | 1 | 1 | {0, 1, 2} |
| s6 | 1 | -1 | 0 | {10, 0, 1} |
| s7 | 5 | -1 | 4 | {3, 4, 5} |
| s8 | 6 | 1 | 7 | {6, 7, 8} |

Analysis of Privacy [AA01]

X in [0, 10], R1 = X + μ (mod 11), μ is uniform in {-1, 0, 1}
– If X is uniform in [0, 1] ∪ [5, 6], privacy smaller than range of μ
– In some cases, sensitive value revealed

| Id | X | μ | R1 | X \| R1 |
|---|---|---|---|---|
| s1 | 0 | 0 | 0 | {0, 1} |
| s2 | 1 | -1 | 0 | {0, 1} |
| s3 | 5 | 0 | 5 | {5, 6} |
| s4 | 6 | 1 | 7 | {6} |
| s5 | 0 | 1 | 1 | {0, 1} |
| s6 | 1 | -1 | 0 | {0, 1} |
| s7 | 5 | -1 | 4 | {5} |
| s8 | 6 | 1 | 7 | {6} |

Quantify Loss of Privacy [AA01]

Goal: quantify loss of privacy based on mutual information I(X;R)
– Smaller H(X|R) => more loss of privacy in X by knowledge of R
– Larger I(X;R) => more loss of privacy in X by knowledge of R
– I(X;R) = H(X) – H(X|R)

I(X;R) used to capture correlation between X and R
– p(X) is the prior knowledge of sensitive attribute X
– p(X, R) is the joint distribution of X and R


Quantify Loss of Privacy [AA01]

Goal: quantify loss of privacy based on mutual information I(X;R)
– X is uniform in [5, 6], R1 = X + μ (mod 11), μ is uniform in {-1, 0, 1}
– I(X;R1) = 0.33

| X | R1 | p(X,R1) | h(X,R1) | i(X;R1) |
|---|---|---|---|---|
| 5 | 4 | 0.17 | 2.58 | 1.0 |
| 5 | 5 | 0.17 | 2.58 | 0.0 |
| 5 | 6 | 0.17 | 2.58 | 0.0 |
| 6 | 5 | 0.17 | 2.58 | 0.0 |
| 6 | 6 | 0.17 | 2.58 | 0.0 |
| 6 | 7 | 0.17 | 2.58 | 1.0 |

| X | p(X) | h(X) |
|---|---|---|
| 5 | 0.5 | 1.0 |
| 6 | 0.5 | 1.0 |

| R1 | p(R1) | h(R1) |
|---|---|---|
| 4 | 0.17 | 2.58 |
| 5 | 0.33 | 1.58 |
| 6 | 0.33 | 1.58 |
| 7 | 0.17 | 2.58 |

Quantify Loss of Privacy [AA01]

Goal: quantify loss of privacy based on mutual information I(X;R)
– X is uniform in [5, 6], R2 = X + μ (mod 11), μ is uniform in {0, 1}
– I(X;R1) = 0.33, I(X;R2) = 0.5 => R2 is a bigger privacy risk than R1

| X | R2 | p(X,R2) | h(X,R2) | i(X;R2) |
|---|---|---|---|---|
| 5 | 5 | 0.25 | 2.0 | 1.0 |
| 5 | 6 | 0.25 | 2.0 | 0.0 |
| 6 | 6 | 0.25 | 2.0 | 0.0 |
| 6 | 7 | 0.25 | 2.0 | 1.0 |

| X | p(X) | h(X) |
|---|---|---|
| 5 | 0.5 | 1.0 |
| 6 | 0.5 | 1.0 |

| R2 | p(R2) | h(R2) |
|---|---|---|
| 5 | 0.25 | 2.0 |
| 6 | 0.5 | 1.0 |
| 7 | 0.25 | 2.0 |
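The two privacy-loss figures can be reproduced from the definitions. This is a sketch under the stated setup (X uniform on {5, 6}, additive noise mod 11); the helper names `additive_noise_joint` and `mutual_information` are illustrative, not from [AA01].

```python
import math
from collections import defaultdict

def additive_noise_joint(p_x, p_mu, modulus=11):
    """Joint p(x, r) for R = X + mu (mod modulus), X and mu independent."""
    joint = defaultdict(float)
    for x, px in p_x.items():
        for mu, pmu in p_mu.items():
            joint[(x, (x + mu) % modulus)] += px * pmu
    return dict(joint)

def mutual_information(joint):
    """I(X;R) in bits from a dict {(x, r): p(x, r)}."""
    p_x, p_r = defaultdict(float), defaultdict(float)
    for (x, r), p in joint.items():
        p_x[x] += p
        p_r[r] += p
    return sum(p * math.log2(p / (p_x[x] * p_r[r]))
               for (x, r), p in joint.items() if p > 0)

p_x = {5: 0.5, 6: 0.5}
i_r1 = mutual_information(
    additive_noise_joint(p_x, {-1: 1 / 3, 0: 1 / 3, 1: 1 / 3}))
i_r2 = mutual_information(additive_noise_joint(p_x, {0: 0.5, 1: 0.5}))

# H(X) = 1 bit here, so H(X|R) = 1 - I(X;R).
print(i_r1, i_r2, 1 - i_r1, 1 - i_r2)
```

The narrower noise range of R2 leaves less residual uncertainty about X, which shows up as the larger mutual information.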

Quantify Loss of Privacy [AA01]

Equivalent goal: quantify loss of privacy based on H(X|R)
– X is uniform in [5, 6], R2 = X + μ (mod 11), μ is uniform in {0, 1}
– Intuition: we know more about X given R2, than about X given R1
– H(X|R1) = 0.67, H(X|R2) = 0.5 => R2 is a bigger privacy risk than R1

| X | R1 | p(X,R1) | p(X\|R1) | h(X\|R1) |
|---|---|---|---|---|
| 5 | 4 | 0.17 | 1.0 | 0.0 |
| 5 | 5 | 0.17 | 0.5 | 1.0 |
| 5 | 6 | 0.17 | 0.5 | 1.0 |
| 6 | 5 | 0.17 | 0.5 | 1.0 |
| 6 | 6 | 0.17 | 0.5 | 1.0 |
| 6 | 7 | 0.17 | 1.0 | 0.0 |

| X | R2 | p(X,R2) | p(X\|R2) | h(X\|R2) |
|---|---|---|---|---|
| 5 | 5 | 0.25 | 1.0 | 0.0 |
| 5 | 6 | 0.25 | 0.5 | 1.0 |
| 6 | 6 | 0.25 | 0.5 | 1.0 |
| 6 | 7 | 0.25 | 1.0 | 0.0 |

Quantify Loss of Privacy

Example: X is uniform in [0, 1]
– R3 = e (p = 0.9999), R3 = X (p = 0.0001)
– R4 = X (p = 0.6), R4 = 1 – X (p = 0.4)

Is R3 or R4 a bigger privacy risk?


Worst Case Loss of Privacy [EGS03]

Example: X is uniform in [0, 1]
– R3 = e (p = 0.9999), R3 = X (p = 0.0001)
– R4 = X (p = 0.6), R4 = 1 – X (p = 0.4)

| X | R3 | p(X,R3) | h(X,R3) | i(X;R3) |
|---|---|---|---|---|
| 0 | e | 0.49995 | 1.0 | 0.0 |
| 0 | 0 | 0.00005 | 14.29 | 1.0 |
| 1 | e | 0.49995 | 1.0 | 0.0 |
| 1 | 1 | 0.00005 | 14.29 | 1.0 |

| X | R4 | p(X,R4) | h(X,R4) | i(X;R4) |
|---|---|---|---|---|
| 0 | 0 | 0.3 | 1.74 | 0.26 |
| 0 | 1 | 0.2 | 2.32 | -0.32 |
| 1 | 0 | 0.2 | 2.32 | -0.32 |
| 1 | 1 | 0.3 | 1.74 | 0.26 |

I(X;R3) = 0.0001 << I(X;R4) = 0.028
– But R3 has a larger worst case risk

Worst Case Loss of Privacy [EGS03]

Goal: quantify worst case loss of privacy in X by knowledge of R
– Use max KL divergence, instead of mutual information

Mutual information can be formulated as expected KL divergence
– I(X;R) = ∑x ∑r p(x,r)*log2(p(x,r)/(p(x)*p(r))) = KL(p(X,R) || p(X)*p(R))
– I(X;R) = ∑r p(r) ∑x p(x|r)*log2(p(x|r)/p(x)) = ER [KL(p(X|r) || p(X))]
– [AA01] measure quantifies expected loss of privacy over R

[EGS03] propose a measure based on worst case loss of privacy
– IW(X;R) = MAXr [KL(p(X|r) || p(X))]

Worst Case Loss of Privacy [EGS03]

Example: X is uniform in [0, 1]
– R3 = e (p = 0.9999), R3 = X (p = 0.0001)
– R4 = X (p = 0.6), R4 = 1 – X (p = 0.4)

| X | R3 | p(X,R3) | p(X\|R3) | i(X;R3) |
|---|---|---|---|---|
| 0 | e | 0.49995 | 0.5 | 0.0 |
| 0 | 0 | 0.00005 | 1.0 | 1.0 |
| 1 | e | 0.49995 | 0.5 | 0.0 |
| 1 | 1 | 0.00005 | 1.0 | 1.0 |

| X | R4 | p(X,R4) | p(X\|R4) | i(X;R4) |
|---|---|---|---|---|
| 0 | 0 | 0.3 | 0.6 | 0.26 |
| 0 | 1 | 0.2 | 0.4 | -0.32 |
| 1 | 0 | 0.2 | 0.4 | -0.32 |
| 1 | 1 | 0.3 | 0.6 | 0.26 |

IW(X;R3) = max{0.0, 1.0, 1.0} > IW(X;R4) = max{0.028, 0.028}
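The worst case measure can be sketched as a maximum over published values r of the KL divergence between the posterior p(X|r) and the prior p(X). The function name `worst_case_loss` is a hypothetical helper, and the joint tables are copied from the R3 and R4 example.

```python
import math
from collections import defaultdict

def worst_case_loss(joint):
    """IW(X;R) = max over r of KL(p(X|r) || p(X)), in bits."""
    p_x, p_r = defaultdict(float), defaultdict(float)
    for (x, r), p in joint.items():
        p_x[x] += p
        p_r[r] += p
    kl_by_r = defaultdict(float)
    for (x, r), p in joint.items():
        if p > 0:
            cond = p / p_r[r]                      # p(x | r)
            kl_by_r[r] += cond * math.log2(cond / p_x[x])
    return max(kl_by_r.values())

# "e" is the uninformative symbol published by R3 most of the time.
joint_r3 = {(0, "e"): 0.49995, (0, 0): 0.00005,
            (1, "e"): 0.49995, (1, 1): 0.00005}
joint_r4 = {(0, 0): 0.3, (0, 1): 0.2, (1, 0): 0.2, (1, 1): 0.3}

w3 = worst_case_loss(joint_r3)
w4 = worst_case_loss(joint_r4)
print(w3, w4)
```

R3 rarely reveals anything, but when it does it reveals X completely, so its worst case loss is a full bit even though its expected loss is tiny.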

Worst Case Loss of Privacy [EGS03]

Example: X is uniform in [5, 6]
– R1 = X + μ (mod 11), μ is uniform in {-1, 0, 1}
– R2 = X + μ (mod 11), μ is uniform in {0, 1}

| X | R1 | p(X,R1) | p(X\|R1) | i(X;R1) |
|---|---|---|---|---|
| 5 | 4 | 0.17 | 1.0 | 1.0 |
| 5 | 5 | 0.17 | 0.5 | 0.0 |
| 5 | 6 | 0.17 | 0.5 | 0.0 |
| 6 | 5 | 0.17 | 0.5 | 0.0 |
| 6 | 6 | 0.17 | 0.5 | 0.0 |
| 6 | 7 | 0.17 | 1.0 | 1.0 |

| X | R2 | p(X,R2) | p(X\|R2) | i(X;R2) |
|---|---|---|---|---|
| 5 | 5 | 0.25 | 1.0 | 1.0 |
| 5 | 6 | 0.25 | 0.5 | 0.0 |
| 6 | 6 | 0.25 | 0.5 | 0.0 |
| 6 | 7 | 0.25 | 1.0 | 1.0 |

IW(X;R1) = max{1.0, 0.0, 0.0, 1.0} = IW(X;R2) = max{1.0, 0.0, 1.0}
– Unable to capture that R2 is a bigger privacy risk than R1

Data Anonymization: Summary

Randomization techniques useful for microdata anonymization
– Randomization techniques differ in their loss of privacy

Information theoretic measures useful to capture loss of privacy
– Expected KL divergence captures expected loss of privacy [AA01]
– Maximum KL divergence captures worst case loss of privacy [EGS03]
– Both are useful in practice

Outline

Part 1

Introduction to Information Theory

Application: Data Anonymization

Application: Data Integration

Part 2


Review of Information Theory Basics

Application: Database Design

Computing Information Theoretic Primitives

Open Problems


Schema Matching

Goal: align columns across database tables to be integrated
– Fundamental problem in database integration

Early useful approach: textual similarity of column names
– False positives: Address ≠ IP_Address
– False negatives: Customer_Id = Client_Number

Early useful approach: overlap of values in columns, e.g., Jaccard
– False positives: Emp_Id ≠ Project_Id
– False negatives: Emp_Id = Personnel_Number

Opaque Schema Matching [KN03]

Goal: align columns when column names, data values are opaque
– Databases belong to different government bureaucracies
– Treat column names and data values as uninterpreted (generic)

| A | B | C | D |
|---|---|---|---|
| a1 | b2 | c1 | d1 |
| a3 | b4 | c2 | d2 |
| a1 | b1 | c1 | d2 |
| a4 | b3 | c2 | d3 |

| W | X | Y | Z |
|---|---|---|---|
| w2 | x1 | y1 | z2 |
| w4 | x2 | y3 | z3 |
| w3 | x3 | y3 | z1 |
| w1 | x2 | y1 | z2 |

Example: EMP_PROJ(Emp_Id, Proj_Id, Task_Id, Status_Id)
– Likely that all Id fields are from the same domain
– Different databases may have different column names

Opaque Schema Matching [KN03]

Approach: build complete, labeled graph GD for each database D
– Nodes are columns, label(node(X)) = H(X), label(edge(X, Y)) = I(X;Y)
– Perform graph matching between GD1 and GD2, minimizing distance

Intuition:
– Entropy H(X) captures distribution of values in database column X
– Mutual information I(X;Y) captures correlations between X, Y
– Efficiency: graph matching between schema-sized graphs

Opaque Schema Matching [KN03]

Approach: build complete, labeled graph GD for each database D
– Nodes are columns, label(node(X)) = H(X), label(edge(X, Y)) = I(X;Y)

| A | B | C | D |
|---|---|---|---|
| a1 | b2 | c1 | d1 |
| a3 | b4 | c2 | d2 |
| a1 | b1 | c1 | d2 |
| a4 | b3 | c2 | d3 |

| A | p(A) | h(A) |
|---|---|---|
| a1 | 0.5 | 1.0 |
| a3 | 0.25 | 2.0 |
| a4 | 0.25 | 2.0 |

| B | p(B) | h(B) |
|---|---|---|
| b1 | 0.25 | 2.0 |
| b2 | 0.25 | 2.0 |
| b3 | 0.25 | 2.0 |
| b4 | 0.25 | 2.0 |

| C | p(C) | h(C) |
|---|---|---|
| c1 | 0.5 | 1.0 |
| c2 | 0.5 | 1.0 |

| D | p(D) | h(D) |
|---|---|---|
| d1 | 0.25 | 2.0 |
| d2 | 0.5 | 1.0 |
| d3 | 0.25 | 2.0 |

| A | B | h(A,B) | i(A;B) |
|---|---|---|---|
| a1 | b2 | 2.0 | 1.0 |
| a3 | b4 | 2.0 | 2.0 |
| a1 | b1 | 2.0 | 1.0 |
| a4 | b3 | 2.0 | 2.0 |

H(A) = 1.5, H(B) = 2.0, H(C) = 1.0, H(D) = 1.5, I(A;B) = 1.5
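The node and edge labels of the graph can be computed directly from the rows of the example table. The sketch below assumes the table is a list of tuples; `entropy`, `node_labels` and `edge_labels` are illustrative names, and the graph matching step itself is not shown.

```python
import math
from collections import Counter
from itertools import combinations

def entropy(values):
    """Empirical entropy in bits of a list of (hashable) values."""
    n = len(values)
    return sum((c / n) * math.log2(n / c) for c in Counter(values).values())

rows = [("a1", "b2", "c1", "d1"),
        ("a3", "b4", "c2", "d2"),
        ("a1", "b1", "c1", "d2"),
        ("a4", "b3", "c2", "d3")]
names = ["A", "B", "C", "D"]
columns = {name: [row[i] for row in rows] for i, name in enumerate(names)}

# label(node(X)) = H(X)
node_labels = {name: entropy(col) for name, col in columns.items()}

# label(edge(X, Y)) = I(X;Y) = H(X) + H(Y) - H(X,Y)
edge_labels = {
    (u, v): node_labels[u] + node_labels[v]
            - entropy(list(zip(columns[u], columns[v])))
    for u, v in combinations(names, 2)
}
print(node_labels)
print(edge_labels)
```

Because the labels depend only on value frequencies and co-occurrences, the same code applies unchanged to a table whose column names and values are opaque.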

Opaque Schema Matching [KN03]

Approach: build complete, labeled graph GD for each database D
– Nodes are columns, label(node(X)) = H(X), label(edge(X, Y)) = I(X;Y)

| A | B | C | D |
|---|---|---|---|
| a1 | b2 | c1 | d1 |
| a3 | b4 | c2 | d2 |
| a1 | b1 | c1 | d2 |
| a4 | b3 | c2 | d3 |

[Figure: complete graph on columns A, B, C, D. Node labels H: A = 1.5, B = 2.0, C = 1.0, D = 1.5. Edge labels I: A–B = 1.5, A–C = 1.0, A–D = 1.0, B–C = 1.0, B–D = 1.5, C–D = 0.5]

Opaque Schema Matching [KN03]

Approach: build complete, labeled graph GD for each database D
– Nodes are columns, label(node(X)) = H(X), label(edge(X, Y)) = I(X;Y)
– Perform graph matching between GD1 and GD2, minimizing distance

[Figure: labeled graph on columns A, B, C, D matched against labeled graph on columns W, X, Y, Z]

[KN03] uses Euclidean and normal distance metrics

Heterogeneity Identification [DKOSV06]

Goal: identify columns with semantically heterogeneous values
– Can arise due to opaque schema matching [KN03]

Key ideas:
– Heterogeneity based on distribution, distinguishability of values
– Use Information Bottleneck to compute soft clustering of values

Issues:
– Which information theoretic measure characterizes heterogeneity?
– How to set parameters in the Information Bottleneck method?


Heterogeneity Identification [DKOSV06]

Example: semantically homogeneous, heterogeneous columns

| Customer_Id | Customer_Id |
|---|---|
| h8742@yyy.com | h8742@yyy.com |
| kkjj+@haha.org | kkjj+@haha.org |
| qwerty@keyboard.us | qwerty@keyboard.us |
| 555-1212@fax.in | 555-1212@fax.in |
| alpha@beta.ga | (908)-555-1234 |
| john.smith@noname.org | 973-360-0000 |
| jane.doe@1973law.us | 360-0007 |
| jamesbond.007@action.com | (877)-807-4596 |

More semantic types in column => greater heterogeneity
– Only email versus email + phone


Heterogeneity Identification [DKOSV06]

Example: semantically homogeneous, heterogeneous columns

| Customer_Id | Customer_Id |
|---|---|
| h8742@yyy.com | h8742@yyy.com |
| kkjj+@haha.org | kkjj+@haha.org |
| qwerty@keyboard.us | qwerty@keyboard.us |
| 555-1212@fax.in | 555-1212@fax.in |
| alpha@beta.ga | (908)-555-1234 |
| john.smith@noname.org | 973-360-0000 |
| jane.doe@1973law.us | 360-0007 |
| (877)-807-4596 | (877)-807-4596 |

Relative distribution of semantic types impacts heterogeneity
– Mainly email + few phone versus balanced email + phone


Heterogeneity Identification [DKOSV06]

Example: semantically homogeneous, heterogeneous columns

Customer_Id

Customer_Id

187-65-2468

h8742@yyy.com

987-64-6837

kkjj+@haha.org

789-15-4321

qwerty@keyboard.us

987-65-4321

555-1212@fax.in

(908)-555-1234

(908)-555-1234

973-360-0000

973-360-0000

360-0007

360-0007

(877)-807-4596

(877)-807-4596

More easily distinguished types greater heterogeneity

–

81

Phone + (possibly) SSN versus balanced email + phone

Heterogeneity Identification [DKOSV06]

Heterogeneity = space complexity of soft clustering of the data

– More, balanced clusters ⇒ greater heterogeneity
– More distinguishable clusters ⇒ greater heterogeneity

Soft clustering

– Soft: assign probabilities to membership of values in clusters
– How many clusters: tradeoff between space and quality
– Use Information Bottleneck to compute soft clustering of values

Heterogeneity Identification [DKOSV06]

Hard clustering

| X = Customer_Id | T = Cluster_Id |
| --- | --- |
| 187-65-2468 | t1 |
| 987-64-6837 | t1 |
| 789-15-4321 | t1 |
| 987-65-4321 | t1 |
| (908)-555-1234 | t2 |
| 973-360-0000 | t1 |
| 360-0007 | t3 |
| (877)-807-4596 | t2 |

Heterogeneity Identification [DKOSV06]

Soft clustering: cluster membership probabilities

| X = Customer_Id | T = Cluster_Id | p(T\|X) |
| --- | --- | --- |
| 789-15-4321 | t1 | 0.75 |
| 987-65-4321 | t1 | 0.75 |
| 789-15-4321 | t2 | 0.25 |
| 987-65-4321 | t2 | 0.25 |
| (908)-555-1234 | t1 | 0.25 |
| 973-360-0000 | t1 | 0.5 |
| (908)-555-1234 | t2 | 0.75 |
| 973-360-0000 | t2 | 0.5 |

How to compute a good soft clustering?

Heterogeneity Identification [DKOSV06]

Represent strings as q-gram distributions

| Customer_Id |
| --- |
| 187-65-2468 |
| 987-64-6837 |
| 789-15-4321 |
| 987-65-4321 |
| (908)-555-1234 |
| 973-360-0000 |
| 360-0007 |
| (877)-807-4596 |

| X = Customer_Id | V = 4-grams | p(X,V) |
| --- | --- | --- |
| 987-65-4321 | 987- | 0.10 |
| 987-65-4321 | 87-6 | 0.13 |
| 987-65-4321 | 7-65 | 0.12 |
| 987-65-4321 | -65- | 0.15 |
| 987-65-4321 | 65-4 | 0.05 |
| 987-65-4321 | 5-43 | 0.20 |
| 987-65-4321 | -432 | 0.15 |
| 987-65-4321 | 4321 | 0.10 |
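The q-gram representation can be sketched in a few lines of Python. This is only an illustration: the function name `qgram_distribution` is an assumption, and it weights every q-gram occurrence equally, whereas the p(X,V) values on the slide are not uniform and presumably come from a weighting scheme that the slide does not spell out.

```python
from collections import Counter


def qgram_distribution(s, q=4):
    """Return {q-gram: relative frequency} for the string s (uniform weighting)."""
    if len(s) < q:
        raise ValueError("string is shorter than q")
    # Slide a window of width q over the string.
    grams = [s[i:i + q] for i in range(len(s) - q + 1)]
    counts = Counter(grams)
    return {g: c / len(grams) for g, c in counts.items()}


dist = qgram_distribution("987-65-4321", q=4)
for gram, p in dist.items():
    print(repr(gram), p)
```

The eight 4-grams produced for 987-65-4321 are the same ones listed in the V column.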

Heterogeneity Identification [DKOSV06]

iIB: find soft clustering T of X that minimizes I(T;X) – β * I(T;V)

(X = Customer_Id, V = 4-grams and p(X,V) as in the q-gram table above)

– Allow iIB to use arbitrarily many clusters, use β* = H(X)/I(X;V)
– Closest to point with minimum space and maximum quality
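To make the objective concrete, the sketch below evaluates I(T;X) – β * I(T;V) for a given soft clustering p(t|x). It does not run the iterative iIB optimization itself. The helper names and the two-value toy distribution are assumptions made for illustration, not data from [DKOSV06].

```python
from collections import defaultdict
from math import log2


def mutual_information(joint):
    """I(A;B) in bits for a joint distribution {(a, b): probability}."""
    pa, pb = defaultdict(float), defaultdict(float)
    for (a, b), p in joint.items():
        pa[a] += p
        pb[b] += p
    return sum(p * log2(p / (pa[a] * pb[b]))
               for (a, b), p in joint.items() if p > 0)


def ib_objective(p_xv, p_t_given_x, beta):
    """Evaluate I(T;X) - beta * I(T;V) for a soft clustering p(t|x)."""
    p_x = defaultdict(float)
    for (x, _v), p in p_xv.items():
        p_x[x] += p
    # p(t, x) = p(x) * p(t|x)
    p_tx = {(t, x): p_x[x] * q
            for x, row in p_t_given_x.items() for t, q in row.items()}
    # p(t, v) = sum_x p(x, v) * p(t|x), since T depends on V only through X
    p_tv = defaultdict(float)
    for (x, v), p in p_xv.items():
        for t, q in p_t_given_x[x].items():
            p_tv[(t, v)] += p * q
    return mutual_information(p_tx) - beta * mutual_information(p_tv)


# Hypothetical toy input: two values, each with its own distinguishing feature.
p_xv = {("a", "v1"): 0.5, ("b", "v2"): 0.5}
one_cluster = {"a": {"t1": 1.0}, "b": {"t1": 1.0}}
two_clusters = {"a": {"t1": 1.0}, "b": {"t2": 1.0}}

h_x = 1.0                                   # H(X) for two equally likely values
beta_star = h_x / mutual_information(p_xv)  # beta* = H(X) / I(X;V)
print(beta_star)
print(ib_objective(p_xv, one_cluster, beta=2.0))
print(ib_objective(p_xv, two_clusters, beta=2.0))
```

In this toy case, splitting the two values into separate clusters costs one bit of I(T;X) but gains one bit of I(T;V), so for any β above 1 the split has the lower objective.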

Heterogeneity Identification [DKOSV06]

[Figure: rate distortion curve, I(T;V)/I(X;V) vs I(T;X)/H(X), with the point β* marked]

Heterogeneity Identification [DKOSV06]

Heterogeneity = mutual information I(T;X) of iIB clustering T at β*

| X = Customer_Id | T = Cluster_Id | p(T\|X) | i(T;X) |
| --- | --- | --- | --- |
| 789-15-4321 | t1 | 0.75 | 0.41 |
| 987-65-4321 | t1 | 0.75 | 0.41 |
| 789-15-4321 | t2 | 0.25 | -0.81 |
| 987-65-4321 | t2 | 0.25 | -0.81 |
| (908)-555-1234 | t1 | 0.25 | -1.17 |
| 973-360-0000 | t1 | 0.5 | -0.17 |
| (908)-555-1234 | t2 | 0.75 | 0.77 |
| 973-360-0000 | t2 | 0.5 | 0.19 |

0 ≤ I(T;X) (= 0.126) ≤ H(X) (= 2.0), H(T) (= 1.0)

– Ideally use iIB with an arbitrarily large number of clusters in T
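The numbers in this example can be rechecked with a short script. It assumes p(X) is uniform over the four values, which is what H(X) = 2.0 implies. Computed without intermediate rounding, I(T;X) comes out at roughly 0.130 and H(T) at roughly 0.989. The slide's 0.126 and 1.0 appear to be the same quantities obtained from the two-decimal i(T;X) entries.

```python
from math import log2

# p(t|x) copied from the table; p(x) is assumed uniform (consistent with H(X) = 2.0).
p_t_given_x = {
    "789-15-4321":    {"t1": 0.75, "t2": 0.25},
    "987-65-4321":    {"t1": 0.75, "t2": 0.25},
    "(908)-555-1234": {"t1": 0.25, "t2": 0.75},
    "973-360-0000":   {"t1": 0.50, "t2": 0.50},
}
p_x = {x: 1 / len(p_t_given_x) for x in p_t_given_x}

# Marginal p(t) = sum_x p(x) * p(t|x)
p_t = {}
for x, row in p_t_given_x.items():
    for t, q in row.items():
        p_t[t] = p_t.get(t, 0.0) + p_x[x] * q

# I(T;X) = sum_x sum_t p(x) * p(t|x) * log2(p(t|x) / p(t))
i_tx = sum(p_x[x] * q * log2(q / p_t[t])
           for x, row in p_t_given_x.items()
           for t, q in row.items() if q > 0)
h_t = sum(p * log2(1 / p) for p in p_t.values())

print(round(i_tx, 3), round(h_t, 3))
```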


Data Integration: Summary

Analyzing database instance critical for effective data integration

– Matching and quality assessments are key components

Information theoretic measures useful for schema matching

– Align columns when column names, data values are opaque
– Mutual information I(X;V) captures correlations between X, V

Information theoretic measures useful for heterogeneity testing

– Identify columns with semantically heterogeneous values
– I(T;X) of iIB clustering T at β* captures column heterogeneity


Outline

Part 1

Introduction to Information Theory

Application: Data Anonymization

Application: Data Integration

Part 2


Review of Information Theory Basics

Application: Database Design

Computing Information Theoretic Primitives

Open Problems

Review of Information Theory Basics

Discrete distribution: probability p(X)

| X | Y | Z | p(X,Y,Z) |
| --- | --- | --- | --- |
| x1 | y1 | z1 | 0.125 |
| x1 | y2 | z2 | 0.125 |
| x1 | y1 | z2 | 0.125 |
| x1 | y2 | z1 | 0.125 |
| x2 | y3 | z3 | 0.125 |
| x2 | y3 | z4 | 0.125 |
| x3 | y3 | z5 | 0.125 |
| x4 | y3 | z6 | 0.125 |

| X | Y | p(X,Y) |
| --- | --- | --- |
| x1 | y1 | 0.25 |
| x1 | y2 | 0.25 |
| x2 | y3 | 0.25 |
| x3 | y3 | 0.125 |
| x4 | y3 | 0.125 |

| X | p(X) |
| --- | --- |
| x1 | 0.5 |
| x2 | 0.25 |
| x3 | 0.125 |
| x4 | 0.125 |

| Y | p(Y) |
| --- | --- |
| y1 | 0.25 |
| y2 | 0.25 |
| y3 | 0.5 |

p(X,Y) = ∑z p(X,Y,Z=z)


p(Y) = ∑x p(X=x,Y) = ∑x ∑z p(X=x,Y,Z=z)
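Marginalization is just a grouped sum over the joint table. The sketch below uses the p(X,Y,Z) values from the example; the helper name `marginalize` and the convention of selecting attributes by position are assumptions for illustration.

```python
from collections import defaultdict


def marginalize(joint, keep):
    """Sum out every attribute whose position is not listed in keep."""
    out = defaultdict(float)
    for outcome, p in joint.items():
        out[tuple(outcome[i] for i in keep)] += p
    return dict(out)


# Joint distribution p(X,Y,Z) from the example: eight equally likely tuples.
rows = [("x1", "y1", "z1"), ("x1", "y2", "z2"), ("x1", "y1", "z2"),
        ("x1", "y2", "z1"), ("x2", "y3", "z3"), ("x2", "y3", "z4"),
        ("x3", "y3", "z5"), ("x4", "y3", "z6")]
p_xyz = {row: 0.125 for row in rows}

p_xy = marginalize(p_xyz, keep=(0, 1))   # p(X,Y) = sum_z p(X,Y,Z=z)
p_y = marginalize(p_xyz, keep=(1,))      # p(Y) = sum_x sum_z p(X=x,Y,Z=z)
print(p_xy)
print(p_y)
```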


Review of Information Theory Basics

Discrete distribution: conditional probability p(X|Y)

| X | Y | p(X,Y) | p(X\|Y) | p(Y\|X) |
| --- | --- | --- | --- | --- |
| x1 | y1 | 0.25 | 1.0 | 0.5 |
| x1 | y2 | 0.25 | 1.0 | 0.5 |
| x2 | y3 | 0.25 | 0.5 | 1.0 |
| x3 | y3 | 0.125 | 0.25 | 1.0 |
| x4 | y3 | 0.125 | 0.25 | 1.0 |

| X | p(X) |
| --- | --- |
| x1 | 0.5 |
| x2 | 0.25 |
| x3 | 0.125 |
| x4 | 0.125 |

| Y | p(Y) |
| --- | --- |
| y1 | 0.25 |
| y2 | 0.25 |
| y3 | 0.5 |

p(X,Y) = p(X|Y)*p(Y) = p(Y|X)*p(X)

Review of Information Theory Basics

Discrete distribution: entropy H(X)

| X | Y | p(X,Y) | h(X,Y) |
| --- | --- | --- | --- |
| x1 | y1 | 0.25 | 2.0 |
| x1 | y2 | 0.25 | 2.0 |
| x2 | y3 | 0.25 | 2.0 |
| x3 | y3 | 0.125 | 3.0 |
| x4 | y3 | 0.125 | 3.0 |

| X | p(X) | h(X) |
| --- | --- | --- |
| x1 | 0.5 | 1.0 |
| x2 | 0.25 | 2.0 |
| x3 | 0.125 | 3.0 |
| x4 | 0.125 | 3.0 |

| Y | p(Y) | h(Y) |
| --- | --- | --- |
| y1 | 0.25 | 2.0 |
| y2 | 0.25 | 2.0 |
| y3 | 0.5 | 1.0 |

h(x) = log2(1/p(x))

– H(X) = ∑x p(x)*h(x) = 1.75
– H(Y) = ∑y p(y)*h(y) = 1.5 (≤ log2(|Y|) = 1.58)
– H(X,Y) = ∑x ∑y p(x,y)*h(x,y) = 2.25 (≤ log2(|X,Y|) = 2.32)
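The three entropies can be reproduced directly from the joint table. This is a minimal sketch; the function name `entropy` and the dictionary layout are choices made here for illustration.

```python
from math import log2


def entropy(dist):
    """H = sum_v p(v) * log2(1 / p(v)), in bits; zero-probability entries are skipped."""
    return sum(p * log2(1 / p) for p in dist.values() if p > 0)


# p(X,Y) from the example table.
p_xy = {("x1", "y1"): 0.25, ("x1", "y2"): 0.25, ("x2", "y3"): 0.25,
        ("x3", "y3"): 0.125, ("x4", "y3"): 0.125}

# Marginals obtained by summing out the other attribute.
p_x, p_y = {}, {}
for (x, y), p in p_xy.items():
    p_x[x] = p_x.get(x, 0.0) + p
    p_y[y] = p_y.get(y, 0.0) + p

print(entropy(p_x), entropy(p_y), entropy(p_xy))
print(log2(len(p_y)), log2(len(p_xy)))  # upper bounds log2(|Y|) and log2(|X,Y|)
```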

Review of Information Theory Basics

Discrete distribution: conditional entropy H(X|Y)

| X | Y | p(X,Y) | p(X\|Y) | h(X\|Y) |
| --- | --- | --- | --- | --- |
| x1 | y1 | 0.25 | 1.0 | 0.0 |
| x1 | y2 | 0.25 | 1.0 | 0.0 |
| x2 | y3 | 0.25 | 0.5 | 1.0 |
| x3 | y3 | 0.125 | 0.25 | 2.0 |
| x4 | y3 | 0.125 | 0.25 | 2.0 |

| X | p(X) | h(X) |
| --- | --- | --- |
| x1 | 0.5 | 1.0 |
| x2 | 0.25 | 2.0 |
| x3 | 0.125 | 3.0 |
| x4 | 0.125 | 3.0 |

| Y | p(Y) | h(Y) |
| --- | --- | --- |
| y1 | 0.25 | 2.0 |
| y2 | 0.25 | 2.0 |
| y3 | 0.5 | 1.0 |

h(x|y) = log2(1/p(x|y))

– H(X|Y) = ∑x ∑y p(x,y)*h(x|y) = 0.75
– H(X|Y) = H(X,Y) – H(Y) = 2.25 – 1.5

Review of Information Theory Basics

Discrete distribution: mutual information I(X;Y)

| X | Y | p(X,Y) | h(X,Y) | i(X;Y) |
| --- | --- | --- | --- | --- |
| x1 | y1 | 0.25 | 2.0 | 1.0 |
| x1 | y2 | 0.25 | 2.0 | 1.0 |
| x2 | y3 | 0.25 | 2.0 | 1.0 |
| x3 | y3 | 0.125 | 3.0 | 1.0 |
| x4 | y3 | 0.125 | 3.0 | 1.0 |

| X | p(X) | h(X) |
| --- | --- | --- |
| x1 | 0.5 | 1.0 |
| x2 | 0.25 | 2.0 |
| x3 | 0.125 | 3.0 |
| x4 | 0.125 | 3.0 |

| Y | p(Y) | h(Y) |
| --- | --- | --- |
| y1 | 0.25 | 2.0 |
| y2 | 0.25 | 2.0 |
| y3 | 0.5 | 1.0 |

i(x;y) = log2(p(x,y)/(p(x)*p(y)))

– I(X;Y) = ∑x ∑y p(x,y)*i(x;y) = 1.0
– I(X;Y) = H(X) + H(Y) – H(X,Y) = 1.75 + 1.5 – 2.25
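As a check on the pointwise values, the sketch below computes i(x;y) for every pair in the example and sums them into I(X;Y). The variable names are illustrative; the data is the p(X,Y) table used throughout this review.

```python
from math import log2

# p(X,Y) from the example table.
p_xy = {("x1", "y1"): 0.25, ("x1", "y2"): 0.25, ("x2", "y3"): 0.25,
        ("x3", "y3"): 0.125, ("x4", "y3"): 0.125}

p_x, p_y = {}, {}
for (x, y), p in p_xy.items():
    p_x[x] = p_x.get(x, 0.0) + p
    p_y[y] = p_y.get(y, 0.0) + p

# Pointwise mutual information: i(x;y) = log2(p(x,y) / (p(x) * p(y)))
pointwise = {(x, y): log2(p / (p_x[x] * p_y[y])) for (x, y), p in p_xy.items()}

# I(X;Y) is the expectation of i(x;y) under p(x,y).
mi = sum(p_xy[pair] * value for pair, value in pointwise.items())
print(pointwise)
print(mi)
```

Every pair in this instance has i(x;y) = 1.0, so the expectation is 1.0 as well, matching H(X) + H(Y) – H(X,Y).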


Outline

Part 1

Introduction to Information Theory

Application: Data Anonymization

Application: Data Integration

Part 2


Review of Information Theory Basics

Application: Database Design

Computing Information Theoretic Primitives

Open Problems

Information Dependencies [DR00]

Goal: use information theory to examine and reason about information content of the attributes in a relation instance

Key ideas:

– Novel InD measure between attribute sets X, Y based on H(Y|X)
– Identify numeric inequalities between InD measures

Results:

– InD measures are a broader class than FDs and MVDs
– Armstrong axioms for FDs derivable from InD inequalities
– MVD inference rules derivable from InD inequalities

Information Dependencies [DR00]

Functional dependency: X → Y

– FD X → Y holds iff ∀ t1, t2 ((t1[X] = t2[X]) ⇒ (t1[Y] = t2[Y]))

| X | Y | Z |
| --- | --- | --- |
| x1 | y1 | z1 |
| x1 | y2 | z2 |
| x1 | y1 | z2 |
| x1 | y2 | z1 |
| x2 | y3 | z3 |
| x2 | y3 | z4 |
| x3 | y3 | z5 |
| x4 | y3 | z6 |


Information Dependencies [DR00]

Result: FD X → Y holds iff H(Y|X) = 0

– Intuition: once X is known, no remaining uncertainty in Y

| X | Y | p(X,Y) | p(Y\|X) | h(Y\|X) |
| --- | --- | --- | --- | --- |
| x1 | y1 | 0.25 | 0.5 | 1.0 |
| x1 | y2 | 0.25 | 0.5 | 1.0 |
| x2 | y3 | 0.25 | 1.0 | 0.0 |
| x3 | y3 | 0.125 | 1.0 | 0.0 |
| x4 | y3 | 0.125 | 1.0 | 0.0 |

| X | p(X) |
| --- | --- |
| x1 | 0.5 |
| x2 | 0.25 |
| x3 | 0.125 |
| x4 | 0.125 |

| Y | p(Y) |
| --- | --- |
| y1 | 0.25 |
| y2 | 0.25 |
| y3 | 0.5 |

H(Y|X) = 0.5 (> 0, so X → Y does not hold in this instance)
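The entropy test for an FD is easy to try on the example relation. The sketch below treats the eight tuples as equally likely, as the p(X,Y) column does. The function names and the positional encoding of attributes (X = 0, Y = 1, Z = 2) are assumptions made here, not notation from [DR00].

```python
from collections import Counter
from math import log2

rows = [("x1", "y1", "z1"), ("x1", "y2", "z2"), ("x1", "y1", "z2"),
        ("x1", "y2", "z1"), ("x2", "y3", "z3"), ("x2", "y3", "z4"),
        ("x3", "y3", "z5"), ("x4", "y3", "z6")]


def conditional_entropy(rows, given, target):
    """H(target | given) in bits, with every tuple equally likely."""
    n = len(rows)
    count_g = Counter(tuple(r[i] for i in given) for r in rows)
    count_gt = Counter((tuple(r[i] for i in given), tuple(r[i] for i in target))
                       for r in rows)
    # H(T|G) = sum_{g,t} p(g,t) * log2(p(g) / p(g,t))
    return sum((c / n) * log2(count_g[g] / c) for (g, _t), c in count_gt.items())


def fd_holds(rows, given, target, tol=1e-12):
    """FD given -> target holds iff H(target | given) = 0."""
    return conditional_entropy(rows, given, target) <= tol


print(conditional_entropy(rows, (0,), (1,)))  # H(Y|X)
print(fd_holds(rows, (0,), (1,)))             # X -> Y
print(fd_holds(rows, (2,), (0,)))             # Z -> X
```

In this instance X → Y fails because x1 appears with both y1 and y2, while Z → X holds because every Z value occurs with a single X value.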


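Information Dependencies [DR00]

Multi-valued dependency: X →→ Y

– MVD X →→ Y holds iff R(X,Y,Z) = R(X,Y) ⋈ R(X,Z)

R(X,Y,Z):

| X | Y | Z |
| --- | --- | --- |
| x1 | y1 | z1 |
| x1 | y2 | z2 |
| x1 | y1 | z2 |
| x1 | y2 | z1 |
| x2 | y3 | z3 |
| x2 | y3 | z4 |
| x3 | y3 | z5 |
| x4 | y3 | z6 |

= R(X,Y) ⋈ R(X,Z), where R(X,Y) is:

| X | Y |
| --- | --- |
| x1 | y1 |
| x1 | y2 |
| x2 | y3 |
| x3 | y3 |
| x4 | y3 |

and R(X,Z) is:

| X | Z |
| --- | --- |
| x1 | z1 |
| x1 | z2 |
| x2 | z3 |
| x2 | z4 |
| x3 | z5 |
| x4 | z6 |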
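The join characterization can be tested mechanically: project the relation onto (X,Y) and (X,Z), join the projections on X, and compare with the original. The sketch below assumes tuples of the form (x, y, z); the function names are illustrative.

```python
def project(rows, idx):
    """Set-semantics projection onto the attribute positions in idx."""
    return {tuple(r[i] for i in idx) for r in rows}


def satisfies_mvd(rows):
    """Check X ->> Y on rows of the form (x, y, z) via R = R(X,Y) join R(X,Z)."""
    r_xy = project(rows, (0, 1))
    r_xz = project(rows, (0, 2))
    joined = {(x, y, z) for (x, y) in r_xy for (x2, z) in r_xz if x == x2}
    return joined == set(rows)


rows = [("x1", "y1", "z1"), ("x1", "y2", "z2"), ("x1", "y1", "z2"),
        ("x1", "y2", "z1"), ("x2", "y3", "z3"), ("x2", "y3", "z4"),
        ("x3", "y3", "z5"), ("x4", "y3", "z6")]

print(satisfies_mvd(rows))
# Dropping one of the four x1 tuples breaks the cross product for x1.
print(satisfies_mvd([r for r in rows if r != ("x1", "y2", "z1")]))
```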


Information Dependencies [DR00]

Result: MVD X →→ Y holds iff H(Y,Z|X) = H(Y|X) + H(Z|X)

– Intuition: once X known, uncertainties in Y and Z are independent

| X | Y | Z | h(Y,Z\|X) |
| --- | --- | --- | --- |
| x1 | y1 | z1 | 2.0 |
| x1 | y2 | z2 | 2.0 |
| x1 | y1 | z2 | 2.0 |
| x1 | y2 | z1 | 2.0 |
| x2 | y3 | z3 | 1.0 |
| x2 | y3 | z4 | 1.0 |
| x3 | y3 | z5 | 0.0 |
| x4 | y3 | z6 | 0.0 |

| X | Y | h(Y\|X) |
| --- | --- | --- |
| x1 | y1 | 1.0 |
| x1 | y2 | 1.0 |
| x2 | y3 | 0.0 |
| x3 | y3 | 0.0 |
| x4 | y3 | 0.0 |

| X | Z | h(Z\|X) |
| --- | --- | --- |
| x1 | z1 | 1.0 |
| x1 | z2 | 1.0 |
| x2 | z3 | 1.0 |
| x2 | z4 | 1.0 |
| x3 | z5 | 0.0 |
| x4 | z6 | 0.0 |

H(Y|X) = 0.5, H(Z|X) = 0.75, H(Y,Z|X) = 1.25
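The three conditional entropies in this example can be recomputed from the tuples, again treating each tuple as equally likely. The helper `cond_entropy` and the positional attribute encoding are assumptions for illustration.

```python
from collections import Counter
from math import log2

rows = [("x1", "y1", "z1"), ("x1", "y2", "z2"), ("x1", "y1", "z2"),
        ("x1", "y2", "z1"), ("x2", "y3", "z3"), ("x2", "y3", "z4"),
        ("x3", "y3", "z5"), ("x4", "y3", "z6")]


def cond_entropy(rows, given, target):
    """H(target | given) in bits; given and target are tuples of column positions."""
    n = len(rows)
    count_g = Counter(tuple(r[i] for i in given) for r in rows)
    count_gt = Counter(tuple(r[i] for i in given + target) for r in rows)
    return sum((c / n) * log2(count_g[key[:len(given)]] / c)
               for key, c in count_gt.items())


h_y = cond_entropy(rows, (0,), (1,))      # H(Y|X)
h_z = cond_entropy(rows, (0,), (2,))      # H(Z|X)
h_yz = cond_entropy(rows, (0,), (1, 2))   # H(Y,Z|X)
print(h_y, h_z, h_yz)
print(abs(h_yz - (h_y + h_z)) < 1e-12)    # entropy test for X ->> Y
```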

Information Dependencies [DR00]

Result: Armstrong axioms for FDs derivable from InD inequalities

– Reflexivity: If Y ⊆ X, then X → Y

H(Y|X) = 0 for Y ⊆ X

– Augmentation: X → Y ⇒ X,Z → Y,Z

0 ≤ H(Y,Z|X,Z) = H(Y|X,Z) ≤ H(Y|X) = 0

– Transitivity: X → Y & Y → Z ⇒ X → Z

0 ≥ H(Y|X) + H(Z|Y) ≥ H(Z|X) ≥ 0
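The middle inequality in the transitivity argument, H(Y|X) + H(Z|Y) ≥ H(Z|X), follows from standard entropy identities. One way to spell it out (a sketch, not necessarily the derivation used in [DR00]):

```latex
\begin{align*}
H(Z \mid X) &\le H(Y, Z \mid X)
  && \text{(adding a variable cannot decrease entropy)} \\
&= H(Y \mid X) + H(Z \mid X, Y)
  && \text{(chain rule)} \\
&\le H(Y \mid X) + H(Z \mid Y)
  && \text{(extra conditioning on $X$ cannot increase entropy)}
\end{align*}
```

With H(Y|X) = 0 and H(Z|Y) = 0 the right-hand side is 0, and since entropy is non-negative, H(Z|X) = 0, that is, X → Z.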

Database Normal Forms

Goal: eliminate update anomalies by good database design

– Need to know the integrity constraints on all database instances

Boyce-Codd normal form:

– Input: a set ∑ of functional dependencies
– For every (non-trivial) FD R.X → R.Y ∈ ∑+, R.X is a key of R

4NF:

– Input: a set ∑ of functional and multi-valued dependencies
– For every (non-trivial) MVD R.X →→ R.Y ∈ ∑+, R.X is a key of R


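Database Normal Forms

Functional dependency: X → Y

– Which design is better?

R(X,Y,Z):

| X | Y | Z |
| --- | --- | --- |
| x1 | y1 | z1 |
| x1 | y1 | z2 |
| x2 | y2 | z3 |
| x2 | y2 | z4 |
| x3 | y3 | z5 |
| x4 | y4 | z6 |

= R(X,Y) ⋈ R(X,Z), where R(X,Y) is:

| X | Y |
| --- | --- |
| x1 | y1 |
| x2 | y2 |
| x3 | y3 |
| x4 | y4 |

and R(X,Z) is:

| X | Z |
| --- | --- |
| x1 | z1 |
| x1 | z2 |
| x2 | z3 |
| x2 | z4 |
| x3 | z5 |
| x4 | z6 |

Decomposition is in BCNF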


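Database Normal Forms

Multi-valued dependency: X →→ Y

– Which design is better?

R(X,Y,Z):

| X | Y | Z |
| --- | --- | --- |
| x1 | y1 | z1 |
| x1 | y2 | z2 |
| x1 | y1 | z2 |
| x1 | y2 | z1 |
| x2 | y3 | z3 |
| x2 | y3 | z4 |
| x3 | y3 | z5 |
| x4 | y3 | z6 |

= R(X,Y) ⋈ R(X,Z), where R(X,Y) is:

| X | Y |
| --- | --- |
| x1 | y1 |
| x1 | y2 |
| x2 | y3 |
| x3 | y3 |
| x4 | y3 |

and R(X,Z) is:

| X | Z |
| --- | --- |
| x1 | z1 |
| x1 | z2 |
| x2 | z3 |
| x2 | z4 |
| x3 | z5 |
| x4 | z6 |

Decomposition is in 4NF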

Well-Designed Databases [AL03]

Goal: use information theory to characterize “goodness” of a database design and reason about normalization algorithms

Key idea:

– Information content measure of cell in a DB instance w.r.t. ICs
– Redundancy reduces information content measure of cells

Results:

– Well-designed DB ⇔ each cell has information content > 0
– Normalization algorithms never decrease information content

Well-Designed Databases [AL03]

Information content of cell c in database D satisfying FD X → Y

– Uniform distribution p(V) on values for c consistent with D\c and FD
– Information content of cell c is entropy H(V)

| X | Y | Z |
| --- | --- | --- |
| x1 | y1 | z1 |
| x1 | y1 | z2 |
| x2 | y2 | z3 |
| x2 | y2 | z4 |
| x3 | y3 | z5 |
| x4 | y4 | z6 |

| V62 | p(V62) | h(V62) |
| --- | --- | --- |
| y1 | 0.25 | 2.0 |
| y2 | 0.25 | 2.0 |
| y3 | 0.25 | 2.0 |
| y4 | 0.25 | 2.0 |

H(V62) = 2.0

Well-Designed Databases [AL03]

Information content of cell c in database D satisfying FD X → Y

– Uniform distribution p(V) on values for c consistent with D\c and FD
– Information content of cell c is entropy H(V)

| X | Y | Z |
| --- | --- | --- |
| x1 | y1 | z1 |
| x1 | y1 | z2 |
| x2 | y2 | z3 |
| x2 | y2 | z4 |
| x3 | y3 | z5 |
| x4 | y4 | z6 |

| V22 | p(V22) | h(V22) |
| --- | --- | --- |
| y1 | 1.0 | 0.0 |
| y2 | 0.0 | |
| y3 | 0.0 | |
| y4 | 0.0 | |

H(V22) = 0.0

Well-Designed Databases [AL03]

Information content of cell c in database D satisfying FD X → Y

– Information content of cell c is entropy H(V)

| X | Y | Z | c | H(V) |
| --- | --- | --- | --- | --- |
| x1 | y1 | z1 | c12 | 0.0 |
| x1 | y1 | z2 | c22 | 0.0 |
| x2 | y2 | z3 | c32 | 0.0 |
| x2 | y2 | z4 | c42 | 0.0 |
| x3 | y3 | z5 | c52 | 2.0 |
| x4 | y4 | z6 | c62 | 2.0 |

Schema S is in BCNF iff ∀ D ∈ S, H(V) > 0, for all cells c in D

– Technicalities w.r.t. size of active domain
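The per-cell numbers in this example can be reproduced with a deliberately simplified sketch. It only handles the Y column under the single FD X → Y, and it takes the four Y values that appear in the instance as the candidate domain. The function name and these restrictions are assumptions made here; the measure in [AL03] is defined more generally, and the slide itself flags technicalities around the size of the active domain.

```python
from math import log2

rows = [("x1", "y1", "z1"), ("x1", "y1", "z2"), ("x2", "y2", "z3"),
        ("x2", "y2", "z4"), ("x3", "y3", "z5"), ("x4", "y4", "z6")]
domain = ["y1", "y2", "y3", "y4"]   # assumed candidate values for a Y cell


def cell_information(rows, row, domain):
    """Entropy (bits) of a uniform choice among the Y-values that keep
    FD X -> Y satisfied when only the Y-cell of rows[row] is varied."""
    x = rows[row][0]
    # Y-values already attached to the same X-value in the other rows.
    forced = {y for i, (xi, y, _z) in enumerate(rows) if i != row and xi == x}
    consistent = [v for v in domain if not forced or forced == {v}]
    if not consistent:
        raise ValueError("no value for this cell satisfies the FD")
    return log2(len(consistent))


info = [cell_information(rows, i, domain) for i in range(len(rows))]
print(info)
```

Cells whose X value is repeated elsewhere are fully determined by the FD and carry 0 bits. The Y cells of the x3 and x4 rows are unconstrained and carry log2(4) = 2 bits.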

Well-Designed Databases [AL03]

Information content of cell c in database D satisfying FD X → Y

– Information content of cell c is entropy H(V)

| X | Y |
| --- | --- |
| x1 | y1 |
| x2 | y2 |
| x3 | y3 |
| x4 | y4 |

| X | Z |
| --- | --- |
| x1 | z1 |
| x1 | z2 |
| x2 | z3 |
| x2 | z4 |
| x3 | z5 |
| x4 | z6 |

| V12 | p(V12) | h(V12) |
| --- | --- | --- |
| y1 | 0.25 | 2.0 |
| y2 | 0.25 | 2.0 |
| y3 | 0.25 | 2.0 |
| y4 | 0.25 | 2.0 |

| V42 | p(V42) | h(V42) |
| --- | --- | --- |
| y1 | 0.25 | 2.0 |
| y2 | 0.25 | 2.0 |
| y3 | 0.25 | 2.0 |
| y4 | 0.25 | 2.0 |

H(V12) = 2.0, H(V42) = 2.0

Well-Designed Databases [AL03]

Information content of cell c in database D satisfying FD X → Y

– Information content of cell c is entropy H(V)

| X | Y | c | H(V) |
| --- | --- | --- | --- |
| x1 | y1 | c12 | 2.0 |
| x2 | y2 | c22 | 2.0 |
| x3 | y3 | c32 | 2.0 |
| x4 | y4 | c42 | 2.0 |

| X | Z |
| --- | --- |
| x1 | z1 |
| x1 | z2 |
| x2 | z3 |
| x2 | z4 |
| x3 | z5 |
| x4 | z6 |

Schema S is in BCNF iff ∀ D ∈ S, H(V) > 0, for all cells c in D

Well-Designed Databases [AL03]

Information content of cell c in DB D satisfying MVD X →→ Y

– Information content of cell c is entropy H(V)

| X | Y | Z |
| --- | --- | --- |
| x1 | y1 | z1 |
| x1 | y2 | z2 |
| x1 | y1 | z2 |
| x1 | y2 | z1 |
| x2 | y3 | z3 |
| x2 | y3 | z4 |
| x3 | y3 | z5 |
| x4 | y3 | z6 |

| V52 | p(V52) | h(V52) |
| --- | --- | --- |
| y3 | 1.0 | 0.0 |

| V53 | p(V53) | h(V53) |
| --- | --- | --- |
| z1 | 0.2 | 2.32 |
| z2 | 0.2 | 2.32 |
| z3 | 0.2 | 2.32 |
| z4 | 0.0 | |
| z5 | 0.2 | 2.32 |
| z6 | 0.2 | 2.32 |

H(V52) = 0.0, H(V53) = 2.32

Well-Designed Databases [AL03]

Information content of cell c in DB D satisfying MVD X →→ Y

– Information content of cell c is entropy H(V)

| X | Y | Z | c | H(V) | c | H(V) |
| --- | --- | --- | --- | --- | --- | --- |
| x1 | y1 | z1 | c12 | 0.0 | c13 | 0.0 |
| x1 | y2 | z2 | c22 | 0.0 | c23 | 0.0 |
| x1 | y1 | z2 | c32 | 0.0 | c33 | 0.0 |
| x1 | y2 | z1 | c42 | 0.0 | c43 | 0.0 |
| x2 | y3 | z3 | c52 | 0.0 | c53 | 2.32 |
| x2 | y3 | z4 | c62 | 0.0 | c63 | 2.32 |
| x3 | y3 | z5 | c72 | 1.58 | c73 | 2.58 |
| x4 | y3 | z6 | c82 | 1.58 | c83 | 2.58 |

Schema S is in 4NF iff ∀ D ∈ S, H(V) > 0, for all cells c in D

Well-Designed Databases [AL03]

Information content of cell c in DB D satisfying MVD X →→ Y

– Information content of cell c is entropy H(V)

| X | Y |
| --- | --- |
| x1 | y1 |
| x1 | y2 |
| x2 | y3 |
| x3 | y3 |
| x4 | y3 |

| X | Z |
| --- | --- |
| x1 | z1 |
| x1 | z2 |
| x2 | z3 |
| x2 | z4 |
| x3 | z5 |
| x4 | z6 |

| V32 | p(V32) | h(V32) |
| --- | --- | --- |
| y1 | 0.33 | 1.58 |
| y2 | 0.33 | 1.58 |
| y3 | 0.33 | 1.58 |

| V34 | p(V34) | h(V34) |
| --- | --- | --- |
| z1 | 0.2 | 2.32 |
| z2 | 0.2 | 2.32 |
| z3 | 0.2 | 2.32 |
| z4 | 0.0 | |
| z5 | 0.2 | 2.32 |
| z6 | 0.2 | 2.32 |

H(V32) = 1.58, H(V34) = 2.32

Well-Designed Databases [AL03]

Information content of cell c in DB D satisfying MVD X →→ Y

– Information content of cell c is entropy H(V)

| X | Y | c | H(V) |
| --- | --- | --- | --- |
| x1 | y1 | c12 | 1.0 |
| x1 | y2 | c22 | 1.0 |
| x2 | y3 | c32 | 1.58 |
| x3 | y3 | c42 | 1.58 |
| x4 | y3 | c52 | 1.58 |

| X | Z | c | H(V) |
| --- | --- | --- | --- |
| x1 | z1 | c14 | 2.32 |
| x1 | z2 | c24 | 2.32 |
| x2 | z3 | c34 | 2.32 |
| x2 | z4 | c44 | 2.32 |
| x3 | z5 | c54 | 2.58 |
| x4 | z6 | c64 | 2.58 |

Schema S is in 4NF iff ∀ D ∈ S, H(V) > 0, for all cells c in D

Well-Designed Databases [AL03]

Normalization algorithms never decrease information content

– Information content of cell c is entropy H(V)

| X | Y | Z | c | H(V) |
| --- | --- | --- | --- | --- |
| x1 | y1 | z1 | c13 | 0.0 |
| x1 | y2 | z2 | c23 | 0.0 |
| x1 | y1 | z2 | c33 | 0.0 |
| x1 | y2 | z1 | c43 | 0.0 |
| x2 | y3 | z3 | c53 | 2.32 |
| x2 | y3 | z4 | c63 | 2.32 |
| x3 | y3 | z5 | c73 | 2.58 |
| x4 | y3 | z6 | c83 | 2.58 |


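Well-Designed Databases [AL03]

Normalization algorithms never decrease information content

– Information content of cell c is entropy H(V)

R(X,Y,Z):

| X | Y | Z | c | H(V) |
| --- | --- | --- | --- | --- |
| x1 | y1 | z1 | c13 | 0.0 |
| x1 | y2 | z2 | c23 | 0.0 |
| x1 | y1 | z2 | c33 | 0.0 |
| x1 | y2 | z1 | c43 | 0.0 |
| x2 | y3 | z3 | c53 | 2.32 |
| x2 | y3 | z4 | c63 | 2.32 |
| x3 | y3 | z5 | c73 | 2.58 |
| x4 | y3 | z6 | c83 | 2.58 |

= R(X,Y) ⋈ R(X,Z), where R(X,Y) is:

| X | Y |
| --- | --- |
| x1 | y1 |
| x1 | y2 |
| x2 | y3 |
| x3 | y3 |
| x4 | y3 |

and R(X,Z) is:

| X | Z | c | H(V) |
| --- | --- | --- | --- |
| x1 | z1 | c14 | 2.32 |
| x1 | z2 | c24 | 2.32 |
| x2 | z3 | c34 | 2.32 |
| x2 | z4 | c44 | 2.32 |
| x3 | z5 | c54 | 2.58 |
| x4 | z6 | c64 | 2.58 |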

Database Design: Summary

Good database design essential for preserving data integrity

Information theoretic measures useful for integrity constraints

– FD X → Y holds iff InD measure H(Y|X) = 0
– MVD X →→ Y holds iff H(Y,Z|X) = H(Y|X) + H(Z|X)
– Information theory to model correlations in specific database

Information theoretic measures useful for normal forms

– Schema S is in BCNF/4NF iff ∀ D ∈ S, H(V) > 0, for all cells c in D
– Information theory to model distributions over possible databases


Outline

Part 1

Introduction to Information Theory

Application: Data Anonymization

Application: Data Integration

Part 2


Review of Information Theory Basics

Application: Database Design

Computing Information Theoretic Primitives

Open Problems


Domain size matters

For random variable X, domain size = |supp(X)|, where supp(X) = {xi | p(X = xi) > 0}

Different solutions exist depending on whether domain size is “small” or “large”

Probability vectors usually very sparse

Entropy: Case I - Small domain size

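Suppose the number of unique values of a random variable X is small (i.e., the counts fit in memory)

Maximum likelihood estimator:

– p(x) = (number of times x is encountered) / (total number of items in the set)

[Figure: example items 1, 2, 2, 1, 1, 5, 4 and their histogram over the values 1 to 5]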

Entropy: Case I - Small domain size

H_MLE = ∑x p(x) log(1/p(x))

This is a biased estimate:

– E[H_MLE] < H

Miller-Madow correction:

– H’ = H_MLE + (m’ – 1)/(2n)

m’ is an estimate of number of non-empty bins

n = number of samples

Bad news: ALL estimators for H are biased.

Good news: we can quantify bias and variance of MLE:

– |Bias| ≤ log(1 + m/n)
– Var(H_MLE) ≤ (log n)²/n
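A minimal sketch of the plug-in estimate and the Miller-Madow correction follows. It works in nats, since the correction term (m’ – 1)/(2n) is stated for natural logarithms; divide by ln 2 to get bits. Using the number of observed distinct values as m’ is one simple choice. The function names and the sample list are illustrative assumptions.

```python
from collections import Counter
from math import log


def entropy_mle(samples):
    """Plug-in (maximum likelihood) entropy estimate, in nats."""
    n = len(samples)
    return sum((c / n) * log(n / c) for c in Counter(samples).values())


def entropy_miller_madow(samples):
    """Plug-in estimate plus the Miller-Madow bias correction (m' - 1) / (2n)."""
    n = len(samples)
    m = len(set(samples))   # observed non-empty bins, used as m'
    return entropy_mle(samples) + (m - 1) / (2 * n)


samples = [1, 2, 2, 1, 1, 5, 4]   # hypothetical small sample
print(entropy_mle(samples))
print(entropy_miller_madow(samples))
```

The correction is always non-negative, which matches the direction of the bias: the plug-in estimate underestimates H on average.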

Entropy: Case II - Large domain size

|X| is too large to fit in main memory, so we can’t maintain explicit counts.

Streaming algorithms for H(X):

– Long history of work on this problem
– Bottom line: (1+ε)-relative approximation for H(X) that allows for updates to frequencies, and requires “almost constant” (and optimal) space [HNO08].

Streaming Entropy [CCM07]

High level idea: sample randomly from the stream, and track counts of elements picked [AMS]

PROBLEM: skewed distribution prevents us from sampling lower-frequency elements (and entropy is small)

Idea: estimate largest frequency, and distribution of what’s left (higher entropy)

Streaming Entropy [CCM07]

Maintain set of samples from original distribution and distribution without most frequent element.

In parallel, maintain estimator for frequency of most frequent element

– normally this is hard
– but if frequency is very large, then simple estimator exists [MG81] (Google interview puzzle!)

At the end, compute function of these two estimates

Memory usage: roughly (1/ε²) log(1/ε) (ε is the error)
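The "simple estimator" for a very frequent element is sketched below on the assumption that [MG81] refers to the Misra-Gries frequent-items algorithm; the slide does not name it. With k – 1 counters, every item that occurs more than n/k times in a stream of length n is guaranteed to survive, and k = 2 gives the classic majority-element puzzle. The function name and the example stream are illustrative.

```python
def misra_gries(stream, k):
    """Return candidate heavy hitters using at most k - 1 counters.

    Any item occurring more than len(stream) / k times is among the keys;
    the stored counts are lower bounds on the true frequencies.
    """
    counters = {}
    for item in stream:
        if item in counters:
            counters[item] += 1
        elif len(counters) < k - 1:
            counters[item] = 1
        else:
            # No free counter: decrement every counter and drop the zeros.
            for key in list(counters):
                counters[key] -= 1
                if counters[key] == 0:
                    del counters[key]
    return counters


stream = [1, 2, 1, 3, 1, 1, 4, 1]   # hypothetical stream; item 1 is a majority
print(misra_gries(stream, k=2))
```

A second pass over the stream (or a separate exact counter for the surviving candidate) is needed if the true frequency, rather than a lower bound, is required.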

Entropy and MI are related

I(X;Y) = H(X) + H(Y) – H(X,Y)

Suppose we can c-approximate H(X) for any c > 0:

Find H’(X) s.t. |H(X) – H’(X)| ≤ c