Parallel and Distributed Simulation (PADS, DIS, and the HLA)


tutorial:

Parallel & Distributed Simulation Systems:

From Chandy/Misra to the High Level

Architecture and Beyond

Richard M. Fujimoto

College of Computing

Georgia Institute of Technology

Atlanta, GA 30332-0280

fujimoto@cc.gatech.edu

References

R. Fujimoto, Parallel and Distributed Simulation Systems, Wiley

Interscience, 2000.

(see also http://www.cc.gatech.edu/classes/AY2000/cs4230_spring)

HLA:

F. Kuhl, R. Weatherly, J. Dahmann, Creating Computer Simulation

Systems: An Introduction to the High Level Architecture for

Simulation, Prentice Hall, 1999.

(http://hla.dmso.mil)

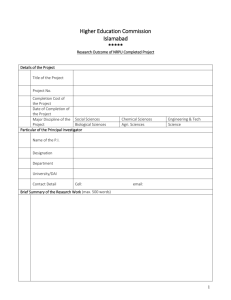

Outline

Part I:

Introduction

Part II:

Time Management

Part III:

Distributed Virtual Environments

Parallel and Distributed Simulation

Parallel simulation involves the

execution of a single simulation

program on a collection of tightly

coupled processors (e.g., a shared

memory multiprocessor).

[Figure: a simulation model mapped onto a parallel processor made up of processors (P) and memories (M)]

Replicated trials involve the

execution of several, independent

simulations concurrently on different

processors

Distributed simulation involves

the execution of a single simulation

program on a collection of loosely

coupled processors (e.g., PCs

interconnected by a LAN or WAN).

Reasons to Use Parallel / Distributed Simulation

Enable the execution of time consuming simulations that could

not otherwise be performed (e.g., simulation of the Internet)

• Reduce model execution time (proportional to # processors)

• Ability to run larger models (more memory)

Enable simulation to be used as a forecasting tool in time

critical decision making processes (e.g., air traffic control)

• Initialize simulation to current system state

• Faster than real time execution for what-if experimentation

• Simulation results may be needed in seconds

Create distributed virtual environments, possibly including

users at distant geographical locations (e.g., training,

entertainment)

• Real-time execution capability

• Scalable performance for many users & simulated entities

Geographically Distributed Users/Resources

Geographically distributed users and/or resources are sometimes needed

• Interactive games over the Internet

• Specialized hardware or databases

Server architecture

• Cluster of workstations on LAN (LAN interconnect)

Distributed architecture

• Distributed computers (WAN interconnect)

Stand-Alone vs. Federated Simulation Systems

Stand-Alone Simulation System

• Parallel simulation environment (Process 1, Process 2, Process 3, Process 4)

• Homogeneous programming environment

– simulation language

Federated Simulation Systems

• Simulator 1, Simulator 2, Simulator 3 connected by the Run Time Infrastructure-RTI (simulation backplane)

– federation set up & tear down

– synchronization, message ordering

– data distribution

• Interconnect autonomous, heterogeneous simulators

– interface to RTI software

Principal Application Domains

Parallel Discrete Event

Simulation (PDES)

• Discrete event simulation

to analyze systems

• Fast model execution (as-fast-as-possible)

• Produce same results as a

sequential execution

• Typical applications

– Telecommunication networks

– Computer systems

– Transportation systems

– Military strategy and tactics

Distributed Virtual

Environments (DVEs)

• Networked interactive,

immersive environments

• Scalable, real-time

performance

• Create virtual worlds that

appear realistic

• Typical applications

– Training

– Entertainment

– Social interaction

Historical Perspective

[Figure: timeline from 1975 to 2000 (ticks at 1975, 1980, 1985, 1990, 1995, 2000) with three communities]

High Performance Computing Community

• Chandy/Misra/Bryant algorithm

• Time Warp algorithm

• early experimental data

• second generation algorithms

• making it fast and easy to use

Defense Community

• SIMulator NETworking (SIMNET) (1983-1990)

• Distributed Interactive Simulation (DIS), Aggregate Level Simulation Protocol (ALSP) (1990 - 1997ish)

• High Level Architecture (1996 - today)

Internet & Gaming Community

• Dungeons and Dragons, Board Games

• Adventure (Xerox PARC)

• Multi-User Dungeon (MUD) Games

• Multi-User Video Games

Part II:

Time Management

Parallel discrete event simulation

Conservative synchronization

Optimistic synchronization

Time Management in the High Level Architecture

Time

• physical system: the actual or imagined system being modeled

• simulation: a system that emulates the behavior of a physical system

main()

{ ...

double clock;

...

}

physical system

simulation

• physical time: time in the physical system

– Noon, December 31, 1999 to noon January 1, 2000

• simulation time: representation of physical time within the simulation

– floating point values in interval [0.0, 24.0]

• wallclock time: time during the execution of the simulation, usually

output from a hardware clock (e.g., GPS)

– 9:00 to 9:15 AM on September 10, 1999

Paced vs. Unpaced Execution

Modes of execution

• As-fast-as-possible execution (unpaced): no fixed

relationship necessarily exists between advances in

simulation time and advances in wallclock time

• Real-time execution (paced): each advance in simulation

time is paced to occur in synchrony with an equivalent

advance in wallclock time

• Scaled real-time execution (paced): each advance in

simulation time is paced to occur in synchrony with S times an

equivalent advance in wallclock time, for a scale factor S

(e.g., S = 2 for 2x wallclock time)

Here, focus on as-fast-as-possible; execution can be paced

to run in real-time (or scaled real-time) by inserting delays
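
The pacing idea can be sketched as follows. This is a minimal illustration, not part of the tutorial: the function names and the convention that a scale factor S means S units of simulation time per unit of wallclock time are assumptions.

```python
import time


def wallclock_deadline(sim_time, sim_start, wall_start, scale=1.0):
    """Wallclock instant at which simulation time `sim_time` should be reached.

    Assumption: `scale` units of simulation time elapse per unit of wallclock
    time (scale=1.0 is real-time, scale=2.0 is twice as fast as real-time).
    """
    return wall_start + (sim_time - sim_start) / scale


def pace(sim_time, sim_start, wall_start, scale=1.0,
         now=time.monotonic, sleep=time.sleep):
    """Insert a delay so the advance to `sim_time` does not run ahead of wallclock.

    `now` and `sleep` are injectable so the function can be exercised without
    actually waiting. Returns the delay that was inserted (0.0 if none).
    """
    delay = wallclock_deadline(sim_time, sim_start, wall_start, scale) - now()
    if delay > 0:
        sleep(delay)
        return delay
    return 0.0
```

An as-fast-as-possible executive would simply never call `pace`; a paced one would call it before processing each event.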

Discrete Event Simulation Fundamentals

Discrete event simulation: computer model for a system where changes in

the state of the system occur at discrete points in simulation time.

Fundamental concepts:

• system state (state variables)

• state transitions (events)

• simulation time: totally ordered set of values representing time in the

system being modeled (physical system)

• simulator maintains a simulation time clock

A discrete event simulation computation can be viewed as a sequence of

event computations

Each event computation contains a (simulation time) time stamp indicating

when that event occurs in the physical system.

Each event computation may:

(1) modify state variables, and/or

(2) schedule new events into the simulated future.

A Simple DES Example

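[Figure: hosts H0 and H1 exchanging a packet and an acknowledgment]

Successive snapshots of the event list (Time, Event):

1. (0, H0 Send Pkt), (100, Done)

2. (1, H1 Recv Pkt), (6, H0 Retrans), (100, Done)

3. (1, H1 Send Ack), (6, H0 Retrans), (100, Done)

4. (2, H0 Recv Ack), (6, H0 Retrans), (100, Done)

5. (2, H0 Send Pkt), (100, Done)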

Simulator maintains event list

Events processed in simulation time order

Processing events may generate new events

Complete when event list is empty (or some other termination condition)
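
The event-list loop described above can be sketched in a few lines. The handler names and delays below are hypothetical, loosely modeled on the packet/acknowledgment example, and the retransmission timer is left out to keep the sketch short.

```python
import heapq
import itertools


def run(initial_events, handlers, end_time=float("inf")):
    """Sequential discrete event simulation loop.

    initial_events: iterable of (time_stamp, event_name)
    handlers: dict mapping event_name -> function(clock, schedule)
    Returns the list of (time_stamp, event_name) in processing order.
    """
    tie_breaker = itertools.count()   # keeps equal time stamps in FIFO order
    event_list = []                   # priority queue ordered by time stamp

    def schedule(ts, name):
        heapq.heappush(event_list, (ts, next(tie_breaker), name))

    for ts, name in initial_events:
        schedule(ts, name)

    trace = []
    while event_list:                 # complete when the event list is empty
        ts, _, name = heapq.heappop(event_list)
        if ts > end_time:             # or some other termination condition
            break
        clock = ts                    # advance the simulation time clock
        trace.append((ts, name))
        handler = handlers.get(name)
        if handler is not None:
            handler(clock, schedule)  # may schedule events in the simulated future
    return trace


# Hypothetical handlers: one unit of delay per hop, no retransmission timer.
HANDLERS = {
    "H0 Send Pkt": lambda now, schedule: schedule(now + 1, "H1 Recv Pkt"),
    "H1 Recv Pkt": lambda now, schedule: schedule(now, "H1 Send Ack"),
    "H1 Send Ack": lambda now, schedule: schedule(now + 1, "H0 Recv Ack"),
}

TRACE = run([(0, "H0 Send Pkt"), (100, "Done")], HANDLERS)
```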

Parallel Discrete Event Simulation

A parallel discrete event simulation program can be viewed as

a collection of sequential discrete event simulation programs

executing on different processors that communicate by

sending time stamped messages to each other

“Sending a message” is synonymous with “scheduling an

event”

Parallel Discrete Event Simulation Example

[Figure: a physical system of interacting physical processes and the corresponding simulation, in which each physical process is modeled by a logical process: LP (Subnet 1), LP (Subnet 2), LP (Subnet 3). Interactions among physical processes become time stamped events (messages), e.g., Recv Pkt @15]

all interactions between LPs must be via messages (no shared state)

The “Rub”

Golden rule for each logical process:

“Thou shalt process incoming messages in time

stamp order!!!” (local causality constraint)

Parallel Discrete Event Simulation Example

[Figure: the same physical system and simulation with LP (Subnet 1), LP (Subnet 2), LP (Subnet 3); a time stamped event (message) Recv Pkt @15 is pending at an LP. Safe to process???]

all interactions between LPs must be via messages (no shared state)

The Synchronization Problem

Local causality constraint: Events within each logical process must be

processed in time stamp order

Observation: Adherence to the local causality constraint is sufficient to

ensure that the parallel simulation will produce exactly the same

results as the corresponding sequential simulation*

Synchronization (Time Management) Algorithms

• Conservative synchronization: avoid violating the local causality

constraint (wait until it’s safe)

– 1st generation: null messages (Chandy/Misra/Bryant)

– 2nd generation: time stamp of next event

• Optimistic synchronization: allow violations of local causality to occur,

but detect them at runtime and recover using a rollback mechanism

– Time Warp (Jefferson)

– approaches limiting amount of optimistic execution

* provided events with the same time stamp are processed in the same order

as in the sequential execution

Part II: Time Management

Conservative synchronization

Chandy/Misra/Bryant “Null Message” Algorithm

Assumptions

• logical processes (LPs) exchanging time stamped events (messages)

• static network topology, no dynamic creation of LPs

• messages sent on each link are sent in time stamp order

• network provides reliable delivery, preserves order

Observation: The above assumptions imply the time stamp of the last

message received on a link is a lower bound on the time stamp

(LBTS) of subsequent messages received on that link

[Figure: logical process H3 with one FIFO queue per incoming link; the queue from H1 holds time stamps 9, 8, 2 and the queue from H2 holds time stamps 5, 4]

Goal: Ensure LP processes events in time stamp order

A Simple Conservative Algorithm

Algorithm A (executed by each LP):

Goal: Ensure events are processed in time stamp order:

WHILE (simulation is not over)

wait until each FIFO contains at least one message

remove smallest time stamped event from its FIFO

process that event

END-LOOP

[Figure: logical process H3 with a FIFO queue from H1 holding time stamps 9, 8, 2 and a FIFO queue from H2 holding time stamps 5, 4]

• process time stamp 2 event

• process time stamp 4 event

• process time stamp 5 event

• wait until a message is received from H2

Observation: Algorithm A is prone to deadlock!
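
A minimal sketch of Algorithm A for one LP, using the queue contents from the example above. In this sketch "waiting" is modeled by returning the list of empty links, since no new messages arrive; the function name and return shape are assumptions made for illustration.

```python
from collections import deque


def algorithm_a(fifos):
    """Process events from per-link FIFO queues in time stamp order.

    fifos: dict mapping incoming link name -> deque of time stamps, each
    queue already in time stamp order (oldest message at the left).
    Returns (processed_time_stamps, links_the_lp_is_waiting_on).
    """
    processed = []
    while True:
        empty = [link for link, queue in fifos.items() if not queue]
        if empty:
            # Cannot safely proceed: a smaller time stamp could still arrive
            # on an empty link, so the LP must wait (this is where deadlock
            # can arise if every LP in a cycle is waiting).
            return processed, empty
        # Every FIFO has a message: the smallest head is safe to process.
        link = min(fifos, key=lambda name: fifos[name][0])
        processed.append(fifos[link].popleft())


PROCESSED, WAITING_ON = algorithm_a({
    "H1": deque([2, 8, 9]),
    "H2": deque([4, 5]),
})
```

Here `PROCESSED` is `[2, 4, 5]` and `WAITING_ON` is `["H2"]`, matching the trace on the slide.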

Deadlock Example

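[Figure: three LPs in a cycle. H1 (waiting on H2) holds a message with time stamp 7; H2 (waiting on H3) holds messages with time stamps 15 and 10; H3 (waiting on H1) holds messages with time stamps 9 and 8]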

A cycle of LPs forms where each is waiting on the next LP in the cycle.

No LP can advance; the simulation is deadlocked.

Deadlock Avoidance Using Null Messages

Break deadlock by having each LP send “null” messages indicating a

lower bound on the time stamp of future messages it could send.

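[Figure: the same cycle of LPs. H1 (waiting on H2) holds a message with time stamp 7; H2 (waiting on H3) holds messages with time stamps 15 and 10; H3 (waiting on H1) holds messages with time stamps 9 and 8. Null messages with time stamps 8 and 11 are shown on the links]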

Assume minimum delay between hosts is 3 units of simulation time

• H3 initially at time 5

• H3 sends null message to H2 with time stamp 8

• H2 sends null message to H1 with time stamp 11

• H1 may now process message with time stamp 7

Deadlock Avoidance Using Null Messages

Null Message Algorithm (executed by each LP):

Goal: Ensure events are processed in time stamp order and avoid

deadlock

WHILE (simulation is not over)

wait until each FIFO contains at least one message

remove smallest time stamped event from its FIFO

process that event

send null messages to neighboring LPs with time stamp indicating a

lower bound on future messages sent to that LP (current time plus

lookahead)

END-LOOP

The null message algorithm relies on a “lookahead” (minimum delay).

Lookahead Creep

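[Figure: the same cycle of LPs (H1 waiting on H2, H2 waiting on H3, H3 waiting on H1) with lookahead 0.5; null messages with time stamps 5.5, 6.0, 6.5, 7.0, and 7.5 circulate around the cycle before H1 can process its time stamp 7 message]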

Assume minimum delay between hosts is 0.5 units of time

• H3 initially at time 5

• H3 sends null message, time stamp 5.5;

H2 sends null message, time stamp 6.0

• H1 sends null message, time stamp 6.5;

H3 sends null message, time stamp 7.0

• H2 sends null message, time stamp 7.5; H1 can process time stamp 7 message

Five null messages to process a single event

If lookahead is small, there may be many null messages!
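
Lookahead creep can be reproduced with a small sketch that circulates null messages around a cycle of blocked LPs. The function name, the ring representation, and the rule that an event is safe once a null message with time stamp at least the event's time stamp reaches the waiting LP are simplifying assumptions for illustration.

```python
def null_messages_until_safe(ring, start_lp, start_time, lookahead,
                             waiting_lp, event_time):
    """List the null messages sent before `waiting_lp` can process its event.

    ring: LP names in the direction null messages travel around the cycle.
    Each LP that receives a null message advances to its time stamp and
    forwards a null message stamped (its time + lookahead).
    Returns a list of (sender, receiver, time_stamp).
    """
    if lookahead <= 0:
        raise ValueError("null messages cannot advance time with zero lookahead")
    sent = []
    index = ring.index(start_lp)
    now = start_time
    while True:
        sender = ring[index]
        receiver = ring[(index + 1) % len(ring)]
        now = now + lookahead              # lower bound on future messages
        sent.append((sender, receiver, now))
        if receiver == waiting_lp and now >= event_time:
            return sent
        index = (index + 1) % len(ring)


RING = ["H3", "H2", "H1"]                  # H3 -> H2 -> H1 -> H3
SMALL = null_messages_until_safe(RING, "H3", 5, 0.5, "H1", 7)
LARGE = null_messages_until_safe(RING, "H3", 5, 3, "H1", 7)
```

With lookahead 0.5 the sketch sends five null messages (5.5, 6.0, 6.5, 7.0, 7.5); with lookahead 3 it sends two (8 and 11), as in the earlier deadlock avoidance example.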

Preventing Lookahead Creep: Next Event Time Information

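[Figure: the same cycle of blocked LPs. H1 (waiting on H2) holds a message with time stamp 7; H2 (waiting on H3) holds messages with time stamps 15 and 10; H3 (waiting on H1) holds messages with time stamps 9 and 8]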

Observation: If all LPs are blocked, they can immediately

advance to the time of the minimum time stamp event in the

system

Lower Bound on Time Stamp

No null messages, assume any LP can send messages to any other LP

When a LP blocks, compute lower bound on time stamp (LBTS) of messages

it may later receive; those with time stamp < LBTS safe to process

[Figure: simulation time axis from 5 to 16. H1 is at time 5 and asks LBTS = ?; H2 is at time 6.1 and blocked, with its next event at time 10; H3 is at time 6 and not blocked; a transient message has time stamp 7. Assuming zero lookahead, LBTS = min (6, 10, 7) = 6]

Given a snapshot of the computation, LBTS is the minimum among

• Time stamp of any transient messages (sent, but not yet received)

• Unblocked LPs: Current simulation time + lookahead

• Blocked LPs: Time of next event + lookahead
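
The three-way minimum above can be written down directly. This is a sketch over a hand-built snapshot; the dictionary keys are hypothetical names, and the numbers are the ones from the example (H3 unblocked at time 6, H2 blocked with next event at 10, one transient message at 7, zero lookahead).

```python
def lbts(transient_time_stamps, lps):
    """Lower bound on the time stamp of messages an LP may later receive.

    transient_time_stamps: time stamps of messages sent but not yet received.
    lps: snapshot of the other LPs, each a dict with the (hypothetical) keys
         "blocked", "now", "next_event", and "lookahead".
    """
    candidates = list(transient_time_stamps)
    for lp in lps:
        # A blocked LP cannot send anything before its next event;
        # an unblocked LP may send as early as its current time.
        base = lp["next_event"] if lp["blocked"] else lp["now"]
        candidates.append(base + lp["lookahead"])
    return min(candidates)


SNAPSHOT = [
    {"name": "H3", "blocked": False, "now": 6, "next_event": 8, "lookahead": 0},
    {"name": "H2", "blocked": True, "now": 6.1, "next_event": 10, "lookahead": 0},
]
LBTS_H1 = lbts([7], SNAPSHOT)
```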

Lower Bound on Time Stamp (LBTS)

LBTS can be computed asynchronously using a distributed

snapshot algorithm (Mattern)

[Figure: wallclock time lines for LP1 to LP4 divided by a cut into Past and Future, with a cut message crossing the cut]

cut point: an instant dividing a process’s computation into past and future

cut: set of cut points, one per process

cut message: a message that was sent in the past, and received in the future

consistent cut: cut + all cut messages

cut value: minimum among (1) local minimum at each cut point and (2) time

stamp of cut messages; non-cut messages can be ignored

It can be shown LBTS = cut value

A Simple LBTS Algorithm

[Figure: wallclock time lines for LP1 to LP4 divided by a cut into Past and Future, with a cut message crossing the cut]

Initiator broadcasts start LBTS computation message to all LPs

Each LP sets cut point, reports local minimum back to initiator

Account for transient (cut) messages

• Identify transient messages, include time stamp in minimum computation

– Color each LP (color changes with each cut point); message color = color of sender

– An incoming message is transient if message color equals previous color of receiver

– Report time stamp of transient to initiator when one is received

• Detecting when all transients have been received

– For each color, $LP_i$ keeps counter of messages sent ($Send_i$) and received ($Receive_i$)

– At cut point, send counters to initiator: # transients = $\sum_i (Send_i - Receive_i)$

– Initiator detects termination (all transients received), broadcasts global minimum

Another LBTS Algorithm

[Figure: wallclock time lines for LP1 to LP4 divided by a cut into Past and Future, with a cut message crossing the cut]

An LP initiates an LBTS computation when it blocks

Initiator: broadcasts start LBTS message to all LPs

$LP_i$ places cut point, reports local minimum and ($Send_i - Receive_i$) back to

initiator

Initiator: After all reports received

if ($\sum_i (Send_i - Receive_i) = 0$) LBTS = global minimum,

broadcast LBTS value

Else Repeat broadcast/reply, but do not establish a new cut
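
The initiator's test can be sketched as below. The report format is an assumption: each LP reports a tuple (local minimum, messages sent, messages received) for the current cut, and an LP's local minimum is assumed to already include the time stamp of any transient message it has since received.

```python
def try_lbts(reports):
    """Initiator's step after collecting one round of reports.

    reports: list of (local_minimum, sent_count, received_count), one per LP.
    Returns the LBTS value if no transient messages remain, else None
    (meaning: repeat the broadcast/reply round without a new cut).
    """
    in_transit = sum(sent - received for _, sent, received in reports)
    if in_transit != 0:
        return None
    return min(local_minimum for local_minimum, _, _ in reports)


# Round 1: one message sent before the cut has not been received yet.
ROUND_1 = [(10, 3, 2), (8, 1, 1), (12, 0, 0)]
# Round 2: the third LP has now received it (time stamp 7).
ROUND_2 = [(10, 3, 2), (8, 1, 1), (7, 0, 1)]

FIRST = try_lbts(ROUND_1)
SECOND = try_lbts(ROUND_2)
```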

Synchronous Algorithms

Barrier Synchronization: when a process reaches a barrier synchronization, it

must block until all other processes have also reached the barrier.

[Figure: process 1 to process 4 on a wallclock time axis; each process reaches the barrier at a different time and waits until all have arrived]

Synchronous algorithm

DO WHILE (unprocessed events remain)

barrier synchronization; flush all messages from the network

LBTS = $\min_i (N_i + LA_i)$; $N_i$ = time of next event in $LP_i$; $LA_i$ = lookahead of $LP_i$

S = set of events with time stamp ≤ LBTS

process events in S

endDO

Variations proposed by Lubachevsky, Ayani, Chandy/Sherman, Nicol

Topology Information

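[Figure: airports ORD, LAX, SAN, and JFK connected by flights with flight times 0:30, 2:00, 4:00, and 6:00; one pending event at 10:00 and another at 10:45]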

Global LBTS algorithm is overly conservative: does not exploit topology

information

• Lookahead = minimum flight time to another airport

• Can the two events be processed concurrently?

– Yes because the event @ 10:00 cannot affect the event @ 10:45

• Simple global LBTS algorithm:

– LBTS = 10:30 (10:00 + 0:30)

– Cannot process event @ 10:45 until next LBTS computation

Distance Between LPs

• Associate a lookahead with each link: $LA_{AB}$ is the lookahead on the link

from $LP_A$ to $LP_B$

– Any message sent on the link from $LP_A$ to $LP_B$ must have a time

stamp of at least $T_A + LA_{AB}$, where $T_A$ is the current simulation time of $LP_A$

• A path from $LP_A$ to $LP_Z$ is defined as a sequence of LPs: $LP_A$, $LP_B$, …,

$LP_Y$, $LP_Z$

• The lookahead of a path is the sum of the lookaheads of the links

along the path

• $D_{AB}$, the minimum distance from $LP_A$ to $LP_B$, is the minimum

lookahead over all paths from $LP_A$ to $LP_B$

• The distance from $LP_A$ to $LP_B$ is the minimum amount of simulated

time that must elapse for an event in $LP_A$ to affect $LP_B$

Distance Between Processes

The distance from LPA to LPB is the minimum amount of simulated time

that must elapse for an event in LPA to affect LPB

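[Figure: network of four LPs (LPA, LPB, LPC, LPD) whose links carry lookaheads 3, 4, 1, 3, 1, 4, 2, and 2; pending events with time stamps 11, 13, and 15]

Distance Matrix:

D [i,j] = minimum distance from $LP_i$ to $LP_j$

| from \ to | LPA | LPB | LPC | LPD |
| --- | --- | --- | --- | --- |
| LPA | 4 | 3 | 1 | 3 |
| LPB | 4 | 5 | 3 | 1 |
| LPC | 3 | 6 | 4 | 2 |
| LPD | 5 | 4 | 2 | 4 |

Example entry: D [LPA, LPD] = min (1+2, 3+1) = 3

• An event in $LP_Y$ with time stamp $T_Y$ depends on an event in

$LP_X$ with time stamp $T_X$ if $T_X + D[X,Y] < T_Y$

• Above, the time stamp 15 event depends on the time stamp

11 event, the time stamp 13 event does not.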

Computing LBTS

Assuming all LPs are blocked and there are no transient messages:

$LBTS_i = \min_j (N_j + D_{ji})$, where $N_j$ = time of next event in $LP_j$

[Figure and distance matrix: the same four-LP network and distance matrix as under "Distance Between Processes", with pending events at time stamps 11, 13, and 15]

$LBTS_A$ = 15 [min (11+4, 13+5)]

$LBTS_B$ = 14 [min (11+3, 13+4)]

$LBTS_C$ = 12 [min (11+1, 13+2)]

$LBTS_D$ = 14 [min (11+3, 13+4)]

Need to know time of next event of every other LP

Distance matrix must be recomputed if lookahead changes
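
The distance matrix and the per-LP LBTS values can be checked with a short sketch. The link assignment below is an assumption inferred from the matrix (it is one set of eight links with lookaheads 3, 4, 1, 3, 1, 4, 2, 2 that reproduces every entry), and the next-event times 11 at LPA and 13 at LPD are read off the bracketed minimum expressions. The all-pairs computation is a standard Floyd-Warshall pass with the diagonal left at infinity, so that D[i][i] becomes the shortest cycle through LPi.

```python
INF = float("inf")


def distance_matrix(lps, links):
    """Minimum path lookahead between every ordered pair of LPs.

    links: dict mapping (source, destination) -> lookahead on that link.
    """
    dist = {a: {b: INF for b in lps} for a in lps}
    for (src, dst), lookahead in links.items():
        dist[src][dst] = min(dist[src][dst], lookahead)
    for k in lps:                      # Floyd-Warshall relaxation
        for i in lps:
            for j in lps:
                if dist[i][k] + dist[k][j] < dist[i][j]:
                    dist[i][j] = dist[i][k] + dist[k][j]
    return dist


def lbts(dist, next_event, lp):
    """LBTS of `lp`, assuming all LPs are blocked and nothing is in transit."""
    return min(n + dist[other][lp] for other, n in next_event.items())


LPS = ["A", "B", "C", "D"]
LINKS = {  # assumed link lookaheads, inferred from the distance matrix
    ("A", "B"): 3, ("B", "A"): 4,
    ("A", "C"): 1, ("C", "A"): 3,
    ("B", "D"): 1, ("D", "B"): 4,
    ("C", "D"): 2, ("D", "C"): 2,
}
D = distance_matrix(LPS, LINKS)
NEXT_EVENT = {"A": 11, "D": 13}        # time of next event in LPA and LPD
BOUNDS = {lp: lbts(D, NEXT_EVENT, lp) for lp in LPS}
```

`BOUNDS` comes out as 15, 14, 12, 14 for LPA through LPD, agreeing with the values listed above.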

Example

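[Figure: airports ORD, LAX, SAN, and JFK connected by flights with flight times 0:30, 2:00, 4:00, and 6:00; one pending event at 10:00 and another at 10:45]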

Using distance information:

• $D_{SAN,JFK}$ = 6:30

• $LBTS_{JFK}$ = 16:30 (10:00 + 6:30)

• Event @ 10:45 can be processed this iteration

• Concurrent processing of events at times 10:00 and 10:45

Lookahead

problem: limited concurrency

each LP must process events in time stamp order

[Figure: events in LP A, LP B, LP C, and LP D on a logical time axis marked $T_A$ and $T_A + L_A$, shown without lookahead and with lookahead. Events that a possible message from LP A could still affect are not OK to process yet; the remaining events are OK to process]

Each LP A declares a lookahead value $L_A$; the time stamp of any event

generated by the LP must be ≥ $T_A + L_A$

• Used in virtually all conservative synchronization protocols

• Relies on model properties (e.g., minimum transmission delay)

Lookahead is necessary to allow concurrent processing of events with

different time stamps (unless optimistic event processing is used)

Speedup of Central Server Queueing Model Simulation

Deadlock Detection and Recovery Algorithm (5 processors)

[Figure: Speedup (axis from 0 to 4) versus Number of Jobs in Network (1, 2, 4, 8, 16, 32, 64, 128, 256). Curves: Optimized (deterministic service time), Optimized (exponential service time), Classical (deterministic service time), Classical (exponential service time)]

Exploiting lookahead is essential to obtain good performance

Summary: Conservative Synchronization

• Each LP must process events in time stamp order

• Must compute lower bound on time stamp (LBTS) of

future messages an LP may receive to determine which

events are safe to process

• 1st generation algorithms: LBTS computation based on

current simulation time of LPs and lookahead

– Null messages

– Prone to lookahead creep

• 2nd generation algorithms: also consider time of next

event to avoid lookahead creep

• Other information, e.g., LP topology, can be exploited

• Lookahead is crucial to achieving concurrent processing

of events, good performance

Conservative Algorithms

Pro:

• Good performance reported for many applications containing

good lookahead (queueing networks, communication

networks, wargaming)

• Relatively easy to implement

• Well suited for “federating” autonomous simulations, provided

there is good lookahead

Con:

• Cannot fully exploit available parallelism in the simulation

because they must protect against a “worst case scenario”

• Lookahead is essential to achieve good performance

• Writing simulation programs to have good lookahead can be

very difficult or impossible, and can lead to code that is

difficult to maintain

Part II: Time Management

Optimistic synchronization

Time Warp Algorithm (Jefferson)

Assumptions

• logical processes (LPs) exchanging time stamped events (messages)

• dynamic network topology, dynamic creation of LPs OK

• messages sent on each link need not be sent in time stamp order

• network provides reliable delivery, but need not preserve order

Basic idea:

• process events w/o worrying about messages that will arrive later

• detect out of order execution, recover using rollback

[Figure: logical process H3 with incoming links from H1 and H2 holding messages with time stamps 9, 8, 2 and 5, 4]

process all available events (2, 4, 5, 8, 9) in time stamp order

Time Warp (Jefferson)

Each LP: process events in time stamp order, like a sequential simulator, except:

(1) do NOT discard processed events and (2) add a rollback mechanism

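[Figure: data structures of a Time Warp LP]

• Input Queue (event list): processed and unprocessed events, with time stamps 12, 21, 35, 41

• State Queue: snapshots of LP state

• Output Queue (anti-messages): one anti-message per message sent

• Time stamps shown in the state and output queues: 12, 12, 19, 42

• A straggler message with time stamp 18 arrives in the past, causing rollback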

Adding rollback:

• a message arriving in the LP’s past initiates rollback

• to roll back an event computation we must undo:

– changes to state variables performed by the event;

solution: checkpoint state or use incremental state saving (state queue)

– message sends

solution: anti-messages and message annihilation (output queue)

Anti-Messages

42

positive message

anti-message

42

• Used to cancel a previously sent message

• Each positive message sent by an LP has a corresponding anti-message

• Anti-message is identical to positive message, except for a sign bit

• When an anti-message and its matching positive message meet in

the same queue, the two annihilate each other (analogous to matter

and anti-matter)

• To undo the effects of a previously sent (positive) message, the LP

need only send the corresponding anti-message

• Message send: in addition to sending the message, leave a copy of

the corresponding anti-message in a data structure in the sending LP

called the output queue.

Rollback: Receiving a Straggler Message

[Figure: input, state, and output queues of an LP before and after a rollback]

BEFORE

• Input Queue (event list): processed and unprocessed events, with time stamps 12, 21, 35, 41

• State Queue: snapshots of LP state

• Output Queue (anti-messages)

• Time stamps shown in the state and output queues: 12, 12, 19, 42

1. straggler message (time stamp 18) arrives in the past, causing rollback

2. roll back events at times 21 and 35

2(a) restore state of LP to that prior to processing time stamp 21 event

2(b) send anti-message

AFTER

• Input Queue (event list): 12, 18, 21, 35, 41

• Time stamps shown in the state and output queues: 12, 12, 19

5. resume execution by processing event at time 18
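
The rollback steps can be sketched with a toy LP. Everything here is illustrative: the class, the full-copy state saving, and the handler (which counts events and, hypothetically, sends a time stamp 42 message to "LP2" when it processes the time stamp 35 event) are assumptions, not the tutorial's implementation.

```python
class TimeWarpLP:
    """Toy Time Warp logical process with copy-based state saving."""

    def __init__(self, state, handler):
        self.state = state        # current state variables (a dict)
        self.handler = handler    # handler(state, ts) -> list of (dest, ts) sends
        self.pending = []         # unprocessed event time stamps, ascending
        self.processed = []       # processed event time stamps (kept, not discarded)
        self.snapshots = []       # state queue: (ts, state before processing ts)
        self.output = []          # output queue: (sending event ts, (dest, msg ts))
        self.network = []         # log of ("pos" | "anti", dest, msg ts) sent

    def receive(self, ts):
        if self.processed and ts < self.processed[-1]:
            self.rollback(ts)     # straggler: arrived in the LP's past
        self.pending.append(ts)
        self.pending.sort()

    def rollback(self, ts):
        # Undo processed events with time stamp greater than the straggler.
        while self.processed and self.processed[-1] > ts:
            undone = self.processed.pop()
            _, saved = self.snapshots.pop()
            self.state = saved                    # restore state
            self.pending.append(undone)           # event will be re-executed
        # Cancel messages sent by the rolled-back events.
        kept = []
        for send_ts, (dest, msg_ts) in self.output:
            if send_ts > ts:
                self.network.append(("anti", dest, msg_ts))
            else:
                kept.append((send_ts, (dest, msg_ts)))
        self.output = kept

    def step(self):
        ts = self.pending.pop(0)
        self.snapshots.append((ts, dict(self.state)))  # checkpoint before the event
        for dest, msg_ts in self.handler(self.state, ts):
            self.network.append(("pos", dest, msg_ts))
            self.output.append((ts, (dest, msg_ts)))   # remember for cancellation
        self.processed.append(ts)


def count_and_send(state, ts):
    """Hypothetical event handler used only for this example."""
    state["count"] += 1
    return [("LP2", 42)] if ts == 35 else []


lp = TimeWarpLP({"count": 0}, count_and_send)
for stamp in (12, 21, 35, 41):
    lp.receive(stamp)
for _ in range(3):
    lp.step()                     # optimistically process 12, 21, 35
lp.receive(18)                    # straggler triggers rollback of 21 and 35
```

After the straggler arrives, the LP's state is back to what it was before the time stamp 21 event, the anti-message for the time stamp 42 send has been emitted, and the next call to `step()` processes the event at time 18.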

Processing Incoming Anti-Messages

Case I: corresponding message has not yet been processed

– annihilate message/anti-message pair

Case II: corresponding message has already been processed

– roll back to time prior to processing message (secondary rollback)

– annihilate message/anti-message pair

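[Figure: an anti-message with time stamp 42 arrives at an LP whose input queue holds processed and unprocessed events with time stamps 27, 42, 45, 55; the state and output queues show snapshots of LP state and anti-messages with time stamps 33 and 57]

1. anti-message arrives

2. roll back events (time stamp 42 and 45)

2(a) restore state

2(b) send anti-message

3. Annihilate message and anti-message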

may cause “cascaded” rollbacks; recursively applying eliminates all effects of error

Case III: corresponding message has not yet been received

– queue anti-message

– annihilate message/anti-message pair when message is received

Global Virtual Time and Fossil Collection

A mechanism is needed to:

• reclaim memory resources (e.g., old state and events)

• perform irrevocable operations (e.g., I/O)

Observation: A lower bound on the time stamp of any rollback that can

occur in the future is needed.

Global Virtual Time (GVT) is defined as the minimum time stamp of any

unprocessed (or partially processed) message or anti-message in the

system. GVT provides a lower bound on the time stamp of any future

rollback.

• storage for events and state vectors older than GVT (except one state

vector) can be reclaimed

• I/O operations with time stamp less than GVT can be performed.

• GVT algorithms are similar to LBTS algorithms in conservative

synchronization

Observation: The computation corresponding to GVT will not be rolled

back, guaranteeing forward progress.
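
Given a consistent snapshot, GVT and fossil collection reduce to a minimum and a filter. The sketch below assumes the snapshot is already available (computing it in a running system needs an algorithm like the LBTS ones discussed earlier); function names and data layout are hypothetical.

```python
def gvt(unprocessed_by_lp, in_transit):
    """Minimum time stamp of any unprocessed message or anti-message.

    unprocessed_by_lp: one list of time stamps per LP.
    in_transit: time stamps of messages sent but not yet received.
    """
    stamps = [ts for queue in unprocessed_by_lp for ts in queue]
    stamps.extend(in_transit)
    return min(stamps, default=float("inf"))


def fossil_collect(snapshots, gvt_value):
    """Drop state snapshots older than GVT, except the most recent such one.

    snapshots: list of (time_stamp, state) in ascending time stamp order.
    One snapshot older than GVT is retained so that a rollback to GVT can
    still restore state.
    """
    older = [snap for snap in snapshots if snap[0] < gvt_value]
    newer = [snap for snap in snapshots if snap[0] >= gvt_value]
    return older[-1:] + newer


GVT = gvt([[18, 21], [30]], in_transit=[25])
KEPT = fossil_collect([(5, "s5"), (12, "s12"), (21, "s21")], GVT)
```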

Time Warp and Chandy/Misra Performance

[Figure: Speedup (axis from 0 to 8) versus Message Density (messages per logical process; axis from 0 to 64). Curves: Time Warp (64 logical processes), Time Warp (16 logical processes), Deadlock Avoidance (64 logical processes), Deadlock Avoidance (16 logical processes), Deadlock Recovery (64 logical processes)]

• eight processors

• closed queueing network, hypercube topology

• high priority jobs preempt service from low priority jobs (1% high priority)

• exponential service time (poor lookahead)

Other Optimistic Algorithms

Principal goal: avoid excessive optimistic execution

A variety of protocols have been proposed, among them:

• window-based approaches

– only execute events in a moving window (simulated time, memory)

• risk-free execution

– only send messages when they are guaranteed to be correct

• add optimism to conservative protocols

– specify “optimistic” values for lookahead

• introduce additional rollbacks

– triggered stochastically or by running out of memory

• hybrid approaches

– mix conservative and optimistic LPs

• scheduling-based

– discriminate against LPs rolling back too much

• adaptive protocols

– dynamically adjust protocol during execution as workload changes

Summary of Optimistic Algorithms

Pro:

• Good performance reported for a variety of applications

(queueing networks, communication networks, combat models,

transportation systems)

• Good transparency: offers the best hope for “general

purpose” parallel simulation software (more resilient to poor

lookahead than conservative methods)

Con:

• Memory requirements may be large

• Implementation is generally more complex and difficult to

debug than conservative mechanisms

– System calls, memory allocation ...

– Must be able to recover from exceptions

• Use in federated simulations requires adding rollback

capability to existing simulations

Part II: Time Management

Time Management in the High Level Architecture

High Level Architecture (HLA)

• based on a composable “system of systems” approach

– no single simulation can satisfy all user needs

– support interoperability and reuse among DoD simulations

• federations of simulations (federates)

– pure software simulations

– human-in-the-loop simulations (virtual simulators)

– live components (e.g., instrumented weapon systems)

• mandated as the standard reference architecture for all M&S in the U.S.

Department of Defense (September 1996)

The HLA consists of

• rules that simulations (federates) must follow to achieve proper

interaction during a federation execution

• Object Model Template (OMT) defines the format for specifying the set

of common objects used by a federation (federation object model), their

attributes, and relationships among them

• Interface Specification (IFSpec) provides interface to the Run-Time

Infrastructure (RTI), that ties together federates during model execution

HLA Federation

Interconnecting autonomous simulators

[Figure: a federation of three simulations (federates), each connected through the Interface Specification to the Runtime Infrastructure (RTI)]

Runtime Infrastructure (RTI)

Services to create and manage the execution of the federation

• Federation setup / tear down

• Transmitting data among federates

• Synchronization (time management)

Interface Specification

| Category | Functionality |
| --- | --- |
| Federation Management | Create and delete federation executions; join and resign federation executions; control checkpoint, pause, resume, restart |
| Declaration Management | Establish intent to publish and subscribe to object attributes and interactions |
| Object Management | Create and delete object instances; control attribute and interaction publication; create and delete object reflections |
| Ownership Management | Transfer ownership of object attributes |
| Time Management | Coordinate the advance of logical time and its relationship to real time |
| Data Distribution Management | Supports efficient routing of data |

A Typical Federation Execution

initialize federation

• Create Federation Execution (Federation Mgt)

• Join Federation Execution (Federation Mgt)

declare objects of common interest among federates

• Publish Object Class (Declaration Mgt)

• Subscribe Object Class Attribute (Declaration Mgt)

exchange information

• Update/Reflect Attribute Values (Object Mgt)

• Send/Receive Interaction (Object Mgt)

• Time Advance Request, Time Advance Grant (Time Mgt)

• Request Attribute Ownership Assumption (Ownership Mgt)

• Modify Region (Data Distribution Mgt)

terminate execution

• Resign Federation Execution (Federation Mgt)

• Destroy Federation Execution (Federation Mgt)

HLA Message Ordering Services

The HLA provides two types of message ordering:

• receive order (unordered): messages passed to federate in an arbitrary order

• time stamp order (TSO): sender assigns a time stamp to message; successive

messages passed to each federate have non-decreasing time stamps

| Property | Receive Order (RO) | Time Stamp Order (TSO) |
| --- | --- | --- |
| Latency | low | higher |
| reproduce before and after relationships? | no | yes |
| all federates see same ordering of events? | no | yes |
| execution repeatable? | no | yes |
| typical applications | training, T&E | analysis |

• receive order minimizes latency, does not prevent temporal anomalies

• TSO prevents temporal anomalies, but has somewhat higher latency

Advancing Logical Time

HLA TM services define a protocol for federates to advance logical time; logical

time only advances when that federate explicitly requests an advance

• Time Advance Request: time stepped federates

• Next Event Request: event stepped federates

• Time Advance Grant: RTI invokes to acknowledge logical time advances

[Figure: the federate invokes Time Advance Request or Next Event Request on the RTI; the RTI responds with Time Advance Grant]

If the logical time of a federate is T, the RTI guarantees no more TSO

messages will be passed to the federate with time stamp < T

Federates responsible for pacing logical time advances with wallclock time in

real-time executions

HLA Time Management Services

[Figure: HLA time management. Inside the Runtime Infrastructure (RTI), event ordering places receive order messages in a FIFO queue and time stamp order messages in a time stamp ordered queue; time synchronized delivery passes state updates and interactions to the federate, which in turn requests logical time advances. The federate tracks logical time and wallclock time (synchronized with other processors)]

federate

• local time and event management

• mechanism to pace execution with wallclock time (if necessary)

• federate specific techniques (e.g., compensation for message latencies)

Synchronizing Message Delivery

Goal: process all events (local and incoming messages) in time stamp

order; To support this, RTI will

• Deliver messages in time stamp order (TSO)

• Synchronize delivery with simulation time advances

[Figure: logical time axis showing the federate's current time, its next local event at time T, and the next TSO message held by the RTI at time T’]

Federate: next local event has time stamp T

• If no TSO messages w/ time stamp < T, advance to T, process local event

• If there is a TSO message w/ time stamp T’ ≤ T, advance to T’ and process TSO

message

Next Event Request (NER)

• Federate invokes Next Event Request (T) to request its logical time be

advanced to time stamp of next TSO message, or T, whichever is smaller

• If next TSO message has time stamp T’ ≤ T

– RTI delivers next TSO message, and all others with time stamp T’

– RTI issues Time Advance Grant (T’)

• Else

– RTI advances federate’s time to T, invokes Time Advance Grant (T)
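The NER rules above can be sketched in a few lines of Python. This is only an illustration, not the RTI's implementation: the function name `next_event_request` and the heap-based queue are assumptions, and the sketch assumes the RTI already knows that no TSO message with a smaller time stamp can still arrive (a real RTI must wait for that guarantee before granting).

```python
import heapq

def next_event_request(t_request, tso_queue):
    """Hypothetical model of NER(T): return (delivered_messages, granted_time).

    tso_queue is a heap of (time_stamp, payload) tuples.
    Assumes no TSO message with a smaller time stamp can still arrive.
    """
    if tso_queue and tso_queue[0][0] <= t_request:
        t_prime = tso_queue[0][0]
        delivered = []
        # Deliver the next TSO message and all others with the same time stamp T'.
        while tso_queue and tso_queue[0][0] == t_prime:
            delivered.append(heapq.heappop(tso_queue))
        return delivered, t_prime      # Time Advance Grant (T')
    return [], t_request               # Time Advance Grant (T)

queue = [(90, "breakdown"), (120, "refuel"), (90, "radio call")]
heapq.heapify(queue)
delivered, granted = next_event_request(100, queue)
second_delivered, second_granted = next_event_request(100, queue)
```

In this run the first request delivers both messages stamped 90 and is granted time 90; the second request finds no message at or before 100 and is granted time 100.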

Typical execution sequences

[Figure: two execution sequences between Federate and RTI, drawn along a wall clock time axis; federate calls in black, RTI callbacks in red.]

• RTI delivers events: NER(T), RAV(T’), RAV(T’), TAG(T’)

• no TSO events: NER(T), TAG(T)

NER: Next Event Request

TAG: Time Advance Grant

RAV: Reflect Attribute Values

Lookahead in the HLA

• Each federate must declare a non-negative lookahead value

• Any TSO sent by a federate must have time stamp at least the federate’s

current time plus its lookahead

• Lookahead can change during the execution (Modify Lookahead)

– increases take effect immediately

– decreases do not take effect until the federate advances its logical time

[Figure: lookahead decrease shown on the logical time axis; the point T+L is marked at every step.]

1. Current time is T, lookahead L

2. Request lookahead decrease by ∆L to L’

3. After advancing ∆T (to T+∆T), lookahead has decreased by ∆T to L-∆T

4. After advancing ∆L (to T+∆L), lookahead is L’
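The gradual decrease can be illustrated with a small sketch. The class `LookaheadState` and its method names are hypothetical (they are not HLA service names); the point is that the earliest permitted send time, current time plus lookahead, never moves backwards while a decrease is phased in.

```python
class LookaheadState:
    """Hypothetical bookkeeping for one federate's lookahead."""

    def __init__(self, time, lookahead):
        self.time = time
        self.lookahead = lookahead
        self.target = lookahead          # lookahead most recently requested

    def modify_lookahead(self, new_lookahead):
        if new_lookahead < 0:
            raise ValueError("lookahead must be non-negative")
        if new_lookahead >= self.lookahead:
            self.lookahead = new_lookahead   # increases take effect immediately
        self.target = new_lookahead          # decreases are phased in by advance()

    def advance(self, delta_t):
        self.time += delta_t
        # Lookahead shrinks by the amount advanced, but never below the target.
        self.lookahead = max(self.target, self.lookahead - delta_t)

    def min_send_time(self):
        return self.time + self.lookahead

state = LookaheadState(time=10, lookahead=5)   # T = 10, L = 5
state.modify_lookahead(2)                      # request decrease to L' = 2
bounds = [state.min_send_time()]
for step in (1, 2, 1):
    state.advance(step)
    bounds.append(state.min_send_time())
```

Here the bound stays at 15 until the federate has advanced by ∆L = 3, after which the lookahead is 2 and the bound moves forward with the federate's time.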

Minimum Next Event Time and LBTS

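[Figure: Federate0, Federate1 and Federate2 connected by a network. The TSO queue for federate 0 holds messages with time stamps 8, 11 and 13; MNET0=8 and LBTS0=10. Of the other two federates, one has Current Time = 8 and Lookahead = 2, the other Current Time = 9 and Lookahead = 8.]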

• LBTSi: Lower Bound on Time Stamp of TSO messages that could later

be placed into the TSO queue for federate i

– TSO messages w/ TS ≤ LBTSi eligible for delivery

– RTI ensures logical time of federate i never exceeds LBTSi

• MNETi: Minimum Next Event Time is a lower bound on the time stamp of

any message that could later be delivered to federate i.

– Minimum of LBTSi and minimum time stamp of messages in TSO

queue
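The numbers in the figure can be reproduced with a short calculation. This sketch makes simplifying assumptions: the function names are hypothetical, the two labelled federates are taken to be the only senders to federate 0, and messages still in transit in the network are ignored (a real LBTS computation must account for them).

```python
def lbts(other_federates):
    """Lower bound on time stamps of future TSO messages.

    other_federates is a list of (current_time, lookahead) pairs;
    transient messages in the network are ignored in this sketch.
    """
    return min(time + lookahead for time, lookahead in other_federates)

def mnet(lbts_value, tso_queue):
    """Minimum of LBTS and the smallest time stamp already in the TSO queue."""
    return min([lbts_value] + list(tso_queue))

others = [(8, 2), (9, 8)]        # (current time, lookahead) from the figure
tso_queue = [13, 11, 8]          # time stamps queued for federate 0

lbts0 = lbts(others)             # min(8 + 2, 9 + 8)
mnet0 = mnet(lbts0, tso_queue)   # min(lbts0, 8)
```

With these inputs the sketch gives LBTS0 = 10 and MNET0 = 8, matching the values shown on the slide.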

Event Retraction

Previously sent events can be “unsent” via the Retract service

– Update Attribute Values and Send Interaction return a “handle” to

the scheduled event

– Handle can be used to Retract (unschedule) the event

– Can only retract event if its time stamp > current time + lookahead

– Retracted event never delivered to destination (unless Flush

Queue used)

Sample execution sequence:

[Figure: message sequence for the Vehicle and Observer federates along a wallclock time axis.]

1. Handle=UAV (100), NER (100): Vehicle schedules position update at time 100, ready to advance to time 100

2. Receive Interaction (90), TAG (90): Vehicle receives interaction (breakdown event) invalidating position update at time 100

3. Retract (Handle): Vehicle retracts update scheduled for time 100

NER: Next Event Request

UAV: Update Attribute Values

TAG: Time Advance Grant (callback)

Optimistic Time Management

Mechanisms to ensure events are processed in time stamp order:

• conservative: block to avoid out of order event processing

• optimistic: detect out-of-order event processing, recover (e.g., Time Warp)

Primitives for optimistic time management

• Optimistic event processing

– Deliver (and process) events without time stamp order delivery guarantee

– HLA: Flush Queue Request

• Rollback

– Deliver message w/ time stamp T, other computations already performed at times > T

– Must roll back (undo) computations at logical times > T

– HLA: (local) rollback mechanism must be implemented within the federate

• Anti-messages & secondary rollbacks

– Anti-message: message sent to cancel (undo) a previously sent message

– Causes rollback at destination if cancelled message already processed

– HLA: Retract service; deliver retract request if cancelled message already delivered

• Global Virtual Time

– Lower bound on future rollback to commit I/O operations, reclaim memory

– HLA: Query Next Event Time service gives current value of GVT

Optimistic Time Management in the HLA

HLA Support for Optimistic Federates

• federations may include conservative and/or optimistic federates

• federates not aware of local time management mechanism of other

federates (optimistic or conservative)

• optimistic events (events that may be later canceled) will not be delivered

to conservative federates that cannot roll back

• optimistic events can be delivered to other optimistic federates

• individual federates may be sequential or parallel simulations

Flush Queue Request: similar to NER except

(1) deliver all messages in RTI’s local message queues,

(2) need not wait for other federates before issuing a Time Advance Grant

Summary: HLA Time Management

Functionality:

• allows federates with different time management requirements (and local TM

mechanisms) to be combined within a single federation execution

– DIS-style training simulations

– simulations with hard real-time constraints

– event-driven simulations

– time-stepped simulations

– optimistic simulations

HLA Time Management services:

• Event order

– receive order delivery

– time stamp order delivery

• Logical time advance mechanisms

– TAR/TARA: unconditional time advance

– NER/NERA: advance depending on message time stamps

Part III:

Distributed Virtual Environments

Introduction

Dead reckoning

Data distribution

Example: Distributed Interactive Simulation (DIS)

“The primary mission of DIS is to define an infrastructure for linking

simulations of various types at multiple locations to create realistic,

complex, virtual ‘worlds’ for the simulation of highly interactive

activities” [DIS Vision, 1994].

• developed in U.S. Department of Defense, initially for training

• distributed virtual environments widely used in DoD; growing use in other

areas (entertainment, emergency planning, air traffic control)

A Typical DVE Node Simulator

[Figure: node simulator components: network, network interface, other vehicle state table, own vehicle dynamics, control/display interface, controls and panels, image generator, terrain database, visual display system, sound generator.]

Execute every 1/30th of a second:

• receive incoming messages & user inputs, update state of remote vehicles

• update local display

• for each local vehicle

– compute (integrate) new state over current time period

– send messages (e.g., broadcast) indicating new state

Reproduced from Miller, Thorpe (1995), “SIMNET: The Advent of Simulator Networking,” Proceedings of the IEEE,

83(8): 1114-1123.

Typical Sequence

[Figure: two node simulators connected by a network; the numbers below are marked on the components involved. Steps 1-4 and 6-8 are marked on the first simulator, steps 5 and 9-11 on the second.]

1. Detect trigger press

2. Audio “fire” sound

3. Display muzzle flash

4. Send fire PDU

5. Display muzzle flash

6. Compute trajectory, display tracer

7. Display shell impact

8. Send detonation PDU

9. Display shell impact

10. Compute damage

11. Send Entity state PDU indicating damage

DIS Design Principles

• Autonomy of simulation nodes

– simulations broadcast events of interest to other simulations; need not determine which others need information

– each receiver determines whether information is relevant to it, and models local effects of new information

– simulations may join or leave exercises in progress

• Transmission of “ground truth” information

– each simulation transmits absolute truth about state of its objects

– receiver is responsible for appropriately “degrading” information (e.g., due to environment, sensor characteristics)

• Transmission of state change information only

– if behavior “stays the same” (e.g., straight and level flight), state updates drop to a predetermined rate (e.g., every five seconds)

• “Dead Reckoning” algorithms

– extrapolate current position of moving objects based on last reported position

• Simulation time constraints

– many simulations are human-in-the-loop

– humans cannot distinguish temporal difference < 100 milliseconds

– places constraints on communication latency of simulation platform


Distributed Simulation Example

• Virtual environment simulation containing two moving

vehicles

• One vehicle per federate (simulator)

• Each vehicle simulator must track location of other

vehicle and produce local display (as seen from the local

vehicle)

• Approach 1: Every 1/60th of a second:

– Each vehicle sends a message to other vehicle

indicating its current position

– Each vehicle receives message from other vehicle,

updates its local display

Limitations

• Position information corresponds to location when the

message was sent; doesn’t take into account delays in

sending message over the network

• Requires generating many messages if there are many

vehicles

Dead Reckoning

• Send position update messages less frequently

• local dead reckoning model predicts the position of remote

entities between updates

[Figure: “infrequent” position update messages feed a dead reckoning model; the image generator gets position from the model at frame rate and, together with the terrain database, drives the visual display system.]

Example: last reported state: position = (1000,1000), traveling east @ 50 feet/sec; predicted position one second later: (1050,1000)

• When are updates sent?

• How does the DRM predict vehicle position?

Re-synchronizing the DRM

When are position update messages generated?

• Compare DRM position with exact position, and generate an update message if

error is too large

• Generate updates at some minimum rate, e.g., every 5 seconds (heartbeats)

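[Figure: the simulator for aircraft 1 runs a high fidelity model of aircraft 1 alongside a DRM of aircraft 1 and a DRM of aircraft 2. It checks “close enough?” and “timeout?”; when over threshold or on timeout it sends an entity state update PDU to the simulator for aircraft 2, which keeps its own DRM of aircraft 1 and samples that DRM at frame rate for display.]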
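The sender-side decision can be sketched as a single predicate. The function name, the Euclidean error measure and the parameter names are assumptions made for illustration; the slides only specify "error is too large" and a minimum update rate.

```python
import math

def should_send_update(true_pos, drm_pos, now, last_update_time,
                       threshold, heartbeat=5.0):
    """Return True if an entity state update should be generated.

    true_pos comes from the high fidelity model, drm_pos from the sender's
    own copy of the dead reckoning model that receivers are running.
    """
    error = math.dist(true_pos, drm_pos)            # Euclidean distance (assumed metric)
    timed_out = (now - last_update_time) >= heartbeat
    return error > threshold or timed_out

over_threshold = should_send_update((1050.0, 1003.0), (1050.0, 1000.0),
                                    now=1.0, last_update_time=0.0, threshold=2.0)
within_bounds = should_send_update((1050.0, 1001.0), (1050.0, 1000.0),
                                   now=1.0, last_update_time=0.0, threshold=2.0)
heartbeat_due = should_send_update((1050.0, 1000.0), (1050.0, 1000.0),
                                   now=5.0, last_update_time=0.0, threshold=2.0)
```

The sender runs the same DRM as the receivers so that it can tell, without any feedback from them, how far their estimate has drifted from the true position.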

Dead Reckoning Models

• P(t) = precise position of entity at time t

• Position update messages: P(t1), P(t2), P(t3) …

• v(ti), a(ti) = ith velocity, acceleration update

• DRM: estimate D(t), position at time t

– ti = time of last update preceding t

– ∆t = t - ti

• Zeroth order DRM:

– D(t) = P(ti)

• First order DRM:

– D(t) = P(ti) + v(ti)*∆t

• Second order DRM:

– D(t) = P(ti) + v(ti)*∆t + 0.5*a(ti)*(∆t)²
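A minimal sketch of the three models, taking ∆t as the time elapsed since the last update. The function name `dead_reckon` and the tuple representation of position, velocity and acceleration are assumptions; omitting the velocity gives the zeroth order model and omitting the acceleration gives the first order model.

```python
def dead_reckon(p, dt, v=(0.0, 0.0), a=(0.0, 0.0)):
    """Estimate D(t) from the last update P(ti), v(ti), a(ti), with dt = t - ti."""
    return tuple(pi + vi * dt + 0.5 * ai * dt * dt
                 for pi, vi, ai in zip(p, v, a))

last_position = (1000.0, 1000.0)
velocity_east = (50.0, 0.0)        # 50 feet/sec heading east
acceleration = (2.0, 0.0)          # hypothetical value for the second order case

zeroth = dead_reckon(last_position, 1.0)
first = dead_reckon(last_position, 1.0, v=velocity_east)
second = dead_reckon(last_position, 1.0, v=velocity_east, a=acceleration)
```

The first order result is (1050.0, 1000.0), the same prediction as the earlier example of a vehicle at (1000,1000) traveling east at 50 feet/sec for one second.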

DRM Example

DRM estimates position

[Figure: true position versus DRM estimate of true position, with display updates at points A through E. A state update message is generated at t1 and received at t2, just before a screen update.]

Potential problems:

• Discontinuity may occur when position update arrives;

may produce “jumps” in display

• Does not take into account message latency

Time Compensation

Taking into account message latency

• Add time stamp to message when update is generated

(sender time stamp)

• Dead reckon based on message time stamp

[Figure: true position versus DRM estimate of true position, with display updates at points A through E. The state update message generated at t1 arrives at t2, and the update is applied with time compensation.]
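Time compensation amounts to dead reckoning from the sender's time stamp and not from the arrival time. The sketch below uses a first order model and hypothetical names; it also assumes the sender's and receiver's clocks are synchronized, which the slides do not guarantee.

```python
def compensated_position(update_pos, update_vel, sender_time_stamp, display_time):
    """First order dead reckoning measured from when the update was generated.

    Assumes sender and receiver clocks are synchronized.
    """
    elapsed = display_time - sender_time_stamp   # includes the network latency
    return tuple(p + v * elapsed for p, v in zip(update_pos, update_vel))

# Update generated at t = 10.0, displayed at t = 10.25 after network delay.
position = compensated_position((1000.0, 1000.0), (50.0, 0.0),
                                sender_time_stamp=10.0, display_time=10.25)
```

Without compensation the receiver would draw the vehicle at (1000, 1000); with it, the 0.25 seconds that passed since the update was generated move the estimate 12.5 feet east.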

Smoothing

Reduce discontinuities after updates occur

• “phase in” position updates

• After update arrives

– Use DRM to project next k positions

– Interpolate position of next update

[Figure: true position, DRM estimate of true position, and display updates at points A through E. After the state update message sent at t1 arrives at t2, displayed positions are interpolated toward an extrapolated position used for smoothing.]

Accuracy is reduced to create a more natural display
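One way to phase in an update is sketched below: project the new update k frames ahead with a first order DRM, then interpolate linearly from the currently displayed position to that projected point. The function name, the linear interpolation and the first order projection are assumptions chosen for illustration.

```python
def smoothing_path(displayed_pos, update_pos, update_vel, frame_dt, k):
    """Return k display positions that converge on the DRM track.

    The target is the position the DRM predicts k frames after the update.
    """
    target = tuple(p + v * k * frame_dt for p, v in zip(update_pos, update_vel))
    return [tuple(d + (t - d) * i / k for d, t in zip(displayed_pos, target))
            for i in range(1, k + 1)]

# Display currently shows (1040, 1010); update reports (1050, 1000) moving east.
path = smoothing_path(displayed_pos=(1040.0, 1010.0),
                      update_pos=(1050.0, 1000.0),
                      update_vel=(50.0, 0.0),
                      frame_dt=0.25, k=4)
```

The displayed vehicle moves through (1055.0, 1007.5), (1070.0, 1005.0) and (1085.0, 1002.5) before rejoining the DRM track at (1100.0, 1000.0), so no single frame shows a jump.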


Data Distribution Management

A federate sending a message (e.g., updating its current location) cannot

be expected to explicitly indicate which other federates should receive

the message

Basic Problem: which federates receive messages?

• broadcast update to all federates (SimNet, early versions of DIS)

– does not scale to large numbers of federates

• grid sectors

– OK for distribution based on spatial proximity

• routing spaces (STOW-RTI, HLA)

– generalization of grid sector idea

Content-based addressing: in general, federates must specify:

• Name space: vocabulary used to specify what data is of interest and to

describe the data contained in each message (HLA: routing space)

• Interest expressions: indicate what information a federate wishes to

receive (subset of name space; HLA: subscription region)

• Data description expression: characterizes data contained within

each message (subset of name space; HLA: publication region)

HLA Routing Spaces

• Federate 1 (sensor): subscribe to S1

• Federate 2 (sensor): subscribe to S2

• Federate 3 (target): update region U

[Figure: two-dimensional routing space with both axes running from 0.0 to 1.0, showing subscription regions S1 and S2 and update region U.]

update messages by target are sent to federate 1, but not to federate 2

HLA Data Distribution Management

• N dimensional normalized routing space

• interest expressions are regions in routing space (S1=[0.1,0.5], [0.2,0.5])

• each update associated with an update region in routing space

• a federate receives a message if its subscription region overlaps with

the update region
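The overlap rule is an interval test in each dimension. In the sketch below, S1 is the region given above; the coordinates for U and S2 are hypothetical, chosen only so that U overlaps S1 and not S2. Treating regions that merely touch as overlapping is also an assumption.

```python
def overlaps(region_a, region_b):
    """Regions are lists of (low, high) extents, one per routing space dimension."""
    return all(lo_a <= hi_b and lo_b <= hi_a
               for (lo_a, hi_a), (lo_b, hi_b) in zip(region_a, region_b))

S1 = [(0.1, 0.5), (0.2, 0.5)]      # from the slide
S2 = [(0.05, 0.3), (0.55, 0.75)]   # hypothetical coordinates
U = [(0.35, 0.55), (0.42, 0.48)]   # hypothetical coordinates

federate1_receives = overlaps(S1, U)
federate2_receives = overlaps(S2, U)
```

With these regions federate 1 receives the target's updates and federate 2 does not.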

Implementation: Grid Based

[Figure: the routing space (0.0 to 1.0 on each axis) partitioned into a 5 x 5 grid of cells numbered 1 to 25, row by row starting from the bottom left, with regions S1, S2 and U overlaid; for example, cell 15 maps to the multicast group with id=15.]

partition routing space into grid cells, map each cell to a multicast group

U publishes to 12, 13

S1 subscribes to 6,7,8,11,12,13

S2 subscribes to 11,12,16,17

• subscription region: Join each group overlapping subscription region

• attribute update: send Update to each group overlapping update region

• need additional filtering to avoid unwanted messages, duplicates
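The mapping from a region to grid cells can be sketched as follows, assuming a 5 x 5 grid with cells numbered from 1 row by row starting at the bottom left. The function name is hypothetical, S1 is the region given earlier, and the coordinates for U and S2 are assumptions chosen to be consistent with the cell lists on the slide.

```python
import math

def grid_cells(region, cells_per_dim=5):
    """Return the ids of the grid cells (multicast groups) a 2-D region overlaps."""
    n = cells_per_dim
    (x_lo, x_hi), (y_lo, y_hi) = region
    cols = range(math.floor(x_lo * n), math.ceil(x_hi * n))
    rows = range(math.floor(y_lo * n), math.ceil(y_hi * n))
    return {row * n + col + 1 for row in rows for col in cols}

S1 = [(0.1, 0.5), (0.2, 0.5)]      # from the slides
S2 = [(0.05, 0.3), (0.55, 0.75)]   # hypothetical coordinates
U = [(0.35, 0.55), (0.42, 0.48)]   # hypothetical coordinates

s1_groups = grid_cells(S1)
s2_groups = grid_cells(S2)
u_groups = grid_cells(U)
shared_with_s2 = s2_groups & u_groups
shared_with_s1 = s1_groups & u_groups
```

S2 shares cell 12 with U even though the two regions do not overlap, so federate 2 receives an unwanted message that must be filtered out. S1 shares both cells 12 and 13 with U, so federate 1 receives the same update twice.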

Changing a Subscription Region

[Figure: grid of cells numbered 1 to 40 showing an existing subscription region and a new region. Cells only in the new region are marked “Join group”, cells only in the existing subscription region are marked “Leave group”, and cells in both are marked “no operations issued”.]

Change subscription region:

• issue Leave operations for (cells in old region - cells in new region)

• issue Join operations for (cells in new region - cells in old region)

Observation: each processor can autonomously join/leave groups

whenever its subscription region changes w/o communication.
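The join and leave operations are two set differences. The cell numbers below are hypothetical and the function name is an assumption; the sketch only shows that the result depends on nothing but the federate's own old and new regions.

```python
def subscription_change(old_cells, new_cells):
    """Return (groups_to_join, groups_to_leave) for a changed subscription region."""
    join = new_cells - old_cells
    leave = old_cells - new_cells
    return join, leave

old_cells = {10, 11, 12, 18, 19, 20}   # hypothetical cells of the existing region
new_cells = {11, 12, 13, 19, 20, 21}   # hypothetical cells of the new region

join, leave = subscription_change(old_cells, new_cells)
unchanged = old_cells & new_cells      # no operations issued for these cells
```

Here the federate joins groups 13 and 21, leaves groups 10 and 18, and issues no operations for the four cells common to both regions.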

Another Approach: Region-Based Implementation

[Figure: routing space (0.0 to 1.0 on each axis) with subscription regions S1 and S2 and update region U.]

• Multicast group associated with U

• Membership of U: S1

• Modify U to U’:

– determine subscription regions overlapping with U’

– Modify membership accordingly

• Associate a multicast group with each update region

• Membership: all subscription regions overlapping with update region

• Changing subscription (update) region

– Must match new subscription (update) region against all existing

update (subscription) regions to determine new membership

• No duplicate or extra messages

• Change subscription: typically requires interprocessor communication

• Can use grids (in addition to regions) to reduce matching cost

Distributed Virtual Environments: Summary

• Perhaps the most dominant application of distributed

simulation technology to date

– Human in the loop: training, interactive, multi-player video games

– Hardware in the loop

• Managing interprocessor communication is the key

– Dead reckoning techniques

– Data distribution management

• Real-time execution essential

• Many other issues

– Terrain databases, consistent, dynamic terrain

– Real-time modeling and display of physical phenomena

– Synchronization of hardware clocks

– Human factors

Future Research Directions

The good news...

Parallel and distributed simulation technology is seeing

widespread use in real-world applications and systems

• HLA, standardization (OMG, IEEE 1516)

• Other defense systems

• Interactive games (no time management yet, though!)

Interoperability and Reuse

Reuse is the principal reason distributed simulation technology is seeing widespread use (scalability still important!)

“Sub-objective 1-1: Establish a common high level simulation

architecture to facilitate interoperability [and] reuse of M&S

components.”

- U.S. DoD Modeling and Simulation Master Plan

“critical capability that underpin the IMTR vision [is]... total,

seamless model interoperability: Future models and

simulations will be transparently compatible, able to plug-and-play …”

- Integrated Manufacturing Technology Roadmap

• Enterprise Management

• Circuit design, embedded systems

• Transportation

• Telecommunications

Research Challenges

• Shallow (communication) vs. deep (semantics)

interoperability: How should I develop simulations today,

knowing they may be used tomorrow for entirely different

purposes?

• Multi-resolution modelling

• Smart, adaptable interfaces?

• Time management: A lot of work completed already, but

still not a solved problem!

– Conservative: zero lookahead simulations will continue to be the

common case

– Optimistic: automated optimistic-ization of existing simulations

(e.g., state saving)

– Challenge basic assumptions used in the past: New problems &

solutions, not new solutions to the old problems

• Relationship of interoperability and reuse in simulation to

other domains (e.g., e-commerce)?

Real-Time Systems

Interactive distributed simulations for immersive virtual environments represent fertile new ground for distributed simulation research

The distinction between the virtual and real world is

disappearing

Research Challenges

• Real-time time management

– Time managed, real-time distributed simulations?

– Predictable performance

– Performance tunable (e.g., synchronization overheads)

– Mixed time management (e.g., federations with both analytic and real-time training simulations)

• Distributed simulation over WANs, e.g., the Internet

– Server vs. distributed architectures?

– PDES over unreliable transport and nodes

• Distributed simulations that work!

– High quality, robust systems

• Data Distribution Management

– Implementation architectures

– Managing large numbers of multicast groups

– Join/leave times

– Integration and exploitation of network QoS

Simulation-Based Decision Support

[Figure: live data feeds from the real world system flow into an information system; a real-time advisor, made up of automated and/or interactive analysis tools and a forecasting tool (fast simulation), supports analysts and decision makers.]

• Battle management

• Enterprise control

• Transportation Systems

Research Challenges

• Ultra fast model execution

– Much, much faster-than-real-time execution

– Instantaneous model execution: Spreadsheet-like performance

• Ultra fast execution of multiple runs

• Automated simulation analyses: smart, self-managed

devices and systems?

• Ubiquitous simulation?

Closing Remarks

After years of being largely an academic endeavor,

parallel and distributed simulation technology is

beginning to thrive.

Many major challenges remain to be addressed before

the technology can achieve its fullest potential.

The End