Lecture 10 - BCCN) Goettingen

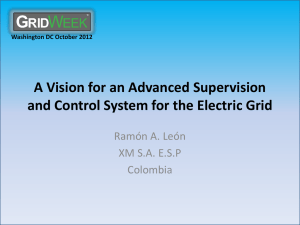

Overview over different methods

[Diagram: map of learning methods, spanning Machine Learning, Classical Conditioning and Synaptic Plasticity.]

• EVALUATIVE FEEDBACK (Rewards): Anticipatory Control of Actions and Prediction of Values. REINFORCEMENT LEARNING (example based): Dynamic Prog. (Bellman Eq.), δ-Rule (supervised L.), Rescorla/Wagner, Eligibility Traces, TD(1), TD(0), Monte Carlo Control, Q-Learning, SARSA, Actor/Critic, Neur. TD-formalism, Neur. TD-Models ("Critic"; technical & Basal Gangl.), Neuronal Reward Systems (Basal Ganglia), Dopamine.

• NON-EVALUATIVE FEEDBACK (Correlations): Correlation of Signals. UN-SUPERVISED LEARNING (correlation based): Hebb-Rule, Differential Hebb-Rule ("slow"), Differential Hebb-Rule ("fast"), LTP (LTD = anti), ISO-Learning, ISO-Model of STDP, STDP, STDP-Models (biophysical & network), Biophys. of Syn. Plasticity, Glutamate, Correlation based Control (non-evaluative), ISO-Control.

Different Types/Classes of Learning

Unsupervised Learning (non-evaluative feedback)

• Trial and Error Learning.

• No Error Signal.

• No influence from a Teacher, Correlation evaluation only.

Reinforcement Learning (evaluative feedback)

• (Classic. & Instrumental) Conditioning, Reward-based Lng.

• “Good-Bad” Error Signals.

• Teacher defines what is good and what is bad.

Supervised Learning (evaluative error-signal feedback)

• Teaching, Coaching, Imitation Learning, Lng. from examples and more.

• Rigorous Error Signals.

• Direct influence from a teacher/teaching signal.

An unsupervised learning rule:

Basic Hebb-Rule: $\frac{dw_i}{dt} = \mu\, u_i\, v$, with $\mu \ll 1$

For Learning: One input, one output.

A reinforcement learning rule (TD-learning):

$w_i \to w_i + \mu\,[r(t+1) + \gamma\, v(t+1) - v(t)]\,\bar{u}(t)$

One input, one output, one reward.

A supervised learning rule (Delta Rule):

$w_i \to w_i - \mu\, \nabla_{w_i} E$

No input, no output, one error-function derivative, where the error function compares input examples with output examples.

Self-organizing maps: unsupervised learning

[Figure: input and the resulting map.]

Neighborhood relationships are usually preserved (+)

Absolute structure depends on initial condition and cannot be predicted (-)


Classical Conditioning

I. Pavlov


Supervised Learning: Example OCR

The influence of the type of learning on speed and autonomy of the learner

[Figure: learning speed plotted against autonomy of the learner. Labels, in the order extracted: Correlation based learning: No teacher; Reinforcement learning, indirect influence; Reinforcement learning, direct influence; Supervised Learning, Teacher; Programming.]

Hebbian learning

When an axon of cell A excites cell B and repeatedly or persistently takes part in firing it, some growth processes or metabolic change takes place in one or both cells so that A's efficiency ... is increased.

Donald Hebb (1949)



Hebbian Learning

[Figure: a single input $u_1$ connects via weight $w_1$ to the output $v$.]

…correlates inputs with outputs by the…

$\frac{dw_1}{dt} = \mu\, v\, u_1$, with $\mu \ll 1$

Vector Notation

Cell Activity: $v = \mathbf{w} \cdot \mathbf{u}$

This is a dot product, where $\mathbf{w}$ is the weight vector and $\mathbf{u}$ the input vector. Strictly, we need to assume that weight changes are slow; otherwise this turns into a differential equation.

Single Input: $\frac{dw_1}{dt} = \mu\, v\, u_1$, with $\mu \ll 1$

Many Inputs: $\frac{d\mathbf{w}}{dt} = \mu\, v\, \mathbf{u}$, with $\mu \ll 1$

As v is a single output, it is a scalar.

Averaging Inputs: $\frac{d\mathbf{w}}{dt} = \mu\, \langle v\, \mathbf{u} \rangle$, with $\mu \ll 1$

We can average over all input patterns and approximate the weight change by this average. Remember, this assumes that weight changes are slow.

If we replace $v$ with $\mathbf{w} \cdot \mathbf{u}$ we can write: $\frac{d\mathbf{w}}{dt} = \mu\, Q \cdot \mathbf{w}$, where $Q = \langle \mathbf{u}\mathbf{u}^T \rangle$ is the input correlation matrix.

Note: Hebb yields an unstable (always growing) weight vector!
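The unbounded growth can be made concrete with a small numerical sketch. It iterates the averaged rule $d\mathbf{w}/dt = \mu\, Q \cdot \mathbf{w}$ with a simple Euler step. The input statistics, the learning rate and the starting weights are illustrative assumptions, not values from the lecture.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed toy inputs: 2-D samples with correlated components.
mixing = np.array([[1.0, 0.0], [0.8, 0.6]])
u_samples = rng.normal(size=(1000, 2)) @ mixing

# Input correlation matrix Q = <u u^T>, averaged over all patterns.
Q = u_samples.T @ u_samples / len(u_samples)

mu = 0.01                      # learning rate, mu << 1
w = np.array([0.1, -0.1])      # arbitrary small starting weights
norms = []
for _ in range(500):
    w = w + mu * Q @ w         # Euler step of dw/dt = mu * Q * w
    norms.append(np.linalg.norm(w))

# Direction of the weight vector relative to the principal eigenvector of Q.
eigvals, eigvecs = np.linalg.eigh(Q)
e1 = eigvecs[:, -1]            # eigh sorts eigenvalues in ascending order
alignment = abs(w @ e1) / np.linalg.norm(w)

print("norm after first / last step:", norms[0], norms[-1])
print("alignment with principal eigenvector:", alignment)
```

In this sketch the norm of the weight vector increases at every step, while its direction turns toward the eigenvector of Q with the largest eigenvalue.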

Synaptic plasticity evoked artificially

Examples of Long term potentiation (LTP) and long term depression (LTD).

LTP: first demonstrated by Bliss and Lomo in 1973. Since then induced in many different ways, usually in slice.

LTD: robustly shown by Dudek and Bear in 1992, in hippocampal slice.

LTP will lead to new synaptic contacts

Conventional LTP = Hebbian Learning

[Figure: synaptic change (%) as a function of the timing of pre- and postsynaptic activity ($t_{Pre}$, $t_{Post}$).]

Symmetrical Weight-change curve

The temporal order of input and output does not play any role

Spike timing dependent plasticity - STDP

Markram et al., 1997

Spike Timing Dependent Plasticity: Temporal Hebbian Learning

[Figure: weight-change curve (Bi & Poo, 2001): synaptic change (%) as a function of the timing of pre- and postsynaptic spikes ($t_{Pre}$, $t_{Post}$).]

Pre precedes Post: Long-term Potentiation

Pre follows Post: Long-term Depression

Back to the Math. We had:

Single Input: $\frac{dw_1}{dt} = \mu\, v\, u_1$, with $\mu \ll 1$

Many Inputs: $\frac{d\mathbf{w}}{dt} = \mu\, v\, \mathbf{u}$, with $\mu \ll 1$

As v is a single output, it is a scalar.

Averaging Inputs: $\frac{d\mathbf{w}}{dt} = \mu\, \langle v\, \mathbf{u} \rangle$, with $\mu \ll 1$

We can average over all input patterns and approximate the weight change by this average. Remember, this assumes that weight changes are slow.

If we replace $v$ with $\mathbf{w} \cdot \mathbf{u}$ we can write: $\frac{d\mathbf{w}}{dt} = \mu\, Q \cdot \mathbf{w}$, where $Q = \langle \mathbf{u}\mathbf{u}^T \rangle$ is the input correlation matrix.

Note: Hebb yields an unstable (always growing) weight vector!

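Covariance Rule(s)

Normally firing rates are only positive, so plain Hebb would yield only LTP. Hence we introduce a threshold to also get LTD:

Output threshold: $\frac{d\mathbf{w}}{dt} = \mu\,(v - \Theta)\,\mathbf{u}$, with $\mu \ll 1$

Input vector threshold: $\frac{d\mathbf{w}}{dt} = \mu\, v\,(\mathbf{u} - \mathbf{\Theta})$, with $\mu \ll 1$

Often the threshold is set to the average activity over some reference time period (training period): $\Theta = \langle v \rangle$ or $\mathbf{\Theta} = \langle \mathbf{u} \rangle$. Together with $v = \mathbf{w} \cdot \mathbf{u}$ we get:

$\frac{d\mathbf{w}}{dt} = \mu\, C \cdot \mathbf{w}$, where $C$ is the covariance matrix of the input (http://en.wikipedia.org/wiki/Covariance_matrix):

$C = \langle (\mathbf{u} - \langle\mathbf{u}\rangle)(\mathbf{u} - \langle\mathbf{u}\rangle)^T \rangle = \langle \mathbf{u}\mathbf{u}^T \rangle - \langle\mathbf{u}\rangle\langle\mathbf{u}\rangle^T = \langle (\mathbf{u} - \langle\mathbf{u}\rangle)\,\mathbf{u}^T \rangle$

The covariance rule can produce LTP without (!) post-synaptic output. This is biologically unrealistic and the BCM rule (Bienenstock, Cooper, Munro) takes care of this.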
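The three ways of writing the covariance matrix can be checked numerically. The following is a minimal sketch on made-up positive "firing rate" data. The sample size, dimensionality and offsets are assumptions chosen only for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)

# Assumed toy data: 2000 input patterns with 3 strictly positive components.
u = rng.random((2000, 3)) + np.array([1.0, 2.0, 3.0])
mean_u = u.mean(axis=0)        # <u>
n = len(u)

# C = <(u - <u>)(u - <u>)^T>
C1 = (u - mean_u).T @ (u - mean_u) / n
# C = <u u^T> - <u><u>^T
C2 = u.T @ u / n - np.outer(mean_u, mean_u)
# C = <(u - <u>) u^T>
C3 = (u - mean_u).T @ u / n

print(np.allclose(C1, C2), np.allclose(C1, C3))
```

All three expressions agree up to floating-point error, because the cross terms with the mean cancel when averaging.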

BCM-Rule

$\frac{d\mathbf{w}}{dt} = \mu\, v\, \mathbf{u}\,(v - \Theta)$, with $\mu \ll 1$

As such this rule is again unstable, but BCM introduces a sliding threshold:

$\frac{d\Theta}{dt} = \nu\,(v^2 - \Theta)$, with $\nu < 1$

Note: the rate of threshold change $\nu$ should be faster than the weight changes ($\mu$), but slower than the presentation of the individual input patterns. This way the weight growth will be over-damped relative to the (weight-induced) activity increase.
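The stabilizing effect of the sliding threshold can be illustrated with a deliberately reduced sketch: one constant input, one weight, Euler steps. The values of $\mu$, $\nu$, the input and the starting weight are assumptions for illustration. A realistic setting would use many inputs and changing patterns.

```python
# Assumed toy setting: a single input u = 1, so v = w * u.
mu, nu = 0.01, 0.1      # nu > mu: threshold adapts faster than the weight
u = 1.0

w_hebb = 0.5            # weight under plain Hebb
w_bcm = 0.5             # weight under BCM
theta = 0.0             # sliding threshold

for _ in range(5000):
    # Plain Hebb: dw/dt = mu * v * u
    w_hebb += mu * (w_hebb * u) * u

    # BCM: dw/dt = mu * v * u * (v - theta), dtheta/dt = nu * (v^2 - theta)
    v = w_bcm * u
    w_bcm += mu * v * u * (v - theta)
    theta += nu * (v ** 2 - theta)

print("plain Hebb weight:", w_hebb)
print("BCM weight and threshold:", w_bcm, theta)
```

In this reduced setting the plain Hebbian weight grows exponentially, while the BCM weight settles where $v = \Theta = v^2$, that is at $v = 1$.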

Problem: Hebbian Learning can lead to unlimited weight growth.

Solution: Weight normalization

a) subtractive (subtract the mean change of all weights from each individual weight).

b) multiplicative (multiply each weight by a gradually decreasing factor).

Evidence for weight normalization: Reduced weight increase as soon as weights are already big (Bi and Poo, 1998, J. Neurosci.)
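Both normalization schemes can be sketched in a few lines. This is an illustrative sketch, not the lecture's implementation: the multiplicative variant here simply rescales the weight vector back to its previous length after each Hebbian step, which is one common way to realize a shrinking factor. Inputs, learning rate and starting weights are assumptions.

```python
import numpy as np

def hebb_change(w, u, mu):
    """Raw Hebbian weight change mu * v * u with v = w . u."""
    v = w @ u
    return mu * v * u

def subtractive_step(w, u, mu):
    """Subtract the mean change of all weights from each individual change."""
    dw = hebb_change(w, u, mu)
    return w + dw - dw.mean()

def multiplicative_step(w, u, mu):
    """Apply the Hebbian change, then rescale to the previous vector length."""
    w_new = w + hebb_change(w, u, mu)
    return w_new * (np.linalg.norm(w) / np.linalg.norm(w_new))

rng = np.random.default_rng(0)
inputs = rng.random((500, 4))          # assumed toy input patterns

w_sub = np.full(4, 0.25)               # weights sum to 1
w_mul = np.full(4, 0.5)                # weight vector has length 1
for u in inputs:
    w_sub = subtractive_step(w_sub, u, 0.01)
    w_mul = multiplicative_step(w_mul, u, 0.01)

print("sum of weights (subtractive):", w_sub.sum())
print("length of weight vector (multiplicative):", np.linalg.norm(w_mul))
```

The subtractive scheme keeps the sum of the weights constant, the multiplicative scheme keeps the length of the weight vector constant.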

Examples of Applications

• Kohonen (1984). Speech recognition - a map of phonemes in the Finnish language

• Goodhill (1993) proposed a model for the development of retinotopy and ocular dominance, based on Kohonen Maps (SOM)

• Angéniol et al. (1988) – travelling salesman problem (an optimization problem)

• Kohonen (1990) – learning vector quantization (pattern classification problem)

• Ritter & Kohonen (1989) – semantic maps

[Figure panels: OD (ocular dominance) and ORI (orientation).]

Differential Hebbian Learning of Sequences

Learning to act in response to sequences of sensor events


History of the Concept of Temporally Asymmetrical Learning: Classical Conditioning

I. Pavlov

Correlating two stimuli which are shifted with respect to each other in time.

Pavlov's Dog: "Bell comes earlier than Food"

This requires the system to remember the stimuli.

Eligibility Trace: A synapse remains "eligible" for modification for some time after it was active (Hull 1938, then a still abstract concept).

Classical Conditioning: Eligibility Traces

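[Figure: the Conditioned Stimulus (Bell) passes through a Stimulus Trace E and a plastic weight $w_1$ (changed by $\Delta w_1$); the Unconditioned Stimulus (Food) enters with fixed weight $w_0 = 1$; both are summed ($\Sigma$) to give the Response.]

The first stimulus needs to be "remembered" in the system.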

Eligibility Traces

Note: There are vastly different time-scales for (Pavlov's) behavioural experiments, typically up to 4 seconds, as compared to STDP at neurons, typically 40-60 milliseconds (max.).

Defining the Trace

In general there are many ways to do this, but usually one chooses a trace that looks biologically realistic and also allows for some analytical calculations.

$h(t) = \begin{cases} h_k(t) & t \ge 0 \\ 0 & t < 0 \end{cases}$

EPSP-like functions:

• $\alpha$-function: $h_k(t) = t\,e^{-at}$

• Damped sine wave: $h_k(t) = \frac{1}{b}\sin(bt)\,e^{-at}$. Shows an oscillation.

• Double exponential: $h_k(t) = \frac{1}{\eta}\left(e^{-at} - e^{-bt}\right)$. This one is the easiest to handle analytically and is therefore often used.
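The three trace kernels can be written down directly. The sketch below uses assumed constants ($a = 0.1$, $b = 0.3$, $\eta = 1$, with time in arbitrary units) purely for illustration; `causal` is a hypothetical helper that enforces $h(t) = 0$ for $t < 0$.

```python
import numpy as np

def causal(h_k):
    """Wrap h_k so that the resulting trace is zero for t < 0."""
    def h(t):
        t = np.asarray(t, dtype=float)
        # Evaluate h_k only on non-negative arguments to avoid overflow.
        return np.where(t >= 0, h_k(np.maximum(t, 0.0)), 0.0)
    return h

a, b, eta = 0.1, 0.3, 1.0   # assumed constants, not taken from the lecture

alpha_fn = causal(lambda t: t * np.exp(-a * t))
damped_sine = causal(lambda t: np.sin(b * t) * np.exp(-a * t) / b)
double_exp = causal(lambda t: (np.exp(-a * t) - np.exp(-b * t)) / eta)

t = np.arange(-20.0, 100.0, 0.01)
t_peak_alpha = t[np.argmax(alpha_fn(t))]      # analytically at t = 1/a
t_peak_double = t[np.argmax(double_exp(t))]   # analytically ln(b/a)/(b-a)

print("alpha-function peak at t =", t_peak_alpha)
print("double-exponential peak at t =", t_peak_double)
print("damped sine goes negative:", damped_sine(t).min() < 0)
```

With these constants the α-function and the double exponential are single "humps", while the damped sine changes sign.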

Overview over different methods

[Overview diagram repeated, with an added annotation that survives only in part: "Mathematical formulation of learning rules is ... are much different."]

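Differential Hebb Learning Rule

$\frac{d}{dt} w_i(t) = \mu\, u_i(t)\, v'(t)$

Simpler Notation: x = Input, u = Traced Input

[Figure: the early input $x_i$ ("Bell") is turned into the traced input $u_i$ and weighted by $w_i$; the late input $x_0$ ("Food") is turned into $u_0$; the weighted signals are summed ($\Sigma$) to give the output $v$.]

Convolution, used to define the traced input:

$h(x) = \int f(u)\, g(x - u)\, du = f(x) * g(x) = g(x) * f(x)$

Correlation, used to calculate weight growth:

$h(x) = \int f(u)\, g(u - x)\, du$, which in general changes when $f$ and $g$ are swapped.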

Differential Hebbian Learning

$\frac{d}{dt} w_i(t) = \mu\, u_i(t)\, v'(t)$

where $u_i(t)$ is the filtered input and $v'(t)$ is the derivative of the output.

Output: $v(t) = \sum_i w_i(t)\, u_i(t)$

Produces an asymmetric weight change curve $\Delta w(T)$ (if the filters h produce unimodal "humps").
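The asymmetric weight change curve can be reproduced with a short numerical sketch. It assumes a double-exponential filter with constants $a = 0.1$, $b = 0.3$, a plastic input pulse at time $t_0$, a second input pulse at $t_0 + T$ that alone drives the output ($w_0 = 1$, $w_1 \approx 0$), and it integrates $\mu\, u_1(t)\, v'(t)$ over time. All constants and the function names are illustrative assumptions.

```python
import numpy as np

a, b = 0.1, 0.3                 # assumed filter constants
dt = 0.1
t = np.arange(0.0, 400.0, dt)
t0 = 150.0                      # time of the pulse on the plastic input x1

def h(s):
    """Double-exponential trace, zero for s < 0."""
    s = np.maximum(s, 0.0)
    return np.exp(-a * s) - np.exp(-b * s)

def delta_w(T, mu=1.0):
    """Total weight change for a pulse on x0 arriving T after the pulse on x1."""
    u1 = h(t - t0)                      # filtered plastic input
    v = h(t - t0 - T)                   # output, driven by x0 only (w0 = 1)
    v_prime = np.gradient(v, dt)        # numerical derivative of the output
    return mu * np.sum(u1 * v_prime) * dt

T_values = np.arange(-50.0, 51.0, 10.0)
curve = np.array([delta_w(T) for T in T_values])

for T, dw in zip(T_values, curve):
    print(f"T = {T:6.1f}   delta_w = {dw:+.4f}")
```

For this particular filter the integral can be solved by hand: for $T > 0$ it equals $\frac{b-a}{2(a+b)}\,(e^{-aT} - e^{-bT})$, and the curve is antisymmetric in $T$. The weight grows when the plastic input comes first and shrinks when it comes second.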

Conventional LTP

[Figure: synaptic change (%) as a function of the timing of pre- and postsynaptic activity ($t_{Pre}$, $t_{Post}$).]

Symmetrical Weight-change curve

The temporal order of input and output does not play any role


Spike-timing-dependent plasticity (STDP): Some vague shape similarity

[Figure: weight-change curve (Bi & Poo, 2001): synaptic change (%) as a function of $T = t_{Post} - t_{Pre}$ in ms.]

Pre precedes Post: Long-term Potentiation

Pre follows Post: Long-term Depression


The biophysical equivalent of Hebb's postulate

[Figure: a plastic synapse (NMDA/AMPA) receives the presynaptic signal (Glu); on the postsynaptic side there is a source of depolarization.]

Pre-Post Correlation, but why is this needed?

Plasticity is mainly mediated by so-called N-methyl-D-aspartate (NMDA) channels. These channels respond to glutamate as their transmitter and they are voltage dependent.

Biophysical Model: Structure

[Figure: input x drives an NMDA synapse on a cell with output v.]

Source of depolarization:

1) Any other drive (AMPA or NMDA)

2) Back-propagating spike

Hence NMDA synapses (channels) do require a (Hebbian) correlation between pre- and post-synaptic activity!

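Local Events at the Synapse

Current sources "under" the synapse:

• Synaptic current

• Currents from all parts of the dendritic tree

• Influence of a back-propagating spike

[Figure labels: input $x_1$, traced input $u_1$, output $v$, currents $I_{synaptic}$, $I_{BP}$ and $I_{Dendritic}$, local summation ($\Sigma$ Local) and global summation ($\Sigma$ Global).]

Membrane potential:

$C \frac{d}{dt} V(t) = \sum_i (w_i + \Delta w_i)\, g_i(t)\,(E_i - V) + \frac{V_{rest} - V(t)}{R} + I_{dep}$

Here $w_i + \Delta w_i$ is the weight, $g_i(t)\,(E_i - V)$ the synaptic input, and $I_{dep}$ the depolarization source.

[Figure: pre-synaptic spike and the resulting NMDA conductance $g_{NMDA}$ [nS].]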

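On "Eligibility Traces"

[Figure: time course of the NMDA conductance $g_{NMDA}$ over t [ms], shown next to the ISO-Learning circuit: inputs $x_1$ and $x_0$ are filtered by $h$, weighted by $w_1$ and $w_0$, and summed ($\Sigma$) into the output $v$; its derivative $v'$ is multiplied (X) with the filtered input.]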

Model structure

• Dendritic compartment

• Plastic synapse with NMDA channels: source of Ca2+ influx and coincidence detector

• Source of depolarization:

1. Back-propagating spike

2. Local dendritic spike

[Figure: plastic synapse (NMDA/AMPA) with conductance $g_{NMDA/AMPA}$ and a source of depolarization (BP spike or dendritic spike).]

$\frac{dV}{dt} \sim \sum_i g_i(t)\,(E_i - V) + I_{dep}$

Plasticity Rule (Differential Hebb)

Instantaneous weight change:

$\frac{d}{dt} w(t) = \mu\, c_N(t)\, F'(t)$

Here $c_N(t)$ is the presynaptic influence (the glutamate effect on NMDA channels) and $F'(t)$ is the postsynaptic influence.

Pre-synaptic influence, normalized NMDA conductance:

$c_N = \frac{e^{-t/\tau_1} - e^{-t/\tau_2}}{1 + \kappa\,[\mathrm{Mg}^{2+}]\, e^{-\gamma V}}$

[Figure: $g_{NMDA}$ [nS] over t [ms], from 0 to 80 ms.]

NMDA channels are instrumental for LTP and LTD induction

(Malenka and Nicoll, 1999; Dudek and Bear, 1992)

Depolarizing potentials in the dendritic tree

[Figure: membrane potential V [mV] over t [ms] for two kinds of depolarizing potentials.]

Dendritic spikes (Larkum et al., 2001; Golding et al., 2002; Häusser and Mel, 2003)

Backpropagating spikes (Stuart et al., 1997)

$\frac{dV}{dt} \sim \sum_i g_i(t)\,(E_i - V) + I_{dep}$

$\frac{d}{dt} w(t) = \mu\, c_N(t)\, F'(t)$

Postsynaptic influence: for F we use a lowpass-filtered ("slow") version of a back-propagating or a dendritic spike.

BP and D-Spikes

[Figure: panels of membrane potential V [mV] over t [ms] for back-propagating (BP) and dendritic (D) spikes, on time axes of 20 ms, 80 ms and 150 ms.]

Weight Change Curves

Source of Depolarization: Back-Propagating Spikes

[Figure: for each back-propagating spike shape (V [mV] over t [ms]), shown together with the NMDAr activation, the resulting weight change curve $\Delta w$ over $T$ [ms] from -40 to 40 ms, with $T = t_{Post} - t_{Pre}$.]

CLOSED LOOP LEARNING

• Learning to Act (to produce appropriate behavior)

• Instrumental (Operant) Conditioning

[Figure: Pavlov (1927): temporal sequence of Bell and Food, with Salivation as the response. Closed loop between sensing and behaving: conditioned input (Sensor 2), adaptable neuron, environment (Env.).]

Instrumental/Operant

Conditioning

B.F. Skinner

(1904-1990)

Behaviorism

“All we need to know in order to describe and explain behavior is this: actions followed by good outcomes are likely to recur, and actions followed by bad outcomes are less likely to recur.” (Skinner, 1953)

Skinner invented the type of experiments called operant conditioning.

Operant behavior: occurs without an observable external stimulus.

Operates on the organism’s environment.

The behavior is instrumental in securing a stimulus more representative of everyday learning.

Skinner Box

OPERANT CONDITIONING TECHNIQUES

• POSITIVE REINFORCEMENT = increasing a behavior by administering a reward

• NEGATIVE REINFORCEMENT = increasing a behavior by removing an aversive stimulus when a behavior occurs

• PUNISHMENT = decreasing a behavior by administering an aversive stimulus following a behavior OR by removing a positive stimulus

• EXTINCTION = decreasing a behavior by not rewarding it


How to assure behavioral & learning convergence?

This is achieved by starting with a stable reflex-like action and learning to supersede it by an anticipatory action.

Remove before being hit!

Reflex Only (compare to an electronic closed-loop controller!)

[Figure: closed control loop with Set-Point, input $X_0$, Controller, Control Signals, Controlled System, Disturbances and Feedback.]

Think of a thermostat!

This structure assures initial (behavioral) stability ("homeostasis").

Robot Application

[Figure: the early input ("Vision") and the late input ("Bump") are weighted (w) and summed ($\Sigma$).]

Learning Goal:

Correlate the vision signals with the touch signals and navigate without collisions.

Initially built-in behavior: Retraction reaction whenever an obstacle is touched.

Robot Example

What has happened to the system during learning?

The primary reflex reaction has effectively been eliminated and replaced by an anticipatory action.

[Figure: the same control loop (Set-Point, Controller, Control Signals, Controlled System, Disturbances, Feedback), now with two controller inputs: the early signal $X_1$ and the late signal $X_0$.]

Overview over different methods – Supervised Learning


Supervised learning methods are mostly non-neuronal and will therefore not be discussed here.

Reinforcement Learning (RL)

Learning from rewards (and punishments)

Learning to assess the value of states.

Learning goal directed behavior.

RL has been developed rather independently from two different fields:

1) Dynamic Programming and Machine Learning (Bellman Equation).

2) Psychology (Classical Conditioning) and later Neuroscience (Dopamine System in the brain)

Back to Classical Conditioning

U(C)S = Unconditioned Stimulus

U(C)R = Unconditioned Response

CS = Conditioned Stimulus

CR = Conditioned Response

I. Pavlov

Less “classical” but also Conditioning !

(Example from a car advertisement)

Learning the association

CS → U(C)R

Porsche → Good Feeling

Overview over different methods – Reinforcement Learning

[Overview diagram repeated, with the marker "You are here!".]

[Overview diagram repeated, with an additional marker: "And later also here!"]

Notation

US = r, R = "Reward"

CS = s, u = Stimulus = "State" (1)

CR = v, V = (Strength of the) Expected Reward = "Value"

UR = --- (not required in mathematical formalisms of RL)

Weight = w = weight used for calculating the value; e.g. $v = w\,u$

Action = a = "Action"

Policy = π = "Policy"

(1) Note: The notion of a "state" really only makes sense as soon as there is more than one state.

A note on “Value” and “Reward Expectation”

If you are at a certain state then you would value this state according to how much reward you can expect when moving on from this state to the end-point of your trial.

Hence:

Value = Expected Reward !

More accurately:

Value = Expected cumulative future discounted reward.

(for this, see later!)
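As a small preview of what "cumulative future discounted reward" means, the sketch below sums a reward sequence in which the reward k steps ahead is weighted by $\gamma^k$. The function name, the example rewards and the discount factor $\gamma = 0.9$ are assumptions for illustration, and a single known reward sequence stands in for the expectation.

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**k * rewards[k], computed backwards from the end of the trial."""
    value = 0.0
    for r in reversed(rewards):
        value = r + gamma * value
    return value

# Assumed example: a reward of 1 arrives two steps after the current state.
rewards = [0.0, 0.0, 1.0]
value = discounted_return(rewards, gamma=0.9)
print(value)
```

The further away the reward lies, the less it contributes to the value of the current state.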

Types of Rules

1) Rescorla-Wagner Rule: Allows for explaining several types of conditioning experiments.

2) TD-rule (TD-algorithm) allows measuring the value of states and allows accumulating rewards. Thereby it generalizes the Resc.-Wagner rule.

3) The TD-algorithm can be extended to allow measuring the value of actions and thereby to control behavior, either by way of a) Q or SARSA learning or with b) Actor-Critic Architectures


Rescorla-Wagner Rule

Conditioning paradigms (● denotes: no reward):

Pavlovian: Train $u \to r$. Result $u \to v = \max$.

Extinction: Pre-Train $u \to r$. Train $u \to$ ●. Result $u \to v = 0$.

Partial: Train $u \to r$ and $u \to$ ●. Result $u \to v < \max$.

We define: $v = w u$, with binary $u = 1$ or $u = 0$, and $w \to w + \mu \delta u$ with $\delta = r - v$.

The associability between stimulus u and reward r is represented by the learning rate $\mu$.

This learning rule minimizes the average squared error between the actual reward r and the prediction v, hence $\min \langle (r - v)^2 \rangle$.

We realize that $\delta$ is the prediction error.

[Figure: three panels labelled Pavlovian, Extinction and Partial.]

Stimulus u is paired with r=1 in 100% of the discrete “epochs” for Pavlovian and in 50% of the cases for Partial.
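The three paradigms can be tried out numerically. The following is a minimal sketch of the rule as defined above; the learning rate of 0.1, the 200 epochs and the random seed are assumptions chosen for illustration, not values from the lecture.

```python
import random

def rescorla_wagner(rewards, mu=0.1, w=0.0):
    """Apply w -> w + mu * delta * u with delta = r - v, v = w * u and u = 1 in every epoch."""
    history = []
    for r in rewards:
        u = 1.0
        v = w * u            # prediction
        delta = r - v        # prediction error
        w += mu * delta * u
        history.append(w)
    return history

random.seed(0)               # assumed seed, only for reproducibility
n = 200                      # assumed number of epochs

# Pavlovian: stimulus paired with r = 1 in 100% of the epochs.
pavlovian = rescorla_wagner([1.0] * n)
# Extinction: start from the trained weight, reward is now omitted.
extinction = rescorla_wagner([0.0] * n, w=pavlovian[-1])
# Partial: reward in 50% of the epochs.
partial = rescorla_wagner([1.0 if random.random() < 0.5 else 0.0 for _ in range(n)])

partial_mean = sum(partial[-100:]) / 100
print(f"Pavlovian w = {pavlovian[-1]:.3f}")
print(f"Extinction w = {extinction[-1]:.3f}")
print(f"Partial w (mean of last 100 epochs) = {partial_mean:.3f}")
```

With these settings the Pavlovian weight approaches the maximum, the extinction weight decays towards zero, and the partial weight fluctuates around an intermediate value.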

Rescorla-Wagner Rule, Vector Form for Multiple Stimuli

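We define: $v = \mathbf{w} \cdot \mathbf{u}$, and $\mathbf{w} \to \mathbf{w} + \mu \delta \mathbf{u}$ with $\delta = r - v$,

where we again minimize the prediction error $\delta$ (in the mean-squared sense).

Blocking: Pre-Train $u_1 \to r$. Train $u_1 + u_2 \to r$. Result $u_1 \to v = \max$, $u_2 \to v = 0$.

For Blocking: The association formed during pre-training leads to $\delta = 0$. As $w_2$ starts with zero, the expected reward $v = w_1 u_1 + w_2 u_2$ remains at r. This keeps $\delta = 0$ and the new association with $u_2$ cannot be learned.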
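Blocking can be checked with a short sketch of the vector rule. The helper name `rw_vector`, the learning rate and the trial counts are assumptions for illustration; a control run without pre-training is added for comparison.

```python
def rw_vector(trials, mu=0.1, w=None):
    """Vector Rescorla-Wagner rule: v = w.u, delta = r - v, w -> w + mu * delta * u."""
    w = list(w) if w is not None else [0.0, 0.0]
    for u, r in trials:
        v = sum(wi * ui for wi, ui in zip(w, u))
        delta = r - v
        w = [wi + mu * delta * ui for wi, ui in zip(w, u)]
    return w

# Pre-training: u1 alone predicts the reward.
w_pre = rw_vector([((1, 0), 1.0)] * 200)
# Training: u1 + u2 together with the reward, starting from the pre-trained weights.
w_blocked = rw_vector([((1, 1), 1.0)] * 200, w=w_pre)
# Control (not on the slide): the same training without pre-training.
w_control = rw_vector([((1, 1), 1.0)] * 200)

print("blocked:", [round(x, 3) for x in w_blocked])
print("control:", [round(x, 3) for x in w_control])
```

After pre-training, the weight of the second stimulus stays at practically zero, whereas in the control run both stimuli share the prediction.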

Rescorla-Wagner Rule, Vector Form for Multiple Stimuli

Inhibitory: Train $u_1 + u_2 \to$ ●, $u_1 \to r$. Result $u_1 \to v = \max$, $u_2 \to v < 0$.

Inhibitory Conditioning: Trials presenting one stimulus together with the reward alternate with trials presenting a pair of stimuli without the reward. In this case the second stimulus actually predicts the ABSENCE of the reward (negative v).

Trials in which the first stimulus is presented together with the reward lead to $w_1 > 0$.

In trials where both stimuli are present the net prediction will be $v = w_1 u_1 + w_2 u_2 = 0$.

As $u_{1,2} = 1$ (or zero) and $w_1 > 0$, we get $w_2 < 0$ and, consequentially, $v(u_2) < 0$.
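A minimal sketch of inhibitory conditioning, assuming strictly alternating trial types, a learning rate of 0.1 and 500 repetitions (all illustrative choices):

```python
def rw_step(w, u, r, mu=0.1):
    """One Rescorla-Wagner step: delta = r - w.u, w -> w + mu * delta * u."""
    v = sum(wi * ui for wi, ui in zip(w, u))
    delta = r - v
    return [wi + mu * delta * ui for wi, ui in zip(w, u)]

w = [0.0, 0.0]
for _ in range(500):
    w = rw_step(w, (1, 0), 1.0)   # u1 alone, reward present
    w = rw_step(w, (1, 1), 0.0)   # u1 + u2, reward missing

v_u2_alone = w[1] * 1.0           # prediction when only u2 is shown
print("w1 =", round(w[0], 3), "w2 =", round(w[1], 3))
```

The first weight settles at a positive value and the second at a negative one, so the second stimulus on its own yields a negative prediction.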

Rescorla-Wagner Rule, Vector Form for Multiple Stimuli

Overshadow: Train $u_1 + u_2 \to r$. Result $u_1 \to v < \max$, $u_2 \to v < \max$.

Overshadowing: Always presenting two stimuli together with the reward will lead to a “sharing” of the reward prediction between them. We get $v = w_1 u_1 + w_2 u_2 = r$. Using different learning rates $\mu$ will lead to differently strong growth of $w_{1,2}$ and represents the often observed different saliency of the two stimuli.
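The sharing of the prediction can be illustrated with one learning rate per stimulus. The function name `rw_overshadow` and the concrete rates are assumptions for this sketch.

```python
def rw_overshadow(mu, r=1.0, n_trials=300):
    """Both stimuli are always present (u1 = u2 = 1); mu holds one learning rate per stimulus."""
    w = [0.0, 0.0]
    u = (1.0, 1.0)
    for _ in range(n_trials):
        v = w[0] * u[0] + w[1] * u[1]
        delta = r - v
        w = [w[i] + mu[i] * delta * u[i] for i in range(2)]
    return w

w_equal = rw_overshadow((0.1, 0.1))      # equally salient stimuli
w_salient = rw_overshadow((0.15, 0.05))  # u1 assumed three times more salient than u2

print("equal rates:    ", [round(x, 3) for x in w_equal])
print("different rates:", [round(x, 3) for x in w_salient])
```

In both runs the weights sum to r, but with different rates the more salient stimulus takes the larger share.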

Rescorla-Wagner Rule, Vector Form for Multiple Stimuli

Secondary: Pre-Train $u_1 \to r$. Train $u_2 \to u_1$. Result $u_2 \to v = \max$.

Secondary Conditioning reflects the “replacement” of one stimulus by a new one for the prediction of a reward.

As we have seen the Rescorla-Wagner Rule is very simple but still able to represent many of the basic findings of diverse conditioning experiments.

Secondary conditioning, however, CANNOT be captured.

Predicting Future Reward

The Rescorla-Wagner Rule cannot deal with the sequentiality of stimuli (required to deal with Secondary Conditioning). As a consequence it treats this case similarly to Inhibitory Conditioning, which leads to a negative $w_2$.
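This failure can be reproduced in a small sketch. It assumes that, because the rule has no notion of time within a trial, the secondary-conditioning trial (second stimulus followed by the first, no reward) is presented to the rule as both stimuli being active with r = 0. Learning rate and trial counts are illustrative.

```python
def rw_step(w, u, r, mu=0.1):
    """One Rescorla-Wagner step: delta = r - w.u, w -> w + mu * delta * u."""
    v = sum(wi * ui for wi, ui in zip(w, u))
    delta = r - v
    return [wi + mu * delta * ui for wi, ui in zip(w, u)]

w = [0.0, 0.0]
for _ in range(200):
    w = rw_step(w, (1, 0), 1.0)   # pre-training: u1 -> r
for _ in range(200):
    w = rw_step(w, (1, 1), 0.0)   # training: u2 -> u1, seen by the rule as u1 + u2 without reward

print("w1 =", round(w[0], 3), "w2 =", round(w[1], 3))
```

Instead of acquiring a positive prediction, the second stimulus ends up with a negative weight.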

Animals can predict to some degree such sequences and form the correct associations. For this we need algorithms that keep track of time.

Here we do this by way of states that are subsequently visited and evaluated.

Prediction and Control

The goal of RL is two-fold:

1) To predict the value of states (exploring the state space following a policy) – Prediction Problem.

2) To change the policy towards finding the optimal policy – Control Problem.

Terminology (again):

• State,

• Action,

• Reward,

• Value,

• Policy

Markov Decision Problems (MDPs)

[Figure: example MDP drawn as a graph of states s (numbered 1 to 16, including terminal states), actions a (a1, a2, ..., a14, a15) and rewards r (r1, r2).]

If the future of the system depends always only on the current state and action then the system is said to be “Markovian”.

What does an RL-agent do ?

An RL-agent explores the state space trying to accumulate as much reward as possible. It follows a behavioral policy performing actions (which usually will lead the agent from one state to the next).

For the Prediction Problem: It updates the value of each given state by assessing how much future (!) reward can be obtained when moving onwards from this state (State Space). It does not change the policy, rather it evaluates it (Policy Evaluation).

[Figure: grid world used for policy evaluation. Initially value = 0.0 everywhere; reward R=1. Policy: p(N) = 0.5, p(S) = 0.125, p(W) = 0.25, p(E) = 0.125. Example state values shown after evaluation: 0.9, 0.8, ..., 0.1; possible start locations are marked with x.]

Policy Evaluation gives the values of states.
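As a rough illustration of policy evaluation with the policy probabilities from the slide, the sketch below evaluates a small grid world. The grid size, the position of the rewarded terminal cell, the discount factor and the rule that walls leave the agent in place are all assumptions. It uses the Bellman expectation backup (dynamic programming) rather than the sample-based methods discussed later.

```python
POLICY = {"N": 0.5, "S": 0.125, "W": 0.25, "E": 0.125}   # from the slide
MOVES = {"N": (-1, 0), "S": (1, 0), "W": (0, -1), "E": (0, 1)}
ROWS, COLS = 4, 4     # assumed grid size
GOAL = (0, 0)         # assumed terminal cell; entering it pays R = 1
GAMMA = 0.9           # assumed discount factor

def step(state, action):
    """Return (next_state, reward); moving into a wall leaves the agent in place."""
    dr, dc = MOVES[action]
    r, c = state[0] + dr, state[1] + dc
    if not (0 <= r < ROWS and 0 <= c < COLS):
        r, c = state
    nxt = (r, c)
    return nxt, (1.0 if nxt == GOAL else 0.0)

def evaluate_policy(tol=1e-10):
    """Sweep over all states until the values stop changing; the policy itself is never altered."""
    V = {(r, c): 0.0 for r in range(ROWS) for c in range(COLS)}   # value = 0.0 everywhere
    while True:
        biggest_change = 0.0
        for s in V:
            if s == GOAL:
                continue                      # terminal state keeps value 0
            new_v = 0.0
            for a, p in POLICY.items():
                nxt, reward = step(s, a)
                new_v += p * (reward + GAMMA * V[nxt])
            biggest_change = max(biggest_change, abs(new_v - V[s]))
            V[s] = new_v
        if biggest_change < tol:
            return V

V = evaluate_policy()
for r in range(ROWS):
    print(" ".join(f"{V[(r, c)]:.2f}" for c in range(COLS)))
```

States close to the rewarded cell receive higher values than distant ones, which is the qualitative picture of the slide.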

For the Control Problem: It updates the value of each given action at a given state by assessing how much future reward can be obtained when performing this action at that state and all following actions at the following states moving onwards (State-Action Space, which is larger than the State Space).

Guess: Will we have to evaluate ALL states and actions onwards?

What does an RL-agent do ?

Exploration – Exploitation Dilemma: The agent wants to get as much cumulative reward (also often called return ) as possible. For this it should always perform the most rewarding action “exploiting” its (learned) knowledge of the state space. This way it might however miss an action which leads (a bit further on) to a much more rewarding path.

Hence the agent must also “explore” into unknown parts of the state space. The agent must, thus, balance its policy to include exploitation and exploration.

Policies

1) Greedy Policy: The agent always exploits and selects the most rewarding action. This is sub-optimal as the agent never finds better new paths.

Policies

2) ε-Greedy Policy: With a small probability ε the agent will choose a non-optimal action. (*) All non-optimal actions are chosen with equal probability. Learning can take very long, as it is not known how big ε should be.

One can also “anneal” the system by gradually lowering ε so that the agent becomes more and more greedy.
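A minimal sketch of ε-greedy selection with annealing. The function names, the exponential annealing schedule and its parameters are assumptions; the slide only says that ε is lowered gradually.

```python
import random

def epsilon_greedy(q_values, epsilon, rng=random):
    """With probability epsilon pick a non-greedy action uniformly, otherwise the greedy one."""
    best = max(range(len(q_values)), key=lambda a: q_values[a])
    others = [a for a in range(len(q_values)) if a != best]
    if others and rng.random() < epsilon:
        return rng.choice(others)     # all non-optimal actions equally likely
    return best

def annealed_epsilon(step, eps_start=0.5, decay=0.99):
    """Assumed schedule: epsilon shrinks by a constant factor per step."""
    return eps_start * decay ** step

q = [1.0, 5.0, 2.0]                   # hypothetical action values
for step in (0, 100, 500):
    eps = annealed_epsilon(step)
    print(step, round(eps, 4), epsilon_greedy(q, eps))
```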

3) Softmax Policy: ε-greedy can be problematic because of (*). Softmax ranks the actions according to their values and chooses roughly following the ranking, using for example:

$$P(a) = \frac{\exp(Q_a / T)}{\sum_{b=1}^{n} \exp(Q_b / T)}$$

where $Q_a$ is the value of the action a currently being evaluated and T is a temperature parameter. For large T all actions have approximately equal probability to get selected.
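The effect of the temperature can be seen in a few lines. The action values and temperatures below are arbitrary; subtracting the maximum before exponentiating is a standard numerical safeguard that leaves the probabilities unchanged.

```python
import math

def softmax_probabilities(q_values, temperature):
    """P(a) = exp(Q_a / T) / sum_b exp(Q_b / T)."""
    m = max(q_values)   # shift for numerical stability, cancels in the ratio
    exps = [math.exp((q - m) / temperature) for q in q_values]
    total = sum(exps)
    return [e / total for e in exps]

q = [1.0, 2.0, 3.0]     # hypothetical action values
cold = softmax_probabilities(q, temperature=0.1)
hot = softmax_probabilities(q, temperature=100.0)
print("T = 0.1:", [round(p, 4) for p in cold])
print("T = 100:", [round(p, 4) for p in hot])
```

At low temperature the choice is nearly greedy; at high temperature the probabilities are almost uniform.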

Overview over different methods – Reinforcement Learning

[Figure: overview map of the different learning methods, as on the first slide, with a "You are here!" marker.]

Towards TD-learning – Pictorial View

In the following slides we will treat “Policy evaluation”: We define some given policy and want to evaluate the state space.

We are at the moment still not interested in evaluating actions or in improving policies.

Back to the question: To get the value of a given state, will we have to evaluate ALL states and actions onwards?

There is no unique answer to this! Different methods exist which assign the value of a state by using differently many (weighted) values of subsequent states.

We will discuss a few but concentrate on the most commonly used TD-algorithm(s).

Temporal Difference (TD) Learning

Formalising RL: Policy Evaluation

with goal to find the optimal value function of the state space

We consider a sequence $s_t, r_{t+1}, s_{t+1}, r_{t+2}, \ldots, r_T, s_T$. Note, rewards occur downstream (in the future) from a visited state. Thus, $r_{t+1}$ is the next future reward which can be reached starting from state $s_t$. The complete return $R_t$ to be expected in the future from state $s_t$ is, thus, given by:

$$R_t = r_{t+1} + \gamma r_{t+2} + \ldots + \gamma^{T-t-1} r_T = \sum_{k=0}^{T-t-1} \gamma^k r_{t+k+1}$$

where $\gamma \le 1$ is a discount factor. This accounts for the fact that rewards in the far future should be valued less.
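As a small worked example of the complete return, the sketch below sums a reward sequence backwards. The reward sequence and the discount factors are made up for illustration.

```python
def discounted_return(rewards, gamma):
    """R_t = sum_k gamma^k * r_{t+k+1}, with rewards = [r_{t+1}, ..., r_T]."""
    ret = 0.0
    for r in reversed(rewards):   # accumulate from the terminal state backwards
        ret = r + gamma * ret
    return ret

rewards = [0.0, 0.0, 1.0]         # hypothetical: a single reward three steps ahead
print(discounted_return(rewards, gamma=0.9))
print(discounted_return(rewards, gamma=1.0))
```

With a discount factor below one, the same reward counts less the further it lies in the future.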

Reinforcement learning assumes that the value of a state V(s) is directly equivalent to the expected return $E_\pi$ at this state, i.e. $V(s) = E_\pi(R_t \mid s_t = s)$, where $\pi$ denotes the (here unspecified) action policy to be followed.

Thus, the value of state $s_t$ can be iteratively updated with:

$$V(s_t) \to V(s_t) + \alpha\,[R_t - V(s_t)]$$

We use $\alpha$ as a step-size parameter, which is not of great importance here, though, and can be held constant.

Note, if $V(s_t)$ correctly predicts the expected complete return $R_t$, the update will be zero and we have found the final value. This method is called constant-$\alpha$ Monte Carlo update. It requires waiting until a sequence has reached its terminal state (see some slides before!) before the update can commence. For long sequences this may be problematic. Thus, one should try to use an incremental procedure instead. We define a different update rule with:

$$V(s_t) \to V(s_t) + \alpha\,[r_{t+1} + \gamma V(s_{t+1}) - V(s_t)]$$

The elegant trick is to assume that, if the process converges, the value of the next state $V(s_{t+1})$ should be an accurate estimate of the expected return downstream of this state (i.e., downstream of $s_{t+1}$). Thus, we would hope that the following holds:

$$R_t \approx r_{t+1} + \gamma V(s_{t+1})$$

The update is driven by the difference $\gamma V(s_{t+1}) - V(s_t)$ between temporally successive value estimates. This is why it is called TD (temporal difference) learning.

Indeed, proofs exist that under certain boundary conditions this procedure, known as TD(0), converges to the correct value function of the evaluated policy for all states.
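The two update rules can be compared on a toy problem. The sketch below assumes a random-walk chain (states 0 to 6, both ends terminal, reward 1 only at the right end, fixed random policy); the task, the step size and the episode count are illustrative choices. For convenience the TD(0) updates are replayed after each episode so that both methods see identical data, although TD(0) could equally be applied after every single step.

```python
import random

N_STATES = 7                       # states 0..6; 0 and 6 are terminal (assumed toy task)
START, GAMMA, ALPHA = 3, 1.0, 0.002

def run_episode(rng):
    """Follow the fixed random policy; return [(s_t, r_{t+1}, s_{t+1}), ...]."""
    s, steps = START, []
    while s not in (0, N_STATES - 1):
        s_next = s + rng.choice((-1, 1))
        r = 1.0 if s_next == N_STATES - 1 else 0.0
        steps.append((s, r, s_next))
        s = s_next
    return steps

def monte_carlo_update(V, steps):
    """Constant-alpha Monte Carlo: V(s_t) -> V(s_t) + alpha * [R_t - V(s_t)], after the episode."""
    ret = 0.0
    for s, r, _ in reversed(steps):
        ret = r + GAMMA * ret      # complete return R_t from state s_t
        V[s] += ALPHA * (ret - V[s])

def td0_update(V, steps):
    """TD(0): V(s_t) -> V(s_t) + alpha * [r_{t+1} + gamma * V(s_{t+1}) - V(s_t)]."""
    for s, r, s_next in steps:
        V[s] += ALPHA * (r + GAMMA * V[s_next] - V[s])

rng = random.Random(1)             # assumed seed, only for reproducibility
V_mc = [0.0] * N_STATES
V_td = [0.0] * N_STATES
for _ in range(30000):
    episode = run_episode(rng)
    monte_carlo_update(V_mc, episode)
    td0_update(V_td, episode)

# For this chain the exact values under the random policy are 1/6, ..., 5/6.
true_values = [0.0] + [i / 6 for i in range(1, 6)] + [0.0]
print("true:", [round(v, 2) for v in true_values[1:6]])
print("MC:  ", [round(v, 2) for v in V_mc[1:6]])
print("TD:  ", [round(v, 2) for v in V_td[1:6]])
```

Both estimates approach the same values; the difference lies in when the update becomes available, not in what is being estimated.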

Reinforcement Learning – Relations to Brain Function I

[Figure: overview map of the different learning methods, as on the first slide, with a "You are here!" marker.]

How to implement TD in a Neuronal Way

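We had defined (first lecture!):

$$w_i \to w_i + \mu\,[r(t+1) + \gamma v(t+1) - v(t)]\,\bar{u}(t)$$

where $\bar{u}(t)$ is the trace.

Now we have:

[Figure: neuronal TD circuit. Labels: inputs $X_0$ (reward r), $X_1$, ..., $X_n$, trace E, delay (n-i), weights w, summation Σ, prediction v, derivative v′ and error δ.]

How to implement TD in a Neuronal Way

[Figure: simplified circuit with inputs $X_0$ (reward r, $w_0 = 1$) and $X_1$, prediction v(t), derivative v′ = v(t+1) − v(t) and error δ.]

Serial-Compound representations $X_1, \ldots, X_n$ for defining an eligibility trace.

Note: $v(t+1) - v(t)$ is acausal (future!). Make it “causal” by using delays.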

Reinforcement Learning – Relations to Brain Function II

[Figure: overview map of the different learning methods, as on the first slide, with a "You are here!" marker.]

TD-learning & Brain Function

[Figure: DA-neuron recordings in three conditions; time scale bar 1.0 s.]

Novelty response: no prediction, reward occurs (no CS, r).

After learning: predicted reward occurs (CS, r).

After learning: predicted reward does not occur (CS, no r).

This neuron is supposed to represent the δ-error of TD-learning, which has moved forward as expected.

DA-responses in the pars compacta of the substantia nigra (basal ganglia) and the medially adjoining ventral tegmental area (VTA).

Omission of reward leads to inhibition as also predicted by the TD-rule.
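The three recording conditions can be mimicked with the TD rule and a serial-compound (tapped delay line) stimulus representation. This is a minimal sketch, not a model of the recordings: trial length, CS and reward times, learning rate and discount factor are assumptions. Following the rule $\delta(t) = r(t+1) + \gamma v(t+1) - v(t)$, the error stored at index t belongs to the transition from t to t+1.

```python
T, T_CS, T_R = 20, 5, 15      # assumed trial length, CS onset and reward time (in steps)
MU, GAMMA = 0.2, 1.0          # assumed learning rate and discount factor

def value(w, t):
    """v(t) = sum_i w_i x_i(t); the delay line has x_i(t) = 1 only for i = t - T_CS."""
    return w[t - T_CS] if T_CS <= t < T else 0.0

def run_trial(w, rewarded=True, learn=True):
    """Run one trial and return the prediction errors delta(t) for t = 0 .. T-2."""
    deltas = []
    for t in range(T - 1):
        r_next = 1.0 if (rewarded and t + 1 == T_R) else 0.0
        delta = r_next + GAMMA * value(w, t + 1) - value(w, t)
        if learn and t >= T_CS:
            w[t - T_CS] += MU * delta   # only the currently active delay-line unit changes
        deltas.append(delta)
    return deltas

w = [0.0] * (T - T_CS)
novelty = run_trial(w, learn=False)                    # no prediction, reward occurs
for _ in range(1000):
    run_trial(w)                                       # learning trials
after_learning = run_trial(w, learn=False)             # predicted reward occurs
omission = run_trial(w, rewarded=False, learn=False)   # predicted reward is omitted

print("novelty:  delta at reward =", round(novelty[T_R - 1], 3))
print("learned:  delta at CS =", round(after_learning[T_CS - 1], 3),
      " delta at reward =", round(after_learning[T_R - 1], 3))
print("omission: delta at reward time =", round(omission[T_R - 1], 3))
```

Before learning the error sits at the reward; after learning it has moved to the CS and vanishes at the reward; omitting the reward produces a negative error at the expected reward time.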

TD-learning & Brain Function

[Figure: reward expectation in a single neuron and in the population response; markers Tr and r; time scale bars 1.0 s and 1.5 s.]

This neuron is supposed to represent the reward expectation signal v. It has extended forward (almost) to the CS (here called Tr) as expected from the TD-rule. Such neurons are found in the striatum, orbitofrontal cortex and amygdala.

This is even better visible from the population response of 68 striatal neurons.

Reinforcement Learning – The Control Problem

So far we have concentrated on evaluating an unchanging policy. Now comes the question of how to actually improve a policy π, trying to find the optimal policy.

We will discuss:

1) Actor-Critic Architectures

But not:

2) SARSA Learning

3) Q-Learning

Abbreviation for policy: π

Reinforcement Learning – Control Problem I

[Figure: overview map of the different learning methods, as on the first slide, with a "You are here!" marker.]

Control Loops

[Figure: feedback control loop. Blocks: Set-Point, $X_0$, Controller, Control Signals, Controlled System, Disturbances, Feedback.]

A basic feedback–loop controller (Reflex) as in the slide before.

Control Loops

[Figure: Actor-Critic control loop. Blocks: Context, Critic, Reinforcement Signal, Actor (Controller), Actions (Control Signals), Environment (Controlled System), Disturbances, $X_0$, Feedback.]

An Actor-Critic Architecture: The Critic produces evaluative reinforcement feedback for the Actor by observing the consequences of its actions. The Critic's output takes the form of a TD-error, which indicates whether things have gone better or worse than expected with the preceding action. Thus, this TD-error can be used to evaluate the preceding action: If the error is positive, the tendency to select this action should be strengthened; otherwise it should be weakened.

Example of an Actor-Critic Procedure

Action selection here follows the Gibbs Softmax method:

$$\pi(s, a) = \frac{e^{p(s,a)}}{\sum_b e^{p(s,b)}}$$

where p(s,a) are the values of the modifiable (by the Critic!) policy parameters of the actor, indicating the tendency to select action a when being in state s.

We can now modify p for a given state-action pair at time t with:

$$p(s_t, a_t) \leftarrow p(s_t, a_t) + \beta \delta_t$$

where $\delta_t$ is the δ-error of the TD-Critic.
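The procedure can be sketched on a toy task. The chain environment (states 0 to 4, reward on reaching state 4, actions left and right), the step sizes and the episode count are assumptions for illustration; only the Gibbs softmax policy and the update of p with the TD error are taken from the slide.

```python
import math
import random

N_STATES, GOAL = 5, 4                 # assumed toy chain: states 0..4, reward on reaching state 4
ACTIONS = (-1, +1)                    # step left / step right
ALPHA, BETA, GAMMA = 0.1, 0.1, 0.9    # assumed Critic step size, Actor step size, discount

def gibbs_policy(prefs):
    """pi(s, a) = exp(p(s, a)) / sum_b exp(p(s, b)) for the preferences of one state."""
    m = max(prefs)                    # shift for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]

def train(episodes=2000, seed=0, max_steps=1000):
    rng = random.Random(seed)
    V = [0.0] * N_STATES                        # Critic: state values
    p = [[0.0, 0.0] for _ in range(N_STATES)]   # Actor: preferences p(s, a)
    for _ in range(episodes):
        s = 0
        for _ in range(max_steps):
            probs = gibbs_policy(p[s])
            a = rng.choices((0, 1), weights=probs)[0]
            s_next = min(max(s + ACTIONS[a], 0), GOAL)
            r = 1.0 if s_next == GOAL else 0.0
            v_next = 0.0 if s_next == GOAL else V[s_next]
            delta = r + GAMMA * v_next - V[s]   # TD error produced by the Critic
            V[s] += ALPHA * delta               # Critic update
            p[s][a] += BETA * delta             # Actor update: p(s_t, a_t) += beta * delta_t
            s = s_next
            if s == GOAL:
                break
    return V, p

V, p = train()
for s in range(GOAL):
    print(s, "P(left), P(right) =", [round(x, 3) for x in gibbs_policy(p[s])])
```

After training, stepping towards the rewarded state is the preferred action in every state, because those actions were followed by positive TD errors more often than the alternatives.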

Reinforcement Learning – Control I & Brain Function III

[Figure: overview map of the different learning methods, as on the first slide, with a "You are here!" marker.]

Actor-Critics and the Basal Ganglia

The basal ganglia are a brain structure involved in motor control.

It has been suggested that they learn by way of an Actor-Critic mechanism.

[Figure: basal ganglia circuit. Labels: Cortex (C), Frontal Cortex, Thalamus, Striatum (S), VP, SNr, GPi, DA-System (SNc, VTA, RRA), STN, GPe.]

VP=ventral pallidum,

SNr=substantia nigra pars reticulata,

SNc=substantia nigra pars compacta,

GPi=globus pallidus pars interna,

GPe=globus pallidus pars externa,

VTA=ventral tegmental area,

RRA=retrorubral area,

STN=subthalamic nucleus.

Actor-Critics and the Basal Ganglia: The Critic

[Figure: circuit of the Critic. Labels: C, STN, S, DA, reward r, inhibitory (-) and excitatory (+) connections. Inset: medium-sized spiny projection neuron in the striatum (”post”) with corticostriatal (”pre”, Glu) and nigrostriatal (”DA”) inputs.]

Cortex=C, striatum=S, STN=subthalamic nucleus, DA=dopamine system, r=reward.

So-called striosomal modules fulfill the functions of the adaptive Critic. The prediction-error (δ) characteristics of the DA-neurons of the Critic are generated by:

1) Equating the reward r with excitatory input from the lateral hypothalamus.

2) Equating the term v(t) with indirect excitation at the DA-neurons which is initiated from striatal striosomes and channelled through the subthalamic nucleus onto the DA-neurons.

3) Equating the term v(t−1) with direct, long-lasting inhibition from striatal striosomes onto the DA-neurons.

There are many problems with this simplistic view though: timing, mismatch to anatomy, etc.