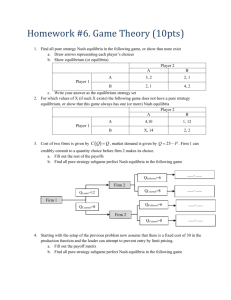

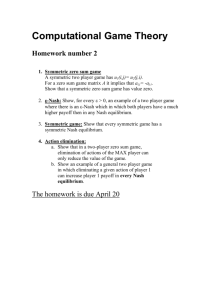

Worlds with many intelligent agents

An important consideration in AI, as well as in games, distributed systems and networking, economics, sociology, political science, international relations, and other disciplines.

Multi-agent Systems, or “Games”

Worlds with multiple agents, sometimes called “games”, are VASTLY more complicated than worlds with a single agent. They’re also more interesting and fun! There are many different aspects to learn about:

- Representations (we will consider two, but there are MANY)
- Evaluation metrics (I’ll show you a few standard ones, but again there are MANY)
- Inference and planning (we will talk about a few techniques)
- Learning (we won’t really cover this)
- Communication (we won’t directly cover this)
- Computational complexity (I’ll mention this in passing)
- Applications (we’ll look at several examples, but there are TONS)

Example Game (Prisoner’s Dilemma)

Student A and Student B have been naughty, cheating on an exam. Their teacher finds some evidence and sends them to the dean’s office, where the dean grills them individually to find out more.

| Outcome | A rats out B | A says nothing |
|---|---|---|
| B rats out A | Dean now has lots of evidence on both students; both are suspended for the semester. A: -7, B: -7 | Dean has lots of evidence on A, gives B a light punishment for cooperating. A: -10, B: -1 |
| B says nothing | Dean has lots of evidence on B, gives A a light punishment for cooperating. A: -1, B: -10 | Dean has little evidence on both, gives both some penalty. A: -3, B: -3 |

Definitions: The set of choices available to each agent is called their action set, denoted Actions(A) or Actions(B). Each square in the grid is called an outcome. Mathematically, let $a$ be an action in Actions(A) and $b$ an action in Actions(B). Then $O(a, b)$ is the outcome square, $O_A(a, b)$ is the reward for A, and $O_B(a, b)$ is the reward for B. This grid is called a normal form representation of the game.
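One way to hold a normal form representation in code is a table keyed by the pair of actions. The sketch below is only an illustration: the names `ACTIONS_A`, `ACTIONS_B`, `OUTCOMES`, `reward_a`, and `reward_b` are made up for this example, and the payoffs follow the story above (a student who rats while the other stays silent gets -1, and the silent student gets -10).

```python
# Hypothetical names; a minimal normal-form table for the Prisoner's Dilemma.
ACTIONS_A = ["rat", "silent"]  # Actions(A)
ACTIONS_B = ["rat", "silent"]  # Actions(B)

# OUTCOMES[(a, b)] is the outcome square O(a, b), stored as
# (reward for A, reward for B).
OUTCOMES = {
    ("rat", "rat"): (-7, -7),
    ("rat", "silent"): (-1, -10),
    ("silent", "rat"): (-10, -1),
    ("silent", "silent"): (-3, -3),
}


def reward_a(a, b):
    """O_A(a, b): the reward for A when A plays a and B plays b."""
    return OUTCOMES[(a, b)][0]


def reward_b(a, b):
    """O_B(a, b): the reward for B when A plays a and B plays b."""
    return OUTCOMES[(a, b)][1]


# Print every square of the grid.
for a in ACTIONS_A:
    for b in ACTIONS_B:
        print(f"A={a:6s} B={b:6s} -> A: {reward_a(a, b)}, B: {reward_b(a, b)}")
```

A dictionary keyed by action pairs keeps the lookup `OUTCOMES[(a, b)]` close to the notation $O(a, b)$; a nested list or array would work just as well.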
Quiz: Best outcome?

Which outcome of the Prisoner’s Dilemma is the best, and why?

Answer: Best outcome?

It depends on what “best” means. Let’s look at a few definitions of “best”.

Definition: Dominant Strategy

Definition: A strategy (or pure strategy) for player A (ditto for B) is a selection of one of the actions from Actions(A).

Definition: A’s strategy $a \in \mathrm{Actions}(A)$ is dominant if

$$\forall b \in \mathrm{Actions}(B),\ \forall a' \in \mathrm{Actions}(A),\ a' \neq a:\quad O_A(a, b) > O_A(a', b)$$

Another way of saying this: $a$ is a dominant strategy for A if it is better than any other strategy $a'$ for A, no matter what strategy B chooses.

Quiz: Dominant Strategy

Does A have a dominant strategy in the Prisoner’s Dilemma? What is it? What about B?

Answer: Dominant Strategy

Yes. “A rats out B” is a dominant strategy for A: if B rats, A gets -7 instead of -10, and if B says nothing, A gets -1 instead of -3. By symmetry, “B rats out A” is a dominant strategy for B.

Definition: Pareto Optimal

Definition: An outcome $o$ is called Pareto optimal if there is no other outcome $o'$ such that all players prefer $o'$ to $o$.

Notice: Pareto optimality can lead to lots of optimal outcomes, not just one. That’s because $o'$ is considered better than $o$ only if ALL players prefer $o'$ to $o$.

Quiz: Pareto Optimal

Which outcome(s) of the Prisoner’s Dilemma is (are) Pareto optimal?

Answer: Pareto Optimal

Every outcome except “both rat” (A: -7, B: -7) is Pareto optimal. Both players prefer “both say nothing” (A: -3, B: -3) to “both rat”, and no outcome is preferred by both players to any of the other three.

Definition: (Nash) Equilibrium

Definition: An outcome $O(a, b)$ is called a Nash equilibrium (or just equilibrium) if

1. $\forall a' \in \mathrm{Actions}(A),\ a' \neq a:\ O_A(a, b) \geq O_A(a', b)$, and
2. $\forall b' \in \mathrm{Actions}(B),\ b' \neq b:\ O_B(a, b) \geq O_B(a, b')$.

Another way of saying this is that an outcome is an equilibrium if each player is at least as happy with this outcome as with any other option available to them, assuming the other player sticks with their equilibrium strategy. Notice that this means the outcome is stable, in the sense that neither player has an incentive to change strategy.

Quiz: (Nash) Equilibrium

Which outcome(s) of the Prisoner’s Dilemma is (are) a Nash equilibrium?

Answer: (Nash) Equilibrium

The only Nash equilibrium is “both rat” (A: -7, B: -7): from there, a player who switches to saying nothing drops from -7 to -10. In every other outcome, at least one player gains by switching to ratting.

One reason that the Prisoner’s Dilemma is famous: the only outcome that is a Nash equilibrium is also the only outcome that is NOT a Pareto optimum. This is not true in all games; it depends on the rewards! For the Prisoner’s Dilemma, it means that the only stable solution is arguably the worst possible solution for the players (it has the lowest total reward). (It’s sort of a depressing example.)

Quiz: The game of Chicken (a.k.a. “Hawk-Dove”, “snow-drift”)

What are the dominant strategies, Pareto optima, and Nash equilibria for the Chicken game?

| | Player 1: Swerve | Player 1: Straight |
|---|---|---|
| Player 2: Swerve | Neither player wins; a tie. P1: 0, P2: 0 | Player 1 wins. P1: +1, P2: -1 |
| Player 2: Straight | Player 2 wins. P1: -1, P2: +1 | The players crash; a catastrophe! P1: -10, P2: -10 |

Answer: The game of Chicken (a.k.a. “Hawk-Dove”, “snow-drift”)

No dominant strategies! The Pareto optima are the three outcomes other than the crash. The pure-strategy Nash equilibria are the two outcomes in which exactly one player swerves.

Quiz: Coordination Games

What are the dominant strategies, Pareto optima, and Nash equilibria for each of the games below? Each cell lists the payoffs as P1, P2; columns are Player 1’s actions and rows are Player 2’s, as in the Chicken grid.

Drive on which side of the road?

| | Left | Right |
|---|---|---|
| Left | 10, 10 | -10, -10 |
| Right | -10, -10 | 10, 10 |

Pure coordination game

| | Party | Home |
|---|---|---|
| Party | 10, 10 | 0, 0 |
| Home | 0, 0 | 5, 5 |

“Battle of the Sexes” game

| | Party | Home |
|---|---|---|
| Party | 10, 5 | 0, 0 |
| Home | 0, 0 | 5, 10 |

“Stag Hunt” game

| | Stag | Hare |
|---|---|---|
| Stag | 10, 10 | 7, 0 |
| Hare | 0, 7 | 7, 7 |

Answer: Coordination Games

None of these games has a dominant strategy. In each game, both outcomes in which the players choose the same action are Nash equilibria. The Pareto optima are both of those matching outcomes in the driving game and in “Battle of the Sexes”, but only (Party, Party) in the pure coordination game and only (Stag, Stag) in the “Stag Hunt” game.

Humans don’t necessarily play Nash equilibria

The “Guess 2/3 of the Average” game is famous because, in experiments, people often don’t play Nash equilibrium strategies. (Other games like this are the centipede game and the Prisoner’s Dilemma.) Thus Nash equilibria aren’t perfect models of human behavior.

Let’s try it. Here are the rules of “Guess 2/3 of the Average”:

1. Everyone guesses a number between 0 and 100 (integer or real). Once everyone has guessed, we’ll compute the average.
2. The people who come closest to 2/3 of the average are the winners (payoff 1); everyone else loses (payoff 0).

Nash equilibrium for “Guess 2/3 of the Average”

If everyone guesses 100, then 2/3 of the average is 66.7. That is the biggest possible result, so everyone has an incentive to guess 66.7 or less, and anything more than 66.7 can’t be part of a Nash equilibrium.
If everyone guesses 66.7, then 2/3 of the average will be around 44.4, so everyone has an incentive to guess lower, and guessing more than 44.4 can’t be part of a Nash equilibrium.

… (Each repetition of this argument shrinks the bound by another factor of 2/3: 100, 66.7, 44.4, 29.6, and so on toward 0.)

If everyone guesses 0, then we have a Nash equilibrium (they’d all guess right and win, and there’s no incentive for anyone to change their guess, assuming everyone else remains at 0).

Constant-Sum Games

| | Player 1: Rock | Player 1: Paper | Player 1: Scissors |
|---|---|---|---|
| Player 2: Rock | 0, 0 | +1, -1 | -1, +1 |
| Player 2: Paper | -1, +1 | 0, 0 | +1, -1 |
| Player 2: Scissors | +1, -1 | -1, +1 | 0, 0 |

Each cell lists the payoffs as P1, P2.

Notice: if you add up the rewards for P1 and P2 in each square, you get 0. The fact that all squares have the same sum makes this a “constant-sum” game. This game is in fact a “zero-sum” game.

Actually, any constant-sum game can be converted to an equivalent zero-sum game by subtracting a constant (half of the constant sum) from every payoff. They are “equivalent” in that this transformation preserves equilibria as well as optimal and dominant strategies.

In constant-sum games, any gain for one player is offset by a loss for the other player. So these are hypercompetitive games.

Quiz: Pure Strategy Nash Equilibria

Can you find a Nash equilibrium for Rock-Paper-Scissors?

Answer: Pure Strategy Nash Equilibria

No “pure” strategy for either player can lead to a Nash equilibrium for this game! (Our definition of “strategy” is equivalent to “pure strategy”. We’ll talk about mixed strategies next.)
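The definitions of dominant strategy, Pareto optimality, and (pure-strategy) Nash equilibrium can all be checked by brute force on a small two-player game. The following is a minimal sketch, not a definitive implementation: the function names and the dictionary layout `payoffs[(action_1, action_2)] = (reward_1, reward_2)` are assumptions made for this example, and the payoffs are the ones from the Prisoner’s Dilemma, Chicken, and Rock-Paper-Scissors slides.

```python
def actions(payoffs, player):
    """Distinct actions of `player` (0 or 1), in first-seen order."""
    seen = []
    for profile in payoffs:
        if profile[player] not in seen:
            seen.append(profile[player])
    return seen


def swap(profile, player, action):
    """Copy of `profile` with `player`'s action replaced by `action`."""
    changed = list(profile)
    changed[player] = action
    return tuple(changed)


def dominant_strategies(payoffs, player):
    """Actions strictly better than every alternative, whatever the opponent does."""
    mine = actions(payoffs, player)
    return [
        a for a in mine
        if all(
            payoffs[p][player] > payoffs[swap(p, player, alt)][player]
            for p in payoffs if p[player] == a
            for alt in mine if alt != a
        )
    ]


def pareto_optima(payoffs):
    """Outcomes for which no other outcome is preferred by ALL players."""
    return [
        p for p, r in payoffs.items()
        if not any(all(x > y for x, y in zip(other, r)) for other in payoffs.values())
    ]


def pure_nash_equilibria(payoffs):
    """Outcomes where neither player gains by changing only their own action."""
    return [
        p for p in payoffs
        if all(
            payoffs[p][i] >= payoffs[swap(p, i, alt)][i]
            for i in (0, 1)
            for alt in actions(payoffs, i)
        )
    ]


# Prisoner's Dilemma: keys are (A's action, B's action), values are (A, B) rewards.
PRISONERS = {
    ("rat", "rat"): (-7, -7),
    ("rat", "silent"): (-1, -10),
    ("silent", "rat"): (-10, -1),
    ("silent", "silent"): (-3, -3),
}

# Chicken: keys are (P1's action, P2's action).
CHICKEN = {
    ("swerve", "swerve"): (0, 0),
    ("straight", "swerve"): (1, -1),
    ("swerve", "straight"): (-1, 1),
    ("straight", "straight"): (-10, -10),
}

# Rock-Paper-Scissors: BEATS[x] is the action that x defeats.
BEATS = {"rock": "scissors", "paper": "rock", "scissors": "paper"}
RPS = {}
for a in BEATS:
    for b in BEATS:
        if a == b:
            RPS[(a, b)] = (0, 0)
        elif BEATS[a] == b:
            RPS[(a, b)] = (1, -1)
        else:
            RPS[(a, b)] = (-1, 1)

for name, game in [("Prisoner's Dilemma", PRISONERS), ("Chicken", CHICKEN), ("RPS", RPS)]:
    print(name)
    print("  dominant:", dominant_strategies(game, 0), dominant_strategies(game, 1))
    print("  Pareto optima:", pareto_optima(game))
    print("  pure Nash equilibria:", pure_nash_equilibria(game))
```

Enumerating every outcome is only practical for tiny grids like these, but it mirrors the definitions line by line, which makes it a handy way to check quiz answers.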
Definition: Mixed Strategy

A mixed strategy for player A is a probability distribution over the set of available actions, Actions(A). For instance, for Rock-Paper-Scissors, the distribution

P(Rock) = 1/3, P(Paper) = 1/3, P(Scissors) = 1/3

is a mixed strategy. A pure strategy is also a mixed strategy, but with a probability distribution that places all the probability on one action. E.g.: P(Rock) = 0, P(Paper) = 1, P(Scissors) = 0.

Definition: Mixed Strategy Nash Equilibrium

A mixed strategy profile for players A and B is a pair of mixed strategies: a distribution $P_A$ for player A and a distribution $P_B$ for player B.

A mixed strategy Nash equilibrium is a mixed strategy profile $(P_A, P_B)$ such that neither player would gain (in expected payoff) by choosing a different mixed strategy, assuming the other player’s mixed strategy stays the same.

Quiz: Mixed Strategy Nash Equilibria

Can you find a mixed-strategy Nash equilibrium for Rock-Paper-Scissors? (This can be hard in general, but see if you can guess it for this game.)

Answer: Mixed Strategy Nash Equilibria

The mixed strategy profile where each player has 1/3 probability for each action is a Nash equilibrium. (It’s the only Nash equilibrium for this game.)

Nash’s Theorem

Theorem (Nash, 1951): Every finite game (finite number of players, finite number of pure strategies) has at least one mixed-strategy Nash equilibrium.

Nash, John (1951). "Non-Cooperative Games". The Annals of Mathematics 54(2):286-295.

(John Nash did not call them “Nash equilibria”; that name came later.) He shared the 1994 Nobel Memorial Prize in Economic Sciences with game theorists Reinhard Selten and John Harsanyi for his work on Nash equilibria. He suffered from schizophrenia in the 1950s and 1960s, as depicted in the 2001 film “A Beautiful Mind”.
He nevertheless recovered enough to return to academia and continue his research.

More on Constant-Sum Games

Minimax Theorem (John von Neumann, 1928): For every two-person, zero-sum game with finitely many pure strategies, there exists a mixed strategy for each player and a value V such that:

- Given player 2’s strategy, the best possible payoff for player 1 is V.
- Given player 1’s strategy, the best possible payoff for player 2 is -V.

The existence-of-strategies part is a special case of Nash’s theorem, and a precursor to it.

This basically says that player 1 can guarantee himself a payoff of at least V, and player 2 can guarantee himself a payoff of at least -V. If both players play optimally, that’s exactly what they will get.

It’s called “minimax” because the players get this value by pursuing a strategy that tries to minimize the maximum payoff of the other player. We’ll come back to this.

Definition: The value V is called the value of the game.

E.g.: The value of Rock-Paper-Scissors is 0; the best that P1 can hope to achieve, assuming P2 plays optimally (1/3 probability of each action), is a payoff of 0.
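The claim that the value of Rock-Paper-Scissors is 0 can be checked numerically. The sketch below computes expected payoffs under mixed strategies; the names `expected_payoff_p1`, `pure`, and `UNIFORM` are hypothetical helpers invented for this illustration. It relies on the fact that the expected payoff of a mixed strategy is a weighted average of the payoffs of the pure strategies it mixes, so checking every pure deviation is enough to rule out a profitable mixed deviation.

```python
from fractions import Fraction

ACTIONS = ["rock", "paper", "scissors"]
# BEATS[x] is the action that x defeats.
BEATS = {"rock": "scissors", "paper": "rock", "scissors": "paper"}


def payoff_p1(a, b):
    """Player 1's payoff when P1 plays a and P2 plays b (P2 gets the negative)."""
    if a == b:
        return 0
    return 1 if BEATS[a] == b else -1


def expected_payoff_p1(p1, p2):
    """Expected payoff to P1 when both players draw independently from p1 and p2."""
    return sum(p1[a] * p2[b] * payoff_p1(a, b) for a in ACTIONS for b in ACTIONS)


def pure(action):
    """A pure strategy written as a mixed strategy: all probability on one action."""
    return {a: Fraction(int(a == action)) for a in ACTIONS}


# The mixed strategy with probability 1/3 on each action.
UNIFORM = {a: Fraction(1, 3) for a in ACTIONS}

# Payoff to P1 when both players use the uniform strategy.
value = expected_payoff_p1(UNIFORM, UNIFORM)

# Best P1 can do with any pure deviation while P2 stays uniform.
best_for_p1 = max(expected_payoff_p1(pure(a), UNIFORM) for a in ACTIONS)

# Best P2 can do (P2's payoff is the negative of P1's) while P1 stays uniform.
best_for_p2 = max(-expected_payoff_p1(UNIFORM, pure(b)) for b in ACTIONS)

# Contrast: a predictable opponent can be exploited.
exploit_rock = expected_payoff_p1(pure("paper"), pure("rock"))

print("value under uniform play:", value)
print("best deviation for P1:", best_for_p1)
print("best deviation for P2:", best_for_p2)
print("paper against always-rock:", exploit_rock)
```

Exact fractions are used instead of floats so that the comparison with 0 is not affected by rounding.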