Shou-mech-design

Eric Shou

Stat/CSE 598B

What is Game Theory?

Game theory is a branch of applied

mathematics that is often used in the

context of economics.

Studies strategic interactions between

agents.

Agents maximize their return, given the

strategies the other agents choose

(Wikipedia).

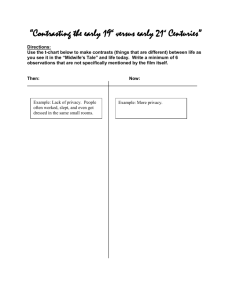

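Example

| | Player 2: Left | Player 2: Right |
|---|---|---|
| Player 1: Up | 10, 10 | 2, 15 |
| Player 1: Down | 15, 2 | 5, 5 |

Each cell lists the payoffs as (Player 1, Player 2).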

The dominant strategy for Player 1 is to choose Down, and the dominant strategy for Player 2 is to choose Right.

When Player 1 chooses Down and Player 2 chooses Right, they are in equilibrium because neither player gains utility by changing strategy, given the other player's choice.
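
The dominant strategies and the equilibrium in this example can be checked mechanically. The following is a minimal sketch; the dictionary layout and the function names are illustrative choices, not part of the original slides.

```python
# Payoffs from the example: payoffs[(row, col)] = (Player 1 payoff, Player 2 payoff)
payoffs = {
    ("Up", "Left"): (10, 10),
    ("Up", "Right"): (2, 15),
    ("Down", "Left"): (15, 2),
    ("Down", "Right"): (5, 5),
}
rows = ["Up", "Down"]      # Player 1's strategies
cols = ["Left", "Right"]   # Player 2's strategies


def dominant_row():
    """Return a row that is strictly better for Player 1 against every column, or None."""
    for r in rows:
        others = [o for o in rows if o != r]
        if all(payoffs[(r, c)][0] > payoffs[(o, c)][0] for o in others for c in cols):
            return r
    return None


def dominant_col():
    """Return a column that is strictly better for Player 2 against every row, or None."""
    for c in cols:
        others = [o for o in cols if o != c]
        if all(payoffs[(r, c)][1] > payoffs[(r, o)][1] for o in others for r in rows):
            return c
    return None


def pure_nash_equilibria():
    """List strategy pairs where neither player gains by deviating alone."""
    equilibria = []
    for r in rows:
        for c in cols:
            row_is_best = all(payoffs[(r, c)][0] >= payoffs[(o, c)][0] for o in rows)
            col_is_best = all(payoffs[(r, c)][1] >= payoffs[(r, o)][1] for o in cols)
            if row_is_best and col_is_best:
                equilibria.append((r, c))
    return equilibria


print(dominant_row(), dominant_col(), pure_nash_equilibria())
```

Note that both players would be better off at (Up, Left), yet that pair is not an equilibrium because each player gains by deviating.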

What is Mechanism Design?

In economics, mechanism design is the art of

designing rules of a game to achieve a specific

outcome.

Each player has an incentive to behave as the designer

intends.

The game is then said to implement the desired outcome.

The strength of such a result depends on the solution concept used in the game (Wikipedia).

Unlimited Supply Goods

A seller is considered to have an unlimited supply of a

good if the seller has at least as many identical items as

the number of consumers, or the seller can reproduce

items at a negligible marginal cost (Goldberg).

Examples: digital audio files, pay-per-view television.

Pricing of Unlimited Supply Goods

Use market analysis and then set a

fixed price.

Fixed pricing often does not lead to

optimal fixed price revenue due to

inaccuracies in market analysis.

[Figure: revenue plot]

Pricing of Unlimited Supply Goods

Use auctions to take input bids from bidders to

determine what price to sell at and which bidders to

give a copy of the item to.

Assume bidders in the auction each have a private

utility value, the maximum value they are willing to

pay for the good.

Assume each bidder is rational; each bidder bids so as

to maximize their own personal welfare, i.e., the

difference between their utility value and the price

they must pay for the good.

Digital Goods Auctions

n bidders

Each bidder has private utility of a good at hand

Bidders submit bids in [0,1]

Auctioneer determines who receives good and at what

prices.

Truthful Auctions

Most common solution concept for mechanism design

is “truthfulness.”

The mechanism is designed so that truthfully reporting one’s value is a dominant strategy.

Bid auctions are considered truthful if each bidder’s

personal welfare is maximized when he/she bids

his/her true utility value.

Truthful Mechanisms

Truthful mechanisms simplify analysis by removing the need to worry about gaming that users might attempt in order to raise their utility.

Thus, truthfulness as a solution concept is desired!

Setting of Truthful Auctions

Collusion among multiple players is prohibited.

Utility functions of bidders are constrained to simple

classes.

Mechanisms are executed once.

These strong assumptions limit domains in which

these mechanisms can be implemented.

How do you get people to truthfully bid the price they

are willing to pay without the assumptions?

Mechanism Design

Differential Privacy

Main idea of paper: “Strong privacy guarantees, such

as given by differential privacy, can inform and enrich

the field of Mechanism Design.”

Differential privacy allows the relaxation of

truthfulness where the incentive to misrepresent a

value is non-zero, but tightly controlled.

What is Differential Privacy?

A randomized function M gives ε-differential privacy if

for all data sets D1 and D2 differing on a single user,

and all S ⊆ Range(M),

Pr[M(D1) ∈ S] ≤ exp(ε) × Pr[M(D2) ∈ S]

Previous approaches focus on real valued functions

whose values are insensitive to the change in data of a

single individual and whose usefulness is relatively

unaffected by additive perturbations.

Game Theory Implications

Differential Privacy implies many game theoretic

properties:

Approximate truthfulness

Collusion Resistance

Composability (Repeatability)

Approximate Truthfulness

For any mechanism M giving ε-differential privacy and

any non-negative function g of its range, for any D1

and D2 differing on a single input,

E[g(M(D1))] ≤ exp(ε) × E[g(M(D2))]

Example: In an auction with 0.001-differential privacy, a single bidder's misreport can change the expected sell price by a factor of at most exp(0.001) ≈ 1.001; that is, the expected price under truthful bidding is at most 1.001 times the expected price under the misreport.
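
The expectation bound follows in one step from the definition of ε-differential privacy. The derivation below is a sketch that assumes, for illustration only, that M(D) has a density p_D over its range; the density notation is introduced here and does not appear in the slides.

```latex
\begin{aligned}
E[g(M(D_1))] &= \int g(r)\, p_{D_1}(r)\, dr \\
  &\le \int g(r)\, e^{\varepsilon}\, p_{D_2}(r)\, dr
     && \text{since } g \ge 0 \text{ and } p_{D_1}(r) \le e^{\varepsilon} p_{D_2}(r) \\
  &= e^{\varepsilon}\, E[g(M(D_2))]
\end{aligned}
```

The non-negativity of g is what allows the pointwise bound on the densities to carry over to the integral.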

Collusion Resistance

One fortunate property of differential privacy is that it

degrades smoothly with the number of changes in the

data set.

For any mechanism M giving ε-differential privacy and

any non-negative function g of its range, for any D1

and D2 differing on at most t inputs,

E[g(M(D1))] ≤ exp(εt) × E[g(M(D2))]

Example

If a mechanism has 0.001-differential privacy and a group of 10 bidders tries to improve their utility by underbidding, the expected sell price under truthful bidding is at most exp(10 × 0.001) ≈ 1.01 times the expected sell price under their misreports.

If the auctioned item is a music file that would sell at $1 under truthful bidding, the lowest the 10 bidders can push the price is about $0.99, since $1 / $0.99 ≈ 1.01.
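
The arithmetic in this example can be reproduced with a few lines. This is a small sketch; the function name `max_price_factor` is a hypothetical helper chosen for illustration.

```python
import math


def max_price_factor(eps, colluders=1):
    """Bound exp(eps * t) on the ratio of expected outcomes when t inputs change."""
    return math.exp(eps * colluders)


factor = max_price_factor(0.001, colluders=10)
lowest_price = 1.00 / factor  # lowest expected price if the truthful price is $1
print(f"factor = {factor:.5f}, lowest expected price = ${lowest_price:.4f}")
```

Because the exponent is εt, the bound stays close to 1 only while the coalition size t is small relative to 1/ε.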

Composability

The sequential application of mechanisms $\{M_i\}$, each giving $\varepsilon_i$-differential privacy, gives $(\sum_i \varepsilon_i)$-differential privacy.

Example: If an auction with 0.001-differential privacy is rerun daily for a week, a single bidder can skew the seven prices of the week ahead by a factor of at most exp(7 × 0.001) ≈ 1.007.
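
Composition amounts to adding up the per-run privacy parameters. A minimal sketch, with `composed_epsilon` as a hypothetical helper name:

```python
import math


def composed_epsilon(epsilons):
    """Privacy parameter of running the mechanisms one after another."""
    return sum(epsilons)


week = [0.001] * 7                 # one 0.001-private auction per day
total_eps = composed_epsilon(week)
weekly_factor = math.exp(total_eps)
print(f"total epsilon = {total_eps:.3f}, influence factor <= {weekly_factor:.4f}")
```

The per-run parameters need not be equal; any list of εi values can be passed in.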

General Differential Privacy

Mechanism

Goal: randomly map a set of n inputs from a domain D

to some output in a range R.

The mechanism is driven by an input query function $q : D^n \times R \to \mathbb{R}$ that assigns a real-valued score to any pair $(d,r)$ from $D^n \times R$, where higher scores are more appealing.

The goal of the mechanism is to return an $r \in R$ given $d \in D^n$ such that $q(d,r)$ is approximately maximized while guaranteeing differential privacy.

Example: Revenue is q(d,r) = r * #{i: di > r}.
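
The revenue score can be written directly as a function of the bids and a candidate price. The sketch below uses hypothetical bids and restricts prices to a grid of cents, which is an assumption made only to keep the search finite.

```python
def revenue(bids, price):
    """q(d, r) = r * #{i : d_i > r}: every bidder strictly above the price buys one copy."""
    return price * sum(1 for b in bids if b > price)


bids = [0.2, 0.5, 0.9, 0.9]             # hypothetical bids in [0, 1]
grid = [i / 100 for i in range(101)]    # candidate prices 0.00, 0.01, ..., 1.00

best_price = max(grid, key=lambda p: revenue(bids, p))
opt = revenue(bids, best_price)         # best fixed-price revenue on the grid
print(best_price, opt)
```

With the strict inequality in the score, the best grid price sits just below the top bids rather than exactly at them.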

General Differential Privacy

Mechanism

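Let $\mathcal{E}_q^{\varepsilon}(d)$ := choose $r$ with probability proportional to $\exp(\varepsilon q(d,r)) \times \mu(r)$, where $\mu$ is a base probability measure on $R$.

In short, $\mathcal{E}_q^{\varepsilon}(d)$ outputs $r$ with probability $\propto \exp(\varepsilon q(d,r))$.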

A change to q(d,r) caused by a single participant has a

small multiplicative influence on the density of any

output, thus guaranteeing differential privacy.

Example: $p(r) \propto \exp(\varepsilon \, r \cdot \#\{i : d_i > r\})$
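
The sampling rule can be sketched for the revenue example as follows. This assumes a finite grid of candidate prices with a uniform base measure μ; the bids, the grid, and all function names are illustrative and not taken from the slides.

```python
import math
import random


def revenue(bids, price):
    """q(d, r) = r * #{i : d_i > r}."""
    return price * sum(1 for b in bids if b > price)


def exponential_mechanism_probs(bids, grid, eps):
    """Probability of each grid price, proportional to exp(eps * q(d, r))."""
    scores = [eps * revenue(bids, p) for p in grid]
    top = max(scores)                             # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [w / total for w in weights]


def sample_price(bids, grid, eps, rng=random):
    """Draw one price from the mechanism's output distribution."""
    probs = exponential_mechanism_probs(bids, grid, eps)
    return rng.choices(grid, weights=probs, k=1)[0]


bids = [0.2, 0.5, 0.9, 0.9]              # hypothetical bids in [0, 1]
grid = [i / 100 for i in range(101)]     # uniform base measure over these prices
probs = exponential_mechanism_probs(bids, grid, eps=5.0)
print(sample_price(bids, grid, eps=5.0, rng=random.Random(0)))
```

Subtracting the maximum score before exponentiating leaves the normalized probabilities unchanged and avoids overflow when ε times the score is large.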

General Differential Privacy

Mechanism

Let $\mathcal{E}_q^{\varepsilon}(d)$ output $r$ with probability $\propto \exp(\varepsilon q(d,r))$.

Higher scores are more probable because the probability associated with a score increases as $e^{\varepsilon q(d,r)}$ increases; $e^x$ is an increasing function.

Thus in an auction with ε-differential privacy, the

expected revenue is close to the optimal fixed price

revenue (OPT).

General Differential Privacy

Mechanism

Two properties:

Privacy

Accuracy

Privacy

$\mathcal{E}_q^{\varepsilon}(d)$ gives $(2\varepsilon\Delta q)$-differential privacy.

Δq is the largest possible difference in the query

function when applied to two inputs that differ only on

a single user’s value, for all r.

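Proof: Letting $\mu$ be a base measure, the density of $\mathcal{E}_q^{\varepsilon}(d)$ at $r$ is equal to:

$$\frac{\exp(\varepsilon q(d,r))\,\mu(r)}{\int \exp(\varepsilon q(d,r'))\,\mu(r')\,dr'}$$

A single change in $d$ can change $q$ by at most $\Delta q$, so the numerator changes by a factor of at most $\exp(\varepsilon\Delta q)$ and the denominator by a factor of at least $\exp(-\varepsilon\Delta q)$.

The density therefore changes by a factor of at most $\exp(\varepsilon\Delta q) / \exp(-\varepsilon\Delta q) = \exp(2\varepsilon\Delta q)$.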

Example: Δq = 1
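
The privacy bound can be checked numerically on a small instance. The sketch below assumes the revenue score on a price grid in [0, 1] with a uniform base measure, so that one bidder changes the score by at most Δq = 1; the bids and helper names are hypothetical.

```python
import math


def revenue(bids, price):
    """q(d, r) = r * #{i : d_i > r}."""
    return price * sum(1 for b in bids if b > price)


def mechanism_probs(bids, grid, eps):
    """Output distribution proportional to exp(eps * q(d, r)) over the grid."""
    weights = [math.exp(eps * revenue(bids, p)) for p in grid]
    total = sum(weights)
    return [w / total for w in weights]


def worst_ratio(bids_a, bids_b, grid, eps):
    """Largest probability ratio, in either direction, over all outputs."""
    pa = mechanism_probs(bids_a, grid, eps)
    pb = mechanism_probs(bids_b, grid, eps)
    return max(max(x / y, y / x) for x, y in zip(pa, pb))


grid = [i / 100 for i in range(101)]
eps = 0.5
truthful = [0.2, 0.5, 0.9, 0.9]
misreport = [0.2, 0.5, 0.9, 0.1]     # the last bidder underbids
ratio = worst_ratio(truthful, misreport, grid, eps)
bound = math.exp(2 * eps * 1.0)      # exp(2 * eps * delta_q) with delta_q = 1
print(ratio, bound)
```

One bid changes the buyer count at any price by at most one, and prices are at most 1, which is why Δq = 1 is a valid sensitivity here.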

Accuracy

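Lemma: Let $S_t = \{r : q(d,r) > \mathrm{OPT} - t\}$ be the set of good outcomes, with measure $\mu(S_t)$. Then the bad outcomes $\bar{S}_{2t}$ (the complement of $S_{2t}$) satisfy

$\Pr(\bar{S}_{2t}) \le \exp(-\varepsilon t) / \mu(S_t)$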

Theorem (Accuracy):

For those $t \ge \ln\big(\mathrm{OPT} / (t\,\mu(S_t))\big)/\varepsilon$,

$E[q(d, \mathcal{E}_q^{\varepsilon}(d))] > \mathrm{OPT} - 3t$

The size of $\mu(S_t)$ as a function of $t$ determines how large $t$ must be before the exponential bias can overcome the small size of $\mu(S_t)$.
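
The accuracy property can be illustrated by computing the mechanism's expected revenue for several values of ε and comparing it with the best fixed-price revenue. This is a sketch on hypothetical bids with a uniform base measure over a price grid; it illustrates the trend and is not a proof of the theorem.

```python
import math


def revenue(bids, price):
    """q(d, r) = r * #{i : d_i > r}."""
    return price * sum(1 for b in bids if b > price)


def mechanism_probs(bids, grid, eps):
    """Output distribution proportional to exp(eps * q(d, r)) over the grid."""
    scores = [eps * revenue(bids, p) for p in grid]
    top = max(scores)                              # stabilize the exponentials
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [w / total for w in weights]


def expected_revenue(bids, grid, eps):
    """E[q(d, r)] when r is drawn from the mechanism."""
    probs = mechanism_probs(bids, grid, eps)
    return sum(pr * revenue(bids, p) for pr, p in zip(probs, grid))


bids = [0.2, 0.5, 0.9, 0.9]
grid = [i / 100 for i in range(101)]
opt = max(revenue(bids, p) for p in grid)
results = [expected_revenue(bids, grid, eps) for eps in (1, 10, 100)]
for eps, value in zip((1, 10, 100), results):
    print(f"eps = {eps:>3}: expected revenue {value:.3f} (OPT = {opt:.2f})")
```

Larger ε concentrates the output on near-optimal prices and shrinks the revenue gap, at the price of a weaker privacy and truthfulness guarantee.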

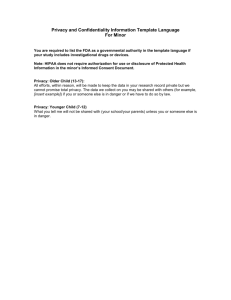

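Graph of Price vs. Revenue. Annotations: OPT (peak revenue); $\mu(S_t)$ = width of the set of near-optimal prices; $\Pr(\bar{S}_{2t}) \le \exp(-\varepsilon t)/\mu(S_t)$ = small.

Source: McSherry, Talwar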

Applications to Pricing and Auctions

Unlimited supply auctions

Attribute auctions

Constrained pricing problems

Unlimited Supply Auctions

Each bidder $i$ has a demand curve $b_i : [0,1] \to \mathbb{R}^+$ describing how much of an item they want at a given price $p$.

Demand is non-increasing with price, and the resources of a bidder are limited such that $p\,b_i(p) \le 1$ for all $i$, $p$.

Revenue in dollars at price $p$: $q(b,p) = p \sum_i b_i(p)$

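Mechanism $\mathcal{E}_q^{\varepsilon}$ gives $2\varepsilon$-differential privacy, and has expected revenue at least:

$\mathrm{OPT} - 3\ln(e + \varepsilon^2\,\mathrm{OPT}\,m)/\varepsilon$, where $m$ is the number of items sold in OPT.

The subtracted term $3\ln(e + \varepsilon^2\,\mathrm{OPT}\,m)/\varepsilon$ is the cost of approximate truthfulness.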
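
To get a feel for the size of this cost, the bound can be evaluated for a few values of ε. The numbers for OPT and m below are hypothetical and chosen only for illustration.

```python
import math


def revenue_lower_bound(opt, m, eps):
    """OPT - 3 ln(e + eps^2 * OPT * m) / eps, the expected-revenue guarantee."""
    return opt - 3.0 * math.log(math.e + eps ** 2 * opt * m) / eps


opt, m = 1000.0, 2000      # hypothetical optimal revenue and number of items sold
bounds = {eps: revenue_lower_bound(opt, m, eps) for eps in (0.01, 0.1, 1.0)}
for eps, value in bounds.items():
    print(f"eps = {eps}: expected revenue >= {value:.1f}")
```

For very small ε the guarantee can fall below zero and becomes vacuous, which reflects the tradeoff between approximate truthfulness and expected revenue.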

Attribute Auctions

Introduce public attributes to each of the bidders (e.g.

age, gender, state of residence).

Attributes can be used to segment the market. Offering different prices to different segments leads to larger optimal revenue.

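$\mathrm{SEG}_k$ = number of different segmentations into $k$ markets

$\mathrm{OPT}_k$ = optimal revenue using $k$ market segments

Taking $q$ to be the revenue function over segmentations into $k$ markets and their prices, $\mathcal{E}_q^{\varepsilon}$ has expected revenue at least:

$\mathrm{OPT}_k - 3\ln(e + \varepsilon^{k+1}\,\mathrm{OPT}_k\,\mathrm{SEG}_k\,m^{k})/\varepsilon$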

Constrained Pricing Problem

Limited set of offered

prices that can go to

bidders.

Example: A movie

theater must decide

which movie to run.

Solicit bids from patrons

on different films.

Theater only collects

revenue from bids for

one film.

Constrained Pricing Problem

Bidders bid on k different items

Demand curve $b_i^j : [0,1] \to \mathbb{R}^+$ for each item $j \in [k]$

Demand is non-increasing and bidders’ resources are limited so that $p\,b_i^j(p) \le 1$ for each $i$, $j$, $p$.

For each item $j$, at price $p$, revenue is

$q(b, (j,p)) = p \sum_i b_i^j(p)$

Expected revenue at least:

$\mathrm{OPT} - 3\ln(e + \varepsilon^2\,\mathrm{OPT}\,k\,m)/\varepsilon$
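
For the movie theater example, the output range becomes the set of (film, price) pairs. The sketch below assumes unit-demand patrons, a coarse price grid, and a uniform base measure over the pairs; the values and names are hypothetical.

```python
import math

# Hypothetical unit-demand values: values[i][j] is patron i's value for film j.
values = [
    [0.9, 0.2],
    [0.8, 0.3],
    [0.1, 0.7],
]
films = [0, 1]
prices = [i / 10 for i in range(11)]   # candidate ticket prices 0.0, 0.1, ..., 1.0


def revenue(values, film, price):
    """q(b, (j, p)): a patron buys a ticket for film j when their value exceeds p."""
    return price * sum(1 for row in values if row[film] > price)


def outcome_probs(values, eps):
    """Distribution over (film, price) pairs, proportional to exp(eps * revenue)."""
    outcomes = [(j, p) for j in films for p in prices]
    weights = [math.exp(eps * revenue(values, j, p)) for j, p in outcomes]
    total = sum(weights)
    return {o: w / total for o, w in zip(outcomes, weights)}


probs = outcome_probs(values, eps=5.0)
best = max(probs, key=probs.get)       # most likely (film, price) pair
print(best, round(probs[best], 3))
```

Only the bids for the chosen film contribute to revenue, which is what makes the problem constrained: the mechanism has to pick the film and the price jointly.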

Comments

Tradeoff between approximate truthfulness and

expected revenue.

Attribute auctions – price discrimination?

Application of mechanism to other games?

Parallels with disclosure limitation?

Conclusions

The general differential privacy mechanism, $\mathcal{E}_q^{\varepsilon}$, is more robust than truthful mechanisms.

Approximate truthfulness

Collusion resistance

Repeatability

Properties

Privacy

Accuracy

Applications

Unlimited supply auctions

Attribute auctions

Constrained pricing

Questions?