Construct Validity: A Universal Validity System or Just Another Test

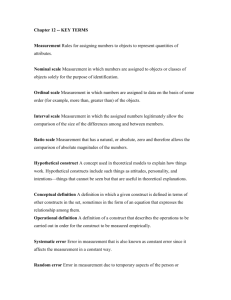

Construct Validity: A Universal Validity System
Susan Embretson
Georgia Institute of Technology
University of Maryland Conference on the Concept of Validity

Introduction
• Validity is a controversial concept in educational and psychological testing
• Research on educational and psychological tests during the last half of the 20th century was guided by a distinction among types of validity
  – Criterion-related validity, content validity and construct validity
• Construct validity is the most problematic type of validity
  – It involves theory and the relationship of data to theory

Introduction
• Yet the most controversial type of validity became the sole type of validity in the revised joint standards for educational and psychological tests (AERA/APA/NCME, 1999)
• In the current standards, “Validity refers to the degree to which evidence and theory support the interpretations of test scores entailed by proposed uses of tests”
• Content validity and criterion-related validity are two of five different kinds of evidence
• Reflects substantial impact from Messick’s (1989) thesis of a single type of validity (construct validity) with several different aspects

Topics
• Overview of the validity concept
• Current issues on validity
  – Discontent with construct validity for educational tests
  – Need for content validity
• Critique of content validity as basis for educational testing
• Universal system for construct validity
  – Applies to all tests
  – Achievement tests
  – Ability tests
  – Personality/psychopathology
• Summary

History of the Construct Validity Concept: Origins
• American Psychological Association (1954). Technical recommendations for psychological tests and diagnostic techniques. Psychological Bulletin, 51, 2, 1-38.
• Prepared by a joint committee of the American Psychological Association, American Educational Research Association, and National Council on Measurements Used in Education.
  – “Validity information indicates to the test user the degree to which the test is capable of achieving certain aims.
    … “Thus, a vocabulary test might be used simply as a measure of present vocabulary, as a predictor of college success, as a means of discriminating schizophrenics from organics, or as a means of making inferences about ‘intellectual capacity.’”
  – “We can distinguish among the four types of validity by noting that each involves a different emphasis on the criterion.” (p. 13)

Implications of Original Views
• Same test can be used in different ways
• Relevant type of validity depends on test use
• The types of validity differ in the importance of the behaviors involved in the test

More Recent Views on Types of Validity
• Standards for Educational and Psychological Testing (1954; 1966; 1974; 1985; 1999)
• 1985
  – “Traditionally, the various means of accumulating validity evidence have been grouped into categories called content-related, criterion-related and construct-related evidence of validity. …” “These categories are convenient. … but the use of category labels does not imply that there are distinct types of validity …”
  – “An ideal validation includes several types of evidence, which span all three of the traditional categories.”

Conceptualizations of Validity: Psychological Testing Textbooks
• “All validity analyses address the same basic question: Does the test measure knowledge and characteristics that are appropriate to its purpose.
  There are three types of validity analysis, each answering this question in a slightly different way.” (Friedenberg, 1995)
• “… the types of validity are potentially independent of one another.” (Murphy & Davidshofer, 1988)
• “There are three types of evidence: (1) construct-related, (2) criterion-related, and (3) content-related.” … “It is important to emphasize that categories for grouping different types of validity are convenient; however, the use of categories does not imply that there are distinct forms of validity.” (Kaplan & Saccuzzo, 1993)

Most Recent View on Validity
• Standards for Educational & Psychological Testing 1999
• “Validity refers to the degree to which evidence and theory support the interpretations of test scores entailed by proposed uses of tests.” (p. 9)
• “These sources of evidence may illuminate different aspects of validity, but they do not represent distinct types of validity. Validity is a unitary concept.”
• “The wide variety of tests and circumstances makes it natural that some types of evidence will be especially critical in a given case, whereas other types will be less useful.” (p. 9)
• “Because a validity argument typically depends on more than one proposition, strong evidence in support of one in no way diminishes the need for evidence to support others.” (p. 11)

Implications of 1999 Validity Concept
• No distinct types of validity
• Multiple sources of evidence for single test aim
• Example: Mathematical achievement test used to assess readiness for more advanced course
• Propositions for inference
  1) Certain skills are prerequisite for advanced course
  2) Content domain structure for the test represents skills
  3) Test scores represent domain performance
  4) Test scores are not unduly influenced by irrelevant variables, such as writing ability, spatial ability, anxiety, etc.
  5) Success in advanced course can be assessed
  6) Test scores are related to success in advanced curriculum

Current Issues with the Validity Concept: Educational Testing
• Lissitz and Samuelsen (2007)
  – Propose some changes in terminology and emphasis in the validity concept
  – Argue that “construct validity as it currently exists has little to offer test construction in educational testing”
• In fact, their system leads to a most startling conclusion
  – Construct validity is irrelevant to defining what is measured by an educational test!!
  – Content validity becomes primary in determining what an educational test measures

Critique of Content Validity as Basis for Educational Testing
• Content validity is not up to the burden of defining what is measured by a test
• Relying on content validity evidence, as available in practice, to determine the meaning of educational tests could have detrimental impact on test quality
• Giving content validity primacy for educational tests could lead to very different types and standards of evidence for educational and psychological tests

Validity in Educational Tests
Response to Lissitz & Samuelsen
• Background
• Embretson, S. E. (1983). Construct validity: Construct representation versus nomothetic span. Psychological Bulletin, 93, 179-197.
• Construct representation
  – Establishes the meaning of test scores by identifying the theoretical mechanisms that underlie test performance (i.e., the processes, strategies and knowledge)
• Nomothetic span
  – Establishes the significance of test scores by identifying the network of relationships of test scores with other variables

Validity in Lissitz and Samuelsen’s Framework
• Taxonomy of test evaluation procedures
  1) Investigative Focus
    – Internal sources = analysis of the test and its items
      Provides evidence about what is measured
    – External sources = relationship of test scores to other measures & criteria
      Provides evidence about impact, utility and trait theory
  2) Perspective
    – Theoretical orientation = concern with measuring traits
    – Practical orientation = concern with measuring achievement

Figure 2. Taxonomy of Test Evaluation Procedures

| Perspective | Internal | External |
|---|---|---|
| Theoretical | Latent Process | Nomological Network |
| Practical | Content and Reliability | Utility and Impact |

Figure 1. The Structure of the Technical Evaluation of Educational Testing
[Figure: tree diagram. Test Evaluation divides into Internal (Latent Process, Content, Reliability) and External (Theory (Nomological), Utility (Criterion), Impact). The label “Validity” also appears in the figure.]

Implications for Validity
• System represents best current practices
• Internal meaning (validity) established
  – For educational tests, content and reliability evidence
    – Evidence based on internal structure (i.e., reliability, etc.)
    – Evidence based on test content
  – For psychological tests, depends on latent processes
    – Evidence based on response processes
    – Evidence based on internal structure (item correlations)
• But, notice the limitations
  – Response process and test content evidence are not relevant to both types of tests
  – External evidence based on relations to other variables has no role in validity

Internal Evidence for Educational Tests Part I
• Reliability concept in the Lissitz and Samuelsen framework is generally multifaceted and traditional
  – Item interrelationships
  – Relationship of test scores over conditions or time
  – Differential item functioning (DIF)
  – Adverse impact
• (Perhaps adverse impact and DIF could be considered as external information)

Internal Evidence for Educational Tests Part II
• Concept of Content Validity
• Previous test standards (1985)
  – Content validity was a type of evidence that “… demonstrates the degree to which a sample of items, tasks or questions on a test are representative of some defined universe or domain of content”
• Two important elements added by L&S
  – Cognitive complexity level: “whether the test covers the relevant instructional or content domain and the coverage is at the right level of cognitive complexity”
  – Test development procedures: information about item writer credentials and quality control

Test Blueprints as Content Validity Evidence
• Blueprints represent domain structure by specifying percentages of test items that should fall in various categories
• Example: test blueprint for NAEP for mathematics
  – Five content strands
  – Three levels of complexity
  – Majority of states employ similar strands
• But, blueprints and other forms of test specifications (along with reliability evidence) are not sufficient to establish meaning for an educational test

1. Domain Structure is a Theory Which Changes Over Time
• NAEP framework, particularly for cognitive complexity, has evolved (NAGB, 2006)
• Views on complexity level also may change based on empirical evidence, such as item difficulty modeling, task decomposition and other methods
• Changes in domain structure also could evolve in response to recommendations of panels of experts
  – National Mathematics Advisory Panel

2. Reliability of Classifications is Not Well Documented
• Scant evidence that items can be reliably classified into the blueprint categories
• Certain factors in an achievement domain may make these categorizations difficult
  – For example, in mathematics a single real-world problem may involve algebra and number sense, as well as measurement content
  – Item could be classified into three of the five strands
• Similarly, classifying items for mathematical complexity also can be difficult
  – Abstract definitions of the various levels in many systems

3. Unrepresentative Samples from Domain
• Practical limitations on testing conditions may lead to unrepresentative samples of the content domain
• More objective item formats, such as multiple choice and limited constructed response, have long been favored
  – Reliably and inexpensively scored
• But these formats may not elicit the deeper levels of reasoning that experts believe should be assessed for the subject matter

4. Irrelevant Item Solving Processes
• Using content specifications, along with item writer credentials and item quality control, may not be sufficient to assure high quality tests
• Leighton and Gierl (2007) view content specifications as one of three cognitive models for making inferences about examinees’ thinking processes
• A limitation of the cognitive model of test specifications for such inferences is that no evidence is provided that examinees are in fact using the presumed skills and knowledge to solve items

NAEP Validity Study for Mathematics: Grade 4 and Grade 8
• Mathematicians examined items from NAEP and some state accountability tests
  – Small percent of items deemed flawed (3-7%)
  – Larger percent of items deemed marginal (23-30%)
• Marginal items had construct-irrelevant difficulties
  – Problems with pattern specifications
  – Unduly complicated presentation
  – Unclear or misleading language
  – Excessively time-consuming processes
• Marginal items previously had survived both content-related and empirical methods of evaluation

Examples of Irrelevant Knowledge, Skills and Abilities
• Source
  – National Mathematics Advisory Panel (2008). Foundations for success: The final report of the National Mathematics Advisory Panel.
    Washington, DC: Department of Education
• Method: logical-theoretical analysis by mathematicians & curriculum experts
• Mathematics involves aspects of logical analysis, spatial ability and verbal reasoning, yet their role can be excessive

Dependence on Non-Mathematical Knowledge

Dependence on Logic, Not Mathematics

Excessive Dependence on Spatial Ability

Excessive Dependence on Reasoning and Minimal Mathematics

Implication for Educational Tests
• Identifying irrelevant sources of item performance requires more than content-related evidence
• Latent process evidence is relevant
  – Methods include cognitive analysis (e.g., item difficulty modeling), verbal reports of examinees and factor analysis
• External sources of evidence may provide needed safeguards
  – Example: Implications of the correlation of an algebra test with a test of English
  – If this correlation is too high, it may suggest a failure in the system of internal evidence that supports test meaning

Construct Validity as a Universal System and a Unifying Concept
• Features
  – Consistent with current Test Standards (1999)
  – Consistent with many of Lissitz and Samuelsen’s distinctions and elaborations

Validity Concept
• Universal
  – All sources of evidence are included
  – Appropriate for both educational and psychological tests
• Interactive
  – Evidence in one category is influenced or informed by adequacy in the other categories

Categories of Evidence in the Validity System
• Eleven categories of evidence
  – Categories apply to both educational and psychological tests
• Consistent with most validity frameworks and the current Test Standards (1999), it is postulated that tests differ in which categories in the system are most crucial to test meaning, depending on their intended use
• Even so, most categories of evidence are potentially relevant to a test

A Universal Validity System
[Figure: diagram of the system. Internal Meaning: Logic/Theory, Latent Process Studies, Testing Conditions, Item Design Principles, Domain Structure, Test Specs, Scoring Models, Psychometric Properties. External Significance: Utility, Other Measures, Impact.]

Internal Categories of Evidence
• Logic/Theoretical Analysis: theory of the subject matter content, specification of areas and their interrelationships
• Latent Process Studies: studies on content interrelationships, prerequisite skills, impact of task features & testing conditions on responses, etc.
• Testing Conditions: available test administration methods, scoring mechanisms (raters, machine scoring, computer algorithms), testing time, locations, etc.
• Item Design Principles: scientific evidence and knowledge about how features of items impact the KSAs applied by examinees (formats, item context, complexity and specific content)

Internal Categories of Evidence
• Domain Structure: specification of content areas and levels, as well as relative importance and interrelationships
• Test Specifications: blueprints specifying domain structure representation, constraints on item features, specification of testing conditions
• Psychometric Properties: item interrelationships, DIF, reliability, relationship of item psychometric properties to content & stimulus features
• Scoring Models: psychometric models and procedures to combine responses within and between items, weighting of items, item selection standards, relationship of scores to proficiency categories, etc.
  – Decisions about dimensionality, guessing, elimination of poorly fitting items, etc. impact scores and their relationships

External Categories of Evidence
• Utility: relationship of scores to external variables, criteria & categories
• Other Measures: relationship of scores to other tests of knowledge, skills and abilities
• Impact: consequences of test use, adverse impact, proficiency levels, etc.

The Universal System of Validity
• Test Specifications is the most essential category: it determines (with Scoring Models)
  – Representation of domain structure
  – Psychometric properties of the test
  – External relationships of test scores
• Preceding Test Specifications are categories of scientific evidence, knowledge and theory
  – Domain Structure
  – Item Design Principles
• In turn preceded by
  – Latent Process Studies
  – Logical/Theoretical Analysis
  – Testing Conditions

General Features of Validity System
• Test meaning is determined by internal sources of information
• Test significance is determined by external sources of information
• Content aspects of the test are central to test meaning
  – Test specifications, which include test content and test development procedures, have a central role in determining test meaning
  – Test specifications also determine the psychometric properties of tests, including reliability information

General Features of the Universal Validity System
• Broad system of evidence is relevant to support Test Specifications
  – Item Design Principles: relevancy of examinees’ responses to the intended domain
  – Domain Structure: regarded as a theory
• Other preceding evidence
  – Latent Process Studies
  – Logical/theoretical analyses of the domain
  – Testing Conditions

General Features of the Universal Validity System
• Interactions among components
  – Internal evidence sets expectations for external evidence
  – External evidence informs adequacy of evidence from internal sources
  – Potential inadequacies arise when
    – Hypotheses are not confirmed
    – Test use has unintended consequences
• System of evidence includes both theoretical and practical elements
  – Relevant to educational and psychological tests
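
The precedence and feedback relations described above can be sketched as a small directed graph. This is only an illustrative sketch, not part of the original presentation: the eleven category names come from the slides, but the exact edge set (for example, that each of the three earliest categories informs both Domain Structure and Item Design Principles) and the helper name `categories_to_question` are assumptions made here.

```python
from collections import deque

# Edges point from a category to the categories it informs.
# The edge set is an assumption based on the precedence listed on the slides.
INFORMS = {
    "Logical/Theoretical Analysis": ["Domain Structure", "Item Design Principles"],
    "Latent Process Studies": ["Domain Structure", "Item Design Principles"],
    "Testing Conditions": ["Domain Structure", "Item Design Principles"],
    "Domain Structure": ["Test Specifications"],
    "Item Design Principles": ["Test Specifications"],
    "Test Specifications": ["Psychometric Properties", "Utility", "Other Measures", "Impact"],
    "Scoring Models": ["Psychometric Properties", "Utility", "Other Measures", "Impact"],
    "Psychometric Properties": [],
    "Utility": [],
    "Other Measures": [],
    "Impact": [],
}

# The three external categories; every other category is internal.
EXTERNAL = {"Utility", "Other Measures", "Impact"}


def categories_to_question(category):
    """Return every category upstream of `category`, nearest first."""
    # Invert the edges so each category lists what informs it.
    informed_by = {name: [] for name in INFORMS}
    for source, targets in INFORMS.items():
        for target in targets:
            informed_by[target].append(source)

    # Breadth-first walk back through the graph.
    seen = {category}
    upstream = []
    queue = deque([category])
    while queue:
        current = queue.popleft()
        for parent in informed_by[current]:
            if parent not in seen:
                seen.add(parent)
                upstream.append(parent)
                queue.append(parent)
    return upstream


if __name__ == "__main__":
    # Unexpected external evidence (e.g. adverse impact) points back
    # to the internal categories that should be re-examined.
    for name in categories_to_question("Impact"):
        print(name)
```

Under these assumed edges, a problem observed in Impact leads back through Test Specifications and Scoring Models to Domain Structure and Item Design Principles, and from there to the three earliest categories.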
The Universal System of Validity
• Example of Feedback
• Speeded math test to emphasize automatic numerical processes
• External evidence: strong adverse impact
• Internal evidence categories to question
  – Item Design
    – Relationship of item speededness to automaticity
  – Domain Structure
    – Heavy emphasis on the automaticity of numerical skills

Application to Educational and Psychological Tests: Achievement
• Current emphasis
  – Test specification: central to standards-based testing
  – Domain structures: essential to blueprints
  – Scoring models & Psychometric properties: state-of-art in large scale testing
• Underemphasized areas
  – Item design principles: research basis is emerging
  – Latent process studies: important in establishing construct-relevancy of student responses
  – Logical/Theoretical Analysis: important in defining domain structure
  – Implications of feedback from studies on Utility, Other Measures, Impact

Application to Educational and Psychological Tests: Achievement
• Example: Item Design & Latent Process Studies
• Item response format for mathematics items
  – Katz, I. R., Bennett, R. E., & Berger, A. E. (2000). Effects of response format on difficulty of SAT-Mathematics items: It’s not the strategy. Journal of Educational Measurement, 37(1), 39-57.
• Mathematical & non-mathematical item content
  – National Mathematics Advisory Panel

Application to Educational and Psychological Tests: Personality
• Current emphasis
  – Logical/Theoretical Analysis: i.e., personality theories
  – Utility: prediction of job performance
  – Other Measures: factor analytic studies
• Underemphasized areas
  – Test Specifications
  – Domain Structure
  – Item Design Principles
  – Latent Process Studies

Application to Educational and Psychological Tests: Personality
Test Specifications & Domain Structure
• Ignoring domain structure
  – Lack of convergent validity
  – Multifaceted personality constructs
  – Unbalanced or uncontrolled item set
    – Best represented facet emphasized in item selection
    – Item selection will not be consistent
• Example: Conscientiousness construct facets
  – Dependability, Achievement (Moutafi et al., 2006)
  – Opposing relationship to commitment: Duty (-), Achievement Striving (+)

Application to Educational and Psychological Tests: Personality
Test Specifications & Domain Structure
• Example of structure in personality
  – Facet theory to
    – Define domain membership
    – Define domain structure & observations
• Roskam, E. & Broers, N. (1996). Constructing questionnaires: An application of facet design and item response theory to the study of lonesomeness. In G. Engelhard & M. Wilson (Eds.), Objective Measurement: Theory into Practice, Volume 3. Norwood, NJ: Ablex Publishing. Pp. 349-385.

Facet Theory Approach to Measure of Lonesomeness

Application to Educational and Psychological Tests: Personality
Item Design Principles & Latent Process Studies
• Most measures are self-report format
• Basis of self-report may involve strong construct-irrelevant aspects
  – Tasks require judgments about relevance of statement to own behavior and then reliably summarizing
• California Psychological Inventory items
  – When in a group of people I usually do what the others want rather than make suggestions.
  – There have been a few times when I have been very mean to another person.
  – I am a good mixer.
  – I am a better talker than listener.

Application to Educational and Psychological Tests: Personality
• Science of self-report is emerging and linked to cognitive psychology
  – Stone, A. A., Turkkan, J. S., Bachrach, C. A., Jobe, J. B., Kurtzman, H. S. & Cain, V. S. (2000). The science of self-report. Mahwah, NJ: Erlbaum Publishers.
• Studies on how item and test design impacts self-report accuracy
  – Self-reports under optimal conditions are biased
    – Daily diaries of dietary self-reports contain insufficient calories to sustain life
    – Smith, A. F., Jobe, J. B., & Mingay, D. M. (1991b). Retrieval from memory of dietary information. Applied Cognitive Psychology, 5, 269-296.
• Personality inventories are far less optimal for reliable reporting

Application to Educational and Psychological Tests: Personality
• Mechanisms in self-report
  – Response styles
    – Social desirability
    – Acquiescence
  – Memory & Context
    – When memory information is sufficient, other methods are applied
  – Context
    – Information earlier in the questionnaire
    – Ambiguity of issue discussed
    – Moods evoked by earlier questions

Self-Report Context Effects

Summary
• History of validity shows changes in the concept
  – Notion of types still apparent
• Construct validity is a universal system of evidence relevant to diverse tests
• Construct validity is appropriate for educational tests
  – Content aspect is not sufficient
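
One reason given earlier for why the content aspect is not sufficient is that the reliability of classifying items into blueprint categories is seldom documented. The slides do not name a statistic for this, so the sketch below uses Cohen's kappa, a common chance-corrected agreement index for two raters, purely as an illustration. The strand labels and both raters' classifications are hypothetical data invented for the example.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters' category labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty item set.")
    n = len(rater_a)

    # Observed proportion of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Agreement expected by chance from each rater's marginal proportions.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(
        (counts_a[label] / n) * (counts_b[label] / n)
        for label in set(counts_a) | set(counts_b)
    )
    if expected == 1.0:
        raise ValueError("Kappa is undefined when chance agreement is 1.")
    return (observed - expected) / (1.0 - expected)


# Hypothetical classifications of ten mathematics items into three strands.
RATER_A = ["algebra", "algebra", "number", "number", "measurement",
           "measurement", "algebra", "number", "measurement", "algebra"]
RATER_B = ["algebra", "number", "number", "number", "measurement",
           "algebra", "algebra", "number", "measurement", "measurement"]

if __name__ == "__main__":
    print(round(cohen_kappa(RATER_A, RATER_B), 2))
```

In this made-up data the raters agree on 7 of 10 items, but after correcting for chance agreement the index is noticeably lower, which is the kind of evidence a blueprint-based validity argument would need to report.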