Presentation - Jordan University of Science and Technology

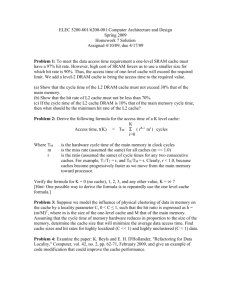

Memory Management

Jordan University of Science & Technology
CPE 746 Embedded Real-Time Systems
Prepared By: Salam Al-Mandil & Hala Obaidat
Supervised By: Dr. Lo’ai Tawalbeh

Outline
- Introduction
- Common Memory Types
- Composing Memory
- Memory Hierarchy
- Caches
- Application Memory Management
- Static memory management
- Dynamic memory management
- Memory Allocation
- The problem of fragmentation
- Memory Protection
- Recycling techniques

Introduction
- An embedded system is a special-purpose computer system designed to perform one or a few dedicated functions, sometimes with real-time computing constraints.
- An embedded system is part of a larger system.
- Embedded systems often have little memory and are required to run for a long time, so memory management is a major concern when developing real-time applications.

Common Memory Types

RAM: DRAM
- Volatile memory.
- Address lines are multiplexed: the first half of the address (the row) is sent first, signalled by the row access strobe (RAS); the second half (the column) is sent later, signalled by the column access strobe (CAS).
- One capacitor and a single transistor per bit, which gives better capacity.
- Requires periodic refreshing every 10-100 ms, hence "dynamic".
- Cheaper per bit, so lower cost.
- Slower, so it is used for main memory.

Reading DRAM Super cell (2,1)
- Step 1(a): Row access strobe (RAS) selects row 2.
- Step 1(b): Row 2 is copied from the DRAM array to the row buffer.
- Step 2(a): Column access strobe (CAS) selects column 1.
- Step 2(b): Super cell (2,1) is copied from the row buffer to the data lines, and eventually back to the CPU.

[Figure: a 16 x 8 DRAM chip with rows 0-3 and columns 0-3 of super cells, connected to the memory controller by 2 address lines and 8 data lines, with an internal row buffer.]

RAM: SRAM
- Volatile memory.
- Six transistors per bit, which gives lower capacity.
- No refreshing required, so it is faster and consumes less power.
- More expensive per bit, so higher cost.
- Faster, so it is used in caches.

Some Memory Types

ROM:
- Non-volatile memory.
- Can be read from, but not written to, by a processor in an embedded system.
- Traditionally written to ("programmed") before being inserted into the embedded system.
- Stores constant data needed by the system.
- In the ROM diagram, horizontal lines are words and vertical lines are data.
- Some embedded systems work without RAM, exclusively on ROM, because their programs and data are rarely changed.

Flash Memory:
- Non-volatile memory.
- Can be electrically erased and reprogrammed.
- Used in memory cards and USB flash drives.
- It is erased and programmed in large blocks at once, rather than one word at a time.
- Examples of applications include PDAs and laptop computers, digital audio players, digital cameras, and mobile phones.

| Type | Volatile? | Writeable? | Erase Size | Max Erase Cycles | Cost (per Byte) | Speed |
|---|---|---|---|---|---|---|
| SRAM | Yes | Yes | Byte | Unlimited | Expensive | Fast |
| DRAM | Yes | Yes | Byte | Unlimited | Moderate | Moderate |
| Masked ROM | No | No | n/a | n/a | Inexpensive | Fast |
| PROM | No | Once, with a device programmer | n/a | n/a | Moderate | Fast |
| EPROM | No | Yes, with a device programmer | Entire chip | Limited (consult datasheet) | Moderate | Fast |
| EEPROM | No | Yes | Byte | Limited (consult datasheet) | Expensive | Fast to read, slow to erase/write |
| Flash | No | Yes | Sector | Limited (consult datasheet) | Moderate | Fast to read, slow to erase/write |
| NVRAM | No | Yes | Byte | Unlimited | Expensive (SRAM + battery) | Fast |

Composing Memory
- When the available memory is larger than needed, simply ignore the unneeded high-order address bits and higher data lines.
- When the available memory is smaller than needed, compose several smaller memories into one larger memory:
  - Connect side-by-side.
  - Connect top to bottom.
  - Combine techniques.

Connect side-by-side: to increase the width of words.

[Figure: three 2^m × n ROMs placed side by side, sharing the address and enable lines, form one 2^m × 3n ROM with outputs Q(3n-1) … Q0.]

Connect top to bottom: to increase the number of words.

[Figure: two 2^m × n ROMs stacked form one 2^(m+1) × n ROM; address bit Am drives a 1 × 2 decoder that enables one of the ROMs, the remaining address lines go to both, and the outputs are Q(n-1) … Q0.]

Combine techniques: to increase the number and width of words.
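The two composition techniques can be illustrated with a small simulation. This is only a sketch: the `Rom` class and the two read functions are hypothetical names introduced here, and the choice that the first ROM supplies the most significant bits is an assumption, not something the slides specify.

```python
class Rom:
    """Hypothetical model of a 2^m x n ROM: a list of n-bit words."""

    def __init__(self, m, n, words):
        assert len(words) == 2 ** m
        assert all(0 <= w < 2 ** n for w in words)
        self.m, self.n, self.words = m, n, list(words)

    def read(self, addr):
        return self.words[addr]


def read_side_by_side(roms, addr):
    """Wider words: every ROM sees the same address, outputs are concatenated."""
    value = 0
    for rom in roms:  # assumption: the first ROM supplies the high-order bits
        value = (value << rom.n) | rom.read(addr)
    return value


def read_top_to_bottom(low_rom, high_rom, addr):
    """More words: the extra address bit plays the role of the 1 x 2 decoder."""
    m = low_rom.m
    select = (addr >> m) & 1        # high-order address bit enables one ROM
    offset = addr & ((1 << m) - 1)  # low m bits are wired to both ROMs
    return (high_rom if select else low_rom).read(offset)


# Two 4-word x 4-bit ROMs (m = 2, n = 4)
a = Rom(2, 4, [0x1, 0x2, 0x3, 0x4])
b = Rom(2, 4, [0xA, 0xB, 0xC, 0xD])

wide = read_side_by_side([a, b], 1)   # one 8-bit word built from two 4-bit words
tall = read_top_to_bottom(a, b, 5)    # address 5 = 0b101 selects ROM b, offset 1
```

Combining the techniques amounts to applying the decoder step first to pick a row of chips and then concatenating the outputs of the chips in that row.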
[Figure: ROMs combined both side by side and top to bottom, with shared enable and outputs, to increase the number and width of words.]

Memory Hierarchy
- An approach for organizing memory and storage systems.
- A memory hierarchy is organized into several levels, each smaller, faster, and more expensive per byte than the next lower level.
- For each k, the faster, smaller device at level k serves as a cache for the larger, slower device at level k+1.
- Programs tend to access the data at level k more often than they access the data at level k+1.

An Example Memory Hierarchy

Going down the list, storage devices become larger, slower, and cheaper per byte; going up, they become smaller, faster, and costlier per byte.

- L0: registers. CPU registers hold words retrieved from the L1 cache.
- L1: on-chip L1 cache (SRAM). The L1 cache holds cache lines retrieved from the L2 cache memory.
- L2: off-chip L2 cache (SRAM). The L2 cache holds cache lines retrieved from main memory.
- L3: main memory (DRAM). Main memory holds disk blocks retrieved from local disks.
- L4: local secondary storage (local disks). Local disks hold files retrieved from disks on remote network servers.
- L5: remote secondary storage (distributed file systems, Web servers).

Caches
- Cache: the first level(s) of the memory hierarchy encountered once the address leaves the CPU.
- The term is generally used whenever buffering is employed to reuse commonly occurring items, as in webpage caches, file caches, and name caches.

Caching in a Memory Hierarchy
- The smaller, faster, more expensive device at level k caches a subset of the blocks from level k+1.
- Data is copied between levels in block-sized transfer units.
- The larger, slower, cheaper storage device at level k+1 is partitioned into blocks.

[Figure: level k holds a few blocks (for example 4, 9, 10, and 14) out of blocks 0-15 stored at level k+1.]

General Caching Concepts
- A program needs object d, which is stored in some block b.
- Cache hit: the program finds b in the cache at level k. E.g., block 14.
- Cache miss: b is not at level k, so the level k cache must fetch it from level k+1. E.g., block 12.
- If the level k cache is full, then some current block must be replaced (evicted). How the "victim" is chosen is covered under Cache Replacement.

Cache Placement

There are 3 categories of cache organization:
1. Direct-mapped.
2. Fully-associative.
3. Set-associative.

Direct-Mapped
- The block can appear in 1 place only.
- Fastest and simplest organization, but highest miss rate due to contention.
- The mapping is usually: (block address) % (number of blocks in the cache).

[Figure: the address is split into tag, index, and offset; the indexed entry holds a valid bit (V), tag (T), and data (D), and a single comparator checks the tag.]

Fully-Associative
- The block can appear anywhere in the cache.
- Slowest organization, but lowest miss rate.

[Figure: the address is split into tag and offset; every entry (V, T, D) has its own tag comparator.]

Set-Associative
- The block can appear anywhere within a single set (n-way set associative).
- The set number is usually: (block address) % (number of sets in the cache).

[Figure: the address is split into tag, index, and offset; the index selects a set, and each entry (V, T, D) in the set has its own tag comparator.]

Cache Replacement
- In a direct-mapped cache, only 1 block is checked for a hit, and only that block can be replaced.
- For set-associative or fully-associative caches, the evicted block is chosen using one of three strategies:
  - Random.
  - LRU (least recently used).
  - FIFO (first in, first out).
- As the associativity increases, LRU becomes harder and more expensive to implement, so LRU is approximated.
- LRU and random perform almost equally for larger caches, but LRU outperforms the others for small caches.

Write Policies
1. Write Back: the information is written only to the block in the cache.
2. Write Through: the information is written both to the block in the cache and to the block in the lower levels.

Reducing the Miss Rate
1. Larger block sizes and larger caches.
2. Higher associativity.

Application Memory Management
- Allocation: allocating portions of memory to programs at their request.
- Recycling: freeing memory for reuse when it is no longer needed.

Memory Management
- In many embedded systems, the kernel and application programs execute in the same space, i.e., there is no memory protection.
- Embedded operating systems therefore make a large effort to reduce their memory footprint.
- An RTOS keeps its memory size small by including only the functionality that an application needs.
- There are two kinds of memory management: static and dynamic.

Static memory management
- Static memory management provides tasks with temporary data space.
- The system's free memory is divided into a pool of fixed-size memory blocks.
- When a task finishes using a memory block, it must return it to the pool.
- Another way to provide temporary space for tasks is via priorities:
  - A high-priority pool is sized for the worst-case memory demand of the system.
  - A low-priority pool is given the remaining free memory.

Dynamic memory management
- Dynamic memory management employs memory swapping, overlays, multiprogramming with a fixed number of tasks (MFT), multiprogramming with a variable number of tasks (MVT), and demand paging.
- Overlays allow programs larger than the available memory to be executed by partitioning the code and swapping the parts between disk and memory.
- MFT: a fixed number of equal-sized code partitions are in memory at the same time.
- MVT: like MFT, except that the size of each partition depends on the needs of the program.
- Demand paging: uses fixed-size pages that reside in non-contiguous memory, unlike the partitions in MFT and MVT.

Memory Allocation
- Memory allocation is the process of assigning blocks of memory on request.
- Memory for user processes is divided into multiple partitions of varying sizes.
- Hole: a block of available memory.
- Static memory allocation means that all memory is allocated to each process or thread when the system starts up. In this case, a process never has to ask for memory while it is executing. This is very costly in terms of memory.
- The advantage of static allocation in embedded systems is that the whole issue of memory-related bugs (leaks, allocation failures, and dangling pointers) simply does not exist.

Dynamic Storage-Allocation
- The problem: how to satisfy a request of size n from a list of free holes.
- This means that during runtime, a process asks the system for a memory block of a certain size to hold a certain data structure.
- Some RTOSs support a timeout function on a memory request: you ask the OS for memory within a prescribed time limit.

Dynamic Storage-Allocation Schemes
- First-fit: allocate the first hole that is big enough, so it is fast.
- Best-fit: allocate the smallest hole that is big enough; the entire list must be searched unless it is ordered by size.
- Buddy: divides memory into partitions to try to satisfy a memory request as suitably as possible.

Buddy memory allocation
- Allocates memory in powers of 2.
- Only allocates blocks of certain sizes.
- Has many free lists, one for each permitted size.

How does the buddy system work?

If memory is to be allocated:
1. Look for a memory slot of a suitable size (the minimal 2^k block that is at least as large as the requested memory).
   - If it is found, it is allocated to the program.
   - If not, the system tries to make a suitable memory slot by doing the following:
     1. Split a free memory slot larger than the requested memory size in half.
     2. If the lower limit is reached, allocate that amount of memory.
     3. Go back to step 1 (look for a memory slot of a suitable size).
     4. Repeat this process until a suitable memory slot is found.

If memory is to be freed:
1. Free the block of memory.
2. Look at the neighboring block: is it free too?
3. If it is, combine the two, go back to step 2, and repeat this process until either the upper limit is reached (all memory is freed) or a non-free neighbor block is encountered.

Example: buddy system (1024K of memory, shown in 64K units)

| Time | Memory blocks, left to right | Change |
|---|---|---|
| t=0 | 1024K | All memory free |
| t=1 | A-64K, 64K, 128K, 256K, 512K | A allocated |
| t=2 | A-64K, 64K, B-128K, 256K, 512K | B allocated |
| t=3 | A-64K, C-64K, B-128K, 256K, 512K | C allocated |
| t=4 | A-64K, C-64K, B-128K, D-128K, 128K, 512K | D allocated |
| t=5 | A-64K, 64K, B-128K, D-128K, 128K, 512K | C freed |
| t=6 | 128K, B-128K, D-128K, 128K, 512K | A freed and merged with its buddy |
| t=7 | 256K, D-128K, 128K, 512K | B freed and merged |
| t=8 | 1024K | D freed, all blocks merged |

The problem of fragmentation
- Neither first fit nor best fit is clearly better than the other in terms of storage utilization, but first fit is generally faster.
- Both of these schemes suffer from external fragmentation.
- The buddy memory system has little external fragmentation.

Fragmentation
- External fragmentation: enough total memory space exists to satisfy a request, but it is not contiguous.
- Internal fragmentation: allocated memory may be slightly larger than the requested memory; this size difference is memory internal to a partition that is not being used.

[Figure: Example of internal fragmentation.]

Memory Protection
- It may not be acceptable for a hardware failure to corrupt data in memory, so the use of a hardware protection mechanism is recommended.
- This hardware protection mechanism can be found in the processor or in the MMU.
- MMUs also enable address translation, which is not needed in real-time systems because cross-compilers are used that generate position-independent code (PIC).

[Figure: Hardware memory protection.]

Recycling techniques
- There are many ways for automatic memory managers to determine what memory is no longer required.
- Garbage collection relies on determining which blocks are not pointed to by any program variables.
- Tracing collectors: automatic memory managers that follow pointers to determine which blocks of memory are reachable from program variables.
- Reference counts: a reference count is a count of how many references (that is, pointers) there are to a particular memory block from other blocks.

Example: Tracing collectors

Mark-sweep collection:
- Phase 1: all blocks that can be reached by the program are marked.
- Phase 2: the collector sweeps all allocated memory, searching for blocks that have not been marked. If it finds any, it returns them to the allocator for reuse.

The drawbacks of mark-sweep collection:
- It must scan the entire memory in use before any memory can be freed.
- It must run to completion or, if interrupted, start again from scratch.

Example: Reference counts

Simple reference counting:
- A reference count is kept for each object.
- The count is incremented for each new reference, and decremented if a reference is overwritten or if the referring object is recycled.
- If a reference count falls to zero, the object is no longer required and can be recycled.
- It is hard to implement efficiently because of the cost of updating the counts.
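The simple reference counting scheme described above can be sketched in a few lines. This is an illustrative model only: the `Block` class, the `root` block standing in for program variables, and the helper names `add_ref`, `drop_ref`, and `_decrement` are all assumptions made for this example, not part of any particular memory manager.

```python
class Block:
    """Hypothetical memory block with a reference count."""

    def __init__(self, name):
        self.name = name
        self.count = 0   # number of references pointing at this block
        self.refs = []   # blocks that this block points to


recycled = []  # names of recycled blocks, in the order they were recycled


def add_ref(source, target):
    """A new reference is created: increment the target's count."""
    source.refs.append(target)
    target.count += 1


def drop_ref(source, target):
    """A reference is overwritten or removed: decrement the target's count."""
    source.refs.remove(target)
    _decrement(target)


def _decrement(block):
    block.count -= 1
    if block.count == 0:
        # No references remain, so the block can be recycled. Recycling the
        # referring block also decrements everything it pointed to.
        recycled.append(block.name)
        for child in block.refs:
            _decrement(child)
        block.refs.clear()


root = Block("root")            # stands in for the program's variables
a, b = Block("a"), Block("b")
add_ref(root, a)
add_ref(root, b)
add_ref(a, b)                   # b is now referenced twice

drop_ref(root, b)               # b is still referenced by a
after_first_drop = list(recycled)
drop_ref(root, a)               # a is recycled, which in turn recycles b
```

Every pointer update goes through `add_ref` or `drop_ref`, which is where the cost of updating the counts comes from.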